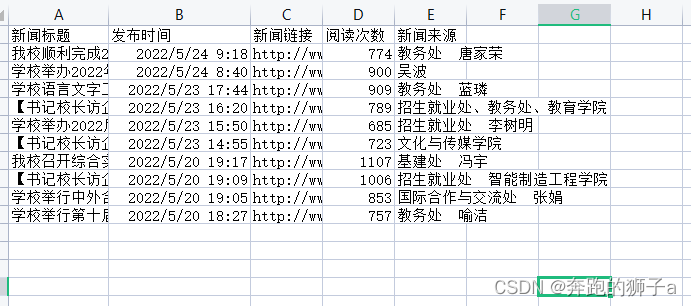

第一次实战爬虫,爬取了新浪国内的最新的首页新闻,附效果截图:

附代码:

import requests

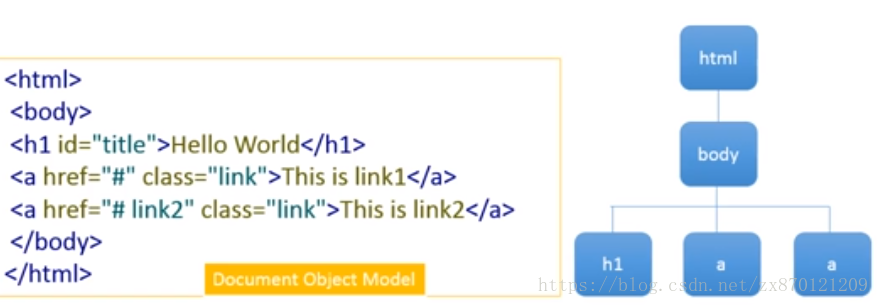

from bs4 import BeautifulSoup

import json

import re

from datetime import datetime

import pandas

import sqlite3

import osurl = 'https://feed.sina.com.cn/api/roll/get?pageid=121&lid=1356&num=20&\

versionNumber=1.2.4&page={}&encode=utf-8&callback=feedCardJsonpCallback&_=1568108608385'commentUrl = 'https://comment.sina.com.cn/page/info?version=1&format=json\

&channel=gn&newsid=comos-{}&group=undefined&compress=0&ie=utf-8&oe=utf-8\

&page=1&page_size=3&t_size=3&h_size=3&thread=1&uid=3537461634'

#爬取网页内详细信息

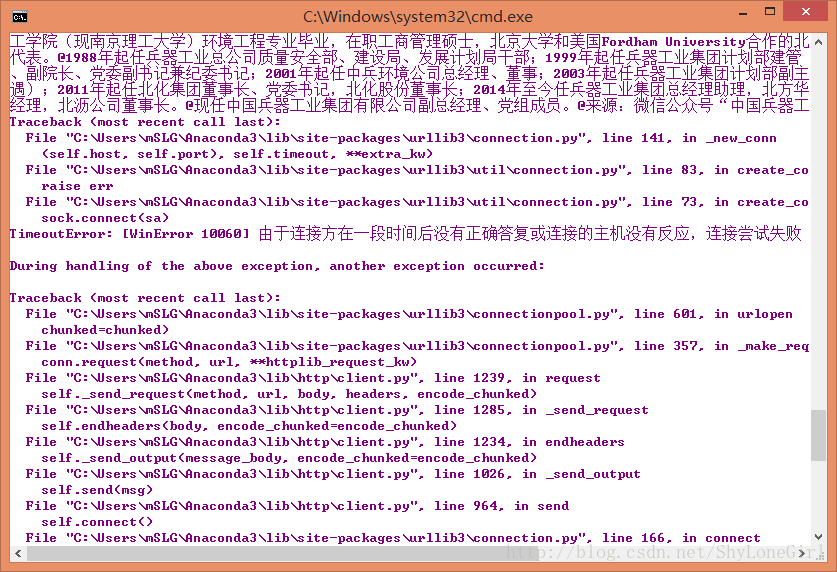

def getNewsDetail(newsurl):result = {}res = requests.get(newsurl)res.encoding = 'utf=8'soup = BeautifulSoup(res.text,'html.parser')result['title'] = soup.select('.main-title')[0].texttimesource = soup.select('.date-source')[0].select('.date')[0].text#dt = datetime.strptime(timesource,'%Y年%m月%d日 %H:%M')result['time'] = timesourceresult['url'] = newsurlresult['origin'] = soup.select('.date-source a')[0].textresult['article'] = ' '.join([p.text.strip() for p in soup.select('#article p')[:-1]])result['author'] = soup.select('.show_author')[0].text.lstrip('责任编辑:')result['comments'] = getCommentCounts(newsurl)return result

#爬取评论数量

def getCommentCounts(newsulr):m = re.search('doc-i(.+).shtml',newsulr)newsId = m.group(1)commentURL = commentUrl.format(newsId)comments = requests.get(commentURL)jd = json.loads(comments.text)return jd['result']['count']['total']#获取每个分页的所有新闻的URL,然后取得详细信息

def parseListLinks(url):newsdetails = []res = requests.get(url)res = res.text.split("try{feedCardJsonpCallback(")[1].split(");}catch(e){};")[0]jd = json.loads(res)for ent in jd['result']['data']:newsdetails.append(getNewsDetail(ent['url']))return newsdetailsnews_total = []

#取得指定分页的新闻信息

for i in range(1,2):#取第一页的新闻信息newsurl = url.format(i)newsary = parseListLinks(newsurl)news_total.extend(newsary)df = pandas.DataFrame(news_total)

#指定生成的列顺序

cols=['title','author','time','origin','article','comments','url']

df=df.loc[:,cols] #df.head(10)

#存储到sqlite数据库中

with sqlite3.connect('news.sqlite') as db:df.to_sql('newsDetail', con=db)

#读取数据库中的信息

with sqlite3.connect('news.sqlite') as db1:df2 = pandas.read_sql_query('SELECT * FROM newsDetail', con=db1)

#保存新闻信息到excle表格中

df2.to_excel('newsDetail.xlsx')

df2