部署版本

首先要确定部署的版本

查询Kubernetes对Docker支持的情况

kubernetes/dependencies.yaml at master · kubernetes/kubernetes (github.com)

查询Kubernetes Dashboard对Kubernetes支持的情况

Releases · kubernetes/dashboard (github.com)

| 名称 | 版本 |

|---|---|

| kubernetes | 1.23 |

| Docker | 20.10.22 |

| Kubernetes Dashboard | 2.5.1 |

部署的步骤为

- 修改服务器hostname及ip

- 配置环境及防火墙

- 调整服务器系统设置

- 部署Docker

- 部署Master节点

- Node节点追加

- 安装k8s Dashboard

准备工作

- 节点CPU核数必须是 ≥2核且内存要求必须≥2G,否则k8s无法启动

- DNS网络: 最好设置为本地网络连通的DNS,否则网络不通,无法下载一些镜像

配置hostname及静态IP

| 节点hostname | 作用 | IP |

|---|---|---|

| kubemaster | master | 192.168.1.4 |

| kubeworker1 | work1 | 192.168.1.5 |

| kubeworker2 | work2 | 192.168.1.6 |

如表格所示,将192.168.1.4服务器的hostname配置为kubemaster,将192.168.1.5服务器的hostname配置为kubeworker1,将192.168.1.6服务器的hostname配置为kubeworker2。并将每个服务器的网卡配置为静态IP,不使用DHCP

Master节点

## 更改节点hostname

[root@localhost ~]# hostnamectl set-hostname kubemaster --static

## 获取节点网卡名

[root@localhost ~]# ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00inet 127.0.0.1/8 scope host lovalid_lft forever preferred_lft foreverinet6 ::1/128 scope host valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000link/ether fa:16:3e:0b:68:40 brd ff:ff:ff:ff:ff:ffinet 192.168.1.4/24 brd 192.168.1.255 scope global noprefixroute dynamic eth0valid_lft 77613sec preferred_lft 77613secinet6 fe80::f816:3eff:fe0b:6840/64 scope link valid_lft forever preferred_lft forever此时需要设置

eth0网卡,命令格式为vi /etc/sysconfig/network-scripts/ifcfg-[网卡名称]

## 设置eth0网卡

[root@localhost ~]# vi /etc/sysconfig/network-scripts/ifcfg-eth0修改以下内容

BOOTPROTO="static" # dhcp改为static

ONBOOT="yes" # 开机启用本配置

IPADDR=192.168.1.4 # 静态IP

GATEWAY=192.168.1.1 # 默认网关

NETMASK=255.255.255.0 # 子网掩码

DNS1=114.114.114.114 # DNS 配置

DNS2=8.8.8.8 # DNS 配置【必须配置,否则SDK镜像下载很慢】

随后重启服务器并编辑hosts文件

## 重启服务器

[root@localhost ~] reboot

## 查看hostname是否生效

[root@kubemaster ~]# hostname

kubemaster

## 编辑/etc/hosts文件,配置映射关系

[root@kubemaster ~]# vi /etc/hosts添加hosts文件的规则

192.168.1.4 kubemaster

192.168.1.5 kubeworker1

192.168.1.6 kubeworker2

worker1节点

# 更改节点hostname

[root@localhost ~]# hostnamectl set-hostname kubeworker1 --static

# 获取节点网卡名

[root@localhost ~]# ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00inet 127.0.0.1/8 scope host lovalid_lft forever preferred_lft foreverinet6 ::1/128 scope hostvalid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000link/ether fa:16:3e:0b:68:40 brd ff:ff:ff:ff:ff:ffinet 192.168.1.5/24 brd 192.168.1.255 scope global noprefixroute dynamic eth0valid_lft 77613sec preferred_lft 77613secinet6 fe80::f816:3eff:fe0b:6840/64 scope link valid_lft forever preferred_lft forever此时需要设置eth0网卡,命令格式为vi /etc/sysconfig/network-scripts/ifcfg-[网卡名称]

# 设置eth0网卡

[root@localhost ~]# vi /etc/sysconfig/network-scripts/ifcfg-eth0修改以下内容

BOOTPROTO="static" #dhcp改为static

ONBOOT="yes" #开机启用本配置

IPADDR=192.168.1.5 #静态IP

GATEWAY=192.168.1.1 #默认网关

NETMASK=255.255.255.0 #子网掩码

DNS1=114.114.114.114 #DNS 配置

DNS2=8.8.8.8 #DNS 配置【必须配置,否则SDK镜像下载很慢】

随后重启服务器并编辑hosts文件

## 重启服务器

[root@localhost ~] reboot

## 查看hostname是否生效

[root@kubeworker1 ~]# hostname

kubeworker1

## 编辑/etc/hosts文件,配置映射关系

[root@kubeworker1 ~]# vi /etc/hosts添加hosts文件的规则

192.168.1.4 kubemaster

192.168.1.5 kubeworker1

192.168.1.6 kubeworker2

worker2节点

# 更改节点hostname

[root@localhost ~]# hostnamectl set-hostname kubeworker2 --static

# 获取节点网卡名

[root@localhost ~]# ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00inet 127.0.0.1/8 scope host lovalid_lft forever preferred_lft foreverinet6 ::1/128 scope host valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000link/ether fa:16:3e:0b:68:40 brd ff:ff:ff:ff:ff:ffinet 192.168.1.6/24 brd 192.168.1.255 scope global noprefixroute dynamic eth0valid_lft 77613sec preferred_lft 77613secinet6 fe80::f816:3eff:fe0b:6840/64 scope link valid_lft forever preferred_lft forever此时需要设置eth0网卡,命令格式为vi /etc/sysconfig/network-scripts/ifcfg-[网卡名称]

# 设置eth0网卡

[root@localhost ~]# vi /etc/sysconfig/network-scripts/ifcfg-eth0修改以下内容

BOOTPROTO="static" #dhcp改为static

ONBOOT="yes" #开机启用本配置

IPADDR=192.168.1.6 #静态IP

GATEWAY=192.168.1.1 #默认网关

NETMASK=255.255.255.0 #子网掩码

DNS1=114.114.114.114 #DNS 配置

DNS2=8.8.8.8 #DNS 配置【必须配置,否则SDK镜像下载很慢】

随后重启服务器并编辑hosts文件

## 重启服务器

[root@localhost ~] reboot

## 查看hostname是否生效

[root@kubeworker2 ~]# hostname

kubeworker2

## 编辑/etc/hosts文件,配置映射关系

[root@kubeworker2 ~]# vi /etc/hosts添加hosts文件的规则

192.168.1.4 kubemaster

192.168.1.5 kubeworker1

192.168.1.6 kubeworker2

环境及防火墙配置

注意:

此项需要每一台机器都安装

安装依赖环境

yum install -y conntrack ntpdate ntp ipvsadm ipset jq iptables curl sysstatlibseccomp wget vim net-tools git iproute lrzsz bash-completion tree bridge-utils unzip bind-utils gcc

用普通的noteport不行,必须用ingress

防火墙配置

关闭防火墙

注意:

生产环境建议放行端口

systemctl stop firewalld && systemctl disable firewalldiptables配置

注意:

iptables -F命令为清空iptables规则,生产环境下会清空已有规则,需谨慎执行

安装、启动iptables,设置开机自启,清空iptables规则,保存当前规则到默认规则

yum -y install iptables-services && systemctl start iptables && systemctl enable iptables && iptables -F && service iptables save

关闭selinux

注意:

关闭Selinux是为了放行脚本(安装的时候需要执行脚本)

# 关闭swap分区【虚拟内存】并且永久关闭虚拟内存

[root@kubemaster ~]# swapoff -a && sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab

# 关闭selinux

[root@kubemaster ~]# swapoff -a && sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab

[root@kubemaster ~]# setenforce 0 && sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config

setenforce: SELinux is disabled

系统设置调整

注意:

此项需要每一台机器都设置

调整内核参数

K8s必须禁用ipv6

net.ipv6.conf.all.disable_ipv6=1

cat > kubernetes.conf <<EOF

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

net.ipv4.ip_forward=1

net.ipv4.tcp_tw_recycle=0

vm.swappiness=0

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_instances=8192

fs.inotify.max_user_watches=1048576

fs.file-max=52706963

fs.nr_open=52706963

net.ipv6.conf.all.disable_ipv6=1

net.netfilter.nf_conntrack_max=2310720

EOF#将优化内核文件拷贝到/etc/sysctl.d/文件夹下,这样优化文件开机的时候能够被调用

cp kubernetes.conf /etc/sysctl.d/kubernetes.conf#自动加载br_netfilter模块

modprobe br_netfilter

#自动加载ip_conntrack模块

modprobe ip_conntrack#手动刷新,让优化文件立即生效

sysctl -p /etc/sysctl.d/kubernetes.conf

调整系统时区

#设置系统时区为中国/上海

timedatectl set-timezone "Asia/Shanghai"

#将当前的UTC 时间写入硬件时钟

timedatectl set-local-rtc 0

#重启依赖于系统时间的服务

systemctl restart rsyslog

systemctl restart crond

关闭邮件服务(生产环境别执行)

systemctl stop postfix && systemctl disable postfix

设置日志保存

- 创建保存日志的目录

[root@kubemaster ~]# mkdir /var/log/journal

- 创建配置文件存放目录

[root@kubemaster ~]# mkdir /etc/systemd/journald.conf.d

- 创建配置文件

cat > /etc/systemd/journald.conf.d/99-prophet.conf <<EOF

[Journal]

Storage=persistent

Compress=yes

SyncIntervalSec=5m

RateLimitInterval=30s

RateLimitBurst=1000

SystemMaxUse=10G

SystemMaxFileSize=200M

MaxRetentionSec=2week

ForwardToSyslog=no

EOF

- 重启systemd journald 的配置

systemctl restart systemd-journald

- 打开文件数调整(可忽略,不执行)

echo "* soft nofile 65536" >> /etc/security/limits.conf

echo "* hard nofile 65536" >> /etc/security/limits.conf

开启 ipvs 前置条件

注意:

kube-proxy 的ingress部署,需要开启 ipvs

cat > /etc/sysconfig/modules/ipvs.modules <<EOF

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

EOF

#使用lsmod命令查看这些文件是否被引导

chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack_ipv4

ip_vs_sh 12688 0

ip_vs_wrr 12697 0

ip_vs_rr 12600 0

ip_vs 145458 6 ip_vs_rr,ip_vs_sh,ip_vs_wrr

nf_conntrack_ipv4 15053 0

nf_defrag_ipv4 12729 1 nf_conntrack_ipv4

nf_conntrack 139264 2 ip_vs,nf_conntrack_ipv4

libcrc32c 12644 2 ip_vs,nf_conntrack

Docker部署

注意:

此项需要每一台机器都安装

安装

#安装依赖

yum update

yum install -y yum-utils device-mapper-persistent-data lvm2

#配置仓库

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

#安装docker ce

yum install docker-ce-20.10.22设置daemon文件

#创建/etc/docker目录

mkdir /etc/docker

#更新daemon.json文件

cat > /etc/docker/daemon.json <<EOF

{"registry-mirrors": ["https://ebkn7ykm.mirror.aliyuncs.com","https://docker.mirrors.ustc.edu.cn","http://f1361db2.m.daocloud.io","https://registry.docker-cn.com"],"exec-opts": ["native.cgroupdriver=systemd"],"log-driver": "json-file","log-opts": {"max-size": "100m"},"storage-driver": "overlay2"

}

EOF

#注意:一定注意编码问题,出现错误---查看命令:journalctl -amu docker 即可发现错误

#创建,存储docker配置文件

# mkdir -p /etc/systemd/system/docker.service.d

重启docker服务

[root@kubemaster ~]# systemctl daemon-reload && systemctl restart docker && systemctl enable docker

Created symlink from /etc/systemd/system/multi-user.target.wants/docker.service to /usr/lib/systemd/system/docker.service.

注意:

安装后需使用docker info查看是否有网络警告,会影响后续k8s部署

kubeadm安装K8S

注意:

此项需要每一台机器都安装

yum仓库镜像

国内镜像配置(国内建议配置)

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpghttp://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

官网镜像配置

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://packages.cloud.google.com/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://packages.cloud.google.com/yum/doc/yum-key.gpg https://packages.cloud.google.com/yum/doc/rpm-package-key.gpg

EOF

安装kubeadm 、kubelet、kubectl

[root@kubemaster ~]# yum install -y kubelet-1.23.15 kubeadm-1.23.15 kubectl-1.23.15 --disableexcludes=kubernetes

[root@kubemaster ~]# systemctl enable kubelet && systemctl start kubelet

Created symlink from /etc/systemd/system/multi-user.target.wants/kubelet.service to /usr/lib/systemd/system/kubelet.service.Master节点部署

注意:

此项需要安装在Master节点

修改配置文件

# 初始化配置文件

kubeadm config print init-defaults > kubeadm-init.yaml

# 修改配置文件

vi kubeadm-init.yaml

# 查看kubeadm版本

kubeadm version需要修改的项

- 将

advertiseAddress: 1.2.3.4修改为本地使用的IP地址,示例上使用的是192.168.1.4,就修改为advertiseAddress: 192.168.1.4 - 将

kubernetesVersion: 1.23.0修改为当前使用的版本,示例上使用的是1.23.15,就修改为kubernetesVersion: 1.23.15 - 将

imageRepository: k8s.gcr.io修改为imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers - 增加Pod子网络,在

networking下添加podSubnet: 10.244.0.0/16

修改完毕后文件如下

apiVersion: kubeadm.k8s.io/v1beta2

bootstrapTokens:

- groups:- system:bootstrappers:kubeadm:default-node-tokentoken: abcdef.0123456789abcdefttl: 24h0m0susages:- signing- authentication

kind: InitConfiguration

localAPIEndpoint:advertiseAddress: 192.168.1.4 # 本机IPbindPort: 6443

nodeRegistration:criSocket: /var/run/dockershim.sockname: k8s-mastertaints:- effect: NoSchedulekey: node-role.kubernetes.io/master

---

apiServer:timeoutForControlPlane: 4m0s

apiVersion: kubeadm.k8s.io/v1beta2

certificatesDir: /etc/kubernetes/pki

clusterName: kubernetes

controllerManager: {}

dns:type: CoreDNS

etcd:local:dataDir: /var/lib/etcd

imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers #镜像仓库

kind: ClusterConfiguration

kubernetesVersion: v1.20.15

networking:dnsDomain: cluster.localserviceSubnet: 10.96.0.0/12podSubnet: 10.244.0.0/16 # 新增Pod子网络

scheduler: {}

拉取镜像

[root@kubemaster ~]# kubeadm config images pull --config kubeadm-init.yaml

安装

[root@kubemaster ~]# kubeadm init --config kubeadm-init.yaml初始化后,会出现以下命令,后面追加Node的时候需要用

kubeadm join 192.168.1.4:6443 --token abcdef.0123456789abcdef \--discovery-token-ca-cert-hash sha256:602efef33cee46c1aa6a95ddd0972606e826ef122f810930e835b4f536cddc14

网络配置

当前Master节点的STATUS是NotReady,是因为没有配置网络

## 配置kubectl执行命令环境

mkdir -p $HOME/.kube

cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

chown $(id -u):$(id -g) $HOME/.kube/config## 执行kubectl命令查看机器节点

[root@kubemaster ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

node NotReady control-plane,master 14m v1.23.15

配置Calico网络

下载配置文件

wget https://docs.projectcalico.org/manifests/calico.yaml修改配置文件

这里需要指定网卡(添加IP_AUTODETECTION_METHOD)

## 编辑calico.yaml

vi calico.yaml

下面的示例截取了部分配置文件,eth.*的意思就是以eth为开头的网卡,根据服务器的不同,前缀也会不同

# Cluster type to identify the deployment type

- name: CLUSTER_TYPEvalue: "k8s,bgp"

# IP automatic detection

- name: IP_AUTODETECTION_METHODvalue: "interface=eth.*"

# Auto-detect the BGP IP address.

- name: IPvalue: "autodetect"

# Enable IPIP

- name: CALICO_IPV4POOL_IPIPvalue: "Always"安装

kubectl apply -f calico.yaml

此时查看node信息, Master的状态已经是Ready了.

[root@kubemaster ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

node Ready control-plane,master 14m v1.23.15

Node节点追加

注意:

此项需要执行在Node节点

在其他Node执行以下命令即可

kubeadm join 192.168.1.4:6443 --token abcdef.0123456789abcdef \--discovery-token-ca-cert-hash sha256:602efef33cee46c1aa6a95ddd0972606e826ef122f810930e835b4f536cddc14验证状态

[root@kubemaster ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

kubemaster Ready control-plane,master 14m v1.23.15

kubeworker1 Ready <none> 5m37s v1.23.15

kubeworker2 Ready <none> 5m28s v1.23.15

[root@kubemaster ~]# kubectl get pod -n kube-system -o wide

## 如果看到下面的pod状态都是Running状态,说明K8S集群环境就构建完成

安装 Kubernetes Dashboard

安装Dashboard

下载配置文件

wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.5.1/aio/deploy/recommended.yaml

配置外网访问

在recommended.yaml文件中寻找kubernetes-dashboard,添加访问方式为NodePort,端口为30443,示例为配置文件需要修改的部分,需要添加type: NodePort和nodePort: 30443

kind: Service

apiVersion: v1

metadata:labels:k8s-app: kubernetes-dashboardname: kubernetes-dashboardnamespace: kubernetes-dashboard

spec:type: NodePortports:- port: 443targetPort: 8443nodePort: 30443selector:k8s-app: kubernetes-dashboard

安装

kubectl apply -f recommended.yaml

检查安装情况

查看dashboard是否进行了配置,443:30443/TCP即证明已配置完成

[root@kubemaster ~]# kubectl get svc -A | grep kubernetes-dashboard

kubernetes-dashboard dashboard-metrics-scraper ClusterIP 10.110.95.223 <none> 8000/TCP 107m

kubernetes-dashboard kubernetes-dashboard NodePort 10.111.35.64 <none> 443:30443/TCP 107m

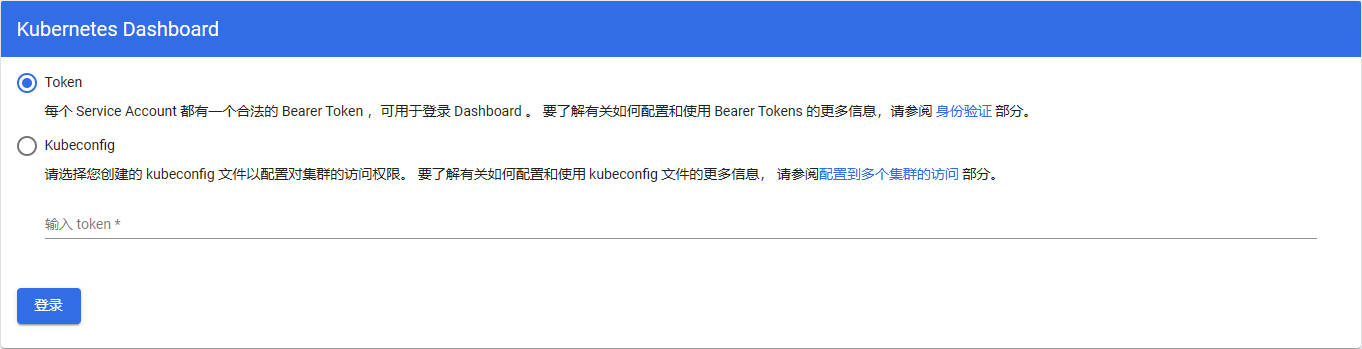

登录

创建配置文件

cat > dashboard-admin.yaml << EOF

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1

metadata:name: adminannotations:rbac.authorization.kubernetes.io/autoupdate: "true"

roleRef:kind: ClusterRolename: cluster-adminapiGroup: rbac.authorization.k8s.io

subjects:

- kind: ServiceAccountname: adminnamespace: kube-system

---

apiVersion: v1

kind: ServiceAccount

metadata:name: adminnamespace: kube-systemlabels:kubernetes.io/cluster-service: "true"addonmanager.kubernetes.io/mode: Reconcile

EOF

创建登录用户

[root@kubemaster ~]# kubectl apply -f dashboard-admin.yaml

clusterrolebinding.rbac.authorization.k8s.io/admin created

serviceaccount/admin created

查看admin-user账户的token

[root@kubemaster ~]# kubectl -n kube-system get secret|grep admin-token

admin-token-w5gl9 kubernetes.io/service-account-token 3 2m20s

[root@kubemaster ~]# kubectl -n kube-system describe secret admin-token-w5gl9

Name: admin-token-w5gl9

Namespace: kube-system

Labels: <none>

Annotations: kubernetes.io/service-account.name: adminkubernetes.io/service-account.uid: 958ae7a6-66b0-4685-b1d5-cf4be9523940Type: kubernetes.io/service-account-tokenData

====

ca.crt: 1099 bytes

namespace: 11 bytes

token: eyJhbGciOiJSUzI1NiIsImtpZCI6InpQUjkxMXJYR1RaUEZMU1AtZV9rU3VLVEs3djVGNFdpWGZQMmtZTlRaQkEifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJhZG1pbi10b2tlbi13NWdsOSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50Lm5hbWUiOiJhZG1pbiIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50LnVpZCI6Ijk1OGFlN2E2LTY2YjAtNDY4NS1iMWQ1LWNmNGJlOTUyMzk0MCIsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDprdWJlLXN5c3RlbTphZG1pbiJ9.cfELmWrVeLY4fJsR9b72_Uyy4HJ1sl9IIRCzje17l-ZOcyJq6TUKhIbfGt52YOa7b2ZNF-yjln-kcUKP5hlMEafPRyEy4UzFvOT3e9PW6PolTqB23NUPpcyu_sUflxVzOEZMXngqvvyxqgxk6fmoLOTRhLAnfhyI_cHidn4Pffen3uBMB1pAPXfNp9exDxMjHLhrJDsc9RGOe7gJqVTuvAOe2fV5A4Fd_pxiZmwKrZr4S4EpCHtBYWCz_xil5eclSzjBCvu_ZR9YSGRAsNt0OocEi4QnqPSIxYsm4KzVyDp9AWao9vGpDwmJ5RmFLm6E-0JQJc5hMSUwSbFkte8jHg 进入Dashboard

在浏览器输入https://[yourIP]:30443,填入IP地址并访问,会出现下图,在下图token处填入刚才获取的token即可进入Dashboard

![[数据结构基础]栈和队列的结构及接口函数](https://img-blog.csdnimg.cn/1891b6b26f8d4cbc850b93c7d6c6a697.png)