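Autumn-recruitment interview column: a summary of interview questions for deep learning algorithm engineers [百面算法工程师]

💡💡💡 All programs in this column have been tested and run successfully 💡💡💡

Column index: introduction and table of contents of the "YOLOv8 Improvements for Effective Accuracy Gains" column. It currently has 100+ posts covering improvements to detection heads (Head), loss functions (Loss), Backbone, Neck, NMS and more.

Spatial attention improves the performance of convolutional neural networks, but it has limitations. This article introduces Receptive-Field Attention (RFA), a new attention mechanism that addresses the parameter-sharing problem of large convolution kernels. RFA focuses on receptive-field spatial features and supplies effective attention weights for large kernels. The resulting RFAConv operation adds almost no computational cost while, according to the paper, noticeably improving network performance. After explaining the principle, this article walks step by step through adding and modifying the module code, and the complete modified code is shared at the end so you can run it directly; beginners can follow along easily. The goal is to help you take on deep-learning object detection with the YOLO series.

Column link: YOLOv8 improvements, continuously updated with effective accuracy-boosting methods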

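Contents

1. Principle

2. Adding C2f_RFCAConv to the YOLOv8 network

2.1 C2f_RFCAConv code implementation

2.2 Code walkthrough of the C2f_RFCAConv modules

2.3 Modify the __init__.py file

2.4 Add the yaml file

2.5 Register the module

2.6 Run the program

3. Full code

4. GFLOPs

5. Advanced

6. Summary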

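1. Principle

Paper: RFAConv: Innovating Spatial Attention and Standard Convolutional Operation

Official code: the official code repository

RFAConv (Receptive-Field Attention Convolution) is a new convolution operation designed to address the limitations of standard convolution and existing spatial attention mechanisms, particularly with respect to parameter sharing and large convolution kernels.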

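The key ideas behind RFAConv:

- Receptive-field spatial features: unlike conventional spatial attention, which focuses on individual spatial features, RFAConv emphasizes receptive-field spatial features, which are generated dynamically according to the kernel size. Weighting the importance of the different features inside each receptive field improves feature extraction.

- Addressing parameter sharing: in standard convolution the kernel parameters are shared across the whole input, which limits the network's ability to capture different information at different spatial locations. RFAConv combines attention with convolution so that each receptive field effectively gets its own, non-shared parameters.

- Integrated attention: RFAConv assigns an importance weight to every feature inside a receptive field, letting the network focus on the most important information. This avoids a limitation of conventional attention mechanisms such as CBAM and CA, whose attention weights are shared across different spatial regions.

- Efficient and lightweight: despite the added attention, RFAConv adds very little computation and few parameters. It uses grouped convolution, among other techniques, to extract receptive-field spatial features efficiently, which makes it suitable for real-time applications.

- Performance gains: by addressing the limitations of spatial attention and of convolutional parameter sharing, RFAConv improves network performance on classification, object detection and segmentation, and in many cases outperforms other attention-based methods such as CBAM and CA.

In summary, RFAConv innovates by focusing on receptive-field spatial features, offering a more flexible and powerful alternative to standard convolution while keeping efficiency and improving network performance.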
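For reference, the computation performed by the RFAConv class in section 2.1 can be summarized as follows. This is a sketch in our own notation that follows the code rather than quoting the paper: $g^{k\times k}$ is the grouped k×k convolution in generate_feature, $g^{1\times 1}$ is the grouped 1×1 convolution in get_weight, $\mathrm{Softmax}_{k^2}$ normalizes over the k² positions of each receptive field, and $\mathrm{Unfold}_k$ is the einops rearrange that lays the k² maps out as a kH×kW map.

$$
\begin{aligned}
F_{rf} &= \mathrm{ReLU}\big(\mathrm{BN}(g^{k\times k}(X))\big) \\
A_{rf} &= \mathrm{Softmax}_{k^2}\big(g^{1\times 1}(\mathrm{AvgPool}_{k\times k}(X))\big) \\
Y &= \mathrm{Conv}^{k\times k}_{\,\mathrm{stride}=k}\big(\mathrm{Unfold}_k(A_{rf}\odot F_{rf})\big)
\end{aligned}
$$

Because $A_{rf}$ takes a different value at every position, the final k×k kernel is roughly applied to position-specific, attention-weighted receptive fields, which is how the operation sidesteps strict parameter sharing.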

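2. Adding C2f_RFCAConv to the YOLOv8 network

2.1 C2f_RFCAConv code implementation

Key step 1: paste the code below into /ultralytics/ultralytics/nn/modules/block.py, and add "C2f_RFCAConv" to the __all__ of that file (also add "RFCAConv", because the yaml in section 2.4 uses it directly). The code requires the einops package (pip install einops).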

```python
# Requires einops: pip install einops
from einops import rearrange

# torch, torch.nn and Conv are already imported at the top of block.py,
# and C2f, C3 and Bottleneck are defined in block.py itself.
import torch
import torch.nn as nn


class h_sigmoid(nn.Module):
    """Hard sigmoid: ReLU6(x + 3) / 6."""

    def __init__(self, inplace=True):
        super(h_sigmoid, self).__init__()
        self.relu = nn.ReLU6(inplace=inplace)

    def forward(self, x):
        return self.relu(x + 3) / 6


class h_swish(nn.Module):
    """Hard swish: x * h_sigmoid(x)."""

    def __init__(self, inplace=True):
        super(h_swish, self).__init__()
        self.sigmoid = h_sigmoid(inplace=inplace)

    def forward(self, x):
        return x * self.sigmoid(x)


class RFAConv(nn.Module):
    def __init__(self, in_channel, out_channel, kernel_size, stride=1):
        super().__init__()
        self.kernel_size = kernel_size
        # Attention weights: k*k values per channel for every output position
        self.get_weight = nn.Sequential(
            nn.AvgPool2d(kernel_size=kernel_size, padding=kernel_size // 2, stride=stride),
            nn.Conv2d(in_channel, in_channel * (kernel_size ** 2), kernel_size=1, groups=in_channel, bias=False),
        )
        # Receptive-field spatial features: grouped conv expands each channel into k*k maps
        self.generate_feature = nn.Sequential(
            nn.Conv2d(in_channel, in_channel * (kernel_size ** 2), kernel_size=kernel_size,
                      padding=kernel_size // 2, stride=stride, groups=in_channel, bias=False),
            nn.BatchNorm2d(in_channel * (kernel_size ** 2)),
            nn.ReLU(),
        )
        # self.conv = nn.Sequential(nn.Conv2d(in_channel, out_channel, kernel_size=kernel_size, stride=kernel_size),
        #                           nn.BatchNorm2d(out_channel),
        #                           nn.ReLU())
        # Non-overlapping k x k conv (stride k) over the unfolded (k*H) x (k*W) map
        self.conv = Conv(in_channel, out_channel, k=kernel_size, s=kernel_size, p=0)

    def forward(self, x):
        b, c = x.shape[0:2]
        weight = self.get_weight(x)
        h, w = weight.shape[2:]
        weighted = weight.view(b, c, self.kernel_size ** 2, h, w).softmax(2)  # b c*k**2 h w -> b c k**2 h w
        feature = self.generate_feature(x).view(b, c, self.kernel_size ** 2, h, w)  # b c*k**2 h w -> b c k**2 h w
        weighted_data = feature * weighted
        conv_data = rearrange(weighted_data, 'b c (n1 n2) h w -> b c (h n1) (w n2)',
                              n1=self.kernel_size, n2=self.kernel_size)  # b c k**2 h w -> b c h*k w*k
        return self.conv(conv_data)


class SE(nn.Module):
    def __init__(self, in_channel, ratio=16):
        super(SE, self).__init__()
        self.gap = nn.AdaptiveAvgPool2d((1, 1))
        self.fc = nn.Sequential(
            nn.Linear(in_channel, ratio, bias=False),  # c -> ratio (hidden width is `ratio`, not c // ratio)
            nn.ReLU(),
            nn.Linear(ratio, in_channel, bias=False),  # ratio -> c
            nn.Sigmoid(),
        )

    def forward(self, x):
        b, c = x.shape[0:2]
        y = self.gap(x).view(b, c)
        y = self.fc(y).view(b, c, 1, 1)
        return y  # channel weights only; the caller multiplies them in


class RFCBAMConv(nn.Module):
    def __init__(self, in_channel, out_channel, kernel_size=3, stride=1):
        super().__init__()
        assert kernel_size % 2 == 1, "the kernel_size must be odd."
        self.kernel_size = kernel_size
        self.generate = nn.Sequential(
            nn.Conv2d(in_channel, in_channel * (kernel_size ** 2), kernel_size, padding=kernel_size // 2,
                      stride=stride, groups=in_channel, bias=False),
            nn.BatchNorm2d(in_channel * (kernel_size ** 2)),
            nn.ReLU(),
        )
        self.get_weight = nn.Sequential(nn.Conv2d(2, 1, kernel_size=3, padding=1, bias=False), nn.Sigmoid())
        self.se = SE(in_channel)
        # self.conv = nn.Sequential(nn.Conv2d(in_channel, out_channel, kernel_size, stride=kernel_size),
        #                           nn.BatchNorm2d(out_channel), nn.ReLU())
        self.conv = Conv(in_channel, out_channel, k=kernel_size, s=kernel_size, p=0)

    def forward(self, x):
        b, c = x.shape[0:2]
        channel_attention = self.se(x)
        generate_feature = self.generate(x)
        h, w = generate_feature.shape[2:]
        generate_feature = generate_feature.view(b, c, self.kernel_size ** 2, h, w)
        generate_feature = rearrange(generate_feature, 'b c (n1 n2) h w -> b c (h n1) (w n2)',
                                     n1=self.kernel_size, n2=self.kernel_size)
        unfold_feature = generate_feature * channel_attention
        max_feature, _ = torch.max(generate_feature, dim=1, keepdim=True)
        mean_feature = torch.mean(generate_feature, dim=1, keepdim=True)
        receptive_field_attention = self.get_weight(torch.cat((max_feature, mean_feature), dim=1))
        conv_data = unfold_feature * receptive_field_attention
        return self.conv(conv_data)


class RFCAConv(nn.Module):
    def __init__(self, inp, oup, kernel_size, stride=1, reduction=32):
        super(RFCAConv, self).__init__()
        self.kernel_size = kernel_size
        self.generate = nn.Sequential(
            nn.Conv2d(inp, inp * (kernel_size ** 2), kernel_size, padding=kernel_size // 2,
                      stride=stride, groups=inp, bias=False),
            nn.BatchNorm2d(inp * (kernel_size ** 2)),
            nn.ReLU(),
        )
        self.pool_h = nn.AdaptiveAvgPool2d((None, 1))
        self.pool_w = nn.AdaptiveAvgPool2d((1, None))
        mip = max(8, inp // reduction)
        self.conv1 = nn.Conv2d(inp, mip, kernel_size=1, stride=1, padding=0)
        self.bn1 = nn.BatchNorm2d(mip)
        self.act = h_swish()
        self.conv_h = nn.Conv2d(mip, inp, kernel_size=1, stride=1, padding=0)
        self.conv_w = nn.Conv2d(mip, inp, kernel_size=1, stride=1, padding=0)
        self.conv = nn.Sequential(nn.Conv2d(inp, oup, kernel_size, stride=kernel_size))

    def forward(self, x):
        b, c = x.shape[0:2]
        generate_feature = self.generate(x)
        h, w = generate_feature.shape[2:]
        generate_feature = generate_feature.view(b, c, self.kernel_size ** 2, h, w)
        generate_feature = rearrange(generate_feature, 'b c (n1 n2) h w -> b c (h n1) (w n2)',
                                     n1=self.kernel_size, n2=self.kernel_size)
        # Coordinate attention computed on the unfolded receptive-field map
        x_h = self.pool_h(generate_feature)
        x_w = self.pool_w(generate_feature).permute(0, 1, 3, 2)
        y = torch.cat([x_h, x_w], dim=2)
        y = self.conv1(y)
        y = self.bn1(y)
        y = self.act(y)
        h, w = generate_feature.shape[2:]
        x_h, x_w = torch.split(y, [h, w], dim=2)
        x_w = x_w.permute(0, 1, 3, 2)
        a_h = self.conv_h(x_h).sigmoid()
        a_w = self.conv_w(x_w).sigmoid()
        return self.conv(generate_feature * a_w * a_h)


class Bottleneck_RFAConv(Bottleneck):
    """Standard bottleneck with RFAConv."""

    def __init__(self, c1, c2, shortcut=True, g=1, k=(3, 3), e=0.5):  # ch_in, ch_out, shortcut, groups, kernels, expand
        super().__init__(c1, c2, shortcut, g, k, e)
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, c_, k[0], 1)
        self.cv2 = RFAConv(c_, c2, k[1])


class C3_RFAConv(C3):
    def __init__(self, c1, c2, n=1, shortcut=False, g=1, e=0.5):
        super().__init__(c1, c2, n, shortcut, g, e)
        c_ = int(c2 * e)  # hidden channels
        self.m = nn.Sequential(*(Bottleneck_RFAConv(c_, c_, shortcut, g, k=(1, 3), e=1.0) for _ in range(n)))


class C2f_RFAConv(C2f):
    def __init__(self, c1, c2, n=1, shortcut=False, g=1, e=0.5):
        super().__init__(c1, c2, n, shortcut, g, e)
        self.m = nn.ModuleList(Bottleneck_RFAConv(self.c, self.c, shortcut, g, k=(3, 3), e=1.0) for _ in range(n))


class Bottleneck_RFCBAMConv(Bottleneck):
    """Standard bottleneck with RFCBAMConv."""

    def __init__(self, c1, c2, shortcut=True, g=1, k=(3, 3), e=0.5):  # ch_in, ch_out, shortcut, groups, kernels, expand
        super().__init__(c1, c2, shortcut, g, k, e)
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, c_, k[0], 1)
        self.cv2 = RFCBAMConv(c_, c2, k[1])


class C3_RFCBAMConv(C3):
    def __init__(self, c1, c2, n=1, shortcut=False, g=1, e=0.5):
        super().__init__(c1, c2, n, shortcut, g, e)
        c_ = int(c2 * e)  # hidden channels
        self.m = nn.Sequential(*(Bottleneck_RFCBAMConv(c_, c_, shortcut, g, k=(1, 3), e=1.0) for _ in range(n)))


class C2f_RFCBAMConv(C2f):
    def __init__(self, c1, c2, n=1, shortcut=False, g=1, e=0.5):
        super().__init__(c1, c2, n, shortcut, g, e)
        self.m = nn.ModuleList(Bottleneck_RFCBAMConv(self.c, self.c, shortcut, g, k=(3, 3), e=1.0) for _ in range(n))


class Bottleneck_RFCAConv(Bottleneck):
    """Standard bottleneck with RFCAConv."""

    def __init__(self, c1, c2, shortcut=True, g=1, k=(3, 3), e=0.5):  # ch_in, ch_out, shortcut, groups, kernels, expand
        super().__init__(c1, c2, shortcut, g, k, e)
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, c_, k[0], 1)
        self.cv2 = RFCAConv(c_, c2, k[1])


class C3_RFCAConv(C3):
    def __init__(self, c1, c2, n=1, shortcut=False, g=1, e=0.5):
        super().__init__(c1, c2, n, shortcut, g, e)
        c_ = int(c2 * e)  # hidden channels
        self.m = nn.Sequential(*(Bottleneck_RFCAConv(c_, c_, shortcut, g, k=(1, 3), e=1.0) for _ in range(n)))


class C2f_RFCAConv(C2f):
    def __init__(self, c1, c2, n=1, shortcut=False, g=1, e=0.5):
        super().__init__(c1, c2, n, shortcut, g, e)
        self.m = nn.ModuleList(Bottleneck_RFCAConv(self.c, self.c, shortcut, g, k=(3, 3), e=1.0) for _ in range(n))
```

2.2 Code walkthrough of the C2f_RFCAConv modules

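1. RFAConv module

RFAConv can be viewed as a form of dynamic convolution: it derives attention weights from the input and applies them to receptive-field features, so the effective kernel adapts to each spatial position.

- get_weight: an AvgPool2d followed by a grouped 1×1 Conv2d produces k² weights per channel for every output position; these control how strongly each kernel position responds at each location.

- generate_feature: a grouped (depthwise-style, groups=in_channel) k×k convolution expands each channel into k² feature maps, each capturing a different position of the local receptive field.

- weighted_data: the weights are normalized with softmax over the k² positions and multiplied with the generated features; rearrange then lays the k² values of each position out as a k×k patch, and a k×k convolution with stride k produces the output. This is similar to applying a different kernel at each spatial position (dynamic convolution).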
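To see why a plain k×k convolution with stride k can follow the rearrange, here is a pure-Python sketch (no torch or einops needed; the helper names rearrange_unfold and window are hypothetical). It mimics the einops pattern 'b c (n1 n2) h w -> b c (h n1) (w n2)' for a single (batch, channel) slice and checks that each k×k window of the unfolded map holds exactly the k² receptive-field values of one output position.

```python
def rearrange_unfold(feature, k):
    """Pure-Python stand-in for
    rearrange(x, 'b c (n1 n2) h w -> b c (h n1) (w n2)', n1=k, n2=k)
    applied to one (batch, channel) slice.

    feature: list of k*k maps, each an h x w nested list, i.e. feature[n][i][j].
    Returns an (h*k) x (w*k) nested list.
    """
    h, w = len(feature[0]), len(feature[0][0])
    out = [[None] * (w * k) for _ in range(h * k)]
    for n in range(k * k):
        n1, n2 = divmod(n, k)  # '(n1 n2)' splits n row-major: n = n1 * k + n2
        for i in range(h):
            for j in range(w):
                # '(h n1)' and '(w n2)': h and w are the outer (slower) indices
                out[i * k + n1][j * k + n2] = feature[n][i][j]
    return out


def window(unfolded, i, j, k):
    """Flattened k x k block that a kernel-k, stride-k conv reads for output (i, j)."""
    return [unfolded[i * k + r][j * k + s] for r in range(k) for s in range(k)]


if __name__ == "__main__":
    k, h, w = 3, 2, 2
    # Encode (n, i, j) into each value so we can see where it lands
    feature = [[[100 * n + 10 * i + j for j in range(w)] for i in range(h)] for n in range(k * k)]
    unfolded = rearrange_unfold(feature, k)
    print(len(unfolded), len(unfolded[0]))  # 6 6
    print(window(unfolded, 1, 0, k))  # [10, 110, 210, 310, 410, 510, 610, 710, 810]
```

Each stride-k window contains the k² (already softmax-weighted, in RFAConv) values belonging to one position and nothing from its neighbours, so the final convolution never mixes receptive fields.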

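2. SE module

SE (Squeeze-and-Excitation) is a classic channel attention mechanism.

- gap (global average pooling): averages each channel over its spatial dimensions to obtain a global descriptor per channel.

- fc (fully connected): two linear layers first shrink and then restore the channel dimension, and a Sigmoid produces the attention weights. Note that in the code above the hidden width is the ratio argument itself (16 by default), not c/r as in the usual SE design.

SE reweights the channels, strengthening important ones and suppressing irrelevant ones. In this code, SE.forward returns only the weights (shape b×c×1×1); the multiplication is done by its caller, RFCBAMConv.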
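To make the note about the hidden width concrete, this pure-Python sketch counts the weights of SE's two linear layers as written in section 2.1 and, for comparison, with the usual c // r hidden width. The function names are hypothetical; only the layer shapes come from the code above.

```python
def se_fc_params(c, ratio=16):
    """Weight count of SE.fc as written in section 2.1:
    Linear(c, ratio) + Linear(ratio, c), both without bias."""
    return c * ratio + ratio * c


def se_fc_params_reduction(c, r=16):
    """Weight count if the hidden width were c // r (the usual SE reduction ratio)."""
    hidden = c // r
    return c * hidden + hidden * c


if __name__ == "__main__":
    for c in (16, 64, 256):
        print(c, se_fc_params(c), se_fc_params_reduction(c))
    # 16 512 32
    # 64 2048 512
    # 256 8192 8192
```

The two variants coincide only when c = ratio × r (256 with the defaults): below that the SE here is wider than the usual design, above it narrower. Since SE is used only by RFCBAMConv, this does not affect C2f_RFCAConv.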

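3. RFCAConv module

RFCAConv combines RFAConv's receptive-field feature generation with CA (Coordinate Attention): instead of RFAConv's softmax weights, coordinate attention is computed on the unfolded receptive-field map.

- generate: a grouped k×k convolution produces k² feature maps per channel, which are rearranged into a map k times taller and k times wider.

- pool_h and pool_w: as in CA, pool_h averages the unfolded map over its width (one value per row) and pool_w averages it over its height (one value per column), capturing global context along each direction.

- conv1: the two pooled descriptors are concatenated and passed through a 1×1 convolution, BatchNorm and h_swish, then split back into a height part and a width part.

- conv_h and conv_w: produce Sigmoid attention weights along the height and the width, which rescale the spatial representation as in CA.

- Output: the unfolded features are multiplied by both attention maps to strengthen spatial feature expression, and a k×k convolution with stride k produces the output.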
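As a sanity check of the structure described above, this pure-Python sketch (hypothetical helper names, no PyTorch needed) counts RFCAConv's parameters from its layer definitions in section 2.1. The results agree with the params column of the training log in section 2.6, for example 847 for RFCAConv [3, 16, 3, 2].

```python
def conv2d_params(cin, cout, k, groups=1, bias=True):
    """Parameters of nn.Conv2d(cin, cout, k, groups=groups, bias=bias)."""
    return cout * (cin // groups) * k * k + (cout if bias else 0)


def bn_params(c):
    """nn.BatchNorm2d(c) has a learnable weight and bias; running stats are buffers."""
    return 2 * c


def rfcaconv_params(inp, oup, k=3, reduction=32):
    """Parameter count of RFCAConv(inp, oup, k); the stride does not change it."""
    mip = max(8, inp // reduction)
    generate = conv2d_params(inp, inp * k * k, k, groups=inp, bias=False) + bn_params(inp * k * k)
    coord = (conv2d_params(inp, mip, 1)         # conv1
             + bn_params(mip)                   # bn1
             + 2 * conv2d_params(mip, inp, 1))  # conv_h and conv_w
    out = conv2d_params(inp, oup, k)            # self.conv: plain Conv2d with bias, no BN
    return generate + coord + out


if __name__ == "__main__":
    print(rfcaconv_params(3, 16))   # 847
    print(rfcaconv_params(16, 32))  # 6664
```

The breakdown also shows that the final k×k convolution, not the coordinate-attention branch, holds most of the parameters (448 of the 847 in the first layer).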

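4. Bottleneck_RFAConv module

This is the standard bottleneck combined with RFAConv:

- cv1: a standard convolution for initial feature extraction.

- cv2: RFAConv replaces the second standard convolution, using its dynamic-convolution property to strengthen spatial information.

Bottleneck_RFCBAMConv and Bottleneck_RFCAConv follow the same pattern, with RFCBAMConv and RFCAConv as cv2.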

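5. C2f_RFCAConv module

This module is based on the C2f structure and uses Bottleneck_RFCAConv, bringing RFCAConv into its bottlenecks and thus combining dynamic convolution with coordinate attention.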
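The same kind of count extends to the whole block. The sketch below (pure Python, hypothetical helper names) assumes that ultralytics' Conv is Conv2d without bias + BatchNorm2d + SiLU and that C2f's cv1 produces 2c channels while cv2 fuses (2 + n)·c channels back to c2. Under these assumptions it reproduces the C2f_RFCAConv rows of the log in section 2.6, for example 9368 for [32, 32, 1, True].

```python
def conv2d_params(cin, cout, k, groups=1, bias=True):
    return cout * (cin // groups) * k * k + (cout if bias else 0)


def ultra_conv_params(c1, c2, k=1):
    """ultralytics Conv: Conv2d(bias=False) + BatchNorm2d (2 * c2 params) + SiLU (no params)."""
    return conv2d_params(c1, c2, k, bias=False) + 2 * c2


def rfcaconv_params(inp, oup, k=3, reduction=32):
    mip = max(8, inp // reduction)
    generate = conv2d_params(inp, inp * k * k, k, groups=inp, bias=False) + 2 * inp * k * k
    coord = conv2d_params(inp, mip, 1) + 2 * mip + 2 * conv2d_params(mip, inp, 1)
    return generate + coord + conv2d_params(inp, oup, k)


def c2f_rfcaconv_params(c1, c2, n=1, e=0.5):
    """C2f_RFCAConv(c1, c2, n): cv1 -> 2c channels, n bottlenecks, cv2 fuses (2 + n) * c -> c2."""
    c = int(c2 * e)
    cv1 = ultra_conv_params(c1, 2 * c, 1)
    cv2 = ultra_conv_params((2 + n) * c, c2, 1)
    # Bottleneck_RFCAConv(c, c, k=(3, 3), e=1.0): Conv(c, c, 3) then RFCAConv(c, c, 3);
    # the cv1/cv2 first built by Bottleneck.__init__ are replaced, so they do not count
    bottleneck = ultra_conv_params(c, c, 3) + rfcaconv_params(c, c, 3)
    return cv1 + cv2 + n * bottleneck


if __name__ == "__main__":
    print(c2f_rfcaconv_params(32, 32, n=1))    # 9368
    print(c2f_rfcaconv_params(384, 128, n=1))  # 156184
```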

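Summary

- RFAConv mainly implements a dynamic convolution whose effective kernel weights adapt to the input.

- RFCAConv combines RFAConv with Coordinate Attention, using coordinate attention to strengthen the spatial expression of features.

- The Bottleneck_* and C2f_* modules plug these convolutions into standard network building blocks to strengthen feature extraction.

These modules are intended to improve the model's receptive field and spatial awareness, while the attention mechanisms make the model more selective about which features it relies on.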

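2.3 Modify the __init__.py file

Key step 2: edit the __init__.py file in the modules folder. First import the new classes (C2f_RFCAConv, and RFCAConv, which the yaml also uses) from .block,

then declare them in the __all__ below.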
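In code form, this step adds the new class names to the `from .block import (...)` statement and to the `__all__` tuple of ultralytics/nn/modules/__init__.py. The hypothetical helper below (standard-library `ast` only, not part of ultralytics) parses the text of that file and reports which names are still missing from either place, so a forgotten name can be caught before training.

```python
import ast


def missing_registrations(init_source, names):
    """Return the names that are not both imported from .block and listed in __all__."""
    tree = ast.parse(init_source)
    imported, exported = set(), set()
    for node in tree.body:
        # from .block import (...)
        if isinstance(node, ast.ImportFrom) and node.level == 1 and node.module == "block":
            imported.update(alias.asname or alias.name for alias in node.names)
        # __all__ = ("...", ...) or [...]
        elif isinstance(node, ast.Assign) and any(
            isinstance(t, ast.Name) and t.id == "__all__" for t in node.targets
        ):
            if isinstance(node.value, (ast.Tuple, ast.List)):
                exported.update(
                    elt.value for elt in node.value.elts
                    if isinstance(elt, ast.Constant) and isinstance(elt.value, str)
                )
    return [n for n in names if n not in imported or n not in exported]


if __name__ == "__main__":
    sample = '''
from .block import C2f, C2f_RFCAConv, RFCAConv

__all__ = ("C2f", "C2f_RFCAConv", "RFCAConv")
'''
    print(missing_registrations(sample, ["C2f_RFCAConv", "RFCAConv"]))  # []
```

In practice you would pass `Path("ultralytics/nn/modules/__init__.py").read_text()` (adjusting the path to your checkout) instead of the sample string.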

2.4 Add the yaml file

Key step 3: create the file yolov8_C2f_RFCAConv.yaml under /ultralytics/ultralytics/cfg/models/v8 and paste in the following content

- OD (object detection)

```yaml
# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLOv8 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolov8n.yaml' will call yolov8.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.33, 0.25, 1024] # YOLOv8n summary: 225 layers, 3157200 parameters, 3157184 gradients, 8.9 GFLOPs
  s: [0.33, 0.50, 1024] # YOLOv8s summary: 225 layers, 11166560 parameters, 11166544 gradients, 28.8 GFLOPs
  m: [0.67, 0.75, 768] # YOLOv8m summary: 295 layers, 25902640 parameters, 25902624 gradients, 79.3 GFLOPs
  l: [1.00, 1.00, 512] # YOLOv8l summary: 365 layers, 43691520 parameters, 43691504 gradients, 165.7 GFLOPs
  x: [1.00, 1.25, 512] # YOLOv8x summary: 365 layers, 68229648 parameters, 68229632 gradients, 258.5 GFLOPs

# YOLOv8.0n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, RFCAConv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, RFCAConv, [128, 3, 2]] # 1-P2/4
  - [-1, 3, C2f_RFCAConv, [128, True]]
  - [-1, 1, RFCAConv, [256, 3, 2]] # 3-P3/8
  - [-1, 6, C2f_RFCAConv, [256, True]]
  - [-1, 1, RFCAConv, [512, 3, 2]] # 5-P4/16
  - [-1, 6, C2f_RFCAConv, [512, True]]
  - [-1, 1, RFCAConv, [1024, 3, 2]] # 7-P5/32
  - [-1, 3, C2f_RFCAConv, [1024, True]]
  - [-1, 1, SPPF, [1024, 5]] # 9

# YOLOv8.0n head
head:
  - [-1, 1, nn.Upsample, [None, 2, 'nearest']]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 3, C2f_RFCAConv, [512]] # 12

  - [-1, 1, nn.Upsample, [None, 2, 'nearest']]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 3, C2f_RFCAConv, [256]] # 15 (P3/8-small)

  - [-1, 1, RFCAConv, [256, 3, 2]]
  - [[-1, 12], 1, Concat, [1]] # cat head P4
  - [-1, 3, C2f_RFCAConv, [512]] # 18 (P4/16-medium)

  - [-1, 1, RFCAConv, [512, 3, 2]]
  - [[-1, 9], 1, Concat, [1]] # cat head P5
  - [-1, 3, C2f_RFCAConv, [1024]] # 21 (P5/32-large)

  - [[15, 18, 21], 1, Detect, [nc]] # Detect(P3, P4, P5)
```

- Seg (instance segmentation)

```yaml
# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLOv8-seg instance segmentation model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/segment

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolov8n.yaml' will call yolov8.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.33, 0.25, 1024] # YOLOv8n summary: 225 layers, 3157200 parameters, 3157184 gradients, 8.9 GFLOPs
  s: [0.33, 0.50, 1024] # YOLOv8s summary: 225 layers, 11166560 parameters, 11166544 gradients, 28.8 GFLOPs
  m: [0.67, 0.75, 768] # YOLOv8m summary: 295 layers, 25902640 parameters, 25902624 gradients, 79.3 GFLOPs
  l: [1.00, 1.00, 512] # YOLOv8l summary: 365 layers, 43691520 parameters, 43691504 gradients, 165.7 GFLOPs
  x: [1.00, 1.25, 512] # YOLOv8x summary: 365 layers, 68229648 parameters, 68229632 gradients, 258.5 GFLOPs

# YOLOv8.0n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, RFCAConv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, RFCAConv, [128, 3, 2]] # 1-P2/4
  - [-1, 3, C2f_RFCAConv, [128, True]]
  - [-1, 1, RFCAConv, [256, 3, 2]] # 3-P3/8
  - [-1, 6, C2f_RFCAConv, [256, True]]
  - [-1, 1, RFCAConv, [512, 3, 2]] # 5-P4/16
  - [-1, 6, C2f_RFCAConv, [512, True]]
  - [-1, 1, RFCAConv, [1024, 3, 2]] # 7-P5/32
  - [-1, 3, C2f_RFCAConv, [1024, True]]
  - [-1, 1, SPPF, [1024, 5]] # 9

# YOLOv8.0n head
head:
  - [-1, 1, nn.Upsample, [None, 2, 'nearest']]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 3, C2f_RFCAConv, [512]] # 12

  - [-1, 1, nn.Upsample, [None, 2, 'nearest']]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 3, C2f_RFCAConv, [256]] # 15 (P3/8-small)

  - [-1, 1, RFCAConv, [256, 3, 2]]
  - [[-1, 12], 1, Concat, [1]] # cat head P4
  - [-1, 3, C2f_RFCAConv, [512]] # 18 (P4/16-medium)

  - [-1, 1, RFCAConv, [512, 3, 2]]
  - [[-1, 9], 1, Concat, [1]] # cat head P5
  - [-1, 3, C2f_RFCAConv, [1024]] # 21 (P5/32-large)

  - [[15, 18, 21], 1, Segment, [nc, 32, 256]] # Segment(P3, P4, P5)
```

Tip: this article only adds modules on top of the base YOLOv8 config. To build the n/s/m/l/x variants, you only need to select the corresponding depth_multiple and width_multiple (the [depth, width, max_channels] entries under scales). If this is unclear, see the article: YOLOv8 yaml file explained

2.5 Register the module

Key step 4: register the modules in the parse_model function of tasks.py

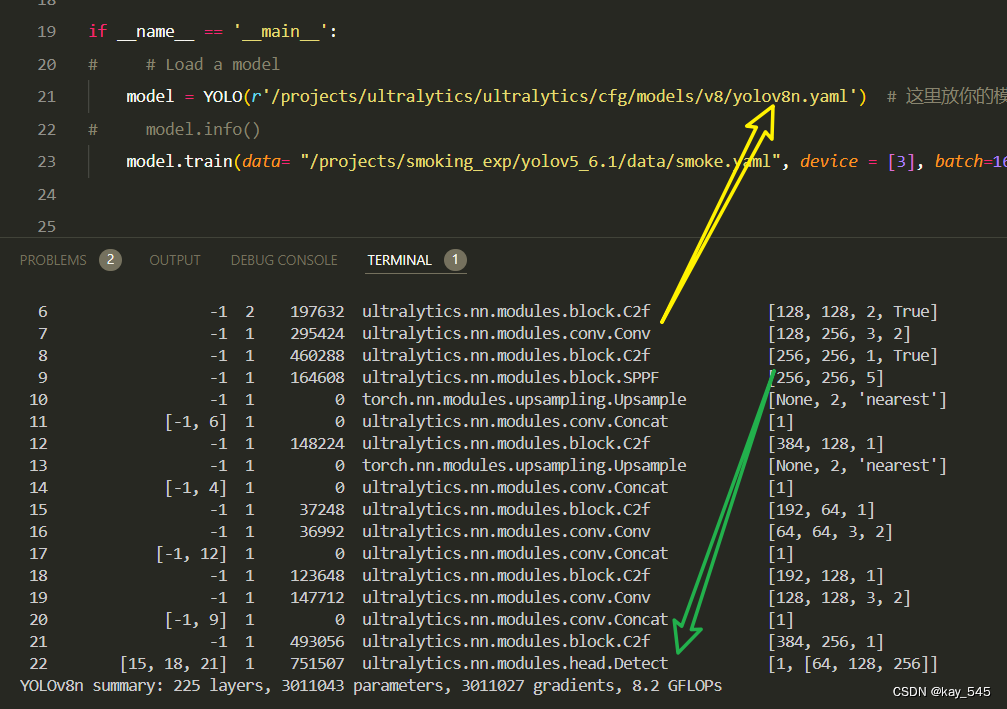
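Conceptually, registering means adding RFCAConv and C2f_RFCAConv to the module sets that parse_model treats specially, so that the input channels c1 and the width-scaled output channels c2 are prepended to the yaml args, and, for C2f-style blocks, the depth-scaled repeat count is inserted as well. The pure-Python mock below is a simplified, hypothetical stand-in for that logic (CHANNEL_MODULES, REPEAT_MODULES and build_args are our own names, not ultralytics' code). With the 'n' scale it reproduces the arguments column of the log in section 2.6, and the last call illustrates why an unregistered module breaks: RFCAConv would receive (64, 3, 2) as (inp, oup, kernel_size).

```python
import math


def make_divisible(x, divisor=8):
    """Round x up to a multiple of divisor."""
    return math.ceil(x / divisor) * divisor


# Hypothetical, simplified stand-ins for the module sets inside parse_model
CHANNEL_MODULES = {"Conv", "SPPF", "C2f", "RFCAConv", "C2f_RFCAConv"}  # first yaml arg = output channels
REPEAT_MODULES = {"C2f", "C2f_RFCAConv"}  # also receive the repeat count n as an argument


def build_args(name, c1, args, n, depth=0.33, width=0.25, max_channels=1024):
    """Return (constructor args, scaled n) for one yaml row; defaults are the 'n' scale."""
    n = max(round(n * depth), 1) if n > 1 else n  # depth scaling of the repeat count
    if name not in CHANNEL_MODULES:
        return list(args), n  # unregistered: yaml args are passed through unchanged
    c2 = make_divisible(min(args[0], max_channels) * width, 8)  # width scaling
    args = [c1, c2, *args[1:]]
    if name in REPEAT_MODULES:
        args.insert(2, n)  # the repeats are built inside the block itself
    return args, n


if __name__ == "__main__":
    print(build_args("RFCAConv", 3, [64, 3, 2], 1))         # ([3, 16, 3, 2], 1)
    print(build_args("C2f_RFCAConv", 64, [256, True], 6))  # ([64, 64, 2, True], 2)
    print(build_args("Unknown", 3, [64, 3, 2], 1))         # ([64, 3, 2], 1)
```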

2.6 Run the program

In train.py, set the model path to the path of yolov8_C2f_RFCAConv.yaml

Using an absolute path is recommended so that the file is always found

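```python
from pathlib import Path
import warnings

from ultralytics import YOLO

warnings.filterwarnings('ignore')

if __name__ == '__main__':
    # Load the model from the yaml created in section 2.4 (an absolute path is safer)
    model = YOLO("ultralytics/cfg/models/v8/yolov8_C2f_RFCAConv.yaml")  # path to the model yaml you chose
    # Train the model; replace the data path with your dataset's yaml
    results = model.train(data=r"path/to/your_dataset.yaml",
                          epochs=100, batch=16, imgsz=640, workers=4,
                          name=Path(model.cfg).stem)
```

🚀 Run the program. If output like the following appears, the module was added successfully 🚀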

```
                   from  n    params  module                                        arguments
  0                  -1  1       847  ultralytics.nn.modules.block.RFCAConv         [3, 16, 3, 2]
  1                  -1  1      6664  ultralytics.nn.modules.block.RFCAConv         [16, 32, 3, 2]
  2                  -1  1      9368  ultralytics.nn.modules.block.C2f_RFCAConv     [32, 32, 1, True]
  3                  -1  1     22520  ultralytics.nn.modules.block.RFCAConv         [32, 64, 3, 2]
  4                  -1  2     57648  ultralytics.nn.modules.block.C2f_RFCAConv     [64, 64, 2, True]
  5                  -1  1     81880  ultralytics.nn.modules.block.RFCAConv         [64, 128, 3, 2]
  6                  -1  2    213552  ultralytics.nn.modules.block.C2f_RFCAConv     [128, 128, 2, True]
  7                  -1  1    311192  ultralytics.nn.modules.block.RFCAConv         [128, 256, 3, 2]
  8                  -1  1    476184  ultralytics.nn.modules.block.C2f_RFCAConv     [256, 256, 1, True]
  9                  -1  1    164608  ultralytics.nn.modules.block.SPPF             [256, 256, 5]
 10                  -1  1         0  torch.nn.modules.upsampling.Upsample          [None, 2, 'nearest']
 11             [-1, 6]  1         0  ultralytics.nn.modules.conv.Concat            [1]
 12                  -1  1    156184  ultralytics.nn.modules.block.C2f_RFCAConv     [384, 128, 1]
 13                  -1  1         0  torch.nn.modules.upsampling.Upsample          [None, 2, 'nearest']
 14             [-1, 4]  1         0  ultralytics.nn.modules.conv.Concat            [1]
 15                  -1  1     41240  ultralytics.nn.modules.block.C2f_RFCAConv     [192, 64, 1]
 16                  -1  1     44952  ultralytics.nn.modules.block.RFCAConv         [64, 64, 3, 2]
 17            [-1, 12]  1         0  ultralytics.nn.modules.conv.Concat            [1]
 18                  -1  1    131608  ultralytics.nn.modules.block.C2f_RFCAConv     [192, 128, 1]
 19                  -1  1    163608  ultralytics.nn.modules.block.RFCAConv         [128, 128, 3, 2]
 20             [-1, 9]  1         0  ultralytics.nn.modules.conv.Concat            [1]
 21                  -1  1    508952  ultralytics.nn.modules.block.C2f_RFCAConv     [384, 256, 1]
 22        [15, 18, 21]  1    897664  ultralytics.nn.modules.head.Detect            [80, [64, 128, 256]]
YOLOv8_C2f_RFCAConv summary: 446 layers, 3288671 parameters, 3288655 gradients
```

3. Full code

https://pan.baidu.com/s/1JpnGb--OQo4NQsfwvFhySw?pwd=a866 (extraction code: a866)

4. GFLOPs

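For how GFLOPs are calculated, see: 百面算法工程师 | Convolution basics

GFLOPs of the unmodified YOLOv8n

GFLOPs after the modification

I no longer have a GPU at hand; if you need these numbers, please measure them yourself.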
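Without a GPU, a rough theoretical estimate can still be made. The sketch below (pure Python, hypothetical helper names) counts the multiply-accumulates (MACs) of RFCAConv's two main convolutions with the standard per-layer formula C_out · (C_in / groups) · k² · H_out · W_out. It is not ultralytics' GFLOPs routine, and tools that report GFLOPs usually count one MAC as two FLOPs.

```python
def conv_macs(cin, cout, k, h_out, w_out, groups=1):
    """Multiply-accumulate operations of one Conv2d (bias, BN and activations ignored)."""
    return cout * (cin // groups) * k * k * h_out * w_out


def rfcaconv_conv_macs(cin, cout, k, h_out, w_out):
    """MACs of RFCAConv's two k x k convolutions for an h_out x w_out output.

    Not counted: pooling, the 1x1 convs of the coordinate-attention branch,
    and the element-wise products.
    """
    # self.generate: grouped conv, cin -> cin * k * k channels, evaluated at h_out x w_out
    generate = conv_macs(cin, cin * k * k, k, h_out, w_out, groups=cin)
    # self.conv: stride-k conv over the (k*h_out) x (k*w_out) unfolded map -> h_out x w_out
    final = conv_macs(cin, cout, k, h_out, w_out)
    return generate, final


if __name__ == "__main__":
    # First yaml layer at imgsz=640 with the 'n' scale: RFCAConv(3, 16, 3, stride 2) -> 320 x 320
    gen, final = rfcaconv_conv_macs(3, 16, 3, 320, 320)
    print(gen, final)  # 24883200 44236800
    print(f"~{2 * (gen + final) / 1e9:.3f} GFLOPs (1 MAC counted as 2 FLOPs)")
```

The ratio generate / final equals k² / C_out, so the final stride-k convolution dominates whenever C_out > k², as it already does in the first layer (16 > 9).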

5. Advanced

The module can be combined with other attention mechanisms or loss functions to further improve detection results.

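6. Summary

The C2f_RFCAConv module combines the C2f structure with the RFCAConv convolution layer to strengthen the model's feature extraction, particularly its expressiveness along the spatial and channel dimensions. C2f splits the input features across multiple branches and fuses them layer by layer, which increases feature diversity, while RFCAConv brings in receptive-field attention and coordinate attention. Specifically, RFCAConv expands each channel into k² receptive-field features so that each spatial position is effectively convolved with its own adaptive weights, and it pools globally along the height and the width separately to produce the corresponding attention weights, improving spatial awareness. Inside C2f_RFCAConv, several Bottleneck_RFCAConv modules are stacked; each applies this receptive-field convolution and attention, progressively fusing and strengthening features from different levels. This is intended to enlarge the model's effective receptive field and make it more selective about important features, so that it handles complex vision tasks more efficiently.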