CVPR-2017

Contents

- 1 Background and Motivation

- 2 Related Work

- 3 Advantages / Contributions

- 4 Method

- 4.1 Channel features for pedestrian detection

- 4.2 Integration techniques

- 4.3 Comparison and analysis

- 5 Jointly learn the channel features

- 5.1 Datasets and Metrics

- 5.2 KITTI Dataset

- 5.3 Cityscapes dataset

- 5.4 Caltech dataset

- 6 Conclusion(own)

1 Background and Motivation

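Compared with generic object detection, pedestrian detection has two particular difficulties:

- Pedestrians are less discriminable from backgrounds; in other words, the discrimination relies more on the semantic contexts.

- Accurate localization is harder: in a CNN, convolution and pooling layers generate high-level semantic activation maps, but they also blur the boundaries between closely-laid instances, which makes precise localization more difficult.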

In many applications, combining a CNN with prior information further improves performance.

what kind of extra features are effective and how they actually work to improve the CNN-based pedestrian detectors?

The authors investigate this question.

2 Related Work

- pedestrian detectors

- Integrating channel features of different types

3 Advantages / Contributions

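Building on Faster R-CNN and HyperNet, the paper proposes the HyperLearner pedestrian detector. It fuses multi-layer features and introduces extra supervision from channel features such as segmentation, and it improves results on a series of pedestrian detection datasets.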

4 Method

4.1 Channel features for pedestrian detection

- Apparent-to-semantic channels

  - ICF (integral channel features) channel: a handcrafted feature channel composed of LUV color channels, a gradient magnitude channel, and histogram of gradient (HOG) channels. It carries low-level but detailed information about an image.

  - edge channel: extracted by the HED network; it contains both detailed appearance and high-level semantics.

  - segmentation channel: extracted by an FCN.

  - heatmap channel: obtained by blurring the segmentation channel.

- Temporal channels: e.g., optical flow (extracted from adjacent frames; see 《The computation of optical flow》, 1995) and motion.

- Depth channels: DispNet
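To make the heatmap channel concrete, here is a minimal sketch of blurring a binary segmentation mask into a soft heatmap. The notes only say the segmentation channel is blurred; the mean (box) filter, the `radius` parameter, and the toy 5x5 mask are assumptions chosen for illustration, not the paper's exact procedure.

```python
def box_blur(mask, radius=1):
    """Blur a 2D list with a (2*radius+1)^2 mean filter.

    The window is clipped at the image borders, so border pixels
    average over fewer neighbours. The box filter is an assumption;
    any smoothing kernel would illustrate the same idea.
    """
    h, w = len(mask), len(mask[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            ys = range(max(0, y - radius), min(h, y + radius + 1))
            xs = range(max(0, x - radius), min(w, x + radius + 1))
            window = [mask[j][i] for j in ys for i in xs]
            out[y][x] = sum(window) / len(window)
    return out


# Hypothetical segmentation channel: 1 = pedestrian, 0 = background.
seg = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]
heatmap = box_blur(seg, radius=1)
for row in heatmap:
    print(["%.2f" % v for v in row])
```

The blurred map keeps the semantic "where is the pedestrian" signal but loses the sharp object boundary, which is why the heatmap channel counts as high-level semantics without low-level apparent detail.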

4.2 Integration techniques

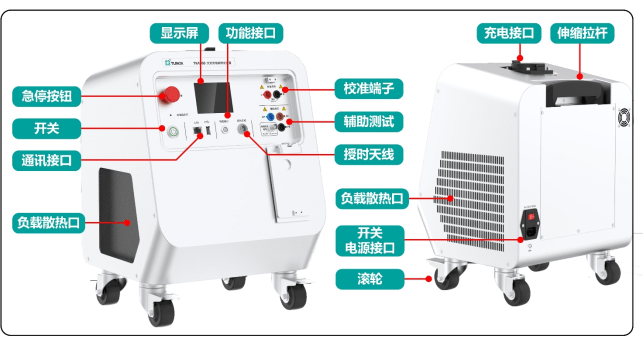

The Faster R-CNN anchors are changed from 3 scales and 3 ratios to 5 scales and 7 ratios. To obtain higher-resolution feature maps, all stage-5 layers are removed.
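As a rough illustration of the anchor change, the sketch below enumerates anchor shapes for 5 scales and 7 ratios. The concrete scale and ratio values, the `base_size` of 16, and the function name `make_anchors` are hypothetical; the notes only give the counts.

```python
import math


def make_anchors(base_size, scales, ratios):
    """Return (width, height) anchor shapes.

    Each anchor has area (base_size * scale)^2 and aspect ratio
    ratio = height / width.
    """
    anchors = []
    for s in scales:
        area = (base_size * s) ** 2
        for r in ratios:
            w = math.sqrt(area / r)
            h = w * r
            anchors.append((w, h))
    return anchors


# Hypothetical values: only the counts (5 and 7) come from the notes.
SCALES = [1, 2, 4, 8, 16]
RATIOS = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5]

anchors = make_anchors(16, SCALES, RATIOS)
# 5 * 7 = 35 anchor shapes per location, versus 3 * 3 = 9 by default.
print(len(anchors))
```

A denser anchor set with more tall ratios is a natural fit for pedestrians, whose boxes are mostly upright and vary widely in size.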

The channel features are fed in as an additional input.

The side branch consists of several convolution layers (kernel size 3, padding 1, stride 1) and max pooling layers (kernel size 2, stride 2), outputting a 128-channel activation map at 1/8 of the input size.
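The 1/8 output size follows from the layer hyperparameters: a 3x3 convolution with padding 1 and stride 1 keeps the spatial size, and each 2x2 max pooling with stride 2 halves it, so three pooling layers give 1/8. The sketch below checks this arithmetic; having exactly one convolution before each pooling layer is an assumption made only to keep the example short.

```python
def conv_out(size, kernel, padding, stride):
    """Standard output-size formula for convolution and pooling layers."""
    return (size + 2 * padding - kernel) // stride + 1


def side_branch_size(size, num_pools=3):
    """Spatial size after the side branch (assumed: one conv per pool)."""
    for _ in range(num_pools):
        size = conv_out(size, kernel=3, padding=1, stride=1)  # size unchanged
        size = conv_out(size, kernel=2, padding=0, stride=2)  # size halved
    return size


print(side_branch_size(640))  # 640 -> 320 -> 160 -> 80
```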

"Pretrained side branch" means the following:

To pretrain the side branch, the authors train a Faster R-CNN detector that relies entirely on the side branch, then initialize the side branch with the weights from this network.

4.3 Comparison and analysis

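The segmentation channel feature turns out to be quite strong.

Its improvement on small objects is especially pronounced when the input is at the original resolution (1x).

In the 2x input-resolution experiments, channels that carry high-level semantics but lack low-level apparent detail (i.e., the heatmap channel) fail to beat their 1x results. The authors argue that low-level details become more important when the image is fed in at a large scale. (From 【论文解读】行人检测:What Can Help Pedestrian Detection?(CVPR’17))

With high-resolution input, edge information reduces false positives and improves localization accuracy.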

5 Jointly learn the channel features

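The previous section feeds channel features into the network as input. This section proposes HyperLearner, which uses channel features as supervision signals instead, so no extra input is needed at inference time (obtaining channel features usually requires another network).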

In the HyperLearner architecture figure, the orange-yellow feature map is the aggregated activation map.

The loss function is

$$L = L_{CFN} + \lambda_1 L_{RPN_{cls}} + \lambda_2 L_{RPN_{bbox}} + \lambda_3 L_{FRCNN_{cls}} + \lambda_4 L_{FRCNN_{bbox}}$$

where $L_{CFN}$ is

$$L_{CFN} = \frac{1}{H \times W} \sum_{(x,y)} l(S_{x,y}, C_{x,y})$$

For the segmentation map, $l$ is the cross-entropy loss.
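The two formulas above can be sketched in plain Python. This is only an illustration under stated assumptions: the per-pixel loss is written as binary cross-entropy (pedestrian vs. background), the argument names `pred` and `target` are hypothetical labels for the two maps compared in the formula, and the default weights of 1.0 are placeholders since the notes do not give the λ values.

```python
import math


def cfn_loss(pred, target, eps=1e-12):
    """Mean per-pixel binary cross-entropy over an H x W map.

    pred holds probabilities in [0, 1]; target holds 0/1 labels.
    Binary cross-entropy is an assumption for illustration.
    """
    h, w = len(target), len(target[0])
    total = 0.0
    for y in range(h):
        for x in range(w):
            p = min(max(pred[y][x], eps), 1.0 - eps)  # avoid log(0)
            t = target[y][x]
            total += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
    return total / (h * w)


def total_loss(l_cfn, l_rpn_cls, l_rpn_bbox, l_frcnn_cls, l_frcnn_bbox,
               lambdas=(1.0, 1.0, 1.0, 1.0)):
    """Weighted sum of the five loss terms (placeholder weights)."""
    l1, l2, l3, l4 = lambdas
    return (l_cfn + l1 * l_rpn_cls + l2 * l_rpn_bbox
            + l3 * l_frcnn_cls + l4 * l_frcnn_bbox)


pred = [[0.9, 0.1], [0.8, 0.2]]
target = [[1, 0], [1, 0]]
print(cfn_loss(pred, target))
```

Normalizing by H x W keeps the channel-feature term on a comparable scale regardless of the map resolution, so the λ weights do not need retuning when the input size changes.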

Training uses a multi-stage scheme with four steps:

- only CFN

- only RPN

- only FRCNN

- together
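One way to read the four steps is as a schedule of which loss terms are active in each stage. The sketch below encodes that reading; it is an interpretation, not the authors' training code. The stage names, the loss-term keys, and the omission of the λ weights are all assumptions, and the notes do not say which weights are frozen in each stage.

```python
# Assumed reading: each stage optimizes only the listed loss terms.
STAGES = [
    ("cfn_only", {"cfn"}),
    ("rpn_only", {"rpn_cls", "rpn_bbox"}),
    ("frcnn_only", {"frcnn_cls", "frcnn_bbox"}),
    ("joint", {"cfn", "rpn_cls", "rpn_bbox", "frcnn_cls", "frcnn_bbox"}),
]


def stage_loss(losses, active):
    """Sum only the loss terms that are active in the current stage."""
    return sum(value for name, value in losses.items() if name in active)


# Hypothetical loss values from one training step.
losses = {"cfn": 1.0, "rpn_cls": 2.0, "rpn_bbox": 3.0,
          "frcnn_cls": 4.0, "frcnn_bbox": 5.0}
for name, active in STAGES:
    print(name, stage_loss(losses, active))
```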

5.1 Datasets and Metrics

- KITTI: two classes, pedestrian and cyclist

- Caltech Pedestrian

- Cityscapes: 2,975 training and 500 validation images with fine annotations, plus 20,000 training images with coarse annotations

5.2 KITTI Dataset

5.3 Cityscapes dataset

5.4 Caltech dataset

6 Conclusion(own)

semantic channel features can help detectors discriminate hard positive samples and negative samples at low resolution, while apparent channel features inhibit false positives of backgrounds and improve localization accuracy at high resolution.

《Integral channel features》(2009)

ICF

《Holistically-nested edge detection》(ICCV-2015)

HED

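Optical flow and scene flow

Optical Flow

Optical flow is the instantaneous velocity, on the imaging plane, of the pixel motion of objects moving in space. The 2D vector $\vec{u} = (u, v)$ that describes the instantaneous velocity of a point is usually called the optical flow vector. (From 【入门向】光流法(optical flow)基本原理+深度学习中的应用【FlowNet】【RAFT】)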

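Scene Flow

Scene flow is the 3D motion field formed by the motion of a scene in space; the paper represents it with disparity, disparity change, and optical flow.

Optical flow captures the 2D motion of objects in the image plane, while scene flow also includes the 3D motion of objects in space. (From 论文阅读笔记之Dispnet)

Scene flow can be understood as 3D optical flow: the data becomes a point cloud, and the flow is expressed with x, y, z coordinates. The setup is similar to object detection.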
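To see how disparity, disparity change, and optical flow together determine 3D motion, the sketch below back-projects a pixel at two time steps under a rectified pinhole stereo model, where depth is Z = f * B / d. The camera parameters (`f`, `baseline`, `cx`, `cy`), the function names, and the numbers in the example are hypothetical and chosen so the result is easy to verify by hand.

```python
def backproject(x, y, disparity, f, baseline, cx, cy):
    """Pixel (x, y) with disparity d -> 3D point, rectified stereo model."""
    z = f * baseline / disparity
    return ((x - cx) * z / f, (y - cy) * z / f, z)


def scene_flow(x, y, d, u, v, dd, f, baseline, cx, cy):
    """3D motion of a point from optical flow (u, v) and disparity change dd."""
    p0 = backproject(x, y, d, f, baseline, cx, cy)
    p1 = backproject(x + u, y + v, d + dd, f, baseline, cx, cy)
    return tuple(b - a for a, b in zip(p0, p1))


# Hypothetical camera: f = 1000 px, baseline = 0.5 m, principal point (0, 0).
# A point at X = 1 m moves from Z = 10 m to Z = 5 m: its disparity doubles
# (50 -> 100) and its image x-coordinate shifts from 100 to 200.
flow_3d = scene_flow(x=100, y=0, d=50, u=100, v=0, dd=50,
                     f=1000, baseline=0.5, cx=0, cy=0)
print(flow_3d)
```

The example also shows why optical flow alone is ambiguous: the pixel moves 100 px sideways even though the 3D point only moves toward the camera, and the disparity change is what resolves this.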

Estimating scene flow means providing the depth and 3D motion vectors of all visible points in a stereo video.

It is the “royal league” task when it comes to reconstruction and motion estimation and provides an important basis for numerous higher-level challenges such as advanced driver assistance and autonomous systems.

Diagram of disparity change. As the object ‘A’ moves toward the eyes to position ‘B’, its binocular disparity increases as its position on the retina changes. The purple arrows show direction of motion of the real object and the projection of the object on the retina.

《Stereopsis: are we assessing it in enough depth?》(Clinical and Experimental Optometry, 2018)

《A Large Dataset to Train Convolutional Networks for Disparity, Optical Flow, and Scene Flow Estimation》(CVPR-2016)

DispNet

《HyperNet: Towards Accurate Region Proposal Generation and Joint Object Detection》(CVPR-2016)