文章目录

- 标签

- 定义Label

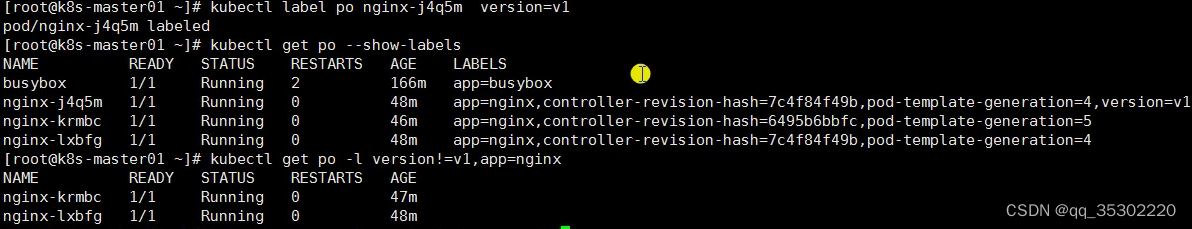

- Selector条件匹配

- 修改标签(Label)

- 删除标签(Label)

- Service

- 创建一个Service

- 使用Service代理k8s外部应用

- 使用service反代外部应用

- 使用Service反代域名

- Service类型

- Ingress概念

- Ingress安装

- helm安装:

- helm添加公用的仓库

- 使用helm安装ingress-nginx:

- 1、下载 ingress-nginx-4.2.5.tgz

- 2、解压,修改文件

- 3、需要修改的地方

- 4、下面是已经修改完毕的,可以直接使用

- 5、开始安装ingress-nginx

- 6、创建一个ingress

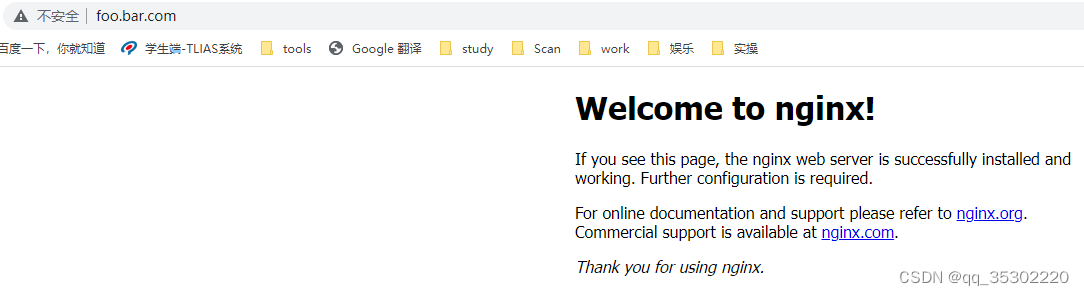

- 7、访问

- 8、进入ingress实例

- 9、配置多域名

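Labels

Label: classifies and groups the various resources in k8s by attaching a tag that carries a specific attribute.

Selector: finds the resources that carry a given label by means of a filter expression.

Defining a Label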

[root@k8s-master01 ~]# kubectl label node k8s-node02 region=subnet7

node/k8s-node02 labeled

The node can then be filtered with a Selector:

[root@k8s-master01 ~]# kubectl get no -l region=subnet7

NAME STATUS ROLES AGE VERSION

k8s-node02 Ready <none> 3d17h v1.17.3

Finally, in a Deployment or another controller, specify that the Pod be scheduled onto that node:

      containers:
      ......
      dnsPolicy: ClusterFirst
      nodeSelector:
        region: subnet7
      restartPolicy: Always
      ......

Services can be labeled in the same way:

[root@k8s-master01 ~]# kubectl label svc canary-v1 -n canary-production env=canary version=v1

service/canary-v1 labeled

View the labels:

[root@k8s-master01 ~]# kubectl get svc -n canary-production --show-labels

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE LABELS

canary-v1 ClusterIP 10.110.253.62 <none> 8080/TCP 24h env=canary,version=v1

You can also list every svc whose version is v1:

[root@k8s-master01 canary]# kubectl get svc --all-namespaces -l version=v1

NAMESPACE NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

canary-production canary-v1 ClusterIP 10.110.253.62 <none> 8080/TCP 25h

You can also filter on multiple label values with a Selector:

[root@k8s-master01 ~]# kubectl get po -A --show-labels

NAMESPACE NAME READY STATUS RESTARTS AGE LABELS

default busybox 1/1 Running 88 43d <none>

default nginx-8kjj9 1/1 Running 0 4h22m app=nginx,controller-revision-hash=7c4f84f49b,pod-template-generation=3

default nginx-9vjsm 1/1 Running 0 4h22m app=nginx,controller-revision-hash=7c4f84f49b,pod-template-generation=3

kube-system calico-kube-controllers-cdd5755b9-qztxb 1/1 Running 10 44d k8s-app=calico-kube-controllers,pod-template-hash=cdd5755b9

kube-system calico-node-6q95q 1/1 Running 16 44d controller-revision-hash=6f6fbfdcf4,k8s-app=calico-node,pod-template-generation=1

kube-system calico-node-cgp6p 1/1 Running 19 44d controller-revision-hash=6f6fbfdcf4,k8s-app=calico-node,pod-template-generation=1

kube-system calico-node-tmxtg 1/1 Running 14 44d controller-revision-hash=6f6fbfdcf4,k8s-app=calico-node,pod-template-generation=1

kube-system calico-node-wc674 1/1 Running 12 44d controller-revision-hash=6f6fbfdcf4,k8s-app=calico-node,pod-template-generation=1

kube-system calico-node-z8k7p 1/1 Running 11 44d controller-revision-hash=6f6fbfdcf4,k8s-app=calico-node,pod-template-generation=1

kube-system coredns-684d86ff88-mtkjf 1/1 Running 9 43d k8s-app=kube-dns,pod-template-hash=684d86ff88

kube-system metrics-server-64c6c494dc-gdw52 1/1 Running 11 43d k8s-app=metrics-server,pod-template-hash=64c6c494dc

kubernetes-dashboard dashboard-metrics-scraper-86bb69c5f6-6jrch 1/1 Running 9 43d k8s-app=dashboard-metrics-scraper,pod-template-hash=86bb69c5f6

kubernetes-dashboard kubernetes-dashboard-6576c84894-9f7gh 1/1 Running 19 43d k8s-app=kubernetes-dashboard,pod-template-hash=6576c84894

[root@k8s-master01 ~]# kubectl get po -A -l 'k8s-app in (metrics-server,kubernetes-dashboard)' # filter on multiple label values

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system metrics-server-64c6c494dc-gdw52 1/1 Running 11 43d

kubernetes-dashboard kubernetes-dashboard-6576c84894-9f7gh 1/1 Running 19 43d

[root@k8s-master01 ~]#

Other resources are labeled in the same way.
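Labels can also be declared in a manifest instead of being added afterwards with kubectl label. The following is only a minimal sketch and is not taken from the original setup: the Deployment name label-demo and its app/version values are hypothetical, while region: subnet7 and nginx:1.15.2 are reused from this article. It shows where labels live, how a set-based selector (the manifest counterpart of -l 'app in (...)') is written, and where nodeSelector sits.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: label-demo            # hypothetical name
  labels:                     # labels on the Deployment object itself
    app: label-demo
    version: v1
spec:
  replicas: 1
  selector:
    matchExpressions:         # set-based selector, same idea as "app in (label-demo)"
      - key: app
        operator: In
        values:
          - label-demo
  template:
    metadata:
      labels:                 # Pod labels; they must satisfy the selector above
        app: label-demo
        version: v1
    spec:
      nodeSelector:
        region: subnet7       # only nodes labeled region=subnet7 are eligible
      containers:
        - name: nginx
          image: nginx:1.15.2
```

After applying such a manifest, kubectl get po -l app=label-demo --show-labels should list the Pod together with both labels.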

Selector Matching

[root@k8s-master01 ~]# kubectl get svc --show-labels

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE LABELS

details ClusterIP 10.99.9.178 <none> 9080/TCP 45h app=details

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 3d19h component=apiserver,provider=kubernetes

nginx ClusterIP 10.106.194.137 <none> 80/TCP 2d21h app=productpage,version=v1

nginx-v2 ClusterIP 10.108.176.132 <none> 80/TCP 2d20h <none>

productpage ClusterIP 10.105.229.52 <none> 9080/TCP 45h app=productpage,tier=frontend

ratings ClusterIP 10.96.104.95 <none> 9080/TCP 45h app=ratings

reviews ClusterIP 10.102.188.143 <none> 9080/TCP 45h app=reviews

Select the svc whose app is details or productpage:

[root@k8s-master01 ~]# kubectl get svc -l 'app in (details, productpage)' --show-labels

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE LABELS

details ClusterIP 10.99.9.178 <none> 9080/TCP 45h app=details

nginx ClusterIP 10.106.194.137 <none> 80/TCP 2d21h app=productpage,version=v1

productpage ClusterIP 10.105.229.52 <none> 9080/TCP 45h app=productpage,tier=frontend

Select the svc whose app is details or productpage, excluding those with version=v1:

[root@k8s-master01 ~]# kubectl get svc -l version!=v1,'app in (details, productpage)' --show-labels

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE LABELS

details ClusterIP 10.99.9.178 <none> 9080/TCP 45h app=details

productpage ClusterIP 10.105.229.52 <none> 9080/TCP 45h app=productpage,tier=frontend

Select the svc that have a label key named app:

[root@k8s-master01 ~]# kubectl get svc -l app --show-labels

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE LABELS

details ClusterIP 10.99.9.178 <none> 9080/TCP 45h app=details

nginx ClusterIP 10.106.194.137 <none> 80/TCP 2d21h app=productpage,version=v1

productpage ClusterIP 10.105.229.52 <none> 9080/TCP 45h app=productpage,tier=frontend

ratings ClusterIP 10.96.104.95 <none> 9080/TCP 45h app=ratings

reviews ClusterIP 10.102.188.143 <none> 9080/TCP 45h app=reviews

Modifying a Label

[root@k8s-master01 canary]# kubectl get svc -n canary-production --show-labels

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE LABELS

canary-v1 ClusterIP 10.110.253.62 <none> 8080/TCP 26h env=canary,version=v1

[root@k8s-master01 canary]# kubectl label svc canary-v1 -n canary-production version=v2 --overwrite

service/canary-v1 labeled

[root@k8s-master01 canary]# kubectl get svc -n canary-production --show-labels

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE LABELS

canary-v1 ClusterIP 10.110.253.62 <none> 8080/TCP 26h env=canary,version=v2

Deleting a Label

[root@k8s-master01 canary]# kubectl label svc canary-v1 -n canary-production version-

service/canary-v1 labeled

[root@k8s-master01 canary]# kubectl get svc -n canary-production --show-labels

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE LABELS

canary-v1 ClusterIP 10.110.253.62 <none> 8080/TCP 26h env=canary

Service

A Service can be thought of simply as a logical group of Pods together with a policy for accessing them; other Pods can reach the Pods behind a Service through that Service. Unlike a Pod, a Service has a fixed name that does not change once it has been created.

[root@k8s-master01 ~]# kubectl get svc -A # list all services

NAMESPACE NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

default kubernetes ClusterIP 192.168.0.1 <none> 443/TCP 45d

default nginx ClusterIP None <none> 80/TCP 2d7h

kube-system kube-dns ClusterIP 192.168.0.10 <none> 53/UDP,53/TCP,9153/TCP 45d

kube-system metrics-server ClusterIP 192.168.212.74 <none> 443/TCP 45d

kubernetes-dashboard dashboard-metrics-scraper ClusterIP 192.168.216.104 <none> 8000/TCP 45d

kubernetes-dashboard kubernetes-dashboard NodePort 192.168.19.209 <none> 443:31693/TCP 45d

[root@k8s-master01 ~]# kubectl get svc -n kube-system # list the services in kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kube-dns ClusterIP 192.168.0.10 <none> 53/UDP,53/TCP,9153/TCP 45d

metrics-server ClusterIP 192.168.212.74 <none> 443/TCP 45d

[root@k8s-master01 ~]# kubectl get ep -n kube-system # list the endpoints in kube-system

NAME ENDPOINTS AGE

kube-dns 172.27.14.224:53,172.27.14.224:53,172.27.14.224:9153 45d

metrics-server 172.25.92.77:4443 45d

[root@k8s-master01 ~]# kubectl get po -n kube-system -owide # show details of the pods in kube-system (their IPs match those in the endpoints)

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

calico-kube-controllers-cdd5755b9-qztxb 1/1 Running 11 45d 10.103.236.201 k8s-master01 <none> <none>

calico-node-6q95q 1/1 Running 17 45d 10.103.236.205 k8s-node02 <none> <none>

calico-node-cgp6p 1/1 Running 21 45d 10.103.236.203 k8s-master03 <none> <none>

calico-node-tmxtg 1/1 Running 16 45d 10.103.236.201 k8s-master01 <none> <none>

calico-node-wc674 1/1 Running 14 45d 10.103.236.202 k8s-master02 <none> <none>

calico-node-z8k7p 1/1 Running 12 45d 10.103.236.204 k8s-node01 <none> <none>

coredns-684d86ff88-mtkjf 1/1 Running 10 45d 172.27.14.224 k8s-node02 <none> <none>

metrics-server-64c6c494dc-gdw52 1/1 Running 14 45d 172.25.92.77 k8s-master02 <none> <none>

[root@k8s-master01 ~]#

Creating a Service

[root@k8s-master01 pod]# cat nginx-deploy.yaml # create the pods first

apiVersion: apps/v1
kind: Deployment
metadata:
  annotations:
    deployment.kubernetes.io/revision: "1"
  creationTimestamp: "2022-10-26T06:32:24Z"
  generation: 1
  labels:
    app: nginx
  name: nginx
  namespace: default
  resourceVersion: "594863"
  uid: 81733bda-9823-49cc-b12d-caddc2e0c318
spec:
  progressDeadlineSeconds: 600
  replicas: 2
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      app: nginx
  strategy:
    rollingUpdate:
      maxSurge: 25%
      maxUnavailable: 25%
    type: RollingUpdate
  template:
    metadata:
      creationTimestamp: null
      labels:
        app: nginx
    spec:
      containers:
      - image: nginx:1.15.2
        imagePullPolicy: IfNotPresent
        name: nginx
        resources: {}
        terminationMessagePath: /dev/termination-log
        terminationMessagePolicy: File
      dnsPolicy: ClusterFirst
      restartPolicy: Always
      schedulerName: default-scheduler
      securityContext: {}
      terminationGracePeriodSeconds: 30
status:
  availableReplicas: 1
  conditions:
  - lastTransitionTime: "2022-10-26T06:32:26Z"
    lastUpdateTime: "2022-10-26T06:32:26Z"
    message: Deployment has minimum availability.
    reason: MinimumReplicasAvailable
    status: "True"
    type: Available
  - lastTransitionTime: "2022-10-26T06:32:24Z"
    lastUpdateTime: "2022-10-26T06:32:26Z"
    message: ReplicaSet "nginx-66bbc9fdc5" has successfully progressed.
    reason: NewReplicaSetAvailable
    status: "True"
    type: Progressing
  observedGeneration: 1
  readyReplicas: 1
  replicas: 1
  updatedReplicas: 1

============================================================================

[root@k8s-master01 pod]# kubectl create -f nginx-deploy.yaml

deployment.apps/nginx created

[root@k8s-master01 pod]# kubectl get po

NAME READY STATUS RESTARTS AGE

busybox 1/1 Running 90 45d

nginx-66bbc9fdc5-dhrgc 1/1 Running 0 5s

nginx-66bbc9fdc5-lh7vw 0/1 ContainerCreating 0 5s

[root@k8s-master01 pod]#

[root@k8s-master01 ~]# kubectl get po -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

busybox 1/1 Running 93 46d 172.25.244.222 k8s-master01 <none> <none>

nginx-66bbc9fdc5-dhrgc 1/1 Running 1 34h 172.25.245.0 k8s-master01 <none> <none>

nginx-66bbc9fdc5-lh7vw 1/1 Running 1 34h 172.27.14.227 k8s-node02 <none> <none>

[root@k8s-master01 ~]# curl 172.25.245.0 # access the pod IP address

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>body {width: 35em;margin: 0 auto;font-family: Tahoma, Verdana, Arial, sans-serif;}

</style>

</head>

<body>

<h1>Welcome to nginx!</h1>

<p>If you see this page, the nginx web server is successfully installed and

working. Further configuration is required.</p><p>For online documentation and support please refer to

<a href="http://nginx.org/">nginx.org</a>.<br/>

Commercial support is available at

<a href="http://nginx.com/">nginx.com</a>.</p><p><em>Thank you for using nginx.</em></p>

</body>

</html>

============================================================================

[root@k8s-master01 ~]# kubectl get po --show-labels

NAME READY STATUS RESTARTS AGE LABELS

busybox 1/1 Running 93 46d <none>

nginx-66bbc9fdc5-dhrgc 1/1 Running 1 34h app=nginx,pod-template-hash=66bbc9fdc5

nginx-66bbc9fdc5-lh7vw 1/1 Running 1 34h app=nginx,pod-template-hash=66bbc9fdc5

[root@k8s-master01 ~]# vim nginx-svc.yaml # create the service

apiVersion: v1
kind: Service
metadata:
  labels:
    app: nginx-svc
  name: nginx-svc
spec:
  ports:
  - name: http # name of the Service port
    port: 80 # the Service's own port; servicea --> serviceb: http://serviceb, http://serviceb:8080
    protocol: TCP # UDP, TCP or SCTP; default: TCP
    targetPort: 80 # port of the backend application
  - name: https
    port: 443
    protocol: TCP
    targetPort: 443
  selector:
    app: nginx # matches the label of the pods above
  sessionAffinity: None
  type: ClusterIP

[root@k8s-master01 ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 192.168.0.1 <none> 443/TCP 46d

[root@k8s-master01 ~]# kubectl create -f nginx-svc.yaml

service/nginx-svc created

[root@k8s-master01 ~]# kubectl get svc -owide

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

kubernetes ClusterIP 192.168.0.1 <none> 443/TCP 46d <none>

nginx-svc ClusterIP 192.168.193.36 <none> 80/TCP,443/TCP 11s app=nginx

[root@k8s-master01 ~]# curl 192.168.193.36 # requesting the svc IP address reaches the pod

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>body {width: 35em;margin: 0 auto;font-family: Tahoma, Verdana, Arial, sans-serif;}

</style>

</head>

<body>

<h1>Welcome to nginx!</h1>

<p>If you see this page, the nginx web server is successfully installed and

working. Further configuration is required.</p><p>For online documentation and support please refer to

<a href="http://nginx.org/">nginx.org</a>.<br/>

Commercial support is available at

<a href="http://nginx.com/">nginx.com</a>.</p><p><em>Thank you for using nginx.</em></p>

</body>

</html>

============================================================================

[root@k8s-master01 ~]# kubectl get pod # list the pods

NAME READY STATUS RESTARTS AGE

busybox 1/1 Running 93 46d

nginx-66bbc9fdc5-dhrgc 1/1 Running 1 34h

nginx-66bbc9fdc5-lh7vw 1/1 Running 1 34h

[root@k8s-master01 ~]# kubectl logs -f nginx-66bbc9fdc5-dhrgc # view the pod access log

10.103.236.201 - - [31/Oct/2022:01:25:27 +0000] "GET / HTTP/1.1" 200 612 "-" "curl/7.29.0" "-"

============================================================================

[root@k8s-master01 ~]# kubectl get po

NAME READY STATUS RESTARTS AGE

busybox 1/1 Running 93 46d

nginx-66bbc9fdc5-dhrgc 1/1 Running 1 34h

nginx-66bbc9fdc5-lh7vw 1/1 Running 1 34h

[root@k8s-master01 ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 192.168.0.1 <none> 443/TCP 46d

nginx-svc ClusterIP 192.168.193.36 <none> 80/TCP,443/TCP 7m2s

[root@k8s-master01 ~]# kubectl exec -it busybox -- sh # busybox can reach the svc [pods inside the cluster can access it through the svc]

/ # wget http://nginx-svc

Connecting to nginx-svc (192.168.193.36:80)

index.html 100% |**********************************************************************************************************| 612 0:00:00 ETA

/ # cat index.html

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>body {width: 35em;margin: 0 auto;font-family: Tahoma, Verdana, Arial, sans-serif;}

</style>

</head>

<body>

<h1>Welcome to nginx!</h1>

<p>If you see this page, the nginx web server is successfully installed and

working. Further configuration is required.</p><p>For online documentation and support please refer to

<a href="http://nginx.org/">nginx.org</a>.<br/>

Commercial support is available at

<a href="http://nginx.com/">nginx.com</a>.</p><p><em>Thank you for using nginx.</em></p>

</body>

</html>

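Using a Service to Proxy Applications Outside k8s

Use cases:

1: You want to reach an external middleware service in production through a fixed name rather than an IP address

2: You want a Service to point to a service in another Namespace or in another cluster

3: A project is being migrated into the k8s cluster while some of its services remain outside; a Service can proxy to those services outside the cluster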
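For the second use case, one common approach is an ExternalName Service whose target is the cluster DNS name of a Service in another namespace. The manifest below is a sketch under stated assumptions: the Service backend, the namespace other-ns and the alias name backend-alias are all hypothetical, and the cluster domain is assumed to be the default cluster.local.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: backend-alias         # hypothetical local alias
  namespace: default
spec:
  type: ExternalName
  # Assumed target: Service "backend" in namespace "other-ns",
  # resolved through the default cluster domain cluster.local
  externalName: backend.other-ns.svc.cluster.local
```

Pods in the default namespace could then use the name backend-alias, and cluster DNS would answer with a CNAME to the Service in the other namespace. No proxying or port remapping is involved, so the client still has to use the target's own port.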

Reverse-Proxying an External Application with a Service

[root@k8s-master01 ~]# vim nginx-svc-external.yaml

apiVersion: v1
kind: Service
metadata:
  labels:
    app: nginx-svc-external
  name: nginx-svc-external
spec:
  ports:
  - name: http # name of the Service port
    port: 80 # the Service's own port; servicea --> serviceb: http://serviceb, http://serviceb:8080
    protocol: TCP # UDP, TCP or SCTP; default: TCP
    targetPort: 80 # port of the backend application
  sessionAffinity: None
  type: ClusterIP

[root@k8s-master01 ~]# vim nginx-ep-external.yaml

apiVersion: v1
kind: Endpoints
metadata:
  labels:
    app: nginx-svc-external
  name: nginx-svc-external
  namespace: default
subsets:
- addresses:
  - ip: 14.215.177.38 # external IP address (a Baidu address is used here as an example)
  ports:
  - name: http
    port: 80 # external port number
    protocol: TCP

[root@k8s-master01 ~]# kubectl create -f nginx-svc-external.yaml # create the svc

service/nginx-svc-external created

[root@k8s-master01 ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 192.168.0.1 <none> 443/TCP 46d

nginx-svc ClusterIP 192.168.193.36 <none> 80/TCP,443/TCP 34m

nginx-svc-external ClusterIP 192.168.77.183 <none> 80/TCP 53s

[root@k8s-master01 ~]# kubectl get ep # no ep named nginx-svc-external exists yet (a svc without a selector does not get an ep created for it)

NAME ENDPOINTS AGE

kubernetes 10.103.236.201:6443,10.103.236.202:6443,10.103.236.203:6443 46d

nginx-svc 172.25.245.0:443,172.27.14.227:443,172.25.245.0:80 + 1 more... 33m

[root@k8s-master01 ~]# kubectl create -f nginx-ep-external.yaml # create the ep

endpoints/nginx-svc-external created

[root@k8s-master01 ~]# kubectl get ep # the ep has now been created manually

NAME ENDPOINTS AGE

kubernetes 10.103.236.201:6443,10.103.236.202:6443,10.103.236.203:6443 46d

nginx-svc 172.25.245.0:443,172.27.14.227:443,172.25.245.0:80 + 1 more... 42m

nginx-svc-external 14.215.177.38:80 36s

[root@k8s-master01 ~]# curl baidu.com -I

HTTP/1.1 200 OK

Date: Mon, 31 Oct 2022 02:22:07 GMT

Server: Apache

Last-Modified: Tue, 12 Jan 2010 13:48:00 GMT

ETag: "51-47cf7e6ee8400"

Accept-Ranges: bytes

Content-Length: 81

Cache-Control: max-age=86400

Expires: Tue, 01 Nov 2022 02:22:07 GMT

Connection: Keep-Alive

Content-Type: text/html

[root@k8s-master01 ~]# curl 14.215.177.38 -I # the external application is reachable

HTTP/1.1 200 OK

Accept-Ranges: bytes

Cache-Control: private, no-cache, no-store, proxy-revalidate, no-transform

Connection: keep-alive

Content-Length: 277

Content-Type: text/html

Date: Mon, 31 Oct 2022 02:22:18 GMT

Etag: "575e1f72-115"

Last-Modified: Mon, 13 Jun 2016 02:50:26 GMT

Pragma: no-cache

Server: bfe/1.0.8.18

Reverse-Proxying a Domain Name with a Service

[root@k8s-master01 ~]# vim nginx-externalName.yaml

apiVersion: v1
kind: Service
metadata:
  labels:
    app: nginx-externalname
  name: nginx-externalname
spec:
  type: ExternalName
  externalName: www.baidu.com

[root@k8s-master01 ~]# kubectl apply -f nginx-externalName.yaml # apply: updates the resource if it exists, creates it otherwise

service/nginx-externalname created

[root@k8s-master01 ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 192.168.0.1 <none> 443/TCP 47d

nginx-externalname ExternalName <none> www.baidu.com <none> 106s

nginx-svc ClusterIP 192.168.193.36 <none> 80/TCP,443/TCP 53m

nginx-svc-external ClusterIP 192.168.77.183 <none> 80/TCP 20m

[root@k8s-master01 ~]# kubectl get po

NAME READY STATUS RESTARTS AGE

busybox 1/1 Running 94 46d

nginx-66bbc9fdc5-dhrgc 1/1 Running 1 35h

nginx-66bbc9fdc5-lh7vw 1/1 Running 1 35h

[root@k8s-master01 ~]# kubectl exec -it busybox -- sh

/ # wget nginx-externalname # cross-domain access, rejected

Connecting to nginx-externalname (14.215.177.39:80)

wget: server returned error: HTTP/1.1 403 Forbidden

/ # nslookup nginx-externalname

Server: 192.168.0.10

Address 1: 192.168.0.10 kube-dns.kube-system.svc.cluster.local

Name: nginx-externalname

Address 1: 14.215.177.38 14-215-177-38.nginx-svc-external.default.svc.cluster.local

Address 2: 14.215.177.39

/ # wget 14.215.177.38

Connecting to 14.215.177.38 (14.215.177.38:80)

index.html 100% |**********************************************************************************************************| 2381 0:00:00 ETA

/ #

Service Types

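ClusterIP: used inside the cluster; this is also the default.

ExternalName: maps the Service to an external name by returning the configured CNAME record.

NodePort: opens a port on every node that runs kube-proxy. This port proxies to the backend Pods, so clients outside the cluster can reach the Pods' service through a node's IP address and the NodePort port number. The default NodePort range is 30000-32767.

LoadBalancer: exposes the service through a cloud provider's load balancer.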
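As an illustration of the NodePort type, the sketch below exposes the nginx Pods from the earlier section on a fixed node port. The Service name nginx-svc-nodeport and the port 30080 are hypothetical choices; the only constraint taken from the text is that nodePort must fall inside the configured range (30000-32767 by default). If nodePort is omitted, a free port from that range is allocated automatically.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: nginx-svc-nodeport    # hypothetical name
spec:
  type: NodePort
  selector:
    app: nginx                # same Pod label used by nginx-svc earlier
  ports:
    - name: http
      protocol: TCP
      port: 80                # Service port inside the cluster
      targetPort: 80          # container port of the backend Pods
      nodePort: 30080         # hypothetical; must lie within the NodePort range
```

With this in place, a request to http://<any-node-IP>:30080 from outside the cluster would be forwarded to one of the Pods labeled app=nginx.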

Check the NodePort range:

[root@k8s-master01 ~]# cat /usr/lib/systemd/system/kube-apiserver.service # how to check on a binary installation; on a kubeadm installation, look at the yaml file under /etc/manu...

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]
ExecStart=/usr/local/bin/kube-apiserver \
      --v=2 \
      --logtostderr=true \
      --allow-privileged=true \
      --bind-address=0.0.0.0 \
      --secure-port=6443 \
      --insecure-port=0 \
      --advertise-address=10.103.236.201 \
      --service-cluster-ip-range=192.168.0.0/16 \
      --service-node-port-range=30000-32767 \
      --etcd-servers=https://10.103.236.201:2379,https://10.103.236.202:2379,https://10.103.236.203:2379 \
      --etcd-cafile=/etc/etcd/ssl/etcd-ca.pem \
      --etcd-certfile=/etc/etcd/ssl/etcd.pem \
      --etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \
      --client-ca-file=/etc/kubernetes/pki/ca.pem \
      --tls-cert-file=/etc/kubernetes/pki/apiserver.pem \
      --tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \
      --kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \
      --kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \
      --service-account-key-file=/etc/kubernetes/pki/sa.pub \
      --service-account-signing-key-file=/etc/kubernetes/pki/sa.key \
      --service-account-issuer=https://kubernetes.default.svc.cluster.local \
      --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \
      --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \
      --authorization-mode=Node,RBAC \
      --enable-bootstrap-token-auth=true \
      --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \
      --proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.pem \
      --proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client-key.pem \
      --requestheader-allowed-names=aggregator \
      --requestheader-group-headers=X-Remote-Group \
      --requestheader-extra-headers-prefix=X-Remote-Extra- \
      --requestheader-username-headers=X-Remote-User
      # --token-auth-file=/etc/kubernetes/token.csv

Restart=on-failure
RestartSec=10s
LimitNOFILE=65535

[Install]
WantedBy=multi-user.target

[root@k8s-master01 ~]#

Ingress Concepts

Put simply, ingress is a k8s resource type just like the Service and Deployment covered earlier; it is used to reach applications inside k8s by domain name.
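To make the idea concrete, here is a minimal sketch of an Ingress object that routes a domain name to the nginx-svc Service created earlier. It assumes a cluster that serves the networking.k8s.io/v1 API (Kubernetes 1.19 or later) and an ingress controller registered under the IngressClass name nginx; the object name nginx-ingress and the host nginx.example.com are hypothetical.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: nginx-ingress         # hypothetical name
  namespace: default
spec:
  ingressClassName: nginx     # assumed IngressClass served by the controller
  rules:
    - host: nginx.example.com # hypothetical domain name
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: nginx-svc   # Service created in the earlier section
                port:
                  number: 80
```

The Ingress object only describes the routing rule. Nothing answers for that host until an ingress controller is running in the cluster.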

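Installing Ingress

Installing helm:

https://helm.sh/docs/intro/install/

Download the version you need

Extract it (tar -zxvf helm-v3.0.0-linux-amd64.tar.gz)

Find the helm binary in the extracted directory and move it to the desired location (mv linux-amd64/helm /usr/local/bin/helm)

[root@k8s-master01 ~]# helm version # check the helm version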

version.BuildInfo{Version:"v3.10.1", GitCommit:"9f88ccb6aee40b9a0535fcc7efea6055e1ef72c9", GitTreeState:"clean", GoVersion:"go1.18.7"}

Adding Public Repositories to helm

# Configure the Microsoft (Azure) mirror for helm

helm repo add stable http://mirror.azure.cn/kubernetes/charts

# Configure the Aliyun mirror for helm

helm repo add aliyun https://kubernetes.oss-cn-hangzhou.aliyuncs.com/charts

helm repo list

helm repo update

Installing ingress-nginx with helm:

https://kubernetes.github.io/ingress-nginx/deploy/#using-helm

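1. Download ingress-nginx-4.2.5.tgz

# helm fetch needs the ingress-nginx repo to be added first: helm repo add ingress-nginx https://kubernetes.github.io/ingress-nginx

helm fetch ingress-nginx/ingress-nginx --version 4.2.5

# or: curl -LO https://github.com/kubernetes/ingress-nginx/releases/download/helm-chart-4.2.5/ingress-nginx-4.2.5.tgz

# or: curl -LO https://storage.corpintra.plus/kubernetes/charts/ingress-nginx-4.2.5.tgz

2. Extract and edit the files

[root@k8s-master01 ingress]# tar -xvf ingress-nginx-4.2.5.tgz # run inside a newly created directory named ingress

[root@k8s-master01 ingress]# cd ingress-nginx

3. What needs to be changed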

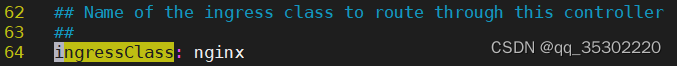

commonLabels: {}
controller:
  name: controller
  image:
    registry: registry.cn-hangzhou.aliyuncs.com # change to suit your environment
    image: google_containers/nginx-ingress-controller # same as above
    tag: "v1.3.0"
    # digest: sha256:31f47c1e202b39fadecf822a9b76370bd4baed199a005b3e7d4d1455f4fd3fe2 # comment this out
  dnsPolicy: ClusterFirstWithHostNet # already changed
  hostNetwork: true # change to true
  kind: DaemonSet # already changed
  nodeSelector:
    kubernetes.io/os: linux
    ingress: "true" # change to true
  # the patch block below sits under controller.admissionWebhooks in values.yaml
  patch:
    enabled: true
    image:
      registry: registry.cn-hangzhou.aliyuncs.com # change to suit your environment
      image: google_containers/kube-webhook-certgen # same as above
      tag: v1.3.0
      # digest: sha256:64d8c73dca984af206adf9d6d7e46aa550362b1d7a01f3a0a91b20cc67868660 # comment this out

4. The fully modified file below can be used as-is

[root@k8s-master01 ingress-nginx]# vim values.yaml

## nginx configuration
## Ref: https://github.com/kubernetes/ingress-nginx/blob/main/docs/user-guide/nginx-configuration/index.md

commonLabels: {}
# scmhash: abc123
# myLabel: aakkmd

controller:
  name: controller
  image:
    chroot: false
    registry: registry.cn-hangzhou.aliyuncs.com
    image: google_containers/nginx-ingress-controller
    ## repository:
    tag: "v1.3.1"
    #digest: sha256:54f7fe2c6c5a9db9a0ebf1131797109bb7a4d91f56b9b362bde2abd237dd1974
    #digestChroot: sha256:a8466b19c621bd550b1645e27a004a5cc85009c858a9ab19490216735ac432b1
    pullPolicy: IfNotPresent
    # www-data -> uid 101
    runAsUser: 101
    allowPrivilegeEscalation: true

  # -- Use an existing PSP instead of creating one
  existingPsp: ""

  # -- Configures the controller container name
  containerName: controller

  # -- Configures the ports that the nginx-controller listens on
  containerPort:
    http: 80
    https: 443

  # -- Will add custom configuration options to Nginx https://kubernetes.github.io/ingress-nginx/user-guide/nginx-configuration/configmap/
  config: {}

  # -- Annotations to be added to the controller config configuration configmap.
  configAnnotations: {}

  # -- Will add custom headers before sending traffic to backends according to https://github.com/kubernetes/ingress-nginx/tree/main/docs/examples/customization/custom-headers
  proxySetHeaders: {}

  # -- Will add custom headers before sending response traffic to the client according to: https://kubernetes.github.io/ingress-nginx/user-guide/nginx-configuration/configmap/#add-headers
  addHeaders: {}

  # -- Optionally customize the pod dnsConfig.
  dnsConfig: {}

  # -- Optionally customize the pod hostname.
  hostname: {}

  # -- Optionally change this to ClusterFirstWithHostNet in case you have 'hostNetwork: true'.
  # By default, while using host network, name resolution uses the host's DNS. If you wish nginx-controller
  # to keep resolving names inside the k8s network, use ClusterFirstWithHostNet.
  dnsPolicy: ClusterFirstWithHostNet

  # -- Bare-metal considerations via the host network https://kubernetes.github.io/ingress-nginx/deploy/baremetal/#via-the-host-network
  # Ingress status was blank because there is no Service exposing the NGINX Ingress controller in a configuration using the host network, the default --publish-service flag used in standard cloud setups does not apply
  reportNodeInternalIp: false

  # -- Process Ingress objects without ingressClass annotation/ingressClassName field
  # Overrides value for --watch-ingress-without-class flag of the controller binary
  # Defaults to false
  watchIngressWithoutClass: false

  # -- Process IngressClass per name (additionally as per spec.controller).
  ingressClassByName: false

  # -- This configuration defines if Ingress Controller should allow users to set
  # their own *-snippet annotations, otherwise this is forbidden / dropped
  # when users add those annotations.
  # Global snippets in ConfigMap are still respected
  allowSnippetAnnotations: true

  # -- Required for use with CNI based kubernetes installations (such as ones set up by kubeadm),
  # since CNI and hostport don't mix yet. Can be deprecated once https://github.com/kubernetes/kubernetes/issues/23920
  # is merged
  hostNetwork: true

  ## Use host ports 80 and 443
  ## Disabled by default
  hostPort:
    # -- Enable 'hostPort' or not
    enabled: false
    ports:
      # -- 'hostPort' http port
      http: 80
      # -- 'hostPort' https port
      https: 443

  # -- Election ID to use for status update
  electionID: ingress-controller-leader

  ## This section refers to the creation of the IngressClass resource
  ## IngressClass resources are supported since k8s >= 1.18 and required since k8s >= 1.19
  ingressClassResource:
    # -- Name of the ingressClass
    name: nginx
    # -- Is this ingressClass enabled or not
    enabled: true
    # -- Is this the default ingressClass for the cluster
    default: false
    # -- Controller-value of the controller that is processing this ingressClass
    controllerValue: "k8s.io/ingress-nginx"

    # -- Parameters is a link to a custom resource containing additional
    # configuration for the controller. This is optional if the controller
    # does not require extra parameters.
    parameters: {}

  # -- For backwards compatibility with ingress.class annotation, use ingressClass.
  # Algorithm is as follows, first ingressClassName is considered, if not present, controller looks for ingress.class annotation
  ingressClass: nginx

  # -- Labels to add to the pod container metadata
  podLabels: {}
  #  key: value

  # -- Security Context policies for controller pods
  podSecurityContext: {}

  # -- See https://kubernetes.io/docs/tasks/administer-cluster/sysctl-cluster/ for notes on enabling and using sysctls
  sysctls: {}
  # sysctls:
  #   "net.core.somaxconn": "8192"

  # -- Allows customization of the source of the IP address or FQDN to report
  # in the ingress status field. By default, it reads the information provided
  # by the service. If disable, the status field reports the IP address of the
  # node or nodes where an ingress controller pod is running.
  publishService:
    # -- Enable 'publishService' or not
    enabled: true
    # -- Allows overriding of the publish service to bind to
    # Must be <namespace>/<service_name>
    pathOverride: ""

  # Limit the scope of the controller to a specific namespace
  scope:
    # -- Enable 'scope' or not
    enabled: false
    # -- Namespace to limit the controller to; defaults to $(POD_NAMESPACE)
    namespace: ""
    # -- When scope.enabled == false, instead of watching all namespaces, we watching namespaces whose labels
    # only match with namespaceSelector. Format like foo=bar. Defaults to empty, means watching all namespaces.
    namespaceSelector: ""

  # -- Allows customization of the configmap / nginx-configmap namespace; defaults to $(POD_NAMESPACE)
  configMapNamespace: ""

  tcp:
    # -- Allows customization of the tcp-services-configmap; defaults to $(POD_NAMESPACE)
    configMapNamespace: ""
    # -- Annotations to be added to the tcp config configmap
    annotations: {}

  udp:
    # -- Allows customization of the udp-services-configmap; defaults to $(POD_NAMESPACE)
    configMapNamespace: ""
    # -- Annotations to be added to the udp config configmap
    annotations: {}

  # -- Maxmind license key to download GeoLite2 Databases.
  ## https://blog.maxmind.com/2019/12/18/significant-changes-to-accessing-and-using-geolite2-databases
  maxmindLicenseKey: ""

  # -- Additional command line arguments to pass to nginx-ingress-controller
  # E.g. to specify the default SSL certificate you can use
  extraArgs: {}
  ## extraArgs:
  ##   default-ssl-certificate: "<namespace>/<secret_name>"

  # -- Additional environment variables to set
  extraEnvs: []
  # extraEnvs:
  #   - name: FOO
  #     valueFrom:
  #       secretKeyRef:
  #         key: FOO
  #         name: secret-resource

  # -- Use a `DaemonSet` or `Deployment`
  kind: DaemonSet

  # -- Annotations to be added to the controller Deployment or DaemonSet
  ##
  annotations: {}
  #  keel.sh/pollSchedule: "@every 60m"

  # -- Labels to be added to the controller Deployment or DaemonSet and other resources that do not have option to specify labels
  ##
  labels: {}
  #  keel.sh/policy: patch
  #  keel.sh/trigger: poll

  # -- The update strategy to apply to the Deployment or DaemonSet
  ##
  updateStrategy: {}
  #  rollingUpdate:
  #    maxUnavailable: 1
  #  type: RollingUpdate

  # -- `minReadySeconds` to avoid killing pods before we are ready
  ##
  minReadySeconds: 0

  # -- Node tolerations for server scheduling to nodes with taints
  ## Ref: https://kubernetes.io/docs/concepts/configuration/assign-pod-node/
  ##
  tolerations: []
  #  - key: "key"
  #    operator: "Equal|Exists"
  #    value: "value"
  #    effect: "NoSchedule|PreferNoSchedule|NoExecute(1.6 only)"

  # -- Affinity and anti-affinity rules for server scheduling to nodes
  ## Ref: https://kubernetes.io/docs/concepts/configuration/assign-pod-node/#affinity-and-anti-affinity
  ##
  affinity: {}
  # # An example of preferred pod anti-affinity, weight is in the range 1-100
  # podAntiAffinity:
  #   preferredDuringSchedulingIgnoredDuringExecution:
  #   - weight: 100
  #     podAffinityTerm:
  #       labelSelector:
  #         matchExpressions:
  #         - key: app.kubernetes.io/name
  #           operator: In
  #           values:
  #           - ingress-nginx
  #         - key: app.kubernetes.io/instance
  #           operator: In
  #           values:
  #           - ingress-nginx
  #         - key: app.kubernetes.io/component
  #           operator: In
  #           values:
  #           - controller
  #       topologyKey: kubernetes.io/hostname

  # # An example of required pod anti-affinity
  # podAntiAffinity:
  #   requiredDuringSchedulingIgnoredDuringExecution:
  #   - labelSelector:
  #       matchExpressions:
  #       - key: app.kubernetes.io/name
  #         operator: In
  #         values:
  #         - ingress-nginx
  #       - key: app.kubernetes.io/instance
  #         operator: In
  #         values:
  #         - ingress-nginx
  #       - key: app.kubernetes.io/component
  #         operator: In
  #         values:
  #         - controller
  #     topologyKey: "kubernetes.io/hostname"

  # -- Topology spread constraints rely on node labels to identify the topology domain(s) that each Node is in.
  ## Ref: https://kubernetes.io/docs/concepts/workloads/pods/pod-topology-spread-constraints/
  ##
  topologySpreadConstraints: []
  # - maxSkew: 1
  #   topologyKey: topology.kubernetes.io/zone
  #   whenUnsatisfiable: DoNotSchedule
  #   labelSelector:
  #     matchLabels:
  #       app.kubernetes.io/instance: ingress-nginx-internal

  # -- `terminationGracePeriodSeconds` to avoid killing pods before we are ready
  ## wait up to five minutes for the drain of connections
  ##
  terminationGracePeriodSeconds: 300

  # -- Node labels for controller pod assignment
  ## Ref: https://kubernetes.io/docs/user-guide/node-selection/
  ##
  nodeSelector:
    kubernetes.io/os: linux
    ingress: "true"

  ## Liveness and readiness probe values
  ## Ref: https://kubernetes.io/docs/concepts/workloads/pods/pod-lifecycle/#container-probes
  ##
  ## startupProbe:
  ##   httpGet:
  ##     # should match container.healthCheckPath
  ##     path: "/healthz"
  ##     port: 10254
  ##     scheme: HTTP
  ##   initialDelaySeconds: 5
  ##   periodSeconds: 5
  ##   timeoutSeconds: 2
  ##   successThreshold: 1
  ##   failureThreshold: 5
  livenessProbe:
    httpGet:
      # should match container.healthCheckPath
      path: "/healthz"
      port: 10254
      scheme: HTTP
    initialDelaySeconds: 10
    periodSeconds: 10
    timeoutSeconds: 1
    successThreshold: 1
    failureThreshold: 5
  readinessProbe:
    httpGet:
      # should match container.healthCheckPath
      path: "/healthz"
      port: 10254
      scheme: HTTP
    initialDelaySeconds: 10
    periodSeconds: 10
    timeoutSeconds: 1
    successThreshold: 1
    failureThreshold: 3

  # -- Path of the health check endpoint. All requests received on the port defined by
  # the healthz-port parameter are forwarded internally to this path.
  healthCheckPath: "/healthz"

  # -- Address to bind the health check endpoint.
  # It is better to set this option to the internal node address
  # if the ingress nginx controller is running in the `hostNetwork: true` mode.
  healthCheckHost: ""

  # -- Annotations to be added to controller pods
  ##
  podAnnotations: {}

  replicaCount: 1

  minAvailable: 1

  ## Define requests resources to avoid probe issues due to CPU utilization in busy nodes
  ## ref: https://github.com/kubernetes/ingress-nginx/issues/4735#issuecomment-551204903
  ## Ideally, there should be no limits.
  ## https://engineering.indeedblog.com/blog/2019/12/cpu-throttling-regression-fix/
  resources:
    ##  limits:
    ##    cpu: 100m
    ##    memory: 90Mi
    requests:
      cpu: 100m
      memory: 90Mi

  # Mutually exclusive with keda autoscaling
  autoscaling:
    enabled: false
    minReplicas: 1
    maxReplicas: 11
    targetCPUUtilizationPercentage: 50
    targetMemoryUtilizationPercentage: 50
    behavior: {}
    # scaleDown:
    #   stabilizationWindowSeconds: 300
    #   policies:
    #   - type: Pods
    #     value: 1
    #     periodSeconds: 180
    # scaleUp:
    #   stabilizationWindowSeconds: 300
    #   policies:
    #   - type: Pods
    #     value: 2
    #     periodSeconds: 60

  autoscalingTemplate: []
  # Custom or additional autoscaling metrics
  # ref: https://kubernetes.io/docs/tasks/run-application/horizontal-pod-autoscale/#support-for-custom-metrics
  # - type: Pods
  #   pods:
  #     metric:
  #       name: nginx_ingress_controller_nginx_process_requests_total
  #     target:
  #       type: AverageValue
  #       averageValue: 10000m

  # Mutually exclusive with hpa autoscaling
  keda:
    apiVersion: "keda.sh/v1alpha1"
    ## apiVersion changes with keda 1.x vs 2.x
    ## 2.x = keda.sh/v1alpha1
    ## 1.x = keda.k8s.io/v1alpha1
    enabled: false
    minReplicas: 1
    maxReplicas: 11
    pollingInterval: 30
    cooldownPeriod: 300
    restoreToOriginalReplicaCount: false
    scaledObject:
      annotations: {}
      # Custom annotations for ScaledObject resource
      # annotations:
      # key: value
    triggers: []
    # - type: prometheus
    #   metadata:
    #     serverAddress: http://<prometheus-host>:9090
    #     metricName: http_requests_total
    #     threshold: '100'
    #     query: sum(rate(http_requests_total{deployment="my-deployment"}[2m]))

    behavior: {}
    # scaleDown:
    #   stabilizationWindowSeconds: 300
    #   policies:
    #   - type: Pods
    #     value: 1
    #     periodSeconds: 180
    # scaleUp:
    #   stabilizationWindowSeconds: 300
    #   policies:
    #   - type: Pods
    #     value: 2
    #     periodSeconds: 60

  # -- Enable mimalloc as a drop-in replacement for malloc.
  ## ref: https://github.com/microsoft/mimalloc
  ##
  enableMimalloc: true

  ## Override NGINX template
  customTemplate:
    configMapName: ""
    configMapKey: ""

  service:
    enabled: true

    # -- If enabled is adding an appProtocol option for Kubernetes service. An appProtocol field replacing annotations that were
    # using for setting a backend protocol. Here is an example for AWS: service.beta.kubernetes.io/aws-load-balancer-backend-protocol: http
    # It allows choosing the protocol for each backend specified in the Kubernetes service.
    # See the following GitHub issue for more details about the purpose: https://github.com/kubernetes/kubernetes/issues/40244
    # Will be ignored for Kubernetes versions older than 1.20
    ##
    appProtocol: true

    annotations: {}
    labels: {}
    # clusterIP: ""

    # -- List of IP addresses at which the controller services are available
    ## Ref: https://kubernetes.io/docs/user-guide/services/#external-ips
    ##
    externalIPs: []

    # -- Used by cloud providers to connect the resulting `LoadBalancer` to a pre-existing static IP according to https://kubernetes.io/docs/concepts/services-networking/service/#loadbalancer
    loadBalancerIP: ""
    loadBalancerSourceRanges: []

    enableHttp: true
    enableHttps: true

    ## Set external traffic policy to: "Local" to preserve source IP on providers supporting it.
    ## Ref: https://kubernetes.io/docs/tutorials/services/source-ip/#source-ip-for-services-with-typeloadbalancer
    # externalTrafficPolicy: ""

    ## Must be either "None" or "ClientIP" if set.
Kubernetes will default to "None".## Ref: https://kubernetes.io/docs/concepts/services-networking/service/#virtual-ips-and-service-proxies# sessionAffinity: ""## Specifies the health check node port (numeric port number) for the service. If healthCheckNodePort isn’t specified,## the service controller allocates a port from your cluster’s NodePort range.## Ref: https://kubernetes.io/docs/tasks/access-application-cluster/create-external-load-balancer/#preserving-the-client-source-ip# healthCheckNodePort: 0# -- Represents the dual-stack-ness requested or required by this Service. Possible values are# SingleStack, PreferDualStack or RequireDualStack.# The ipFamilies and clusterIPs fields depend on the value of this field.## Ref: https://kubernetes.io/docs/concepts/services-networking/dual-stack/ipFamilyPolicy: "SingleStack"# -- List of IP families (e.g. IPv4, IPv6) assigned to the service. This field is usually assigned automatically# based on cluster configuration and the ipFamilyPolicy field.## Ref: https://kubernetes.io/docs/concepts/services-networking/dual-stack/ipFamilies:- IPv4ports:http: 80https: 443targetPorts:http: httphttps: httpstype: LoadBalancer## type: NodePort## nodePorts:## http: 32080## https: 32443## tcp:## 8080: 32808nodePorts:http: ""https: ""tcp: {}udp: {}external:enabled: trueinternal:# -- Enables an additional internal load balancer (besides the external one).enabled: false# -- Annotations are mandatory for the load balancer to come up. Varies with the cloud service.annotations: {}# loadBalancerIP: ""# -- Restrict access For LoadBalancer service. 
Defaults to 0.0.0.0/0.loadBalancerSourceRanges: []## Set external traffic policy to: "Local" to preserve source IP on## providers supporting it## Ref: https://kubernetes.io/docs/tutorials/services/source-ip/#source-ip-for-services-with-typeloadbalancer# externalTrafficPolicy: ""# shareProcessNamespace enables process namespace sharing within the pod.# This can be used for example to signal log rotation using `kill -USR1` from a sidecar.shareProcessNamespace: false# -- Additional containers to be added to the controller pod.# See https://github.com/lemonldap-ng-controller/lemonldap-ng-controller as example.extraContainers: []# - name: my-sidecar# - name: POD_NAME# valueFrom:# fieldRef:# fieldPath: metadata.name# - name: POD_NAMESPACE# valueFrom:# fieldRef:# fieldPath: metadata.namespace# volumeMounts:# - name: copy-portal-skins# mountPath: /srv/var/lib/lemonldap-ng/portal/skins# -- Additional volumeMounts to the controller main container.extraVolumeMounts: []# - name: copy-portal-skins# mountPath: /var/lib/lemonldap-ng/portal/skins# -- Additional volumes to the controller pod.extraVolumes: []# - name: copy-portal-skins# emptyDir: {}# -- Containers, which are run before the app containers are started.extraInitContainers: []# - name: init-myservice# command: ['sh', '-c', 'until nslookup myservice; do echo waiting for myservice; sleep 2; done;']extraModules: []## Modules, which are mounted into the core nginx image# - name: opentelemetry## The image must contain a `/usr/local/bin/init_module.sh` executable, which# will be executed as initContainers, to move its config files within the# mounted volume.admissionWebhooks:annotations: {}# ignore-check.kube-linter.io/no-read-only-rootfs: "This deployment needs write access to root filesystem".## Additional annotations to the admission webhooks.## These annotations will be added to the ValidatingWebhookConfiguration and## the Jobs Spec of the admission webhooks.enabled: true# -- Additional environment variables to 
setextraEnvs: []# extraEnvs:# - name: FOO# valueFrom:# secretKeyRef:# key: FOO# name: secret-resource# -- Admission Webhook failure policy to usefailurePolicy: Fail# timeoutSeconds: 10port: 8443certificate: "/usr/local/certificates/cert"key: "/usr/local/certificates/key"namespaceSelector: {}objectSelector: {}# -- Labels to be added to admission webhookslabels: {}# -- Use an existing PSP instead of creating oneexistingPsp: ""networkPolicyEnabled: falseservice:annotations: {}# clusterIP: ""externalIPs: []# loadBalancerIP: ""loadBalancerSourceRanges: []servicePort: 443type: ClusterIPcreateSecretJob:resources: {}# limits:# cpu: 10m# memory: 20Mi# requests:# cpu: 10m# memory: 20MipatchWebhookJob:resources: {}patch:enabled: trueimage:registry: registry.cn-hangzhou.aliyuncs.comimage: google_containers/kube-webhook-certgen## for backwards compatibility consider setting the full image url via the repository value below## use *either* current default registry/image or repository format or installing chart by providing the values.yaml will fail## repository:tag: v1.3.0# digest: sha256:549e71a6ca248c5abd51cdb73dbc3083df62cf92ed5e6147c780e30f7e007a47pullPolicy: IfNotPresent# -- Provide a priority class name to the webhook patching job##priorityClassName: ""podAnnotations: {}nodeSelector:kubernetes.io/os: linuxtolerations: []# -- Labels to be added to patch job resourceslabels: {}securityContext:runAsNonRoot: truerunAsUser: 2000fsGroup: 2000metrics:port: 10254# if this port is changed, change healthz-port: in extraArgs: accordinglyenabled: falseservice:annotations: {}# prometheus.io/scrape: "true"# prometheus.io/port: "10254"# clusterIP: ""# -- List of IP addresses at which the stats-exporter service is available## Ref: https://kubernetes.io/docs/user-guide/services/#external-ips##externalIPs: []# loadBalancerIP: ""loadBalancerSourceRanges: []servicePort: 10254type: ClusterIP# externalTrafficPolicy: ""# nodePort: ""serviceMonitor:enabled: falseadditionalLabels: {}## The label to 
use to retrieve the job name from.## jobLabel: "app.kubernetes.io/name"namespace: ""namespaceSelector: {}## Default: scrape .Release.Namespace only## To scrape all, use the following:## namespaceSelector:## any: truescrapeInterval: 30s# honorLabels: truetargetLabels: []relabelings: []metricRelabelings: []prometheusRule:enabled: falseadditionalLabels: {}# namespace: ""rules: []# # These are just examples rules, please adapt them to your needs# - alert: NGINXConfigFailed# expr: count(nginx_ingress_controller_config_last_reload_successful == 0) > 0# for: 1s# labels:# severity: critical# annotations:# description: bad ingress config - nginx config test failed# summary: uninstall the latest ingress changes to allow config reloads to resume# - alert: NGINXCertificateExpiry# expr: (avg(nginx_ingress_controller_ssl_expire_time_seconds) by (host) - time()) < 604800# for: 1s# labels:# severity: critical# annotations:# description: ssl certificate(s) will expire in less then a week# summary: renew expiring certificates to avoid downtime# - alert: NGINXTooMany500s# expr: 100 * ( sum( nginx_ingress_controller_requests{status=~"5.+"} ) / sum(nginx_ingress_controller_requests) ) > 5# for: 1m# labels:# severity: warning# annotations:# description: Too many 5XXs# summary: More than 5% of all requests returned 5XX, this requires your attention# - alert: NGINXTooMany400s# expr: 100 * ( sum( nginx_ingress_controller_requests{status=~"4.+"} ) / sum(nginx_ingress_controller_requests) ) > 5# for: 1m# labels:# severity: warning# annotations:# description: Too many 4XXs# summary: More than 5% of all requests returned 4XX, this requires your attention# -- Improve connection draining when ingress controller pod is deleted using a lifecycle hook:# With this new hook, we increased the default terminationGracePeriodSeconds from 30 seconds# to 300, allowing the draining of connections up to five minutes.# If the active connections end before that, the pod will terminate gracefully at that time.# 
To effectively take advantage of this feature, the Configmap feature
  # worker-shutdown-timeout new value is 240s instead of 10s.
  ##
  lifecycle:
    preStop:
      exec:
        command:
          - /wait-shutdown

  priorityClassName: ""

# -- Rollback limit
##
revisionHistoryLimit: 10

## Default 404 backend
##
defaultBackend:
  ##
  enabled: false

  name: defaultbackend
  image:
    registry: k8s.gcr.io
    image: defaultbackend-amd64
    ## for backwards compatibility consider setting the full image url via the repository value below
    ## use *either* current default registry/image or repository format or installing chart by providing the values.yaml will fail
    ## repository:
    tag: "1.5"
    pullPolicy: IfNotPresent
    # nobody user -> uid 65534
    runAsUser: 65534
    runAsNonRoot: true
    readOnlyRootFilesystem: true
    allowPrivilegeEscalation: false

  # -- Use an existing PSP instead of creating one
  existingPsp: ""

  extraArgs: {}

  serviceAccount:
    create: true
    name: ""
    automountServiceAccountToken: true
  # -- Additional environment variables to set for defaultBackend pods
  extraEnvs: []

  port: 8080

  ## Readiness and liveness probes for default backend
  ## Ref: https://kubernetes.io/docs/tasks/configure-pod-container/configure-liveness-readiness-probes/
  ##
  livenessProbe:
    failureThreshold: 3
    initialDelaySeconds: 30
    periodSeconds: 10
    successThreshold: 1
    timeoutSeconds: 5
  readinessProbe:
    failureThreshold: 6
    initialDelaySeconds: 0
    periodSeconds: 5
    successThreshold: 1
    timeoutSeconds: 5

  # -- Node tolerations for server scheduling to nodes with taints
  ## Ref: https://kubernetes.io/docs/concepts/configuration/assign-pod-node/
  ##
  tolerations: []
  #  - key: "key"
  #    operator: "Equal|Exists"
  #    value: "value"
  #    effect: "NoSchedule|PreferNoSchedule|NoExecute(1.6 only)"

  affinity: {}

  # -- Security Context policies for controller pods
  # See https://kubernetes.io/docs/tasks/administer-cluster/sysctl-cluster/ for
  # notes on enabling and using sysctls
  ##
  podSecurityContext: {}

  # -- Security Context policies for controller main container.
  # See https://kubernetes.io/docs/tasks/administer-cluster/sysctl-cluster/ for
  # notes on enabling and using sysctls
  ##
  containerSecurityContext: {}

  # -- Labels to add to the pod container metadata
  podLabels: {}
  #  key: value

  # -- Node labels for default backend pod assignment
  ## Ref: https://kubernetes.io/docs/user-guide/node-selection/
  ##
  nodeSelector:
    kubernetes.io/os: linux

  # -- Annotations to be added to default backend pods
  ##
  podAnnotations: {}

  replicaCount: 1

  minAvailable: 1

  resources: {}
  # limits:
  #   cpu: 10m
  #   memory: 20Mi
  # requests:
  #   cpu: 10m
  #   memory: 20Mi

  extraVolumeMounts: []
  ## Additional volumeMounts to the default backend container.
  #  - name: copy-portal-skins
  #    mountPath: /var/lib/lemonldap-ng/portal/skins

  extraVolumes: []
  ## Additional volumes to the default backend pod.
  #  - name: copy-portal-skins
  #    emptyDir: {}

  autoscaling:
    annotations: {}
    enabled: false
    minReplicas: 1
    maxReplicas: 2
    targetCPUUtilizationPercentage: 50
    targetMemoryUtilizationPercentage: 50

  service:
    annotations: {}

    # clusterIP: ""

    # -- List of IP addresses at which the default backend service is available
    ## Ref: https://kubernetes.io/docs/user-guide/services/#external-ips
    ##
    externalIPs: []

    # loadBalancerIP: ""
    loadBalancerSourceRanges: []
    servicePort: 80
    type: ClusterIP

  priorityClassName: ""
  # -- Labels to be added to the default backend resources
  labels: {}

## Enable RBAC as per https://github.com/kubernetes/ingress-nginx/blob/main/docs/deploy/rbac.md and https://github.com/kubernetes/ingress-nginx/issues/266

rbac:
  create: true
  scope: false

## If true, create & use Pod Security Policy resources
## https://kubernetes.io/docs/concepts/policy/pod-security-policy/
podSecurityPolicy:
  enabled: false

serviceAccount:
  create: true
  name: ""
  automountServiceAccountToken: true
  # -- Annotations for the controller service account
  annotations: {}

# -- Optional array of imagePullSecrets containing private registry credentials
## Ref: https://kubernetes.io/docs/tasks/configure-pod-container/pull-image-private-registry/
imagePullSecrets: []
# - name: secretName

# -- TCP service key-value pairs
## Ref: https://github.com/kubernetes/ingress-nginx/blob/main/docs/user-guide/exposing-tcp-udp-services.md
##
tcp: {}
#  8080: "default/example-tcp-svc:9000"

# -- UDP service key-value pairs
## Ref: https://github.com/kubernetes/ingress-nginx/blob/main/docs/user-guide/exposing-tcp-udp-services.md
##
udp: {}
#  53: "kube-system/kube-dns:53"

# -- Prefix for TCP and UDP ports names in ingress controller service
## Some cloud providers, like Yandex Cloud may have a requirements for a port name regex to support cloud load balancer integration
portNamePrefix: ""

# -- (string) A base64-encoded Diffie-Hellman parameter.
# This can be generated with: `openssl dhparam 4096 2> /dev/null | base64`
## Ref: https://github.com/kubernetes/ingress-nginx/tree/main/docs/examples/customization/ssl-dh-param
dhParam:

5. Install ingress-nginx

# Label the node that should run the ingress controller

[root@k8s-master01 ingress-nginx]# kubectl label node k8s-node01 ingress=true # k8s-node01 is the name of a node in your own cluster

[root@k8s-master01 ingress-nginx]# kubectl get node --show-labels

# Create the namespace

[root@k8s-master01 ingress-nginx]# kubectl create ns ingress-nginx

namespace/ingress-nginx created

# Install with helm

[root@k8s-master01 ingress-nginx]# helm install ingress-nginx -f values.yaml -n ingress-nginx .

NAME: ingress-nginx

LAST DEPLOYED: Tue Nov 1 15:53:20 2022

NAMESPACE: ingress-nginx

STATUS: deployed

REVISION: 1

TEST SUITE: None

NOTES:

The ingress-nginx controller has been installed.

It may take a few minutes for the LoadBalancer IP to be available.

You can watch the status by running 'kubectl --namespace ingress-nginx get services -o wide -w ingress-nginx-controller'

An example Ingress that makes use of the controller:
  apiVersion: networking.k8s.io/v1
  kind: Ingress
  metadata:
    name: example
    namespace: foo
  spec:
    ingressClassName: nginx
    rules:
      - host: www.example.com
        http:
          paths:
            - pathType: Prefix
              backend:
                service:
                  name: exampleService
                  port:
                    number: 80
              path: /
    # This section is only required if TLS is to be enabled for the Ingress
    tls:
      - hosts:
        - www.example.com
        secretName: example-tls

If TLS is enabled for the Ingress, a Secret containing the certificate and key must also be provided:

  apiVersion: v1
  kind: Secret
  metadata:
    name: example-tls
    namespace: foo
  data:
    tls.crt: <base64 encoded cert>
    tls.key: <base64 encoded key>
  type: kubernetes.io/tls

[root@k8s-master01 ingress-nginx]# helm list -n ingress-nginx

NAME NAMESPACE REVISION UPDATED STATUS CHART APP VERSION

ingress-nginx ingress-nginx 1 2022-11-01 15:53:20.941310532 +0800 CST deployed ingress-nginx-4.2.5 1.3.1

[root@k8s-master01 ingress-nginx]# kubectl -n ingress-nginx get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

ingress-nginx-controller-4jhhm 1/1 Running 0 36m 10.103.236.204 k8s-node01 <none> <none>

[root@k8s-master01 ingress-nginx]# kubectl -n ingress-nginx get svc -o wide

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

ingress-nginx-controller LoadBalancer 192.168.61.78 <pending> 80:32746/TCP,443:30404/TCP 36m app.kubernetes.io/component=controller,app.kubernetes.io/instance=ingress-nginx,app.kubernetes.io/name=ingress-nginx

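ingress-nginx-controller-admission ClusterIP 192.168.127.250 <none> 443/TCP 36m app.kubernetes.io/component=controller,app.kubernetes.io/instance=ingress-nginx,app.kubernetes.io/name=ingress-nginx

# Uninstall ingress-nginx
helm delete ingress-nginx -n ingress-nginx

# Upgrade ingress-nginx after editing values.yaml
helm upgrade ingress-nginx -f values.yaml -n ingress-nginx .

# Scale out ingress-nginx: the controller runs as a DaemonSet with the node selector ingress: "true", so labeling another node starts a controller Pod on it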

[root@k8s-master01 ~]# kubectl label node k8s-node02 ingress=true

node/k8s-node02 labeled

[root@k8s-master01 ~]# kubectl get po -n ingress-nginx -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

ingress-nginx-controller-4jhhm 1/1 Running 1 18h 10.103.236.204 k8s-node01 <none> <none>

ingress-nginx-controller-9g8jz 0/1 ContainerCreating 0 6s 10.103.236.205 k8s-node02 <none> <none>

# Scale in ingress-nginx: remove the label and the DaemonSet terminates the controller Pod on that node

[root@k8s-master01 ~]# kubectl label node k8s-node02 ingress-

node/k8s-node02 labeled

[root@k8s-master01 ~]# kubectl get po -n ingress-nginx -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

ingress-nginx-controller-4jhhm 1/1 Running 1 18h 10.103.236.204 k8s-node01 <none> <none>

ingress-nginx-controller-9g8jz 1/1 Terminating 0 3m49s 10.103.236.205 k8s-node02 <none> <none>
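The label-driven scaling shown above can be checked by comparing two numbers: how many nodes carry ingress=true, and how many Pods the DaemonSet wants to schedule. The following is a minimal sketch, not part of the original setup. It assumes the DaemonSet is named ingress-nginx-controller (inferred from the Pod names in the output above) and that all labeled nodes also match kubernetes.io/os: linux. The helper names labeled_nodes and desired_pods are hypothetical.

```shell
#!/bin/sh
# Sketch: compare the nodes labeled ingress=true with the DaemonSet's desired Pod count.
# Assumption: the DaemonSet is called ingress-nginx-controller (inferred from the Pod names).

# Count nodes that carry the ingress=true label (prints 0 when kubectl is unavailable).
labeled_nodes() {
  kubectl get nodes -l ingress=true --no-headers 2>/dev/null | wc -l | tr -d ' '
}

# Read how many controller Pods the DaemonSet currently wants to run.
desired_pods() {
  kubectl -n ingress-nginx get ds ingress-nginx-controller \
    -o jsonpath='{.status.desiredNumberScheduled}' 2>/dev/null
}

if command -v kubectl >/dev/null 2>&1; then
  echo "nodes labeled ingress=true: $(labeled_nodes)"
  echo "DaemonSet desired pods:     $(desired_pods)"
else
  echo "kubectl not found; run this on a machine with cluster access"
fi
```

If the two numbers differ for more than a short moment after labeling or unlabeling a node, the node selector in values.yaml is the first place to look.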

Check the listening ports and nginx processes on the host node:

[root@k8s-node01 ~]# netstat -tnlp | grep 80

tcp 0 0 0.0.0.0:80 0.0.0.0:* LISTEN 2994/nginx: master

tcp6 0 0 :::80 :::* LISTEN 2994/nginx: master

[root@k8s-node01 ~]# ps aux | grep nginx

101 2701 0.0 0.0 208 4 ? Ss 09:52 0:00 /usr/bin/dumb-init -- /nginx-ingress-controller --publish-service=ingress-nginx/ingress-nginx-controller --election-id=ingress-controller-leader --controller-class=k8s.io/ingress-nginx --ingress-class=nginx --configmap=ingress-nginx/ingress-nginx-controller --validating-webhook=:8443 --validating-webhook-certificate=/usr/local/certificates/cert --validating-webhook-key=/usr/local/certificates/key

101 2744 0.1 2.3 743440 46952 ? Ssl 09:52 0:21 /nginx-ingress-controller --publish-service=ingress-nginx/ingress-nginx-controller --election-id=ingress-controller-leader --controller-class=k8s.io/ingress-nginx --ingress-class=nginx --configmap=ingress-nginx/ingress-nginx-controller --validating-webhook=:8443 --validating-webhook-certificate=/usr/local/certificates/cert --validating-webhook-key=/usr/local/certificates/key

101 2994 0.0 1.8 145412 36452 ? S 09:52 0:00 nginx: master process /usr/bin/nginx -c /etc/nginx/nginx.conf

101 94153 0.0 2.0 157312 41796 ? Sl 14:18 0:00 nginx: worker process

101 94154 0.0 2.0 157248 40616 ? Sl 14:18 0:00 nginx: worker process

101 94155 0.0 1.4 143388 29284 ? S 14:18 0:00 nginx: cache manager process

root 103579 0.0 0.1 112828 2280 pts/0 S+ 14:29 0:00 grep --color=auto nginx

6. Create an Ingress

# cat ingress.yaml
apiVersion: networking.k8s.io/v1beta1 # newer clusters use networking.k8s.io/v1; extensions/v1beta1 is largely obsolete
kind: Ingress
metadata:
  annotations:
    kubernetes.io/ingress.class: "nginx" # declares that the ingress-nginx controller with class name nginx handles this Ingress (see the note after the output below)
  name: example
spec:
  rules: # one Ingress can contain multiple rules
  - host: foo.bar.com # domain name; optional (omit it to match all hosts); wildcards such as *.bar.com also work
    http:
      paths: # comparable to nginx location blocks; one host can have several paths, e.g. / and /abc
      - backend:
          serviceName: nginx-svc
          servicePort: 80
        path: /

=========================================================================================

[root@k8s-master01 ~]# kubectl create -f ingress.yaml # create the Ingress

Warning: networking.k8s.io/v1beta1 Ingress is deprecated in v1.19+, unavailable in v1.22+; use networking.k8s.io/v1 Ingress

ingress.networking.k8s.io/example created

[root@k8s-master01 ~]# kubectl get ingress

NAME CLASS HOSTS ADDRESS PORTS AGE

example <none> foo.bar.com 80 76s

Note: the class name nginx used in the annotation is defined in the chart's values.yaml:

[root@k8s-master01 temp]# cd ingress-nginx

[root@k8s-master01 ingress-nginx]# ls

Chart.yaml ci OWNERS README.md templates values.yaml

vim values.yaml
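The deprecation warning printed by kubectl create says that networking.k8s.io/v1beta1 is unavailable from v1.22 on. As a sketch of what the same rule could look like under networking.k8s.io/v1, the script below writes an equivalent manifest to a file. The file name ingress-v1.yaml is hypothetical, and ingressClassName: nginx is taken from the example in the helm NOTES output above rather than verified against this cluster.

```shell
#!/bin/sh
# Sketch: the rule from ingress.yaml rewritten for networking.k8s.io/v1.
# The file name ingress-v1.yaml is a hypothetical choice.
cat > ingress-v1.yaml <<'EOF'
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: example
spec:
  ingressClassName: nginx        # takes the place of the kubernetes.io/ingress.class annotation
  rules:
  - host: foo.bar.com
    http:
      paths:
      - path: /
        pathType: Prefix         # pathType is required in v1
        backend:
          service:               # v1 nests the former serviceName/servicePort fields
            name: nginx-svc
            port:
              number: 80
EOF

# Optional client-side check; nothing is created in the cluster.
if command -v kubectl >/dev/null 2>&1; then
  kubectl apply --dry-run=client -f ingress-v1.yaml \
    || echo "dry run failed; check cluster access and the manifest"
fi
```

The main differences from the v1beta1 file are the nested backend.service block and the mandatory pathType field.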

7. Access

Find the node where the ingress-nginx controller is running:

[root@k8s-master01 ~]# kubectl get po -A -owide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

default busybox 1/1 Running 112 48d 172.25.244.253 k8s-master01 <none> <none>

default nginx-66bbc9fdc5-dhrgc 1/1 Running 4 3d15h 172.25.244.254 k8s-master01 <none> <none>

default nginx-66bbc9fdc5-lh7vw 1/1 Running 4 3d15h 172.27.14.233 k8s-node02 <none> <none>

ingress-nginx ingress-nginx-controller-4jhhm 1/1 Running 1 22h 10.103.236.204 k8s-node01 <none> <none>

kube-system calico-kube-controllers-cdd5755b9-qztxb 1/1 Running 15 48d 10.103.236.201 k8s-master01 <none> <none>

kube-system calico-node-6q95q 1/1 Running 21 48d 10.103.236.205 k8s-node02 <none> <none>

kube-system calico-node-cgp6p 1/1 Running 27 48d 10.103.236.203 k8s-master03 <none> <none>

kube-system calico-node-tmxtg 1/1 Running 20 48d 10.103.236.201 k8s-master01 <none> <none>

kube-system calico-node-wc674 1/1 Running 19 48d 10.103.236.202 k8s-master02 <none> <none>

kube-system calico-node-z8k7p 1/1 Running 16 48d 10.103.236.204 k8s-node01 <none> <none>

kube-system coredns-684d86ff88-mtkjf 1/1 Running 14 48d 172.27.14.232 k8s-node02 <none> <none>

kube-system metrics-server-64c6c494dc-gdw52 1/1 Running 19 48d 172.25.92.92 k8s-master02 <none> <none>

kubernetes-dashboard dashboard-metrics-scraper-86bb69c5f6-6jrch 1/1 Running 14 48d 172.17.125.23 k8s-node01 <none> <none>

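kubernetes-dashboard kubernetes-dashboard-6576c84894-9f7gh 1/1 Running 26 48d 172.18.195.24 k8s-master03 <none> <none>

Add an entry to the hosts file on the machine you browse from, pointing the domain at the IP of the node that runs the controller: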

10.103.236.204 foo.bar.com

Then open http://foo.bar.com in a browser.
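The same check can be made from a terminal without editing the hosts file. The sketch below uses curl's --resolve option to pin foo.bar.com to the node IP for a single request and prints only the HTTP status code. NODE_IP and HOST_NAME default to the values used in this walkthrough, and check_ingress is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch: request foo.bar.com through the ingress controller without touching /etc/hosts.
NODE_IP="${NODE_IP:-10.103.236.204}"    # node running the controller (from the Pod list above)
HOST_NAME="${HOST_NAME:-foo.bar.com}"   # host configured in the Ingress rule

# --resolve maps HOST_NAME:80 to NODE_IP for this request only;
# -m 5 aborts after 5 seconds; -w prints the HTTP status code.
check_ingress() {
  curl -s -o /dev/null -m 5 -w '%{http_code}\n' \
    --resolve "${HOST_NAME}:80:${NODE_IP}" "http://${HOST_NAME}/"
}

check_ingress || echo "request failed; check that ${NODE_IP} is reachable"
```

A 200 status suggests the rule is routing to nginx-svc; a 404 from nginx usually means the Host header did not match any Ingress rule.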

8. Enter the ingress controller Pod

[root@k8s-master01 ~]# kubectl exec -it ingress-nginx-controller-4jhhm -n ingress-nginx -- sh

/etc/nginx $ ls

fastcgi.conf geoip mime.types nginx.conf scgi_params uwsgi_params.default

fastcgi.conf.default koi-utf mime.types.default nginx.conf.default scgi_params.default win-utf

fastcgi_params koi-win modsecurity opentracing.json template

fastcgi_params.default lua modules owasp-modsecurity-crs uwsgi_params

/etc/nginx $ grep "## start server foo.bar.com" nginx.conf -A 50
  ## start server foo.bar.com
  server {
    server_name foo.bar.com ;

    listen 80 ;
    listen [::]:80 ;
    listen 443 ssl http2 ;
    listen [::]:443 ssl http2 ;

    set $proxy_upstream_name "-";

    ssl_certificate_by_lua_block {
      certificate.call()
    }

    location / {

      set $namespace "default";
      set $ingress_name "example";
      set $service_name "nginx-svc";
      set $service_port "80";
      set $location_path "/";
      set $global_rate_limit_exceeding n;

      rewrite_by_lua_block {
        lua_ingress.rewrite({
          force_ssl_redirect = false,
          ssl_redirect = true,
          force_no_ssl_redirect = false,
          preserve_trailing_slash = false,
          use_port_in_redirects = false,
          global_throttle = { namespace = "", limit = 0, window_size = 0, key = { }, ignored_cidrs = { } },
        })
        balancer.rewrite()
        plugins.run()
      }

      # be careful with `access_by_lua_block` and `satisfy any` directives as satisfy any
      # will always succeed when there's `access_by_lua_block` that does not have any lua code doing `ngx.exit(ngx.DECLINED)`
      # other authentication method such as basic auth or external auth useless - all requests will be allowed.
      #access_by_lua_block {
      #}

      header_filter_by_lua_block {
        lua_ingress.header()
        plugins.run()
      }

      body_filter_by_lua_block {
        plugins.run()
      }

/etc/nginx $
# The *_by_lua_block sections above are Lua scripts.

9. Configure multiple domains

[root@k8s-master01 ~]# cat ingress-mulDomain.yaml
apiVersion: networking.k8s.io/v1beta1 # newer clusters use networking.k8s.io/v1; extensions/v1beta1 is the older API group
kind: Ingress
metadata:
  annotations:
    kubernetes.io/ingress.class: "nginx"
  name: example
spec:
  rules: # one Ingress can contain multiple rules
  - host: foo.bar.com # domain name; optional (omit it to match all hosts); wildcards such as *.bar.com also work
    http:
      paths: # comparable to nginx location blocks; one host can have several paths, e.g. / and /abc
      - backend:
          serviceName: nginx-svc
          servicePort: 80
        path: /
  - host: foo2.bar.com # second domain, routed to a different Service
    http:
      paths:
      - backend:
          serviceName: nginx-svc-external
          servicePort: 80
        path: /
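When the list of domains grows, writing one rule per host by hand gets repetitive. The sketch below shows one possible way to generate a networking.k8s.io/v1 manifest from host=service pairs. gen_ingress is a hypothetical helper (not part of kubectl or helm), the output file name is made up, and the port is assumed to be 80 for every Service, as in the example above.

```shell
#!/bin/sh
# Sketch: print a networking.k8s.io/v1 Ingress with one rule per host=service pair.
# gen_ingress is a hypothetical helper; every Service is assumed to listen on port 80.
gen_ingress() {
  name="$1"; shift
  cat <<EOF
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ${name}
spec:
  ingressClassName: nginx
  rules:
EOF
  for pair in "$@"; do
    host="${pair%%=*}"   # text before the first '='
    svc="${pair#*=}"     # text after the first '='
    cat <<EOF
  - host: ${host}
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: ${svc}
            port:
              number: 80
EOF
  done
}

# Same two domains as in ingress-mulDomain.yaml; the output file name is hypothetical.
gen_ingress example \
  foo.bar.com=nginx-svc \
  foo2.bar.com=nginx-svc-external > ingress-mulDomain-v1.yaml
```

The generated file can then be reviewed and applied with kubectl apply -f ingress-mulDomain-v1.yaml on a cluster that serves the v1 Ingress API.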