结构化CNN模型构建与测试

- 前言

- GoogLeNet

- 结构

- Inception块

- 模型构建

- resNet18

- 模型结构

- 残差块

- 模型构建

- denseNet

- 模型结构

- DenseBlock

- transition_block

- 模型构建

- 结尾

前言

在本专栏的上一篇博客中我们介绍了常用的线性模型,在本文中我们将介绍GoogleNet、resNet、denseNet这类结构化的模型的构建方式。

GoogLeNet

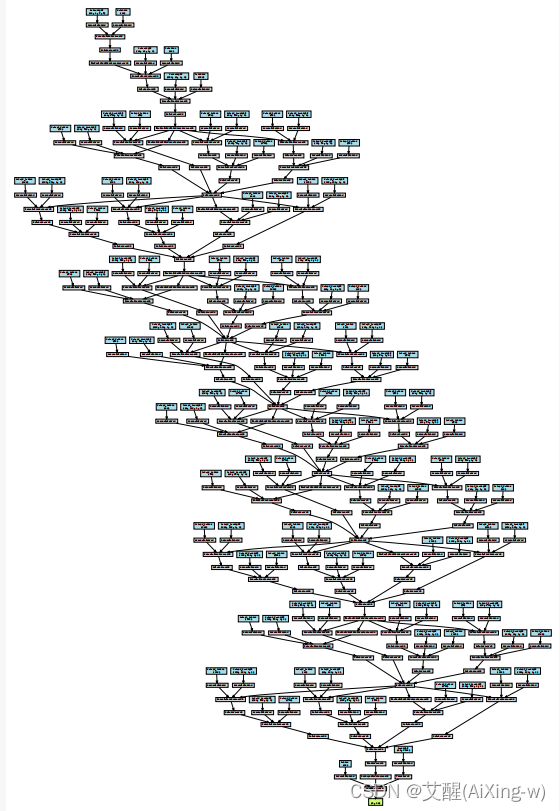

结构

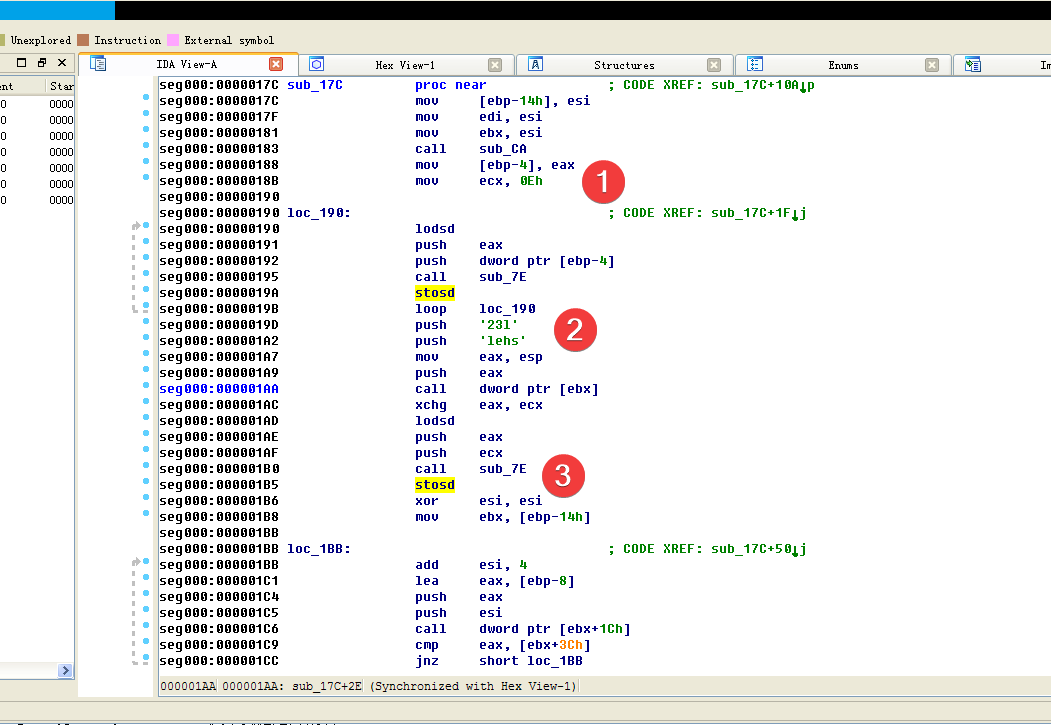

整体的结构似乎有些吓人,但其实他也是用了块的思维,仔细观察可以发现,他中间一段很多层的结构都是相似的

Inception块

这个块就是其中重复的块,这个块分成了四个分支:1x1卷积、1x1卷积+3x3卷积、1x1卷积+5x5卷积、3x3卷积+1x1卷积,最后将这四个分支通道合并

class Inception(nn.Module):def __init__(self, in_channels, c1, c2, c3, c4, **kwargs):super(Inception, self).__init__(**kwargs)self.p1_1 = nn.Conv2d(in_channels, c1, kernel_size=1)self.p2_1 = nn.Conv2d(in_channels, c2[0], kernel_size=1)self.p2_2 = nn.Conv2d(c2[0], c2[1], kernel_size=3, padding=1)self.p3_1 = nn.Conv2d(in_channels, c3[0], kernel_size=1)self.p3_2 = nn.Conv2d(c3[0], c3[1], kernel_size=5, padding=2)self.p4_1 = nn.MaxPool2d(kernel_size=3, stride=1, padding=1)self.p4_2 = nn.Conv2d(in_channels, c4, kernel_size=1)def forward(self, x):p1 = F.relu(self.p1_1(x))p2 = F.relu(self.p2_2(F.relu(self.p2_1(x))))p3 = F.relu(self.p3_2(F.relu(self.p3_1(x))))p4 = F.relu(self.p4_2(self.p4_1(x)))return torch.cat((p1, p2, p3, p4), dim=1)

模型构建

b1 = nn.Sequential(nn.Conv2d(1, 64, kernel_size=7, stride=2, padding=3),nn.ReLU(),nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

b2 = nn.Sequential(nn.Conv2d(64, 64, kernel_size=1),nn.ReLU(),nn.Conv2d(64, 192, kernel_size=3, padding=1),nn.ReLU(),nn.MaxPool2d(kernel_size=3, stride=2, padding=1))b3 = nn.Sequential(Inception(192, 64, (96, 128), (16, 32), 32),Inception(256, 128, (128, 192), (32, 96), 64),nn.MaxPool2d(kernel_size=3, stride=2, padding=1))b4 = nn.Sequential(Inception(480, 192, (96, 208), (16, 48), 64),Inception(512, 160, (112, 224), (24, 64), 64),Inception(512, 128, (128, 256), (24, 64), 64),Inception(512, 112, (144, 288), (32, 64), 64),Inception(528, 256, (160, 320), (32, 128), 128),nn.MaxPool2d(kernel_size=3, stride=2, padding=1))b5 = nn.Sequential(Inception(832, 256, (160, 320), (32, 128), 128),Inception(832, 384, (192, 384), (48, 128), 128),nn.AdaptiveAvgPool2d((1, 1)),nn.Flatten())net = nn.Sequential(b1, b2, b3, b4, b5, nn.Linear(1024, 10))

resNet18

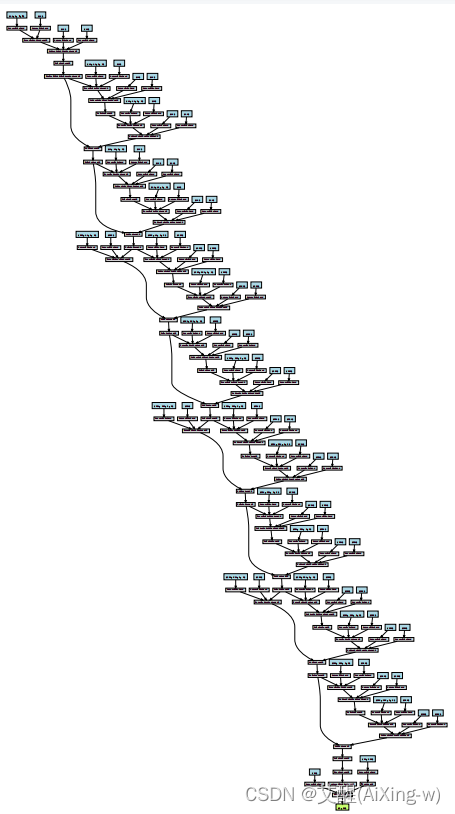

模型结构

这个模型似乎要更加简洁一些,因为这里只有两个分支,但是他有两种分支方式,一种是卷积+残差,另外一种是卷积+经过1x1卷积处理过的残差

残差块

class Residual(nn.Module):def __init__(self, input_channels, num_channels, use_1x1conv=False, strides=1):super().__init__()self.conv1 = nn.Conv2d(input_channels, num_channels, kernel_size=3, padding=1, stride=strides)self.conv2 = nn.Conv2d(num_channels, num_channels, kernel_size=3, padding=1)if use_1x1conv:self.conv3 = nn.Conv2d(input_channels, num_channels, kernel_size=1, stride=strides)else:self.conv3 = Noneself.bn1 = nn.BatchNorm2d(num_channels)self.bn2 = nn.BatchNorm2d(num_channels)def forward(self, X):Y = F.relu(self.bn1(self.conv1(X)))Y = self.bn2(self.conv2(Y))if self.conv3:X = self.conv3(X)Y += Xreturn F.relu(Y)

构建时依次使用两种分支方式

def resnet_block(input_channels, num_channels, num_residuals, first_block=False):blk = []for i in range(num_residuals):if i == 0 and not first_block:blk.append(Residual(input_channels, num_channels, use_1x1conv=True, strides=2))else:blk.append(Residual(num_channels, num_channels))return blk

模型构建

b1 = nn.Sequential(nn.Conv2d(1, 64, kernel_size=7, stride=2, padding=3),nn.BatchNorm2d(64), nn.ReLU(),nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

b2 = nn.Sequential(*resnet_block(64, 64, 2, first_block=True))

b3 = nn.Sequential(*resnet_block(64, 128, 2))

b4 = nn.Sequential(*resnet_block(128, 256, 2))

b5 = nn.Sequential(*resnet_block(256, 512, 2))net = nn.Sequential(b1, b2, b3, b4, b5, nn.AdaptiveAvgPool2d((1, 1)),nn.Flatten(),nn.Linear(512, 10))

denseNet

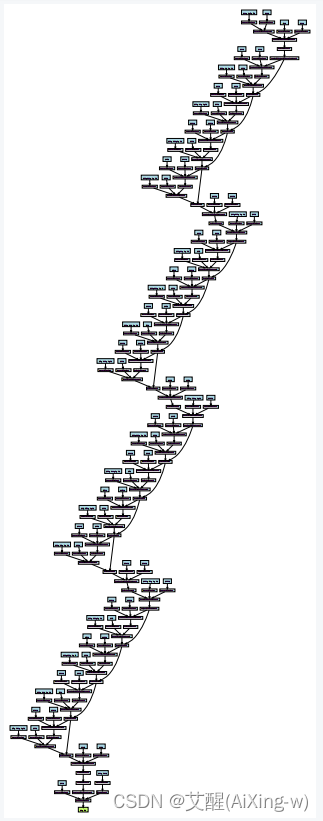

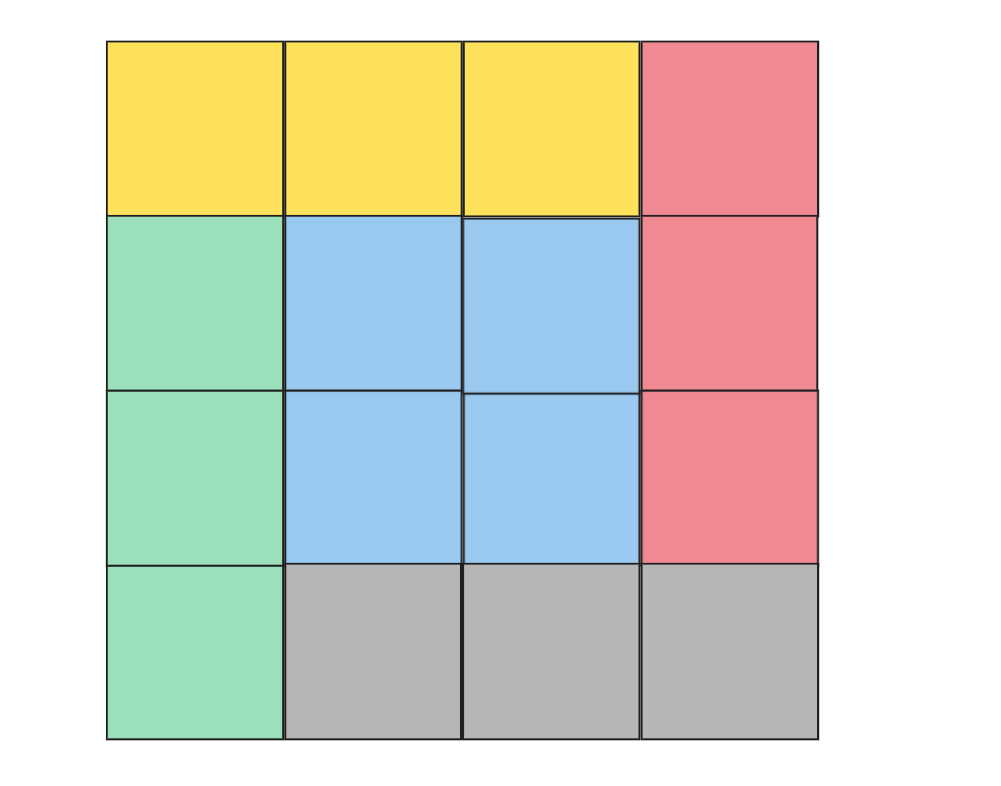

模型结构

这个模型的主要思路就是把每一次的输出和输入合并起来,同时作为下一层的输入,具体的细节还要结合代码解释

DenseBlock

我们看下面的代码,注意init中的循环以及forward时对于X的处理。我们可以发现,每经过一个conv_block都会将conv_block的输出并入到输入,以此作为下一层的输入

def conv_block(input_channels, num_channels):return nn.Sequential(nn.BatchNorm2d(input_channels), nn.ReLU(),nn.Conv2d(input_channels, num_channels, kernel_size=3, padding=1))class DenseBlock(nn.Module):def __init__(self, num_convs, input_channels, num_channels):super(DenseBlock, self).__init__()layer = []for i in range(num_convs):layer.append(conv_block(num_channels * i + input_channels, num_channels))self.net = nn.Sequential(*layer)def forward(self, X):for blk in self.net:Y = blk(X)X = torch.cat((X, Y), dim=1)return X

transition_block

这个块的主要作用是减少通道,因为在前面的块中,通道数会持续的增长,考虑到计算量,需要在中间加入减少通道的块

def transition_block(input_channels, num_channels):return nn.Sequential(nn.BatchNorm2d(input_channels), nn.ReLU(),nn.Conv2d(input_channels, num_channels, kernel_size=1),nn.AvgPool2d(kernel_size=2, stride=2))

模型构建

b1 = nn.Sequential(nn.Conv2d(1, 64, kernel_size=7, stride=2, padding=3),nn.BatchNorm2d(64), nn.ReLU(),nn.MaxPool2d(kernel_size=3, stride=2, padding=1))num_channels, growth_rate = 64, 32

num_convs_in_dense_blocks = [4, 4, 4, 4]

blks = []

for i, num_convs in enumerate(num_convs_in_dense_blocks):blks.append(DenseBlock(num_convs, num_channels, growth_rate))num_channels += num_convs * growth_rateif i != len(num_convs_in_dense_blocks) - 1:blks.append(transition_block(num_channels, num_channels // 2))num_channels = num_channels // 2net = nn.Sequential(b1, *blks,nn.BatchNorm2d(num_channels), nn.ReLU(),nn.AdaptiveAvgPool2d((1, 1)),nn.Flatten(),nn.Linear(num_channels, 10))

结尾

我们到现在模型就已经构建好了,测试的过程可以参照本专栏的上一篇博客

pytorch深度学习基础(十)——常用线性CNN模型的结构与训练

![[Python]调用pytdx的代码示例](https://img-blog.csdnimg.cn/img_convert/bba4e2d5ac95754514e76cc0e9a8f5ba.png)