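诸神缄默不语: index of my CSDN blog posts

Study notes on the PyTorch Geometric (PyG) documentation and official code examples (continuously updated…)

This post covers how to implement heterogeneous graph neural networks (HGNNs) with PyG.

It mainly follows the heterogeneous graph section of the PyG documentation: Heterogeneous Graph Learning — pytorch_geometric documentation

Related official code examples: https://github.com/pyg-team/pytorch_geometric/blob/master/examples/hetero

Notes:
① Many of these operations can be implemented directly without PyG's HeteroData object.
② Some datasets cannot be downloaded directly from networks in mainland China. For workarounds, see my earlier post: Workarounds for PyG (PyTorch Geometric) graph datasets hosted on Dropbox that fail to download (AMiner, DBLP, IMDB, LastFM) (continuously updated…)
③ I use torch-geometric 2.2.0 installed via pip. Some earlier versions may not support the drop_orig_edge_types argument of T.AddMetaPaths.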

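Contents

- 1. Example dataset
- 2. The HeteroData object
- 3. Available methods
- 4. Node representation models
- 4.1 Converting a homogeneous GNN directly into a heterogeneous GNN
- 4.2 Defining a GNN with HeteroConv
- 4.3 Using existing or hand-written heterogeneous graph operators
- 5. Node classification
- 5.1 whole-batch
- 5.1.1 Using existing heterogeneous graph operators
- 5.1.1.1 HAN
- 5.1.1.2 HGT
- 5.1.2 Using HeteroConv
- 5.1.2.1 GraphSAGE
- 5.2 mini-batch
- 6. Link prediction
- 6.1 transductive
- 6.1.1 GraphSAGE encoder + MLP decoder for predicting user ratings
- 6.1.2 Predicting user ratings on a bipartite graph
- 6.2 inductive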

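1. Example dataset

Schema of the ogbn-mag heterogeneous graph:

It has 1,939,743 nodes and 21,111,007 edges in total.

The dataset's original task is node classification: predicting the venue (conference or journal) of each paper.

Loading it in PyG (the raw data only has features for paper nodes; here the preprocess argument adds features for the other node types, derived from the graph structure):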

from torch_geometric.datasets import OGB_MAG

dataset = OGB_MAG(root='./data', preprocess='metapath2vec')
# preprocess can also be set to e.g. TransE
data = dataset[0]

2. The HeteroData object

from torch_geometric.data import HeteroData

data = HeteroData()

data['paper'].x = ... # [num_papers, num_features_paper]
data['author'].x = ... # [num_authors, num_features_author]
data['institution'].x = ... # [num_institutions, num_features_institution]
data['field_of_study'].x = ... # [num_field, num_features_field]

data['paper', 'cites', 'paper'].edge_index = ... # [2, num_edges_cites]
data['author', 'writes', 'paper'].edge_index = ... # [2, num_edges_writes]
data['author', 'affiliated_with', 'institution'].edge_index = ... # [2, num_edges_affiliated]
data['paper', 'has_topic', 'field_of_study'].edge_index = ... # [2, num_edges_topic]

data['paper', 'cites', 'paper'].edge_attr = ... # [num_edges_cites, num_features_cites]
data['author', 'writes', 'paper'].edge_attr = ... # [num_edges_writes, num_features_writes]
data['author', 'affiliated_with', 'institution'].edge_attr = ... # [num_edges_affiliated, num_features_affiliated]
data['paper', 'has_topic', 'field_of_study'].edge_attr = ... # [num_edges_topic, num_features_topic]

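Node types are indexed by a string; edge types are indexed by a triple of strings.

data.{attribute_name}_dict returns a dictionary that maps each node or edge type to the value of that attribute. These dictionaries can be passed directly to a GNN model:

model = HeteroGNN(...)
output = model(data.x_dict, data.edge_index_dict, data.edge_attr_dict)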
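As a rough mental model (a plain-Python sketch, not PyG's implementation), an accessor such as data.x_dict can be pictured as collecting one attribute across all node or edge types into a dictionary keyed by type. The `stores` layout and the `collect_attr` helper are hypothetical names used only for illustration.

```python
# Toy stand-in for a HeteroData object: one attribute dictionary ("store")
# per node type (string key) or edge type (triple-of-strings key).
stores = {
    'paper': {'x': [[0.1, 0.2], [0.3, 0.4]], 'y': [0, 1]},
    'author': {'x': [[1.0, 0.0]]},
    ('author', 'writes', 'paper'): {'edge_index': [[0, 0], [0, 1]]},
}


def collect_attr(stores, name):
    """Return {type: value} for every store that defines attribute `name`."""
    return {key: store[name] for key, store in stores.items() if name in store}


x_dict = collect_attr(stores, 'x')                    # keyed by node type
edge_index_dict = collect_attr(stores, 'edge_index')  # keyed by edge type
print(sorted(x_dict))
print(list(edge_index_dict))
```

Types that do not define the requested attribute are simply absent from the resulting dictionary in this sketch.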

Taking the ogbn-mag data from Section 1 as an example, printing the data object gives:

HeteroData(
  paper={
    x=[736389, 128],
    year=[736389],
    y=[736389],
    train_mask=[736389],
    val_mask=[736389],
    test_mask=[736389]
  },
  author={ x=[1134649, 128] },
  institution={ x=[8740, 128] },
  field_of_study={ x=[59965, 128] },
  (author, affiliated_with, institution)={ edge_index=[2, 1043998] },
  (author, writes, paper)={ edge_index=[2, 7145660] },
  (paper, cites, paper)={ edge_index=[2, 5416271] },
  (paper, has_topic, field_of_study)={ edge_index=[2, 7505078] }
)
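As a quick sanity check, the per-type sizes in the printed object should add up to the totals quoted in the dataset introduction. The snippet below is plain arithmetic on numbers copied from the printout; it does not load the dataset.

```python
# Sizes copied from the printed HeteroData object above.
num_nodes = {
    'paper': 736389,
    'author': 1134649,
    'institution': 8740,
    'field_of_study': 59965,
}
num_edges = {
    ('author', 'affiliated_with', 'institution'): 1043998,
    ('author', 'writes', 'paper'): 7145660,
    ('paper', 'cites', 'paper'): 5416271,
    ('paper', 'has_topic', 'field_of_study'): 7505078,
}

total_nodes = sum(num_nodes.values())
total_edges = sum(num_edges.values())
print(f'{total_nodes:,} nodes, {total_edges:,} edges')
# prints "1,939,743 nodes, 21,111,007 edges"
```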

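3. Available methods

- Index by type to get a node or edge storage object. It behaves like a dictionary whose keys are attribute names (e.g. x) and whose values are the attribute values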

paper_node_data = data['paper']

cites_edge_data = data['paper', 'cites', 'paper']

- If the relation name or the (source, destination) node type pair identifies exactly one edge type, these shorthands also work:

cites_edge_data = data['paper', 'paper']

cites_edge_data = data['cites']

- Adding or deleting attributes, node types, and edge types:

data['paper'].year = ... # Setting a new paper attribute

del data['field_of_study'] # Deleting 'field_of_study' node type

del data['has_topic'] # Deleting 'has_topic' edge type

- metadata

node_types, edge_types = data.metadata()

print(node_types)

['paper', 'author', 'institution']

print(edge_types)

[('paper', 'cites', 'paper'),

('author', 'writes', 'paper'),

('author', 'affiliated_with', 'institution')]

- Moving between devices:

data = data.to('cuda:0')

data = data.cpu()

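- Checking for isolated nodes and self-loops, checking whether the graph is undirected, and converting to a homogeneous graph (note: in my test, if some node types have no features, e.g. when the ogbn-mag graph is built without the preprocess argument, then after conversion to a homogeneous graph all nodes …)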

data.has_isolated_nodes()

data.has_self_loops()

data.is_undirected()

homogeneous_data = data.to_homogeneous()

- Using torch_geometric.transforms to transform a heterogeneous graph object (many of these mirror the operations available on homogeneous graphs):

data = T.ToUndirected()(data)

data = T.AddSelfLoops()(data)

data = T.NormalizeFeatures()(data)

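Converting a heterogeneous graph to an undirected one adds reverse edges so that messages can propagate in both directions along every edge; where necessary, new reverse edge types are added as well.

Example:

import torch_geometric.transforms as T

T.ToUndirected()(data)

Output: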

HeteroData(
  paper={
    x=[736389, 128],
    year=[736389],
    y=[736389],
    train_mask=[736389],
    val_mask=[736389],
    test_mask=[736389]
  },
  author={ x=[1134649, 128] },
  institution={ x=[8740, 128] },
  field_of_study={ x=[59965, 128] },
  (author, affiliated_with, institution)={ edge_index=[2, 1043998] },
  (author, writes, paper)={ edge_index=[2, 7145660] },
  (paper, cites, paper)={ edge_index=[2, 10792672] },
  (paper, has_topic, field_of_study)={ edge_index=[2, 7505078] },
  (institution, rev_affiliated_with, author)={ edge_index=[2, 1043998] },
  (paper, rev_writes, author)={ edge_index=[2, 7145660] },
  (field_of_study, rev_has_topic, paper)={ edge_index=[2, 7505078] }
)

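Adding self-loops: they are added to every edge type whose source and destination node types are the same (e.g. (paper, cites, paper)); edge types between two different node types are left unchanged.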
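The effect of T.ToUndirected() on the printed object above can be sketched in plain Python. This is only an illustration of the idea, not PyG's implementation: `to_undirected_sketch` and the toy edge lists are hypothetical. Edge types between two different node types gain a new `rev_` edge type, while an edge type that connects a node type to itself is symmetrized in place with duplicates removed. That is consistent with (paper, cites, paper) growing from 5,416,271 to 10,792,672 edges in the output, slightly less than double.

```python
def to_undirected_sketch(edges_by_type):
    """edges_by_type maps (src, rel, dst) -> list of (u, v) index pairs."""
    out = {}
    for (src, rel, dst), edges in edges_by_type.items():
        if src == dst:
            # Same node type on both ends: add flipped edges to the same
            # edge type and drop duplicates (already-mutual edges).
            both = set(edges) | {(v, u) for u, v in edges}
            out[(src, rel, dst)] = sorted(both)
        else:
            # Different node types: keep the original edges and add a
            # new reverse edge type with flipped endpoints.
            out[(src, rel, dst)] = list(edges)
            out[(dst, 'rev_' + rel, src)] = [(v, u) for u, v in edges]
    return out


toy = {
    ('paper', 'cites', 'paper'): [(0, 1), (1, 0), (1, 2)],
    ('author', 'writes', 'paper'): [(0, 0), (0, 2)],
}
undirected = to_undirected_sketch(toy)
for edge_type, edges in undirected.items():
    print(edge_type, edges)
```

In the toy input, papers 0 and 1 already cite each other, so the symmetrized cites list has 4 edges rather than 6.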

4. Node representation models

lazy initialization:

with torch.no_grad():  # Initialize lazy modules.
    out = model(data.x_dict, data.edge_index_dict)

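Two things to check. First, tensor dtypes: edge_index must be torch.long, and x must use one consistent dtype (usually torch.float). Second, edge_index must not contain out-of-range indices (a mistake I often make is ending up with a node whose index equals the number of nodes).

For the bug caused by the first problem, see: AssertionError when implementing heterogenous GNN · Discussion #5175 · pyg-team/pytorch_geometric

To check for the second problem:

for edge_type in data.edge_types:
    src, _, dst = edge_type
    assert data[edge_type].edge_index[0].max() < data[src].num_nodes
    assert data[edge_type].edge_index[1].max() < data[dst].num_nodes

For the fix, see my earlier post: RuntimeError: CUDA error: device-side assert triggered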
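When an assert like the one above fails, it helps to know which edge type and which side is at fault. Here is a plain-Python sketch of the same bounds check that reports offenders instead of stopping at the first one. The `find_out_of_range` helper and the toy data are hypothetical, and edge indices are ordinary lists rather than tensors.

```python
def find_out_of_range(edge_index_by_type, num_nodes):
    """Return (edge_type, side, max_index, limit) for each violated bound."""
    problems = []
    for (src, rel, dst), (rows, cols) in edge_index_by_type.items():
        for side, ids, node_type in (('source', rows, src),
                                     ('target', cols, dst)):
            limit = num_nodes[node_type]
            # Valid indices are 0 .. limit - 1, so max(ids) == limit is
            # already out of range.
            if ids and max(ids) >= limit:
                problems.append(((src, rel, dst), side, max(ids), limit))
    return problems


num_nodes = {'author': 2, 'paper': 3}
edge_index_by_type = {
    # Target index 3 equals num_nodes['paper'], which is invalid.
    ('author', 'writes', 'paper'): ([0, 1], [0, 3]),
    ('paper', 'cites', 'paper'): ([0, 1], [1, 2]),
}
for problem in find_out_of_range(edge_index_by_type, num_nodes):
    print(problem)
```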

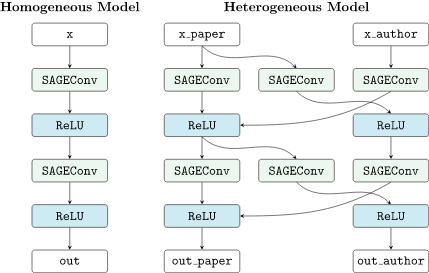

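4.1 Converting a homogeneous GNN directly into a heterogeneous GNN

That is, define a GNN model as usual (although some homogeneous GNNs cannot be applied to heterogeneous graphs). Converting it into a heterogeneous GNN means running one instance of the homogeneous GNN on each edge type.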

torch_geometric.nn.to_hetero()

torch_geometric.nn.to_hetero_with_bases()

Example code:

import torch
import torch_geometric.transforms as T
from torch_geometric.datasets import OGB_MAG
from torch_geometric.nn import SAGEConv, to_hetero

dataset = OGB_MAG(root='/data/pyg_data', preprocess='metapath2vec',
                  transform=T.ToUndirected())
data = dataset[0]


class GNN(torch.nn.Module):
    def __init__(self, hidden_channels, out_channels):
        super().__init__()
        self.conv1 = SAGEConv((-1, -1), hidden_channels)
        self.conv2 = SAGEConv((-1, -1), out_channels)

    def forward(self, x, edge_index):
        x = self.conv1(x, edge_index).relu()
        x = self.conv2(x, edge_index)
        return x


model = GNN(hidden_channels=64, out_channels=dataset.num_classes)
model = to_hetero(model, data.metadata(), aggr='sum')

in_channels is given as a tuple to support message passing on bipartite graphs, where source and destination nodes may have different feature dimensions (I have not fully understood this myself). In this example an int works as well:

import torch
import torch_geometric.transforms as T
from torch_geometric.datasets import OGB_MAG
from torch_geometric.nn import SAGEConv, to_hetero

dataset = OGB_MAG(root='/data/pyg_data', preprocess='metapath2vec',
                  transform=T.ToUndirected())
data = dataset[0]


class GNN(torch.nn.Module):
    def __init__(self, hidden_channels, out_channels):
        super().__init__()
        self.conv1 = SAGEConv(-1, hidden_channels)
        self.conv2 = SAGEConv(-1, out_channels)

    def forward(self, x, edge_index):
        x = self.conv1(x, edge_index).relu()
        x = self.conv2(x, edge_index)
        return x


model = GNN(hidden_channels=64, out_channels=dataset.num_classes)
model = to_hetero(model, data.metadata(), aggr='sum')

Without the conversion to an undirected graph, author nodes have no incoming edges, which leads to a NotImplementedError. The warning emitted is:

env_path/lib/python3.8/site-packages/torch_geometric/nn/to_hetero_transformer.py:145: UserWarning: There exist node types ({'author'}) whose representations do not get updated during message passing as they do not occur as destination type in any edge type. This may lead to unexpected behaviour.
  warnings.warn(
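The condition behind this warning can be checked from the metadata alone. The sketch below uses a hypothetical helper, `node_types_without_incoming_edges`, and the ogbn-mag node and edge types from the printed object earlier in the post. It lists node types that never appear as the destination of any edge type.

```python
def node_types_without_incoming_edges(node_types, edge_types):
    """Node types that are never the destination of any edge type."""
    destinations = {dst for _, _, dst in edge_types}
    return [t for t in node_types if t not in destinations]


node_types = ['paper', 'author', 'institution', 'field_of_study']
edge_types = [
    ('author', 'affiliated_with', 'institution'),
    ('author', 'writes', 'paper'),
    ('paper', 'cites', 'paper'),
    ('paper', 'has_topic', 'field_of_study'),
]
print(node_types_without_incoming_edges(node_types, edge_types))
# prints ['author']

# Adding a reverse edge type, as T.ToUndirected() does, removes the issue.
with_reverse = edge_types + [('paper', 'rev_writes', 'author')]
print(node_types_without_incoming_edges(node_types, with_reverse))
# prints []
```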

A version with learnable skip connections (each layer's convolution output is added to a linear transformation of that layer's input):

import torch
from torch_geometric.nn import GATConv, Linear, to_hetero


class GAT(torch.nn.Module):
    def __init__(self, hidden_channels, out_channels):
        super().__init__()
        self.conv1 = GATConv((-1, -1), hidden_channels, add_self_loops=False)
        self.lin1 = Linear(-1, hidden_channels)
        self.conv2 = GATConv((-1, -1), out_channels, add_self_loops=False)
        self.lin2 = Linear(-1, out_channels)

    def forward(self, x, edge_index):
        x = self.conv1(x, edge_index) + self.lin1(x)
        x = x.relu()
        x = self.conv2(x, edge_index) + self.lin2(x)
        return x


model = GAT(hidden_channels=64, out_channels=dataset.num_classes)
model = to_hetero(model, data.metadata(), aggr='sum')

Reference training code:

import torch.nn.functional as F


# Assumes `optimizer` has already been created for `model`.
def train():
    model.train()
    optimizer.zero_grad()
    out = model(data.x_dict, data.edge_index_dict)
    mask = data['paper'].train_mask
    loss = F.cross_entropy(out['paper'][mask], data['paper'].y[mask])
    loss.backward()
    optimizer.step()
    return float(loss)

4.2 Defining a GNN with HeteroConv

Different GNN operators can be assigned to different edge types.

torch_geometric.nn.conv.HeteroConv

Documentation: https://pytorch-geometric.readthedocs.io/en/latest/modules/nn.html#torch_geometric.nn.conv.HeteroConv
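The dispatch idea can be sketched in plain Python before looking at the real API: one operator per edge type, with the results summed per destination node type. Everything here is a toy under stated assumptions. Node features are single floats, and `hetero_conv_sketch`, `sum_of_neighbors`, and `mean_of_neighbors` are hypothetical stand-ins rather than PyG functions.

```python
def sum_of_neighbors(x_src, x_dst, edges):
    """Toy operator: each target node sums the features of its sources."""
    out = [0.0] * len(x_dst)
    for u, v in edges:
        out[v] += x_src[u]
    return out


def mean_of_neighbors(x_src, x_dst, edges):
    """Toy operator: each target node averages the features of its sources."""
    sums = [0.0] * len(x_dst)
    counts = [0] * len(x_dst)
    for u, v in edges:
        sums[v] += x_src[u]
        counts[v] += 1
    return [s / c if c else 0.0 for s, c in zip(sums, counts)]


def hetero_conv_sketch(operators, x_dict, edges_dict):
    """Run one operator per edge type, then sum per destination type."""
    out = {}
    for (src, rel, dst), op in operators.items():
        result = op(x_dict[src], x_dict[dst], edges_dict[(src, rel, dst)])
        if dst in out:
            out[dst] = [a + b for a, b in zip(out[dst], result)]
        else:
            out[dst] = result
    return out


x_dict = {'paper': [1.0, 2.0], 'author': [10.0]}
edges_dict = {
    ('paper', 'cites', 'paper'): [(0, 1)],
    ('author', 'writes', 'paper'): [(0, 0), (0, 1)],
}
operators = {
    ('paper', 'cites', 'paper'): sum_of_neighbors,
    ('author', 'writes', 'paper'): mean_of_neighbors,
}
out = hetero_conv_sketch(operators, x_dict, edges_dict)
print(out)  # {'paper': [10.0, 11.0]}
```

In this sketch 'author' does not appear in the output because no edge type has it as destination, which is the same situation the warning in Section 4.1 describes.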

Example code:

import torch
import torch_geometric.transforms as T
from torch_geometric.datasets import OGB_MAG
from torch_geometric.nn import HeteroConv, GCNConv, SAGEConv, GATConv, Linear

dataset = OGB_MAG(root='/data/pyg_data', preprocess='metapath2vec',
                  transform=T.ToUndirected())
data = dataset[0]


class HeteroGNN(torch.nn.Module):
    def __init__(self, hidden_channels, out_channels, num_layers):
        super().__init__()
        self.convs = torch.nn.ModuleList()
        for _ in range(num_layers):
            conv = HeteroConv({
                ('paper', 'cites', 'paper'): GCNConv(-1, hidden_channels),
                ('author', 'writes', 'paper'): SAGEConv((-1, -1), hidden_channels),
                ('paper', 'rev_writes', 'author'): GATConv((-1, -1), hidden_channels),
            }, aggr='sum')
            self.convs.append(conv)

        self.lin = Linear(hidden_channels, out_channels)

    def forward(self, x_dict, edge_index_dict):
        for conv in self.convs:
            x_dict = conv(x_dict, edge_index_dict)
            x_dict = {key: x.relu() for key, x in x_dict.items()}
        return self.lin(x_dict['author'])


model = HeteroGNN(hidden_channels=64, out_channels=dataset.num_classes,
                  num_layers=2)

4.3 Using existing or hand-written heterogeneous graph operators

Taking the HGT model as an example:

import torch
import torch_geometric.transforms as T
from torch_geometric.datasets import OGB_MAG
from torch_geometric.nn import HGTConv, Linear

dataset = OGB_MAG(root='/data/pyg_data', preprocess='metapath2vec',
                  transform=T.ToUndirected())
data = dataset[0]


class HGT(torch.nn.Module):
    def __init__(self, hidden_channels, out_channels, num_heads, num_layers):
        super().__init__()
        self.lin_dict = torch.nn.ModuleDict()
        for node_type in data.node_types:
            self.lin_dict[node_type] = Linear(-1, hidden_channels)

        self.convs = torch.nn.ModuleList()
        for _ in range(num_layers):
            conv = HGTConv(hidden_channels, hidden_channels, data.metadata(),
                           num_heads, group='sum')
            self.convs.append(conv)

        self.lin = Linear(hidden_channels, out_channels)

    def forward(self, x_dict, edge_index_dict):
        for node_type, x in x_dict.items():
            x_dict[node_type] = self.lin_dict[node_type](x).relu_()

        for conv in self.convs:
            x_dict = conv(x_dict, edge_index_dict)

        return self.lin(x_dict['author'])


model = HGT(hidden_channels=64, out_channels=dataset.num_classes,
            num_heads=2, num_layers=2)

5. Node classification

5.1 whole-batch

5.1.1 Using existing heterogeneous graph operators

5.1.1.1 HAN

Reference: https://github.com/pyg-team/pytorch_geometric/blob/master/examples/hetero/han_imdb.py
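The script in this section builds its graph with T.AddMetaPaths, using the metapaths movie → actor → movie and movie → director → movie. The core idea, connecting two movies whenever they share an intermediate node, can be sketched in plain Python by composing two edge lists. This is only an illustration with a hypothetical `compose_metapath` helper and toy data; PyG's transform operates on the real edge indices and may differ in details.

```python
def compose_metapath(first_hop, second_hop):
    """Connect a -> c whenever edges a -> b and b -> c both exist."""
    by_source = {}
    for b, c in second_hop:
        by_source.setdefault(b, set()).add(c)
    pairs = set()
    for a, b in first_hop:
        for c in by_source.get(b, ()):
            pairs.add((a, c))
    return sorted(pairs)


# Toy data: movies 0 and 1 share actor 0; movie 2 has its own actor 1.
movie_to_actor = [(0, 0), (1, 0), (2, 1)]
actor_to_movie = [(a, m) for m, a in movie_to_actor]

movie_actor_movie = compose_metapath(movie_to_actor, actor_to_movie)
print(movie_actor_movie)
# prints [(0, 0), (0, 1), (1, 0), (1, 1), (2, 2)]
```

Every movie is connected to itself in this sketch, since a movie trivially shares its own actors.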

from typing import Dict, List, Union

import torch
import torch.nn.functional as F
from torch import nn

import torch_geometric.transforms as T
from torch_geometric.datasets import IMDB
from torch_geometric.nn import HANConv

metapaths = [[('movie', 'actor'), ('actor', 'movie')],
             [('movie', 'director'), ('director', 'movie')]]
transform = T.AddMetaPaths(metapaths=metapaths, drop_orig_edge_types=True,
                           drop_unconnected_node_types=True)
dataset = IMDB('/data/pyg_data/IMDB', transform=transform)
data = dataset[0]
print(data)


class HAN(nn.Module):
    def __init__(self, in_channels: Union[int, Dict[str, int]],
                 out_channels: int, hidden_channels=128, heads=8):
        super().__init__()
        self.han_conv = HANConv(in_channels, hidden_channels, heads=heads,
                                dropout=0.6, metadata=data.metadata())
        self.lin = nn.Linear(hidden_channels, out_channels)

    def forward(self, x_dict, edge_index_dict):
        out = self.han_conv(x_dict, edge_index_dict)
        out = self.lin(out['movie'])
        return out


model = HAN(in_channels=-1, out_channels=3)
device = torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')
data, model = data.to(device), model.to(device)

with torch.no_grad():  # Initialize lazy modules.
    out = model(data.x_dict, data.edge_index_dict)

optimizer = torch.optim.Adam(model.parameters(), lr=0.005, weight_decay=0.001)


def train() -> float:
    model.train()
    optimizer.zero_grad()
    out = model(data.x_dict, data.edge_index_dict)
    mask = data['movie'].train_mask
    loss = F.cross_entropy(out[mask], data['movie'].y[mask])
    loss.backward()
    optimizer.step()
    return float(loss)


@torch.no_grad()
def test() -> List[float]:
    model.eval()
    pred = model(data.x_dict, data.edge_index_dict).argmax(dim=-1)

    accs = []
    for split in ['train_mask', 'val_mask', 'test_mask']:
        mask = data['movie'][split]
        acc = (pred[mask] == data['movie'].y[mask]).sum() / mask.sum()
        accs.append(float(acc))
    return accs


best_val_acc = 0
start_patience = patience = 100
for epoch in range(1, 200):
    loss = train()
    train_acc, val_acc, test_acc = test()
    print(f'Epoch: {epoch:03d}, Loss: {loss:.4f}, Train: {train_acc:.4f}, '
          f'Val: {val_acc:.4f}, Test: {test_acc:.4f}')

    if best_val_acc <= val_acc:
        patience = start_patience
        best_val_acc = val_acc
    else:
        patience -= 1

    if patience <= 0:
        print('Stopping training as validation accuracy did not improve '
              f'for {start_patience} epochs')
        break

Output:

HeteroData(
  metapath_dict={
    (movie, metapath_0, movie)=[2],
    (movie, metapath_1, movie)=[2]
  },
  movie={
    x=[4278, 3066],
    y=[4278],
    train_mask=[4278],
    val_mask=[4278],
    test_mask=[4278]
  },
  (movie, metapath_0, movie)={ edge_index=[2, 85358] },
  (movie, metapath_1, movie)={ edge_index=[2, 17446] }
)

Epoch: 001, Loss: 1.1020, Train: 0.5125, Val: 0.4100, Test: 0.3890

Epoch: 002, Loss: 1.0783, Train: 0.5575, Val: 0.4075, Test: 0.3813

Epoch: 003, Loss: 1.0498, Train: 0.6350, Val: 0.4325, Test: 0.4112

Epoch: 004, Loss: 1.0205, Train: 0.7075, Val: 0.4850, Test: 0.4448

Epoch: 005, Loss: 0.9788, Train: 0.7375, Val: 0.5050, Test: 0.4669

Epoch: 006, Loss: 0.9410, Train: 0.7600, Val: 0.5225, Test: 0.4796

Epoch: 007, Loss: 0.8921, Train: 0.7750, Val: 0.5375, Test: 0.4937

Epoch: 008, Loss: 0.8517, Train: 0.8000, Val: 0.5475, Test: 0.5003

Epoch: 009, Loss: 0.7975, Train: 0.8175, Val: 0.5475, Test: 0.5135

Epoch: 010, Loss: 0.7488, Train: 0.8475, Val: 0.5525, Test: 0.5216

Epoch: 011, Loss: 0.7133, Train: 0.8625, Val: 0.5575, Test: 0.5308

Epoch: 012, Loss: 0.6626, Train: 0.8875, Val: 0.5700, Test: 0.5443

Epoch: 013, Loss: 0.6171, Train: 0.9050, Val: 0.5900, Test: 0.5552

Epoch: 014, Loss: 0.5769, Train: 0.9225, Val: 0.5925, Test: 0.5710

Epoch: 015, Loss: 0.5236, Train: 0.9375, Val: 0.5900, Test: 0.5785

Epoch: 016, Loss: 0.4929, Train: 0.9425, Val: 0.5925, Test: 0.5851

Epoch: 017, Loss: 0.4456, Train: 0.9375, Val: 0.5925, Test: 0.5868

Epoch: 018, Loss: 0.4266, Train: 0.9375, Val: 0.5825, Test: 0.5909

Epoch: 019, Loss: 0.3856, Train: 0.9425, Val: 0.5900, Test: 0.5926

Epoch: 020, Loss: 0.3525, Train: 0.9425, Val: 0.5900, Test: 0.5909

Epoch: 021, Loss: 0.3250, Train: 0.9450, Val: 0.5975, Test: 0.5897

Epoch: 022, Loss: 0.2900, Train: 0.9500, Val: 0.6050, Test: 0.5831

Epoch: 023, Loss: 0.2754, Train: 0.9525, Val: 0.6075, Test: 0.5825

Epoch: 024, Loss: 0.2603, Train: 0.9500, Val: 0.6075, Test: 0.5802

Epoch: 025, Loss: 0.2436, Train: 0.9500, Val: 0.6050, Test: 0.5739

Epoch: 026, Loss: 0.2251, Train: 0.9525, Val: 0.6000, Test: 0.5722

Epoch: 027, Loss: 0.2156, Train: 0.9500, Val: 0.6000, Test: 0.5733

Epoch: 028, Loss: 0.2077, Train: 0.9525, Val: 0.5950, Test: 0.5702

Epoch: 029, Loss: 0.1806, Train: 0.9550, Val: 0.5900, Test: 0.5699

Epoch: 030, Loss: 0.1942, Train: 0.9675, Val: 0.5975, Test: 0.5707

Epoch: 031, Loss: 0.1899, Train: 0.9750, Val: 0.6050, Test: 0.5693

Epoch: 032, Loss: 0.1879, Train: 0.9800, Val: 0.6050, Test: 0.5687

Epoch: 033, Loss: 0.1759, Train: 0.9825, Val: 0.6000, Test: 0.5684

Epoch: 034, Loss: 0.1706, Train: 0.9825, Val: 0.5950, Test: 0.5670

Epoch: 035, Loss: 0.1678, Train: 0.9800, Val: 0.5925, Test: 0.5656

Epoch: 036, Loss: 0.1655, Train: 0.9750, Val: 0.5950, Test: 0.5647

Epoch: 037, Loss: 0.1561, Train: 0.9750, Val: 0.6025, Test: 0.5656

Epoch: 038, Loss: 0.1588, Train: 0.9775, Val: 0.6025, Test: 0.5644

Epoch: 039, Loss: 0.1502, Train: 0.9750, Val: 0.6025, Test: 0.5644

Epoch: 040, Loss: 0.1535, Train: 0.9775, Val: 0.6000, Test: 0.5638

Epoch: 041, Loss: 0.1502, Train: 0.9800, Val: 0.6000, Test: 0.5633

Epoch: 042, Loss: 0.1638, Train: 0.9800, Val: 0.6000, Test: 0.5621

Epoch: 043, Loss: 0.1530, Train: 0.9800, Val: 0.6000, Test: 0.5624

Epoch: 044, Loss: 0.1566, Train: 0.9800, Val: 0.5975, Test: 0.5624

Epoch: 045, Loss: 0.1578, Train: 0.9800, Val: 0.6150, Test: 0.5610

Epoch: 046, Loss: 0.1441, Train: 0.9800, Val: 0.6150, Test: 0.5615

Epoch: 047, Loss: 0.1430, Train: 0.9825, Val: 0.6175, Test: 0.5604

Epoch: 048, Loss: 0.1389, Train: 0.9875, Val: 0.6150, Test: 0.5578

Epoch: 049, Loss: 0.1396, Train: 0.9875, Val: 0.6200, Test: 0.5566

Epoch: 050, Loss: 0.1547, Train: 0.9875, Val: 0.6150, Test: 0.5610

Epoch: 051, Loss: 0.1471, Train: 0.9875, Val: 0.6125, Test: 0.5644

Epoch: 052, Loss: 0.1398, Train: 0.9900, Val: 0.6150, Test: 0.5647

Epoch: 053, Loss: 0.1393, Train: 0.9875, Val: 0.6125, Test: 0.5644

Epoch: 054, Loss: 0.1542, Train: 0.9850, Val: 0.6075, Test: 0.5638

Epoch: 055, Loss: 0.1435, Train: 0.9875, Val: 0.6150, Test: 0.5627

Epoch: 056, Loss: 0.1338, Train: 0.9850, Val: 0.6225, Test: 0.5633

Epoch: 057, Loss: 0.1311, Train: 0.9875, Val: 0.6125, Test: 0.5618

Epoch: 058, Loss: 0.1353, Train: 0.9900, Val: 0.6150, Test: 0.5592

Epoch: 059, Loss: 0.1308, Train: 0.9900, Val: 0.6050, Test: 0.5581

Epoch: 060, Loss: 0.1369, Train: 0.9900, Val: 0.6100, Test: 0.5584

Epoch: 061, Loss: 0.1303, Train: 0.9900, Val: 0.6075, Test: 0.5581

Epoch: 062, Loss: 0.1279, Train: 0.9900, Val: 0.6025, Test: 0.5604

Epoch: 063, Loss: 0.1355, Train: 0.9875, Val: 0.6025, Test: 0.5621

Epoch: 064, Loss: 0.1184, Train: 0.9925, Val: 0.6075, Test: 0.5664

Epoch: 065, Loss: 0.1291, Train: 0.9925, Val: 0.6025, Test: 0.5690

Epoch: 066, Loss: 0.1242, Train: 0.9900, Val: 0.6000, Test: 0.5676

Epoch: 067, Loss: 0.1238, Train: 0.9900, Val: 0.6025, Test: 0.5670

Epoch: 068, Loss: 0.1121, Train: 0.9900, Val: 0.6025, Test: 0.5656

Epoch: 069, Loss: 0.1126, Train: 0.9900, Val: 0.6050, Test: 0.5635

Epoch: 070, Loss: 0.1208, Train: 0.9900, Val: 0.6050, Test: 0.5612

Epoch: 071, Loss: 0.1059, Train: 0.9900, Val: 0.6075, Test: 0.5589

Epoch: 072, Loss: 0.1098, Train: 0.9900, Val: 0.6025, Test: 0.5581

Epoch: 073, Loss: 0.1198, Train: 0.9950, Val: 0.5950, Test: 0.5598

Epoch: 074, Loss: 0.1214, Train: 0.9925, Val: 0.5925, Test: 0.5621

Epoch: 075, Loss: 0.1016, Train: 0.9925, Val: 0.5950, Test: 0.5601

Epoch: 076, Loss: 0.1145, Train: 0.9950, Val: 0.6000, Test: 0.5621

Epoch: 077, Loss: 0.1148, Train: 0.9950, Val: 0.6000, Test: 0.5615

Epoch: 078, Loss: 0.1135, Train: 0.9925, Val: 0.5975, Test: 0.5612

Epoch: 079, Loss: 0.1104, Train: 0.9925, Val: 0.6000, Test: 0.5624

Epoch: 080, Loss: 0.1108, Train: 0.9900, Val: 0.6050, Test: 0.5572

Epoch: 081, Loss: 0.0916, Train: 0.9900, Val: 0.6050, Test: 0.5561

Epoch: 082, Loss: 0.1275, Train: 0.9900, Val: 0.6025, Test: 0.5581

Epoch: 083, Loss: 0.0970, Train: 1.0000, Val: 0.6025, Test: 0.5607

Epoch: 084, Loss: 0.0923, Train: 1.0000, Val: 0.6025, Test: 0.5592

Epoch: 085, Loss: 0.1089, Train: 1.0000, Val: 0.6025, Test: 0.5598

Epoch: 086, Loss: 0.1032, Train: 1.0000, Val: 0.6025, Test: 0.5598

Epoch: 087, Loss: 0.0983, Train: 1.0000, Val: 0.6000, Test: 0.5615

Epoch: 088, Loss: 0.0982, Train: 1.0000, Val: 0.5950, Test: 0.5615

Epoch: 089, Loss: 0.0849, Train: 1.0000, Val: 0.5925, Test: 0.5607

Epoch: 090, Loss: 0.0982, Train: 0.9975, Val: 0.5900, Test: 0.5610

Epoch: 091, Loss: 0.1133, Train: 1.0000, Val: 0.5950, Test: 0.5650

Epoch: 092, Loss: 0.0890, Train: 1.0000, Val: 0.5950, Test: 0.5664

Epoch: 093, Loss: 0.0935, Train: 1.0000, Val: 0.6000, Test: 0.5658

Epoch: 094, Loss: 0.0935, Train: 1.0000, Val: 0.6050, Test: 0.5673

Epoch: 095, Loss: 0.1027, Train: 1.0000, Val: 0.6075, Test: 0.5681

Epoch: 096, Loss: 0.0914, Train: 0.9975, Val: 0.6000, Test: 0.5679

Epoch: 097, Loss: 0.0908, Train: 0.9975, Val: 0.5900, Test: 0.5690

Epoch: 098, Loss: 0.1003, Train: 1.0000, Val: 0.5900, Test: 0.5667

Epoch: 099, Loss: 0.0835, Train: 1.0000, Val: 0.5875, Test: 0.5670

Epoch: 100, Loss: 0.0968, Train: 1.0000, Val: 0.5900, Test: 0.5670

Epoch: 101, Loss: 0.0868, Train: 1.0000, Val: 0.5900, Test: 0.5679

Epoch: 102, Loss: 0.0906, Train: 1.0000, Val: 0.6000, Test: 0.5681

Epoch: 103, Loss: 0.0967, Train: 1.0000, Val: 0.5975, Test: 0.5681

Epoch: 104, Loss: 0.0983, Train: 1.0000, Val: 0.5925, Test: 0.5699

Epoch: 105, Loss: 0.0775, Train: 1.0000, Val: 0.5975, Test: 0.5681

Epoch: 106, Loss: 0.0840, Train: 1.0000, Val: 0.5950, Test: 0.5664

Epoch: 107, Loss: 0.0962, Train: 1.0000, Val: 0.5950, Test: 0.5633

Epoch: 108, Loss: 0.0900, Train: 1.0000, Val: 0.5950, Test: 0.5621

Epoch: 109, Loss: 0.0831, Train: 1.0000, Val: 0.5975, Test: 0.5644

Epoch: 110, Loss: 0.0844, Train: 1.0000, Val: 0.5950, Test: 0.5653

Epoch: 111, Loss: 0.1017, Train: 0.9975, Val: 0.5925, Test: 0.5667

Epoch: 112, Loss: 0.0833, Train: 0.9975, Val: 0.5950, Test: 0.5661

Epoch: 113, Loss: 0.0840, Train: 0.9975, Val: 0.5875, Test: 0.5670

Epoch: 114, Loss: 0.0809, Train: 0.9975, Val: 0.5900, Test: 0.5664

Epoch: 115, Loss: 0.0854, Train: 0.9975, Val: 0.5950, Test: 0.5673

Epoch: 116, Loss: 0.0896, Train: 0.9975, Val: 0.5975, Test: 0.5687

Epoch: 117, Loss: 0.0999, Train: 1.0000, Val: 0.5975, Test: 0.5664

Epoch: 118, Loss: 0.0890, Train: 1.0000, Val: 0.5950, Test: 0.5667

Epoch: 119, Loss: 0.0780, Train: 1.0000, Val: 0.5900, Test: 0.5658

Epoch: 120, Loss: 0.0751, Train: 1.0000, Val: 0.5875, Test: 0.5670

Epoch: 121, Loss: 0.0693, Train: 1.0000, Val: 0.5950, Test: 0.5661

Epoch: 122, Loss: 0.0822, Train: 1.0000, Val: 0.5975, Test: 0.5664

Epoch: 123, Loss: 0.0782, Train: 1.0000, Val: 0.5925, Test: 0.5635

Epoch: 124, Loss: 0.0791, Train: 1.0000, Val: 0.5950, Test: 0.5627

Epoch: 125, Loss: 0.0958, Train: 1.0000, Val: 0.6000, Test: 0.5644

Epoch: 126, Loss: 0.0764, Train: 1.0000, Val: 0.5950, Test: 0.5650

Epoch: 127, Loss: 0.0878, Train: 1.0000, Val: 0.5900, Test: 0.5650

Epoch: 128, Loss: 0.0679, Train: 1.0000, Val: 0.5900, Test: 0.5641

Epoch: 129, Loss: 0.0791, Train: 1.0000, Val: 0.5900, Test: 0.5647

Epoch: 130, Loss: 0.0809, Train: 1.0000, Val: 0.5900, Test: 0.5647

Epoch: 131, Loss: 0.0740, Train: 1.0000, Val: 0.5850, Test: 0.5661

Epoch: 132, Loss: 0.0694, Train: 1.0000, Val: 0.5825, Test: 0.5647

Epoch: 133, Loss: 0.0859, Train: 1.0000, Val: 0.5875, Test: 0.5633

Epoch: 134, Loss: 0.0833, Train: 0.9975, Val: 0.5875, Test: 0.5638

Epoch: 135, Loss: 0.0797, Train: 1.0000, Val: 0.5900, Test: 0.5656

Epoch: 136, Loss: 0.0867, Train: 1.0000, Val: 0.5950, Test: 0.5696

Epoch: 137, Loss: 0.0811, Train: 1.0000, Val: 0.5975, Test: 0.5696

Epoch: 138, Loss: 0.0710, Train: 1.0000, Val: 0.5925, Test: 0.5713

Epoch: 139, Loss: 0.0603, Train: 1.0000, Val: 0.5950, Test: 0.5722

Epoch: 140, Loss: 0.0776, Train: 1.0000, Val: 0.5925, Test: 0.5719

Epoch: 141, Loss: 0.0705, Train: 1.0000, Val: 0.5975, Test: 0.5679

Epoch: 142, Loss: 0.0775, Train: 1.0000, Val: 0.5950, Test: 0.5679

Epoch: 143, Loss: 0.0700, Train: 1.0000, Val: 0.5975, Test: 0.5696

Epoch: 144, Loss: 0.0829, Train: 1.0000, Val: 0.5975, Test: 0.5727

Epoch: 145, Loss: 0.0697, Train: 1.0000, Val: 0.6000, Test: 0.5727

Epoch: 146, Loss: 0.0697, Train: 1.0000, Val: 0.6025, Test: 0.5750

Epoch: 147, Loss: 0.0706, Train: 1.0000, Val: 0.6075, Test: 0.5727

Epoch: 148, Loss: 0.0723, Train: 1.0000, Val: 0.5975, Test: 0.5690

Epoch: 149, Loss: 0.0771, Train: 1.0000, Val: 0.5950, Test: 0.5696

Epoch: 150, Loss: 0.0650, Train: 1.0000, Val: 0.6025, Test: 0.5699

Epoch: 151, Loss: 0.0802, Train: 1.0000, Val: 0.5950, Test: 0.5676

Epoch: 152, Loss: 0.0687, Train: 1.0000, Val: 0.5925, Test: 0.5710

Epoch: 153, Loss: 0.0705, Train: 1.0000, Val: 0.5925, Test: 0.5704

Epoch: 154, Loss: 0.0831, Train: 1.0000, Val: 0.5925, Test: 0.5696

Epoch: 155, Loss: 0.0714, Train: 1.0000, Val: 0.5900, Test: 0.5690

Epoch: 156, Loss: 0.0662, Train: 1.0000, Val: 0.5850, Test: 0.5635

Stopping training as validation accuracy did not improve for 100 epochs
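In the log above, the best validation accuracy (0.6225) is reached at epoch 56 and training stops at epoch 156, exactly 100 epochs later. The patience logic from the training loop can be isolated as a small plain-Python function to see why. The function name `early_stopping_epoch` and the toy accuracy list are made up for illustration.

```python
def early_stopping_epoch(val_accs, start_patience):
    """Return the 1-based epoch at which training stops, or None."""
    best_val_acc = 0.0
    patience = start_patience
    for epoch, val_acc in enumerate(val_accs, start=1):
        if best_val_acc <= val_acc:
            # A new best (ties included) resets the patience counter.
            patience = start_patience
            best_val_acc = val_acc
        else:
            patience -= 1
        if patience <= 0:
            return epoch
    return None


# Toy validation curve: the best value is at epoch 3.
toy_val_accs = [0.40, 0.50, 0.62, 0.61, 0.60, 0.61]
print(early_stopping_epoch(toy_val_accs, start_patience=3))  # prints 6
print(early_stopping_epoch(toy_val_accs, start_patience=4))  # prints None
```

With a patience of 3, the run stops 3 epochs after the best epoch, mirroring the 56 + 100 = 156 pattern in the real log.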

5.1.1.2 HGT

Reference: https://github.com/pyg-team/pytorch_geometric/blob/master/examples/hetero/hgt_dblp.py

Example code:

import torch
import torch.nn.functional as F

import torch_geometric.transforms as T
from torch_geometric.datasets import DBLP
from torch_geometric.nn import HGTConv, Linear

dataset = DBLP('/data/pyg_data/DBLP', transform=T.Constant(node_types='conference'))
data = dataset[0]
print(data)


class HGT(torch.nn.Module):
    def __init__(self, hidden_channels, out_channels, num_heads, num_layers):
        super().__init__()
        self.lin_dict = torch.nn.ModuleDict()
        for node_type in data.node_types:
            self.lin_dict[node_type] = Linear(-1, hidden_channels)

        self.convs = torch.nn.ModuleList()
        for _ in range(num_layers):
            conv = HGTConv(hidden_channels, hidden_channels, data.metadata(),
                           num_heads, group='sum')
            self.convs.append(conv)

        self.lin = Linear(hidden_channels, out_channels)

    def forward(self, x_dict, edge_index_dict):
        x_dict = {
            node_type: self.lin_dict[node_type](x).relu_()
            for node_type, x in x_dict.items()
        }

        for conv in self.convs:
            x_dict = conv(x_dict, edge_index_dict)

        return self.lin(x_dict['author'])


model = HGT(hidden_channels=64, out_channels=4, num_heads=2, num_layers=1)
device = torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')
data, model = data.to(device), model.to(device)

with torch.no_grad():  # Initialize lazy modules.
    out = model(data.x_dict, data.edge_index_dict)

optimizer = torch.optim.Adam(model.parameters(), lr=0.005, weight_decay=0.001)


def train():
    model.train()
    optimizer.zero_grad()
    out = model(data.x_dict, data.edge_index_dict)
    mask = data['author'].train_mask
    loss = F.cross_entropy(out[mask], data['author'].y[mask])
    loss.backward()
    optimizer.step()
    return float(loss)


@torch.no_grad()
def test():
    model.eval()
    pred = model(data.x_dict, data.edge_index_dict).argmax(dim=-1)

    accs = []
    for split in ['train_mask', 'val_mask', 'test_mask']:
        mask = data['author'][split]
        acc = (pred[mask] == data['author'].y[mask]).sum() / mask.sum()
        accs.append(float(acc))
    return accs


for epoch in range(1, 101):
    loss = train()
    train_acc, val_acc, test_acc = test()
    print(f'Epoch: {epoch:03d}, Loss: {loss:.4f}, Train: {train_acc:.4f}, '
          f'Val: {val_acc:.4f}, Test: {test_acc:.4f}')

Output:

HeteroData(
  author={
    x=[4057, 334],
    y=[4057],
    train_mask=[4057],
    val_mask=[4057],
    test_mask=[4057]
  },
  paper={ x=[14328, 4231] },
  term={ x=[7723, 50] },
  conference={
    num_nodes=20,
    x=[20, 1]
  },
  (author, to, paper)={ edge_index=[2, 19645] },
  (paper, to, author)={ edge_index=[2, 19645] },
  (paper, to, term)={ edge_index=[2, 85810] },
  (paper, to, conference)={ edge_index=[2, 14328] },
  (term, to, paper)={ edge_index=[2, 85810] },
  (conference, to, paper)={ edge_index=[2, 14328] }
)

Epoch: 001, Loss: 1.3967, Train: 0.2550, Val: 0.2700, Test: 0.2539

Epoch: 002, Loss: 1.3708, Train: 0.6750, Val: 0.4825, Test: 0.5272

Epoch: 003, Loss: 1.3459, Train: 0.6200, Val: 0.4525, Test: 0.5302

Epoch: 004, Loss: 1.3173, Train: 0.5675, Val: 0.4300, Test: 0.4992

Epoch: 005, Loss: 1.2809, Train: 0.5550, Val: 0.4150, Test: 0.4836

Epoch: 006, Loss: 1.2323, Train: 0.5825, Val: 0.4175, Test: 0.4814

Epoch: 007, Loss: 1.1662, Train: 0.6575, Val: 0.4325, Test: 0.5026

Epoch: 008, Loss: 1.0761, Train: 0.7475, Val: 0.4775, Test: 0.5511

Epoch: 009, Loss: 0.9564, Train: 0.8425, Val: 0.5575, Test: 0.6098

Epoch: 010, Loss: 0.8064, Train: 0.9400, Val: 0.6275, Test: 0.6831

Epoch: 011, Loss: 0.6348, Train: 0.9750, Val: 0.7125, Test: 0.7446

Epoch: 012, Loss: 0.4627, Train: 0.9950, Val: 0.7225, Test: 0.7768

Epoch: 013, Loss: 0.3151, Train: 0.9975, Val: 0.7275, Test: 0.7842

Epoch: 014, Loss: 0.2002, Train: 0.9975, Val: 0.7200, Test: 0.7835

Epoch: 015, Loss: 0.1142, Train: 0.9950, Val: 0.7200, Test: 0.7706

Epoch: 016, Loss: 0.0614, Train: 0.9950, Val: 0.7250, Test: 0.7596

Epoch: 017, Loss: 0.0336, Train: 1.0000, Val: 0.7125, Test: 0.7633

Epoch: 018, Loss: 0.0163, Train: 1.0000, Val: 0.6950, Test: 0.7676

Epoch: 019, Loss: 0.0068, Train: 1.0000, Val: 0.7150, Test: 0.7688

Epoch: 020, Loss: 0.0033, Train: 1.0000, Val: 0.7175, Test: 0.7630

Epoch: 021, Loss: 0.0022, Train: 1.0000, Val: 0.7200, Test: 0.7630

Epoch: 022, Loss: 0.0016, Train: 1.0000, Val: 0.7175, Test: 0.7587

Epoch: 023, Loss: 0.0010, Train: 1.0000, Val: 0.7175, Test: 0.7624

Epoch: 024, Loss: 0.0006, Train: 1.0000, Val: 0.7300, Test: 0.7621

Epoch: 025, Loss: 0.0003, Train: 1.0000, Val: 0.7300, Test: 0.7599

Epoch: 026, Loss: 0.0002, Train: 1.0000, Val: 0.7325, Test: 0.7571

Epoch: 027, Loss: 0.0002, Train: 1.0000, Val: 0.7425, Test: 0.7608

Epoch: 028, Loss: 0.0002, Train: 1.0000, Val: 0.7425, Test: 0.7633

Epoch: 029, Loss: 0.0002, Train: 1.0000, Val: 0.7475, Test: 0.7624

Epoch: 030, Loss: 0.0003, Train: 1.0000, Val: 0.7525, Test: 0.7636

Epoch: 031, Loss: 0.0005, Train: 1.0000, Val: 0.7475, Test: 0.7667

Epoch: 032, Loss: 0.0006, Train: 1.0000, Val: 0.7475, Test: 0.7663

Epoch: 033, Loss: 0.0007, Train: 1.0000, Val: 0.7550, Test: 0.7673

Epoch: 034, Loss: 0.0008, Train: 1.0000, Val: 0.7600, Test: 0.7682

Epoch: 035, Loss: 0.0008, Train: 1.0000, Val: 0.7625, Test: 0.7703

Epoch: 036, Loss: 0.0008, Train: 1.0000, Val: 0.7650, Test: 0.7722

Epoch: 037, Loss: 0.0008, Train: 1.0000, Val: 0.7650, Test: 0.7759

Epoch: 038, Loss: 0.0008, Train: 1.0000, Val: 0.7625, Test: 0.7722

Epoch: 039, Loss: 0.0009, Train: 1.0000, Val: 0.7550, Test: 0.7756

Epoch: 040, Loss: 0.0010, Train: 1.0000, Val: 0.7550, Test: 0.7734

Epoch: 041, Loss: 0.0010, Train: 1.0000, Val: 0.7525, Test: 0.7749

Epoch: 042, Loss: 0.0011, Train: 1.0000, Val: 0.7475, Test: 0.7743

Epoch: 043, Loss: 0.0011, Train: 1.0000, Val: 0.7500, Test: 0.7753

Epoch: 044, Loss: 0.0011, Train: 1.0000, Val: 0.7525, Test: 0.7746

Epoch: 045, Loss: 0.0012, Train: 1.0000, Val: 0.7500, Test: 0.7749

Epoch: 046, Loss: 0.0012, Train: 1.0000, Val: 0.7550, Test: 0.7762

Epoch: 047, Loss: 0.0013, Train: 1.0000, Val: 0.7575, Test: 0.7792

Epoch: 048, Loss: 0.0015, Train: 1.0000, Val: 0.7550, Test: 0.7808

Epoch: 049, Loss: 0.0016, Train: 1.0000, Val: 0.7525, Test: 0.7783

Epoch: 050, Loss: 0.0016, Train: 1.0000, Val: 0.7575, Test: 0.7808

Epoch: 051, Loss: 0.0016, Train: 1.0000, Val: 0.7600, Test: 0.7811

Epoch: 052, Loss: 0.0016, Train: 1.0000, Val: 0.7625, Test: 0.7842

Epoch: 053, Loss: 0.0017, Train: 1.0000, Val: 0.7600, Test: 0.7823

Epoch: 054, Loss: 0.0018, Train: 1.0000, Val: 0.7600, Test: 0.7835

Epoch: 055, Loss: 0.0019, Train: 1.0000, Val: 0.7600, Test: 0.7808

Epoch: 056, Loss: 0.0019, Train: 1.0000, Val: 0.7575, Test: 0.7820

Epoch: 057, Loss: 0.0019, Train: 1.0000, Val: 0.7600, Test: 0.7832

Epoch: 058, Loss: 0.0020, Train: 1.0000, Val: 0.7625, Test: 0.7848

Epoch: 059, Loss: 0.0021, Train: 1.0000, Val: 0.7625, Test: 0.7845

Epoch: 060, Loss: 0.0021, Train: 1.0000, Val: 0.7625, Test: 0.7839

Epoch: 061, Loss: 0.0022, Train: 1.0000, Val: 0.7650, Test: 0.7826

Epoch: 062, Loss: 0.0023, Train: 1.0000, Val: 0.7700, Test: 0.7826

Epoch: 063, Loss: 0.0023, Train: 1.0000, Val: 0.7700, Test: 0.7848

Epoch: 064, Loss: 0.0024, Train: 1.0000, Val: 0.7700, Test: 0.7820

Epoch: 065, Loss: 0.0025, Train: 1.0000, Val: 0.7700, Test: 0.7839

Epoch: 066, Loss: 0.0025, Train: 1.0000, Val: 0.7675, Test: 0.7826

Epoch: 067, Loss: 0.0026, Train: 1.0000, Val: 0.7675, Test: 0.7832

Epoch: 068, Loss: 0.0026, Train: 1.0000, Val: 0.7650, Test: 0.7854

Epoch: 069, Loss: 0.0027, Train: 1.0000, Val: 0.7650, Test: 0.7863

Epoch: 070, Loss: 0.0027, Train: 1.0000, Val: 0.7625, Test: 0.7866

Epoch: 071, Loss: 0.0028, Train: 1.0000, Val: 0.7625, Test: 0.7860

Epoch: 072, Loss: 0.0028, Train: 1.0000, Val: 0.7625, Test: 0.7872

Epoch: 073, Loss: 0.0028, Train: 1.0000, Val: 0.7625, Test: 0.7872

Epoch: 074, Loss: 0.0028, Train: 1.0000, Val: 0.7625, Test: 0.7860

Epoch: 075, Loss: 0.0029, Train: 1.0000, Val: 0.7625, Test: 0.7854

Epoch: 076, Loss: 0.0029, Train: 1.0000, Val: 0.7650, Test: 0.7863

Epoch: 077, Loss: 0.0029, Train: 1.0000, Val: 0.7600, Test: 0.7866

Epoch: 078, Loss: 0.0029, Train: 1.0000, Val: 0.7625, Test: 0.7875

Epoch: 079, Loss: 0.0030, Train: 1.0000, Val: 0.7625, Test: 0.7872

Epoch: 080, Loss: 0.0030, Train: 1.0000, Val: 0.7625, Test: 0.7885

Epoch: 081, Loss: 0.0030, Train: 1.0000, Val: 0.7625, Test: 0.7897

Epoch: 082, Loss: 0.0030, Train: 1.0000, Val: 0.7600, Test: 0.7894

Epoch: 083, Loss: 0.0030, Train: 1.0000, Val: 0.7600, Test: 0.7897

Epoch: 084, Loss: 0.0030, Train: 1.0000, Val: 0.7625, Test: 0.7900

Epoch: 085, Loss: 0.0030, Train: 1.0000, Val: 0.7625, Test: 0.7897

Epoch: 086, Loss: 0.0031, Train: 1.0000, Val: 0.7650, Test: 0.7903

Epoch: 087, Loss: 0.0031, Train: 1.0000, Val: 0.7650, Test: 0.7906

Epoch: 088, Loss: 0.0031, Train: 1.0000, Val: 0.7650, Test: 0.7909

Epoch: 089, Loss: 0.0031, Train: 1.0000, Val: 0.7675, Test: 0.7915

Epoch: 090, Loss: 0.0031, Train: 1.0000, Val: 0.7675, Test: 0.7915

Epoch: 091, Loss: 0.0031, Train: 1.0000, Val: 0.7675, Test: 0.7915

Epoch: 092, Loss: 0.0031, Train: 1.0000, Val: 0.7675, Test: 0.7912

Epoch: 093, Loss: 0.0031, Train: 1.0000, Val: 0.7675, Test: 0.7909

Epoch: 094, Loss: 0.0031, Train: 1.0000, Val: 0.7675, Test: 0.7909

Epoch: 095, Loss: 0.0031, Train: 1.0000, Val: 0.7675, Test: 0.7909

Epoch: 096, Loss: 0.0031, Train: 1.0000, Val: 0.7675, Test: 0.7909

Epoch: 097, Loss: 0.0031, Train: 1.0000, Val: 0.7675, Test: 0.7906

Epoch: 098, Loss: 0.0031, Train: 1.0000, Val: 0.7675, Test: 0.7912

Epoch: 099, Loss: 0.0031, Train: 1.0000, Val: 0.7675, Test: 0.7912

Epoch: 100, Loss: 0.0031, Train: 1.0000, Val: 0.7675, Test: 0.7915

5.1.2 Using HeteroConv

5.1.2.1 GraphSAGE

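Reference: https://github.com/pyg-team/pytorch_geometric/blob/master/examples/hetero/hetero_conv_dblp.py

(Node types that have no features in the original dataset, here the conference nodes, use the constant vector [1.] as their initial feature.)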

import torch
import torch.nn.functional as F

import torch_geometric.transforms as T
from torch_geometric.datasets import DBLP
from torch_geometric.nn import HeteroConv, Linear, SAGEConv

# We initialize conference node features with a single one-vector as feature:
dataset = DBLP('/data/pyg_data/DBLP', transform=T.Constant(node_types='conference'))
data = dataset[0]
print(data)


class HeteroGNN(torch.nn.Module):
    def __init__(self, metadata, hidden_channels, out_channels, num_layers):
        super().__init__()

        self.convs = torch.nn.ModuleList()
        for _ in range(num_layers):
            conv = HeteroConv({
                edge_type: SAGEConv((-1, -1), hidden_channels)
                for edge_type in metadata[1]
            })
            self.convs.append(conv)

        self.lin = Linear(hidden_channels, out_channels)

    def forward(self, x_dict, edge_index_dict):
        for conv in self.convs:
            x_dict = conv(x_dict, edge_index_dict)
            x_dict = {key: F.leaky_relu(x) for key, x in x_dict.items()}
        return self.lin(x_dict['author'])


model = HeteroGNN(data.metadata(), hidden_channels=64, out_channels=4,
                  num_layers=2)
device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
data, model = data.to(device), model.to(device)

with torch.no_grad():  # Initialize lazy modules.
    out = model(data.x_dict, data.edge_index_dict)

optimizer = torch.optim.Adam(model.parameters(), lr=0.005, weight_decay=0.001)


def train():
    model.train()
    optimizer.zero_grad()
    out = model(data.x_dict, data.edge_index_dict)
    mask = data['author'].train_mask
    loss = F.cross_entropy(out[mask], data['author'].y[mask])
    loss.backward()
    optimizer.step()
    return float(loss)


@torch.no_grad()
def test():
    model.eval()
    pred = model(data.x_dict, data.edge_index_dict).argmax(dim=-1)

    accs = []
    for split in ['train_mask', 'val_mask', 'test_mask']:
        mask = data['author'][split]
        acc = (pred[mask] == data['author'].y[mask]).sum() / mask.sum()
        accs.append(float(acc))
    return accs


for epoch in range(1, 101):
    loss = train()
    train_acc, val_acc, test_acc = test()
    print(f'Epoch: {epoch:03d}, Loss: {loss:.4f}, Train: {train_acc:.4f}, '
          f'Val: {val_acc:.4f}, Test: {test_acc:.4f}')

Output:

HeteroData(
  author={
    x=[4057, 334],
    y=[4057],
    train_mask=[4057],
    val_mask=[4057],
    test_mask=[4057]
  },
  paper={ x=[14328, 4231] },
  term={ x=[7723, 50] },
  conference={
    num_nodes=20,
    x=[20, 1]
  },
  (author, to, paper)={ edge_index=[2, 19645] },
  (paper, to, author)={ edge_index=[2, 19645] },
  (paper, to, term)={ edge_index=[2, 85810] },
  (paper, to, conference)={ edge_index=[2, 14328] },
  (term, to, paper)={ edge_index=[2, 85810] },
  (conference, to, paper)={ edge_index=[2, 14328] }
)

Epoch: 001, Loss: 1.3721, Train: 0.4550, Val: 0.3450, Test: 0.3819

Epoch: 002, Loss: 1.2867, Train: 0.6050, Val: 0.4800, Test: 0.5333

Epoch: 003, Loss: 1.1778, Train: 0.7175, Val: 0.5325, Test: 0.5941

Epoch: 004, Loss: 1.0368, Train: 0.8350, Val: 0.6050, Test: 0.6788

Epoch: 005, Loss: 0.8729, Train: 0.8950, Val: 0.6725, Test: 0.7228

Epoch: 006, Loss: 0.6991, Train: 0.9350, Val: 0.7025, Test: 0.7479

Epoch: 007, Loss: 0.5314, Train: 0.9600, Val: 0.7350, Test: 0.7765

Epoch: 008, Loss: 0.3831, Train: 0.9825, Val: 0.7525, Test: 0.8010

Epoch: 009, Loss: 0.2585, Train: 0.9900, Val: 0.7800, Test: 0.8189

Epoch: 010, Loss: 0.1632, Train: 0.9975, Val: 0.8025, Test: 0.8284

Epoch: 011, Loss: 0.0988, Train: 0.9975, Val: 0.8100, Test: 0.8293

Epoch: 012, Loss: 0.0578, Train: 1.0000, Val: 0.8025, Test: 0.8290

Epoch: 013, Loss: 0.0324, Train: 1.0000, Val: 0.8150, Test: 0.8296

Epoch: 014, Loss: 0.0178, Train: 1.0000, Val: 0.8075, Test: 0.8281

Epoch: 015, Loss: 0.0100, Train: 1.0000, Val: 0.8050, Test: 0.8281

Epoch: 016, Loss: 0.0060, Train: 1.0000, Val: 0.8050, Test: 0.8262

Epoch: 017, Loss: 0.0039, Train: 1.0000, Val: 0.8025, Test: 0.8235

Epoch: 018, Loss: 0.0027, Train: 1.0000, Val: 0.8100, Test: 0.8232

Epoch: 019, Loss: 0.0020, Train: 1.0000, Val: 0.8125, Test: 0.8235

Epoch: 020, Loss: 0.0017, Train: 1.0000, Val: 0.8125, Test: 0.8238

Epoch: 021, Loss: 0.0015, Train: 1.0000, Val: 0.8175, Test: 0.8268

Epoch: 022, Loss: 0.0014, Train: 1.0000, Val: 0.8175, Test: 0.8271

Epoch: 023, Loss: 0.0013, Train: 1.0000, Val: 0.8125, Test: 0.8274

Epoch: 024, Loss: 0.0014, Train: 1.0000, Val: 0.8150, Test: 0.8284

Epoch: 025, Loss: 0.0014, Train: 1.0000, Val: 0.8175, Test: 0.8281

Epoch: 026, Loss: 0.0015, Train: 1.0000, Val: 0.8175, Test: 0.8268

Epoch: 027, Loss: 0.0017, Train: 1.0000, Val: 0.8225, Test: 0.8225

Epoch: 028, Loss: 0.0019, Train: 1.0000, Val: 0.8250, Test: 0.8216

Epoch: 029, Loss: 0.0021, Train: 1.0000, Val: 0.8250, Test: 0.8198

Epoch: 030, Loss: 0.0024, Train: 1.0000, Val: 0.8225, Test: 0.8195

Epoch: 031, Loss: 0.0026, Train: 1.0000, Val: 0.8200, Test: 0.8192

Epoch: 032, Loss: 0.0029, Train: 1.0000, Val: 0.8225, Test: 0.8189

Epoch: 033, Loss: 0.0032, Train: 1.0000, Val: 0.8175, Test: 0.8185

Epoch: 034, Loss: 0.0035, Train: 1.0000, Val: 0.8200, Test: 0.8185

Epoch: 035, Loss: 0.0037, Train: 1.0000, Val: 0.8200, Test: 0.8176

Epoch: 036, Loss: 0.0038, Train: 1.0000, Val: 0.8225, Test: 0.8185

Epoch: 037, Loss: 0.0039, Train: 1.0000, Val: 0.8200, Test: 0.8176

Epoch: 038, Loss: 0.0041, Train: 1.0000, Val: 0.8175, Test: 0.8192

Epoch: 039, Loss: 0.0043, Train: 1.0000, Val: 0.8175, Test: 0.8204

Epoch: 040, Loss: 0.0044, Train: 1.0000, Val: 0.8150, Test: 0.8189

Epoch: 041, Loss: 0.0045, Train: 1.0000, Val: 0.8150, Test: 0.8173

Epoch: 042, Loss: 0.0046, Train: 1.0000, Val: 0.8175, Test: 0.8179

Epoch: 043, Loss: 0.0047, Train: 1.0000, Val: 0.8150, Test: 0.8170

Epoch: 044, Loss: 0.0047, Train: 1.0000, Val: 0.8175, Test: 0.8185

Epoch: 045, Loss: 0.0047, Train: 1.0000, Val: 0.8125, Test: 0.8195

Epoch: 046, Loss: 0.0047, Train: 1.0000, Val: 0.8150, Test: 0.8192

Epoch: 047, Loss: 0.0047, Train: 1.0000, Val: 0.8125, Test: 0.8182

Epoch: 048, Loss: 0.0047, Train: 1.0000, Val: 0.8075, Test: 0.8167

Epoch: 049, Loss: 0.0047, Train: 1.0000, Val: 0.8050, Test: 0.8158

Epoch: 050, Loss: 0.0047, Train: 1.0000, Val: 0.8000, Test: 0.8167

Epoch: 051, Loss: 0.0047, Train: 1.0000, Val: 0.8050, Test: 0.8170

Epoch: 052, Loss: 0.0047, Train: 1.0000, Val: 0.8100, Test: 0.8152

Epoch: 053, Loss: 0.0046, Train: 1.0000, Val: 0.8075, Test: 0.8149

Epoch: 054, Loss: 0.0046, Train: 1.0000, Val: 0.8075, Test: 0.8133

Epoch: 055, Loss: 0.0046, Train: 1.0000, Val: 0.8100, Test: 0.8139

Epoch: 056, Loss: 0.0046, Train: 1.0000, Val: 0.8100, Test: 0.8152

Epoch: 057, Loss: 0.0045, Train: 1.0000, Val: 0.8050, Test: 0.8149

Epoch: 058, Loss: 0.0045, Train: 1.0000, Val: 0.8025, Test: 0.8146

Epoch: 059, Loss: 0.0045, Train: 1.0000, Val: 0.8100, Test: 0.8139

Epoch: 060, Loss: 0.0044, Train: 1.0000, Val: 0.8125, Test: 0.8149

Epoch: 061, Loss: 0.0044, Train: 1.0000, Val: 0.8100, Test: 0.8149

Epoch: 062, Loss: 0.0043, Train: 1.0000, Val: 0.8025, Test: 0.8130

Epoch: 063, Loss: 0.0043, Train: 1.0000, Val: 0.8050, Test: 0.8124

Epoch: 064, Loss: 0.0042, Train: 1.0000, Val: 0.8050, Test: 0.8124

Epoch: 065, Loss: 0.0042, Train: 1.0000, Val: 0.8100, Test: 0.8127

Epoch: 066, Loss: 0.0041, Train: 1.0000, Val: 0.8100, Test: 0.8130

Epoch: 067, Loss: 0.0041, Train: 1.0000, Val: 0.8050, Test: 0.8121

Epoch: 068, Loss: 0.0040, Train: 1.0000, Val: 0.8050, Test: 0.8124

Epoch: 069, Loss: 0.0040, Train: 1.0000, Val: 0.8100, Test: 0.8118

Epoch: 070, Loss: 0.0039, Train: 1.0000, Val: 0.8075, Test: 0.8115

Epoch: 071, Loss: 0.0038, Train: 1.0000, Val: 0.8050, Test: 0.8109

Epoch: 072, Loss: 0.0038, Train: 1.0000, Val: 0.8075, Test: 0.8109

Epoch: 073, Loss: 0.0037, Train: 1.0000, Val: 0.8100, Test: 0.8099

Epoch: 074, Loss: 0.0037, Train: 1.0000, Val: 0.8100, Test: 0.8109

Epoch: 075, Loss: 0.0036, Train: 1.0000, Val: 0.8050, Test: 0.8106

Epoch: 076, Loss: 0.0036, Train: 1.0000, Val: 0.8075, Test: 0.8115

Epoch: 077, Loss: 0.0036, Train: 1.0000, Val: 0.8100, Test: 0.8112

Epoch: 078, Loss: 0.0035, Train: 1.0000, Val: 0.8075, Test: 0.8112

Epoch: 079, Loss: 0.0035, Train: 1.0000, Val: 0.8050, Test: 0.8112

Epoch: 080, Loss: 0.0035, Train: 1.0000, Val: 0.8050, Test: 0.8112

Epoch: 081, Loss: 0.0034, Train: 1.0000, Val: 0.8050, Test: 0.8115

Epoch: 082, Loss: 0.0034, Train: 1.0000, Val: 0.8050, Test: 0.8109

Epoch: 083, Loss: 0.0034, Train: 1.0000, Val: 0.8050, Test: 0.8109

Epoch: 084, Loss: 0.0034, Train: 1.0000, Val: 0.8050, Test: 0.8112

Epoch: 085, Loss: 0.0033, Train: 1.0000, Val: 0.8050, Test: 0.8106

Epoch: 086, Loss: 0.0033, Train: 1.0000, Val: 0.8025, Test: 0.8103

Epoch: 087, Loss: 0.0033, Train: 1.0000, Val: 0.8025, Test: 0.8103

Epoch: 088, Loss: 0.0032, Train: 1.0000, Val: 0.8025, Test: 0.8099

Epoch: 089, Loss: 0.0032, Train: 1.0000, Val: 0.8025, Test: 0.8106

Epoch: 090, Loss: 0.0032, Train: 1.0000, Val: 0.8025, Test: 0.8103

Epoch: 091, Loss: 0.0032, Train: 1.0000, Val: 0.8025, Test: 0.8106

Epoch: 092, Loss: 0.0031, Train: 1.0000, Val: 0.8025, Test: 0.8106

Epoch: 093, Loss: 0.0031, Train: 1.0000, Val: 0.8025, Test: 0.8109

Epoch: 094, Loss: 0.0031, Train: 1.0000, Val: 0.8025, Test: 0.8115

Epoch: 095, Loss: 0.0031, Train: 1.0000, Val: 0.8000, Test: 0.8121

Epoch: 096, Loss: 0.0030, Train: 1.0000, Val: 0.8025, Test: 0.8124

Epoch: 097, Loss: 0.0030, Train: 1.0000, Val: 0.8000, Test: 0.8130

Epoch: 098, Loss: 0.0030, Train: 1.0000, Val: 0.8025, Test: 0.8127

Epoch: 099, Loss: 0.0030, Train: 1.0000, Val: 0.7975, Test: 0.8124

Epoch: 100, Loss: 0.0030, Train: 1.0000, Val: 0.8000, Test: 0.8127

5.2 mini-batch

Available DataLoaders:

https://pytorch-geometric.readthedocs.io/en/latest/modules/loader.html#torch_geometric.loader.NeighborLoader

https://pytorch-geometric.readthedocs.io/en/latest/modules/loader.html#torch_geometric.loader.HGTLoader

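As with homogeneous graphs, these are convenient to use: each batch is returned directly as a HeteroData object.

Code template for building a DataLoader: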

import torch_geometric.transforms as T
from torch_geometric.datasets import OGB_MAG
from torch_geometric.loader import NeighborLoader

transform = T.ToUndirected()  # Add reverse edge types.
data = OGB_MAG(root='./data', preprocess='metapath2vec', transform=transform)[0]

train_loader = NeighborLoader(
    data,
    # Sample 15 neighbors for each node and each edge type for 2 iterations:
    num_neighbors=[15] * 2,
    # Use a batch size of 128 for sampling training nodes of type "paper":
    batch_size=128,
    input_nodes=('paper', data['paper'].train_mask),
)

batch = next(iter(train_loader))

The number of sampled neighbors can also be controlled at a finer granularity, per edge type: num_neighbors = {key: [15] * 2 for key in data.edge_types}
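As a rough sketch of what a per-edge-type setting might look like, the snippet below builds such a dictionary in plain Python. The edge types are written out by hand as an assumption (with a real dataset they would come from data.edge_types), and the helper name build_num_neighbors and the specific fan-out values are hypothetical, chosen only for illustration.

```python
# Edge types written out by hand for illustration; with a real HeteroData
# object you would iterate over data.edge_types instead.
edge_types = [
    ('author', 'writes', 'paper'),
    ('paper', 'cites', 'paper'),
    ('paper', 'has_topic', 'field_of_study'),
    ('paper', 'rev_writes', 'author'),
]


def build_num_neighbors(edge_types, default, overrides=None):
    """Return {edge_type: [fan-out per hop]} (hypothetical helper)."""
    overrides = overrides or {}
    return {et: list(overrides.get(et, default)) for et in edge_types}


# 15 neighbors per hop everywhere, except fewer citation edges in hop 2.
num_neighbors = build_num_neighbors(
    edge_types,
    default=[15, 15],
    overrides={('paper', 'cites', 'paper'): [15, 5]},
)

for edge_type, fanout in num_neighbors.items():
    print(edge_type, fanout)
```

A dictionary of this shape could then be passed as num_neighbors=num_neighbors when constructing the NeighborLoader; each list has one entry per sampling iteration (two here).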

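Note that batch_size is the number of seed nodes whose embeddings you want. Computing them requires the whole sampled batch, and the first batch_size embeddings in the output are the ones that belong to those seed nodes.

Code template for training: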

def train():
    model.train()

    total_examples = total_loss = 0
    for batch in train_loader:
        optimizer.zero_grad()
        batch = batch.to('cuda:0')
        batch_size = batch['paper'].batch_size
        out = model(batch.x_dict, batch.edge_index_dict)
        loss = F.cross_entropy(out['paper'][:batch_size],
                               batch['paper'].y[:batch_size])
        loss.backward()
        optimizer.step()

        total_examples += batch_size
        total_loss += float(loss) * batch_size

    return total_loss / total_examples
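To make the two bookkeeping details in this template concrete, here is a small dependency-free sketch (plain Python lists standing in for tensors, with made-up numbers). It illustrates that only the first batch_size rows of a batch belong to the seed nodes, and that weighting each batch loss by its batch_size gives a per-example mean even when the last batch is smaller. The function names seed_rows and epoch_mean_loss are hypothetical.

```python
def seed_rows(rows, batch_size):
    """Keep only the rows of the seed nodes, which come first in a batch."""
    return rows[:batch_size]


def epoch_mean_loss(batch_losses, batch_sizes):
    """Average per-batch mean losses, weighted by the number of seed nodes."""
    total_loss = 0.0
    total_examples = 0
    for loss, size in zip(batch_losses, batch_sizes):
        total_loss += loss * size
        total_examples += size
    return total_loss / total_examples


# A sampled batch with 2 seed nodes followed by 3 sampled neighbors.
outputs = ['seed0', 'seed1', 'nbr0', 'nbr1', 'nbr2']
print(seed_rows(outputs, batch_size=2))  # ['seed0', 'seed1']

# Two full batches of 128 seed nodes and a final batch of only 64.
losses = [2.0, 2.0, 1.0]
sizes = [128, 128, 64]
weighted = epoch_mean_loss(losses, sizes)
naive = sum(losses) / len(losses)
print(weighted, naive)
```

With these numbers the size-weighted mean is higher than the naive mean over batches, because the smaller last batch (which happens to have the lowest loss) counts for less.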

Using NeighborLoader with multiple worker processes can run into the odd problem reported in the following issue, so I recommend a single process: Heterogenous graph, use NeighborLoader with num_workers>0, and stucks after many epochs · Issue #5348 · pyg-team/pytorch_geometric

To get the indices in the original graph of the nodes in a mini-batch, see this discussion: I wonder how to use NeighborLoader correctly? · Discussion #3409 · pyg-team/pytorch_geometric

Example code (based on https://github.com/pyg-team/pytorch_geometric/blob/master/examples/hetero/to_hetero_mag.py):

import argparse
import os.path as osp

import torch
import torch.nn.functional as F
from torch.nn import ReLU
from tqdm import tqdm

import torch_geometric.transforms as T
from torch_geometric.datasets import OGB_MAG
from torch_geometric.loader import HGTLoader, NeighborLoader
from torch_geometric.nn import Linear, SAGEConv, Sequential, to_hetero

parser = argparse.ArgumentParser()
parser.add_argument('--use_hgt_loader', action='store_true')
args = parser.parse_args()

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

transform = T.ToUndirected(merge=True)
dataset = OGB_MAG('/data/pyg_data', preprocess='metapath2vec', transform=transform)

# Already send node features/labels to GPU for faster access during sampling:
data = dataset[0].to(device, 'x', 'y')

train_input_nodes = ('paper', data['paper'].train_mask)
val_input_nodes = ('paper', data['paper'].val_mask)
kwargs = {'batch_size': 1024, 'num_workers': 6, 'persistent_workers': True}

if not args.use_hgt_loader:
    train_loader = NeighborLoader(data, num_neighbors=[10] * 2, shuffle=True,
                                  input_nodes=train_input_nodes, **kwargs)
    val_loader = NeighborLoader(data, num_neighbors=[10] * 2,
                                input_nodes=val_input_nodes, **kwargs)
else:
    train_loader = HGTLoader(data, num_samples=[1024] * 4, shuffle=True,
                             input_nodes=train_input_nodes, **kwargs)
    val_loader = HGTLoader(data, num_samples=[1024] * 4,
                           input_nodes=val_input_nodes, **kwargs)

model = Sequential('x, edge_index', [
    (SAGEConv((-1, -1), 64), 'x, edge_index -> x'),
    ReLU(inplace=True),
    (SAGEConv((-1, -1), 64), 'x, edge_index -> x'),
    ReLU(inplace=True),
    (Linear(-1, dataset.num_classes), 'x -> x'),
])
model = to_hetero(model, data.metadata(), aggr='sum').to(device)


@torch.no_grad()
def init_params():
    # Initialize lazy parameters via forwarding a single batch to the model:
    batch = next(iter(train_loader))
    batch = batch.to(device, 'edge_index')
    model(batch.x_dict, batch.edge_index_dict)


def train():
    model.train()

    total_examples = total_loss = 0
    for batch in tqdm(train_loader):
        optimizer.zero_grad()
        batch = batch.to(device, 'edge_index')
        batch_size = batch['paper'].batch_size
        out = model(batch.x_dict, batch.edge_index_dict)['paper'][:batch_size]
        loss = F.cross_entropy(out, batch['paper'].y[:batch_size])
        loss.backward()
        optimizer.step()

        total_examples += batch_size
        total_loss += float(loss) * batch_size

    return total_loss / total_examples


@torch.no_grad()
def test(loader):
    model.eval()

    total_examples = total_correct = 0
    for batch in tqdm(loader):
        batch = batch.to(device, 'edge_index')
        batch_size = batch['paper'].batch_size
        out = model(batch.x_dict, batch.edge_index_dict)['paper'][:batch_size]
        pred = out.argmax(dim=-1)

        total_examples += batch_size
        total_correct += int((pred == batch['paper'].y[:batch_size]).sum())

    return total_correct / total_examples


init_params()  # Initialize parameters.
optimizer = torch.optim.Adam(model.parameters(), lr=0.01)

for epoch in range(1, 21):
    loss = train()
    val_acc = test(val_loader)
    print(f'Epoch: {epoch:02d}, Loss: {loss:.4f}, Val: {val_acc:.4f}')

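Output of the last epoch with NeighborLoader: Epoch: 20, Loss: 1.9040, Val: 0.4445

Output of the last epoch with HGTLoader: Epoch: 20, Loss: 2.0077, Val: 0.4271

(The progress bars make the full output too long, so I am not including all of it.)

Note on the example code: the to method of a PyG data object accepts attribute names, so you can move only selected attributes to another device (for example, data.to(device, 'x', 'y') above moves only x and y).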

6. Link prediction tasks

6.1 transductive

6.1.1 GraphSAGE encoder + MLP decoder + predicting user ratings

Reference: https://github.com/pyg-team/pytorch_geometric/blob/master/examples/hetero/hetero_link_pred.py

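- Convert GraphSAGE into a heterogeneous GNN (with to_hetero) and use it to encode the nodes

- Decoding node-pair representations (that is, producing a link prediction score for each node pair): concatenate the two node representations and pass them through a 2-layer MLP

- This is not a standard link prediction task, because the goal is to predict the rating on known ['user','rates','movie'] node pairs (discrete integers in the range 0-5), so it is treated as a regression task (the loss is a weighted MSE, because the 6 rating values are imbalanced)

  - At test time, predictions are clamped to the range 0-5 before computing the RMSE, which is the reported metric

- The MovieLens dataset is used. The original dataset has two node types:

  - Movie nodes: these only have text features (titles), which the code encodes with a SentenceTransformer model. Note that passing a local model directory as the model_name argument causes problems. The blunt workaround is to run once with the local path, then rename the stored object to use the model name, and from then on call the dataset with the model name. It is hard to explain briefly, so see this issue (I submitted a PR, but for some reason the maintainers have not merged it, so the manual change is still needed): Unable to process movie_lens dataset with local directory transformers model · Issue #5500 · pyg-team/pytorch_geometric

  - User nodes: these have no features, so the code uses one-hot encodings as the initial node features

  - The graph is converted to an undirected graph (which creates reverse edges)

- Data split: edges are randomly split 8-1-1 while the nodes stay the same. The training and validation graphs use the same edges; the test graph uses the training edges plus the edges on which the validation metric is computed. Because this is a regression task on known node pairs, no negative edges are needed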
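The weighted MSE and the clamped RMSE mentioned in the list above can be worked through by hand. The following is a minimal plain-Python sketch with made-up ratings, assuming the weights are computed as max_count / count per rating value (the same rule the script below applies with torch.bincount). Lists stand in for tensors, and the function names rating_weights, weighted_mse and clamped_rmse are hypothetical.

```python
import math


def rating_weights(labels, num_ratings=6):
    """Weight each rating value by max_count / count (rarer => larger)."""
    counts = [0] * num_ratings
    for label in labels:
        counts[label] += 1
    max_count = max(counts)
    # Ratings that never occur get weight inf; they are never looked up.
    return [max_count / c if c > 0 else math.inf for c in counts]


def weighted_mse(preds, targets, weights):
    """Mean of weights[target] * (pred - target)^2 over all edges."""
    terms = [weights[t] * (p - t) ** 2 for p, t in zip(preds, targets)]
    return sum(terms) / len(terms)


def clamped_rmse(preds, targets, low=0.0, high=5.0):
    """Clamp predictions to [low, high], then compute the RMSE."""
    clamped = [min(max(p, low), high) for p in preds]
    mse = sum((p - t) ** 2 for p, t in zip(clamped, targets)) / len(targets)
    return math.sqrt(mse)


# Made-up training labels: rating 4 is common, rating 1 is rare.
train_labels = [4, 4, 4, 4, 3, 3, 1]
weights = rating_weights(train_labels)
print(weights[4], weights[3], weights[1])  # 1.0 2.0 4.0

# The error on the rare rating 1 counts four times as much.
preds = [3.5, 2.0]
targets = [4, 1]
print(weighted_mse(preds, targets, weights))  # 2.125

# A prediction of 5.6 is clamped to 5.0 before scoring.
print(clamped_rmse([5.6, 3.0], [5, 4]))
```

Note that the weighting only affects the training loss; the RMSE used for evaluation is unweighted, so the two numbers are not directly comparable.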

import argparse

import torch
import torch.nn.functional as F
from torch.nn import Linear

import torch_geometric.transforms as T
from torch_geometric.datasets import MovieLens
from torch_geometric.nn import SAGEConv, to_hetero

parser = argparse.ArgumentParser()
parser.add_argument('--use_weighted_loss', action='store_true',
                    help='Whether to use weighted MSE loss.')
args = parser.parse_args()

device = torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')

dataset = MovieLens('/data/pyg_data/MovieLens', model_name='all-MiniLM-L6-v2')
data = dataset[0].to(device)

# Add user node features for message passing:
data['user'].x = torch.eye(data['user'].num_nodes, device=device)
del data['user'].num_nodes

# Add a reverse ('movie', 'rev_rates', 'user') relation for message passing:
data = T.ToUndirected()(data)
del data['movie', 'rev_rates', 'user'].edge_label  # Remove "reverse" label.

# Perform a link-level split into training, validation, and test edges:
train_data, val_data, test_data = T.RandomLinkSplit(
    num_val=0.1,
    num_test=0.1,
    neg_sampling_ratio=0.0,
    edge_types=[('user', 'rates', 'movie')],
    rev_edge_types=[('movie', 'rev_rates', 'user')],
)(data)

# We have an unbalanced dataset with many labels for rating 3 and 4, and very
# few for 0 and 1. Therefore we use a weighted MSE loss.
if args.use_weighted_loss:
    weight = torch.bincount(train_data['user', 'movie'].edge_label)
    weight = weight.max() / weight
else:
    weight = None


def weighted_mse_loss(pred, target, weight=None):
    weight = 1. if weight is None else weight[target].to(pred.dtype)
    return (weight * (pred - target.to(pred.dtype)).pow(2)).mean()


class GNNEncoder(torch.nn.Module):
    def __init__(self, hidden_channels, out_channels):
        super().__init__()
        self.conv1 = SAGEConv((-1, -1), hidden_channels)
        self.conv2 = SAGEConv((-1, -1), out_channels)

    def forward(self, x, edge_index):
        x = self.conv1(x, edge_index).relu()
        x = self.conv2(x, edge_index)
        return x


class EdgeDecoder(torch.nn.Module):
    def __init__(self, hidden_channels):
        super().__init__()
        self.lin1 = Linear(2 * hidden_channels, hidden_channels)
        self.lin2 = Linear(hidden_channels, 1)

    def forward(self, z_dict, edge_label_index):
        row, col = edge_label_index
        z = torch.cat([z_dict['user'][row], z_dict['movie'][col]], dim=-1)

        z = self.lin1(z).relu()
        z = self.lin2(z)
        return z.view(-1)


class Model(torch.nn.Module):
    def __init__(self, hidden_channels):
        super().__init__()
        self.encoder = GNNEncoder(hidden_channels, hidden_channels)
        self.encoder = to_hetero(self.encoder, data.metadata(), aggr='sum')
        self.decoder = EdgeDecoder(hidden_channels)

    def forward(self, x_dict, edge_index_dict, edge_label_index):
        z_dict = self.encoder(x_dict, edge_index_dict)
        return self.decoder(z_dict, edge_label_index)


model = Model(hidden_channels=32).to(device)

# Due to lazy initialization, we need to run one model step so the number
# of parameters can be inferred:
with torch.no_grad():
    model.encoder(train_data.x_dict, train_data.edge_index_dict)

optimizer = torch.optim.Adam(model.parameters(), lr=0.01)


def train():
    model.train()
    optimizer.zero_grad()
    pred = model(train_data.x_dict, train_data.edge_index_dict,
                 train_data['user', 'movie'].edge_label_index)
    target = train_data['user', 'movie'].edge_label
    loss = weighted_mse_loss(pred, target, weight)
    loss.backward()
    optimizer.step()
    return float(loss)


@torch.no_grad()
def test(data):
    model.eval()
    pred = model(data.x_dict, data.edge_index_dict,
                 data['user', 'movie'].edge_label_index)
    pred = pred.clamp(min=0, max=5)
    target = data['user', 'movie'].edge_label.float()
    rmse = F.mse_loss(pred, target).sqrt()
    return float(rmse)


for epoch in range(1, 301):
    loss = train()
    train_rmse = test(train_data)
    val_rmse = test(val_data)
    test_rmse = test(test_data)
    print(f'Epoch: {epoch:03d}, Loss: {loss:.4f}, Train: {train_rmse:.4f}, '
          f'Val: {val_rmse:.4f}, Test: {test_rmse:.4f}')

Output:

HeteroData(
  movie={ x=[9742, 404] },
  user={ x=[610, 610] },
  (user, rates, movie)={
    edge_index=[2, 100836],
    edge_label=[100836]
  },
  (movie, rev_rates, user)={ edge_index=[2, 100836] }
)

Epoch: 001, Loss: 11.1455, Train: 3.0880, Val: 3.0996, Test: 3.0917

Epoch: 002, Loss: 9.5358, Train: 2.6658, Val: 2.6792, Test: 2.6712

Epoch: 003, Loss: 7.1066, Train: 1.8713, Val: 1.8877, Test: 1.8804

Epoch: 004, Loss: 3.5019, Train: 1.1067, Val: 1.0977, Test: 1.1063

Epoch: 005, Loss: 1.2249, Train: 1.9740, Val: 1.9311, Test: 1.9472

Epoch: 006, Loss: 5.5210, Train: 1.6758, Val: 1.6408, Test: 1.6595

Epoch: 007, Loss: 2.8131, Train: 1.0975, Val: 1.0894, Test: 1.0977

Epoch: 008, Loss: 1.2045, Train: 1.2613, Val: 1.2734, Test: 1.2708

Epoch: 009, Loss: 1.5908, Train: 1.5404, Val: 1.5562, Test: 1.5505

Epoch: 010, Loss: 2.3730, Train: 1.6708, Val: 1.6869, Test: 1.6805

Epoch: 011, Loss: 2.7915, Train: 1.6564, Val: 1.6724, Test: 1.6661

Epoch: 012, Loss: 2.7436, Train: 1.5262, Val: 1.5418, Test: 1.5362

Epoch: 013, Loss: 2.3292, Train: 1.3166, Val: 1.3301, Test: 1.3266

Epoch: 014, Loss: 1.7333, Train: 1.1141, Val: 1.1205, Test: 1.1219

Epoch: 015, Loss: 1.2412, Train: 1.0907, Val: 1.0819, Test: 1.0912

Epoch: 016, Loss: 1.1896, Train: 1.2743, Val: 1.2524, Test: 1.2672

Epoch: 017, Loss: 1.6238, Train: 1.3905, Val: 1.3643, Test: 1.3808

Epoch: 018, Loss: 1.9334, Train: 1.3026, Val: 1.2797, Test: 1.2951

Epoch: 019, Loss: 1.6968, Train: 1.1325, Val: 1.1190, Test: 1.1307

Epoch: 020, Loss: 1.2826, Train: 1.0549, Val: 1.0534, Test: 1.0595

Epoch: 021, Loss: 1.1128, Train: 1.1005, Val: 1.1076, Test: 1.1089

Epoch: 022, Loss: 1.2111, Train: 1.1792, Val: 1.1902, Test: 1.1889

Epoch: 023, Loss: 1.3905, Train: 1.2222, Val: 1.2345, Test: 1.2323

Epoch: 024, Loss: 1.4938, Train: 1.2101, Val: 1.2222, Test: 1.2202

Epoch: 025, Loss: 1.4643, Train: 1.1525, Val: 1.1631, Test: 1.1623

Epoch: 026, Loss: 1.3283, Train: 1.0802, Val: 1.0871, Test: 1.0889

Epoch: 027, Loss: 1.1668, Train: 1.0385, Val: 1.0392, Test: 1.0446

Epoch: 028, Loss: 1.0784, Train: 1.0565, Val: 1.0501, Test: 1.0592

Epoch: 029, Loss: 1.1163, Train: 1.1062, Val: 1.0946, Test: 1.1060

Epoch: 030, Loss: 1.2236, Train: 1.1266, Val: 1.1135, Test: 1.1257

Epoch: 031, Loss: 1.2693, Train: 1.0953, Val: 1.0846, Test: 1.0959

Epoch: 032, Loss: 1.1998, Train: 1.0460, Val: 1.0404, Test: 1.0495

Epoch: 033, Loss: 1.0942, Train: 1.0229, Val: 1.0234, Test: 1.0295

Epoch: 034, Loss: 1.0464, Train: 1.0346, Val: 1.0399, Test: 1.0434

Epoch: 035, Loss: 1.0705, Train: 1.0578, Val: 1.0659, Test: 1.0677

Epoch: 036, Loss: 1.1189, Train: 1.0684, Val: 1.0775, Test: 1.0787

Epoch: 037, Loss: 1.1414, Train: 1.0578, Val: 1.0665, Test: 1.0681

Epoch: 038, Loss: 1.1190, Train: 1.0331, Val: 1.0400, Test: 1.0428

Epoch: 039, Loss: 1.0672, Train: 1.0112, Val: 1.0150, Test: 1.0196

Epoch: 040, Loss: 1.0224, Train: 1.0071, Val: 1.0070, Test: 1.0139

Epoch: 041, Loss: 1.0143, Train: 1.0195, Val: 1.0159, Test: 1.0246

Epoch: 042, Loss: 1.0395, Train: 1.0297, Val: 1.0244, Test: 1.0339

Epoch: 043, Loss: 1.0602, Train: 1.0227, Val: 1.0182, Test: 1.0274

Epoch: 044, Loss: 1.0460, Train: 1.0051, Val: 1.0033, Test: 1.0113

Epoch: 045, Loss: 1.0103, Train: 0.9932, Val: 0.9948, Test: 1.0011

Epoch: 046, Loss: 0.9864, Train: 0.9937, Val: 0.9984, Test: 1.0032

Epoch: 047, Loss: 0.9875, Train: 1.0002, Val: 1.0069, Test: 1.0105

Epoch: 048, Loss: 1.0004, Train: 1.0024, Val: 1.0099, Test: 1.0131

Epoch: 049, Loss: 1.0048, Train: 0.9962, Val: 1.0033, Test: 1.0069

Epoch: 050, Loss: 0.9925, Train: 0.9855, Val: 0.9912, Test: 0.9956

Epoch: 051, Loss: 0.9712, Train: 0.9780, Val: 0.9815, Test: 0.9871

Epoch: 052, Loss: 0.9565, Train: 0.9782, Val: 0.9792, Test: 0.9862

Epoch: 053, Loss: 0.9568, Train: 0.9818, Val: 0.9813, Test: 0.9891

Epoch: 054, Loss: 0.9640, Train: 0.9807, Val: 0.9801, Test: 0.9880

Epoch: 055, Loss: 0.9619, Train: 0.9737, Val: 0.9744, Test: 0.9818

Epoch: 056, Loss: 0.9481, Train: 0.9670, Val: 0.9697, Test: 0.9762

Epoch: 057, Loss: 0.9351, Train: 0.9652, Val: 0.9699, Test: 0.9754

Epoch: 058, Loss: 0.9315, Train: 0.9664, Val: 0.9725, Test: 0.9773

Epoch: 059, Loss: 0.9338, Train: 0.9662, Val: 0.9729, Test: 0.9775

Epoch: 060, Loss: 0.9335, Train: 0.9626, Val: 0.9690, Test: 0.9739

Epoch: 061, Loss: 0.9265, Train: 0.9575, Val: 0.9629, Test: 0.9684

Epoch: 062, Loss: 0.9167, Train: 0.9542, Val: 0.9584, Test: 0.9646

Epoch: 063, Loss: 0.9106, Train: 0.9539, Val: 0.9568, Test: 0.9637

Epoch: 064, Loss: 0.9099, Train: 0.9539, Val: 0.9561, Test: 0.9634

Epoch: 065, Loss: 0.9099, Train: 0.9516, Val: 0.9541, Test: 0.9614

Epoch: 066, Loss: 0.9056, Train: 0.9479, Val: 0.9514, Test: 0.9582

Epoch: 067, Loss: 0.8985, Train: 0.9453, Val: 0.9500, Test: 0.9563

Epoch: 068, Loss: 0.8935, Train: 0.9446, Val: 0.9504, Test: 0.9563

Epoch: 069, Loss: 0.8922, Train: 0.9442, Val: 0.9507, Test: 0.9563

Epoch: 070, Loss: 0.8914, Train: 0.9424, Val: 0.9490, Test: 0.9546

Epoch: 071, Loss: 0.8882, Train: 0.9397, Val: 0.9459, Test: 0.9517

Epoch: 072, Loss: 0.8831, Train: 0.9376, Val: 0.9432, Test: 0.9491

Epoch: 073, Loss: 0.8791, Train: 0.9367, Val: 0.9417, Test: 0.9480

Epoch: 074, Loss: 0.8775, Train: 0.9359, Val: 0.9408, Test: 0.9471

Epoch: 075, Loss: 0.8760, Train: 0.9341, Val: 0.9395, Test: 0.9456

Epoch: 076, Loss: 0.8726, Train: 0.9319, Val: 0.9383, Test: 0.9441

Epoch: 077, Loss: 0.8685, Train: 0.9307, Val: 0.9382, Test: 0.9435

Epoch: 078, Loss: 0.8662, Train: 0.9302, Val: 0.9386, Test: 0.9434

Epoch: 079, Loss: 0.8653, Train: 0.9289, Val: 0.9376, Test: 0.9422

Epoch: 080, Loss: 0.8630, Train: 0.9270, Val: 0.9353, Test: 0.9399

Epoch: 081, Loss: 0.8593, Train: 0.9257, Val: 0.9335, Test: 0.9381

Epoch: 082, Loss: 0.8570, Train: 0.9251, Val: 0.9326, Test: 0.9372

Epoch: 083, Loss: 0.8560, Train: 0.9239, Val: 0.9317, Test: 0.9361

Epoch: 084, Loss: 0.8538, Train: 0.9223, Val: 0.9308, Test: 0.9349

Epoch: 085, Loss: 0.8507, Train: 0.9211, Val: 0.9307, Test: 0.9344

Epoch: 086, Loss: 0.8486, Train: 0.9205, Val: 0.9307, Test: 0.9341

Epoch: 087, Loss: 0.8473, Train: 0.9192, Val: 0.9296, Test: 0.9329

Epoch: 088, Loss: 0.8450, Train: 0.9178, Val: 0.9280, Test: 0.9312

Epoch: 089, Loss: 0.8425, Train: 0.9169, Val: 0.9269, Test: 0.9300

Epoch: 090, Loss: 0.8408, Train: 0.9160, Val: 0.9261, Test: 0.9291

Epoch: 091, Loss: 0.8393, Train: 0.9148, Val: 0.9255, Test: 0.9282

Epoch: 092, Loss: 0.8370, Train: 0.9136, Val: 0.9253, Test: 0.9275

Epoch: 093, Loss: 0.8348, Train: 0.9127, Val: 0.9253, Test: 0.9272

Epoch: 094, Loss: 0.8333, Train: 0.9118, Val: 0.9249, Test: 0.9266

Epoch: 095, Loss: 0.8315, Train: 0.9106, Val: 0.9240, Test: 0.9254

Epoch: 096, Loss: 0.8294, Train: 0.9097, Val: 0.9231, Test: 0.9243

Epoch: 097, Loss: 0.8277, Train: 0.9089, Val: 0.9226, Test: 0.9237

Epoch: 098, Loss: 0.8263, Train: 0.9079, Val: 0.9224, Test: 0.9232

Epoch: 099, Loss: 0.8246, Train: 0.9070, Val: 0.9225, Test: 0.9230

Epoch: 100, Loss: 0.8230, Train: 0.9063, Val: 0.9227, Test: 0.9230

Epoch: 101, Loss: 0.8217, Train: 0.9056, Val: 0.9227, Test: 0.9227

Epoch: 102, Loss: 0.8204, Train: 0.9048, Val: 0.9223, Test: 0.9222

Epoch: 103, Loss: 0.8190, Train: 0.9042, Val: 0.9219, Test: 0.9216

Epoch: 104, Loss: 0.8178, Train: 0.9036, Val: 0.9217, Test: 0.9213

Epoch: 105, Loss: 0.8168, Train: 0.9030, Val: 0.9218, Test: 0.9212

Epoch: 106, Loss: 0.8157, Train: 0.9024, Val: 0.9220, Test: 0.9212

Epoch: 107, Loss: 0.8146, Train: 0.9019, Val: 0.9223, Test: 0.9212

Epoch: 108, Loss: 0.8137, Train: 0.9013, Val: 0.9224, Test: 0.9210

Epoch: 109, Loss: 0.8127, Train: 0.9008, Val: 0.9222, Test: 0.9205

Epoch: 110, Loss: 0.8117, Train: 0.9003, Val: 0.9220, Test: 0.9201

Epoch: 111, Loss: 0.8108, Train: 0.8998, Val: 0.9220, Test: 0.9198

Epoch: 112, Loss: 0.8100, Train: 0.8993, Val: 0.9221, Test: 0.9196

Epoch: 113, Loss: 0.8091, Train: 0.8989, Val: 0.9223, Test: 0.9196

Epoch: 114, Loss: 0.8083, Train: 0.8985, Val: 0.9225, Test: 0.9195

Epoch: 115, Loss: 0.8076, Train: 0.8981, Val: 0.9224, Test: 0.9193

Epoch: 116, Loss: 0.8069, Train: 0.8977, Val: 0.9222, Test: 0.9189

Epoch: 117, Loss: 0.8063, Train: 0.8974, Val: 0.9220, Test: 0.9186

Epoch: 118, Loss: 0.8057, Train: 0.8971, Val: 0.9219, Test: 0.9184

Epoch: 119, Loss: 0.8051, Train: 0.8967, Val: 0.9219, Test: 0.9184

Epoch: 120, Loss: 0.8045, Train: 0.8965, Val: 0.9220, Test: 0.9184

Epoch: 121, Loss: 0.8040, Train: 0.8962, Val: 0.9220, Test: 0.9183

Epoch: 122, Loss: 0.8035, Train: 0.8959, Val: 0.9218, Test: 0.9181

Epoch: 123, Loss: 0.8030, Train: 0.8956, Val: 0.9216, Test: 0.9178

Epoch: 124, Loss: 0.8026, Train: 0.8954, Val: 0.9214, Test: 0.9176

Epoch: 125, Loss: 0.8022, Train: 0.8952, Val: 0.9214, Test: 0.9176

Epoch: 126, Loss: 0.8017, Train: 0.8950, Val: 0.9214, Test: 0.9176

Epoch: 127, Loss: 0.8013, Train: 0.8947, Val: 0.9214, Test: 0.9175

Epoch: 128, Loss: 0.8009, Train: 0.8945, Val: 0.9213, Test: 0.9174

Epoch: 129, Loss: 0.8006, Train: 0.8943, Val: 0.9211, Test: 0.9172

Epoch: 130, Loss: 0.8002, Train: 0.8941, Val: 0.9208, Test: 0.9171

Epoch: 131, Loss: 0.7999, Train: 0.8939, Val: 0.9207, Test: 0.9169

Epoch: 132, Loss: 0.7995, Train: 0.8938, Val: 0.9207, Test: 0.9169

Epoch: 133, Loss: 0.7992, Train: 0.8936, Val: 0.9207, Test: 0.9169

Epoch: 134, Loss: 0.7989, Train: 0.8934, Val: 0.9206, Test: 0.9168

Epoch: 135, Loss: 0.7986, Train: 0.8932, Val: 0.9204, Test: 0.9166

Epoch: 136, Loss: 0.7983, Train: 0.8931, Val: 0.9202, Test: 0.9165

Epoch: 137, Loss: 0.7980, Train: 0.8929, Val: 0.9201, Test: 0.9164

Epoch: 138, Loss: 0.7977, Train: 0.8928, Val: 0.9201, Test: 0.9164

Epoch: 139, Loss: 0.7974, Train: 0.8926, Val: 0.9201, Test: 0.9164

Epoch: 140, Loss: 0.7972, Train: 0.8925, Val: 0.9200, Test: 0.9163

Epoch: 141, Loss: 0.7969, Train: 0.8923, Val: 0.9199, Test: 0.9162

Epoch: 142, Loss: 0.7967, Train: 0.8922, Val: 0.9197, Test: 0.9161

Epoch: 143, Loss: 0.7965, Train: 0.8921, Val: 0.9196, Test: 0.9159

Epoch: 144, Loss: 0.7962, Train: 0.8919, Val: 0.9198, Test: 0.9161

Epoch: 145, Loss: 0.7960, Train: 0.8918, Val: 0.9194, Test: 0.9158

Epoch: 146, Loss: 0.7958, Train: 0.8917, Val: 0.9193, Test: 0.9157

Epoch: 147, Loss: 0.7956, Train: 0.8916, Val: 0.9193, Test: 0.9157

Epoch: 148, Loss: 0.7954, Train: 0.8915, Val: 0.9192, Test: 0.9156

Epoch: 149, Loss: 0.7952, Train: 0.8914, Val: 0.9191, Test: 0.9156

Epoch: 150, Loss: 0.7950, Train: 0.8913, Val: 0.9190, Test: 0.9155

Epoch: 151, Loss: 0.7949, Train: 0.8912, Val: 0.9190, Test: 0.9155

Epoch: 152, Loss: 0.7947, Train: 0.8911, Val: 0.9189, Test: 0.9155

Epoch: 153, Loss: 0.7945, Train: 0.8910, Val: 0.9189, Test: 0.9154

Epoch: 154, Loss: 0.7944, Train: 0.8909, Val: 0.9189, Test: 0.9154

Epoch: 155, Loss: 0.7942, Train: 0.8909, Val: 0.9188, Test: 0.9153

Epoch: 156, Loss: 0.7941, Train: 0.8908, Val: 0.9188, Test: 0.9153

Epoch: 157, Loss: 0.7939, Train: 0.8907, Val: 0.9188, Test: 0.9152

Epoch: 158, Loss: 0.7938, Train: 0.8906, Val: 0.9188, Test: 0.9152

Epoch: 159, Loss: 0.7936, Train: 0.8905, Val: 0.9188, Test: 0.9151

Epoch: 160, Loss: 0.7935, Train: 0.8905, Val: 0.9188, Test: 0.9151

Epoch: 161, Loss: 0.7934, Train: 0.8904, Val: 0.9188, Test: 0.9151

Epoch: 162, Loss: 0.7932, Train: 0.8903, Val: 0.9188, Test: 0.9151

Epoch: 163, Loss: 0.7931, Train: 0.8903, Val: 0.9188, Test: 0.9151

Epoch: 164, Loss: 0.7930, Train: 0.8902, Val: 0.9188, Test: 0.9150

Epoch: 165, Loss: 0.7929, Train: 0.8901, Val: 0.9189, Test: 0.9150

Epoch: 166, Loss: 0.7928, Train: 0.8901, Val: 0.9189, Test: 0.9150

Epoch: 167, Loss: 0.7926, Train: 0.8900, Val: 0.9189, Test: 0.9150

Epoch: 168, Loss: 0.7925, Train: 0.8899, Val: 0.9189, Test: 0.9150

Epoch: 169, Loss: 0.7924, Train: 0.8899, Val: 0.9189, Test: 0.9150

Epoch: 170, Loss: 0.7923, Train: 0.8898, Val: 0.9190, Test: 0.9150

Epoch: 171, Loss: 0.7922, Train: 0.8898, Val: 0.9190, Test: 0.9149

Epoch: 172, Loss: 0.7921, Train: 0.8897, Val: 0.9190, Test: 0.9149

Epoch: 173, Loss: 0.7920, Train: 0.8896, Val: 0.9190, Test: 0.9148

Epoch: 174, Loss: 0.7919, Train: 0.8896, Val: 0.9190, Test: 0.9148

Epoch: 175, Loss: 0.7918, Train: 0.8895, Val: 0.9190, Test: 0.9148

Epoch: 176, Loss: 0.7917, Train: 0.8895, Val: 0.9190, Test: 0.9147

Epoch: 177, Loss: 0.7916, Train: 0.8894, Val: 0.9190, Test: 0.9147

Epoch: 178, Loss: 0.7916, Train: 0.8894, Val: 0.9190, Test: 0.9147

Epoch: 179, Loss: 0.7915, Train: 0.8893, Val: 0.9191, Test: 0.9147

Epoch: 180, Loss: 0.7914, Train: 0.8893, Val: 0.9191, Test: 0.9147

Epoch: 181, Loss: 0.7913, Train: 0.8893, Val: 0.9191, Test: 0.9147

Epoch: 182, Loss: 0.7912, Train: 0.8892, Val: 0.9191, Test: 0.9146

Epoch: 183, Loss: 0.7911, Train: 0.8892, Val: 0.9191, Test: 0.9146

Epoch: 184, Loss: 0.7910, Train: 0.8891, Val: 0.9191, Test: 0.9146

Epoch: 185, Loss: 0.7910, Train: 0.8891, Val: 0.9192, Test: 0.9146

Epoch: 186, Loss: 0.7909, Train: 0.8890, Val: 0.9192, Test: 0.9146

Epoch: 187, Loss: 0.7908, Train: 0.8890, Val: 0.9192, Test: 0.9146

Epoch: 188, Loss: 0.7907, Train: 0.8889, Val: 0.9192, Test: 0.9145

Epoch: 189, Loss: 0.7907, Train: 0.8889, Val: 0.9192, Test: 0.9145

Epoch: 190, Loss: 0.7906, Train: 0.8889, Val: 0.9192, Test: 0.9145

Epoch: 191, Loss: 0.7905, Train: 0.8888, Val: 0.9192, Test: 0.9145

Epoch: 192, Loss: 0.7905, Train: 0.8888, Val: 0.9192, Test: 0.9144

Epoch: 193, Loss: 0.7904, Train: 0.8888, Val: 0.9192, Test: 0.9144

Epoch: 194, Loss: 0.7903, Train: 0.8887, Val: 0.9192, Test: 0.9144

Epoch: 195, Loss: 0.7903, Train: 0.8887, Val: 0.9192, Test: 0.9144

Epoch: 196, Loss: 0.7902, Train: 0.8886, Val: 0.9192, Test: 0.9144

Epoch: 197, Loss: 0.7901, Train: 0.8886, Val: 0.9193, Test: 0.9143

Epoch: 198, Loss: 0.7901, Train: 0.8886, Val: 0.9193, Test: 0.9143

Epoch: 199, Loss: 0.7900, Train: 0.8885, Val: 0.9193, Test: 0.9143

Epoch: 200, Loss: 0.7899, Train: 0.8885, Val: 0.9193, Test: 0.9143

Epoch: 201, Loss: 0.7899, Train: 0.8885, Val: 0.9192, Test: 0.9143

Epoch: 202, Loss: 0.7898, Train: 0.8884, Val: 0.9193, Test: 0.9143

Epoch: 203, Loss: 0.7898, Train: 0.8884, Val: 0.9193, Test: 0.9143

Epoch: 204, Loss: 0.7897, Train: 0.8884, Val: 0.9193, Test: 0.9143

Epoch: 205, Loss: 0.7896, Train: 0.8883, Val: 0.9193, Test: 0.9143

Epoch: 206, Loss: 0.7896, Train: 0.8883, Val: 0.9193, Test: 0.9142

Epoch: 207, Loss: 0.7895, Train: 0.8883, Val: 0.9193, Test: 0.9143

Epoch: 208, Loss: 0.7895, Train: 0.8882, Val: 0.9193, Test: 0.9142

Epoch: 209, Loss: 0.7894, Train: 0.8882, Val: 0.9193, Test: 0.9142

Epoch: 210, Loss: 0.7894, Train: 0.8882, Val: 0.9193, Test: 0.9142

Epoch: 211, Loss: 0.7893, Train: 0.8882, Val: 0.9193, Test: 0.9142

Epoch: 212, Loss: 0.7893, Train: 0.8881, Val: 0.9193, Test: 0.9142

Epoch: 213, Loss: 0.7892, Train: 0.8881, Val: 0.9193, Test: 0.9142

Epoch: 214, Loss: 0.7892, Train: 0.8881, Val: 0.9193, Test: 0.9142

Epoch: 215, Loss: 0.7891, Train: 0.8880, Val: 0.9194, Test: 0.9141

Epoch: 216, Loss: 0.7891, Train: 0.8880, Val: 0.9194, Test: 0.9141

Epoch: 217, Loss: 0.7890, Train: 0.8880, Val: 0.9194, Test: 0.9141

Epoch: 218, Loss: 0.7890, Train: 0.8880, Val: 0.9194, Test: 0.9141

Epoch: 219, Loss: 0.7889, Train: 0.8879, Val: 0.9194, Test: 0.9141

Epoch: 220, Loss: 0.7889, Train: 0.8879, Val: 0.9194, Test: 0.9141

Epoch: 221, Loss: 0.7888, Train: 0.8879, Val: 0.9194, Test: 0.9141

Epoch: 222, Loss: 0.7888, Train: 0.8879, Val: 0.9194, Test: 0.9141

Epoch: 223, Loss: 0.7887, Train: 0.8878, Val: 0.9194, Test: 0.9141

Epoch: 224, Loss: 0.7887, Train: 0.8878, Val: 0.9195, Test: 0.9141

Epoch: 225, Loss: 0.7887, Train: 0.8878, Val: 0.9194, Test: 0.9141

Epoch: 226, Loss: 0.7886, Train: 0.8878, Val: 0.9194, Test: 0.9141

Epoch: 227, Loss: 0.7886, Train: 0.8877, Val: 0.9195, Test: 0.9141

Epoch: 228, Loss: 0.7885, Train: 0.8877, Val: 0.9195, Test: 0.9141

Epoch: 229, Loss: 0.7885, Train: 0.8877, Val: 0.9195, Test: 0.9140

Epoch: 230, Loss: 0.7884, Train: 0.8877, Val: 0.9195, Test: 0.9140

Epoch: 231, Loss: 0.7884, Train: 0.8876, Val: 0.9195, Test: 0.9140

Epoch: 232, Loss: 0.7884, Train: 0.8876, Val: 0.9195, Test: 0.9140

Epoch: 233, Loss: 0.7883, Train: 0.8876, Val: 0.9195, Test: 0.9140

Epoch: 234, Loss: 0.7883, Train: 0.8876, Val: 0.9195, Test: 0.9140

Epoch: 235, Loss: 0.7882, Train: 0.8876, Val: 0.9195, Test: 0.9140

Epoch: 236, Loss: 0.7882, Train: 0.8875, Val: 0.9196, Test: 0.9140

Epoch: 237, Loss: 0.7882, Train: 0.8875, Val: 0.9196, Test: 0.9140

Epoch: 238, Loss: 0.7881, Train: 0.8875, Val: 0.9195, Test: 0.9140

Epoch: 239, Loss: 0.7881, Train: 0.8875, Val: 0.9196, Test: 0.9140

Epoch: 240, Loss: 0.7880, Train: 0.8874, Val: 0.9196, Test: 0.9140

Epoch: 241, Loss: 0.7880, Train: 0.8874, Val: 0.9196, Test: 0.9140

Epoch: 242, Loss: 0.7880, Train: 0.8874, Val: 0.9196, Test: 0.9140

Epoch: 243, Loss: 0.7879, Train: 0.8874, Val: 0.9196, Test: 0.9140

Epoch: 244, Loss: 0.7879, Train: 0.8874, Val: 0.9196, Test: 0.9140

Epoch: 245, Loss: 0.7879, Train: 0.8873, Val: 0.9196, Test: 0.9140

Epoch: 246, Loss: 0.7878, Train: 0.8873, Val: 0.9196, Test: 0.9140

Epoch: 247, Loss: 0.7878, Train: 0.8873, Val: 0.9196, Test: 0.9140

Epoch: 248, Loss: 0.7877, Train: 0.8873, Val: 0.9196, Test: 0.9140

Epoch: 249, Loss: 0.7877, Train: 0.8873, Val: 0.9196, Test: 0.9139

Epoch: 250, Loss: 0.7877, Train: 0.8872, Val: 0.9196, Test: 0.9140

Epoch: 251, Loss: 0.7876, Train: 0.8872, Val: 0.9196, Test: 0.9140

Epoch: 252, Loss: 0.7876, Train: 0.8872, Val: 0.9196, Test: 0.9140

Epoch: 253, Loss: 0.7876, Train: 0.8872, Val: 0.9196, Test: 0.9140

Epoch: 254, Loss: 0.7875, Train: 0.8872, Val: 0.9196, Test: 0.9140

Epoch: 255, Loss: 0.7875, Train: 0.8871, Val: 0.9196, Test: 0.9139

Epoch: 256, Loss: 0.7875, Train: 0.8871, Val: 0.9196, Test: 0.9140

Epoch: 257, Loss: 0.7874, Train: 0.8871, Val: 0.9196, Test: 0.9140

Epoch: 258, Loss: 0.7874, Train: 0.8871, Val: 0.9196, Test: 0.9139

Epoch: 259, Loss: 0.7874, Train: 0.8871, Val: 0.9196, Test: 0.9139

Epoch: 260, Loss: 0.7873, Train: 0.8870, Val: 0.9196, Test: 0.9139

Epoch: 261, Loss: 0.7873, Train: 0.8870, Val: 0.9196, Test: 0.9140

Epoch: 262, Loss: 0.7873, Train: 0.8870, Val: 0.9196, Test: 0.9139

Epoch: 263, Loss: 0.7872, Train: 0.8870, Val: 0.9196, Test: 0.9139

Epoch: 264, Loss: 0.7872, Train: 0.8870, Val: 0.9196, Test: 0.9139

Epoch: 265, Loss: 0.7872, Train: 0.8870, Val: 0.9196, Test: 0.9139

Epoch: 266, Loss: 0.7871, Train: 0.8869, Val: 0.9197, Test: 0.9139

Epoch: 267, Loss: 0.7871, Train: 0.8869, Val: 0.9197, Test: 0.9139

Epoch: 268, Loss: 0.7871, Train: 0.8869, Val: 0.9196, Test: 0.9139

Epoch: 269, Loss: 0.7871, Train: 0.8869, Val: 0.9196, Test: 0.9139

Epoch: 270, Loss: 0.7870, Train: 0.8869, Val: 0.9197, Test: 0.9139

Epoch: 271, Loss: 0.7870, Train: 0.8869, Val: 0.9197, Test: 0.9139

Epoch: 272, Loss: 0.7870, Train: 0.8868, Val: 0.9197, Test: 0.9139

Epoch: 273, Loss: 0.7869, Train: 0.8868, Val: 0.9197, Test: 0.9139

Epoch: 274, Loss: 0.7869, Train: 0.8868, Val: 0.9197, Test: 0.9139

Epoch: 275, Loss: 0.7869, Train: 0.8868, Val: 0.9197, Test: 0.9139

Epoch: 276, Loss: 0.7868, Train: 0.8868, Val: 0.9197, Test: 0.9139

Epoch: 277, Loss: 0.7868, Train: 0.8868, Val: 0.9197, Test: 0.9139

Epoch: 278, Loss: 0.7868, Train: 0.8867, Val: 0.9197, Test: 0.9139

Epoch: 279, Loss: 0.7867, Train: 0.8867, Val: 0.9197, Test: 0.9139

Epoch: 280, Loss: 0.7867, Train: 0.8867, Val: 0.9197, Test: 0.9139

Epoch: 281, Loss: 0.7867, Train: 0.8867, Val: 0.9197, Test: 0.9139

Epoch: 282, Loss: 0.7867, Train: 0.8867, Val: 0.9197, Test: 0.9139

Epoch: 283, Loss: 0.7866, Train: 0.8867, Val: 0.9197, Test: 0.9139

Epoch: 284, Loss: 0.7866, Train: 0.8866, Val: 0.9196, Test: 0.9139

Epoch: 285, Loss: 0.7866, Train: 0.8866, Val: 0.9196, Test: 0.9139

Epoch: 286, Loss: 0.7865, Train: 0.8866, Val: 0.9197, Test: 0.9139

Epoch: 287, Loss: 0.7865, Train: 0.8866, Val: 0.9196, Test: 0.9138

Epoch: 288, Loss: 0.7865, Train: 0.8866, Val: 0.9197, Test: 0.9139

Epoch: 289, Loss: 0.7865, Train: 0.8866, Val: 0.9196, Test: 0.9139

Epoch: 290, Loss: 0.7864, Train: 0.8865, Val: 0.9196, Test: 0.9138

Epoch: 291, Loss: 0.7864, Train: 0.8865, Val: 0.9196, Test: 0.9138

Epoch: 292, Loss: 0.7864, Train: 0.8865, Val: 0.9196, Test: 0.9138

Epoch: 293, Loss: 0.7863, Train: 0.8865, Val: 0.9196, Test: 0.9138

Epoch: 294, Loss: 0.7863, Train: 0.8865, Val: 0.9196, Test: 0.9138

Epoch: 295, Loss: 0.7863, Train: 0.8865, Val: 0.9196, Test: 0.9138

Epoch: 296, Loss: 0.7863, Train: 0.8864, Val: 0.9196, Test: 0.9138

Epoch: 297, Loss: 0.7862, Train: 0.8864, Val: 0.9196, Test: 0.9138

Epoch: 298, Loss: 0.7862, Train: 0.8864, Val: 0.9196, Test: 0.9138

Epoch: 299, Loss: 0.7862, Train: 0.8864, Val: 0.9196, Test: 0.9138

Epoch: 300, Loss: 0.7861, Train: 0.8864, Val: 0.9195, Test: 0.9138

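6.1.2 Predicting user ratings on a bipartite graph

Reference: https://github.com/pyg-team/pytorch_geometric/blob/master/examples/hetero/bipartite_sage.py

The main difference from the previous section is that the two node types are encoded by two separate models.

- Uses the MovieLens dataset; see the previous section for the rest of the description.

- Movie nodes: processed the same way as in the previous section.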

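- User nodes: they have no features, so the code obtains initial features from a randomly initialized torch.nn.Embedding.

- Convert to an undirected graph (this creates the reverse edges).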

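- Generate edges between movie nodes via a metapath-based neighborhood. (I did not fully understand why gcn_norm is used to compute edge weights that are then used to filter the edges. With unit edge weights, gcn_norm assigns each edge 1/sqrt(d_i * d_j), the symmetric normalization by the degrees of its two endpoints. Keeping edge_weight > 0.002 therefore keeps edges whose endpoints have a small degree product and drops edges between very high-degree movies, not the other way around. My guess is that this prunes the dense, less informative co-occurrence edges between very popular movies.)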

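- Data split: same as the previous section.

- Movie nodes are encoded with 2 GraphSAGE layers over the movie-movie edges plus an MLP; user nodes are encoded with 3 GraphSAGE layers (each applied to one incoming edge type).

- Link prediction decoding: same as the previous section.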
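To make the metapath step and the weight-based filtering from the list above more concrete, here is a minimal pure-Python sketch on a toy graph. It is not PyG's implementation: the function names (metapath_movie_edges, sym_norm_weights), the toy ratings, and the threshold 0.3 are all assumptions made for illustration. It only mirrors the idea of composing movie -> user -> movie paths and then weighting each resulting edge by 1/sqrt(d_i * d_j).

```python
from collections import defaultdict
from math import sqrt


def metapath_movie_edges(ratings):
    """Compose movie -> user -> movie: link two movies rated by a common user."""
    movies_of_user = defaultdict(set)
    for user, movie in ratings:
        movies_of_user[user].add(movie)
    edges = set()
    for movies in movies_of_user.values():
        for src in movies:
            for dst in movies:
                # Plain path composition also yields src == dst pairs.
                edges.add((src, dst))
    return sorted(edges)


def sym_norm_weights(edges):
    """Weight edge (i, j) by 1 / sqrt(deg(i) * deg(j)), with deg = in-degree."""
    deg = defaultdict(int)
    for _, dst in edges:
        deg[dst] += 1
    weights = {}
    for src, dst in edges:
        prod = deg.get(src, 0) * deg.get(dst, 0)
        # Nodes without incoming edges get weight 0 instead of a division error.
        weights[(src, dst)] = 1.0 / sqrt(prod) if prod > 0 else 0.0
    return weights


# Hypothetical toy data: (user, movie) rating pairs.
# User 0 rated four movies, user 1 rated two; movie 3 is shared.
ratings = [(0, 0), (0, 1), (0, 2), (0, 3), (1, 3), (1, 4)]

edges = metapath_movie_edges(ratings)
weights = sym_norm_weights(edges)

# Toy threshold (the code in this section uses 0.002 on the real dataset).
kept = [edge for edge in edges if weights[edge] > 0.3]
print(len(edges), kept)
```

On this toy graph the only edges that survive are the ones touching movie 4, which has the fewest metapath neighbors; edges among the movies that user 0 rated together get lower weights and are dropped. A larger weight means a smaller degree product.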

import torch
import torch.nn.functional as F
from torch.nn import Embedding, Linear

import torch_geometric.transforms as T
from torch_geometric.datasets import MovieLens
from torch_geometric.nn import SAGEConv
from torch_geometric.nn.conv.gcn_conv import gcn_norm

dataset = MovieLens('/data/pyg_data/MovieLens', model_name='all-MiniLM-L6-v2')
data = dataset[0]

# Users have no features: store node indices, to be looked up in an Embedding.
data['user'].x = torch.arange(data['user'].num_nodes)
data['user', 'movie'].edge_label = data['user', 'movie'].edge_label.float()

device = torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')
data = data.to(device)

# Add a reverse ('movie', 'rev_rates', 'user') relation for message passing:
data = T.ToUndirected()(data)
del data['movie', 'rev_rates', 'user'].edge_label  # Remove "reverse" label.

# Perform a link-level split into training, validation, and test edges:
train_data, val_data, test_data = T.RandomLinkSplit(
    num_val=0.1,
    num_test=0.1,
    neg_sampling_ratio=0.0,
    edge_types=[('user', 'rates', 'movie')],
    rev_edge_types=[('movie', 'rev_rates', 'user')],
)(data)

# Generate the co-occurrence matrix of movies<>movies:
metapath = [('movie', 'rev_rates', 'user'), ('user', 'rates', 'movie')]
train_data = T.AddMetaPaths(metapaths=[metapath])(train_data)

# Apply normalization to filter the metapath:
_, edge_weight = gcn_norm(
    train_data['movie', 'movie'].edge_index,
    num_nodes=train_data['movie'].num_nodes,
    add_self_loops=False,
)
edge_index = train_data['movie', 'movie'].edge_index[:, edge_weight > 0.002]

train_data['movie', 'metapath_0', 'movie'].edge_index = edge_index
val_data['movie', 'metapath_0', 'movie'].edge_index = edge_index