Demonstrated on 64-bit Windows 10

I. Preface

In this installment, we introduce Transformer regression.

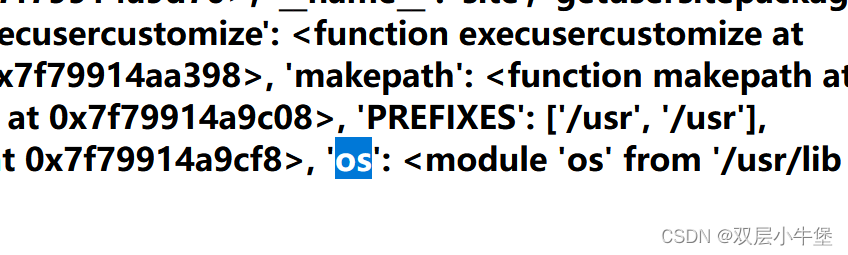

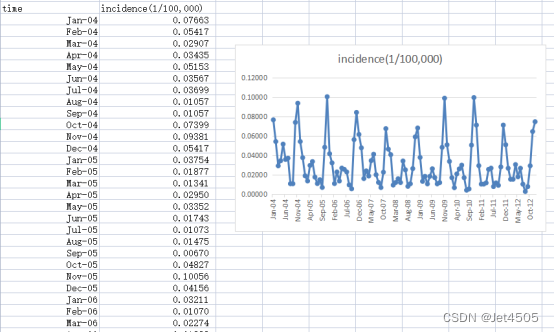

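As in earlier installments, we use the following data:

The demonstration uses the public data from a 2015 PLoS One article titled "Comparison of Two Hybrid Models for Forecasting the Incidence of Hemorrhagic Fever with Renal Syndrome in Jiangsu Province, China". The data are the monthly incidence of hemorrhagic fever with renal syndrome in Jiangsu Province from January 2004 to December 2012. The data from January 2004 to December 2011 are used to predict the incidence for the 12 months of 2012.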
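To make the hold-out split concrete, the sketch below enumerates the monthly time stamps and splits them by calendar year. It assumes the `time` column holds labels such as `Jan-04` (matching the `%b-%y` format used in the code of this post) and an English locale for month abbreviations; the helper name `month_labels` is made up for illustration.

```python
from datetime import datetime

def month_labels(start_year, end_year):
    """Return 'Mon-yy' labels for every month from start_year to end_year inclusive."""
    return [datetime(year, month, 1).strftime('%b-%y')
            for year in range(start_year, end_year + 1)
            for month in range(1, 13)]

labels = month_labels(2004, 2012)                       # stand-in for the CSV's time column
dates = [datetime.strptime(s, '%b-%y') for s in labels]

train = [d for d in dates if d.year <= 2011]            # January 2004 to December 2011
test = [d for d in dates if d.year == 2012]             # the 12 months to be forecast

print(len(dates), len(train), len(test))  # 108 96 12
```

With 6 lagged inputs, the first 6 months cannot form a complete feature row, which leaves 90 usable training rows.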

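II. Transformer Regression

(1) Principles

The Transformer architecture was originally designed for NLP tasks, machine translation in particular. Because its self-attention mechanism handles sequential data well, it has since been applied to many kinds of sequence tasks, including regression.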

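(a) Transformers in regression tasks:

(a1) In a regression task, a Transformer can capture long-range dependencies in the data. In a time series, for example, it can relate time points to one another even when they lie far apart.

(a2) Using a Transformer for regression usually requires a small change to the architecture, mainly at the output stage. The original Transformer generates a sequence, whereas a regression task usually needs a single real number as output.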
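The change to the output stage described in (a2) can be sketched with plain NumPy: the per-time-step vectors produced by the encoder are pooled into one vector, which a linear layer with a single unit maps to a real number. The shapes and random weights below are placeholders chosen for illustration, not values taken from any trained model.

```python
import numpy as np

rng = np.random.default_rng(0)

batch, timesteps, d_model = 4, 6, 8
# Stand-in for the encoder output: one d_model-dimensional vector per time step.
encoded = rng.normal(size=(batch, timesteps, d_model))

# Global average pooling collapses the time axis:
# (batch, timesteps, d_model) -> (batch, d_model).
pooled = encoded.mean(axis=1)

# A linear layer with one unit maps each pooled vector to a single real number.
w = rng.normal(size=(d_model, 1))
b = np.zeros(1)
y_hat = pooled @ w + b

print(y_hat.shape)  # (4, 1)
```

Flattening the time axis instead of averaging over it is an equally common choice; it keeps positional detail at the cost of more weights in the first dense layer.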

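(b) Advantages of the Transformer:

(b1) Self-attention: it captures dependencies between any two positions in a sequence directly, instead of passing information step by step through earlier positions as an RNN does.

(b2) Parallel computation: unlike an RNN or LSTM, a Transformer does not have to process the sequence in order, so training parallelizes more easily and runs faster.

(b3) Scalability: stacking multiple Transformer layers lets the model capture complex patterns and relationships.

(b4) Interpretability: the attention weights can be visualized to see which input positions matter most for a given output, which makes the model easier to interpret.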
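As a rough illustration of the self-attention mechanism and of the weights one would visualize for interpretability, here is a minimal single-head scaled dot-product attention in NumPy. The projection matrices are random placeholders; a trained model would learn them, and real layers use several heads.

```python
import numpy as np

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention for one sequence x of shape (T, d)."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])          # (T, T) pairwise similarities
    scores -= scores.max(axis=-1, keepdims=True)     # stabilise the softmax numerically
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)   # each row now sums to 1
    return weights @ v, weights

rng = np.random.default_rng(0)
T, d = 6, 4                                          # 6 time steps, 4 features each
x = rng.normal(size=(T, d))
w_q, w_k, w_v = (rng.normal(size=(d, d)) for _ in range(3))

out, weights = self_attention(x, w_q, w_k, w_v)
print(out.shape, weights.shape)  # (6, 4) (6, 6)
```

Row `i` of `weights` shows how strongly position `i` attends to every other position, regardless of how far apart they are in the sequence.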

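(c) Disadvantages of the Transformer:

(c1) Compute requirements: although it parallelizes well, a Transformer, especially a large one, still needs substantial computing resources.

(c2) Overfitting: on small datasets, especially without enough regularization, a Transformer may overfit.

(c3) Long sequences: a Transformer can handle long sequences, but the cost of self-attention grows quadratically with sequence length, so very long sequences remain a challenge. Researchers have proposed many variants to address this, such as Reformer.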
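The long-sequence cost in (c3) comes from the attention score matrix, which holds one entry per pair of positions. The small calculation below counts those entries per layer for a single sequence; the function name and the chosen lengths are illustrative only.

```python
def attention_score_entries(seq_len, num_heads=1):
    """Number of attention scores computed per layer for one sequence."""
    return num_heads * seq_len * seq_len

for seq_len in (6, 512, 4096):
    print(seq_len, attention_score_entries(seq_len, num_heads=4))
# 6 144
# 512 1048576
# 4096 67108864
```

A window of 6 lagged months, as used in this post, is therefore trivial for attention; the quadratic growth only becomes a concern for sequences with thousands of steps.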

Overall, the Transformer provides a powerful framework for a wide range of sequence tasks.

(2)单步滚动预测

import pandas as pd

import numpy as np

from sklearn.metrics import mean_absolute_error, mean_squared_error

from tensorflow.python.keras.models import Sequential

from tensorflow.python.keras import layers, models, optimizers

from tensorflow.python.keras.optimizers import adam_v2# 读取数据

data = pd.read_csv('data.csv')# 将时间列转换为日期格式

data['time'] = pd.to_datetime(data['time'], format='%b-%y')# 创建滞后期特征

lag_period = 6

for i in range(lag_period, 0, -1):data[f'lag_{i}'] = data['incidence'].shift(lag_period - i + 1)# 删除包含 NaN 的行

data = data.dropna().reset_index(drop=True)# 划分训练集和验证集

train_data = data[(data['time'] >= '2004-01-01') & (data['time'] <= '2011-12-31')]

validation_data = data[(data['time'] >= '2012-01-01') & (data['time'] <= '2012-12-31')]# 定义特征和目标变量

X_train = train_data[['lag_1', 'lag_2', 'lag_3', 'lag_4', 'lag_5', 'lag_6']].values

y_train = train_data['incidence'].values

X_validation = validation_data[['lag_1', 'lag_2', 'lag_3', 'lag_4', 'lag_5', 'lag_6']].values

y_validation = validation_data['incidence'].values# 对于Transformer,我们需要将输入数据重塑为 [samples, timesteps, features]

X_train = X_train.reshape(X_train.shape[0], X_train.shape[1], 1)

X_validation = X_validation.reshape(X_validation.shape[0], X_validation.shape[1], 1)# Transformer的一些参数设置

d_model = 128

num_heads = 4# 构建Transformer回归模型

input_layer = layers.Input(shape=(X_train.shape[1], 1))# Linear Embedding

x = layers.Dense(d_model)(input_layer)# Multi Head Self Attention

x = layers.MultiHeadAttention(num_heads=num_heads, key_dim=d_model)(x, x)# Feed Forward Neural Networks

x = layers.GlobalAveragePooling1D()(x)

x = layers.Dropout(0.1)(x)

x = layers.Dense(50, activation='relu')(x)

x = layers.Dropout(0.1)(x)

output_layer = layers.Dense(1)(x)model = models.Model(inputs=input_layer, outputs=output_layer)model.compile(optimizer=adam_v2.Adam(learning_rate=0.001), loss='mse')# 训练模型

history = model.fit(X_train, y_train, epochs=200, batch_size=32, validation_data=(X_validation, y_validation), verbose=0)# 单步滚动预测函数

def rolling_forecast(model, initial_features, n_forecasts):forecasts = []current_features = initial_features.copy()for i in range(n_forecasts):# 使用当前的特征进行预测forecast = model.predict(current_features.reshape(1, len(current_features), 1)).flatten()[0]forecasts.append(forecast)# 更新特征,用新的预测值替换最旧的特征current_features = np.roll(current_features, shift=-1)current_features[-1] = forecastreturn np.array(forecasts)# 使用训练集的最后6个数据点作为初始特征

initial_features = X_train[-1].flatten()# 使用单步滚动预测方法预测验证集

y_validation_pred = rolling_forecast(model, initial_features, len(X_validation))# 计算训练集上的MAE, MAPE, MSE 和 RMSE

mae_train = mean_absolute_error(y_train, model.predict(X_train).flatten())

mape_train = np.mean(np.abs((y_train - model.predict(X_train).flatten()) / y_train))

mse_train = mean_squared_error(y_train, model.predict(X_train).flatten())

rmse_train = np.sqrt(mse_train)# 计算验证集上的MAE, MAPE, MSE 和 RMSE

mae_validation = mean_absolute_error(y_validation, y_validation_pred)

mape_validation = np.mean(np.abs((y_validation - y_validation_pred) / y_validation))

mse_validation = mean_squared_error(y_validation, y_validation_pred)

rmse_validation = np.sqrt(mse_validation)print("验证集:", mae_validation, mape_validation, mse_validation, rmse_validation)

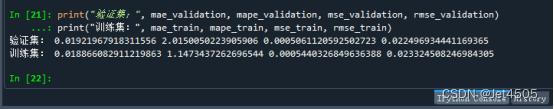

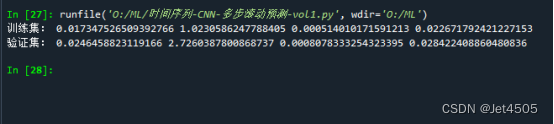

print("训练集:", mae_train, mape_train, mse_train, rmse_train)看结果:
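The rolling mechanism itself can be illustrated without a neural network. The sketch below uses a hypothetical stand-in predictor ("last value plus one") in place of `model.predict`, so the way each forecast is fed back into the input window is easy to follow; the function signature is adapted for this purpose and is not the one used in the post.

```python
import numpy as np

def rolling_forecast(predict_fn, initial_window, n_forecasts):
    """Recursive one-step forecasting: each prediction is fed back into the window."""
    window = np.asarray(initial_window, dtype=float).copy()
    forecasts = []
    for _ in range(n_forecasts):
        y_hat = float(predict_fn(window))
        forecasts.append(y_hat)
        window = np.roll(window, -1)   # shift left; the oldest value wraps to the end
        window[-1] = y_hat             # overwrite it with the newest prediction
    return np.array(forecasts)

# Hypothetical stand-in for a trained model: "last value plus one".
def toy_predict(window):
    return window[-1] + 1.0

print(rolling_forecast(toy_predict, [1, 2, 3], n_forecasts=3))  # [4. 5. 6.]
```

Because every forecast after the first depends on earlier forecasts, errors can accumulate over the 12-month horizon.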

(3)多步滚动预测-vol. 1

import pandas as pd

import numpy as np

from sklearn.metrics import mean_absolute_error, mean_squared_error

import tensorflow as tf

from tensorflow.python.keras.models import Model

from tensorflow.python.keras.layers import Input, MultiHeadAttention, Dense, Dropout, LayerNormalization, Flatten

from tensorflow.python.keras.optimizers import adam_v2# 读取数据

data = pd.read_csv('data.csv')

data['time'] = pd.to_datetime(data['time'], format='%b-%y')n = 6

m = 2# 创建滞后期特征

for i in range(n, 0, -1):data[f'lag_{i}'] = data['incidence'].shift(n - i + 1)data = data.dropna().reset_index(drop=True)train_data = data[(data['time'] >= '2004-01-01') & (data['time'] <= '2011-12-31')]

validation_data = data[(data['time'] >= '2012-01-01') & (data['time'] <= '2012-12-31')]# 准备训练数据

X_train = []

y_train = []for i in range(len(train_data) - n - m + 1):X_train.append(train_data.iloc[i+n-1][[f'lag_{j}' for j in range(1, n+1)]].values)y_train.append(train_data.iloc[i+n:i+n+m]['incidence'].values)X_train = np.array(X_train)

y_train = np.array(y_train)

X_train = X_train.astype(np.float32)

y_train = y_train.astype(np.float32)# 构建Transformer模型

inputs = Input(shape=(n, 1))x = MultiHeadAttention(num_heads=8, key_dim=64)(inputs, inputs)

x = Dropout(0.1)(x)

x = LayerNormalization(epsilon=1e-6)(x + inputs)x = Flatten()(x) # 新增的Flatten层

x = Dense(50, activation='relu')(x)

x = Dropout(0.1)(x)

outputs = Dense(m)(x)model = Model(inputs=inputs, outputs=outputs)model.compile(optimizer=adam_v2.Adam(learning_rate=0.001), loss='mse')# 训练模型

model.fit(X_train, y_train, epochs=200, batch_size=32, verbose=0)def transformer_rolling_forecast(data, model, n, m):y_pred = []for i in range(len(data) - n):input_data = data.iloc[i+n-1][[f'lag_{j}' for j in range(1, n+1)]].values.astype(np.float32).reshape(1, n, 1)pred = model.predict(input_data)y_pred.extend(pred[0])for i in range(1, m):for j in range(len(y_pred) - i):y_pred[j+i] = (y_pred[j+i] + y_pred[j]) / 2return np.array(y_pred)# Predict for train_data and validation_data

y_train_pred_transformer = transformer_rolling_forecast(train_data, model, n, m)[:len(y_train)]

y_validation_pred_transformer = transformer_rolling_forecast(validation_data, model, n, m)[:len(validation_data) - n]# Calculate performance metrics for train_data

mae_train = mean_absolute_error(train_data['incidence'].values[n:len(y_train_pred_transformer)+n], y_train_pred_transformer)

mape_train = np.mean(np.abs((train_data['incidence'].values[n:len(y_train_pred_transformer)+n] - y_train_pred_transformer) / train_data['incidence'].values[n:len(y_train_pred_transformer)+n]))

mse_train = mean_squared_error(train_data['incidence'].values[n:len(y_train_pred_transformer)+n], y_train_pred_transformer)

rmse_train = np.sqrt(mse_train)# Calculate performance metrics for validation_data

mae_validation = mean_absolute_error(validation_data['incidence'].values[n:len(y_validation_pred_transformer)+n], y_validation_pred_transformer)

mape_validation = np.mean(np.abs((validation_data['incidence'].values[n:len(y_validation_pred_transformer)+n] - y_validation_pred_transformer) / validation_data['incidence'].values[n:len(y_validation_pred_transformer)+n]))

mse_validation = mean_squared_error(validation_data['incidence'].values[n:len(y_validation_pred_transformer)+n], y_validation_pred_transformer)

rmse_validation = np.sqrt(mse_validation)print("训练集:", mae_train, mape_train, mse_train, rmse_train)

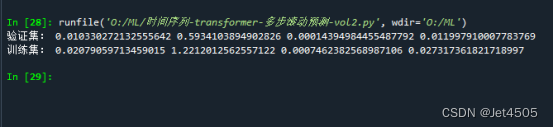

print("验证集:", mae_validation, mape_validation, mse_validation, rmse_validation)结果:
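When every window predicts m steps and the windows advance one step at a time, several forecasts target the same month. One common way to reconcile them is to average all forecasts that refer to the same time step. The sketch below shows that bookkeeping on made-up numbers; the function name `average_overlapping` is introduced here for illustration.

```python
import numpy as np

def average_overlapping(window_preds):
    """window_preds[i] holds the m forecasts for time steps i, i+1, ..., i+m-1."""
    window_preds = np.asarray(window_preds, dtype=float)
    n_windows, m = window_preds.shape
    sums = np.zeros(n_windows + m - 1)
    counts = np.zeros(n_windows + m - 1)
    for i, pred in enumerate(window_preds):
        sums[i:i + m] += pred      # accumulate forecasts per time step
        counts[i:i + m] += 1       # remember how many forecasts hit each step
    return sums / counts

preds = [[1.0, 2.0],   # window 0 forecasts steps 0 and 1
         [4.0, 3.0],   # window 1 forecasts steps 1 and 2
         [5.0, 6.0]]   # window 2 forecasts steps 2 and 3
print(average_overlapping(preds))  # [1. 3. 4. 6.]
```

The first and last steps are covered by a single window only, so they keep their original forecast.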

(4)多步滚动预测-vol. 2

import pandas as pd

import numpy as np

from sklearn.model_selection import train_test_split

from sklearn.metrics import mean_absolute_error, mean_squared_error

from tensorflow.python.keras.models import Sequential, Model

from tensorflow.python.keras.layers import Dense, Conv1D, Flatten, MaxPooling1D, Input, MultiHeadAttention, LayerNormalization, Dropout

from tensorflow.python.keras.optimizers import adam_v2# Loading and preprocessing the data

data = pd.read_csv('data.csv')

data['time'] = pd.to_datetime(data['time'], format='%b-%y')n = 6

m = 2# 创建滞后期特征

for i in range(n, 0, -1):data[f'lag_{i}'] = data['incidence'].shift(n - i + 1)data = data.dropna().reset_index(drop=True)train_data = data[(data['time'] >= '2004-01-01') & (data['time'] <= '2011-12-31')]

validation_data = data[(data['time'] >= '2012-01-01') & (data['time'] <= '2012-12-31')]# 只对X_train、y_train、X_validation取奇数行

X_train = train_data[[f'lag_{i}' for i in range(1, n+1)]].iloc[::2].reset_index(drop=True).values

X_train = X_train.reshape(X_train.shape[0], X_train.shape[1], 1)y_train_list = [train_data['incidence'].shift(-i) for i in range(m)]

y_train = pd.concat(y_train_list, axis=1)

y_train.columns = [f'target_{i+1}' for i in range(m)]

y_train = y_train.iloc[::2].reset_index(drop=True).dropna().values[:, 0]X_validation = validation_data[[f'lag_{i}' for i in range(1, n+1)]].iloc[::2].reset_index(drop=True).values

X_validation = X_validation.reshape(X_validation.shape[0], X_validation.shape[1], 1)y_validation = validation_data['incidence'].values# Building the Transformer model

inputs = Input(shape=(n, 1))

x = MultiHeadAttention(num_heads=8, key_dim=64)(inputs, inputs)

x = Dropout(0.1)(x)

x = LayerNormalization(epsilon=1e-6)(x + inputs)

x = Flatten()(x)

x = Dense(50, activation='relu')(x)

outputs = Dense(1)(x)model = Model(inputs=inputs, outputs=outputs)

optimizer = adam_v2.Adam(learning_rate=0.001)

model.compile(optimizer=optimizer, loss='mse')# Train the model

model.fit(X_train, y_train, epochs=200, batch_size=32, verbose=0)# Predict on validation set

y_validation_pred = model.predict(X_validation).flatten()# Compute metrics for validation set

mae_validation = mean_absolute_error(y_validation[:len(y_validation_pred)], y_validation_pred)

mape_validation = np.mean(np.abs((y_validation[:len(y_validation_pred)] - y_validation_pred) / y_validation[:len(y_validation_pred)]))

mse_validation = mean_squared_error(y_validation[:len(y_validation_pred)], y_validation_pred)

rmse_validation = np.sqrt(mse_validation)# Predict on training set

y_train_pred = model.predict(X_train).flatten()# Compute metrics for training set

mae_train = mean_absolute_error(y_train, y_train_pred)

mape_train = np.mean(np.abs((y_train - y_train_pred) / y_train))

mse_train = mean_squared_error(y_train, y_train_pred)

rmse_train = np.sqrt(mse_train)print("验证集:", mae_validation, mape_validation, mse_validation, rmse_validation)

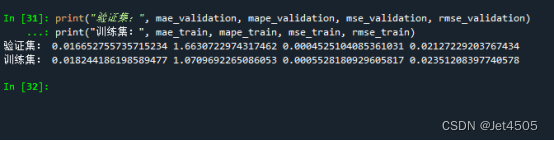

print("训练集:", mae_train, mape_train, mse_train, rmse_train)结果:
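The idea of keeping only every m-th row, so that each m-step target block starts where the previous one ended, can be sketched on a toy series. The helper `make_block_windows` is a hypothetical name and works on a raw array rather than on the lag columns of the data frame.

```python
import numpy as np

def make_block_windows(series, n, m):
    """Build (X, y) pairs whose m-step targets tile the series without overlap."""
    series = np.asarray(series, dtype=float)
    X, y = [], []
    # Advance by m each time so that target blocks follow one another directly.
    for start in range(0, len(series) - n - m + 1, m):
        X.append(series[start:start + n])            # n lagged inputs
        y.append(series[start + n:start + n + m])    # the next m values
    return np.array(X), np.array(y)

X, y = make_block_windows(np.arange(10), n=4, m=2)
print(X.shape, y.shape)  # (3, 4) (3, 2)
print(y.ravel())         # [4. 5. 6. 7. 8. 9.]
```

Flattening `y` row by row recovers the targets in time order, which is why flattened predictions of the same shape can be compared with the observed series directly.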

(5) Multi-step rolling forecast, vol. 3

    import numpy as np
    import pandas as pd
    from sklearn.metrics import mean_absolute_error, mean_squared_error
    # Public tf.keras API; MultiHeadAttention requires TensorFlow 2.4 or later
    from tensorflow.keras.models import Model
    from tensorflow.keras.layers import Dense, Flatten, Input, MultiHeadAttention, LayerNormalization, Dropout
    from tensorflow.keras.optimizers import Adam

    # Read and preprocess the data; data_y keeps the raw series for the targets
    data = pd.read_csv('data.csv')
    data_y = pd.read_csv('data.csv')
    data['time'] = pd.to_datetime(data['time'], format='%b-%y')
    data_y['time'] = pd.to_datetime(data_y['time'], format='%b-%y')

    n = 6  # number of lagged inputs
    for i in range(n, 0, -1):
        data[f'lag_{i}'] = data['incidence'].shift(n - i + 1)
    data = data.dropna().reset_index(drop=True)
    lag_cols = [f'lag_{i}' for i in range(1, n + 1)]

    train_data = data[(data['time'] >= '2004-01-01') & (data['time'] <= '2011-12-31')]
    X_train = train_data[lag_cols]

    m = 3  # number of horizons, one model per horizon

    # Model i learns to predict the value i steps after the row's own month
    X_train_list = []
    y_train_list = []
    for i in range(m):
        X_temp = X_train.iloc[:len(X_train) - m + 1].values
        X_temp = X_temp.reshape(X_temp.shape[0], X_temp.shape[1], 1)
        y_temp = data_y['incidence'].iloc[n + i:len(data_y) - m + 1 + i].iloc[:len(X_temp)].values
        X_train_list.append(X_temp)
        y_train_list.append(y_temp)

    # Train one Transformer model per horizon
    models = []
    for i in range(m):
        inputs = Input(shape=(n, 1))
        x = MultiHeadAttention(num_heads=8, key_dim=64)(inputs, inputs)
        x = Dropout(0.1)(x)
        x = LayerNormalization(epsilon=1e-6)(x + inputs)
        x = Flatten()(x)
        x = Dense(50, activation='relu')(x)
        outputs = Dense(1)(x)
        model = Model(inputs=inputs, outputs=outputs)
        optimizer = Adam(learning_rate=0.001)
        model.compile(optimizer=optimizer, loss='mse')
        model.fit(X_train_list[i], y_train_list[i], epochs=200, batch_size=32, verbose=0)
        models.append(model)

    validation_start_time = train_data['time'].iloc[-1] + pd.DateOffset(months=1)
    validation_data = data[data['time'] >= validation_start_time]

    # Take every m-th row so that each block of m forecasts covers m consecutive months
    X_validation = validation_data[lag_cols].iloc[::m].values
    X_validation = X_validation.reshape(X_validation.shape[0], X_validation.shape[1], 1)

    y_validation_pred_list = [model.predict(X_validation, verbose=0) for model in models]
    y_train_pred_list = [model.predict(X_train_list[i][::m], verbose=0) for i, model in enumerate(models)]

    def concatenate_predictions(pred_list):
        # Interleave so that block j yields [model 0, model 1, ..., model m-1]
        return np.column_stack([p.ravel() for p in pred_list]).ravel()

    y_validation_true = validation_data['incidence'].values
    y_validation_pred = concatenate_predictions(y_validation_pred_list)[:len(y_validation_true)]
    y_train_pred = concatenate_predictions(y_train_pred_list)[:len(train_data)]
    y_train_true = train_data['incidence'].values[:len(y_train_pred)]

    mae_validation = mean_absolute_error(y_validation_true, y_validation_pred)
    mape_validation = np.mean(np.abs((y_validation_true - y_validation_pred) / y_validation_true))
    mse_validation = mean_squared_error(y_validation_true, y_validation_pred)
    rmse_validation = np.sqrt(mse_validation)

    mae_train = mean_absolute_error(y_train_true, y_train_pred)
    mape_train = np.mean(np.abs((y_train_true - y_train_pred) / y_train_true))
    mse_train = mean_squared_error(y_train_true, y_train_pred)
    rmse_train = np.sqrt(mse_train)

    print("Validation set:", mae_validation, mape_validation, mse_validation, rmse_validation)
    print("Training set:", mae_train, mape_train, mse_train, rmse_train)
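With one model per forecast horizon, the separate outputs have to be merged back into a single time-ordered series. A minimal sketch of that interleaving step, using made-up numbers in place of model outputs and a hypothetical helper name, could look like this:

```python
import numpy as np

def interleave_horizons(per_horizon_preds):
    """per_horizon_preds[h][j] is the forecast of horizon model h for block j."""
    stacked = np.column_stack([np.ravel(p) for p in per_horizon_preds])  # (blocks, m)
    return stacked.ravel()   # row-major flatten: block 0 first, then block 1, ...

h1 = [10.0, 40.0]   # made-up forecasts for the 1st month of each block
h2 = [20.0, 50.0]   # ... the 2nd month of each block
h3 = [30.0, 60.0]   # ... the 3rd month of each block
print(interleave_horizons([h1, h2, h3]))  # [10. 20. 30. 40. 50. 60.]
```

This ordering only lines up with the calendar when the input rows are m steps apart, so that block j covers months j*m through j*m + m - 1.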

III. Data

Link: https://pan.baidu.com/s/1EFaWfHoG14h15KCEhn1STg?pwd=q41n

Extraction code: q41n