01 - The Complete Model Training Routine

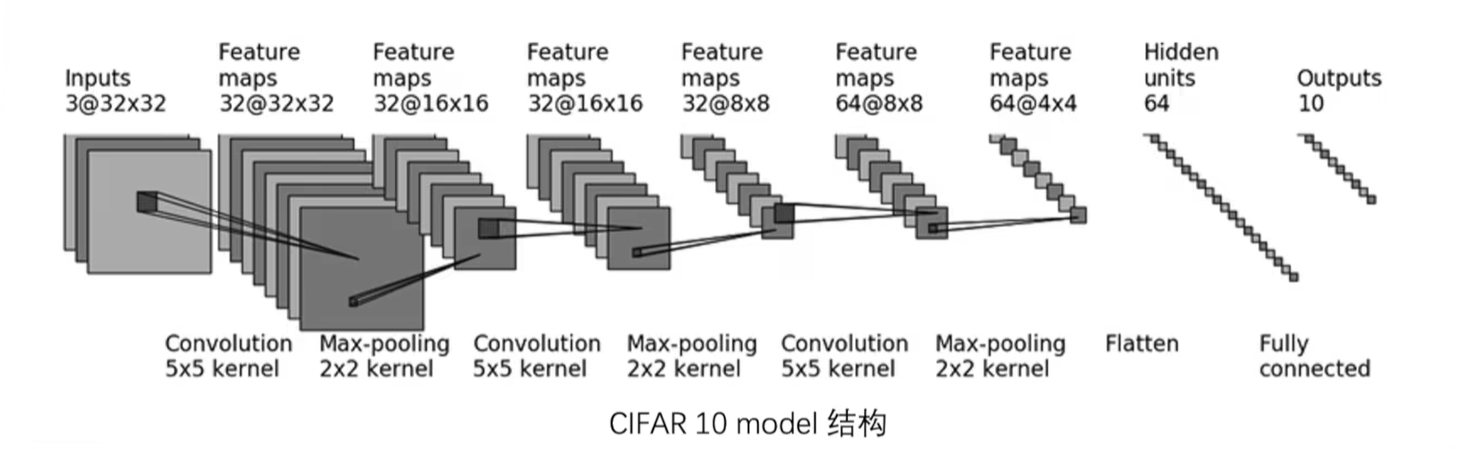

Model architecture diagram

model.py

    import torch
    from torch import nn


    # Build the neural network.
    # You can also put this class directly in model.py.
    class MyNet(nn.Module):
        def __init__(self):
            super(MyNet, self).__init__()
            self.model = nn.Sequential(
                # Convolution and max-pooling layers
                nn.Conv2d(3, 32, 5, 1, 2),
                nn.MaxPool2d(2),
                nn.Conv2d(32, 32, 5, 1, 2),
                nn.MaxPool2d(2),
                nn.Conv2d(32, 64, 5, 1, 2),
                nn.MaxPool2d(2),
                # Flatten
                nn.Flatten(),
                # Fully connected layers
                nn.Linear(64 * 4 * 4, 64),
                nn.Linear(64, 10),
            )

        def forward(self, x):
            x = self.model(x)
            return x


    # Test the network
    if __name__ == "__main__":
        net = MyNet()
        input = torch.ones((64, 3, 32, 32))
        output = net(input)
        # Check that the network is wired correctly: expect torch.Size([64, 10])
        print(output.shape)
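The `64*4*4` in the first `nn.Linear` follows from the layer sizes. As a small sketch in plain Python (the helper name `conv_out` is made up for this note, it is not a PyTorch function), the standard output-size formula can be applied layer by layer:

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output width/height of a convolution or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1


size = 32  # CIFAR-10 images are 32x32
for _ in range(3):
    # Conv2d(..., kernel_size=5, stride=1, padding=2) keeps the spatial size
    size = conv_out(size, kernel=5, stride=1, padding=2)
    # MaxPool2d(2) uses kernel 2 and stride 2, which halves the size
    size = conv_out(size, kernel=2, stride=2)

# The last convolution outputs 64 channels
flat_features = 64 * size * size
print(size, flat_features)  # prints: 4 1024
```

So the spatial size goes 32, 16, 8, 4, and `nn.Flatten()` hands 64 * 4 * 4 = 1024 features to the first fully connected layer.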

train.py

    import torch
    import torchvision
    from torch import nn
    from torch.utils.data import DataLoader
    from torch.utils.tensorboard import SummaryWriter

    from model import *

    # Prepare the training dataset
    train_data = torchvision.datasets.CIFAR10(
        root='dataset', train=True, download=True,
        transform=torchvision.transforms.ToTensor())

    # Prepare the test dataset
    test_data = torchvision.datasets.CIFAR10(
        root='dataset', train=False, download=True,
        transform=torchvision.transforms.ToTensor())

    # Number of images in each dataset
    train_data_size = len(train_data)
    test_data_size = len(test_data)
    print(f"Train data size: {train_data_size}, Test data size: {test_data_size}")

    # Load the datasets with DataLoader
    train_dataloader = DataLoader(train_data, batch_size=64)
    test_dataloader = DataLoader(test_data, batch_size=64)

    # Create the network
    net = MyNet()

    # Loss function (cross entropy)
    loss_func = nn.CrossEntropyLoss()

    # Optimizer (stochastic gradient descent)
    # Arguments: the parameters to optimize and the learning rate
    learning_rate = 0.01
    optimizer = torch.optim.SGD(net.parameters(), learning_rate)

    # Bookkeeping for the training run
    # Number of training steps so far
    total_train_step = 0
    # Number of evaluation rounds so far
    total_test_step = 0
    # Number of epochs
    epoch = 10

    # Set up TensorBoard
    writer = SummaryWriter("logs_train")

    # Training loop

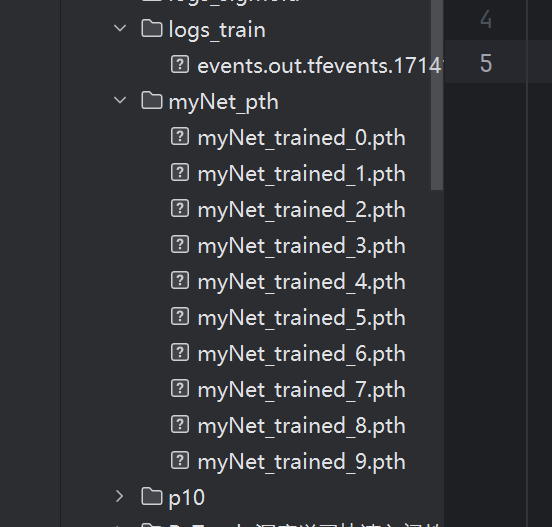

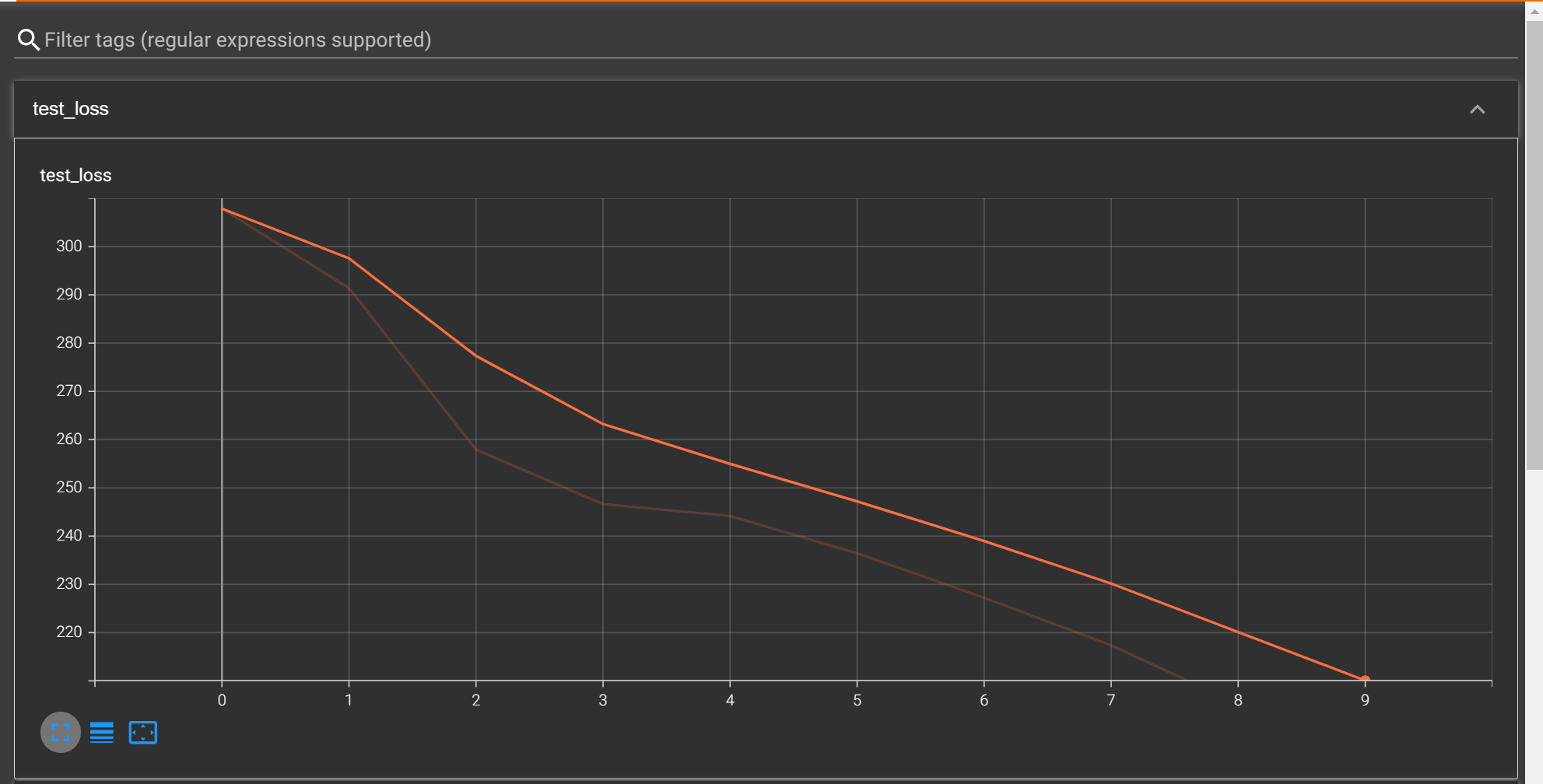

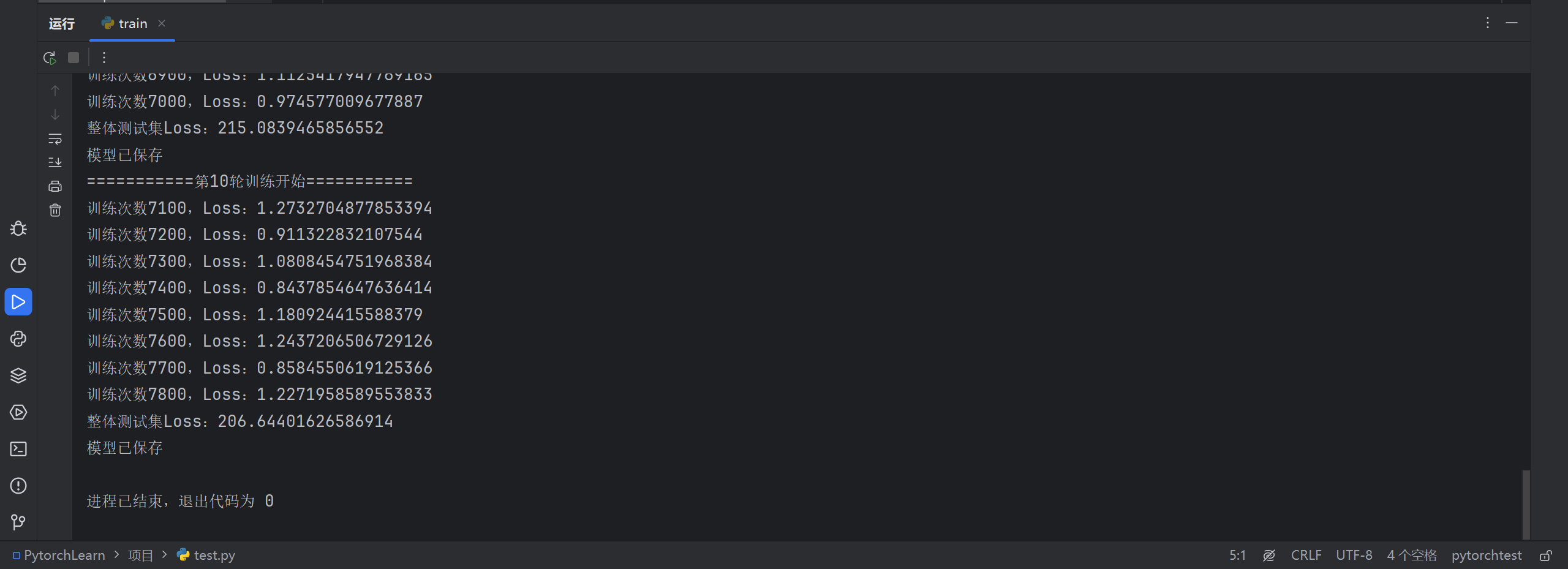

    for i in range(epoch):
        print(f"=========== Epoch {i + 1} training started ===========")

        # Training phase
        # Put the network in training mode
        net.train()
        for data in train_dataloader:
            imgs, targets = data
            # Forward pass
            outputs = net(imgs)
            # Compute the loss
            loss = loss_func(outputs, targets)

            # Let the optimizer update the model
            # Zero the gradients
            optimizer.zero_grad()
            # Backpropagate
            loss.backward()
            optimizer.step()

            total_train_step += 1
            # Do not print on every step
            if total_train_step % 100 == 0:
                print(f"Training step {total_train_step}, Loss: {loss.item()}")
                writer.add_scalar("train_loss", loss.item(), total_train_step)

        # Evaluation phase
        # Put the network in evaluation mode
        net.eval()
        total_test_loss = 0
        total_accuracy = 0
        # Code inside torch.no_grad() runs without gradient tracking, so nothing is tuned here
        with torch.no_grad():
            for data in test_dataloader:
                imgs, targets = data
                outputs = net(imgs)
                loss = loss_func(outputs, targets)
                total_test_loss += loss.item()
                # Number of correct predictions in this batch
                accuracy = (outputs.argmax(1) == targets).sum().item()
                total_accuracy += accuracy

        print(f"Total loss on the test set: {total_test_loss}")
        print(f"Accuracy on the test set: {total_accuracy / test_data_size:.4f}")
        writer.add_scalar("test_loss", total_test_loss, total_test_step)
        writer.add_scalar("test_accuracy", total_accuracy / test_data_size, total_test_step)
        total_test_step += 1

        # Save the model once per epoch (the myNet_pth directory must already exist)
        torch.save(net, f"myNet_pth/myNet_trained_{i}.pth")
        # torch.save(net.state_dict(), f"myNet_pth/myNet_trained_{i}.pth")
        print("Model saved")

    writer.close()
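To make the two numbers printed in the evaluation phase more concrete, here is a minimal plain-Python sketch of what cross entropy and the `argmax` comparison compute for one small batch. The logits and targets are invented for illustration, and the helper names `cross_entropy` and `argmax` are my own, not PyTorch functions.

```python
import math


def cross_entropy(logits, target):
    """Negative log-softmax probability of the target class for one sample."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_sum_exp - logits[target]


def argmax(values):
    """Index of the largest value (first one on ties)."""
    return max(range(len(values)), key=lambda i: values[i])


# Hypothetical batch: 3 samples, 10 classes
batch_logits = [
    [0.0] * 10,                   # all classes equally likely
    [5.0] + [0.0] * 9,            # confident in class 0
    [0.0, 0.0, 3.0] + [0.0] * 7,  # confident in class 2
]
batch_targets = [4, 0, 7]

losses = [cross_entropy(l, t) for l, t in zip(batch_logits, batch_targets)]
batch_loss = sum(losses) / len(losses)  # mean over the batch
correct = sum(argmax(l) == t for l, t in zip(batch_logits, batch_targets))

print(f"loss for uniform logits: {losses[0]:.4f}")  # ln(10), about 2.3026
print(f"batch loss: {batch_loss:.4f}, correct: {correct} of {len(batch_targets)}")
```

`nn.CrossEntropyLoss` averages over the batch by default, so `total_test_loss` in the script is a sum of per-batch means rather than a per-image average. A freshly initialized 10-class model tends to start near ln(10) ≈ 2.30 per batch, which is a handy reference when reading the first logged values.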

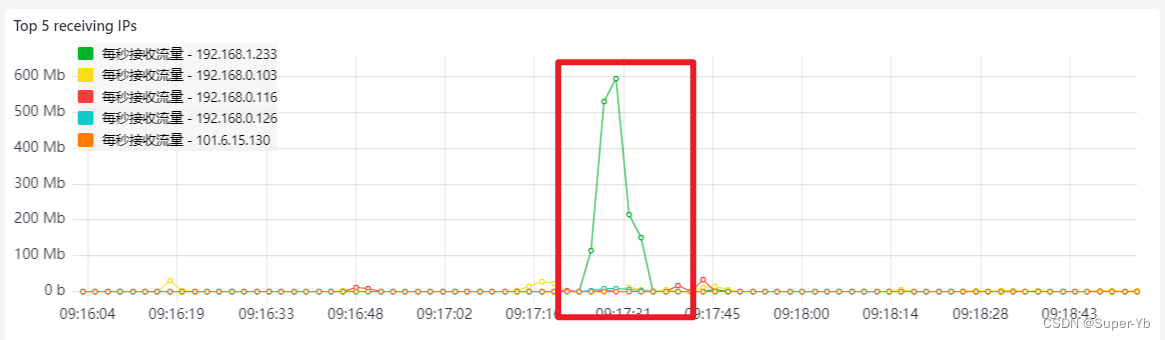

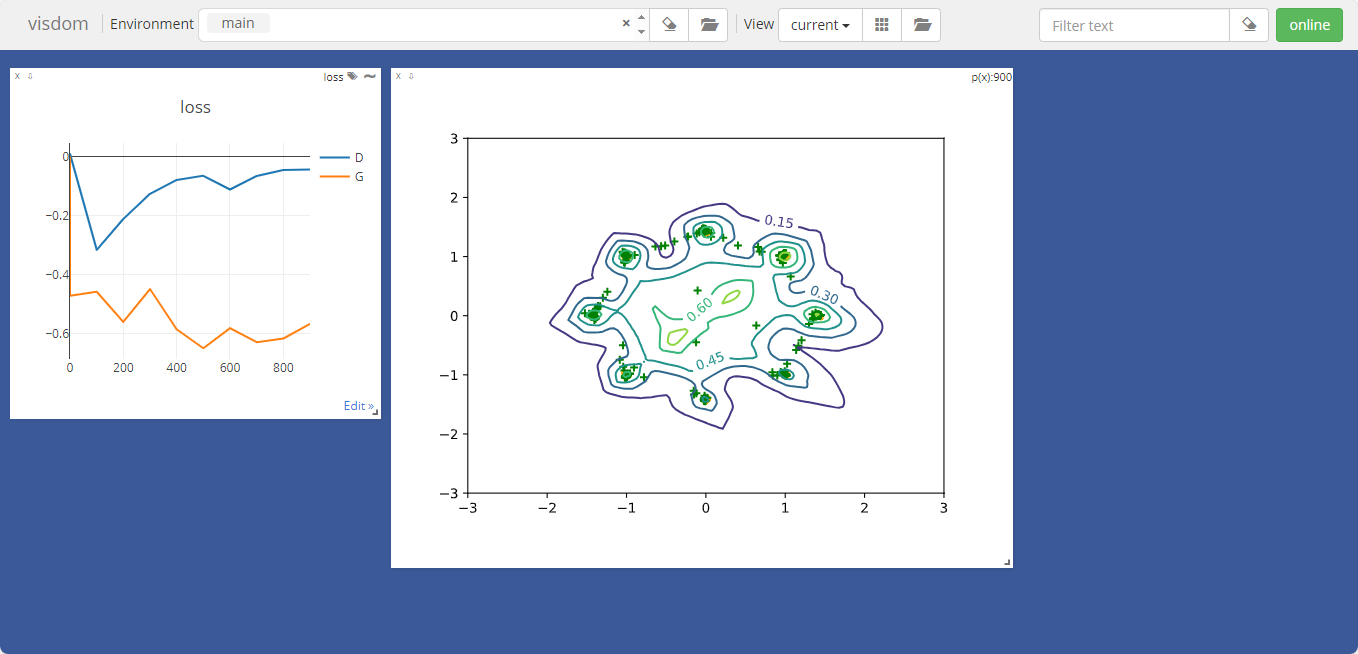

Data in TensorBoard

Console output

Training complete!
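As a rough sanity check on the step counts in the console output and on the TensorBoard x-axis, the arithmetic below may help. It assumes the standard CIFAR-10 split of 50,000 training and 10,000 test images, and that `DataLoader` keeps the last, smaller batch (its default behaviour).

```python
import math

train_size = 50_000  # standard CIFAR-10 training split
test_size = 10_000   # standard CIFAR-10 test split
batch_size = 64
epochs = 10
log_every = 100

steps_per_epoch = math.ceil(train_size / batch_size)  # last batch is smaller
total_steps = steps_per_epoch * epochs
logged_points = total_steps // log_every
test_batches = math.ceil(test_size / batch_size)

print(steps_per_epoch, total_steps, logged_points, test_batches)
# prints: 782 7820 78 157
```

Under these assumptions, `train_loss` gets 78 points over the whole run, and the printed test loss is a sum over 157 batches. Dividing it by 157 would give an average per-batch loss that is easier to compare with the training loss.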

Training on a GPU

    # Approach 1: call .cuda() on the network, the loss function, and every batch
    import torch
    import torchvision
    from torch import nn
    from torch.utils.data import DataLoader
    from torch.utils.tensorboard import SummaryWriter

    from model import *

    # Prepare the training dataset
    train_data = torchvision.datasets.CIFAR10(
        root='dataset', train=True, download=True,
        transform=torchvision.transforms.ToTensor())

    # Prepare the test dataset
    test_data = torchvision.datasets.CIFAR10(
        root='dataset', train=False, download=True,
        transform=torchvision.transforms.ToTensor())

    # Number of images in each dataset
    train_data_size = len(train_data)
    test_data_size = len(test_data)
    print(f"Train data size: {train_data_size}, Test data size: {test_data_size}")

    # Load the datasets with DataLoader
    train_dataloader = DataLoader(train_data, batch_size=64)
    test_dataloader = DataLoader(test_data, batch_size=64)

    # Create the network
    net = MyNet()
    # Move the network to CUDA
    if torch.cuda.is_available():
        net = net.cuda()

    # Loss function (cross entropy)
    loss_func = nn.CrossEntropyLoss()
    # Move the loss function to CUDA
    if torch.cuda.is_available():
        loss_func = loss_func.cuda()

    # Optimizer (stochastic gradient descent)
    # Arguments: the parameters to optimize and the learning rate
    learning_rate = 0.01
    optimizer = torch.optim.SGD(net.parameters(), learning_rate)

    # Bookkeeping for the training run
    # Number of training steps so far
    total_train_step = 0
    # Number of evaluation rounds so far
    total_test_step = 0
    # Number of epochs
    epoch = 10

    # Set up TensorBoard
    writer = SummaryWriter("logs_train")

    # Training loop
    for i in range(epoch):
        print(f"=========== Epoch {i + 1} training started ===========")

        # Training phase
        # Put the network in training mode
        net.train()
        for data in train_dataloader:
            imgs, targets = data
            # Move the data to CUDA
            if torch.cuda.is_available():
                imgs = imgs.cuda()
                targets = targets.cuda()
            # Forward pass
            outputs = net(imgs)
            # Compute the loss
            loss = loss_func(outputs, targets)

            # Let the optimizer update the model
            # Zero the gradients
            optimizer.zero_grad()
            # Backpropagate
            loss.backward()
            optimizer.step()

            total_train_step += 1
            # Do not print on every step
            if total_train_step % 100 == 0:
                print(f"Training step {total_train_step}, Loss: {loss.item()}")
                writer.add_scalar("train_loss", loss.item(), total_train_step)

        # Evaluation phase
        # Put the network in evaluation mode
        net.eval()
        total_test_loss = 0
        total_accuracy = 0
        # Code inside torch.no_grad() runs without gradient tracking, so nothing is tuned here
        with torch.no_grad():
            for data in test_dataloader:
                imgs, targets = data
                # Move the data to CUDA
                if torch.cuda.is_available():
                    imgs = imgs.cuda()
                    targets = targets.cuda()
                outputs = net(imgs)
                loss = loss_func(outputs, targets)
                total_test_loss += loss.item()
                # Number of correct predictions in this batch
                accuracy = (outputs.argmax(1) == targets).sum().item()
                total_accuracy += accuracy

        print(f"Total loss on the test set: {total_test_loss}")
        print(f"Accuracy on the test set: {total_accuracy / test_data_size:.4f}")
        writer.add_scalar("test_loss", total_test_loss, total_test_step)
        writer.add_scalar("test_accuracy", total_accuracy / test_data_size, total_test_step)
        total_test_step += 1

        # Save the model once per epoch (the myNet_pth directory must already exist)
        torch.save(net, f"myNet_pth/myNet_trained_{i}.pth")
        # torch.save(net.state_dict(), f"myNet_pth/myNet_trained_{i}.pth")
        print("Model saved")

    writer.close()

    # Approach 2: define a device once and move everything to it with .to(device)
    import time

    import torch
    import torchvision
    from torch import nn
    from torch.utils.data import DataLoader
    from torch.utils.tensorboard import SummaryWriter

    from model import *

    # Define the device (GPU if available, otherwise CPU)
    device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
    print(f"Current device: {device}")

    # Prepare the training dataset
    train_data = torchvision.datasets.CIFAR10(
        root='dataset', train=True, download=True,
        transform=torchvision.transforms.ToTensor())

    # Prepare the test dataset
    test_data = torchvision.datasets.CIFAR10(
        root='dataset', train=False, download=True,
        transform=torchvision.transforms.ToTensor())

    # Number of images in each dataset
    train_data_size = len(train_data)
    test_data_size = len(test_data)
    print(f"Train data size: {train_data_size}, Test data size: {test_data_size}")

    # Load the datasets with DataLoader
    train_dataloader = DataLoader(train_data, batch_size=64)
    test_dataloader = DataLoader(test_data, batch_size=64)

    # Create the network
    net = MyNet()
    # Move the network to the device
    net = net.to(device)

    # Loss function (cross entropy)
    loss_func = nn.CrossEntropyLoss()
    # Move the loss function to the device
    loss_func = loss_func.to(device)

    # Optimizer (stochastic gradient descent)
    # Arguments: the parameters to optimize and the learning rate
    learning_rate = 0.01
    optimizer = torch.optim.SGD(net.parameters(), learning_rate)

    # Bookkeeping for the training run
    # Number of training steps so far
    total_train_step = 0
    # Number of evaluation rounds so far
    total_test_step = 0
    # Number of epochs
    epoch = 10

    # Set up TensorBoard
    writer = SummaryWriter("logs_train")

    # Note: time.process_time() measures CPU time used by this process, not wall-clock time
    start_time = time.process_time()

    # Training loop
    for i in range(epoch):
        print(f"=========== Epoch {i + 1} training started ===========")

        # Training phase
        # Put the network in training mode
        net.train()
        for data in train_dataloader:
            imgs, targets = data
            # Move the data to the device
            imgs = imgs.to(device)
            targets = targets.to(device)
            # Forward pass
            outputs = net(imgs)
            # Compute the loss
            loss = loss_func(outputs, targets)

            # Let the optimizer update the model
            # Zero the gradients
            optimizer.zero_grad()
            # Backpropagate
            loss.backward()
            optimizer.step()

            total_train_step += 1
            # Do not print on every step
            if total_train_step % 100 == 0:
                end_time = time.process_time()
                print(f"Time elapsed at training step {total_train_step}: {end_time - start_time:.2f} s")
                print(f"Training step {total_train_step}, Loss: {loss.item()}")
                writer.add_scalar("train_loss", loss.item(), total_train_step)

        # Evaluation phase
        # Put the network in evaluation mode
        net.eval()
        total_test_loss = 0
        total_accuracy = 0
        # Code inside torch.no_grad() runs without gradient tracking, so nothing is tuned here
        with torch.no_grad():
            for data in test_dataloader:
                imgs, targets = data
                # Move the data to the device
                imgs = imgs.to(device)
                targets = targets.to(device)
                outputs = net(imgs)
                loss = loss_func(outputs, targets)
                total_test_loss += loss.item()
                # Number of correct predictions in this batch
                accuracy = (outputs.argmax(1) == targets).sum().item()
                total_accuracy += accuracy

        print(f"Total loss on the test set: {total_test_loss}")
        print(f"Accuracy on the test set: {total_accuracy / test_data_size:.4f}")
        writer.add_scalar("test_loss", total_test_loss, total_test_step)
        writer.add_scalar("test_accuracy", total_accuracy / test_data_size, total_test_step)
        total_test_step += 1

        # Save the model once per epoch (the myNet_pth directory must already exist)
        torch.save(net, f"myNet_pth/myNet_trained_{i}.pth")
        # torch.save(net.state_dict(), f"myNet_pth/myNet_trained_{i}.pth")
        print("Model saved")

    writer.close()
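The scripts save the whole model object and keep the `state_dict` variant as a comment. A checkpoint written on a GPU may later be loaded on a machine without one, so here is a minimal sketch of the `state_dict` route with `map_location`. A small `nn.Linear` stands in for `MyNet` and an in-memory buffer stands in for the `.pth` file; both are assumptions made to keep the example self-contained.

```python
import io

import torch
from torch import nn

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# Hypothetical stand-in for MyNet
net = nn.Linear(4, 2).to(device)

# Save only the parameters (the buffer plays the role of a .pth file)
buffer = io.BytesIO()
torch.save(net.state_dict(), buffer)
buffer.seek(0)

# Load the parameters onto the CPU, whatever device they were saved from
state = torch.load(buffer, map_location="cpu")
restored = nn.Linear(4, 2)
restored.load_state_dict(state)
restored.eval()  # switch to evaluation mode before inference

same = torch.equal(restored.weight.detach(), net.weight.detach().cpu())
print(same)
```

With this route the model class has to be constructed again before loading, but the file holds only tensors. As far as I know, recent PyTorch releases also make `torch.load` more restrictive by default for whole pickled models, which is another reason the commented-out `state_dict` line may be the more portable choice.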

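Measuring the time difference

    import time

    # Add before the main training loop
    # Note: time.process_time() measures CPU time used by this process, not wall-clock time
    start_time = time.process_time()

    # Print every 100 training steps
    end_time = time.process_time()
    print(f"Time elapsed at training step {total_train_step}: {end_time - start_time:.2f} s")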
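One caveat about the timer: `time.process_time()` reports CPU time consumed by the process, summed over all of its threads, and it does not count time spent sleeping. A wall-clock timer such as `time.perf_counter()` can therefore give quite different numbers. The sketch below uses `time.sleep` as a stand-in for any period in which the process is waiting rather than computing.

```python
import time

wall_start = time.perf_counter()
cpu_start = time.process_time()

# Stand-in for time spent waiting instead of computing
time.sleep(0.2)

wall_elapsed = time.perf_counter() - wall_start
cpu_elapsed = time.process_time() - cpu_start

print(f"wall clock: {wall_elapsed:.2f} s, CPU time: {cpu_elapsed:.2f} s")
```

For a CPU-versus-GPU comparison, wall-clock time is probably the fairer measure. A multi-threaded CPU run can accumulate more CPU seconds than actually elapsed, and CUDA kernels run asynchronously, so calling `torch.cuda.synchronize()` before reading the clock is a common precaution. This may be one reason the numbers look odd on some machines, though I have not verified that for the runs shown here.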

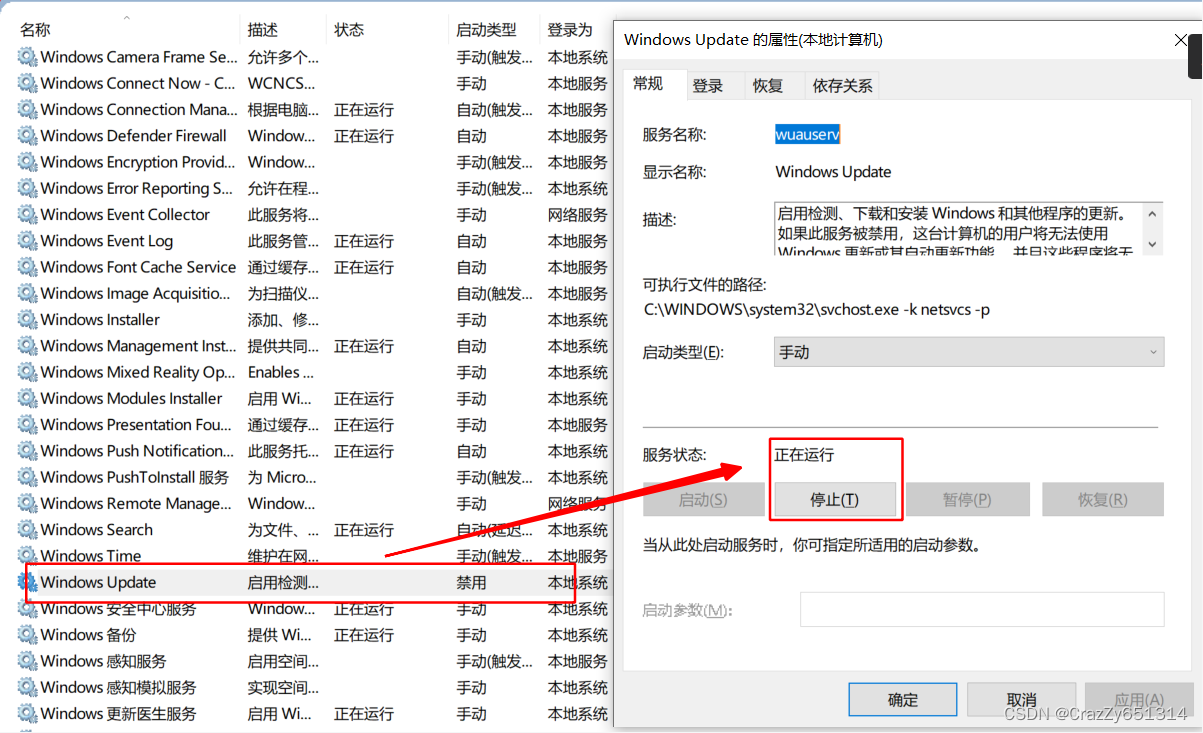

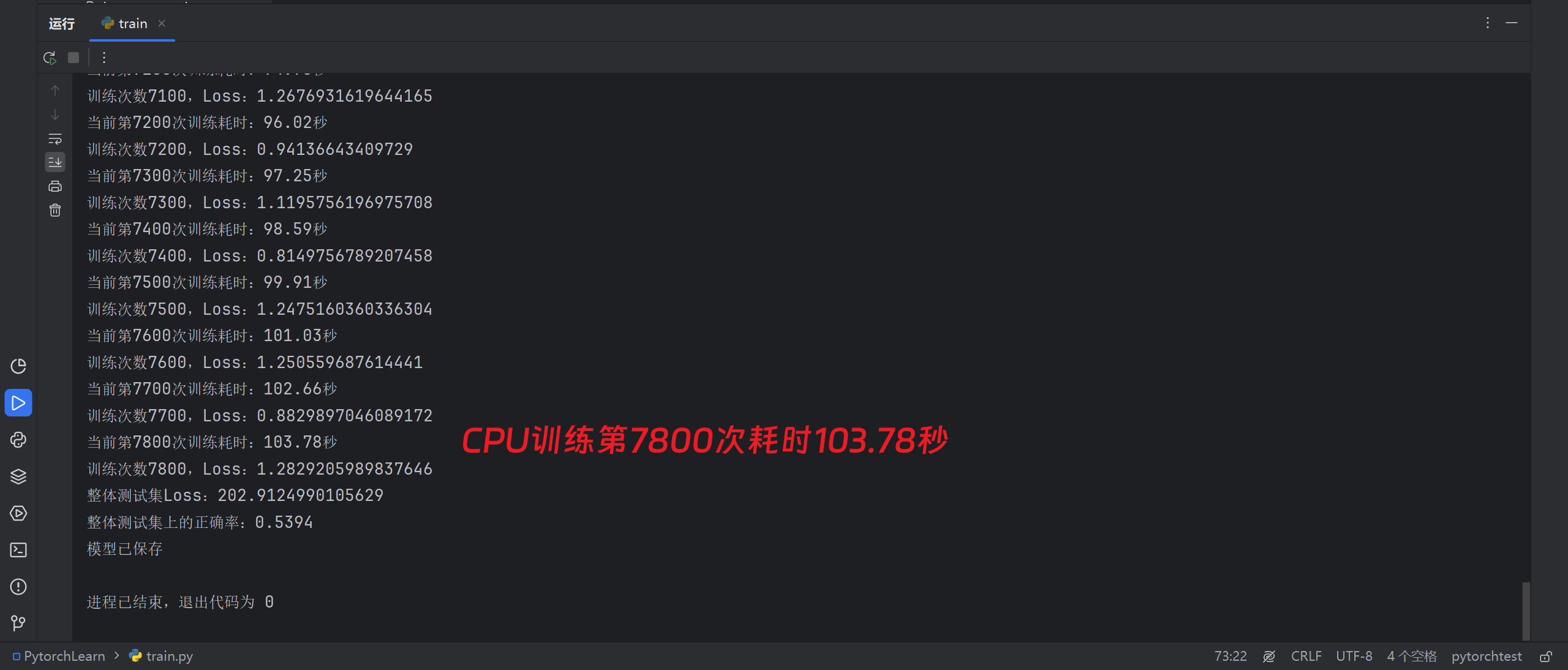

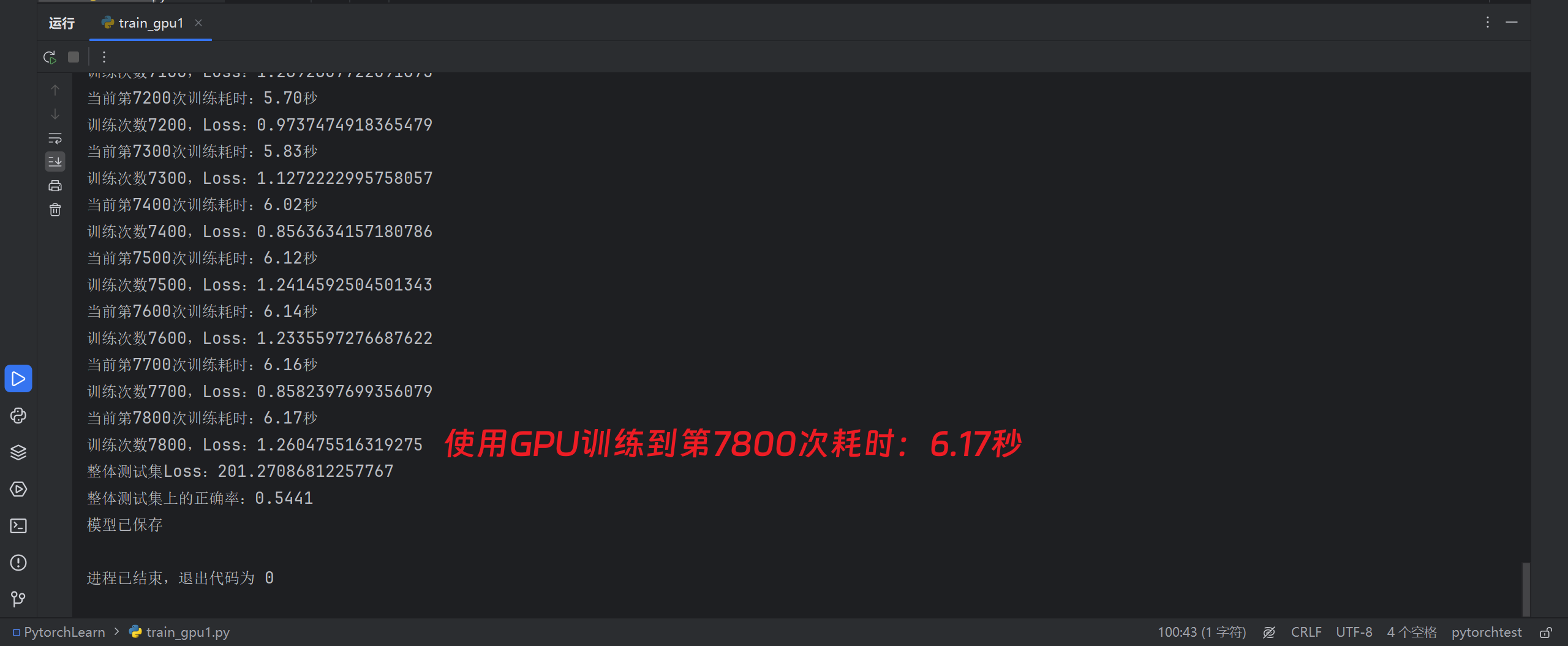

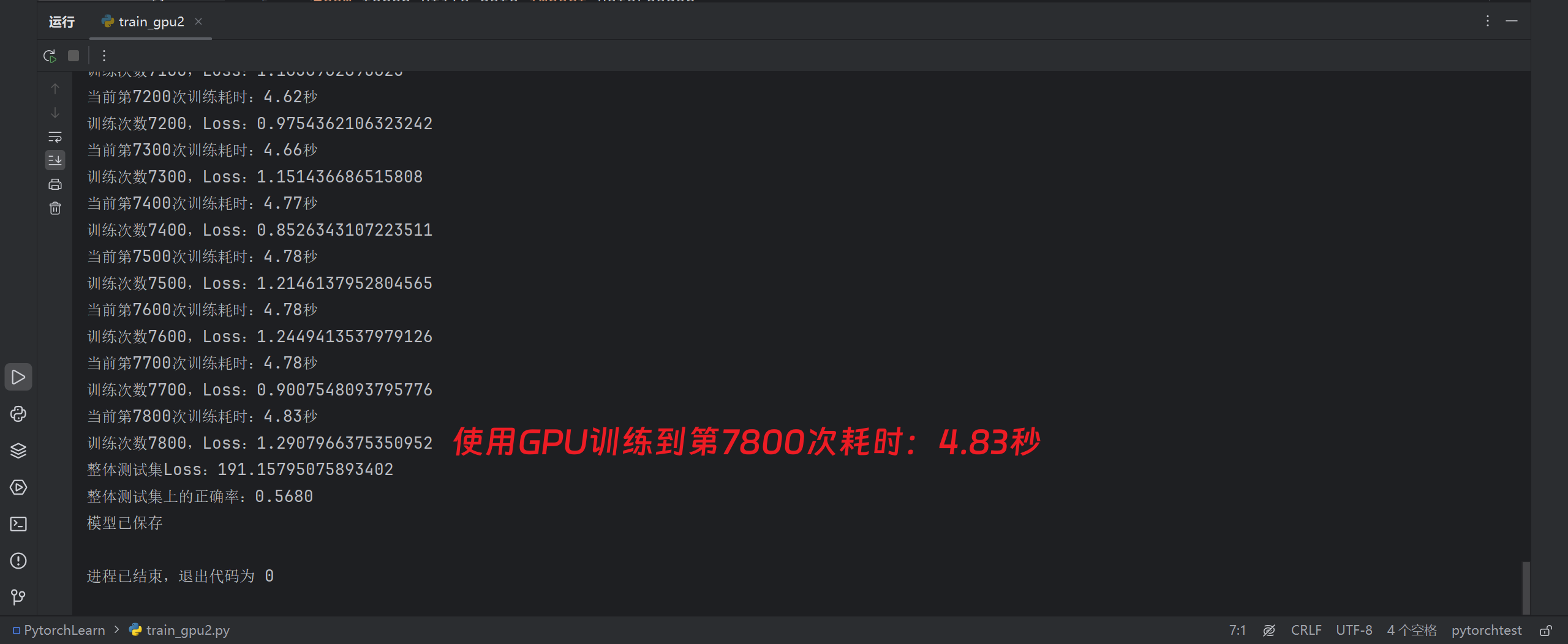

On the CPU (i7-12650H):

On the GPU (RTX 4060 Laptop):

About 20 times faster than the CPU! The CPU must be overjoyed!

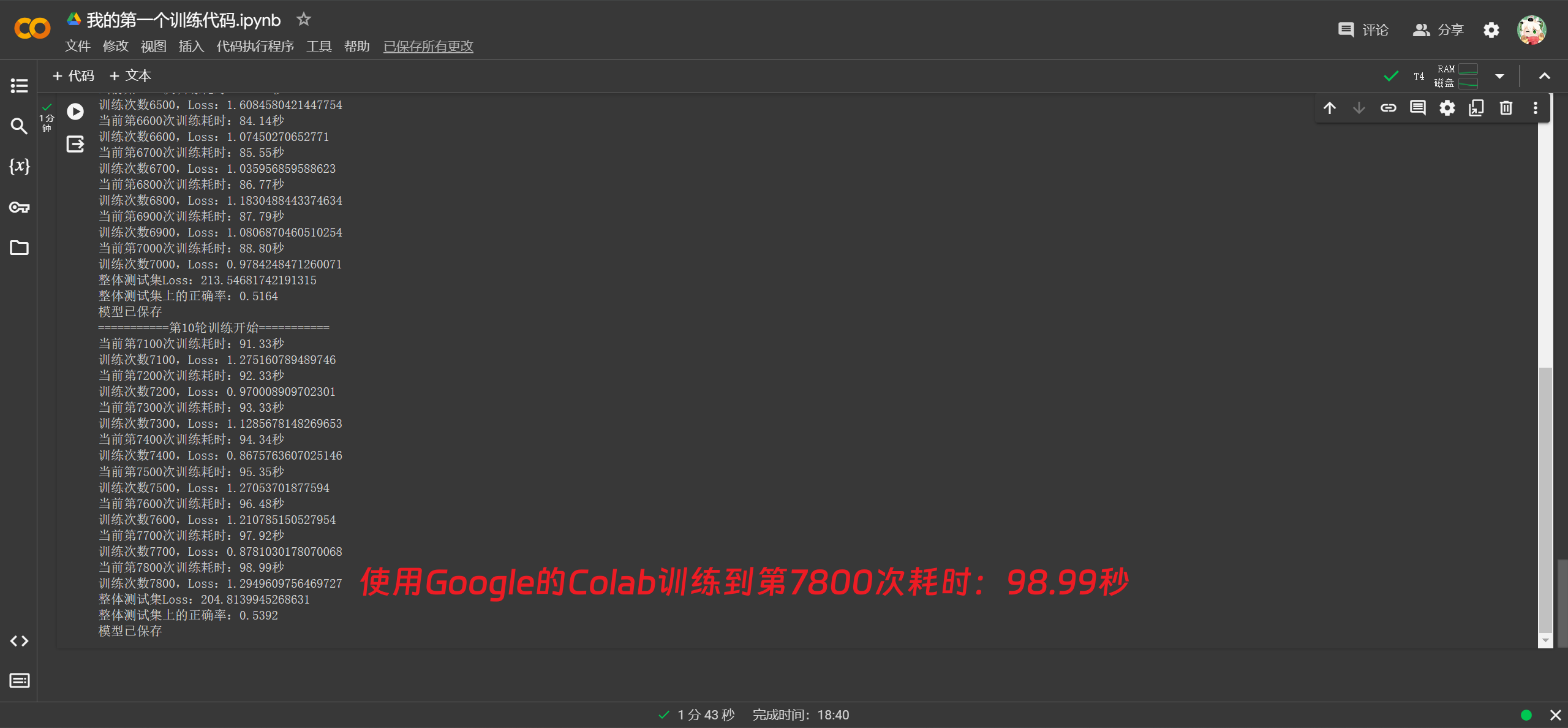

Training on Google Colab (Tesla T4):

I'm not sure why the performance here is so odd.

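Notes

Calling .train() and .eval() on the network object (net.train() and net.eval() above) switches the network into training mode and evaluation mode. Yet training also works without these two calls, so what is going on?

The two modes only behave differently for certain layer types, such as Dropout and BatchNorm.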

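If the network uses Dropout, every forward pass during training randomly switches off part of the neurons (drops them) to reduce overfitting. During evaluation or testing you want the full network, so dropout has to be turned off, and that is what net.eval() does.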
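A plain-Python sketch of the idea, written for this note rather than taken from PyTorch: in training mode each value is zeroed with probability `p` and the survivors are scaled by `1 / (1 - p)`, which is the scaling `nn.Dropout` applies. In evaluation mode the input passes through unchanged. The function name `dropout` and its arguments are illustrative.

```python
import random


def dropout(values, p=0.5, training=True, rng=random):
    """Inverted dropout on a list of floats (assumes 0 <= p < 1)."""
    if not training or p == 0.0:
        return list(values)  # evaluation mode: identity
    scale = 1.0 / (1.0 - p)
    # Zero each value with probability p, rescale the ones that survive
    return [0.0 if rng.random() < p else v * scale for v in values]


rng = random.Random(0)  # fixed seed so repeated runs match
activations = [1.0] * 8

train_out = dropout(activations, p=0.5, training=True, rng=rng)
eval_out = dropout(activations, p=0.5, training=False)

print("train:", train_out)  # a mix of 0.0 and 2.0
print("eval: ", eval_out)   # unchanged
```

Leaving a model with dropout in training mode during testing would make its predictions random from call to call, which is why the switch matters.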

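Batch normalization layers also behave differently in training and evaluation. During training, BatchNorm normalizes with the mean and variance computed from the current batch. During testing or evaluation, it should normalize with the mean and variance accumulated during training, so the model has to be switched to evaluation mode here as well.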
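The difference can be sketched for a single feature in plain Python. This is a simplified illustration, not PyTorch's implementation: the class `TinyBatchNorm` is invented for this note, it omits the learnable scale and shift, and it uses a momentum of 0.1 and an epsilon of 1e-5 to match the defaults of PyTorch's batch norm layers.

```python
def batch_stats(batch):
    """Mean and biased variance of a list of floats."""
    mean = sum(batch) / len(batch)
    var = sum((x - mean) ** 2 for x in batch) / len(batch)
    return mean, var


class TinyBatchNorm:
    """One-feature batch norm without learnable scale and shift."""

    def __init__(self, momentum=0.1, eps=1e-5):
        self.momentum, self.eps = momentum, eps
        self.running_mean, self.running_var = 0.0, 1.0
        self.training = True

    def __call__(self, batch):
        if self.training:
            # Training: normalize with this batch's statistics ...
            mean, var = batch_stats(batch)
            n = len(batch)  # needs n > 1
            unbiased = var * n / (n - 1)
            # ... and update the running estimates used later in eval mode
            m = self.momentum
            self.running_mean = (1 - m) * self.running_mean + m * mean
            self.running_var = (1 - m) * self.running_var + m * unbiased
        else:
            # Evaluation: normalize with the accumulated running statistics
            mean, var = self.running_mean, self.running_var
        return [(x - mean) / (var + self.eps) ** 0.5 for x in batch]


bn = TinyBatchNorm()
batch = [2.0, 4.0, 6.0, 8.0]

train_out = bn(batch)  # uses batch mean 5.0 and batch variance 5.0
bn.training = False
eval_out = bn(batch)   # uses running_mean 0.5 and the updated running_var

print(train_out)
print(eval_out)
```

The same input gives different outputs in the two modes, and in evaluation mode the result no longer depends on which other samples happen to share the batch.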

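So the network still runs if you never switch modes with train() and eval(), but a model that contains such layers may then perform worse than it should, especially at test time. MyNet in these notes has no Dropout or BatchNorm layers, which is why leaving the calls out makes no visible difference here. Calling them anyway is a good habit.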