This code runs Spark in local mode. After obtaining spark.sqlContext, using sqlContext to convert an RDD into a DataFrame fails with a NullPointerException:

Exception in thread "main" org.apache.spark.sql.AnalysisException: java.lang.RuntimeException: java.lang.NullPointerException;
    at org.apache.spark.sql.hive.HiveExternalCatalog.withClient(HiveExternalCatalog.scala:106)
    at org.apache.spark.sql.hive.HiveExternalCatalog.databaseExists(HiveExternalCatalog.scala:194)
    at org.apache.spark.sql.internal.SharedState.externalCatalog$lzycompute(SharedState.scala:114)
    at org.apache.spark.sql.internal.SharedState.externalCatalog(SharedState.scala:102)
    at org.apache.spark.sql.hive.HiveSessionStateBuilder.externalCatalog(HiveSessionStateBuilder.scala:39)
    at org.apache.spark.sql.hive.HiveSessionStateBuilder.catalog$lzycompute(HiveSessionStateBuilder.scala:54)
    at org.apache.spark.sql.hive.HiveSessionStateBuilder.catalog(HiveSessionStateBuilder.scala:52)
    at org.apache.spark.sql.hive.HiveSessionStateBuilder$$anon$1.<init>(HiveSessionStateBuilder.scala:69)
    at org.apache.spark.sql.hive.HiveSessionStateBuilder.analyzer(HiveSessionStateBuilder.scala:69)
    at org.apache.spark.sql.internal.BaseSessionStateBuilder$$anonfun$build$2.apply(BaseSessionStateBuilder.scala:293)
    at org.apache.spark.sql.internal.BaseSessionStateBuilder$$anonfun$build$2.apply(BaseSessionStateBuilder.scala:293)
    at org.apache.spark.sql.internal.SessionState.analyzer$lzycompute(SessionState.scala:79)
    at org.apache.spark.sql.internal.SessionState.analyzer(SessionState.scala:79)
    at org.apache.spark.sql.execution.QueryExecution.analyzed$lzycompute(QueryExecution.scala:57)
    at org.apache.spark.sql.execution.QueryExecution.analyzed(QueryExecution.scala:55)
    at org.apache.spark.sql.execution.QueryExecution.assertAnalyzed(QueryExecution.scala:47)
    at org.apache.spark.sql.Dataset$.ofRows(Dataset.scala:74)
    at org.apache.spark.sql.SparkSession.createDataFrame(SparkSession.scala:300)
    at org.apache.spark.sql.SQLContext.createDataFrame(SQLContext.scala:272)
    at cn.itcast.xc.dimen.AreaDimInsert$.main(AreaDimInsert.scala:39)
    at cn.itcast.xc.dimen.AreaDimInsert.main(AreaDimInsert.scala)

Caused by: java.lang.RuntimeException: java.lang.NullPointerException
    at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:522)
    at org.apache.spark.sql.hive.client.HiveClientImpl.newState(HiveClientImpl.scala:180)
    at org.apache.spark.sql.hive.client.HiveClientImpl.<init>(HiveClientImpl.scala:114)
    at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)
    at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)
    at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)
    at java.lang.reflect.Constructor.newInstance(Constructor.java:423)
    at org.apache.spark.sql.hive.client.IsolatedClientLoader.createClient(IsolatedClientLoader.scala:264)
    at org.apache.spark.sql.hive.HiveUtils$.newClientForMetadata(HiveUtils.scala:385)
    at org.apache.spark.sql.hive.HiveUtils$.newClientForMetadata(HiveUtils.scala:287)
    at org.apache.spark.sql.hive.HiveExternalCatalog.client$lzycompute(HiveExternalCatalog.scala:66)
    at org.apache.spark.sql.hive.HiveExternalCatalog.client(HiveExternalCatalog.scala:65)
    at org.apache.spark.sql.hive.HiveExternalCatalog$$anonfun$databaseExists$1.apply$mcZ$sp(HiveExternalCatalog.scala:195)
    at org.apache.spark.sql.hive.HiveExternalCatalog$$anonfun$databaseExists$1.apply(HiveExternalCatalog.scala:195)
    at org.apache.spark.sql.hive.HiveExternalCatalog$$anonfun$databaseExists$1.apply(HiveExternalCatalog.scala:195)
    at org.apache.spark.sql.hive.HiveExternalCatalog.withClient(HiveExternalCatalog.scala:97)
    ... 20 more

Caused by: java.lang.NullPointerException
    at java.lang.ProcessBuilder.start(ProcessBuilder.java:1012)
    at org.apache.hadoop.util.Shell.runCommand(Shell.java:482)
    at org.apache.hadoop.util.Shell.run(Shell.java:455)
    at org.apache.hadoop.util.Shell$ShellCommandExecutor.execute(Shell.java:715)
    at org.apache.hadoop.util.Shell.execCommand(Shell.java:808)
    at org.apache.hadoop.util.Shell.execCommand(Shell.java:791)
    at org.apache.hadoop.fs.RawLocalFileSystem.setPermission(RawLocalFileSystem.java:656)
    at org.apache.hadoop.fs.RawLocalFileSystem.mkdirs(RawLocalFileSystem.java:444)
    at org.apache.hadoop.fs.FilterFileSystem.mkdirs(FilterFileSystem.java:293)
    at org.apache.hadoop.hive.ql.session.SessionState.createPath(SessionState.java:639)
    at org.apache.hadoop.hive.ql.session.SessionState.createSessionDirs(SessionState.java:567)
    at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:508)
    ... 35 more

Neither sqlContext nor area is null, so this error is fairly hard to track down.

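It turns out that this error occurs when the code runs in local mode on Windows and the HADOOP_HOME environment variable is not configured. The innermost "Caused by" trace is consistent with this: the NullPointerException comes from ProcessBuilder.start, called by Hadoop's Shell.runCommand while RawLocalFileSystem.setPermission is setting permissions on the Hive session directory, a step that on Windows depends on winutils.exe.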
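To confirm this diagnosis before changing anything, a small standalone check can help. The following is only a sketch under stated assumptions: the class name `WinutilsCheck` and its two methods are hypothetical and not part of the original project. It follows the lookup order used by Hadoop's `Shell` class (the `hadoop.home.dir` system property first, then the `HADOOP_HOME` environment variable) and then looks for `bin\winutils.exe` under that directory.

```java
import java.io.File;

// Hypothetical helper, not from the original project: reports whether the JVM
// can see a Hadoop home directory that contains bin\winutils.exe.
public class WinutilsCheck {

    // Returns the Hadoop home to use: the system property wins, then the
    // environment variable; null means neither is configured.
    public static String resolveHadoopHome(String sysProp, String envVar) {
        if (sysProp != null) {
            return sysProp;
        }
        return envVar;
    }

    // True only if <hadoopHome>/bin/winutils.exe exists as a regular file.
    public static boolean hasWinutils(String hadoopHome) {
        if (hadoopHome == null) {
            return false;
        }
        File winutils = new File(new File(hadoopHome, "bin"), "winutils.exe");
        return winutils.isFile();
    }

    public static void main(String[] args) {
        String home = resolveHadoopHome(
                System.getProperty("hadoop.home.dir"),
                System.getenv("HADOOP_HOME"));
        if (home == null) {
            System.out.println("Neither hadoop.home.dir nor HADOOP_HOME is set");
        } else if (!hasWinutils(home)) {
            System.out.println("No bin\\winutils.exe found under " + home);
        } else {
            System.out.println("winutils.exe found under " + home);
        }
    }
}
```

Run it from the same IDE run configuration as the Spark job, since an environment variable added after the IDE started is usually not visible until the IDE is restarted.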

The solution is given directly below.

Case 1: No Hadoop environment

First, prepare winutils.

Download:

Link: https://pan.baidu.com/s/17Oy_CHoHBFYGk3-fCo8bJw

Extraction code: jco5

Set the HADOOP_HOME environment variable to the path of the extracted winutils directory.

Restart IDEA and run the code again; this should resolve the problem above.

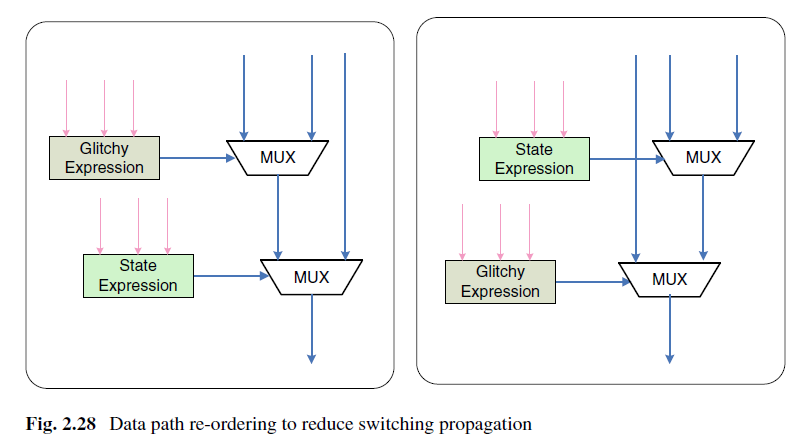
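If changing the system environment variables is inconvenient, a commonly used alternative is to set the `hadoop.home.dir` Java system property at the very start of the program, before any Spark or Hadoop class is loaded, because Hadoop reads it once when its `Shell` class initializes. The sketch below is illustrative only: the class name `HadoopHomeSetup`, the method `useHadoopHome`, and the path `C:\hadoop` are assumptions, so substitute the directory where winutils was actually extracted.

```java
import java.io.File;

// Hypothetical helper, not from the original project: points Hadoop at a local
// directory that contains bin\winutils.exe via the hadoop.home.dir property.
public class HadoopHomeSetup {

    // Sets hadoop.home.dir only when <hadoopHome>/bin/winutils.exe exists.
    // Returns true if the property was set, false otherwise.
    public static boolean useHadoopHome(String hadoopHome) {
        if (hadoopHome == null) {
            return false;
        }
        File winutils = new File(new File(hadoopHome, "bin"), "winutils.exe");
        if (!winutils.isFile()) {
            return false;
        }
        System.setProperty("hadoop.home.dir", new File(hadoopHome).getAbsolutePath());
        return true;
    }

    public static void main(String[] args) {
        // Placeholder path (assumption): replace with the real winutils directory.
        String candidate = args.length > 0 ? args[0] : "C:\\hadoop";
        if (useHadoopHome(candidate)) {
            System.out.println("hadoop.home.dir = " + System.getProperty("hadoop.home.dir"));
        } else {
            System.out.println("bin\\winutils.exe not found under " + candidate);
        }
        // Create the SparkSession only after this point.
    }
}
```

From the Scala job, the equivalent would be a single `HadoopHomeSetup.useHadoopHome(...)` call as the first statement of `main`. This affects only the current JVM, so it needs no IDE restart, but it also hard-codes a machine-specific path unless the path is passed in as an argument.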

Case 2: Hadoop environment already present

Confirm that the HADOOP_HOME environment variable is configured correctly.

Copy winutils.exe into the bin directory under HADOOP_HOME, as shown below:

Environment variable configuration:

Directory structure: