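For a reference example of Binder communication implemented in C++, see the companion post "binder通讯 c++源码" (Binder communication C++ source code). This article analyzes the server side, that is, what the test_server process does.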

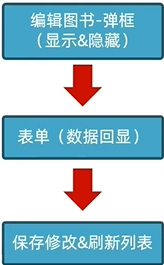

The server-side flow is as follows:

    sp<ProcessState> proc(ProcessState::self());//1
    sp<IServiceManager> sm = defaultServiceManager();//2
    sm->addService(String16("hello"), new BnHelloService());//3
    ProcessState::self()->startThreadPool();//4
    IPCThreadState::self()->joinThreadPool();//5

Each line is analyzed below.

1, ProcessState::self()

    //frameworks\native\libs\binder\ProcessState.cpp
    sp<ProcessState> ProcessState::self()
    {
        Mutex::Autolock _l(gProcessMutex);
        if (gProcess != NULL) {
            return gProcess;
        }
        gProcess = new ProcessState;
        return gProcess;
    }

ProcessState is a singleton: each process has exactly one ProcessState object. If gProcess is not null it is returned directly; otherwise a new ProcessState object is created, stored in gProcess, and returned.
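The mutex-guarded singleton above can be reproduced outside Android with a minimal sketch. Everything here is an assumption for illustration: `DemoProcessState` is a hypothetical stand-in for ProcessState, and `std::mutex` / `std::shared_ptr` replace the `Mutex` and `sp<>` types that libbinder uses.

```cpp
#include <memory>
#include <mutex>

// Hypothetical stand-in for ProcessState; not the real libbinder class.
class DemoProcessState {
public:
    // Returns the single per-process instance, creating it on first use.
    static std::shared_ptr<DemoProcessState> self() {
        std::lock_guard<std::mutex> lock(mutex());  // plays the role of Mutex::Autolock
        std::shared_ptr<DemoProcessState>& inst = instance();
        if (inst != nullptr) {
            return inst;
        }
        inst.reset(new DemoProcessState());
        return inst;
    }

    int driverFd() const { return mDriverFD; }

private:
    // The real constructor opens /dev/binder here; the sketch only stores -1.
    DemoProcessState() : mDriverFD(-1) {}

    static std::mutex& mutex() {
        static std::mutex m;
        return m;
    }
    static std::shared_ptr<DemoProcessState>& instance() {
        static std::shared_ptr<DemoProcessState> p;
        return p;
    }

    int mDriverFD;
};
```

Calling `DemoProcessState::self()` any number of times, from any thread, yields the same object, which is the property the rest of the analysis relies on.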

    //frameworks\native\libs\binder\ProcessState.cpp
    ProcessState::ProcessState()
        : mDriverFD(open_driver()) //1
        , mVMStart(MAP_FAILED)
        , mThreadCountLock(PTHREAD_MUTEX_INITIALIZER)
        , mThreadCountDecrement(PTHREAD_COND_INITIALIZER)
        , mExecutingThreadsCount(0)
        , mMaxThreads(DEFAULT_MAX_BINDER_THREADS)
        , mStarvationStartTimeMs(0)
        , mManagesContexts(false)
        , mBinderContextCheckFunc(NULL)
        , mBinderContextUserData(NULL)
        , mThreadPoolStarted(false)
        , mThreadPoolSeq(1)
    {
        if (mDriverFD >= 0) {
            // mmap the binder, providing a chunk of virtual address space to receive transactions.
            mVMStart = mmap(0, BINDER_VM_SIZE, PROT_READ, MAP_PRIVATE | MAP_NORESERVE, mDriverFD, 0);//2
            if (mVMStart == MAP_FAILED) {
                // *sigh*
                ALOGE("Using /dev/binder failed: unable to mmap transaction memory.\n");
                close(mDriverFD);
                mDriverFD = -1;
            }
        }
        LOG_ALWAYS_FATAL_IF(mDriverFD < 0, "Binder driver could not be opened. Terminating.");
    }

Comment 1 calls open_driver and stores the returned value in mDriverFD. Comment 2 maps the memory region used to receive transactions.

    //frameworks\native\libs\binder\ProcessState.cpp
    static int open_driver()
    {
        int fd = open("/dev/binder", O_RDWR | O_CLOEXEC);//1
        if (fd >= 0) {
            int vers = 0;
            status_t result = ioctl(fd, BINDER_VERSION, &vers);
            if (result == -1) {
                ALOGE("Binder ioctl to obtain version failed: %s", strerror(errno));
                close(fd);
                fd = -1;
            }
            if (result != 0 || vers != BINDER_CURRENT_PROTOCOL_VERSION) {
                ALOGE("Binder driver protocol does not match user space protocol!");
                close(fd);
                fd = -1;
            }
            size_t maxThreads = DEFAULT_MAX_BINDER_THREADS;
            result = ioctl(fd, BINDER_SET_MAX_THREADS, &maxThreads);//2
            if (result == -1) {
                ALOGE("Binder ioctl to set max threads failed: %s", strerror(errno));
            }
        } else {
            ALOGW("Opening '/dev/binder' failed: %s\n", strerror(errno));
        }
        return fd;
    }

As can be seen, comment 1 opens the binder driver, which causes the driver to create a binder_proc for this process; for the open flow, see "binder 驱动情景分析-注册服务" (Binder driver scenario analysis: registering a service). Comment 2 sets the maximum number of threads, which is what enables multithreaded handling.

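Each process has only one ProcessState object, and its mDriverFD is the fd returned by opening the binder driver. In summary, ProcessState::self() mainly does the following:

- Opens the binder driver and stores the returned fd in mDriverFD

- Sets the maximum number of threads

- Maps memory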

2, sp<IServiceManager> sm = defaultServiceManager()

This function obtains the BpServiceManager object.

    //frameworks\native\libs\binder\IServiceManager.cpp
    sp<IServiceManager> defaultServiceManager()
    {
        if (gDefaultServiceManager != NULL) return gDefaultServiceManager;
        {
            AutoMutex _l(gDefaultServiceManagerLock);
            while (gDefaultServiceManager == NULL) {
                gDefaultServiceManager = interface_cast<IServiceManager>(
                    ProcessState::self()->getContextObject(NULL));//1
                if (gDefaultServiceManager == NULL)
                    sleep(1);
            }
        }
        return gDefaultServiceManager;
    }

This is also a singleton. gDefaultServiceManager is obtained by the call at comment 1, so first look at what ProcessState::self()->getContextObject(NULL) returns.

    //frameworks\native\libs\binder\ProcessState.cpp
    sp<IBinder> ProcessState::getContextObject(const sp<IBinder>& /*caller*/)
    {
        return getStrongProxyForHandle(0);
    }

It simply calls getStrongProxyForHandle. Note that the argument passed in is 0.

    //frameworks\native\libs\binder\ProcessState.cpp
    sp<IBinder> ProcessState::getStrongProxyForHandle(int32_t handle)
    {
        sp<IBinder> result;
        AutoMutex _l(mLock);
        handle_entry* e = lookupHandleLocked(handle);
        if (e != NULL) {
            // We need to create a new BpBinder if there isn't currently one, OR we
            // are unable to acquire a weak reference on this current one. See comment
            // in getWeakProxyForHandle() for more info about this.
            IBinder* b = e->binder;
            if (b == NULL || !e->refs->attemptIncWeak(this)) {
                if (handle == 0) {
                    Parcel data;
                    status_t status = IPCThreadState::self()->transact(
                            0, IBinder::PING_TRANSACTION, data, NULL, 0);
                    if (status == DEAD_OBJECT)
                        return NULL;
                }
                b = new BpBinder(handle); //1
                // ... omitted

On the first call there is no cached proxy for this handle, so comment 1 creates a new BpBinder, passing in handle 0.

    //frameworks\native\libs\binder\BpBinder.cpp
    BpBinder::BpBinder(int32_t handle)
        : mHandle(handle)//1
        , mAlive(1)
        , mObitsSent(0)
        , mObituaries(NULL)
    {
        ALOGV("Creating BpBinder %p handle %d\n", this, mHandle);
        extendObjectLifetime(OBJECT_LIFETIME_WEAK);
        IPCThreadState::self()->incWeakHandle(handle);
    }

Comment 1 stores the handle that was passed in into mHandle, so its value is 0.

Therefore ProcessState::self()->getContextObject(NULL) returns a BpBinder object whose mHandle is 0.

Next, look at interface_cast<IServiceManager>(ProcessState::self()->getContextObject(NULL)).

    //frameworks\native\include\binder\IInterface.h
    template<typename INTERFACE>
    inline sp<INTERFACE> interface_cast(const sp<IBinder>& obj)
    {
        return INTERFACE::asInterface(obj);
    }

INTERFACE is IServiceManager, so this is equivalent to calling IServiceManager::asInterface(obj), where obj is the BpBinder object obtained above, with mHandle equal to 0.

asInterface is not written by hand; it is generated by the IMPLEMENT_META_INTERFACE macro.

    //frameworks\native\libs\binder\IServiceManager.cpp
    IMPLEMENT_META_INTERFACE(ServiceManager, "android.os.IServiceManager");

    //frameworks\native\include\binder\IInterface.h
    #define IMPLEMENT_META_INTERFACE(INTERFACE, NAME) \
        const android::String16 I##INTERFACE::descriptor(NAME); \
        const android::String16& \
                I##INTERFACE::getInterfaceDescriptor() const { \
            return I##INTERFACE::descriptor; \
        } \
        android::sp<I##INTERFACE> I##INTERFACE::asInterface( \
                const android::sp<android::IBinder>& obj) \
        { \
            android::sp<I##INTERFACE> intr; \
            if (obj != NULL) { \
                intr = static_cast<I##INTERFACE*>( \
                    obj->queryLocalInterface( \
                            I##INTERFACE::descriptor).get()); \
                if (intr == NULL) { \
                    intr = new Bp##INTERFACE(obj); /* 1 */ \
                } \
            } \
            return intr; \
        } \
        I##INTERFACE::I##INTERFACE() { } \
        I##INTERFACE::~I##INTERFACE() { }

With INTERFACE replaced by ServiceManager, and because obj is a BpBinder for which queryLocalInterface returns NULL, comment 1 creates and returns a BpServiceManager object. obj is the BpBinder object passed in earlier.

    //frameworks\native\libs\binder\IServiceManager.cpp
    BpServiceManager(const sp<IBinder>& impl)
        : BpInterface<IServiceManager>(impl)
    {
    }

    //frameworks\native\include\binder\IInterface.h
    template<typename INTERFACE>
    inline BpInterface<INTERFACE>::BpInterface(const sp<IBinder>& remote)
        : BpRefBase(remote)
    {
    }

    //frameworks\native\libs\binder\Binder.cpp
    BpRefBase::BpRefBase(const sp<IBinder>& o)
        : mRemote(o.get()), mRefs(NULL), mState(0)
    {
        // ... omitted

BpServiceManager inherits from BpInterface, which in turn inherits from BpRefBase. The constructor chain makes mRemote point to the BpBinder object whose mHandle is 0.

So defaultServiceManager returns a BpServiceManager object whose mRemote points to a BpBinder object with mHandle equal to 0. In Binder communication, mHandle identifies the target of the call, and 0 means the target is servicemanager.
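The proxy construction traced in this section can be modelled with a short self-contained sketch. All types below (`DemoBinder`, `DemoBpBinder`, `DemoServiceManager`, `DemoBpServiceManager`) are hypothetical, simplified stand-ins for the libbinder classes, and `std::shared_ptr` replaces `sp<>`; the sketch only illustrates the "use the local implementation if there is one, otherwise wrap the binder in a proxy" decision.

```cpp
#include <cstdint>
#include <memory>
#include <string>
#include <utility>

// Simplified, hypothetical stand-ins for the libbinder classes.
struct DemoInterface {
    virtual ~DemoInterface() = default;
};

struct DemoBinder {
    virtual ~DemoBinder() = default;
    // A remote proxy has no local implementation, so the default is nullptr.
    virtual DemoInterface* queryLocalInterface(const std::string&) { return nullptr; }
};

// Plays the role of BpBinder: it only remembers which handle to talk to.
struct DemoBpBinder : DemoBinder {
    explicit DemoBpBinder(std::int32_t handle) : mHandle(handle) {}
    std::int32_t mHandle;
};

struct DemoServiceManager : DemoInterface {
    virtual bool isProxy() const = 0;
    static std::shared_ptr<DemoServiceManager> asInterface(
            const std::shared_ptr<DemoBinder>& obj);
};

// Plays the role of BpServiceManager: mRemote points at the proxy binder.
struct DemoBpServiceManager : DemoServiceManager {
    explicit DemoBpServiceManager(std::shared_ptr<DemoBinder> remote)
        : mRemote(std::move(remote)) {}
    bool isProxy() const override { return true; }
    std::shared_ptr<DemoBinder> mRemote;
};

// Same decision as the macro-generated asInterface.
std::shared_ptr<DemoServiceManager> DemoServiceManager::asInterface(
        const std::shared_ptr<DemoBinder>& obj) {
    if (!obj) return nullptr;
    DemoInterface* local = obj->queryLocalInterface("android.os.IServiceManager");
    if (local != nullptr) {
        // Same-process case: share ownership with obj, point at the local object.
        return std::shared_ptr<DemoServiceManager>(
                obj, static_cast<DemoServiceManager*>(local));
    }
    return std::make_shared<DemoBpServiceManager>(obj);
}

// Mirrors defaultServiceManager(): wrap a proxy binder for handle 0.
std::shared_ptr<DemoServiceManager> demoDefaultServiceManager() {
    return DemoServiceManager::asInterface(std::make_shared<DemoBpBinder>(0));
}
```

`demoDefaultServiceManager()` yields a proxy whose `mRemote` is a `DemoBpBinder` with handle 0, matching the conclusion reached above for the real classes.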

3, sm->addService(String16("hello"), new BnHelloService())

This call registers the service. From the analysis above, sm is a BpServiceManager object, so this calls BpServiceManager's addService function.

    //frameworks\native\libs\binder\IServiceManager.cpp
    virtual status_t addService(const String16& name, const sp<IBinder>& service,
            bool allowIsolated)
    {
        Parcel data, reply;
        data.writeInterfaceToken(IServiceManager::getInterfaceDescriptor());
        data.writeString16(name);//1
        data.writeStrongBinder(service);//2
        data.writeInt32(allowIsolated ? 1 : 0);
        status_t err = remote()->transact(ADD_SERVICE_TRANSACTION, data, &reply);//3
        return err == NO_ERROR ? reply.readExceptionCode() : err;
    }

Comment 1 writes the name of the service being registered (hello). Comment 2 writes the service entity, here the BnHelloService object. Comment 3 issues the remote call: remote() returns mRemote, and mRemote points to the BpBinder object whose mHandle is 0, so comment 3 calls BpBinder's transact function.

First look at the writeStrongBinder function at comment 2. writeStrongBinder calls flatten_binder to do the work.

    //frameworks\native\libs\binder\Parcel.cpp
    status_t flatten_binder(const sp<ProcessState>& /*proc*/,
        const sp<IBinder>& binder, Parcel* out)
    {
        flat_binder_object obj;
        obj.flags = 0x7f | FLAT_BINDER_FLAG_ACCEPTS_FDS;
        if (binder != NULL) {
            IBinder *local = binder->localBinder();
            if (!local) {
                BpBinder *proxy = binder->remoteBinder();
                if (proxy == NULL) {
                    ALOGE("null proxy");
                }
                const int32_t handle = proxy ? proxy->handle() : 0;
                obj.type = BINDER_TYPE_HANDLE;
                obj.binder = 0; /* Don't pass uninitialized stack data to a remote process */
                obj.handle = handle;
                obj.cookie = 0;
            } else {
                obj.type = BINDER_TYPE_BINDER;
                obj.binder = reinterpret_cast<uintptr_t>(local->getWeakRefs());
                obj.cookie = reinterpret_cast<uintptr_t>(local);//1
            }
        } else {
            obj.type = BINDER_TYPE_BINDER;
            obj.binder = 0;
            obj.cookie = 0;
        }
        return finish_flatten_binder(binder, obj, out);
    }

As can be seen, just as when using binder from C, registering a service builds a flat_binder_object structure whose type is BINDER_TYPE_BINDER, and the address of the BnHelloService object is stored in its cookie. Later, test_server uses this cookie to decide which service's methods to call.
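The local-versus-proxy decision made by flatten_binder can be tried out in isolation with the following sketch. The names (`DemoFlatObject`, `DemoIBinder`, `DemoLocal`, `DemoRemote`, `demoFlatten`) and the enum values are hypothetical; only the branching mirrors the code above.

```cpp
#include <cstdint>

// Arbitrary values for the sketch; the real constants come from the binder header.
enum DemoType : std::uint32_t { DEMO_TYPE_BINDER = 1, DEMO_TYPE_HANDLE = 2 };

// Reduced, hypothetical version of flat_binder_object.
struct DemoFlatObject {
    DemoType type;
    std::uint32_t handle;
    std::uintptr_t cookie;
};

struct DemoLocal;
struct DemoRemote;

struct DemoIBinder {
    virtual ~DemoIBinder() = default;
    virtual DemoLocal* localBinder() { return nullptr; }
    virtual DemoRemote* remoteBinder() { return nullptr; }
};

// Plays the role of a BBinder-derived service such as BnHelloService.
struct DemoLocal : DemoIBinder {
    DemoLocal* localBinder() override { return this; }
};

// Plays the role of BpBinder.
struct DemoRemote : DemoIBinder {
    explicit DemoRemote(std::uint32_t h) : handle(h) {}
    DemoRemote* remoteBinder() override { return this; }
    std::uint32_t handle;
};

// A local object is sent as its address (cookie); a proxy is sent as its handle.
DemoFlatObject demoFlatten(DemoIBinder* binder) {
    DemoFlatObject obj{DEMO_TYPE_BINDER, 0, 0};
    if (binder == nullptr) return obj;
    if (DemoLocal* local = binder->localBinder()) {
        obj.cookie = reinterpret_cast<std::uintptr_t>(local);
    } else {
        DemoRemote* proxy = binder->remoteBinder();
        obj.type = DEMO_TYPE_HANDLE;
        obj.handle = proxy ? proxy->handle : 0;
    }
    return obj;
}
```

Flattening a `DemoLocal` produces a BINDER-type object whose cookie is the object's own address, which is the value the server gets back later when a request for that service arrives.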

Next, look at the transact function.

    //frameworks\native\libs\binder\BpBinder.cpp
    status_t BpBinder::transact(
        uint32_t code, const Parcel& data, Parcel* reply, uint32_t flags)
    {
        // Once a binder has died, it will never come back to life.
        if (mAlive) {
            status_t status = IPCThreadState::self()->transact(
                mHandle, code, data, reply, flags);
            if (status == DEAD_OBJECT) mAlive = 0;
            return status;
        }
        return DEAD_OBJECT;
    }

IPCThreadState::self() creates the IPCThreadState object and uses thread-specific data to guarantee that each thread has exactly one IPCThreadState object. Its transact function is then called. Note that the first argument is mHandle, whose value is 0.

    status_t IPCThreadState::transact(int32_t handle,
                                      uint32_t code, const Parcel& data,
                                      Parcel* reply, uint32_t flags)
    {
        // ... omitted
        if (err == NO_ERROR) {
            LOG_ONEWAY(">>>> SEND from pid %d uid %d %s", getpid(), getuid(),
                (flags & TF_ONE_WAY) == 0 ? "READ REPLY" : "ONE WAY");
            err = writeTransactionData(BC_TRANSACTION, flags, handle, code, data, NULL);//1
        }
        if ((flags & TF_ONE_WAY) == 0) {
            if (reply) {
                err = waitForResponse(reply);
            } else {
                Parcel fakeReply;
                err = waitForResponse(&fakeReply);//2
            }
        } else {
            err = waitForResponse(NULL, NULL);
        }
        return err;
    }

1, writeTransactionData is called to build the data; handle is 0

    status_t IPCThreadState::writeTransactionData(int32_t cmd, uint32_t binderFlags,
        int32_t handle, uint32_t code, const Parcel& data, status_t* statusBuffer)
    {
        binder_transaction_data tr;
        tr.target.ptr = 0; /* Don't pass uninitialized stack data to a remote process */
        tr.target.handle = handle; // handle is 0, meaning the target is servicemanager
        tr.code = code;
        tr.flags = binderFlags;
        tr.cookie = 0;
        tr.sender_pid = 0;
        tr.sender_euid = 0;
        const status_t err = data.errorCheck();
        if (err == NO_ERROR) {
            tr.data_size = data.ipcDataSize();
            tr.data.ptr.buffer = data.ipcData();
            tr.offsets_size = data.ipcObjectsCount()*sizeof(binder_size_t);
            tr.data.ptr.offsets = data.ipcObjects();
        } else if (statusBuffer) {
            tr.flags |= TF_STATUS_CODE;
            *statusBuffer = err;
            tr.data_size = sizeof(status_t);
            tr.data.ptr.buffer = reinterpret_cast<uintptr_t>(statusBuffer);
            tr.offsets_size = 0;
            tr.data.ptr.offsets = 0;
        } else {
            return (mLastError = err);
        }
        mOut.writeInt32(cmd);
        mOut.write(&tr, sizeof(tr));
        return NO_ERROR;
    }

2, waitForResponse is called to send the data and wait for the reply

    status_t IPCThreadState::waitForResponse(Parcel *reply, status_t *acquireResult)
    {
        uint32_t cmd;
        int32_t err;
        while (1) {
            if ((err=talkWithDriver()) < NO_ERROR) break; //1
            err = mIn.errorCheck();
            if (err < NO_ERROR) break;
            if (mIn.dataAvail() == 0) continue;
            cmd = (uint32_t)mIn.readInt32();//2
            switch (cmd) {
            case BR_TRANSACTION_COMPLETE:
                if (!reply && !acquireResult) goto finish;
                break;
            // ... omitted

Comment 1 writes the data to the binder driver through ioctl. From comment 2 onward, the code handles each received cmd according to its value.

    status_t IPCThreadState::talkWithDriver(bool doReceive)
    {
        // ... omitted
        if (ioctl(mProcess->mDriverFD, BINDER_WRITE_READ, &bwr) >= 0)
            err = NO_ERROR;
        // ... omitted

As can be seen, the core flow of registering a service in C++ is the same as in C: build the data, with a handle value of 0 and a flat_binder_object whose type is BINDER_TYPE_BINDER, then write the data to the binder driver through ioctl.
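The "command word followed by a transaction struct" framing that writeTransactionData appends to mOut can be illustrated with a small sketch. `DemoTransactionData` is a hypothetical, heavily reduced struct, `DEMO_BC_TRANSACTION` is an arbitrary value, and a `std::vector` stands in for the outgoing Parcel; none of these match the real binder ABI.

```cpp
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <vector>

// Reduced, hypothetical layout; the real binder_transaction_data has more fields.
struct DemoTransactionData {
    std::uint32_t handle;  // 0 addresses servicemanager
    std::uint32_t code;
    std::uint32_t flags;
};

const std::uint32_t DEMO_BC_TRANSACTION = 1;  // arbitrary value for the sketch

// Append raw bytes to the outgoing buffer, similar in spirit to mOut.write().
void demoAppend(std::vector<unsigned char>& out, const void* src, std::size_t len) {
    const unsigned char* p = static_cast<const unsigned char*>(src);
    out.insert(out.end(), p, p + len);
}

// Write the command word first, then the transaction struct.
void demoWriteTransaction(std::vector<unsigned char>& out, std::uint32_t handle,
                          std::uint32_t code, std::uint32_t flags) {
    DemoTransactionData tr{handle, code, flags};
    demoAppend(out, &DEMO_BC_TRANSACTION, sizeof(DEMO_BC_TRANSACTION));
    demoAppend(out, &tr, sizeof(tr));
}

// The receiving side reads the command word, then the struct that follows it.
bool demoReadTransaction(const std::vector<unsigned char>& in, std::uint32_t* cmd,
                         DemoTransactionData* tr) {
    if (in.size() < sizeof(*cmd) + sizeof(*tr)) return false;
    std::memcpy(cmd, in.data(), sizeof(*cmd));
    std::memcpy(tr, in.data() + sizeof(*cmd), sizeof(*tr));
    return true;
}
```

In the real code the buffer is handed to the driver with a single BINDER_WRITE_READ ioctl; the sketch stops at the framing step.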

4, ProcessState::self()->startThreadPool()

This creates a child thread. startThreadPool goes on to call spawnPooledThread.

    //frameworks\native\libs\binder\ProcessState.cpp
    void ProcessState::spawnPooledThread(bool isMain)
    {
        if (mThreadPoolStarted) {
            String8 name = makeBinderThreadName();
            ALOGV("Spawning new pooled thread, name=%s\n", name.string());
            sp<Thread> t = new PoolThread(isMain);
            t->run(name.string());
        }
    }

PoolThread inherits from Thread. Calling run causes a child thread to be created internally with pthread_create, and that thread calls threadLoop.

    //frameworks\native\libs\binder\ProcessState.cpp
    virtual bool threadLoop()
    {
        IPCThreadState::self()->joinThreadPool(mIsMain);
        return false;
    }

    //frameworks\native\libs\binder\IPCThreadState.cpp
    void IPCThreadState::joinThreadPool(bool isMain)
    {
        // ... omitted
        do {
            processPendingDerefs();
            // now get the next command to be processed, waiting if necessary
            result = getAndExecuteCommand();// fetch and handle
            // ... omitted
        } while (result != -ECONNREFUSED && result != -EBADF);
        // ... omitted
    }

As can be seen, joinThreadPool puts the thread into a loop that reads data and handles it.

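    status_t IPCThreadState::getAndExecuteCommand()
    {
        status_t result;
        int32_t cmd;
        result = talkWithDriver();//1
        if (result >= NO_ERROR) {
            // ... omitted
            result = executeCommand(cmd);//2
            // ... omitted
        }
    }

Comment 1 was analyzed earlier: it talks to the binder driver through ioctl, and here it obtains the data returned by the driver. Comment 2 handles the data; this function is not analyzed for now.

So startThreadPool creates a child thread in the server process and has that thread loop, fetching data and handling it.

5, IPCThreadState::self()->joinThreadPool()

joinThreadPool was analyzed above: it makes a thread loop, fetching data and handling it. Here the thread is the main thread.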
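The combined effect of steps 4 and 5 (one spawned thread plus the main thread, both running the same fetch-and-handle loop) can be sketched with standard C++ threads. This is only a model under stated assumptions: `DemoDriver` is a hypothetical in-memory queue standing in for the binder driver, `DEMO_CMD_EXIT` is an invented shutdown command that the real loop does not have, and incrementing a counter stands in for executeCommand.

```cpp
#include <atomic>
#include <condition_variable>
#include <functional>
#include <mutex>
#include <queue>
#include <thread>

// Hypothetical stand-in for the binder driver: a queue that threads block on.
class DemoDriver {
public:
    void post(int cmd) {
        {
            std::lock_guard<std::mutex> lock(mMutex);
            mQueue.push(cmd);
        }
        mCond.notify_one();
    }

    // Blocks until a command is available, like a thread waiting inside ioctl.
    int waitForCommand() {
        std::unique_lock<std::mutex> lock(mMutex);
        mCond.wait(lock, [this] { return !mQueue.empty(); });
        int cmd = mQueue.front();
        mQueue.pop();
        return cmd;
    }

private:
    std::mutex mMutex;
    std::condition_variable mCond;
    std::queue<int> mQueue;
};

const int DEMO_CMD_EXIT = -1;  // invented for the sketch so the loop can end

// Same shape as joinThreadPool: loop, fetch a command, handle it.
void demoJoinThreadPool(DemoDriver& driver, std::atomic<int>& handled) {
    for (;;) {
        int cmd = driver.waitForCommand();
        if (cmd == DEMO_CMD_EXIT) break;
        ++handled;  // stands in for executeCommand(cmd)
    }
}

// Same shape as startThreadPool: spawn one extra thread running the loop.
std::thread demoStartThreadPool(DemoDriver& driver, std::atomic<int>& handled) {
    return std::thread(demoJoinThreadPool, std::ref(driver), std::ref(handled));
}
```

A caller would start the worker with `demoStartThreadPool`, then call `demoJoinThreadPool` on its own thread, so that two threads serve requests from the same queue, which is the arrangement test_server ends up with.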

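Summary

In C++, the core flow of the test_server process is likewise:

- Open the binder driver and map memory (each process has only one ProcessState object; the driver is opened and mapped when the ProcessState object is created)

- Register the service. Registration first obtains the BpServiceManager object; its mRemote points to a BpBinder object whose mHandle is 0

- Enter a loop and wait for data from clients, handling the data when it arrives (each thread has only one IPCThreadState object)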
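The "one IPCThreadState per thread" guarantee mentioned in the last item can be illustrated with a minimal sketch. Note the substitution: the article describes thread-specific data, while this sketch uses the C++11 `thread_local` keyword to get the same per-thread effect. `DemoThreadState` and `demoOtherThreadHasOwnState` are hypothetical names.

```cpp
#include <thread>

// Hypothetical stand-in for IPCThreadState.
class DemoThreadState {
public:
    // Each thread gets its own instance, created on the first call from that thread.
    static DemoThreadState* self() {
        thread_local DemoThreadState state;
        return &state;
    }

    int callCount = 0;

private:
    DemoThreadState() = default;
};

// Returns true if a second thread sees a different instance than the caller.
bool demoOtherThreadHasOwnState() {
    DemoThreadState* mine = DemoThreadState::self();
    bool differs = false;
    std::thread t([mine, &differs] {
        // Compare inside the thread, while its instance is still alive.
        differs = (DemoThreadState::self() != mine);
    });
    t.join();
    return differs;
}
```

Within one thread, repeated calls to `DemoThreadState::self()` return the same pointer, so per-thread buffers such as mIn and mOut never need locking.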

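For service registration, the call flow is shown in the following diagram:

One question remains: after test_server receives a request from a client, how does it end up calling the functions in BnHelloService?

As mentioned earlier, after IPCThreadState::getAndExecuteCommand receives data it calls executeCommand to handle it. For a data exchange, the cmd received is BR_TRANSACTION.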

    //frameworks\native\libs\binder\IPCThreadState.cpp
    status_t IPCThreadState::executeCommand(int32_t cmd)
    {
        switch ((uint32_t)cmd) {
        // ... omitted
        case BR_TRANSACTION:
            {
                binder_transaction_data tr;
                result = mIn.read(&tr, sizeof(tr));//1
                // ... omitted
                if (tr.target.ptr) {
                    // We only have a weak reference on the target object, so we must first try to
                    // safely acquire a strong reference before doing anything else with it.
                    if (reinterpret_cast<RefBase::weakref_type*>(
                            tr.target.ptr)->attemptIncStrong(this)) {
                        error = reinterpret_cast<BBinder*>(tr.cookie)->transact(tr.code, buffer,
                                &reply, tr.flags);//2
                        reinterpret_cast<BBinder*>(tr.cookie)->decStrong(this);
                    } else {
                        error = UNKNOWN_TRANSACTION;
                    }
                } else {
                    error = the_context_object->transact(tr.code, buffer, &reply, tr.flags);
                }
            }
            // ... omitted
        }
    }

Comment 1 reads out the binder_transaction_data. Comment 2 converts its cookie into a BBinder pointer and calls transact on it. When the service was registered, the address of the BnHelloService object was stored in the cookie; here that address is taken back out and treated as a BBinder, which works because BnHelloService inherits from BBinder.

    //frameworks\native\libs\binder\Binder.cpp
    status_t BBinder::transact(
        uint32_t code, const Parcel& data, Parcel* reply, uint32_t flags)
    {
        data.setDataPosition(0);
        status_t err = NO_ERROR;
        switch (code) {
            case PING_TRANSACTION:
                reply->writeInt32(pingBinder());
                break;
            default:
                err = onTransact(code, data, reply, flags);//1
                break;
        }
        if (reply != NULL) {
            reply->setDataPosition(0);
        }
        return err;
    }

For ordinary codes this goes straight to onTransact at comment 1. BnHelloService implements onTransact, so the call lands in BnHelloService's own functions.
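The path from cookie to service method can be condensed into a small self-contained sketch. `DemoBBinder`, `DemoHelloService`, `DEMO_SAY_HELLO`, and `demoExecute` are hypothetical names, and `std::string` stands in for Parcel; the sketch only shows that casting the cookie back to the base class and calling transact reaches the derived class's onTransact through virtual dispatch.

```cpp
#include <cstdint>
#include <string>

// Hypothetical stand-in for BBinder.
class DemoBBinder {
public:
    virtual ~DemoBBinder() = default;

    // Public entry point; it forwards to the overridable hook.
    int transact(std::uint32_t code, const std::string& data, std::string* reply) {
        return onTransact(code, data, reply);
    }

protected:
    virtual int onTransact(std::uint32_t code, const std::string& data,
                           std::string* reply) = 0;
};

const std::uint32_t DEMO_SAY_HELLO = 1;  // hypothetical transaction code

// Hypothetical stand-in for BnHelloService.
class DemoHelloService : public DemoBBinder {
protected:
    int onTransact(std::uint32_t code, const std::string& data,
                   std::string* reply) override {
        if (code != DEMO_SAY_HELLO) return -1;  // unknown code
        if (reply != nullptr) *reply = "hello, " + data;
        return 0;
    }
};

// What the receiving thread does: turn the cookie back into an object and call it.
int demoExecute(std::uintptr_t cookie, std::uint32_t code, const std::string& data,
                std::string* reply) {
    DemoBBinder* target = reinterpret_cast<DemoBBinder*>(cookie);
    return target->transact(code, data, reply);
}
```

The cookie passed to `demoExecute` is expected to be the address of the service converted to `DemoBBinder*` first, for example `reinterpret_cast<std::uintptr_t>(static_cast<DemoBBinder*>(&service))`, so that the round trip through an integer recovers exactly the pointer that was stored.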
