OllamaFunctions Study Notes

- 0. Introduction

- 1. Usage

- 2. Using it for extraction

0. Introduction

This article shows how to use an experimental wrapper around Ollama that gives it the same API as OpenAI Functions.

1. Usage

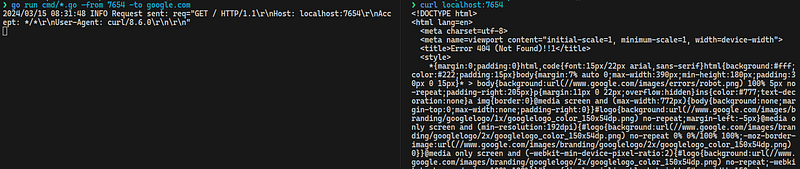

You can initialize OllamaFunctions in much the same way as a standard ChatOllama instance:

from langchain_experimental.llms.ollama_functions import OllamaFunctions

# base_url points at the Ollama server; the model name must be one that server actually hosts
model = OllamaFunctions(base_url="http://xxx.xxx.xxx.xxx:11434", model="mistral", temperature=0.0)

You can then bind functions defined with JSON Schema parameters, and pass the function_call parameter to force the model to call a given function:

model = model.bind(
    functions=[
        {
            "name": "get_current_weather",
            "description": "Get the current weather in a given location",
            "parameters": {
                "type": "object",
                "properties": {
                    "location": {
                        "type": "string",
                        "description": "The city and state, " "e.g. San Francisco, CA",
                    },
                    "unit": {
                        "type": "string",
                        "enum": ["celsius", "fahrenheit"],
                    },
                },
                "required": ["location"],
            },
        }
    ],
    function_call={"name": "get_current_weather"},
)
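The function definition passed to bind is an ordinary Python dictionary, so it can be assembled and sanity-checked before it reaches the model. The following is a minimal sketch only: `make_function_spec` is a hypothetical helper name, not part of LangChain, and it assumes the OpenAI Functions layout used above (a name, a description, and a JSON Schema object under "parameters").

```python
import json


def make_function_spec(name, description, properties, required):
    """Build an OpenAI-Functions-style definition (hypothetical helper)."""
    # Every required key should also be declared under "properties".
    missing = [key for key in required if key not in properties]
    if missing:
        raise ValueError(f"required keys not declared in properties: {missing}")
    return {
        "name": name,
        "description": description,
        "parameters": {
            "type": "object",
            "properties": properties,
            "required": list(required),
        },
    }


weather_spec = make_function_spec(
    name="get_current_weather",
    description="Get the current weather in a given location",
    properties={
        "location": {
            "type": "string",
            "description": "The city and state, e.g. San Francisco, CA",
        },
        "unit": {"type": "string", "enum": ["celsius", "fahrenheit"]},
    },
    required=["location"],
)

# Pretty-print the definition to inspect it before binding.
print(json.dumps(weather_spec, indent=2))
```

The resulting dictionary could then be passed as `functions=[weather_spec]`, with `function_call={"name": weather_spec["name"]}` to force that function.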

Invoking the model with this function bound produces JSON output that matches the provided schema:

from langchain_core.messages import HumanMessage

model.invoke("what is the weather in Boston?")

Output:

AIMessage(content='', additional_kwargs={'function_call': {'name': 'get_current_weather', 'arguments': '{"location": "Boston, MA", "unit": "celsius"}'}})
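Note that the 'arguments' field in this output is a JSON string, not a dictionary, so it has to be decoded before the call can be carried out. The sketch below shows one possible way to do that. It uses a plain dictionary copied from the output above in place of a real AIMessage, and both `get_current_weather` (a stub) and `dispatch_function_call` are hypothetical names introduced for illustration.

```python
import json


def get_current_weather(location, unit="fahrenheit"):
    """Hypothetical stub; a real implementation would query a weather service."""
    return f"Weather lookup requested for {location} in {unit}"


# Registry mapping the function names the model may call to local callables.
AVAILABLE_FUNCTIONS = {"get_current_weather": get_current_weather}


def dispatch_function_call(additional_kwargs):
    """Decode a function_call entry and invoke the matching local function."""
    call = additional_kwargs.get("function_call")
    if call is None:
        return None  # the model answered without calling a function
    func = AVAILABLE_FUNCTIONS[call["name"]]
    arguments = json.loads(call["arguments"])  # JSON string -> dict
    return func(**arguments)


# Same structure as the additional_kwargs shown in the output above.
additional_kwargs = {
    "function_call": {
        "name": "get_current_weather",
        "arguments": '{"location": "Boston, MA", "unit": "celsius"}',
    }
}

result = dispatch_function_call(additional_kwargs)
print(result)
```

With a real response, the same dictionary would be read from the message's `additional_kwargs` attribute.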

2. Using it for extraction

One useful thing function calling enables here is extracting properties from a given input in a structured format:

from langchain.chains import create_extraction_chain

# Schema
schema = {
    "properties": {
        "name": {"type": "string"},
        "height": {"type": "integer"},
        "hair_color": {"type": "string"},
    },
    "required": ["name", "height"],
}

# Input
input = """Alex is 5 feet tall. Claudia is 1 feet taller than Alex and jumps higher than him. Claudia is a brunette and Alex is blonde."""

# Run chain
llm = OllamaFunctions(model="mistral", temperature=0)
chain = create_extraction_chain(schema, llm)
chain.run(input)

Output:

[{'name': 'Alex', 'height': 5, 'hair_color': 'blonde'},
 {'name': 'Claudia', 'height': 6, 'hair_color': 'brunette'}]
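Because the extracted records come from a language model, it may be worth checking them against the schema before using them downstream. The following is a rough sketch under stated assumptions: `find_problems` is a hypothetical helper, it only handles the "string" and "integer" types used in the schema above, and the records are copied from the output shown here rather than produced by a live chain.

```python
schema = {
    "properties": {
        "name": {"type": "string"},
        "height": {"type": "integer"},
        "hair_color": {"type": "string"},
    },
    "required": ["name", "height"],
}

# Only the JSON Schema types that appear in the schema above are mapped.
JSON_TO_PYTHON = {"string": str, "integer": int}


def find_problems(record, schema):
    """Return a list of human-readable problems for one extracted record."""
    problems = []
    for key in schema["required"]:
        if key not in record:
            problems.append(f"missing required field: {key}")
    for key, value in record.items():
        spec = schema["properties"].get(key)
        if spec is None:
            problems.append(f"unexpected field: {key}")
            continue
        expected = JSON_TO_PYTHON[spec["type"]]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            problems.append(f"wrong type for {key}: expected {spec['type']}")
    return problems


results = [
    {"name": "Alex", "height": 5, "hair_color": "blonde"},
    {"name": "Claudia", "height": 6, "hair_color": "brunette"},
]

all_problems = [find_problems(record, schema) for record in results]
print(all_problems)
```

An empty list for a record means it has every required field and no type mismatches; anything else can be logged or used to trigger a retry.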

The end!

Reference: https://python.langchain.com/docs/integrations/chat/ollama_functions/