1. Scrape weather data and store it in a database

#!/usr/bin/python
# -*- coding: utf-8 -*-
import pymysql
import requests
from bs4 import BeautifulSoup

db = pymysql.connect(host='localhost', port=3306, user='root', passwd='root',
                     db='mysql', use_unicode=True, charset="utf8")
cursor = db.cursor()


def downdata(url):
    hd = {'User-Agent': "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/65.0.3325.181 Safari/537.36"}
    req = requests.get(url, headers=hd)
    # req.encoding = 'utf-8'
    soup = BeautifulSoup(req.text, 'html.parser')
    da_new = soup.find_all('li', class_='ndays-item png-fix cf')
    # Parameterized statement: the driver quotes the values, so text
    # containing a quote character cannot break the SQL.
    sql = "INSERT INTO tianiq(day1, week1, wd, fl, air) VALUES (%s, %s, %s, %s, %s)"
    for da in da_new:
        day = da.find('div', class_='td td2').find('p', class_='p1')
        week = da.find('div', class_='td td2').find('p', class_='p2')
        wd = da.find('div', class_='td td5').find('p', class_='p1')
        fl = da.find('div', class_='td td5').find('p', class_='p2')
        f2 = da.find('div', class_='td td3').find('div')['title']
        print('Today is ' + day.text + ', weekday ' + week.text + ', temperature ' + wd.text + ', wind ' + fl.text + ', weather ' + f2)
        print(sql)
        cursor.execute(sql, (day.text, week.text, wd.text, fl.text, f2))
    db.commit()


downdata('http://tianqi.sogou.com/shenyang/15/')

2. Scrape comics

#!/usr/bin/python
# -*- coding: UTF-8 -*-
import re
import urllib.request


def gethtml(url):
    headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64; rv:23.0) Gecko/20100101 Firefox/23.0'}
    req = urllib.request.Request(url=url, headers=headers)
    html = urllib.request.urlopen(req).read()
    return html


def getimg(html):
    reg = r'src="(.*?\.jpg)"'
    img = re.compile(reg)
    html = html.decode('utf-8')  # Python 3: urlopen returns bytes, so decode first
    imglist = re.findall(img, html)
    x = 0
    for imgurl in imglist:
        urllib.request.urlretrieve(imgurl, 'D:%s.jpg' % x)
        x = x + 1
    # Return the URL list so the print() below shows something other than None
    return imglist


html = gethtml("http://www.tuku.cc/")
print(getimg(html))

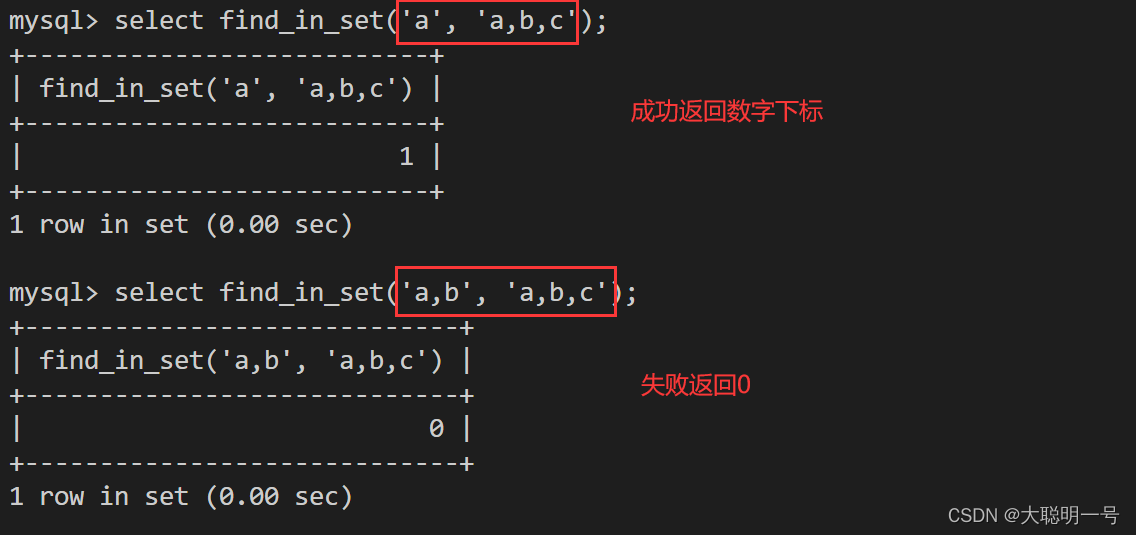
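The pattern in getimg returns whatever sits in the src attribute, which may be a relative path that urlretrieve cannot fetch on its own. Below is a minimal sketch, assuming relative paths should be resolved against the page URL and duplicates skipped. The helper name extract_jpg_urls and the sample HTML are hypothetical and not part of the original script.

```python
import re
from urllib.parse import urljoin

# Same pattern as getimg: capture the src value of .jpg images.
JPG_SRC = re.compile(r'src="(.*?\.jpg)"')


def extract_jpg_urls(html, base_url):
    """Return absolute .jpg URLs found in html, in page order, without duplicates."""
    seen = set()
    urls = []
    for src in JPG_SRC.findall(html):
        # urljoin leaves absolute URLs untouched and resolves relative ones.
        absolute = urljoin(base_url, src)
        if absolute not in seen:
            seen.add(absolute)
            urls.append(absolute)
    return urls


# Hypothetical sample page used only for illustration.
sample = '<img src="/a/1.jpg"><img src="http://img.example.com/2.jpg"><img src="/a/1.jpg">'
print(extract_jpg_urls(sample, "http://www.tuku.cc/"))
```

The returned list could be passed to the download loop in place of imglist.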

3. Access the database

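#!/usr/bin/python
# -*- coding: UTF-8 -*-
import pymysql

# Open the database connection (PyMySQL 1.0+ requires keyword arguments)
db = pymysql.connect(host="localhost", user="root", password="root", database="mysql")

# Get a cursor with the cursor() method
cursor = db.cursor()

# SQL INSERT statement
sql = ("INSERT INTO tianiq(day1, week1, wd, fl, air) "
       "VALUES ('Mac', 'Mohan', 'M', 'M', 'M')")

try:
    # Execute the SQL statement
    cursor.execute(sql)
    # Commit the change to the database
    db.commit()
    print("insert ok")
except Exception:
    # Roll back if an error occurs
    db.rollback()

# Close the database connection
db.close()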
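The commit-or-rollback pattern above can be wrapped in a small helper that inserts several rows in one transaction. This is only a sketch under stated assumptions: insert_rows and its placeholder parameter are hypothetical names, the table layout is taken from the INSERT statement above, and conn is assumed to be any DB-API connection such as the one returned by pymysql.connect.

```python
def insert_rows(conn, rows, placeholder="%s"):
    """Insert rows into tianiq in one transaction; roll back on any failure.

    conn        -- an open DB-API connection (for example from pymysql.connect)
    rows        -- iterable of 5-tuples: (day1, week1, wd, fl, air)
    placeholder -- parameter marker of the driver ("%s" for PyMySQL)
    """
    marks = ", ".join([placeholder] * 5)
    sql = "INSERT INTO tianiq(day1, week1, wd, fl, air) VALUES (" + marks + ")"
    cursor = conn.cursor()
    try:
        # The driver quotes every value, so no manual string formatting is needed.
        cursor.executemany(sql, rows)
        conn.commit()
        return True
    except Exception:
        # Undo every row of this batch if any single insert fails.
        conn.rollback()
        return False
    finally:
        cursor.close()
```

With the connection from this section, a call might look like insert_rows(db, [('Mac', 'Mohan', 'M', 'M', 'M')]), made before db.close().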

4. Scrape video

#!/usr/bin/python
# -*- coding: UTF-8 -*-
import requests


def download(url):
    dz = {'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/65.0.3325.181 Safari/537.36'}
    # .content holds the raw response bytes
    req = requests.get(url, headers=dz).content
    with open('qq.mp4', 'wb') as fp:
        fp.write(req)


download('http://video.study.163.com/edu-video/nos/mp4/2017/04/01/1006064693_cc2842f7dc8b410c96018ec618f37ef6_sd.mp4?ak=d2e3a054a6a144f3d98805f49b4f04439064ce920ba6837d89a32d0b0294ad3c1729b01fa6a0b5a3442ba46f5001b48b1ee2fb6240fc719e1b3940ed872a11f180acad2d0d7744336d03591c3586614af455d97e99102a49b825836de913910ef0837682774232610f0d4e39d8436cb9a153bdeea4a2bfbae357803dfb6768a742fe395e87eba0c3e30b7b64ef1be06585111bf60ea26d5dad1f891edd9e94a8e167e0b04144490499ffe31e0d97a0a1babcbd7d2e007d850cc3bf7aa697e8ff')

5. Scrape audio

#!/usr/bin/python
# -*- coding: UTF-8 -*-
import json
import requests


def download(url):
    hd = {'User-Agent': "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/65.0.3325.181 Safari/537.36"}
    req = requests.get(url, headers=hd)
    reps = req.text
    result = json.loads(reps)
    datap = result['data']['tracksAudioPlay']
    for index in datap:
        title = index['trackName']
        print(index['src'])
        data = requests.get(index['src'], headers=hd).content
        try:
            with open('%s.mp3' % title, 'wb') as f:
                f.write(data)
        except OSError as e:
            # For example, the track title contains a character not allowed in file names
            print('failed to save %s: %s' % (title, e))


download('http://www.ximalaya.com/revision/play/album?albumId=7371372&pageNum=1&sort=-1&pageSize=30')
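Track titles are used directly as file names in the script above, so a title containing a character such as / or ? can make open() fail. One possible way to guard against that is sketched below; safe_filename, its fallback, and its max_len are hypothetical and not part of the original script, and the character set is the one Windows forbids in file names.

```python
import re

# Characters that Windows forbids in file names, plus ASCII control characters.
_ILLEGAL = re.compile(r'[\\/:*?"<>|\x00-\x1f]')


def safe_filename(title, fallback="untitled", max_len=100):
    """Return title with forbidden characters replaced by underscores."""
    # Leading/trailing spaces and dots are also stripped, since Windows rejects them.
    name = _ILLEGAL.sub("_", title).strip(" .")
    # Fall back to a fixed name if nothing usable is left.
    return name[:max_len] or fallback


print(safe_filename('Episode 1: "Intro" / Part A?'))
```

In the download loop this would be used as open(safe_filename(title) + '.mp3', 'wb').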

6. Scrape text

#!/usr/bin/python
# -*- coding: UTF-8 -*-
import requests
from bs4 import BeautifulSoup


def get_h(url):
    response = requests.get(url)
    response.encoding = 'utf-8'
    return response.text


def get_c(html):
    soup = BeautifulSoup(html, 'html.parser')
    # getText must be called; without the parentheses the method object itself is returned
    joke_content = soup.select('div.content')[0].getText()
    return joke_content


url_joke = "https://www.qiushibaike.com"
html = get_h(url_joke)
joke_content = get_c(html)
print(joke_content)

7. Scrape images

#!/usr/bin/python
# -*- coding: UTF-8 -*-
import requests
from bs4 import BeautifulSoup

headers = {'User-Agent': "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/22.0.1207.1 Safari/537.1"}
url = 'http://www.ivsky.com/'
start_html = requests.get(url, headers=headers)
Soup = BeautifulSoup(start_html.text, 'html.parser')
all_div = Soup.find_all('div', class_='syl_pic')

for lsd in all_div:
    lsds = 'http://www.ivsky.com' + lsd.find('a')['href']
    title = lsd.find('a').get_text()
    print(lsds)
    html = requests.get(lsds, headers=headers)
    Soup_new = BeautifulSoup(html.text, 'html.parser')
    app = Soup_new.find_all('div', class_='il_img')
    for app_new in app:
        apptwo = 'http://www.ivsky.com' + app_new.find('a')['href']
        htmlthree = requests.get(apptwo, headers=headers)
        Soupthree = BeautifulSoup(htmlthree.text, 'html.parser')
        appthree = Soupthree.find('div', class_='pic')
        appf = appthree.find('img')['src']
        name = appf[-9:-4]
        img = requests.get(appf, headers=headers)
        # Media files must be written in binary mode (the 'b' flag)
        with open(name + '.jpg', 'wb') as f:
            # Use .content (raw bytes), not .text, for media files
            f.write(img.content)

8. Scrape a novel

#!/usr/bin/python
# -*- coding: UTF-8 -*-
from urllib import request
from bs4 import BeautifulSoup
import sys

if __name__ == "__main__":
    # Create the output txt file
    file = open('一念永恒.txt', 'w', encoding='utf-8')
    # Table-of-contents URL of the novel 一念永恒
    target_url = 'http://www.biqukan.com/1_1094/'
    # User-Agent
    head = {}
    head['User-Agent'] = 'Mozilla/5.0 (Linux; Android 4.1.1; Nexus 7 Build/JRO03D) AppleWebKit/535.19 (KHTML, like Gecko) Chrome/18.0.1025.166 Safari/535.19'
    target_req = request.Request(url=target_url, headers=head)
    target_response = request.urlopen(target_req)
    target_html = target_response.read().decode('gbk', 'ignore')
    # Create the BeautifulSoup object
    listmain_soup = BeautifulSoup(target_html, 'html.parser')
    # Search the document tree for div tags whose class is listmain
    chapters = listmain_soup.find_all('div', class_='listmain')
    # Build another BeautifulSoup object from the result and keep parsing it
    download_soup = BeautifulSoup(str(chapters), 'html.parser')
    # Compute the number of chapters
    numbers = (len(download_soup.dl.contents) - 1) / 2 - 8
    index = 1
    # Flag that starts recording: keep only the links under the main-text
    # volume and skip the "latest chapters" list
    begin_flag = False
    # Iterate over all child nodes of the dl tag
    for child in download_soup.dl.children:
        # Skip newlines
        if child != '\n':
            # Found the 《一念永恒》 main-text volume heading: enable the flag
            if child.string == u"《一念永恒》正文卷":
                begin_flag = True
            # Take the link and download its content
            if begin_flag and child.a is not None:
                download_url = "http://www.biqukan.com" + child.a.get('href')
                download_req = request.Request(url=download_url, headers=head)
                download_response = request.urlopen(download_req)
                download_html = download_response.read().decode('gbk', 'ignore')
                download_name = child.string
                soup_texts = BeautifulSoup(download_html, 'html.parser')
                texts = soup_texts.find_all(id='content', class_='showtxt')
                soup_text = BeautifulSoup(str(texts), 'html.parser')
                write_flag = True
                file.write(download_name + '\n\n')
                # Write the scraped content to the file, stopping at the first 'h'
                for each in soup_text.div.text.replace('\xa0', ''):
                    if each == 'h':
                        write_flag = False
                    if write_flag and each != ' ':
                        file.write(each)
                    if write_flag and each == '\r':
                        file.write('\n')
                file.write('\n\n')
                # Print the download progress as a percentage
                sys.stdout.write("Downloaded: %.3f%%" % (100 * index / numbers) + '\r')
                sys.stdout.flush()
                index += 1
    file.close()