Result

(Image sourced from the internet; please contact me for removal if it infringes any rights.)

Summary

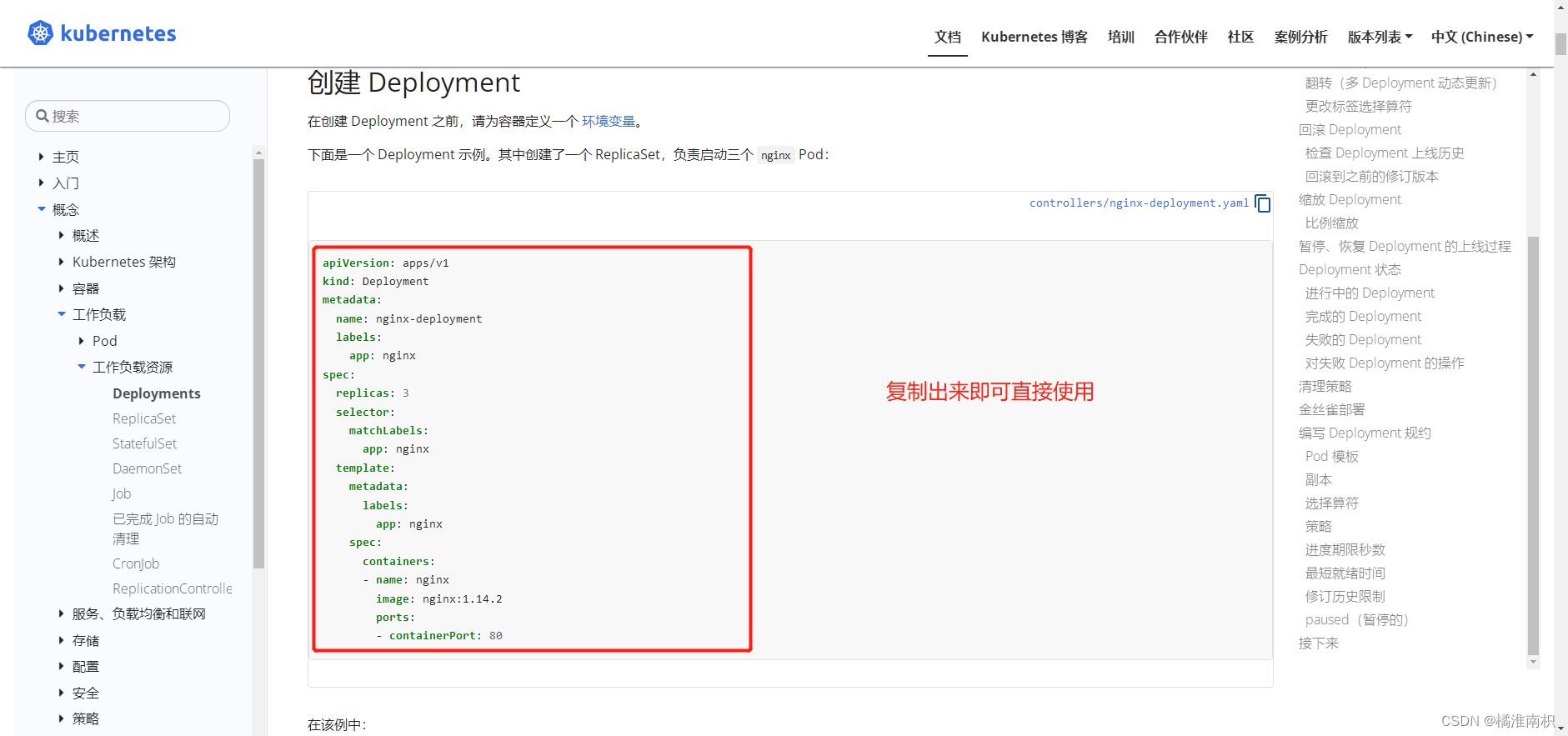

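The tech stack is as follows:

Code: Python

Facial landmark detection: dlib

Image processing: Pillow, OpenCV

Facial landmarks

Since the sticker goes over the eyes, we need the angle between each eye and the horizontal. The relevant landmarks are points 36 and 39 (the two corners of the left eye) and points 42 and 45 (the two corners of the right eye).

We also need a red-eye sticker image, which will later be overlaid on each eye.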
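The eye angle described above can also be read directly from the two corner points. The following is a minimal sketch, not the code used later in this post: it swaps in `math.atan2` for the three-point law-of-cosines approach, and the function name `eye_roll_degrees` and the sample coordinates are assumptions made for illustration.

```python
import math


def eye_roll_degrees(outer, inner):
    """Angle of the line outer -> inner against the horizontal, in degrees.

    Image y grows downward, so dy is negated to make counter-clockwise
    rotation positive, matching the sign convention of PIL's Image.rotate.
    """
    dx = inner[0] - outer[0]
    dy = inner[1] - outer[1]
    return math.degrees(math.atan2(-dy, dx))


# Hypothetical (x, y) coordinates standing in for landmarks 36 and 39
print(eye_roll_degrees((100, 200), (140, 200)))  # level eye
print(eye_roll_degrees((100, 200), (140, 240)))  # point 39 lower in the image
```

When point 39 sits lower in the image than point 36, the result is negative, which agrees with the sign rule the post applies before rotating the sticker.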

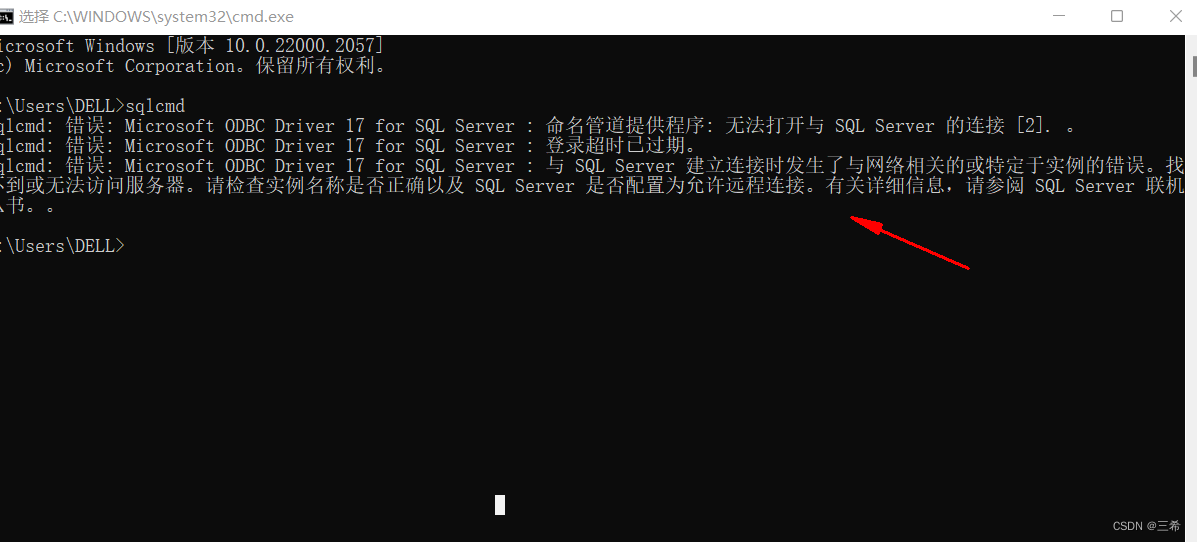

Code

    import math

    import cv2 as cv
    import dlib
    from PIL import Image


    def detect_predict():
        # Build the face detector and the 68-point landmark predictor
        detector = dlib.get_frontal_face_detector()
        predictor = dlib.shape_predictor('shape_predictor_68_face_landmarks.dat')
        return detector, predictor


    def cal_ang(point_1, point_2, point_3):
        # Law of cosines on the triangle formed by the three points; returns the
        # angles (in degrees, rounded up) at point_1, point_2 and point_3
        a = math.hypot(point_2[0] - point_3[0], point_2[1] - point_3[1])
        b = math.hypot(point_1[0] - point_3[0], point_1[1] - point_3[1])
        c = math.hypot(point_1[0] - point_2[0], point_1[1] - point_2[1])
        A = math.degrees(math.acos((a*a - b*b - c*c) / (-2*b*c)))
        B = math.degrees(math.acos((b*b - a*a - c*c) / (-2*a*c)))
        C = math.degrees(math.acos((c*c - a*a - b*b) / (-2*a*b)))
        return math.ceil(A), math.ceil(B), math.ceil(C)


    origin = cv.imread('face.jpg')  # placeholder: path to the face photo
    gray = cv.cvtColor(origin, cv.COLOR_BGR2GRAY)
    detector, predictor = detect_predict()
    res = detector(gray, 1)

    # Collect the 68 landmarks of every detected face
    faces = []
    for face in res:
        shape = predictor(origin, face)
        faces.append(shape.parts())

    scale = 5.2  # mask width = eye width (corner to corner) * scale

    pil_img = Image.fromarray(cv.cvtColor(origin, cv.COLOR_BGR2RGB))
    mask = Image.open('red_eye_sticker.png')  # placeholder: path to the red-eye sticker
    mask = mask.convert('RGBA')
    mw, mh = mask.size

    measure_l = 0.55  # horizontal position of the mask center for the left eye
    measure_r = 0.48  # horizontal position of the mask center for the right eye

    for p in faces:
        # Left eye
        angle = cal_ang((p[36].x, p[36].y), (p[36].x + 10, p[36].y), (p[39].x, p[39].y))
        ang = -angle[0] if p[36].y < p[39].y else angle[0]
        canthus = math.hypot(p[36].x - p[39].x, p[36].y - p[39].y)
        target_h = int(mh / (mw / (canthus * scale)))
        # Image.ANTIALIAS was removed in Pillow 10; Image.LANCZOS is the same filter
        m1 = mask.resize((int(canthus * scale), target_h), Image.LANCZOS)
        m1 = m1.rotate(ang, expand=True)
        w, h = m1.size
        x, y = (p[38].x + p[40].x) / 2, (p[38].y + p[40].y) / 2
        # math.floor avoids the 1px offset that int() causes for negative coordinates
        ltx = math.floor(x - measure_l * w)
        lty = math.floor(y - h / 2 + ang / 180 * canthus)
        # Passing only the top-left corner lets Pillow derive the box from the mask size
        pil_img.paste(m1, (ltx, lty), mask=m1.split()[-1])

        # Right eye
        angle = cal_ang((p[42].x, p[42].y), (p[42].x + 10, p[42].y), (p[45].x, p[45].y))
        ang = -angle[0] if p[42].y < p[45].y else angle[0]
        canthus = math.hypot(p[42].x - p[45].x, p[42].y - p[45].y)
        target_h = int(mh / (mw / (canthus * scale)))
        m2 = mask.resize((int(canthus * scale), target_h), Image.LANCZOS)
        m2 = m2.rotate(ang, expand=True)
        w, h = m2.size
        x, y = (p[43].x + p[47].x) / 2, (p[43].y + p[47].y) / 2
        ltx = math.floor(x - measure_r * w)
        lty = math.floor(y - h / 2 - ang / 180 * canthus)
        pil_img.paste(m2, (ltx, lty), mask=m2.split()[-1])
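The per-eye steps in the code above (scale the sticker to the eye width, rotate it, then alpha-paste it around a center point) can be tried without dlib or a real photo. The sketch below uses only Pillow and synthetic images. The helper name `paste_sticker` and its parameters are hypothetical, `anchor_x` plays the role of `measure_l` / `measure_r`, and the small vertical correction `ang / 180 * canthus` from the post is left out for brevity.

```python
import math

from PIL import Image


def paste_sticker(base, sticker, center, width, angle, anchor_x=0.5):
    """Scale `sticker` to `width`, rotate it by `angle` degrees and alpha-paste
    it onto `base` so that the point `anchor_x` of the way across the rotated
    sticker lands on `center`. Returns the top-left corner used for pasting."""
    sw, sh = sticker.size
    height = max(1, round(sh * width / sw))  # keep the aspect ratio
    scaled = sticker.resize((max(1, int(width)), height), Image.LANCZOS)
    rotated = scaled.rotate(angle, expand=True)  # expand so corners are not cut off
    w, h = rotated.size
    left = math.floor(center[0] - anchor_x * w)
    top = math.floor(center[1] - h / 2)
    # An RGBA image can serve as its own mask: Pillow uses its alpha band
    base.paste(rotated, (left, top), mask=rotated)
    return left, top


# Synthetic stand-ins for the photo and the red-eye sticker
base = Image.new('RGB', (200, 120), 'white')
sticker = Image.new('RGBA', (60, 20), (255, 0, 0, 255))
corner = paste_sticker(base, sticker, center=(100, 60), width=80, angle=0)
print(corner)
```

Passing only the top-left corner to `paste` means the target box is always derived from the sticker's own size, so a sticker that hangs partly off the image edge is clipped rather than rejected.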

68-point facial landmark detection model:

Link: https://pan.baidu.com/s/1_kBo6zoYacZdeqgISbkUew

Extraction code: 2sok

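Issues

Any approach that pastes a flat sticker directly onto the image runs into perspective problems. When the face is turned or tilted, and given that the eye region does not lie in a single plane to begin with, the result is far from ideal. A flat, front-facing overlay cannot make up for this shortcoming.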
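To put a rough number on the perspective problem, here is a small worked sketch. It rests on simplifying assumptions that are not from the original post: the eye region is treated as flat, the camera as orthographic, and the head as turned left or right by a yaw angle. Under those assumptions the measured corner-to-corner distance shrinks by cos(yaw) while the apparent eye height does not, so a sticker scaled uniformly from that distance ends up too short. The function name `uniform_scale_error` is hypothetical.

```python
import math


def uniform_scale_error(yaw_degrees):
    """Ratio of the height a uniformly scaled sticker gets to the height it
    would need when the face is turned `yaw_degrees` left or right.

    Assumption: flat eye region, orthographic camera. Yaw shrinks the apparent
    width by cos(yaw) and leaves the apparent height unchanged.
    """
    width_factor = math.cos(math.radians(yaw_degrees))  # measured corner distance shrinks
    height_factor = 1.0                                  # apparent height stays the same
    return width_factor / height_factor


for yaw in (0, 15, 30, 45):
    print(f"yaw={yaw:2d} deg -> sticker height is {uniform_scale_error(yaw):.0%} of what is needed")
```

Even in this idealized model the mismatch grows quickly with yaw, and a real face adds curvature and true perspective distortion on top of it.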


I'm a front-end developer by day, and image processing is just a hobby. If you spot any mistakes, please go easy on me. Thanks!! 😂