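Hey everyone, today we're going to scrape the latest job listings from qcwu (51job).

Because everyone keeps crawling the site, it gets updated frequently, so the scraping code has to be rewritten just as often.

If you know what you're doing, that's no big deal, and no update will stop you. If you don't, a single site update is enough to leave you stuck.

So this post is for those who are still learning. I also recorded a video walkthrough and bundled it with the source code; you can grab both from the card at the end of the post.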

Tools

First, let's see what we need to prepare.

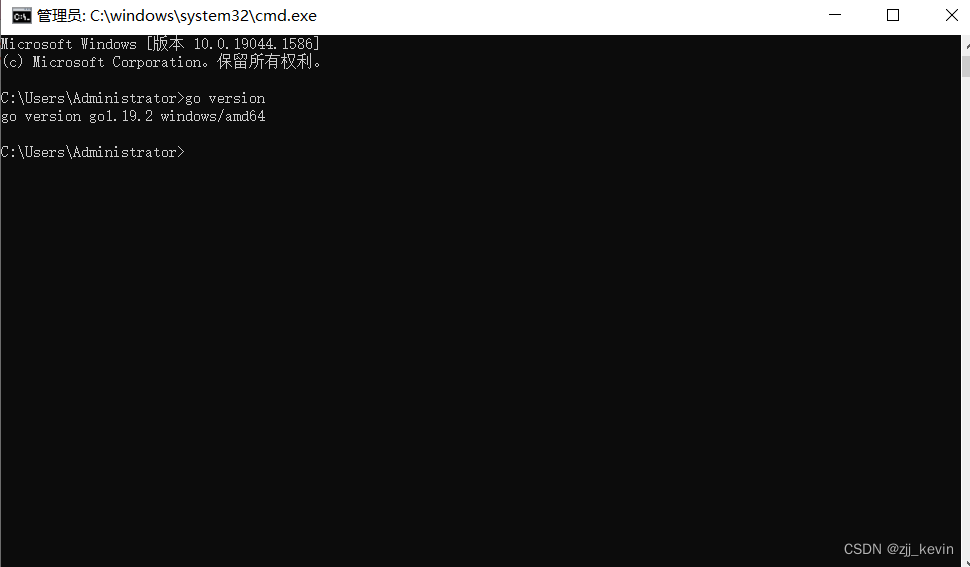

Environment

Python 3.8

PyCharm

Modules

# Third-party module, needs to be installed

requests >>> pip install requests

# Built-in module, no installation needed

csv

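Basic crawler workflow

1. Analyzing the data source: the approach is always the same

- Define the requirements: decide which site to scrape and which data to collect

Site: 51job

Content: job listings

- Use the browser's developer tools to capture the traffic and find where the data actually comes from

I. Open the developer tools: press F12, or right-click, choose Inspect, and switch to the Network tab

II. Refresh the page so that the data is loaded again

III. Search the captured requests for a piece of the data you want; this locates the request that returns it

Job listing request: https://we.***.com/api/job/search-pc?api_key=51job&timestamp=1688645783&keyword=python&searchType=2&function=&industry=&jobArea=010000%2C020000%2C030200%2C040000%2C090200&jobArea2=&landmark=&metro=&salary=&workYear=&degree=&companyType=&companySize=&jobType=&issueDate=&sortType=0&pageNum=1&requestId=&pageSize=20&source=1&accountId=&pageCode=sou%7Csou%7Csoulb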
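To see which query parameters the captured request carries, the URL can be split with the standard library. This is a minimal sketch: the host is masked in this post, so `example.invalid` is only a stand-in, and the URL is shortened to the non-empty parameters.

```python
from urllib.parse import urlsplit, parse_qs

# Shortened copy of the captured URL. The real host is masked in this post,
# so "example.invalid" is a placeholder, not the actual address.
captured = (
    "https://example.invalid/api/job/search-pc"
    "?api_key=51job&timestamp=1688645783&keyword=python&searchType=2"
    "&jobArea=010000%2C020000%2C030200%2C040000%2C090200"
    "&salary=&sortType=0&pageNum=1&pageSize=20&source=1"
    "&pageCode=sou%7Csou%7Csoulb"
)

parts = urlsplit(captured)

# keep_blank_values=True keeps parameters such as "salary=" that have no value.
# parse_qs also decodes percent-escapes (%2C -> ",", %7C -> "|").
query = {k: v[0] for k, v in parse_qs(parts.query, keep_blank_values=True).items()}

print(parts.path)
for key, value in query.items():
    print(f"{key!r}: {value!r}")
```

The decoded dictionary is what later gets passed to `requests.get` through `params`, which is why the values there are written as `010000,020000,...` and `sou|sou|soulb` rather than in their percent-encoded form.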

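2. Implementation steps: the steps are always the same

- Send the request: imitate a browser and request the URL

Request URL: the URL of the job listing request

- Get the data: read the response returned by the server <all of the data>

In the developer tools: the Response tab

- Parse the data: extract the fields we want

Basic job information

- Save the data: write the records to a spreadsheet (CSV) file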

Code walkthrough

Modules

# Import the HTTP request module
import requests

# Import the pretty-printing module
from pprint import pprint

# Import the csv module
import csv

Sending the request: imitate a browser and request the URL

# Cookie, Referer and User-Agent are copied from the request headers shown in the developer tools.
# The Cookie expires, so replace it with the one from your own browser session.
headers = {
    'Cookie': (
        'guid=54b7a6c4c43a33111912f2b5ac6699e2; '
        'sajssdk_2015_cross_new_user=1; '
        'sensorsdata2015jssdkcross=%7B%22distinct_id%22%3A%2254b7a6c4c43a33111912f2b5ac6699e2%22%2C%22first_id%22%3A%221892b08f9d11c8-09728ce3464dad8-26031d51-3686400-1892b08f9d211e7%22%2C%22props%22%3A%7B%22%24latest_traffic_source_type%22%3A%22%E7%9B%B4%E6%8E%A5%E6%B5%81%E9%87%8F%22%2C%22%24latest_search_keyword%22%3A%22%E6%9C%AA%E5%8F%96%E5%88%B0%E5%80%BC_%E7%9B%B4%E6%8E%A5%E6%89%93%E5%BC%80%22%2C%22%24latest_referrer%22%3A%22%22%7D%2C%22identities%22%3A%22eyIkaWRlbnRpdHlfY29va2llX2lkIjoiMTg5MmIwOGY5ZDExYzgtMDk3MjhjZTM0NjRkYWQ4LTI2MDMxZDUxLTM2ODY0MDAtMTg5MmIwOGY5ZDIxMWU3IiwiJGlkZW50aXR5X2xvZ2luX2lkIjoiNTRiN2E2YzRjNDNhMzMxMTE5MTJmMmI1YWM2Njk5ZTIifQ%3D%3D%22%2C%22history_login_id%22%3A%7B%22name%22%3A%22%24identity_login_id%22%2C%22value%22%3A%2254b7a6c4c43a33111912f2b5ac6699e2%22%7D%2C%22%24device_id%22%3A%221892b08f9d11c8-09728ce3464dad8-26031d51-3686400-1892b08f9d211e7%22%7D; '
        'nsearch=jobarea%3D%26%7C%26ord_field%3D%26%7C%26recentSearch0%3D%26%7C%26recentSearch1%3D%26%7C%26recentSearch2%3D%26%7C%26recentSearch3%3D%26%7C%26recentSearch4%3D%26%7C%26collapse_expansion%3D; '
        'search=jobarea%7E%60010000%2C020000%2C030200%2C040000%2C090200%7C%21recentSearch0%7E%60010000%2C020000%2C030200%2C040000%2C090200%A1%FB%A1%FA000000%A1%FB%A1%FA0000%A1%FB%A1%FA00%A1%FB%A1%FA99%A1%FB%A1%FA%A1%FB%A1%FA99%A1%FB%A1%FA99%A1%FB%A1%FA99%A1%FB%A1%FA99%A1%FB%A1%FA9%A1%FB%A1%FA99%A1%FB%A1%FA%A1%FB%A1%FA0%A1%FB%A1%FApython%A1%FB%A1%FA2%A1%FB%A1%FA1%7C%21; '
        'privacy=1688644161; '
        'Hm_lvt_1370a11171bd6f2d9b1fe98951541941=1688644162; '
        'Hm_lpvt_1370a11171bd6f2d9b1fe98951541941=1688644162; '
        'JSESSIONID=BA027715BD408799648B89C132AE93BF; '
        'acw_tc=ac11000116886495592254609e00df047e220754059e92f8a06d43bc419f21; '
        'ssxmod_itna=Qqmx0Q0=K7qeqD5itDXDnBAtKeRjbDce3=e8i=Ax0vTYPGzDAxn40iDtrrkxhziBemeLtE3Yqq6j7rEwPeoiG23pAjix0aDbqGkPA0G4GG0xBYDQxAYDGDDPDocPD1D3qDkD7h6CMy1qGWDm4kDWPDYxDrjOKDRxi7DDvQkx07DQ5kQQGxjpBF=FHpu=i+tBDkD7ypDlaYj9Om6/fxMp7Ev3B3Ix0kl40Oya5s1aoDUlFsBoYPe723tT2NiirY6QiebnnDsAhWC5xyVBDxi74qTZbKAjtDirGn8YD===; '
        'ssxmod_itna2=Qqmx0Q0=K7qeqD5itDXDnBAtKeRjbDce3=e8i=DnIfwqxDstKhDL0iWMKV3Ekpun3DwODKGcDYIxxD==; '
        'acw_sc__v2=64a6bf58f0b7feda5038718459a3b1e625849fa8'
    ),
    'Referer': 'https://we.51job.com/pc/search?jobArea=010000,020000,030200,040000,090200&keyword=python&searchType=2&sortType=0&metro=',
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/114.0.0.0 Safari/537.36',
}

# Request URL
url = 'https://we.***.com/api/job/search-pc'

# Query parameters
data = {
    'api_key': '51job',
    'timestamp': '*****',
    'keyword': '****',
    'searchType': '2',
    'function': '',
    'industry': '',
    'jobArea': '010000,020000,030200,040000,090200',
    'jobArea2': '',
    'landmark': '',
    'metro': '',
    'salary': '',
    'workYear': '',
    'degree': '',
    'companyType': '',
    'companySize': '',
    'jobType': '',
    'issueDate': '',
    'sortType': '0',
    'pageNum': '1',
    'requestId': '',
    'pageSize': '20',
    'source': '1',
    'accountId': '',
    'pageCode': 'sou|sou|soulb',
}

# Send the request
response = requests.get(url=url, params=data, headers=headers)
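The request above fetches only page 1. As a rough sketch of how the parameters could be generated per page: `build_params` is a hypothetical helper, not part of the original code, and it assumes that `timestamp` is the current Unix time in seconds (the captured value 1688645783 looks like one) and that the empty parameters can be left out. Neither assumption is confirmed by the site.

```python
import time


def build_params(keyword, page, page_size=20):
    """Return the query parameters for one result page (hypothetical helper)."""
    return {
        'api_key': '51job',
        'timestamp': str(int(time.time())),  # assumption: Unix time in seconds
        'keyword': keyword,
        'searchType': '2',
        'jobArea': '010000,020000,030200,040000,090200',
        'sortType': '0',
        'pageNum': str(page),                # the only value that changes per page
        'pageSize': str(page_size),
        'source': '1',
        'pageCode': 'sou|sou|soulb',
    }


for page in range(1, 4):
    params = build_params('python', page)
    print(params['pageNum'], params['timestamp'])
    # In the real scraper each dict would be passed to
    # requests.get(url, params=params, headers=headers),
    # ideally with a short pause between pages.
```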

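Getting the data

Read the response returned by the server <all of the data>

In the developer tools: the Response tab

- response.json() returns the response body parsed as JSON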
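When the site changes its response or blocks the request, the nested keys used in the next step may be missing and the lookup fails with a `KeyError`. A small defensive sketch, assuming the `resultbody` / `job` / `items` layout used in this post; `extract_items` is a hypothetical helper and both payloads are made-up samples, not real responses:

```python
def extract_items(payload):
    """Return the list of job dicts, or [] when the expected keys are missing."""
    body = payload.get('resultbody') or {}
    job = body.get('job') or {}
    items = job.get('items') or []
    return items if isinstance(items, list) else []


# Made-up payloads that only imitate the structure used in this post
sample = {'resultbody': {'job': {'items': [{'jobName': 'Python开发工程师'}]}}}
blocked = {'status': 'blocked'}

print(extract_items(sample))
print(extract_items(blocked))
```

In the scraper this would be called as `extract_items(response.json())`. If the body is not JSON at all, for example an HTML verification page, `response.json()` itself raises an exception, so printing `response.status_code` and `response.text` first is a quick way to check what actually came back.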

Parsing the data

Extract the fields we want

Loop over the items with a for loop

for index in response.json()['resultbody']['job']['items']:
    # index: the details of one job posting --> build a dict from it
    dit = {
        '职位': index['jobName'],
        '公司': index['fullCompanyName'],
        '薪资': index['provideSalaryString'],
        '城市': index['jobAreaString'],
        '经验': index['workYearString'],
        '学历': index['degreeString'],
        '公司性质': index['companyTypeString'],
        '公司规模': index['companySizeString'],
        '职位详情页': index['jobHref'],
        '公司详情页': index['companyHref'],
    }
    # Save each record as a dict. csv_writer comes from the save step,
    # which has to run before this loop.
    csv_writer.writerow(dit)
    print(dit)

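Saving to a spreadsheet

# Create the file and the writer before the parsing loop runs
f = open('python.csv', mode='w', encoding='utf-8', newline='')
csv_writer = csv.DictWriter(f, fieldnames=[
    '职位',
    '公司',
    '薪资',
    '城市',
    '经验',
    '学历',
    '公司性质',
    '公司规模',
    '职位详情页',
    '公司详情页',
])
# Write the header row once
csv_writer.writeheader()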
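The order matters here: the writer and header must exist before any row is written. A self-contained sketch of that order, using an in-memory buffer instead of `python.csv` so it runs without touching the disk; the two rows are made-up sample records, and only three of the ten columns are used to keep it short:

```python
import csv
import io

fieldnames = ['职位', '公司', '薪资']

# Made-up sample records standing in for the parsed job dicts
rows = [
    {'职位': 'Python开发', '公司': '示例公司A', '薪资': '1-1.5万'},
    {'职位': '数据分析', '公司': '示例公司B', '薪资': '8千-1.2万'},
]

# io.StringIO stands in for open('python.csv', mode='w', encoding='utf-8', newline='')
buffer = io.StringIO(newline='')
writer = csv.DictWriter(buffer, fieldnames=fieldnames)

writer.writeheader()          # 1. header row first
for row in rows:
    writer.writerow(row)      # 2. then one row per job

csv_text = buffer.getvalue()
print(csv_text)
```

With a real file, wrapping the whole thing in `with open(...) as f:` closes the file automatically once the loop finishes, which guarantees that the last rows are flushed to disk.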

Visualization

import pandas as pd

# Note: the scraper above writes python.csv; rename that file to data.csv or change the path here
df = pd.read_csv('data.csv')
df.head()

# Treat a missing education requirement as "no requirement"
df['学历'] = df['学历'].fillna('不限学历')
edu_type = df['学历'].value_counts().index.to_list()
edu_num = df['学历'].value_counts().to_list()

from pyecharts import options as opts
from pyecharts.charts import Pie
from pyecharts.faker import Faker
from pyecharts.globals import CurrentConfig, NotebookType

CurrentConfig.NOTEBOOK_TYPE = NotebookType.JUPYTER_LAB

c = (
    Pie()
    .add(
        "",
        [list(z) for z in zip(edu_type, edu_num)],
        center=["40%", "50%"],
    )
    .set_global_opts(
        title_opts=opts.TitleOpts(title="Python学历要求"),
        legend_opts=opts.LegendOpts(type_="scroll", pos_left="80%", orient="vertical"),
    )
    .set_series_opts(label_opts=opts.LabelOpts(formatter="{b}: {c}"))
)
c.load_javascript()
c.render_notebook()

df['城市'] = df['城市'].str.split('·').str[0]
city_type = df['城市'].value_counts().index.to_list()
city_num = df['城市'].value_counts().to_list()

c = (
    Pie()
    .add(
        "",
        [list(z) for z in zip(city_type, city_num)],
        center=["40%", "50%"],
    )
    .set_global_opts(
        title_opts=opts.TitleOpts(title="Python招聘城市分布"),
        legend_opts=opts.LegendOpts(type_="scroll", pos_left="80%", orient="vertical"),
    )
    .set_series_opts(label_opts=opts.LabelOpts(formatter="{b}: {c}"))
)

c.render_notebook()

def LowMoney(i):
    # Lower bound of the salary range, converted to yuan per month
    if '万' in i:
        low = i.split('-')[0]
        if '千' in low:
            low_num = low.replace('千', '')
            low_money = int(float(low_num) * 1000)
        else:
            low_money = int(float(low) * 10000)
    else:
        low = i.split('-')[0]
        if '元/天' in low:
            low_num = low.replace('元/天', '')
            low_money = int(low_num) * 30
        else:
            low_money = int(float(low) * 1000)
    return low_money

df['最低薪资'] = df['薪资'].apply(LowMoney)

def MaxMoney(j):
    # Upper bound of the salary range, converted to yuan per month
    Max = j.split('-')[-1].split('·')[0]
    if '万' in Max and '万/年' not in Max:
        max_num = int(float(Max.replace('万', '')) * 10000)
    elif '千' in Max:
        max_num = int(float(Max.replace('千', '')) * 1000)
    elif '元/天' in Max:
        max_num = int(Max.replace('元/天', '')) * 30
    else:
        max_num = int((int(Max.replace('万/年', '')) * 10000) / 12)
    return max_num

df['最高薪资'] = df['薪资'].apply(MaxMoney)

def tranform_price(x):
    # Map a monthly salary to a salary band
    if x <= 5000.0:
        return '0~5000元'
    elif x <= 8000.0:
        return '5001~8000元'
    elif x <= 15000.0:
        return '8001~15000元'
    elif x <= 25000.0:
        return '15001~25000元'
    else:
        return '25000以上'

df['最低薪资分级'] = df['最低薪资'].apply(lambda x:tranform_price(x))
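To make the branches of the lower-bound conversion concrete, here is a standalone sketch that follows the same rules without needing pandas. The name `low_money` and the sample salary strings are illustrative assumptions, chosen to match the formats the function branches on; they are not taken from scraped data.

```python
def low_money(salary):
    """Lower bound of a salary string in yuan per month (same rules as LowMoney)."""
    low = salary.split('-')[0]
    if '万' in salary:
        if '千' in low:                        # mixed units, e.g. '8千-1.2万'
            return int(float(low.replace('千', '')) * 1000)
        return int(float(low) * 10000)         # e.g. '1-1.5万'
    if '元/天' in low:                         # daily wage, counted as 30 days
        return int(low.replace('元/天', '')) * 30
    return int(float(low) * 1000)              # e.g. '6-8千'


# Illustrative salary strings, one per branch
samples = ['1-1.5万', '8千-1.2万', '6-8千', '150元/天']
for s in samples:
    print(s, '->', low_money(s))
```

Strings in any other shape, such as a single value without a range or an empty cell, are not handled by these rules and would raise an error, so it is worth checking the `薪资` column for such rows before calling `apply`.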

price_1 = df['最低薪资分级'].value_counts()

datas_pair_1 = [(i, int(j)) for i, j in zip(price_1.index, price_1.values)]

df['最高薪资分级'] = df['最高薪资'].apply(lambda x:tranform_price(x))

price_2 = df['最高薪资分级'].value_counts()

datas_pair_2 = [(i, int(j)) for i, j in zip(price_2.index, price_2.values)]

pie1 = (
    Pie(init_opts=opts.InitOpts(theme='dark', width='1000px', height='600px'))
    .add('', datas_pair_1, radius=['35%', '60%'])
    .set_series_opts(label_opts=opts.LabelOpts(formatter="{b}:{d}%"))
    .set_global_opts(
        title_opts=opts.TitleOpts(
            title="Python工作薪资\n\n最低薪资区间",
            pos_left='center',
            pos_top='center',
            title_textstyle_opts=opts.TextStyleOpts(color='#F0F8FF', font_size=20, font_weight='bold'),
        )
    )
    .set_colors(['#EF9050', '#3B7BA9', '#6FB27C', '#FFAF34', '#D8BFD8', '#00BFFF', '#7FFFAA'])
)
pie1.render_notebook()

pie1 = (
    Pie(init_opts=opts.InitOpts(theme='dark', width='1000px', height='600px'))
    .add('', datas_pair_2, radius=['35%', '60%'])
    .set_series_opts(label_opts=opts.LabelOpts(formatter="{b}:{d}%"))
    .set_global_opts(
        title_opts=opts.TitleOpts(
            title="Python工作薪资\n\n最高薪资区间",
            pos_left='center',
            pos_top='center',
            title_textstyle_opts=opts.TextStyleOpts(color='#F0F8FF', font_size=20, font_weight='bold'),
        )
    )
    .set_colors(['#EF9050', '#3B7BA9', '#6FB27C', '#FFAF34', '#D8BFD8', '#00BFFF', '#7FFFAA'])
)
pie1.render_notebook()

exp_type = df['经验'].value_counts().index.to_list()

exp_num = df['经验'].value_counts().to_list()

c = (
    Pie()
    .add(
        "",
        [list(z) for z in zip(exp_type, exp_num)],
        center=["40%", "50%"],
    )
    .set_global_opts(
        title_opts=opts.TitleOpts(title="Python招聘经验要求"),
        legend_opts=opts.LegendOpts(type_="scroll", pos_left="80%", orient="vertical"),
    )
    .set_series_opts(label_opts=opts.LabelOpts(formatter="{b}: {c}"))
)
c.render_notebook()

# Group by city and compute the average salary

avg_salary = df.groupby('城市')['最低薪资'].mean()

CityType = avg_salary.index.tolist()

CityNum = [int(a) for a in avg_salary.values.tolist()]

avg_salary_1 = df.groupby('城市')['最高薪资'].mean()

CityType_1 = avg_salary_1.index.tolist()

CityNum_1 = [int(a) for a in avg_salary_1.values.tolist()]

from pyecharts.charts import Bar

# Create a bar chart instance
c = (
    Bar()
    .add_xaxis(CityType)
    .add_yaxis("", CityNum)
    .set_global_opts(
        title_opts=opts.TitleOpts(title="各大城市Python低平均薪资"),
        visualmap_opts=opts.VisualMapOpts(
            dimension=1,
            pos_right="5%",
            max_=30,
            is_inverse=True,
        ),
        # Rotate the x-axis labels by 45 degrees
        xaxis_opts=opts.AxisOpts(axislabel_opts=opts.LabelOpts(rotate=45)),
    )
    .set_series_opts(
        label_opts=opts.LabelOpts(is_show=False),
        markline_opts=opts.MarkLineOpts(
            data=[
                opts.MarkLineItem(type_="min", name="最小值"),
                opts.MarkLineItem(type_="max", name="最大值"),
                opts.MarkLineItem(type_="average", name="平均值"),
            ]
        ),
    )
)
c.render_notebook()

# Create a bar chart instance

c = (
    Bar()
    .add_xaxis(CityType_1)
    .add_yaxis("", CityNum_1)
    .set_global_opts(
        title_opts=opts.TitleOpts(title="各大城市Python高平均薪资"),
        visualmap_opts=opts.VisualMapOpts(
            dimension=1,
            pos_right="5%",
            max_=30,
            is_inverse=True,
        ),
        # Rotate the x-axis labels by 45 degrees
        xaxis_opts=opts.AxisOpts(axislabel_opts=opts.LabelOpts(rotate=45)),
    )
    .set_series_opts(
        label_opts=opts.LabelOpts(is_show=False),
        markline_opts=opts.MarkLineOpts(
            data=[
                opts.MarkLineItem(type_="min", name="最小值"),
                opts.MarkLineItem(type_="max", name="最大值"),
                opts.MarkLineItem(type_="average", name="平均值"),
            ]
        ),
    )
)
c.render_notebook()

### Conclusions

1. Most postings require a junior college diploma (大专) or above
2. Salaries: roughly 8,000 to 25,000 yuan per month
3. Salaries are somewhat higher in Beijing, Shanghai, and Guangzhou

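### How to put together a simple visual analysis

1. Scrape the complete data set with a crawler --> spreadsheet / database
2. Read the file contents
3. Count the records in each category
4. Draw the chart with a visualization module: <use the code templates from the official documentation>

import pandas as pd

# Read the data
df = pd.read_csv('data.csv')

# Show the first five rows
df.head()

c_type = df['公司性质'].value_counts().index.to_list()  # category names
c_num = df['公司性质'].value_counts().to_list()  # number of records per category
c_type

from pyecharts.charts import Bar  # bar chart from pyecharts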

from pyecharts.faker import Faker  # random sample data generator
from pyecharts.globals import ThemeType  # theme settings

c = (
    Bar({"theme": ThemeType.MACARONS})  # theme
    .add_xaxis(c_type)  # x-axis data
    .add_yaxis("", c_num)  # y-axis data
    .set_global_opts(
        # chart title
        title_opts={"text": "Python招聘企业公司性质分布", "subtext": "民营', '已上市', '外资(非欧美)', '合资', '国企', '外资(欧美)', '事业单位'"}
    )
    # save as an HTML file
    # .render("bar_base_dict_config.html")
)

# print(Faker.choose())  # ['小米', '三星', '华为', '苹果', '魅族', 'VIVO', 'OPPO'] category names
# print(Faker.values())  # [38, 54, 20, 85, 71, 22, 38] counts

c.render_notebook()  # display directly in Jupyter
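Step 3, counting the records in each category, is the part that feeds every chart in this post. As a pandas-free illustration of what `value_counts()` produces, the same two lists can be built with `collections.Counter`. The `company_types` list is a made-up sample column, not scraped data.

```python
from collections import Counter

# Made-up sample of the '公司性质' column
company_types = ['民营', '民营', '国企', '合资', '民营', '国企']

# most_common() sorts by count in descending order, like value_counts()
counts = Counter(company_types).most_common()

c_type = [name for name, _ in counts]   # category names for the x-axis
c_num = [num for _, num in counts]      # record counts for the y-axis

print(c_type)
print(c_num)
```

The resulting `c_type` and `c_num` lists have the same shape as the ones passed to `add_xaxis` and `add_yaxis` in the bar chart, so either way of counting can feed the chart template.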