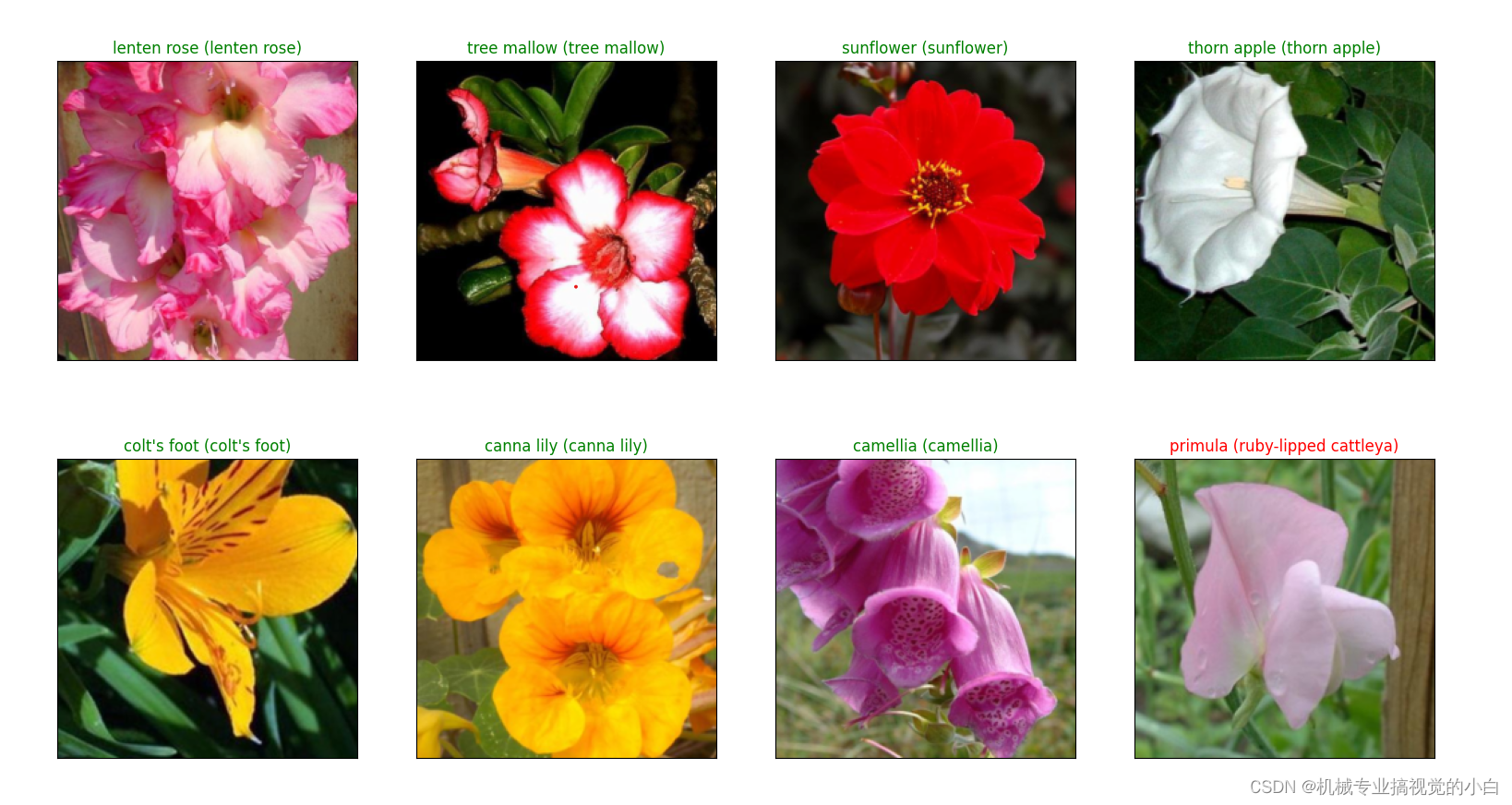

作品效果:识别给出花朵图片的种类。

主要软件:pycharm

技术:VGG神经网络

特色:实现在web进行花朵识别

运行环境:torch=1.12.1+cu116,torchvision=0.13.1+cu116

步骤:

下载数据集:在data_set文件夹下创建新文件夹"flower_data",点击链接下载花分类数据集 https://storage.googleapis.com/download.tensorflow.org/example_images/flower_photos.tgz,解压数据集到flower_data文件夹下,执行"split_data.py"脚本自动将数据集划分成训练集train和验证集val

import os

from shutil import copy, rmtree

import randomdef mk_file(file_path: str):if os.path.exists(file_path):# 如果文件夹存在,则先删除原文件夹在重新创建rmtree(file_path)os.makedirs(file_path)def main():# 保证随机可复现random.seed(0)# 将数据集中10%的数据划分到验证集中split_rate = 0.1# 指向你解压后的flower_photos文件夹cwd = os.getcwd()data_root = os.path.join(cwd, "flower_data")origin_flower_path = os.path.join(data_root, "flower_photos")assert os.path.exists(origin_flower_path), "path '{}' does not exist.".format(origin_flower_path)flower_class = [cla for cla in os.listdir(origin_flower_path)if os.path.isdir(os.path.join(origin_flower_path, cla))]# 建立保存训练集的文件夹train_root = os.path.join(data_root, "train")mk_file(train_root)for cla in flower_class:# 建立每个类别对应的文件夹mk_file(os.path.join(train_root, cla))# 建立保存验证集的文件夹val_root = os.path.join(data_root, "val")mk_file(val_root)for cla in flower_class:# 建立每个类别对应的文件夹mk_file(os.path.join(val_root, cla))for cla in flower_class:cla_path = os.path.join(origin_flower_path, cla)images = os.listdir(cla_path)num = len(images)# 随机采样验证集的索引eval_index = random.sample(images, k=int(num*split_rate))for index, image in enumerate(images):if image in eval_index:# 将分配至验证集中的文件复制到相应目录image_path = os.path.join(cla_path, image)new_path = os.path.join(val_root, cla)copy(image_path, new_path)else:# 将分配至训练集中的文件复制到相应目录image_path = os.path.join(cla_path, image)new_path = os.path.join(train_root, cla)copy(image_path, new_path)print("\r[{}] processing [{}/{}]".format(cla, index+1, num), end="") # processing barprint()print("processing done!")if __name__ == '__main__':main()

- 建立模型 model

根据vgg16模型建立,在model.py文件中实现,make_features函数确定卷积层和池化层的顺序和参数(卷积核大小,步长等),classifier 确定全连接分类的参数,并初始化权重和前向传播。

import torch.nn as nn

import torch# official pretrain weights

model_urls = {'vgg11': 'https://download.pytorch.org/models/vgg11-bbd30ac9.pth','vgg13': 'https://download.pytorch.org/models/vgg13-c768596a.pth','vgg16': 'https://download.pytorch.org/models/vgg16-397923af.pth','vgg19': 'https://download.pytorch.org/models/vgg19-dcbb9e9d.pth'

}class VGG(nn.Module):def __init__(self, features, num_classes=1000, init_weights=False):# net = vgg(model_name=model_name, num_classes=5, init_weights=True)super(VGG, self).__init__()self.features = features # 由make_features函数确定,处理特征值的方法# features确定卷积和池化的操作顺序,.classifier 确定全连接分类的参数self.classifier = nn.Sequential(# 卷积后的结果是512x7x7,输入512*7*7xbatch_size,输出4096xbatch_size# 一个有序的容器,神经网络模块将按照在传入构造器的顺序依次被添加到计算图中执行,# 同时以神经网络模块为元素的有序字典也可以作为传入参数。nn.Linear(512 * 7 * 7, 4096), # 全连接层fc,矩阵展平nn.ReLU(True),nn.Dropout(p=0.5), # 防止过拟合nn.Linear(4096, 4096),nn.ReLU(True),nn.Dropout(p=0.5),nn.Linear(4096, num_classes))if init_weights:self._initialize_weights()# 前向传播def forward(self, x):# N x 3 x 224 x 224x = self.features(x) # 卷积提取特征# N x 512 x 7 x 7x = torch.flatten(x, start_dim=1) # 展平# N x 512*7*7x = self.classifier(x) # 全连接分类return xdef _initialize_weights(self): # 初始化各层权重for m in self.modules():if isinstance(m, nn.Conv2d): # 判断是否相同# nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='relu')nn.init.xavier_uniform_(m.weight) # 初始化权重if m.bias is not None:nn.init.constant_(m.bias, 0)elif isinstance(m, nn.Linear):nn.init.xavier_uniform_(m.weight)# nn.init.normal_(m.weight, 0, 0.01)nn.init.constant_(m.bias, 0)# 确定每一层是卷积层还是池化,及其参数(卷积核大小,步长等)

def make_features(cfg: list):layers = []in_channels = 3for v in cfg: # 遍历层表if v == "M":layers += [nn.MaxPool2d(kernel_size=2, stride=2)]else:conv2d = nn.Conv2d(in_channels, v, kernel_size=3, padding=1)# nn.Conv2d(in_channels=3,out_channels=64,kernel_size=4,stride=2,padding=1)layers += [conv2d, nn.ReLU(True)]in_channels = vreturn nn.Sequential(*layers)cfgs = { # M代表池化,数字代表卷积出的个数'vgg11': [64, 'M', 128, 'M', 256, 256, 'M', 512, 512, 'M', 512, 512, 'M'],'vgg13': [64, 64, 'M', 128, 128, 'M', 256, 256, 'M', 512, 512, 'M', 512, 512, 'M'],'vgg16': [64, 64, 'M', 128, 128, 'M', 256, 256, 256, 'M', 512, 512, 512, 'M', 512, 512, 512, 'M'],'vgg19': [64, 64, 'M', 128, 128, 'M', 256, 256, 256, 256, 'M', 512, 512, 512, 512, 'M', 512, 512, 512, 512, 'M'],

}# 确定运行的是哪一个版本的vgg

def vgg(model_name="vgg16", **kwargs):assert model_name in cfgs, "Warning: model number {} not in cfgs dict!".format(model_name)cfg = cfgs[model_name] # 确定运行的是哪一个版本的vggmodel = VGG(make_features(cfg), **kwargs)# cfg经过make_features后,已经确定每一层的分布return model

- 训练train

把数据集的照片进行处理得到向量,花种类名称写入文件class_indices.json中,确定batch_size = 8,运用模型进行训练,反向传播计算梯度,不断更新权重,最终计算损失函数,保存损失最小的模型权重,到vgg16Net.pth文件中。

import os

import sys

import jsonimport torch

import torch.nn as nn

from torchvision import transforms, datasets

# torchvision.datasets: 一些加载数据的函数及常用的数据集接口;

# torchvision.models: 包含常用的模型结构(含预训练模型),例如AlexNet、VGG、ResNet等;

# torchvision.transforms: 常用的图片变换,例如裁剪、旋转等;

import torch.optim as optim

from tqdm import tqdmfrom model import vggdef main():device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")print("using {} device.".format(device))# 定义一个把数据集的照片处理得到向量的操作data_transform = { # transforms.Compose这个类的主要作用是串联多个图片变换的操作。"train": transforms.Compose([transforms.RandomResizedCrop(224),# transforms.RandomResizedCrop(224)将给定图像随机裁剪为不同的大小和宽高比,# 然后缩放所裁剪得到的图像为制定的大小;(即先随机采集,然后对裁剪得到的图像缩放为同一大小),默认scale=(0.08, 1.0)transforms.RandomHorizontalFlip(),# transforms.RandomHorizontalFlip 随机水平翻转transforms.ToTensor(), # transforms.ToTensor() 将给定图像转为Tensor# transforms.Normalize() 归一化处理transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))]),"val": transforms.Compose([transforms.Resize((224, 224)),transforms.ToTensor(),transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])}data_root = os.path.abspath(os.path.join(os.getcwd(), "../..")) # get data root pathimage_path = os.path.join(data_root, "data_set", "flower_data") # flower data set pathassert os.path.exists(image_path), "{} path does not exist.".format(image_path)# 把数据集的照片处理得到向量train_dataset = datasets.ImageFolder(root=os.path.join(image_path, "train"),transform=data_transform["train"])# datasets.ImageFolder# root:图片存储的根目录,即各类别文件夹所在目录的上一级目录,在下面的例子中是’./ data / train /’。# transform:对图片进行预处理的操作(函数),原始图片作为输入,返回一个转换后的图片。# target_transform:对图片类别进行预处理的操作,输入为# target,输出对其的转换。如果不传该参数,即对target不做任何转换,返回的顺序索引0, 1, 2…# loader:表示数据集加载方式,通常默认加载方式即可。# 生成的dataset 有以下成员变量:# self.classes:用一个list保存类别名称# self.class_to_idx:类别对应的索引,与不做任何转换返回的 对应# self.imgs:保存(img - path,class ) tuple的 list,train_num = len(train_dataset)# {'daisy':0, 'dandelion':1, 'roses':2, 'sunflower':3, 'tulips':4}flower_list = train_dataset.class_to_idxcla_dict = dict((val, key) for key, val in flower_list.items()) # 保存花种类名称# write dict into json filejson_str = json.dumps(cla_dict, indent=4) # 花种类名称写入文件with open('class_indices.json', 'w') as json_file:json_file.write(json_str)batch_size = 8 # 每批次训练8个nw = min([os.cpu_count(), batch_size if batch_size > 1 else 0, 8]) # number of workers# num_workers 用多少个子进程加载数据print('Using {} dataloader workers every process'.format(nw))# torch.utils.data.DataLoader# 该接口主要用来将自定义的数据读取接口的输出或者PyTorch已有的数据读取接口的输入按照batch size封装成Tensor,# 后续只需要再包装成Variable即可作为模型的输入,因此该接口有点承上启下的作用,比较重要## 参数:# dataset(Dataset) – 加载数据的数据集。# batch_size(int, optional) – 每个batch加载多少个样本(默认: 1)。# shuffle(bool, optional) – 设置为True时会在每个epoch重新打乱数据(默认: False).# sampler(Sampler, optional) – 定义从数据集中提取样本的策略,即生成index的方式,可以顺序也可以乱序# num_workers(int, optional) – 用多少个子进程加载数据。0表示数据将在主进程中加载(默认: 0)train_loader = torch.utils.data.DataLoader(train_dataset,batch_size=batch_size, shuffle=True,num_workers=nw)# 处理验证集validate_dataset = datasets.ImageFolder(root=os.path.join(image_path, "val"),transform=data_transform["val"])val_num = len(validate_dataset)validate_loader = torch.utils.data.DataLoader(validate_dataset,batch_size=batch_size, shuffle=False,num_workers=nw)print("using {} images for training, {} images for validation.".format(train_num,val_num))# test_data_iter = iter(validate_loader)# test_image, test_label = test_data_iter.next()# 开始确定网络模型model_name = "vgg16"net = vgg(model_name=model_name, num_classes=5, init_weights=True)# vgg(model_name="vgg16", **kwargs):model = VGG(make_features(model_name), **kwargs)## VGG(self, features, num_classes=1000, init_weights=False):net.to(device)# 这行代码的意思是将所有最开始读取数据时的tensor变量copy一份到device所指定的GPU上去,之后的运算都在GPU上进行loss_function = nn.CrossEntropyLoss()# nn.CrossEntropyLoss是pytorch下的交叉熵损失,用于分类任务使用optimizer = optim.Adam(net.parameters(), lr=0.0001)# 为了使用torch.optim,需先构造一个优化器对象Optimizer,用来保存当前的状态,并能够根据计算得到的梯度来更新参数。# 要构建一个优化器optimizer,你必须给它一个可进行迭代优化的包含了所有参数(所有的参数必须是变量s)的列表。 然后,您可以指定程序优化特定的选项,例如学习速率,权重衰减等。# 训练次数epochs = 30best_acc = 0.0 # 最好准确率save_path = './{}Net.pth'.format(model_name)train_steps = len(train_loader) # 训练集的个数for epoch in range(epochs):# trainnet.train() # 开始训练# b) model.train() :启用 BatchNormalization 和Dropout。# 在模型测试阶段使用model.train() 让model变成训练模式,此时dropout和batch# normalization的操作在训练q起到防止网络过拟合的问题。running_loss = 0.0 # 损失# Tqdm 是一个快速,可扩展的Python进度条,可以在 Python 长循环中添加一个进度提示信息,# 用户只需要封装任意的迭代器 tqdm(iterator)。train_bar = tqdm(train_loader, file=sys.stdout)for step, data in enumerate(train_bar):images, labels = dataoptimizer.zero_grad() # 一些优化算法,如共轭梯度和LBFGS需要重新评估目标函数多次,必须清除梯度outputs = net(images.to(device)) # 输出是预测值loss = loss_function(outputs, labels.to(device)) # 损失,通过预测和标签计算loss.backward() # 反向传播计算梯度optimizer.step() # step()方法来对所有的参数进行更新# print statisticsrunning_loss += loss.item()train_bar.desc = "train epoch[{}/{}] loss:{:.3f}".format(epoch + 1,epochs,loss)# validatenet.eval() # 开始验证,计算准确率# a) model.eval(),不启用# BatchNormalization和Dropout。此时pytorch会自动把BN和DropOut固定住,不会取平均,而是用训练好的值。# 不然的话,一旦test的batch_size过小,很容易就会因BN层导致模型performance损失较大;acc = 0.0 # accumulate accurate number / epochwith torch.no_grad():val_bar = tqdm(validate_loader, file=sys.stdout)for val_data in val_bar:val_images, val_labels = val_data # 获取验证数据outputs = net(val_images.to(device))predict_y = torch.max(outputs, dim=1)[1] # 预测值的种类的索引# output = torch.max(input, dim)# 输入# input是softmax函数输出的一个tensor# dim是max函数索引的维度0 / 1,0是每列的最大值,1是每行的最大值# 输出# 函数会返回两个tensor,第一个tensor是每行的最大值;第二个tensor是每行最大值的索引。# 对两个张量Tensor进行逐元素的比较,若相同位置的两个元素相同,则返回True;若不同,返回False。acc += torch.eq(predict_y, val_labels.to(device)).sum().item()val_accurate = acc / val_num # 预测准确个数/总数print('[epoch %d] train_loss: %.3f val_accuracy: %.3f' %(epoch + 1, running_loss / train_steps, val_accurate))if val_accurate > best_acc: # 保留最好的准确率(模型)best_acc = val_accuratetorch.save(net.state_dict(), save_path)print('Finished Training')if __name__ == '__main__':main()

- 预测 predict

读入待预测文件,进行处理得到向量,加载训练好的权重vgg16Net.pth,运行模型,得到每个种类的得分,最大值视为预测结果。

import os

import jsonimport torch

from PIL import Image

from torchvision import transforms

import matplotlib.pyplot as pltfrom model import vggdef main():device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")data_transform = transforms.Compose([transforms.Resize((224, 224)),transforms.ToTensor(),transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])# load imageimg_path = "image/tulip.jpg"# img_path = "image/1.jpg"# img_path = "image/roses.jpg"# img_path = "image/zpp.jpg"# img_path = "image/zx4.jpg"# img_path = "image/sunflowersP.jpg"# img_path = "image/sunflowers.jpg"# img_path = "image/dandelion.jpg"# img_path = "image/daisy.jpg"assert os.path.exists(img_path), "file: '{}' dose not exist.".format(img_path)img = Image.open(img_path)plt.imshow(img)# [N, C, H, W]img = data_transform(img) # 处理测试图片为张量# expand batch dimensionimg = torch.unsqueeze(img, dim=0)# unsqueeze()函数起升维的作用,参数表示在哪个地方加一个维度。# 在第一个维度(中括号)的每个元素加中括号 0表示在张 量最外层加一个中括号变成第一维。# 图像的向量为一个列表,大小是224*224,unsqueeze后变为1 x 224*224的张量# read class_indictjson_path = './class_indices.json' # 读取花种类名称,字典格式assert os.path.exists(json_path), "file: '{}' dose not exist.".format(json_path)with open(json_path, "r") as f:class_indict = json.load(f)# create modelmodel = vgg(model_name="vgg16", num_classes=5).to(device)# load model weightsweights_path = "./vgg16Net.pth" # 加载训练好的权重assert os.path.exists(weights_path), "file: '{}' dose not exist.".format(weights_path)# 把权重填入模型,# map_location=torch.device('cpu'),意思是映射到cpu上,在cpu上加载模型,无论你这个模型从哪里训练保存的。# 一句话:map_location适用于修改模型能在gpu上运行还是cpu上运行model.load_state_dict(torch.load(weights_path, map_location=device))model.eval() # 开始验证,不启用 BatchNormalization 和 Dropout。with torch.no_grad():# predict classoutput = torch.squeeze(model(img.to(device))).cpu()# queeze()# 函数的功能是维度压缩。返回一个tensor(张量),其中input中大小为1的所有维都已删除。## 举个例子:如果 input的形状为(A×1×B×C×1×D),那么返回的tensor的形状则为(A×B×C×D)predict = torch.softmax(output, dim=0)# torch.nn.Softmax中只要一个参数:来制定归一化维度如果是dim=0指代的是行,dim=1指代的是列。predict_cla = torch.argmax(predict).numpy() # 返回最大值的索引,也就是预测种类的名称# torch.argmax(input, dim=None, keepdim=False)# 返回指定维度最大值的序号# dim给定的定义是:the demention to reduce.也就是把dim这个维度的,变成这个维度的最大值的index。# 打印种类名字和正确概率print_res = "class: {} prob: {:.3}".format(class_indict[str(predict_cla)],predict[predict_cla].numpy())plt.title(print_res)# 分别打印每个种类的可能概率for i in range(len(predict)):print("class: {:10} prob: {:.3}".format(class_indict[str(i)],predict[i].numpy()))plt.show()if __name__ == '__main__':main()

特色:实现在web进行花朵识别

- 使用方式:在代码的本地文件夹处输入cmd进入终端,activate切换所需的虚拟环境,pip install streamlit下载所需要的库,streamlit run app.py 指令运行

- 代码app.py,调用streamlit库,传入预测结果,并显示在web上

实现结果: