文章目录

- 1、Zookeeper 入门

- 1.1 概述

- 1.2 特点

- 1.3 数据结构

- 1.4 应用场景

- 2、本地安装

- 2.1 本地模式安装

- 2.2 配置参数解读

- 3、集群操作

- 3.1 集群操作

- 3.1.1 集群安装

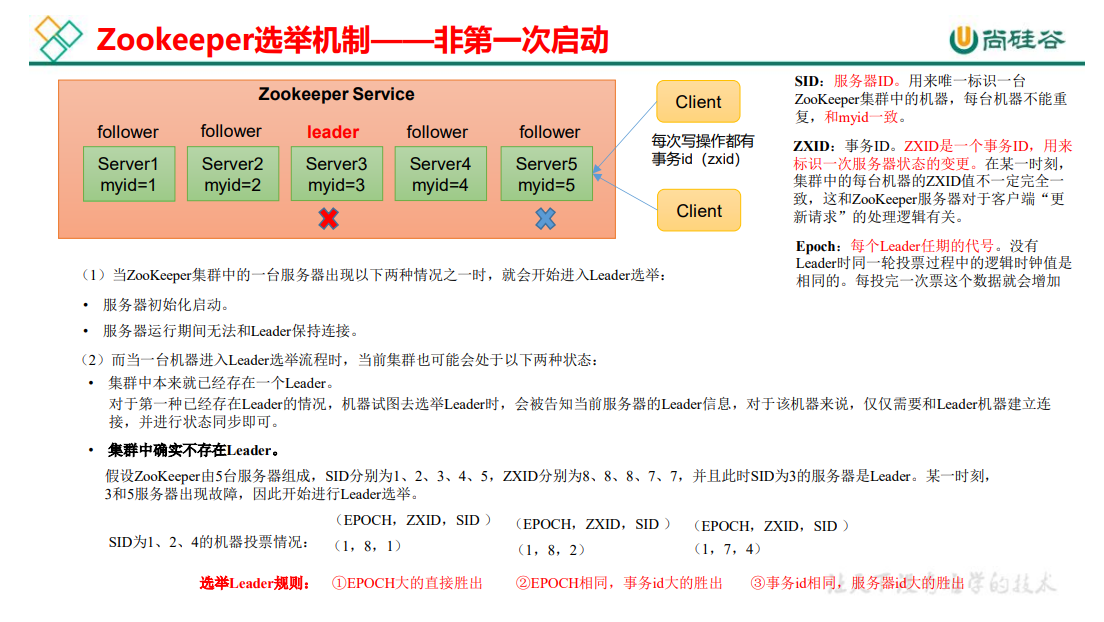

- 3.1.2 选举机制(面试重点)

- 3.1.3 集群启停脚本

- 3.2 客户端命令行操作

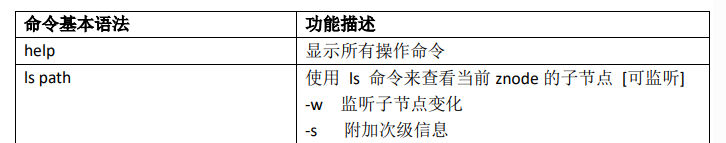

- 3.2.1 命令行语法

- 3.2.2 znode 节点数据信息

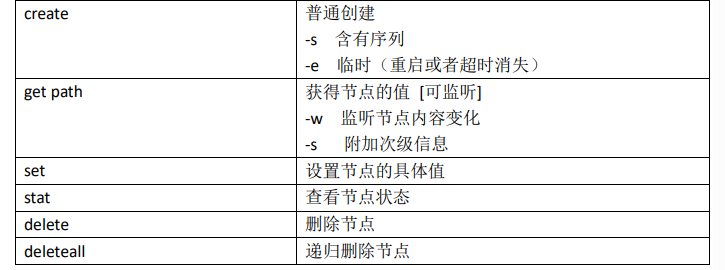

- 3.2.3 节点类型(持久/短暂/有序号/无序号)

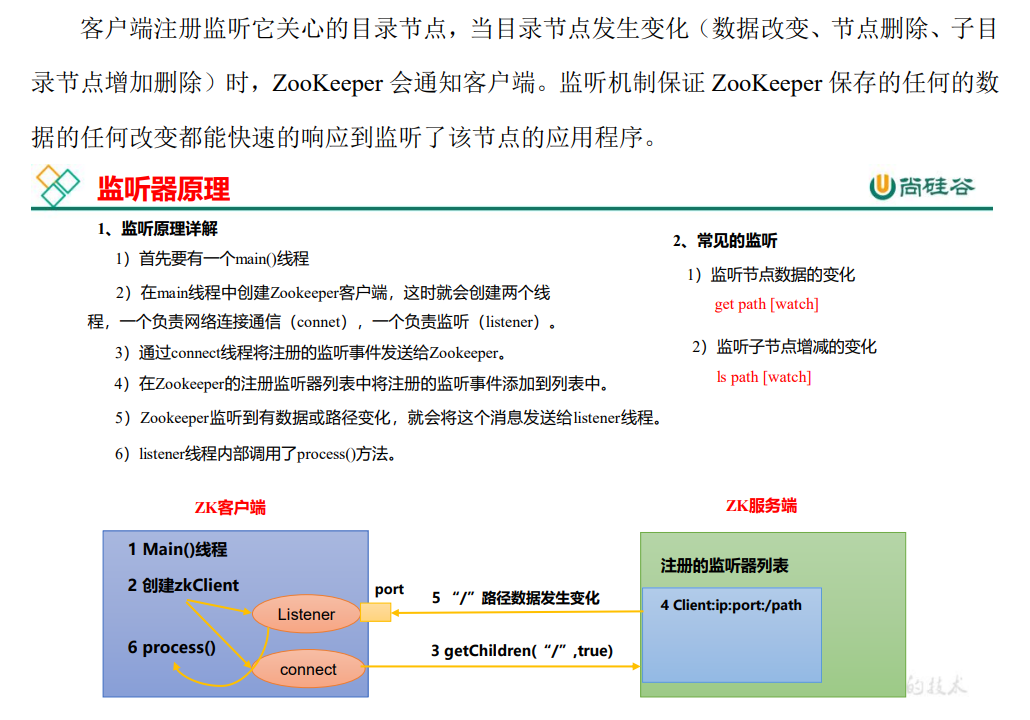

- 3.2.4 监听器原理

- 3.2.5 节点删除与查看

- 3.3 客户端API操作

- 3.3.1 IDEA环境搭建

- 3.3.2 创建zookeeper客户端

- 3.3.3 创建子节点

- 3.3.4 获取子节点并监听节点变化

- 3.3.5 判断Znode是否存在

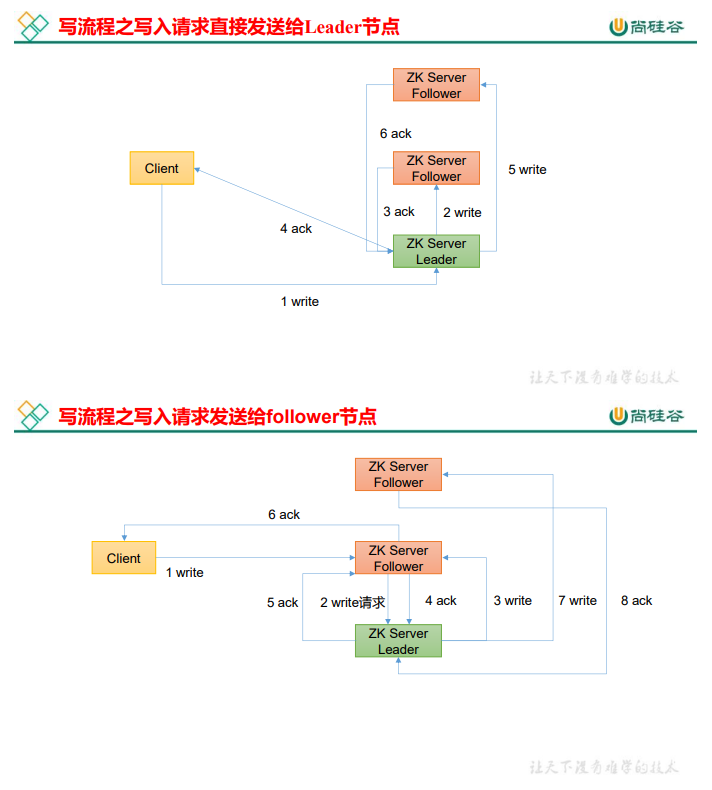

- 3.4 客户端向服务端写数据流程

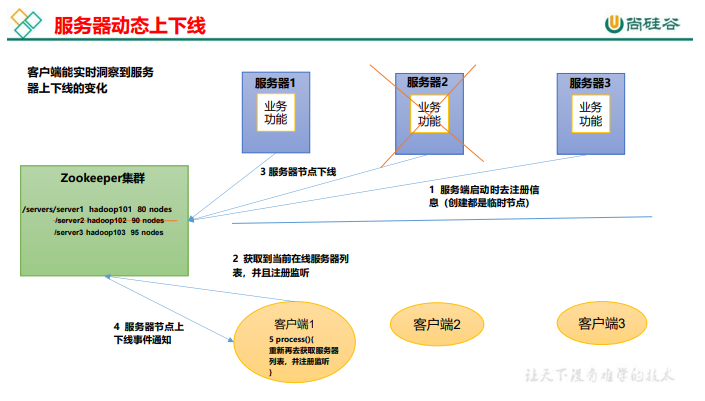

- 4、服务器动态上下线监听案例

- 4.1 需求

- 4.2 需求分析

- 4.3 具体实现

- 4.4 测试

- 5、Zookeeper分布式锁案例

- 5.1 原生Zookeeper实现分布式锁案例

- 5.2 Curator 框架实现分布式锁案例

- 6、企业面试真题

1、Zookeeper 入门

1.1 概述

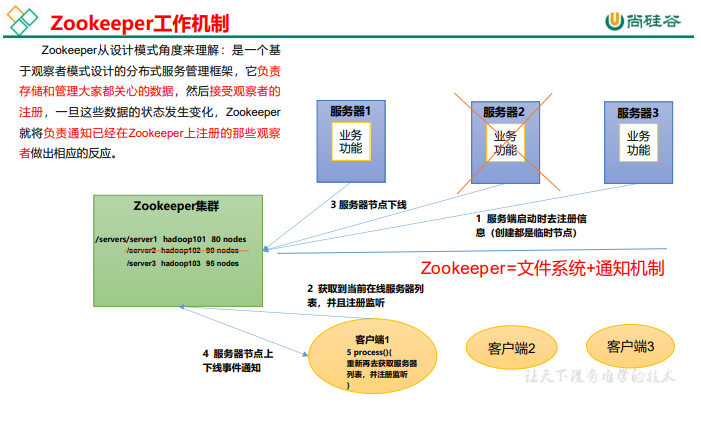

Zookeeper 是一个开源的分布式的,为分布式框架提供协调服务的 Apache 项目。

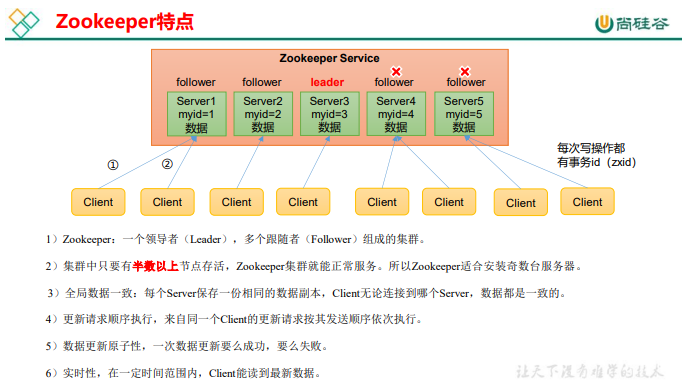

1.2 特点

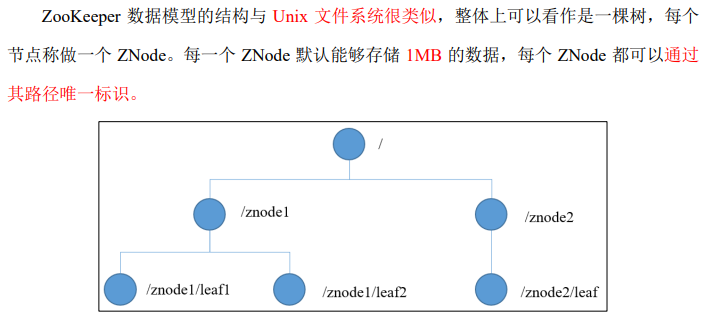

1.3 数据结构

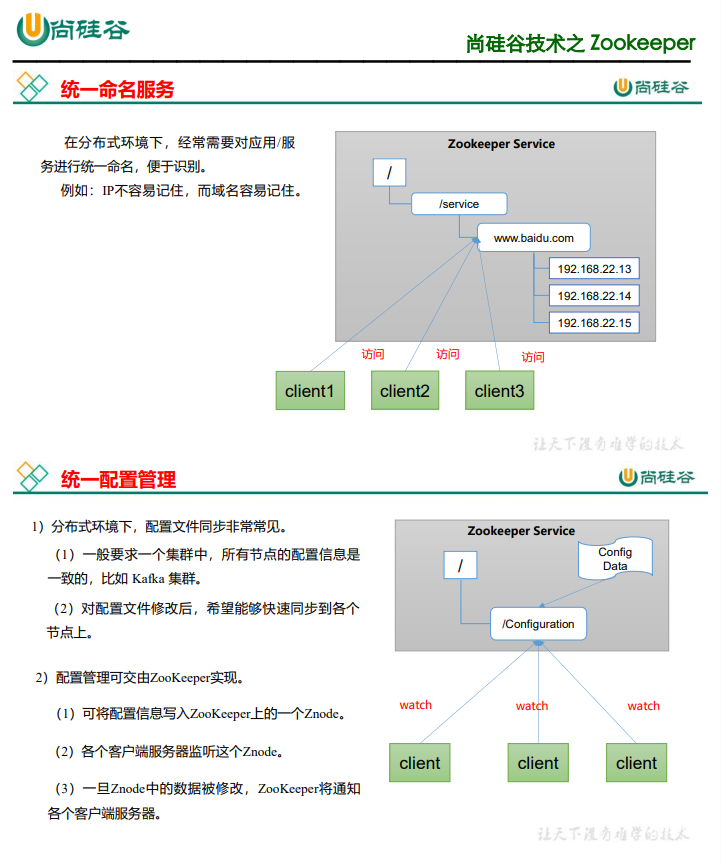

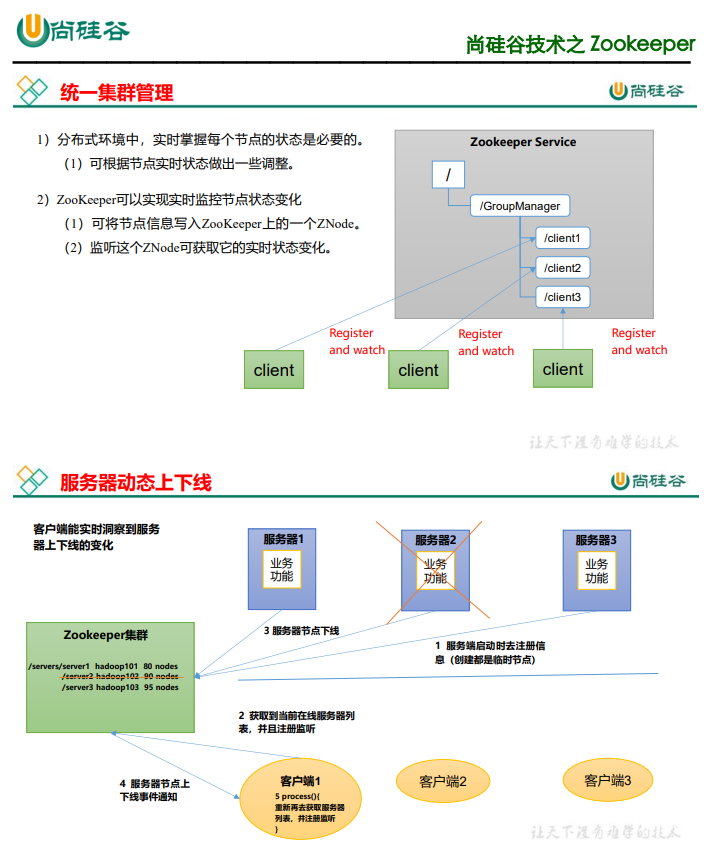

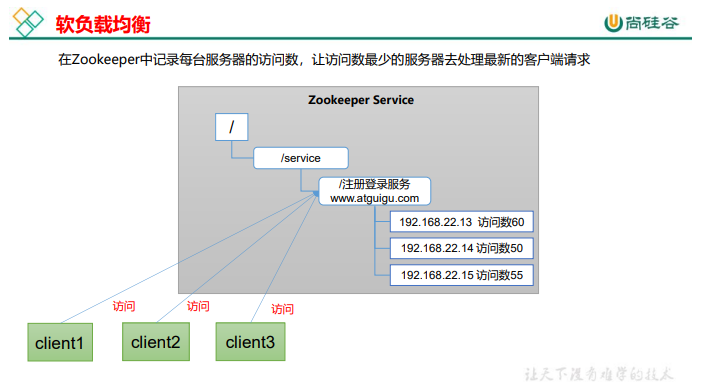

1.4 应用场景

提供的服务包括:统一命名服务、统一配置管理、统一集群管理、服务器节点动态上下

线、软负载均衡等。

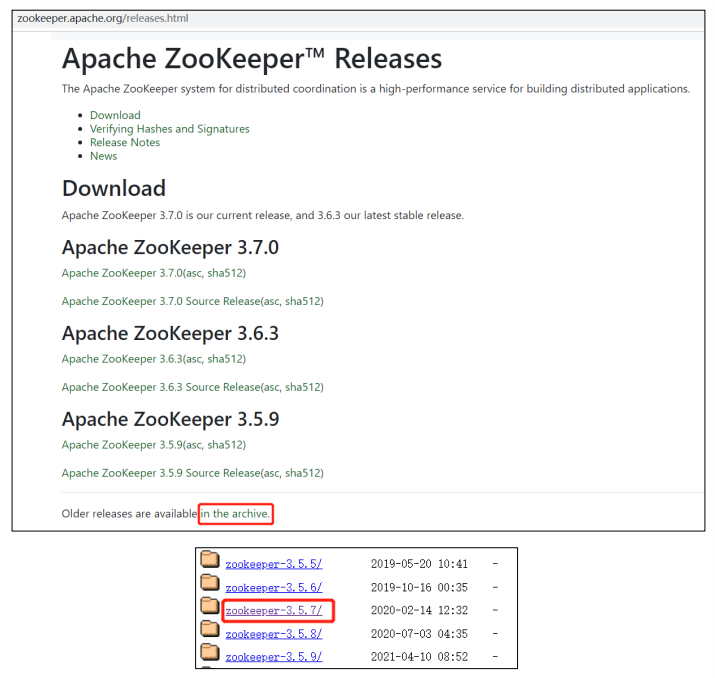

2、本地安装

2.1 本地模式安装

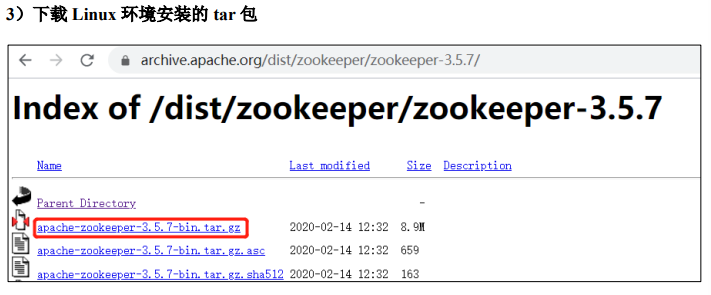

1)安装前准备

(1)安装 JDK

(2)拷贝 apache-zookeeper-3.5.7-bin.tar.gz 安装包到 Linux 系统下

(3)解压到指定目录

[lln@hadoop102 software]$ tar -zxvf apache-zookeeper-3.5.7-bin.tar.gz -C /opt/module/

(4) Rename the directory

[lln@hadoop102 software]$ cd /opt/module/

[lln@hadoop102 module]$ mv apache-zookeeper-3.5.7-bin zookeeper-3.5.7

2) Edit the configuration

(1) In /opt/module/zookeeper-3.5.7/conf, rename zoo_sample.cfg to zoo.cfg:

[lln@hadoop102 conf]$ mv zoo_sample.cfg zoo.cfg

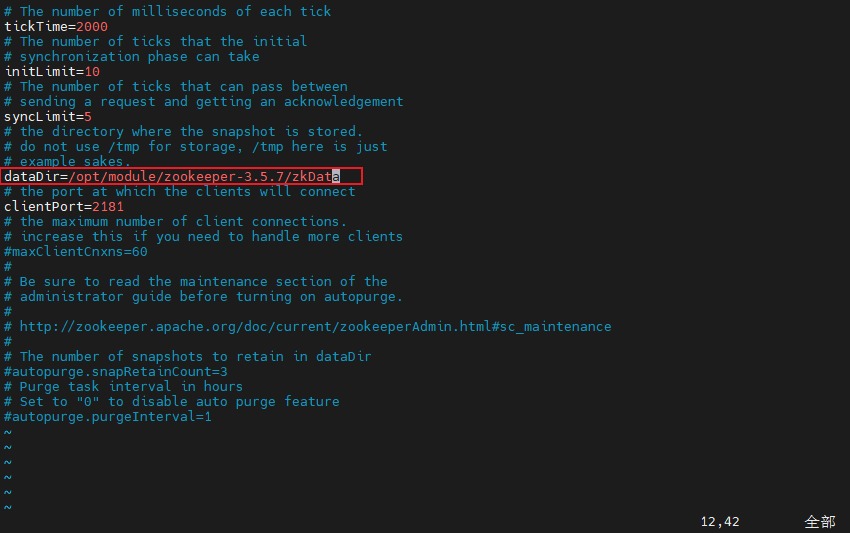

(2) Open zoo.cfg and change the dataDir path:

[atguigu@hadoop102 conf]$ vim zoo.cfg

Change the following setting:

dataDir=/opt/module/zookeeper-3.5.7/zkData

(3) Create the zkData directory under /opt/module/zookeeper-3.5.7/

[lln@hadoop102 zookeeper-3.5.7]$ mkdir zkData

3) Operate Zookeeper

(1) Start Zookeeper

[atguigu@hadoop102 zookeeper-3.5.7]$ bin/zkServer.sh start

(2) Check that the process has started

[atguigu@hadoop102 zookeeper-3.5.7]$ jps

4020 Jps

4001 QuorumPeerMain

(3) Check the status

[atguigu@hadoop102 zookeeper-3.5.7]$ bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /opt/module/zookeeper-3.5.7/bin/../conf/zoo.cfg

Mode: standalone

(4) Start the client

[atguigu@hadoop102 zookeeper-3.5.7]$ bin/zkCli.sh

(5) Exit the client:

[zk: localhost:2181(CONNECTED) 0] quit

(6) Stop Zookeeper

[atguigu@hadoop102 zookeeper-3.5.7]$ bin/zkServer.sh stop

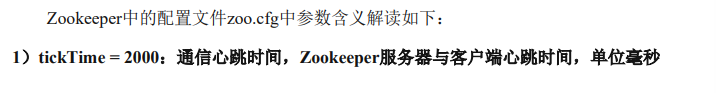

2.2 Configuration Parameters Explained

3. Cluster Operations

3.1 Cluster Operations

3.1.1 Cluster Installation

1) Cluster plan

Deploy Zookeeper on all three nodes: hadoop102, hadoop103, and hadoop104.

2) Extract and install

(1) On hadoop102, extract the Zookeeper package to /opt/module/

[atguigu@hadoop102 software]$ tar -zxvf apache-zookeeper-3.5.7-bin.tar.gz -C /opt/module/

(2) Rename apache-zookeeper-3.5.7-bin to zookeeper-3.5.7

[atguigu@hadoop102 module]$ mv apache-zookeeper-3.5.7-bin/ zookeeper-3.5.7

3) Configure the server ID

(1) Create zkData under /opt/module/zookeeper-3.5.7/

[atguigu@hadoop102 zookeeper-3.5.7]$ mkdir zkData

(2) Create a file named myid in /opt/module/zookeeper-3.5.7/zkData

[atguigu@hadoop102 zkData]$ vim myid

In the file, add the number that corresponds to this server (no blank lines above or below it, and no spaces on either side):

2

Note: create the myid file on Linux itself; if you create it in Notepad++, it may well end up garbled.

(3) Copy the configured zookeeper directory to the other machines

[atguigu@hadoop102 module]$ xsync zookeeper-3.5.7

Then change the content of the myid file to 3 on hadoop103 and to 4 on hadoop104.

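4) Configure zoo.cfg

(1) Rename zoo_sample.cfg in /opt/module/zookeeper-3.5.7/conf to zoo.cfg

[atguigu@hadoop102 conf]$ mv zoo_sample.cfg zoo.cfg

(2) Open zoo.cfg

[atguigu@hadoop102 conf]$ vim zoo.cfg

```properties
# Change the data storage path
dataDir=/opt/module/zookeeper-3.5.7/zkData

# Add the following configuration
#######################cluster##########################
server.2=hadoop102:2888:3888
server.3=hadoop103:2888:3888
server.4=hadoop104:2888:3888
```

(3) Parameter explanation

server.A=B:C:D

A is a number that identifies which server this is.

In cluster mode, a file named myid is placed in the dataDir directory. It contains a single value, which is the value of A. When Zookeeper starts, it reads this file and compares the value with the configuration in zoo.cfg to determine which server it is.

B is this server's address.

C is the port this server, as a Follower, uses to exchange information with the cluster's Leader.

D is the election port: if the cluster's Leader goes down, a new Leader must be elected, and this is the port the servers use to communicate with each other during that election.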
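To make the server.A=B:C:D format and the myid lookup concrete, here is a minimal sketch that parses such lines and picks the entry whose A matches the value read from myid. It is plain Java written for illustration, not ZooKeeper's own config parser; the class and method names are hypothetical, and it ignores optional extras that real zoo.cfg server lines can carry (such as a role suffix or a client port).

```java
import java.util.Arrays;
import java.util.List;

/** Hypothetical holder for one parsed "server.A=B:C:D" line. */
class ServerEntry {
    final int id;           // A: server number, compared against myid
    final String host;      // B: server address
    final int quorumPort;   // C: port Followers use to talk to the Leader
    final int electionPort; // D: port used during leader election

    ServerEntry(int id, String host, int quorumPort, int electionPort) {
        this.id = id;
        this.host = host;
        this.quorumPort = quorumPort;
        this.electionPort = electionPort;
    }
}

class ServerConfigSketch {
    /** Parses "server.2=hadoop102:2888:3888"; returns null for any other line. */
    static ServerEntry parseLine(String line) {
        String trimmed = line.trim();
        if (!trimmed.startsWith("server.")) {
            return null;
        }
        int eq = trimmed.indexOf('=');
        int id = Integer.parseInt(trimmed.substring("server.".length(), eq));
        String[] parts = trimmed.substring(eq + 1).split(":");
        return new ServerEntry(id, parts[0],
                Integer.parseInt(parts[1]), Integer.parseInt(parts[2]));
    }

    /** Returns the entry whose A equals the number stored in myid. */
    static ServerEntry whoAmI(List<String> cfgLines, String myidContent) {
        int myid = Integer.parseInt(myidContent.trim());
        for (String line : cfgLines) {
            ServerEntry entry = parseLine(line);
            if (entry != null && entry.id == myid) {
                return entry;
            }
        }
        throw new IllegalStateException("myid " + myid + " has no server." + myid + " line");
    }

    public static void main(String[] args) {
        List<String> cfg = Arrays.asList(
                "dataDir=/opt/module/zookeeper-3.5.7/zkData",
                "#######################cluster##########################",
                "server.2=hadoop102:2888:3888",
                "server.3=hadoop103:2888:3888",
                "server.4=hadoop104:2888:3888");
        // hadoop103's myid file contains 3
        ServerEntry me = whoAmI(cfg, "3\n");
        System.out.println(me.host + " " + me.quorumPort + " " + me.electionPort); // hadoop103 2888 3888
    }
}
```

This is also why the myid file must contain nothing but the number: the value is trimmed and parsed as an integer here, and stray characters would make the lookup fail.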

(4) Sync the zoo.cfg file

[atguigu@hadoop102 conf]$ xsync zoo.cfg

5) Operate the cluster

(1) Start Zookeeper on each node

[atguigu@hadoop102 zookeeper-3.5.7]$ bin/zkServer.sh start

[atguigu@hadoop103 zookeeper-3.5.7]$ bin/zkServer.sh start

[atguigu@hadoop104 zookeeper-3.5.7]$ bin/zkServer.sh start

(2) Check the status

[atguigu@hadoop102 zookeeper-3.5.7]$ bin/zkServer.sh status

JMX enabled by default

Using config: /opt/module/zookeeper-3.5.7/bin/../conf/zoo.cfg

Mode: follower

[atguigu@hadoop103 zookeeper-3.5.7]$ bin/zkServer.sh status

JMX enabled by default

Using config: /opt/module/zookeeper-3.5.7/bin/../conf/zoo.cfg

Mode: leader

[atguigu@hadoop104 zookeeper-3.5.7]$ bin/zkServer.sh status

JMX enabled by default

Using config: /opt/module/zookeeper-3.5.7/bin/../conf/zoo.cfg

Mode: follower

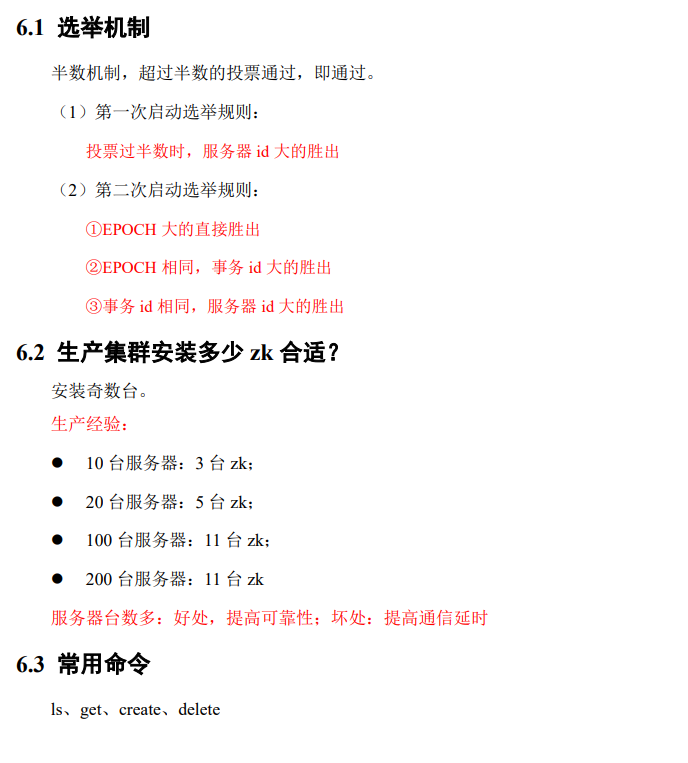

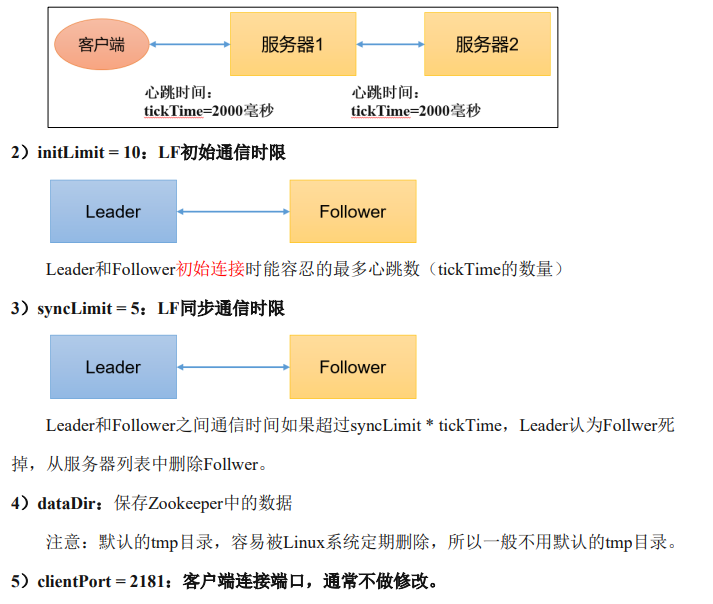

3.1.2 Leader Election (Key Interview Topic)

3.1.3 Cluster Start/Stop Script

1) Create the script in /home/atguigu/bin on hadoop102

[atguigu@hadoop102 bin]$ vim zk.sh

Put the following in the script:

```bash
#!/bin/bash

case $1 in
"start"){
	for i in hadoop102 hadoop103 hadoop104
	do
		echo ---------- zookeeper $i start ------------
		ssh $i "/opt/module/zookeeper-3.5.7/bin/zkServer.sh start"
	done
};;
"stop"){
	for i in hadoop102 hadoop103 hadoop104
	do
		echo ---------- zookeeper $i stop ------------
		ssh $i "/opt/module/zookeeper-3.5.7/bin/zkServer.sh stop"
	done
};;
"status"){
	for i in hadoop102 hadoop103 hadoop104
	do
		echo ---------- zookeeper $i status ------------
		ssh $i "/opt/module/zookeeper-3.5.7/bin/zkServer.sh status"
	done
};;
esac
```

2) Make the script executable

[atguigu@hadoop102 bin]$ chmod u+x zk.sh

3) Start the Zookeeper cluster with the script

[atguigu@hadoop102 module]$ zk.sh start

4) Stop the Zookeeper cluster with the script

[atguigu@hadoop102 module]$ zk.sh stop

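3.2 Client Command-Line Operations

3.2.1 Command-Line Syntax

1) Start the client

[atguigu@hadoop102 zookeeper-3.5.7]$ bin/zkCli.sh -server hadoop102:2181

2) List all available commands

[zk: hadoop102:2181(CONNECTED) 1] help

3.2.2 znode Data and Metadata

1) List what the current znode contains

[zk: hadoop102:2181(CONNECTED) 0] ls /

[zookeeper]

2) Show the current node's detailed metadata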

[zk: hadoop102:2181(CONNECTED) 5] ls -s /

[zookeeper]

cZxid = 0x0

ctime = Thu Jan 01 08:00:00 CST 1970

mZxid = 0x0

mtime = Thu Jan 01 08:00:00 CST 1970

pZxid = 0x0

cversion = -1

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 0

numChildren = 1

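(1) cZxid: the zxid of the transaction that created the node

Every change to ZooKeeper state produces a ZooKeeper transaction ID (zxid). zxids define a total order over all changes in ZooKeeper: every change has a unique zxid, and if zxid1 is smaller than zxid2, then zxid1 happened before zxid2.

(2) ctime: the time the znode was created, in milliseconds since 1970

(3) mZxid: the zxid of the transaction that last modified the znode

(4) mtime: the time the znode was last modified, in milliseconds since 1970

(5) pZxid: the zxid of the last change to the znode's children

(6) cversion: the child version number of the znode, i.e. how many times its children have changed

(7) dataVersion: the data version number of the znode

(8) aclVersion: the version number of the znode's access control list

(9) ephemeralOwner: for an ephemeral node, the session id of the znode's owner; for any other node, 0.

(10) dataLength: the length of the znode's data

(11) numChildren: the number of children the znode has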
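Two of these fields are easy to misread in the raw output, so here is a small sketch for them. It assumes the zxid layout used by ZooKeeper servers, where the high 32 bits hold the leader epoch and the low 32 bits a counter within that epoch; under that layout 0x100000003 means epoch 1, change 3. It also checks ephemeralOwner as described in (9). The class and method names are hypothetical, and the hex strings are parsed in the form zkCli prints them.

```java
class StatFieldsSketch {
    /** High 32 bits of a zxid: the leader epoch. */
    static long epoch(long zxid) {
        return zxid >>> 32;
    }

    /** Low 32 bits of a zxid: the counter within that epoch. */
    static long counter(long zxid) {
        return zxid & 0xFFFFFFFFL;
    }

    /** A smaller zxid means the change happened earlier (the total order described above). */
    static boolean happenedBefore(long zxid1, long zxid2) {
        return zxid1 < zxid2;
    }

    /** Parses a hex field as printed by zkCli, e.g. "0x100000003". */
    static long parseHex(String value) {
        return Long.parseUnsignedLong(value.trim().substring(2), 16);
    }

    /** ephemeralOwner is the owner's session id for ephemeral nodes and 0 otherwise. */
    static boolean isEphemeral(String ephemeralOwner) {
        return parseHex(ephemeralOwner) != 0L;
    }

    public static void main(String[] args) {
        long parent = parseHex("0x100000003"); // cZxid of /sanguo in the get -s example
        long child = parseHex("0x100000004");  // cZxid of /sanguo/shuguo
        System.out.println("epoch=" + epoch(parent) + ", counter=" + counter(parent)); // epoch=1, counter=3
        System.out.println(happenedBefore(parent, child)); // true
        System.out.println(isEphemeral("0x0"));            // false
    }
}
```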

3.2.3 Node Types (Persistent/Ephemeral, Sequential/Non-Sequential)

1) Create two regular nodes (persistent, non-sequential)

[zk: localhost:2181(CONNECTED) 3] create /sanguo "diaochan"

Created /sanguo

[zk: localhost:2181(CONNECTED) 4] create /sanguo/shuguo "liubei"

Created /sanguo/shuguo

Note: assign a value when you create a node.

2) Get a node's value

[zk: localhost:2181(CONNECTED) 5] get -s /sanguo

diaochan

cZxid = 0x100000003

ctime = Wed Aug 29 00:03:23 CST 2018

mZxid = 0x100000003

mtime = Wed Aug 29 00:03:23 CST 2018

pZxid = 0x100000004

cversion = 1

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 7

numChildren = 1

[zk: localhost:2181(CONNECTED) 6] get -s /sanguo/shuguo

liubei

cZxid = 0x100000004

ctime = Wed Aug 29 00:04:35 CST 2018

mZxid = 0x100000004

mtime = Wed Aug 29 00:04:35 CST 2018

pZxid = 0x100000004

cversion = 0

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 6

numChildren = 0

3) Create sequential nodes (persistent, sequential)

(1) First create a regular parent node, /sanguo/weiguo

[zk: localhost:2181(CONNECTED) 1] create /sanguo/weiguo "caocao"

Created /sanguo/weiguo

(2) Create sequential nodes

[zk: localhost:2181(CONNECTED) 2] create -s /sanguo/weiguo/zhangliao "zhangliao"

Created /sanguo/weiguo/zhangliao0000000000

[zk: localhost:2181(CONNECTED) 3] create -s /sanguo/weiguo/zhangliao "zhangliao"

Created /sanguo/weiguo/zhangliao0000000001

[zk: localhost:2181(CONNECTED) 4] create -s /sanguo/weiguo/xuchu "xuchu"

Created /sanguo/weiguo/xuchu0000000002

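If the parent has no sequential children yet, numbering starts at 0 and increases by one each time. If the parent already has 2 children, numbering continues from 2, and so on. The counter belongs to the parent and is shared by all of its children, which is why xuchu received 0000000002 even though it has a different name.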
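The numbering rule above can be modeled in a few lines. This is a simplified, in-memory sketch, not ZooKeeper code: it assumes one counter per parent that advances on every child creation (sequential or not) and that the suffix is the counter zero-padded to 10 digits, as in the output above. Deletions are deliberately not modeled, and the class name is hypothetical.

```java
import java.util.HashSet;
import java.util.Locale;
import java.util.Set;

class SequentialNodeSketch {
    private final String parentPath;
    private final Set<String> children = new HashSet<>();
    private int counter = 0; // one counter per parent, shared by all of its children

    SequentialNodeSketch(String parentPath) {
        this.parentPath = parentPath;
    }

    /** Mimics "create [-s] parent/name": returns the path that was actually created. */
    String create(String name, boolean sequential) {
        String child = sequential
                ? name + String.format(Locale.ENGLISH, "%010d", counter)
                : name;
        if (!children.add(child)) {
            throw new IllegalStateException("Node already exists: " + parentPath + "/" + child);
        }
        counter++; // every child creation advances the counter
        return parentPath + "/" + child;
    }

    public static void main(String[] args) {
        SequentialNodeSketch weiguo = new SequentialNodeSketch("/sanguo/weiguo");
        System.out.println(weiguo.create("zhangliao", true)); // /sanguo/weiguo/zhangliao0000000000
        System.out.println(weiguo.create("zhangliao", true)); // /sanguo/weiguo/zhangliao0000000001
        System.out.println(weiguo.create("xuchu", true));     // /sanguo/weiguo/xuchu0000000002
    }
}
```

The fixed 10-digit padding matters later: it makes a plain string sort of sibling names with the same prefix equal to a sort by sequence number, which the distributed lock in section 5 relies on.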

4) Create ephemeral nodes (ephemeral, non-sequential or sequential)

(1) Create an ephemeral, non-sequential node

[zk: localhost:2181(CONNECTED) 7] create -e /sanguo/wuguo "zhouyu"

Created /sanguo/wuguo

(2) Create an ephemeral, sequential node

[zk: localhost:2181(CONNECTED) 2] create -e -s /sanguo/wuguo "zhouyu"

Created /sanguo/wuguo0000000001

(3) The nodes are visible from the current client

[zk: localhost:2181(CONNECTED) 3] ls /sanguo

[wuguo, wuguo0000000001, shuguo]

(4) Exit the current client and start it again

[zk: localhost:2181(CONNECTED) 12] quit

[atguigu@hadoop104 zookeeper-3.5.7]$ bin/zkCli.sh

(5) List the node again: the ephemeral nodes have been deleted

[zk: localhost:2181(CONNECTED) 0] ls /sanguo

[shuguo]

5) Change a node's data

[zk: localhost:2181(CONNECTED) 6] set /sanguo/weiguo "simayi"

3.2.4 How Watchers Work

1) Watching a node's data for changes

(1) On hadoop104, register a watch on changes to the data of /sanguo

[zk: localhost:2181(CONNECTED) 26] get -w /sanguo

(2) On hadoop103, modify the data of /sanguo

[zk: localhost:2181(CONNECTED) 1] set /sanguo "xisi"

(3) Observe the data-change notification received on hadoop104

WATCHER::

WatchedEvent state:SyncConnected type:NodeDataChanged

path:/sanguo

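Note: if you modify /sanguo on hadoop103 again, hadoop104 does not receive another notification, because a registered watch fires only once. To keep watching, you must register the watch again.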
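The one-shot behavior can be reproduced without a server. The sketch below is a hypothetical in-memory model (not the ZooKeeper client API): reading with a watch arms a callback for that path, and the next data change fires every armed callback and then removes it, so a second change goes unnoticed unless someone registers again.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Consumer;

class OneShotWatchSketch {
    private final Map<String, String> data = new HashMap<>();
    private final Map<String, List<Consumer<String>>> dataWatches = new HashMap<>();

    /** Like "get -w path": returns the value and arms a one-time watch. */
    String getAndWatch(String path, Consumer<String> watcher) {
        dataWatches.computeIfAbsent(path, p -> new ArrayList<>()).add(watcher);
        return data.get(path);
    }

    /** Like "set path value": updates the data, then fires and discards the armed watches. */
    void set(String path, String value) {
        data.put(path, value);
        List<Consumer<String>> fired = dataWatches.remove(path); // removed: watches are one-shot
        if (fired != null) {
            for (Consumer<String> w : fired) {
                w.accept("NodeDataChanged " + path);
            }
        }
    }

    public static void main(String[] args) {
        OneShotWatchSketch zk = new OneShotWatchSketch();
        List<String> events = new ArrayList<>();
        zk.set("/sanguo", "diaochan");
        zk.getAndWatch("/sanguo", events::add); // register once (hadoop104)
        zk.set("/sanguo", "xisi");              // first change: the watch fires
        zk.set("/sanguo", "xishi");             // second change: nothing is armed, nothing fires
        System.out.println(events);             // [NodeDataChanged /sanguo]
    }
}
```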

2) Watching a node's children for changes (path changes)

(1) On hadoop104, register a watch on changes to the children of /sanguo

[zk: localhost:2181(CONNECTED) 1] ls -w /sanguo

[shuguo, weiguo]

(2) On hadoop103, create a child node under /sanguo

[zk: localhost:2181(CONNECTED) 2] create /sanguo/jin "simayi"

Created /sanguo/jin

(3) Observe the child-change notification received on hadoop104

WATCHER::

WatchedEvent state:SyncConnected type:NodeChildrenChanged

path:/sanguo

Note: a watch on path changes is also one-shot: registered once, it fires once. To have it fire repeatedly, register it again each time.
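The usual way to "register it again each time" is to re-arm the watch from inside the callback itself, which is what the Java client in 3.3.4 does by calling getChildren("/", true) in process(). The sketch below models that pattern in memory; ChildWatcher and the class are hypothetical stand-ins, not ZooKeeper's Watcher API.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.TreeSet;

class RearmingChildWatchSketch {
    /** Hypothetical callback type standing in for ZooKeeper's Watcher. */
    interface ChildWatcher {
        void process(String event);
    }

    private final Map<String, TreeSet<String>> children = new HashMap<>();
    private final Map<String, List<ChildWatcher>> childWatches = new HashMap<>();

    /** Like "ls -w path": returns the children and arms a one-time child watch. */
    List<String> getChildren(String path, ChildWatcher watcher) {
        childWatches.computeIfAbsent(path, p -> new ArrayList<>()).add(watcher);
        return new ArrayList<>(children.computeIfAbsent(path, p -> new TreeSet<>()));
    }

    /** Like "create parent/child": adds the child, then fires and drops the watches on parent. */
    void create(String parent, String child) {
        children.computeIfAbsent(parent, p -> new TreeSet<>()).add(child);
        List<ChildWatcher> fired = childWatches.remove(parent);
        if (fired != null) {
            for (ChildWatcher w : fired) {
                w.process("NodeChildrenChanged " + parent);
            }
        }
    }

    /** Registers a watcher that re-arms itself, then creates two children. */
    static List<String> demo() {
        RearmingChildWatchSketch zk = new RearmingChildWatchSketch();
        List<String> snapshots = new ArrayList<>();
        ChildWatcher rearming = new ChildWatcher() {
            @Override
            public void process(String event) {
                // Re-register from inside the callback so the next change is also seen
                snapshots.add(zk.getChildren("/sanguo", this).toString());
            }
        };
        zk.getChildren("/sanguo", rearming);
        zk.create("/sanguo", "jin");
        zk.create("/sanguo", "shuguo");
        return snapshots;
    }

    public static void main(String[] args) {
        System.out.println(demo()); // [[jin], [jin, shuguo]]
    }
}
```

Without the getChildren call inside process(), only the first snapshot would be recorded.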

3.2.5 Deleting and Inspecting Nodes

1) Delete a node

[zk: localhost:2181(CONNECTED) 4] delete /sanguo/jin

2) Delete a node recursively

[zk: localhost:2181(CONNECTED) 15] deleteall /sanguo/shuguo

3) View a node's status

[zk: localhost:2181(CONNECTED) 17] stat /sanguo

cZxid = 0x100000003

ctime = Wed Aug 29 00:03:23 CST 2018

mZxid = 0x100000011

mtime = Wed Aug 29 00:21:23 CST 2018

pZxid = 0x100000014

cversion = 9

dataVersion = 1

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 4

numChildren = 1

3.3 Client API Operations

Prerequisite: the Zookeeper cluster must be running on hadoop102, hadoop103, and hadoop104.

3.3.1 Setting Up the IDEA Environment

1) Create a project named zookeeper

2) Add the following dependencies to the pom file

```xml
<dependencies>
    <dependency>
        <groupId>junit</groupId>
        <artifactId>junit</artifactId>
        <version>RELEASE</version>
    </dependency>
    <dependency>
        <groupId>org.apache.logging.log4j</groupId>
        <artifactId>log4j-core</artifactId>
        <version>2.8.2</version>
    </dependency>
    <dependency>
        <groupId>org.apache.zookeeper</groupId>
        <artifactId>zookeeper</artifactId>
        <version>3.5.7</version>
    </dependency>
</dependencies>
```

3) Add a log4j.properties file to the root of the project's classpath

In the project's src/main/resources directory, create a file named "log4j.properties" with the following content:

```properties
log4j.rootLogger=INFO, stdout
log4j.appender.stdout=org.apache.log4j.ConsoleAppender
log4j.appender.stdout.layout=org.apache.log4j.PatternLayout
log4j.appender.stdout.layout.ConversionPattern=%d %p [%c] - %m%n
log4j.appender.logfile=org.apache.log4j.FileAppender
log4j.appender.logfile.File=target/spring.log
log4j.appender.logfile.layout=org.apache.log4j.PatternLayout
log4j.appender.logfile.layout.ConversionPattern=%d %p [%c] - %m%n
```

4) Create a package named com.atguigu.zk

5) Create a class named zkClient

3.3.2 Creating a ZooKeeper Client

3.3.3 Creating a Child Node

3.3.4 Getting Child Nodes and Watching for Changes

```java
package com.xxxx.lln;

import org.apache.zookeeper.*;
import org.junit.Before;
import org.junit.Test;

import java.io.IOException;
import java.util.List;

public class zkClient {

    // No spaces between the host:port entries
    private String connectString = "hadoop102:2181,hadoop103:2181,hadoop104:2181";
    private int sessionTimeout = 2000;
    private ZooKeeper zkClient;

    @Before
    public void init() throws IOException {
        zkClient = new ZooKeeper(connectString, sessionTimeout, new Watcher() {
            public void process(WatchedEvent watchedEvent) {
            }
        });
    }

    // Create a child node
    // Arg 1: path of the node to create; arg 2: node data; arg 3: node ACL; arg 4: node type
    @Test
    public void create() throws KeeperException, InterruptedException {
        String nodeCreated = zkClient.create("/atguigu", "ss.avi".getBytes(), ZooDefs.Ids.OPEN_ACL_UNSAFE, CreateMode.PERSISTENT);
    }

    // Get child nodes and watch for changes
    @Test
    public void getChildren() throws KeeperException, InterruptedException {
        List<String> children = zkClient.getChildren("/", true);
        for (String child : children) {
            System.out.println(child);
        }
        // Block so that the watch has time to fire
        Thread.sleep(Long.MAX_VALUE);
    }
}
```

Because a watch fires only once, the next version moves the listing into process(): each notification calls getChildren("/", true) again, which prints the current children and registers a new watch.

```java
package com.xxxx.lln;

import org.apache.zookeeper.*;
import org.junit.Before;
import org.junit.Test;

import java.io.IOException;
import java.util.List;

public class zkClient {

    // No spaces between the host:port entries
    private String connectString = "hadoop102:2181,hadoop103:2181,hadoop104:2181";
    private int sessionTimeout = 2000;
    private ZooKeeper zkClient;

    @Before
    public void init() throws IOException {
        zkClient = new ZooKeeper(connectString, sessionTimeout, new Watcher() {
            public void process(WatchedEvent watchedEvent) {
                List<String> children = null;
                try {
                    // Print the children and register the watch again
                    children = zkClient.getChildren("/", true);
                    for (String child : children) {
                        System.out.println(child);
                    }
                } catch (KeeperException e) {
                    e.printStackTrace();
                } catch (InterruptedException e) {
                    e.printStackTrace();
                }
            }
        });
    }

    // Create a child node
    // Arg 1: path of the node to create; arg 2: node data; arg 3: node ACL; arg 4: node type
    @Test
    public void create() throws KeeperException, InterruptedException {
        String nodeCreated = zkClient.create("/atguigu", "ss.avi".getBytes(), ZooDefs.Ids.OPEN_ACL_UNSAFE, CreateMode.PERSISTENT);
    }

    // Get child nodes and watch for changes
    @Test
    public void getChildren() throws InterruptedException, KeeperException {
        // List<String> children = zkClient.getChildren("/", true);
        // for (String child : children) {
        //     System.out.println(child);
        // }
        // Block so that the watch has time to fire
        Thread.sleep(Long.MAX_VALUE);
    }
}
```

3.3.5 Checking Whether a znode Exists

```java
package com.xxxx.lln;

import org.apache.zookeeper.*;
import org.apache.zookeeper.data.Stat;
import org.junit.Before;
import org.junit.Test;

import java.io.IOException;
import java.util.List;

public class zkClient {

    // No spaces between the host:port entries
    private String connectString = "hadoop102:2181,hadoop103:2181,hadoop104:2181";
    private int sessionTimeout = 2000;
    private ZooKeeper zkClient;

    @Before
    public void init() throws IOException {
        zkClient = new ZooKeeper(connectString, sessionTimeout, new Watcher() {
            public void process(WatchedEvent watchedEvent) {
                List<String> children = null;
                try {
                    // Print the children and register the watch again
                    children = zkClient.getChildren("/", true);
                    for (String child : children) {
                        System.out.println(child);
                    }
                } catch (KeeperException e) {
                    e.printStackTrace();
                } catch (InterruptedException e) {
                    e.printStackTrace();
                }
            }
        });
    }

    // Create a child node
    // Arg 1: path of the node to create; arg 2: node data; arg 3: node ACL; arg 4: node type
    @Test
    public void create() throws KeeperException, InterruptedException {
        String nodeCreated = zkClient.create("/atguigu", "ss.avi".getBytes(), ZooDefs.Ids.OPEN_ACL_UNSAFE, CreateMode.PERSISTENT);
    }

    // Get child nodes and watch for changes
    @Test
    public void getChildren() throws InterruptedException, KeeperException {
        // List<String> children = zkClient.getChildren("/", true);
        // for (String child : children) {
        //     System.out.println(child);
        // }
        // Block so that the watch has time to fire
        Thread.sleep(Long.MAX_VALUE);
    }

    // Check whether a znode exists (exists() returns null when it does not)
    @Test
    public void exist() throws Exception {
        Stat stat = zkClient.exists("/atguigu", false);
        System.out.println(stat == null ? "not exist" : "exist");
    }
}
```

3.4 Write Request Flow from Client to Server

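4. Case Study: Watching Servers Go Online and Offline

4.1 Requirements

In a distributed system there can be multiple master nodes, which may go online and offline dynamically. Every client must be able to detect, in real time, when a master node server goes online or offline.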
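In the implementation that follows, the client prints the full server list on every notification. If you also want to know *which* server changed, one option is to compare consecutive snapshots. This is a minimal sketch of that comparison; the helper names are hypothetical and not part of the case's code, and the inputs are assumed to be hostname lists like the one DistributeClient builds from the node data.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Set;
import java.util.TreeSet;

class ServerListDiffSketch {
    /** Hosts in the new snapshot but not the old one: servers that came online. */
    static Set<String> cameOnline(List<String> before, List<String> now) {
        Set<String> result = new TreeSet<>(now);
        result.removeAll(before);
        return result;
    }

    /** Hosts in the old snapshot but not the new one: servers that went offline. */
    static Set<String> wentOffline(List<String> before, List<String> now) {
        Set<String> result = new TreeSet<>(before);
        result.removeAll(now);
        return result;
    }

    public static void main(String[] args) {
        List<String> before = Arrays.asList("hadoop102", "hadoop103");
        List<String> now = Arrays.asList("hadoop103", "hadoop104");
        System.out.println("online=" + cameOnline(before, now) + " offline=" + wentOffline(before, now));
        // online=[hadoop104] offline=[hadoop102]
    }
}
```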

4.2 Requirements Analysis

4.3 Implementation

(1) First create the /servers node on the cluster

[zk: localhost:2181(CONNECTED) 10] create /servers "servers"

Created /servers

(2) Create a package in IDEA: com.atguigu.case1

(3) Server-side code that registers with Zookeeper

```java
package com.xxxx.lln.case1;

import org.apache.zookeeper.*;

import java.io.IOException;

public class DistributeServer {

    private String connectString = "hadoop102:2181,hadoop103:2181,hadoop104:2181";
    private int sessionTimeout = 2000;
    private ZooKeeper zk = null;

    public static void main(String[] args) throws IOException, KeeperException, InterruptedException {
        DistributeServer server = new DistributeServer();
        // Get a zk connection
        server.getConnect();
        // Use the zk connection to register this server
        server.regist(args[0]);
        // Start the business logic
        server.business();
    }

    // Business logic
    private void business() throws InterruptedException {
        Thread.sleep(Long.MAX_VALUE);
    }

    // Register the server
    private void regist(String hostname) throws KeeperException, InterruptedException {
        // Arg 1: path of the node to create; arg 2: node data; arg 3: node ACL; arg 4: node type
        zk.create("/servers/" + hostname, hostname.getBytes(), ZooDefs.Ids.OPEN_ACL_UNSAFE, CreateMode.EPHEMERAL_SEQUENTIAL);
        System.out.println(hostname + " is online");
    }

    // Create the client connection to zk
    private void getConnect() throws IOException {
        zk = new ZooKeeper(connectString, sessionTimeout, new Watcher() {
            public void process(WatchedEvent watchedEvent) {
            }
        });
    }
}
```

(4) Client code

```java
package com.xxxx.lln.case1;

import org.apache.zookeeper.KeeperException;
import org.apache.zookeeper.WatchedEvent;
import org.apache.zookeeper.Watcher;
import org.apache.zookeeper.ZooKeeper;

import java.io.IOException;
import java.util.ArrayList;
import java.util.List;

public class DistributeClient {

    private String connectString = "hadoop102:2181,hadoop103:2181,hadoop104:2181";
    private int sessionTimeout = 2000;
    private ZooKeeper zk = null;

    public static void main(String[] args) throws Exception {
        DistributeClient client = new DistributeClient();
        // 1. Get a zk connection
        client.getConnect();
        // 2. Watch for children being added to or removed from /servers
        client.getServerList();
        // 3. Start the business process
        client.business();
    }

    // Business logic
    public void business() throws Exception {
        System.out.println("client is working ...");
        Thread.sleep(Long.MAX_VALUE);
    }

    private void getServerList() throws KeeperException, InterruptedException {
        // 1. Get the server child nodes and watch the parent node
        List<String> children = zk.getChildren("/servers", true);
        // 2. List that holds the server information
        ArrayList<String> servers = new ArrayList<String>();
        // 3. Iterate over all children and read the hostname stored in each one
        for (String child : children) {
            byte[] data = zk.getData("/servers/" + child, false, null);
            servers.add(new String(data));
        }
        // 4. Print the server list
        System.out.println(servers);
    }

    // Create the client connection to zk
    private void getConnect() throws IOException {
        zk = new ZooKeeper(connectString, sessionTimeout, new Watcher() {
            public void process(WatchedEvent watchedEvent) {
                // Register the watch again
                try {
                    getServerList();
                } catch (KeeperException e) {
                    e.printStackTrace();
                } catch (InterruptedException e) {
                    e.printStackTrace();
                }
            }
        });
    }
}
```

4.4 Testing

1) Add and remove servers from the Linux command line

(1) Start the DistributeClient client

(2) In the zk client on hadoop102, create ephemeral sequential nodes under /servers

[zk: localhost:2181(CONNECTED) 1] create -e -s /servers/hadoop102 "hadoop102"

[zk: localhost:2181(CONNECTED) 2] create -e -s /servers/hadoop103 "hadoop103"

(3) Watch the output in the IDEA console

[hadoop102, hadoop103]

(4) Delete one of the nodes

[zk: localhost:2181(CONNECTED) 8] delete /servers/hadoop1020000000000

(5) Watch the output in the IDEA console

[hadoop103]

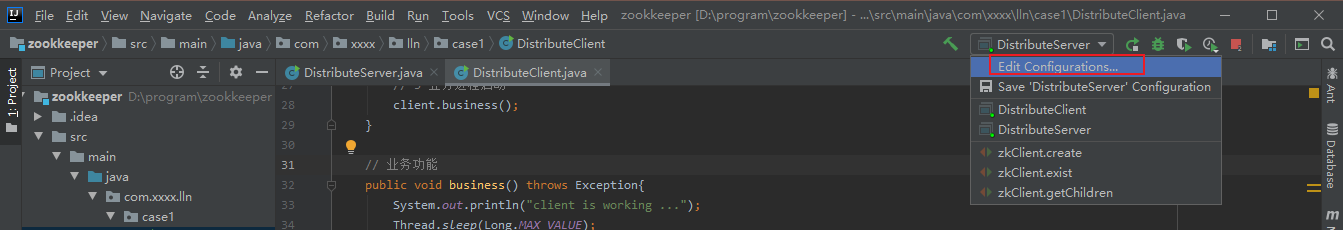

2)在 Idea 上操作增加减少服务器

(1)启动 DistributeClient 客户端(如果已经启动过,不需要重启)

(2)启动 DistributeServer 服务

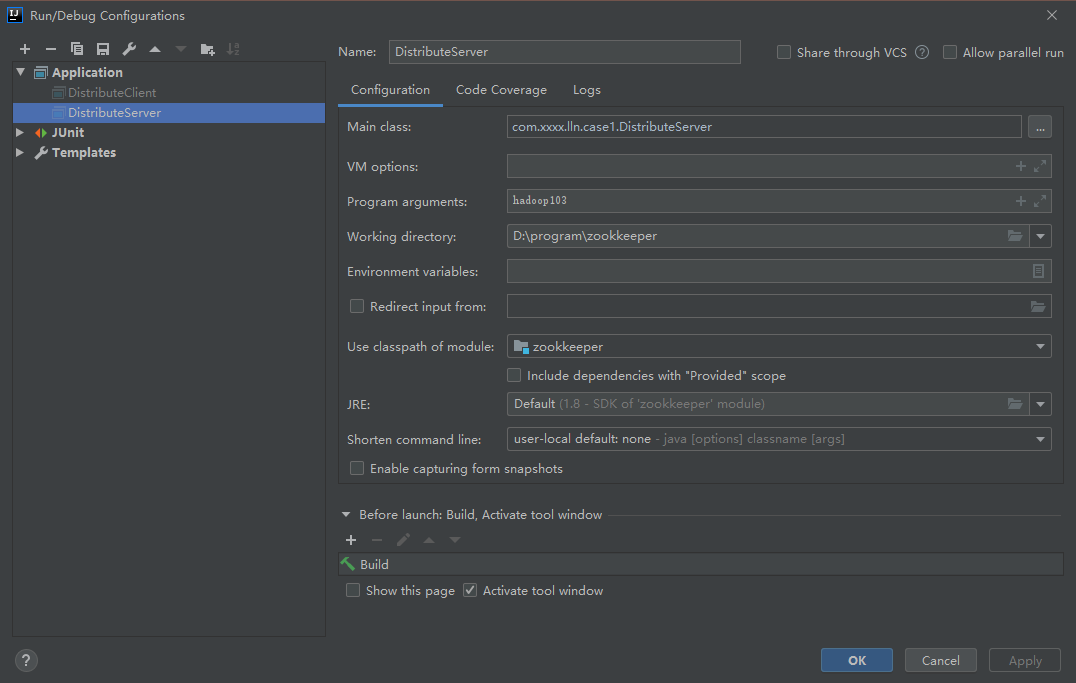

①点击 Edit Configurations…

②在弹出的窗口中(Program arguments)输入想启动的主机,例如,hadoop103

③回到 DistributeServer 的 main方法,右键在弹出的窗口中 Run“DistributeServer.main()”

④观察 DistributeServer 控制台,提示 hadoop103 is working

⑤观察 DistributeClient 控制台,提示 hadoop103 已经上线

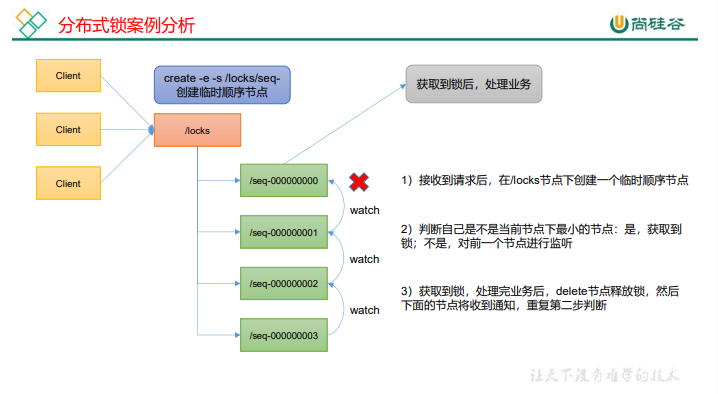

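5. Case Study: Zookeeper Distributed Lock

What is a distributed lock?

Suppose "process 1" needs a shared resource. It first acquires the lock; while it holds the lock, it has exclusive access to the resource and no other process can use it. When process 1 is done with the resource, it releases the lock so another process can acquire it. This locking mechanism ensures that multiple processes in a distributed system access the critical resource in an orderly way. A lock used like this in a distributed environment is called a distributed lock.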
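In the ZooKeeper-based implementation that follows, each contender creates an ephemeral sequential node under /locks; the contender whose node has the smallest sequence number holds the lock, and every other contender watches only the node immediately before its own. That decision step can be isolated as a pure function. This is a minimal sketch with no ZooKeeper dependency; the class and method names are hypothetical, and it assumes all node names share the `seq-` prefix used in the code below, so that a plain string sort orders them by their zero-padded sequence numbers.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;

class LockQueueSketch {
    /**
     * Returns null if ownNode is the smallest sequential node (the lock is held),
     * otherwise the full path of the node just before it, which is the one to watch.
     */
    static String nodeToWatch(List<String> children, String ownNode) {
        List<String> sorted = new ArrayList<>(children);
        Collections.sort(sorted); // same prefix + zero padding: string order == sequence order
        int index = sorted.indexOf(ownNode);
        if (index < 0) {
            throw new IllegalStateException("own node missing: " + ownNode);
        }
        return index == 0 ? null : "/locks/" + sorted.get(index - 1);
    }

    public static void main(String[] args) {
        // getChildren() makes no ordering promise, so the list may arrive unsorted
        List<String> children = Arrays.asList("seq-0000000002", "seq-0000000000", "seq-0000000001");
        System.out.println(nodeToWatch(children, "seq-0000000000")); // null: holds the lock
        System.out.println(nodeToWatch(children, "seq-0000000002")); // /locks/seq-0000000001
    }
}
```

Watching only the predecessor, rather than /locks as a whole, means each release wakes exactly one waiter instead of all of them.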

5.1 Distributed Lock with the Native Zookeeper API

1) Distributed lock implementation

```java
package com.xxxx.lln.case2;

import org.apache.zookeeper.*;
import org.apache.zookeeper.data.Stat;

import java.io.IOException;
import java.util.Collections;
import java.util.List;
import java.util.concurrent.CountDownLatch;

public class DistributedLock {

    // ZooKeeper server list
    private final String connectString = "hadoop102:2181,hadoop103:2181,hadoop104:2181";
    // Session timeout
    private final int sessionTimeout = 2000;
    private final ZooKeeper zk;

    private CountDownLatch connectLatch = new CountDownLatch(1);
    private CountDownLatch waitLatch = new CountDownLatch(1);

    // The child node this client is waiting on
    private String waitPath;
    // The node this client created
    private String currentMode;

    public DistributedLock() throws IOException, KeeperException, InterruptedException {
        // Get a connection
        zk = new ZooKeeper(connectString, sessionTimeout, new Watcher() {
            public void process(WatchedEvent watchedEvent) {
                // Release connectLatch once connected to zk
                if (watchedEvent.getState() == Event.KeeperState.SyncConnected) {
                    connectLatch.countDown();
                }
                // Release waitLatch when the node we are waiting on is deleted
                if (watchedEvent.getType() == Event.EventType.NodeDeleted && watchedEvent.getPath().equals(waitPath)) {
                    waitLatch.countDown();
                }
            }
        });
        // Wait until the connection is established
        connectLatch.await();
        // Check whether the root node /locks exists
        Stat stat = zk.exists("/locks", false);
        if (stat == null) {
            // Create the root node
            zk.create("/locks", "locks".getBytes(), ZooDefs.Ids.OPEN_ACL_UNSAFE, CreateMode.PERSISTENT);
        }
    }

    // Acquire the lock
    public void zklock() {
        try {
            // Create an ephemeral sequential node
            currentMode = zk.create("/locks/" + "seq-", null, ZooDefs.Ids.OPEN_ACL_UNSAFE, CreateMode.EPHEMERAL_SEQUENTIAL);
            // If our node has the smallest sequence number we hold the lock; otherwise watch the node just before it
            List<String> children = zk.getChildren("/locks", false);
            // With only one child we get the lock directly; with several, find the smallest
            if (children.size() == 1) {
                return;
            } else {
                Collections.sort(children);
                // Name of our node
                String thisNode = currentMode.substring("/locks/".length());
                // Position of our node
                int index = children.indexOf(thisNode);
                if (index == -1) {
                    System.out.println("Unexpected data: own node not found");
                } else if (index == 0) {
                    // Ours is the smallest node, so we hold the lock
                    return;
                } else {
                    // Watch the node immediately before ours
                    waitPath = "/locks/" + children.get(index - 1);
                    // Register a watch on waitPath; when it is deleted, ZooKeeper calls back process().
                    // exists() returns null if waitPath was already deleted before the watch was set,
                    // a case in which getData() would throw NoNodeException instead.
                    if (zk.exists(waitPath, true) != null) {
                        // Wait for the deletion notification
                        waitLatch.await();
                    }
                    return;
                }
            }
        } catch (KeeperException e) {
            e.printStackTrace();
        } catch (InterruptedException e) {
            e.printStackTrace();
        }
    }

    // Release the lock
    public void unZkLock() {
        // Delete our node
        try {
            zk.delete(currentMode, -1);
        } catch (InterruptedException e) {
            e.printStackTrace();
        } catch (KeeperException e) {
            e.printStackTrace();
        }
    }
}
```

2) Distributed lock test

```java
package com.xxxx.lln.case2;

import org.apache.zookeeper.KeeperException;

import java.io.IOException;

public class DistributedLockTest {

    public static void main(String[] args) throws InterruptedException, IOException, KeeperException {
        final DistributedLock lock1 = new DistributedLock();
        final DistributedLock lock2 = new DistributedLock();

        new Thread(new Runnable() {
            public void run() {
                try {
                    lock1.zklock();
                    System.out.println("Thread 1 started and acquired the lock");
                    Thread.sleep(5 * 1000);
                    lock1.unZkLock();
                    System.out.println("Thread 1 released the lock");
                } catch (InterruptedException e) {
                    e.printStackTrace();
                }
            }
        }).start();

        new Thread(new Runnable() {
            public void run() {
                try {
                    lock2.zklock();
                    System.out.println("Thread 2 started and acquired the lock");
                    Thread.sleep(5 * 1000);
                    lock2.unZkLock();
                    System.out.println("Thread 2 released the lock");
                } catch (InterruptedException e) {
                    e.printStackTrace();
                }
            }
        }).start();
    }
}
```

Observe the console output:

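5.2 Distributed Lock with the Curator Framework

1) Problems with developing against the native Java API

(1) The session connection is asynchronous, and you have to handle it yourself, for example with a CountDownLatch

(2) Watches must be registered again after each notification, or they stop working

(3) Development is still fairly complex

(4) Creating or deleting multiple nodes at once is not supported; you have to recurse yourself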
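Point (1) is what the DistributedLock constructor handles with connectLatch: the ZooKeeper constructor returns before the session is established, and the connection event arrives later on another thread. The pattern can be shown without a server. In this sketch, connectAsync is a hypothetical stand-in for the client connecting in the background and ConnectionListener a stand-in for Watcher; only CountDownLatch is the real JDK class being illustrated. Unlike the original constructor, it waits with a timeout so a missing event cannot block forever.

```java
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.TimeUnit;

class AsyncConnectSketch {
    /** Hypothetical stand-in for the Watcher that receives the connection event. */
    interface ConnectionListener {
        void onConnected();
    }

    /** Hypothetical stand-in for a client that connects in the background and calls back later. */
    static void connectAsync(ConnectionListener listener, long delayMs) {
        Thread t = new Thread(() -> {
            try {
                Thread.sleep(delayMs);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                return;
            }
            listener.onConnected();
        });
        t.setDaemon(true);
        t.start();
    }

    /** Returns true if the "connected" callback arrived within timeoutMs. */
    static boolean connectAndWait(long delayMs, long timeoutMs) throws InterruptedException {
        CountDownLatch connected = new CountDownLatch(1);
        connectAsync(connected::countDown, delayMs);
        // Without this wait, the caller could issue requests before the session exists
        return connected.await(timeoutMs, TimeUnit.MILLISECONDS);
    }

    public static void main(String[] args) throws InterruptedException {
        System.out.println(connectAndWait(100, 2000)); // connected well within the timeout
    }
}
```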

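2) Curator is a ZooKeeper client framework that solves the problems above that come up when building distributed applications on the native Java API; among other things, it provides ready-made distributed lock implementations.

See the official documentation for details: https://curator.apache.org/index.html

3) Curator hands-on example

(1) Add the dependencies

```xml
<dependency>
    <groupId>org.apache.curator</groupId>
    <artifactId>curator-framework</artifactId>
    <version>4.3.0</version>
</dependency>
<dependency>
    <groupId>org.apache.curator</groupId>
    <artifactId>curator-recipes</artifactId>
    <version>4.3.0</version>
</dependency>
<dependency>
    <groupId>org.apache.curator</groupId>
    <artifactId>curator-client</artifactId>
    <version>4.3.0</version>
</dependency>
```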

(2) Code

```java
package com.xxxx.lln.case3;

import org.apache.curator.RetryPolicy;
import org.apache.curator.framework.CuratorFramework;
import org.apache.curator.framework.CuratorFrameworkFactory;
import org.apache.curator.framework.recipes.locks.InterProcessMutex;
import org.apache.curator.retry.ExponentialBackoffRetry;

public class CuratorLockTest {

    public static void main(String[] args) {
        // Create distributed lock 1
        final InterProcessMutex lock1 = new InterProcessMutex(getCuratorFramework(), "/locks");
        // Create distributed lock 2
        final InterProcessMutex lock2 = new InterProcessMutex(getCuratorFramework(), "/locks");

        new Thread(new Runnable() {
            public void run() {
                try {
                    lock1.acquire();
                    System.out.println("Thread 1 acquired the lock");
                    lock1.acquire();
                    System.out.println("Thread 1 acquired the lock again");
                    Thread.sleep(5 * 1000);
                    lock1.release();
                    System.out.println("Thread 1 released the lock");
                    lock1.release();
                    System.out.println("Thread 1 released the lock again");
                } catch (InterruptedException e) {
                    e.printStackTrace();
                } catch (Exception e) {
                    e.printStackTrace();
                }
            }
        }).start();

        new Thread(new Runnable() {
            public void run() {
                try {
                    lock2.acquire();
                    System.out.println("Thread 2 acquired the lock");
                    lock2.acquire();
                    System.out.println("Thread 2 acquired the lock again");
                    Thread.sleep(5 * 1000);
                    lock2.release();
                    System.out.println("Thread 2 released the lock");
                    lock2.release();
                    System.out.println("Thread 2 released the lock again");
                } catch (InterruptedException e) {
                    e.printStackTrace();
                } catch (Exception e) {
                    e.printStackTrace();
                }
            }
        }).start();
    }

    // Initialize the Curator client used by each lock
    private static CuratorFramework getCuratorFramework() {
        // Retry policy: 3-second base sleep time, up to 3 retries
        RetryPolicy policy = new ExponentialBackoffRetry(3000, 3);
        // Build the Curator client through the factory
        CuratorFramework client = CuratorFrameworkFactory.builder()
                .connectString("hadoop102:2181,hadoop103:2181,hadoop104:2181")
                .connectionTimeoutMs(2000)
                .sessionTimeoutMs(2000)
                .retryPolicy(policy)
                .build();
        // Start the client
        client.start();
        System.out.println("zookeeper client started");
        return client;
    }
}
```

(3) Observe the console output (which thread gets the lock first can vary between runs):

Thread 1 acquired the lock

Thread 1 acquired the lock again

Thread 1 released the lock

Thread 1 released the lock again

Thread 2 acquired the lock

Thread 2 acquired the lock again

Thread 2 released the lock

Thread 2 released the lock again
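Each thread above calls acquire() twice and release() twice without blocking itself, because Curator documents InterProcessMutex as re-entrant: the thread that holds the lock can acquire it again, and each acquire() must be balanced by a release(). The same idea inside a single JVM can be seen with java.util.concurrent.locks.ReentrantLock. This sketch is only an in-process analogy chosen for illustration, not how Curator implements its cross-process lock; otherThreadCanLock is a hypothetical helper.

```java
import java.util.concurrent.locks.ReentrantLock;

class ReentrancySketch {
    /** Tries to take the lock from a different thread; true if that succeeded. */
    static boolean otherThreadCanLock(ReentrantLock lock) throws InterruptedException {
        boolean[] got = new boolean[1];
        Thread other = new Thread(() -> {
            got[0] = lock.tryLock();
            if (got[0]) {
                lock.unlock();
            }
        });
        other.start();
        other.join(); // join() makes got[0] visible to this thread
        return got[0];
    }

    public static void main(String[] args) throws InterruptedException {
        ReentrantLock lock = new ReentrantLock();
        lock.lock();
        lock.lock();                                  // re-entry by the same thread does not block
        System.out.println(lock.getHoldCount());      // 2
        lock.unlock();
        System.out.println(otherThreadCanLock(lock)); // false: still held once
        lock.unlock();
        System.out.println(otherThreadCanLock(lock)); // true: fully released
    }
}
```

This matches the output order above: the other thread only gets in after the second release.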

6. Real Interview Questions