Modify the Flink source code accordingly

| Component | Version |
|---|---|
| Flink | 1.13.5 |
| Atlas | 1.2.0 |

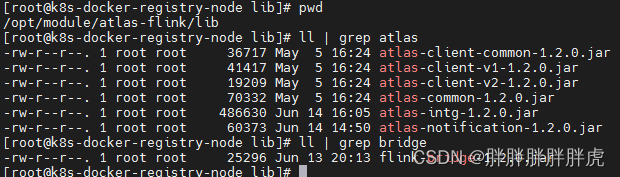

Package the Atlas configuration file into the flink-bridge jar, and copy the Atlas-related jars into flink/lib.

    jar uf flink-bridge-1.2.0.jar atlas-application.properties
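To confirm that the properties file really ended up inside the jar, a small check like the one below may help. It is only a sketch that relies on the JDK's `java.util.jar` API; the class name `JarEntryCheck` is hypothetical, and the default jar path assumes the program is run from the directory that holds the jar.

```java
import java.io.IOException;
import java.util.jar.JarFile;

public class JarEntryCheck {

    /** Returns true if the jar at jarPath contains an entry with exactly this name. */
    public static boolean containsEntry(String jarPath, String entryName) throws IOException {
        try (JarFile jar = new JarFile(jarPath)) {
            return jar.getJarEntry(entryName) != null;
        }
    }

    public static void main(String[] args) throws IOException {
        // Assumption: the jar sits in the working directory unless a path is passed in.
        String jarPath = args.length > 0 ? args[0] : "flink-bridge-1.2.0.jar";
        String entry = "atlas-application.properties";
        String verdict = containsEntry(jarPath, entry) ? " found in " : " missing from ";
        System.out.println(entry + verdict + jarPath);
    }
}
```

Because `jar uf` stores the file under the relative path given on the command line, the entry name checked here is the bare file name at the root of the jar.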

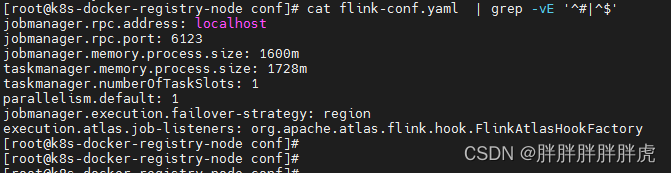

Register the listener in flink-conf.yaml

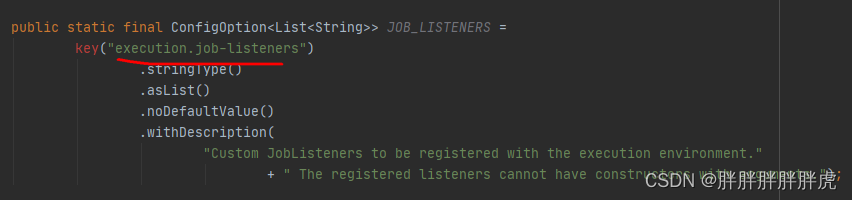

Add a configuration option to org.apache.flink.configuration.ExecutionOptions

    public static final ConfigOption<List<String>> JOB_LISTENERS =
            ConfigOptions.key("execution.atlas.job-listeners")
                    .stringType()
                    .asList()
                    .noDefaultValue()
                    .withDescription(
                            "JobListenerFactories to be registered for the execution.");

A note: official Flink has supported the execution.job-listeners setting since version 1.12.0, so I added a separate option, execution.atlas.job-listeners, to keep the two apart.
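Since the option is declared with `asList()`, its value in flink-conf.yaml is a semicolon-separated string of class names. The sketch below illustrates, with plain JDK code, how such a value could be split and the named classes instantiated by reflection. It is not Flink's actual loading code: `ListenerOptionSketch` and its methods are hypothetical, and the JDK collection classes in `main` merely stand in for real listener factory implementations.

```java
import java.util.ArrayList;
import java.util.List;

public class ListenerOptionSketch {

    /** Splits a semicolon-separated option value into trimmed, non-empty class names. */
    public static List<String> parseClassNames(String rawValue) {
        List<String> names = new ArrayList<>();
        if (rawValue == null) {
            return names;
        }
        for (String part : rawValue.split(";")) {
            String name = part.trim();
            if (!name.isEmpty()) {
                names.add(name);
            }
        }
        return names;
    }

    /** Instantiates each named class through its public no-argument constructor. */
    public static List<Object> instantiate(List<String> classNames)
            throws ReflectiveOperationException {
        List<Object> instances = new ArrayList<>();
        for (String name : classNames) {
            instances.add(Class.forName(name).getDeclaredConstructor().newInstance());
        }
        return instances;
    }

    public static void main(String[] args) throws Exception {
        // Stand-in value: a real setup would list listener factory classes here.
        String raw = "java.util.ArrayList; java.util.HashMap";
        for (Object instance : instantiate(parseClassNames(raw))) {
            System.out.println(instance.getClass().getName());
        }
    }
}
```

A class name that cannot be found on the classpath surfaces here as a `ClassNotFoundException`, which is one reason the Atlas-related jars need to be present in flink/lib.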

org.apache.flink.configuration.DeploymentOptions

Submit the job

    flink run -m yarn-cluster -ys 1 -yjm 1024 -ytm 1024 -c com.nufront.bigdata.v2x.test.AtlasTest /opt/v2x-1.0-SNAPSHOT.jar

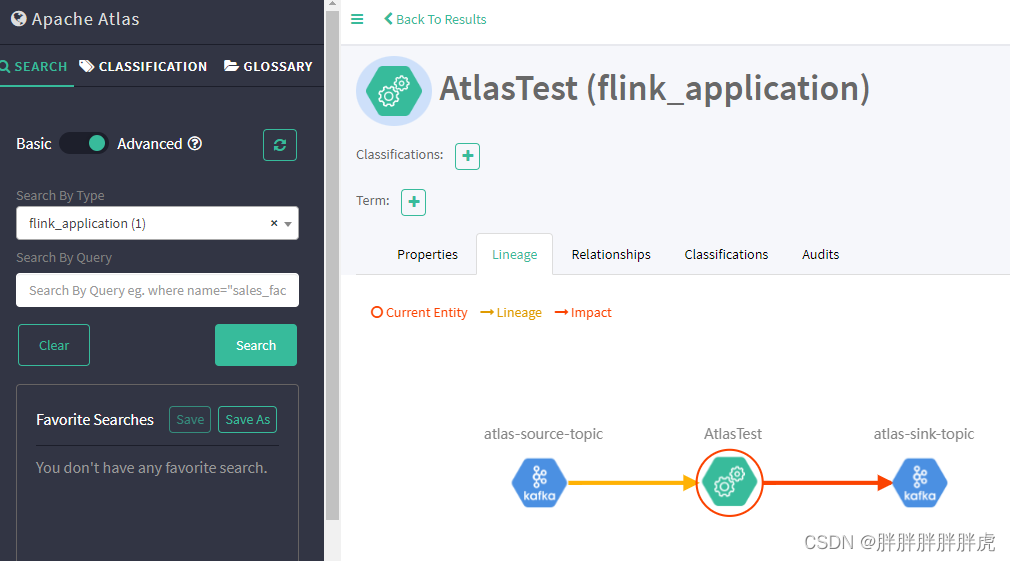

Test job

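    package com.nufront.bigdata.v2x.test;

    import java.nio.charset.StandardCharsets;
    import java.util.Properties;

    import org.apache.flink.api.common.serialization.SerializationSchema;
    import org.apache.flink.api.common.serialization.SimpleStringSchema;
    import org.apache.flink.streaming.api.datastream.DataStreamSource;
    import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
    import org.apache.flink.streaming.connectors.kafka.FlinkKafkaConsumer;
    import org.apache.flink.streaming.connectors.kafka.FlinkKafkaProducer;
    import org.apache.flink.streaming.connectors.kafka.internals.KeyedSerializationSchemaWrapper;
    import org.apache.kafka.clients.consumer.ConsumerConfig;
    import org.apache.kafka.clients.producer.ProducerConfig;

    public class AtlasTest {
        public static void main(String[] args) throws Exception {
            // TODO 1. Obtain the execution environment
            StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
            env.setParallelism(1);
            env.disableOperatorChaining();

            // TODO Kafka consumption
            // Configure the Kafka input stream
            Properties consumerprops = new Properties();
            consumerprops.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "10.0.2.67:9092");
            consumerprops.put(ConsumerConfig.GROUP_ID_CONFIG, "group1");
            consumerprops.put(ConsumerConfig.ENABLE_AUTO_COMMIT_CONFIG, "false");
            consumerprops.put(ConsumerConfig.AUTO_OFFSET_RESET_CONFIG, "latest");
            consumerprops.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG,
                    "org.apache.kafka.common.serialization.StringDeserializer");
            consumerprops.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG,
                    "org.apache.kafka.common.serialization.StringDeserializer");

            // Add the Kafka source
            DataStreamSource<String> dataStreamSource = env.addSource(
                    new FlinkKafkaConsumer<>("atlas-source-topic", new SimpleStringSchema(), consumerprops));

            // Configure the Kafka output stream
            Properties producerprops = new Properties();
            producerprops.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, "10.0.2.67:9092");

            // Configure certificate information
            dataStreamSource.addSink(new FlinkKafkaProducer<String>(
                    "atlas-sink-topic",
                    new KeyedSerializationSchemaWrapper<String>(new SerializationSchema<String>() {
                        @Override
                        public byte[] serialize(String element) {
                            // Encode explicitly as UTF-8 instead of the platform default charset
                            return element.getBytes(StandardCharsets.UTF_8);
                        }
                    }),
                    producerprops));

            env.execute("AtlasTest");
        }
    }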

Flink on YARN log output

Change how JSON is serialized

org.apache.atlas.utils.AtlasJson#toJson

    public static String toJson(Object obj) {
        String ret;
        if (obj instanceof JsonNode && ((JsonNode) obj).isTextual()) {
            ret = ((JsonNode) obj).textValue();
        } else {
            // Switched JSON handling to fastjson: the original
            // ObjectMapper.writeValueAsString() call at one point hung and never returned.
            // ret = mapper.writeValueAsString(obj);
            ret = JSONObject.toJSONString(JSONObject.toJSON(obj));
            LOG.info(ret);
        }
        return ret;
    }

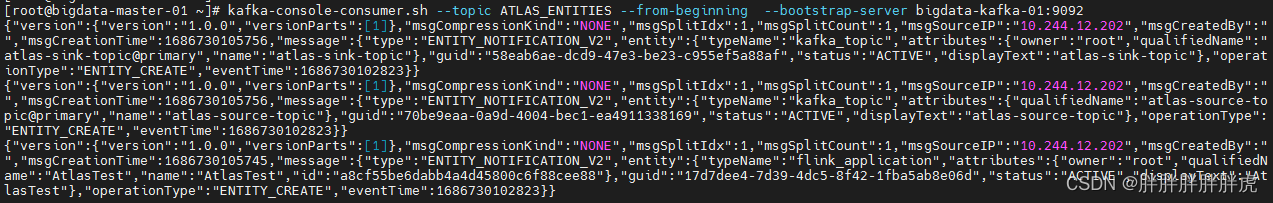

Check the corresponding topic on the target Kafka cluster