文章目录

- 神经网络的初始化

- 初始化数据

- 模型搭建

- 简单函数

- 零初始化——initialize_parameters_zeros

- 随机初始化——initialize_parameters_random

- He初始化

- 三种初始化结果对比

- 在神经网络中使用正则化

- 导入数据

- 模型搭建

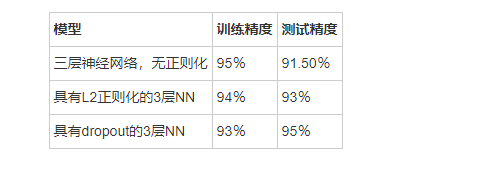

- 非正则化模型

- L2正则化

- dropout

- 三种模型的对比

- 怎么说呢

Neural network initialization

The goal here is to see how different weight initialization methods affect training.

Imports

import numpy as np

import matplotlib.pyplot as plt

import sklearn

import sklearn.datasets

%matplotlib inline

plt.rcParams['figure.figsize'] = (7.0, 4.0)

plt.rcParams['image.interpolation'] = 'nearest'

plt.rcParams['image.cmap'] = 'gray'

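The dataset comes from a scikit-learn generator function, described below.

sklearn.datasets.make_circles(n_samples = 100, shuffle = True, noise = None, random_state = None, factor = 0.8)

# n_samples: int, optional, default 100. Total number of points generated. (For an odd number the inner circle is supposed to get one more point than the outer circle, but when I tried n_samples = 5 both circles ended up with two points each; this may depend on the scikit-learn version.)

# shuffle: bool, optional, default True. Whether to shuffle the samples.

# noise: float or None, default None. Standard deviation of the Gaussian noise added to the data.

# random_state: int, RandomState instance or None. Controls the random number generation for shuffling and noise.

# factor: float with 0 < factor < 1, default 0.8. Scale factor between the inner and outer circle.

# Returns: X, an array of shape [n_samples, 2] with the generated samples; y, an array of shape [n_samples] with the label (0 or 1) of each sample.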

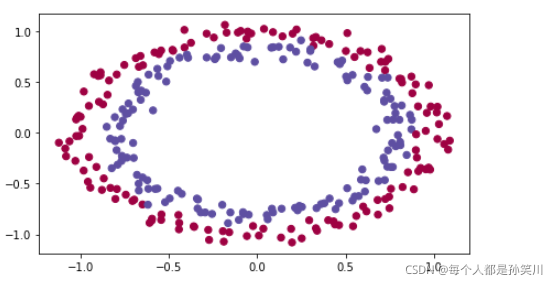

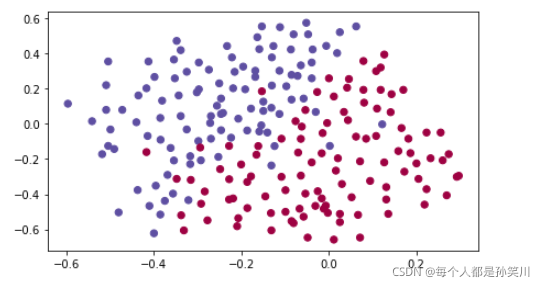

Preparing the data

def load_dataset():
    np.random.seed(1)
    train_X, train_Y = sklearn.datasets.make_circles(n_samples=300, noise=.05)
    np.random.seed(2)
    test_X, test_Y = sklearn.datasets.make_circles(n_samples=100, noise=.05)
    # Visualize the training data
    plt.scatter(train_X[:, 0], train_X[:, 1], c=train_Y, s=40, cmap=plt.cm.Spectral)
    # Put examples in columns: X becomes (2, m), Y becomes (1, m)
    train_X = train_X.T
    train_Y = train_Y.reshape((1, train_Y.shape[0]))
    test_X = test_X.T
    test_Y = test_Y.reshape((1, test_Y.shape[0]))
    return train_X, train_Y, test_X, test_Y

train_X, train_Y, test_X, test_Y = load_dataset()

Building the model

The main skeleton of the model is shown in the code below.

We will implement three initialization methods and fill in the model step by step.

Zero initialization: set initialization = "zeros" in the input arguments.

Random initialization: set initialization = "random" in the input arguments. This initializes the weights to large random values.

He initialization: set initialization = "he" in the input arguments. This initializes the weights to scaled random values, following the paper by He et al. (2015).

def model(X, Y, learning_rate = 0.01, num_iterations = 15000, print_cost = True, initialization = "he"):
    grads = {}
    costs = []
    m = X.shape[1]  # 300 for train, 100 for test
    layers_dims = [X.shape[0], 10, 5, 1]  # two hidden layers (10 and 5 units), one output unit

    # Initialize the parameters W and b
    if initialization == "zeros":
        parameters = initialize_parameters_zeros(layers_dims)
    elif initialization == "random":
        parameters = initialize_parameters_random(layers_dims)
    elif initialization == "he":
        parameters = initialize_parameters_he(layers_dims)

    for i in range(0, num_iterations):
        # Forward propagation: LINEAR -> RELU -> LINEAR -> RELU -> LINEAR -> SIGMOID
        a3, cache = forward_propagation(X, parameters)
        # Loss for this iteration, computed from a3 and Y
        cost = compute_loss(a3, Y)
        # Backward propagation: compute the gradients
        grads = backward_propagation(X, Y, cache)
        # Update W and b using grads
        parameters = update_parameters(parameters, grads, learning_rate)

        # Print and record the cost every 1000 iterations
        if print_cost and i % 1000 == 0:
            print("Cost after iteration {}: {}".format(i, cost))
            costs.append(cost)

    plt.plot(costs)
    plt.ylabel('cost')
    plt.xlabel('iterations (per thousands)')
    plt.title("Learning rate =" + str(learning_rate))
    plt.show()
    return parameters

Helper functions

# sigmoid
def sigmoid(x):
    s = 1/(1+np.exp(-x))
    return s

# relu
def relu(x):
    s = np.maximum(0,x)
    return s

# Forward propagation
def forward_propagation(X, parameters):
    # Unpack the parameters
    W1 = parameters["W1"]
    b1 = parameters["b1"]
    W2 = parameters["W2"]
    b2 = parameters["b2"]
    W3 = parameters["W3"]
    b3 = parameters["b3"]
    # LINEAR -> RELU -> LINEAR -> RELU -> LINEAR -> SIGMOID
    z1 = np.dot(W1, X) + b1
    a1 = relu(z1)
    z2 = np.dot(W2, a1) + b2
    a2 = relu(z2)
    z3 = np.dot(W3, a2) + b3
    a3 = sigmoid(z3)
    cache = (z1, a1, W1, b1, z2, a2, W2, b2, z3, a3, W3, b3)
    return a3, cache

# Backward propagation: compute the gradients
def backward_propagation(X, Y, cache):
    m = X.shape[1]  # 300 for train, 100 for test
    (z1, a1, W1, b1, z2, a2, W2, b2, z3, a3, W3, b3) = cache
    # dz3 comes from dJ/da3 times da3/dz3; the latter is the sigmoid derivative
    dz3 = 1./m * (a3 - Y)  # the 1/m factor here means later steps do not need to divide by m
    dW3 = np.dot(dz3, a2.T)
    db3 = np.sum(dz3, axis=1, keepdims = True)
    # np.int64(a2 > 0) is the derivative of relu
    da2 = np.dot(W3.T, dz3)
    dz2 = np.multiply(da2, np.int64(a2 > 0))
    dW2 = np.dot(dz2, a1.T)
    db2 = np.sum(dz2, axis=1, keepdims = True)
    da1 = np.dot(W2.T, dz2)
    dz1 = np.multiply(da1, np.int64(a1 > 0))
    dW1 = np.dot(dz1, X.T)
    db1 = np.sum(dz1, axis=1, keepdims = True)
    gradients = {"dz3": dz3, "dW3": dW3, "db3": db3,
                 "da2": da2, "dz2": dz2, "dW2": dW2, "db2": db2,
                 "da1": da1, "dz1": dz1, "dW1": dW1, "db1": db1}
    return gradients

def update_parameters(parameters, grads, learning_rate):
    L = len(parameters) // 2  # parameters holds one W and one b per layer, so this is the layer count
    for k in range(L):
        parameters["W" + str(k+1)] = parameters["W" + str(k+1)] - learning_rate * grads["dW" + str(k+1)]
        parameters["b" + str(k+1)] = parameters["b" + str(k+1)] - learning_rate * grads["db" + str(k+1)]
    return parameters

# Standard cross-entropy loss
def compute_loss(a3, Y):
    m = Y.shape[1]
    logprobs = np.multiply(-np.log(a3),Y) + np.multiply(-np.log(1 - a3), 1 - Y)
    loss = 1./m * np.nansum(logprobs)
    return loss

# Predict with the trained parameters
def predict(X, y, parameters):
    m = X.shape[1]
    # online tutorials use np.int here, which is unsafe (it has been removed from recent NumPy releases)
    p = np.zeros((1,m), dtype = np.int32)
    a3, caches = forward_propagation(X, parameters)
    for i in range(0, a3.shape[1]):
        if a3[0,i] > 0.5:
            p[0,i] = 1
        else:
            p[0,i] = 0
    # print results
    print("Accuracy: " + str(np.mean((p[0,:] == y[0,:]))))
    return p

# Plot the decision boundary
def plot_decision_boundary(model, X, y):
    x_min, x_max = X[0, :].min() - 1, X[0, :].max() + 1
    y_min, y_max = X[1, :].min() - 1, X[1, :].max() + 1
    h = 0.01
    # np.arange generates an arithmetic sequence: np.arange(0, 6, 2) -> array([0, 2, 4])
    xx, yy = np.meshgrid(np.arange(x_min, x_max, h), np.arange(y_min, y_max, h))
    # Syntax: X, Y = numpy.meshgrid(x, y)
    # The inputs x and y are 1-D vectors of grid coordinates (not matrices);
    # the outputs X and Y are coordinate matrices.
    # In short: the Cartesian product of x and y, with the x parts stored in X and the y parts in Y.
    # np.r_ stacks arrays vertically (on top of each other); the column counts must match.
    # np.c_ stacks arrays side by side; the row counts must match.
    Z = model(np.c_[xx.ravel(), yy.ravel()])
    Z = Z.reshape(xx.shape)
    # (I still do not really understand matplotlib)
    plt.contourf(xx, yy, Z, cmap=plt.cm.Spectral)
    plt.ylabel('x2')
    plt.xlabel('x1')
    plt.scatter(X[0, :], X[1, :], c=np.squeeze(y), cmap=plt.cm.Spectral)
    plt.show()

def predict_dec(parameters, X):
    a3, cache = forward_propagation(X, parameters)
    predictions = (a3>0.5)
    return predictions

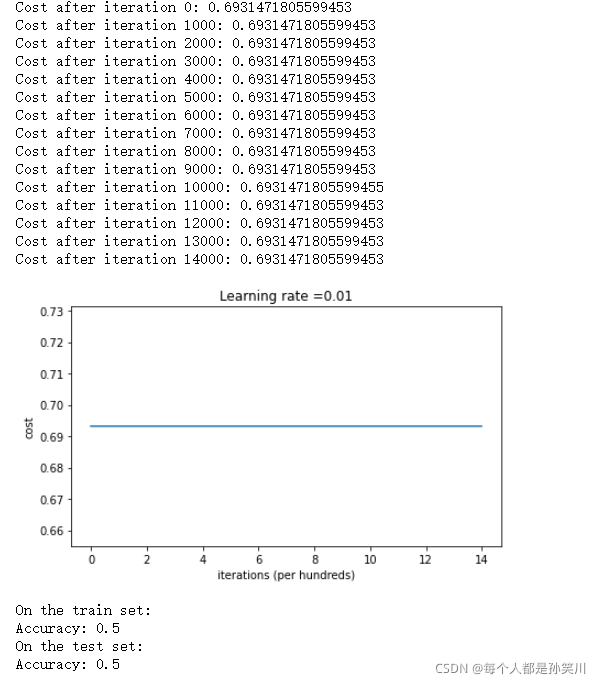
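
The comments in plot_decision_boundary describe np.meshgrid and np.c_ only in words, so here is a minimal sketch on a tiny grid. The variable names x, y and grid_points are chosen for illustration and do not appear in the original code.

```python
import numpy as np

# Two short coordinate vectors: 3 x-values and 2 y-values.
x = np.arange(0, 3, 1)   # array([0, 1, 2])
y = np.arange(0, 2, 1)   # array([0, 1])

# meshgrid returns two matrices of shape (len(y), len(x)); together they
# hold the x and y coordinate of every point on the grid.
xx, yy = np.meshgrid(x, y)

# ravel() flattens each matrix, and np.c_ puts the two flat vectors side
# by side, giving one (x, y) row per grid point.
grid_points = np.c_[xx.ravel(), yy.ravel()]

print(xx.shape, yy.shape)    # (2, 3) (2, 3)
print(grid_points.shape)     # (6, 2)
print(grid_points.T.shape)   # (2, 6): features in rows, as forward_propagation expects
```

This is why the plotting calls pass x.T to predict_dec: the grid arrives as one point per row, while the network works with one point per column.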

Zero initialization: initialize_parameters_zeros

Initialize every parameter to zero.

When all parameters start at 0, the weights stay identical to each other during training, so every neuron ends up computing the same function.

def initialize_parameters_zeros(layers_dims):
    parameters = {}
    L = len(layers_dims)
    for l in range(1, L):
        parameters['W' + str(l)] = np.zeros((layers_dims[l],layers_dims[l-1]))
        parameters['b' + str(l)] = np.zeros((layers_dims[l],1))
    return parameters

parameters = model(train_X, train_Y, initialization = "zeros")

print ("On the train set:")

predictions_train = predict(train_X, train_Y, parameters)

print ("On the test set:")

predictions_test = predict(test_X, test_Y, parameters)

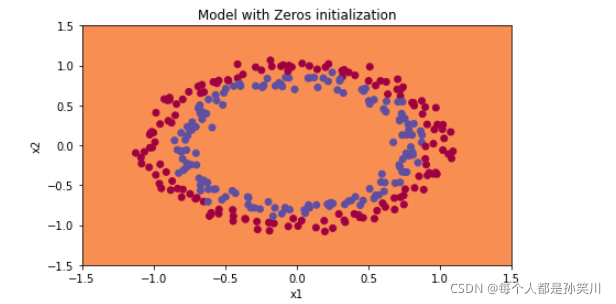

plt.title("Model with Zeros initialization")

axes = plt.gca()

axes.set_xlim([-1.5,1.5])

axes.set_ylim([-1.5,1.5])

plot_decision_boundary(lambda x: predict_dec(parameters, x.T), train_X, train_Y)

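If we print the predictions, we find that they are all 0. Initializing all weights to zero leaves the network unable to break symmetry: every neuron in a layer learns the same thing, as if each layer had only a single unit (n^[l] = 1).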

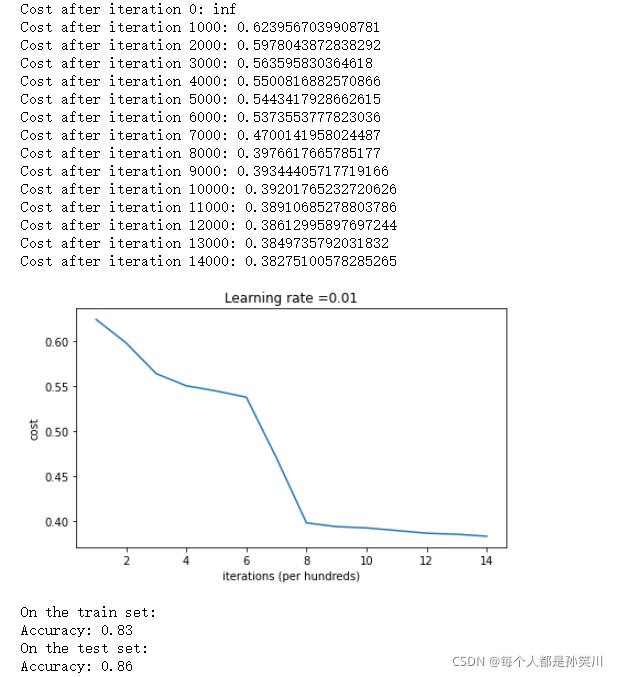
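
To make the symmetry problem concrete, the following sketch runs one forward and backward pass through a small all-zero network. It assumes a 2 -> 4 -> 1 layout and made-up data, which is smaller than the article's network, but the same effect appears there.

```python
import numpy as np

def relu(z):
    return np.maximum(0, z)

def sigmoid(z):
    return 1 / (1 + np.exp(-z))

rng = np.random.default_rng(0)
X = rng.normal(size=(2, 5))          # 5 toy examples with 2 features each
Y = np.array([[0, 1, 0, 1, 1]])      # toy labels
m = X.shape[1]

# All-zero parameters for a 2 -> 4 -> 1 network.
W1, b1 = np.zeros((4, 2)), np.zeros((4, 1))
W2, b2 = np.zeros((1, 4)), np.zeros((1, 1))

# Forward pass: every hidden activation is 0, every output is sigmoid(0).
a1 = relu(W1 @ X + b1)
a2 = sigmoid(W2 @ a1 + b2)

# Backward pass, same formulas as in backward_propagation.
dz2 = (a2 - Y) / m
dW2 = dz2 @ a1.T
db2 = dz2.sum(axis=1, keepdims=True)
dz1 = (W2.T @ dz2) * (a1 > 0)
dW1 = dz1 @ X.T

print(a2)          # every prediction is 0.5
print(dW1, dW2)    # all zeros: the weights never move
print(db2)         # only the output bias receives a gradient
```

Since both weight gradients are exactly zero, gradient descent can only adjust the output bias, so the model predicts the same value for every input.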

Random initialization: initialize_parameters_random

Initialize the weights to large random values (scaled by *10) and set the biases to 0.

Use np.random.randn(…,…) * 10 for the weights and np.zeros((…, …)) for the biases.

Use a fixed np.random.seed(…) so that your "random" weights match ours; the initial parameter values are then identical no matter how many times the code is run.

def initialize_parameters_random(layers_dims):
    np.random.seed(3)  # This seed makes sure your "random" numbers will be the same as ours
    parameters = {}
    L = len(layers_dims)  # integer representing the number of layers
    for l in range(1, L):
        parameters['W' + str(l)] = np.random.randn(layers_dims[l],layers_dims[l-1])*10
        parameters['b' + str(l)] = np.zeros((layers_dims[l],1))
    return parameters

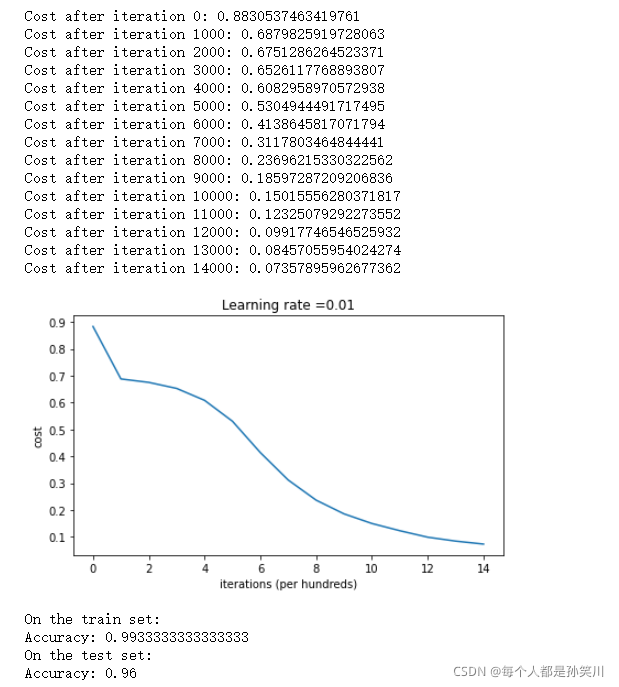

parameters = model(train_X, train_Y, initialization = "random")

print ("On the train set:")

predictions_train = predict(train_X, train_Y, parameters)

print ("On the test set:")

predictions_test = predict(test_X, test_Y, parameters)

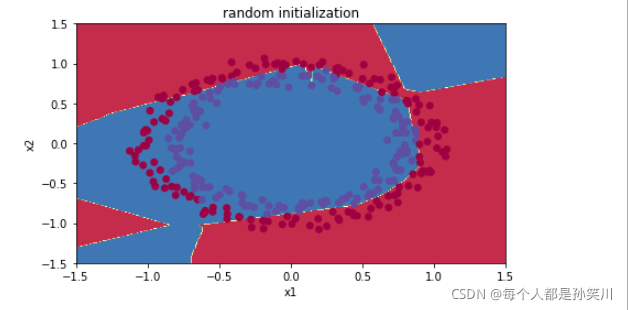

plt.title("random initialization")

axes = plt.gca()

axes.set_xlim([-1.5,1.5])

axes.set_ylim([-1.5,1.5])

plot_decision_boundary(lambda x: predict_dec(parameters, x.T), train_X, train_Y)

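Training the network for longer would give better results, but initializing with overly large random values slows down optimization.

Initializing to smaller random values works better, for example with He initialization or Xavier initialization.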
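
One way to see why large initial weights hurt: they produce large pre-activations z at the output, the sigmoid saturates near 0 or 1, and a confidently wrong prediction is penalised heavily by the cross-entropy loss. The sketch below uses hand-picked z values rather than the article's network, so treat it as an illustration only.

```python
import numpy as np

def sigmoid(z):
    return 1 / (1 + np.exp(-z))

def cross_entropy(a, y):
    # Per-example binary cross-entropy, same form as in compute_loss.
    return -(y * np.log(a) + (1 - y) * np.log(1 - a))

y_true = 0                      # the correct label
zs = [0.5, 5.0, 20.0]           # small, large, and very large pre-activations
losses = [cross_entropy(sigmoid(z), y_true) for z in zs]

for z, loss in zip(zs, losses):
    print(f"z = {z:5.1f}  prediction = {sigmoid(z):.6f}  loss = {loss:.3f}")
```

The loss grows roughly linearly with z for a wrong prediction, which fits a cost that starts out very high under the *10 initialization.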

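He initialization

The previous assignment used Xavier initialization; He initialization is similar.

Xavier initialization scales the weights by sqrt(1./layers_dims[l-1]), whereas He initialization uses sqrt(2./layers_dims[l-1]).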
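
The factor of 2 matters because of ReLU, which zeroes out about half of the pre-activations. The sketch below compares the mean squared activation after one ReLU layer under both scalings. The layer size of 1000 and the standard-normal input are assumptions made for the illustration, not values from the article.

```python
import numpy as np

def relu(z):
    return np.maximum(0, z)

rng = np.random.default_rng(3)
n_in, n_out, n_examples = 1000, 1000, 200     # hypothetical sizes

x = rng.standard_normal((n_in, n_examples))   # inputs with mean square close to 1

W_xavier = rng.standard_normal((n_out, n_in)) * np.sqrt(1.0 / n_in)
W_he = rng.standard_normal((n_out, n_in)) * np.sqrt(2.0 / n_in)

# Mean squared activation after one LINEAR -> RELU layer.
ms_xavier = np.mean(relu(W_xavier @ x) ** 2)
ms_he = np.mean(relu(W_he @ x) ** 2)

print("input mean square: ", np.mean(x ** 2))
print("after Xavier + ReLU:", ms_xavier)   # roughly half of the input value
print("after He + ReLU:    ", ms_he)       # roughly equal to the input value
```

With the He factor the signal keeps about the same scale from layer to layer, while with the Xavier factor it shrinks by about half at each ReLU layer.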

def initialize_parameters_he(layers_dims):
    np.random.seed(3)
    parameters = {}
    L = len(layers_dims) - 1
    for l in range(1, L + 1):
        parameters['W' + str(l)] = np.random.randn(layers_dims[l],layers_dims[l-1])*np.sqrt(2./layers_dims[l-1])
        parameters['b' + str(l)] = np.zeros((layers_dims[l],1))
    return parameters

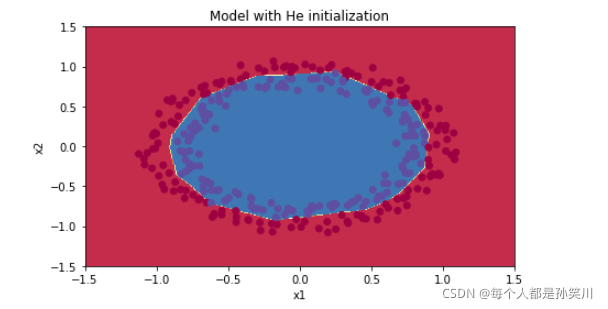

parameters = model(train_X, train_Y, initialization = "he")

print ("On the train set:")

predictions_train = predict(train_X, train_Y, parameters)

print ("On the test set:")

predictions_test = predict(test_X, test_Y, parameters)

plt.title("Model with He initialization")

axes = plt.gca()

axes.set_xlim([-1.5,1.5])

axes.set_ylim([-1.5,1.5])

plot_decision_boundary(lambda x: predict_dec(parameters, x.T), train_X, train_Y)

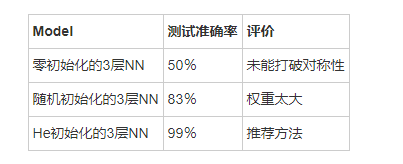

Comparing the three initializations

Regularization in neural networks

Importing the data

import numpy as np

import matplotlib.pyplot as plt

import sklearn

import scipy.io

import sklearn.datasets

%matplotlib inline

plt.rcParams['figure.figsize'] = (7.0, 4.0) # set default size of plots

plt.rcParams['image.interpolation'] = 'nearest'

plt.rcParams['image.cmap'] = 'gray'

def load_2D_dataset():
    data = scipy.io.loadmat('./data.mat')
    # A .mat file loads as a dict-like object; iterate over .keys() to see its entries
    # (here: X, y, yval, Xval)
    train_X = data['X'].T
    train_Y = data['y'].T
    test_X = data['Xval'].T
    test_Y = data['yval'].T
    plt.scatter(train_X[0, :], train_X[1, :], c=np.squeeze(train_Y), s=40, cmap=plt.cm.Spectral)
    return train_X, train_Y, test_X, test_Y

train_X, train_Y, test_X, test_Y = load_2D_dataset()

Building the model

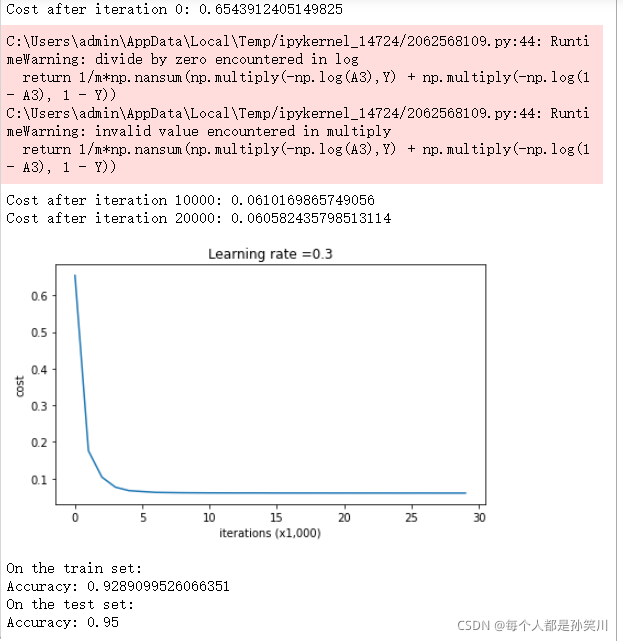

def model(X, Y, learning_rate = 0.3, num_iterations = 30000, print_cost = True, lambd = 0, keep_prob = 1):
    grads = {}
    costs = []
    m = X.shape[1]
    layers_dims = [X.shape[0], 20, 3, 1]
    parameters = initialize_parameters(layers_dims)

    for i in range(0, num_iterations):
        # Forward propagation: LINEAR -> RELU -> LINEAR -> RELU -> LINEAR -> SIGMOID
        if keep_prob == 1:
            a3, cache = forward_propagation(X, parameters)
        elif keep_prob < 1:
            a3, cache = forward_propagation_with_dropout(X, parameters, keep_prob)

        # Cost function
        if lambd == 0:
            cost = compute_cost(a3, Y)
        else:
            cost = compute_cost_with_regularization(a3, Y, parameters, lambd)

        # Backward propagation (L2 regularization and dropout are never used together here)
        assert(lambd==0 or keep_prob==1)
        if lambd == 0 and keep_prob == 1:
            grads = backward_propagation(X, Y, cache)
        elif lambd != 0:
            grads = backward_propagation_with_regularization(X, Y, cache, lambd)
        elif keep_prob < 1:
            grads = backward_propagation_with_dropout(X, Y, cache, keep_prob)

        # Gradient descent update
        parameters = update_parameters(parameters, grads, learning_rate)

        # Print the loss every 10000 iterations
        if print_cost and i % 10000 == 0:
            print("Cost after iteration {}: {}".format(i, cost))
        # Record the cost every 1000 iterations
        if print_cost and i % 1000 == 0:
            costs.append(cost)

    # Plot the cost curve
    plt.plot(costs)
    plt.ylabel('cost')
    plt.xlabel('iterations (x1,000)')
    plt.title("Learning rate =" + str(learning_rate))
    plt.show()
    return parameters

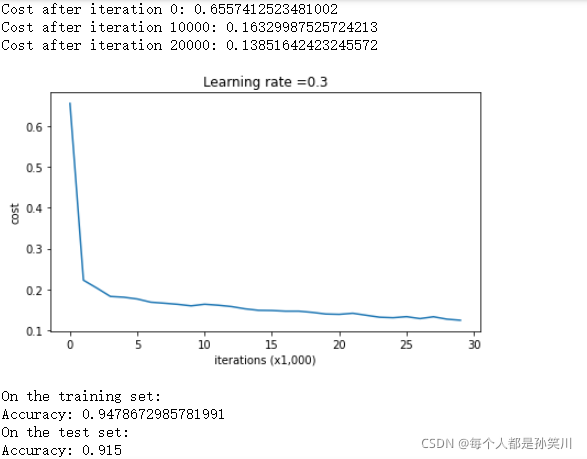

Baseline model without regularization

The plain model, with no L2 penalty (lambd) and no dropout.

def relu(x):
    return np.maximum(0,x)

def sigmoid(x):
    return 1/(1+np.exp(-x))

def initialize_parameters(layers_dims):
    parameters={}
    np.random.seed(3)
    for i in range(1,len(layers_dims)):
        parameters['W'+str(i)]=np.random.randn(layers_dims[i],layers_dims[i-1])/ np.sqrt(layers_dims[i-1])
        parameters['b'+str(i)]=np.zeros((layers_dims[i],1))
    return parameters

def forward_propagation(X, parameters):
    # The general L-layer version from earlier would also work, but it is more trouble than it is worth here
    W1 = parameters["W1"]
    b1 = parameters["b1"]
    W2 = parameters["W2"]
    b2 = parameters["b2"]
    W3 = parameters["W3"]
    b3 = parameters["b3"]
    # LINEAR -> RELU -> LINEAR -> RELU -> LINEAR -> SIGMOID
    Z1 = np.dot(W1, X) + b1
    A1 = relu(Z1)
    Z2 = np.dot(W2, A1) + b2
    A2 = relu(Z2)
    Z3 = np.dot(W3, A2) + b3
    A3 = sigmoid(Z3)
    cache = (Z1, A1, W1, b1, Z2, A2, W2, b2, Z3, A3, W3, b3)
    return A3, cache

def compute_cost(A3,Y):
    m=Y.shape[1]
    # For the difference between np.dot, np.multiply and *, see https://blog.csdn.net/zenghaitao0128/article/details/78715140
    return 1/m*np.nansum(np.multiply(-np.log(A3),Y) + np.multiply(-np.log(1 - A3), 1 - Y))

def backward_propagation(X,Y,cache):
    m = X.shape[1]
    (Z1, A1, W1, b1, Z2, A2, W2, b2, Z3, A3, W3, b3) = cache
    dZ3= 1./m * (A3-Y)  # i.e. dA3 multiplied by the derivative of sigmoid with respect to Z3
    dW3=np.dot(dZ3,A2.T)
    db3=np.sum(dZ3, axis=1, keepdims = True)
    dA2=np.dot(W3.T,dZ3)
    dZ2=np.multiply(dA2,np.int64(A2>0))
    dW2=np.dot(dZ2,A1.T)
    db2=np.sum(dZ2,axis=1,keepdims=True)
    dA1=np.dot(W2.T,dZ2)
    dZ1=np.multiply(dA1,np.int64(A1>0))
    dW1=np.dot(dZ1,X.T)
    db1=np.sum(dZ1,axis=1,keepdims=True)
    gradients = {"dZ3": dZ3, "dW3": dW3, "db3": db3,
                 "dA2": dA2, "dZ2": dZ2, "dW2": dW2, "db2": db2,
                 "dA1": dA1, "dZ1": dZ1, "dW1": dW1, "db1": db1}
    return gradients

def update_parameters(parameters, grads, learning_rate):
    for i in range(len(parameters)//2):
        parameters['W'+str(i+1)]=parameters['W'+str(i+1)]-learning_rate*grads['dW'+str(i+1)]
        parameters["b" + str(i+1)] = parameters["b" + str(i+1)] - learning_rate * grads["db" + str(i+1)]
    return parameters

def predict(X,y,parameters):
    m = X.shape[1]
    p = np.zeros((1,m), dtype = np.int64)
    a3,cache=forward_propagation(X,parameters)
    for i in range(0, a3.shape[1]):
        if a3[0,i] > 0.5:
            p[0,i] = 1
        else:
            p[0,i] = 0
    print("Accuracy: " + str(np.mean((p[0,:] == y[0,:]))))
    return p

def plot_decision_boundary(model, X, y):
    x_min, x_max = X[0, :].min() - 1, X[0, :].max() + 1
    y_min, y_max = X[1, :].min() - 1, X[1, :].max() + 1
    h = 0.01
    # np.arange generates an arithmetic sequence: np.arange(0, 6, 2) -> array([0, 2, 4])
    xx, yy = np.meshgrid(np.arange(x_min, x_max, h), np.arange(y_min, y_max, h))
    # Syntax: X, Y = numpy.meshgrid(x, y)
    # The inputs x and y are 1-D vectors of grid coordinates (not matrices);
    # the outputs X and Y are coordinate matrices.
    # In short: the Cartesian product of x and y, with the x parts stored in X and the y parts in Y.
    # np.r_ stacks arrays vertically (on top of each other); the column counts must match.
    # np.c_ stacks arrays side by side; the row counts must match.
    Z = model(np.c_[xx.ravel(), yy.ravel()])
    Z = Z.reshape(xx.shape)
    # (I still do not really understand matplotlib)
    plt.contourf(xx, yy, Z, cmap=plt.cm.Spectral)
    plt.ylabel('x2')
    plt.xlabel('x1')
    plt.scatter(X[0, :], X[1, :], c=np.squeeze(y), cmap=plt.cm.Spectral)
    plt.show()

def predict_dec(parameters, X):
    # I do not fully understand this part yet, skipping it for now
    a3, cache = forward_propagation(X, parameters)
    predictions = (a3>0.5)
    return predictions

parameters = model(train_X, train_Y)

print ("On the training set:")

predictions_train = predict(train_X, train_Y, parameters)

print ("On the test set:")

predictions_test = predict(test_X, test_Y, parameters)

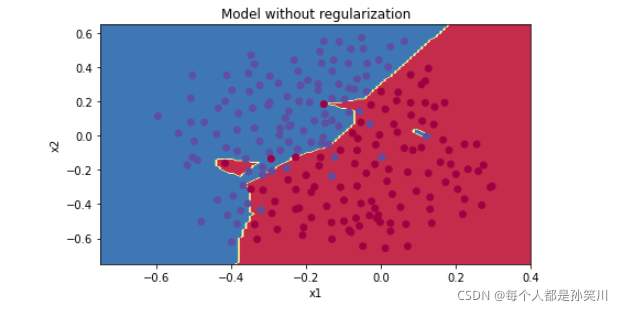

plt.title("Model without regularization")

axes = plt.gca()

axes.set_xlim([-0.75,0.40])

axes.set_ylim([-0.75,0.65])

# For background on lambda expressions, see https://www.cnblogs.com/James-221/p/13669049.html

plot_decision_boundary(lambda x: predict_dec(parameters, x.T), train_X, train_Y)

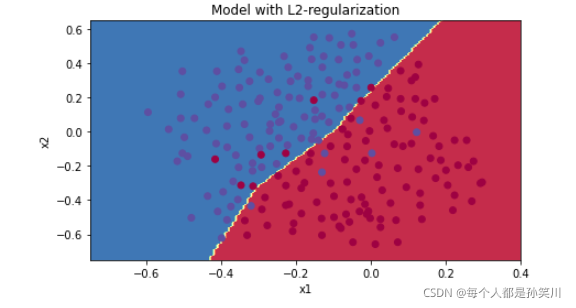
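
The lambda in the plotting call is an adapter: plot_decision_boundary hands it grid points with one point per row, and it transposes them before calling the prediction function. The sketch below uses a hypothetical stand-in called predict_dec_stub (not the article's predict_dec) so that it runs without a trained network.

```python
import numpy as np

def predict_dec_stub(parameters, X):
    # Hypothetical stand-in for predict_dec. Like the real function it
    # expects X with shape (2, N); it labels a point True when it lies
    # inside a circle of the given radius.
    inside = X[0] ** 2 + X[1] ** 2 < parameters["radius"] ** 2
    return inside.reshape(1, -1)

parameters = {"radius": 1.0}   # made-up "parameters" for the stub

# The lambda captures `parameters` from the enclosing scope and fixes the
# layout: it takes points as rows, (N, 2), and passes them on as columns.
model_fn = lambda x: predict_dec_stub(parameters, x.T)

grid_points = np.array([[0.0, 0.0], [2.0, 0.0], [0.5, 0.5]])   # shape (3, 2)
result = model_fn(grid_points)
print(result.shape)   # (1, 3): one prediction per point
print(result)
```

A named function would work just as well; the lambda only saves a few lines for a one-off wrapper.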

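L2 regularization

Once the cost function changes, backpropagation has to change too: all gradients must be computed with respect to the new cost.

The added penalty involves only the weight matrices W, so it is enough to add the gradient of the regularization term to dW1, dW2 and dW3.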
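
The extra gradient term is lambd/m * W, the derivative of the penalty lambd/(2m) * sum(W^2). As a sanity check, the sketch below compares that formula against a finite-difference estimate on a small random matrix. The sizes and the value m = 10 are arbitrary choices for the illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
W = rng.standard_normal((3, 2))   # small stand-in weight matrix
m, lambd = 10, 0.7                # arbitrary example count, lambd as in the article

def l2_penalty(W):
    # The regularization term added to the cost for one weight matrix.
    return lambd / (2 * m) * np.sum(np.square(W))

# Analytic gradient of the penalty, as added to dW in backpropagation.
analytic = lambd / m * W

# Central finite differences, one entry of W at a time.
eps = 1e-6
numeric = np.zeros_like(W)
for idx in np.ndindex(W.shape):
    W_plus, W_minus = W.copy(), W.copy()
    W_plus[idx] += eps
    W_minus[idx] -= eps
    numeric[idx] = (l2_penalty(W_plus) - l2_penalty(W_minus)) / (2 * eps)

print(np.max(np.abs(analytic - numeric)))   # tiny: the two gradients agree
```

The biases do not appear in the penalty, which is why db1, db2 and db3 keep their unregularized formulas.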

def compute_cost_with_regularization(A3, Y, parameters, lambd):
    m = Y.shape[1]
    W1 = parameters["W1"]
    W2 = parameters["W2"]
    W3 = parameters["W3"]
    cross_entropy_cost = compute_cost(A3, Y)
    # np.sum(np.square(Wl)) is the sum of the squares of all entries of the matrix
    L2_regularization_cost = (1./m*lambd/2)*(np.sum(np.square(W1)) + np.sum(np.square(W2)) + np.sum(np.square(W3)))
    cost = cross_entropy_cost + L2_regularization_cost
    return cost

def backward_propagation_with_regularization(X, Y, cache, lambd):
    m = X.shape[1]
    (Z1, A1, W1, b1, Z2, A2, W2, b2, Z3, A3, W3, b3) = cache
    dZ3 = A3 - Y
    dW3 = 1./m * np.dot(dZ3, A2.T) + lambd/m * W3
    db3 = 1./m * np.sum(dZ3, axis=1, keepdims = True)
    dA2 = np.dot(W3.T, dZ3)
    dZ2 = np.multiply(dA2, np.int64(A2 > 0))
    dW2 = 1./m * np.dot(dZ2, A1.T) + lambd/m * W2
    db2 = 1./m * np.sum(dZ2, axis=1, keepdims = True)
    dA1 = np.dot(W2.T, dZ2)
    dZ1 = np.multiply(dA1, np.int64(A1 > 0))
    dW1 = 1./m * np.dot(dZ1, X.T) + lambd/m * W1
    db1 = 1./m * np.sum(dZ1, axis=1, keepdims = True)
    gradients = {"dZ3": dZ3, "dW3": dW3, "db3": db3,
                 "dA2": dA2, "dZ2": dZ2, "dW2": dW2, "db2": db2,
                 "dA1": dA1, "dZ1": dZ1, "dW1": dW1, "db1": db1}
    return gradients

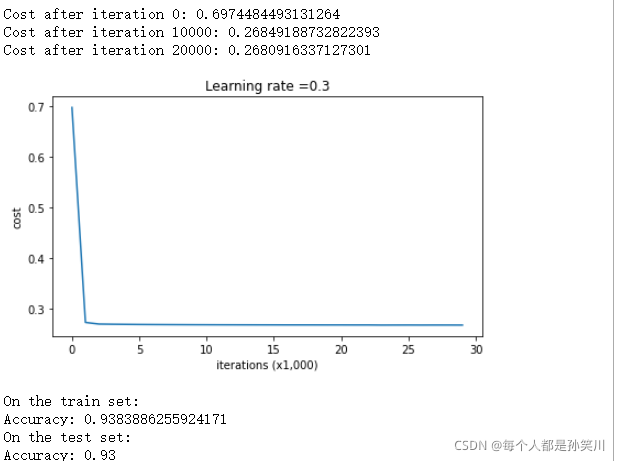

Training the model with lambd = 0.7

parameters = model(train_X, train_Y, lambd = 0.7)

print ("On the train set:")

predictions_train = predict(train_X, train_Y, parameters)

print ("On the test set:")

predictions_test = predict(test_X, test_Y, parameters)

plt.title("Model with L2-regularization")

axes = plt.gca()

axes.set_xlim([-0.75,0.40])

axes.set_ylim([-0.75,0.65])

plot_decision_boundary(lambda x: predict_dec(parameters, x.T), train_X, train_Y)

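Compared with the baseline network, variance is lower and the model no longer overfits the training data.

As lambd grows, the weights w have to shrink for the cost to stay low. Smaller w means smaller Z, so Z covers a narrower range of values and the network behaves closer to a linear function there, which makes the decision boundary smoother.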

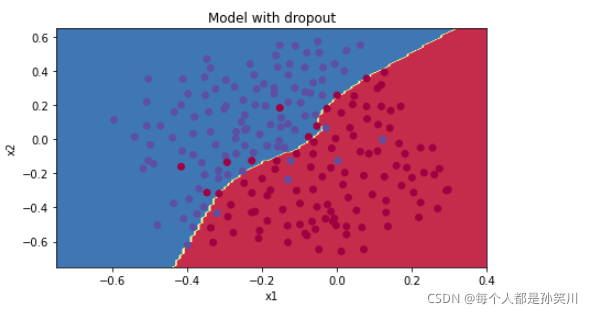
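
Another way to read the L2 term is as weight decay: each update first multiplies W by a factor slightly below 1 and then applies the usual gradient step. The sketch below checks that the two forms give the same result. The value m = 200 and the random matrices are assumptions for the illustration; learning_rate and lambd are the values used above.

```python
import numpy as np

learning_rate, lambd = 0.3, 0.7   # values used in the article
m = 200                           # hypothetical number of training examples

rng = np.random.default_rng(1)
W = rng.standard_normal((3, 2))
dW_data = rng.standard_normal((3, 2))   # stand-in for the cross-entropy part of dW

# Form 1: plain gradient step with the regularized gradient.
step_regularized = W - learning_rate * (dW_data + lambd / m * W)

# Form 2: shrink W first, then take the unregularized step.
decay = 1 - learning_rate * lambd / m
step_decay = decay * W - learning_rate * dW_data

print(decay)                                      # just below 1
print(np.allclose(step_regularized, step_decay))  # the two forms match
```

Over thousands of iterations this small shrink factor compounds, which is how the weights end up noticeably smaller than in the baseline model.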

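Dropout

Dropout randomly shuts off some neurons on each iteration.

In effect you train many different models, each using only a subset of the neurons. With dropout, a neuron becomes less sensitive to the activation of any one specific other neuron, because that neuron might be shut off at any time.

Implement forward propagation with dropout on the first and second hidden layers. Dropout is not applied to the input layer or the output layer.

Steps:

1. Create a matrix D with the same shape as A, filled with random values between 0 and 1.

2. Threshold D so that each entry becomes 1 with probability keep_prob and 0 otherwise: D = (D < keep_prob). Note that 0 and 1 correspond to False and True.

3. Set A = A * D to shut off some neurons.

4. Set A = A / keep_prob so that the expected value of the activations stays the same as without dropout. In other words, the influence of the dropped neurons is removed while that of the remaining ones is scaled up (this is known as inverted dropout).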
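
The four steps above can be sketched on a matrix of ones, which makes it easy to see that the scaling in step 4 keeps the average activation unchanged. The matrix size and keep_prob = 0.8 are arbitrary choices for the illustration.

```python
import numpy as np

rng = np.random.default_rng(1)
keep_prob = 0.8
A = np.ones((50, 2000))              # stand-in activations, all equal to 1

# Step 1: random matrix with the same shape as A, values in [0, 1).
D = rng.random(A.shape)
# Step 2: threshold, so each entry is True with probability keep_prob.
D = D < keep_prob
# Step 3: shut off the dropped neurons.
A_drop = A * D
# Step 4: scale the survivors up (inverted dropout).
A_drop = A_drop / keep_prob

print(D.mean())        # close to keep_prob
print(A_drop.mean())   # close to A.mean(), which is 1
```

Without step 4 the mean would drop to about keep_prob, and the next layer would see systematically smaller inputs during training than at test time.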

def forward_propagation_with_dropout(X, parameters, keep_prob = 0.5):
    np.random.seed(1)
    # retrieve parameters
    W1 = parameters["W1"]
    b1 = parameters["b1"]
    W2 = parameters["W2"]
    b2 = parameters["b2"]
    W3 = parameters["W3"]
    b3 = parameters["b3"]
    # LINEAR -> RELU -> LINEAR -> RELU -> LINEAR -> SIGMOID
    Z1 = np.dot(W1, X) + b1
    A1 = relu(Z1)
    D1 = np.random.rand(A1.shape[0],A1.shape[1])
    D1 = D1 < keep_prob
    A1 = A1 * D1
    A1 = A1 / keep_prob
    Z2 = np.dot(W2, A1) + b2
    A2 = relu(Z2)
    D2 = np.random.rand(A2.shape[0],A2.shape[1])
    D2 = D2 < keep_prob
    A2 = A2 * D2
    A2 = A2 / keep_prob
    Z3 = np.dot(W3, A2) + b3
    A3 = sigmoid(Z3)
    cache = (Z1, D1, A1, W1, b1, Z2, D2, A2, W2, b2, Z3, A3, W3, b3)
    return A3, cache

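Backward propagation:

1. The neurons of A1 that were shut off by D1 in the forward pass must be shut off again with the same D1 during backpropagation.

2. The forward pass divided A1 by keep_prob, so backpropagation must also divide dA1 by keep_prob (in calculus terms, if A1 is scaled by keep_prob, its derivative dA1 is scaled by the same keep_prob).

The tutorial says backpropagation needs the division by keep_prob as well. At first I felt it was unnecessary, since A had already been divided and dividing again looked like dividing twice. As far as I can tell, though, the two divisions act on different quantities: the forward pass scales the activation A1, and by the chain rule the gradient dA1 passing back through that same operation picks up the same factor D1 / keep_prob. Corrections from anyone more experienced are welcome.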
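
One way to settle the question about dividing by keep_prob in the backward pass is a numerical check. The sketch below treats dropout as the function A -> A * D / keep_prob, feeds its output into a simple linear "loss", and compares the gradient with respect to A against finite differences. The names upstream and loss_through_dropout are hypothetical and chosen for this illustration.

```python
import numpy as np

rng = np.random.default_rng(2)
keep_prob = 0.5
A = rng.standard_normal((3, 4))            # activations before dropout
D = rng.random(A.shape) < keep_prob        # fixed dropout mask
upstream = rng.standard_normal(A.shape)    # gradient arriving from the next layer

def loss_through_dropout(A):
    # Forward pass through inverted dropout, then a linear read-out so
    # that d(loss)/d(A_drop) equals `upstream`.
    A_drop = A * D / keep_prob
    return np.sum(upstream * A_drop)

# Backward rule from the article: mask, then divide by keep_prob.
analytic = upstream * D / keep_prob

# Central finite differences on the pre-dropout activations.
eps = 1e-6
numeric = np.zeros_like(A)
for idx in np.ndindex(A.shape):
    A_plus, A_minus = A.copy(), A.copy()
    A_plus[idx] += eps
    A_minus[idx] -= eps
    numeric[idx] = (loss_through_dropout(A_plus) - loss_through_dropout(A_minus)) / (2 * eps)

print(np.allclose(analytic, numeric, atol=1e-6))   # the rule with the division matches
print(np.allclose(upstream * D, numeric, atol=1e-6))   # without the division it does not
```

So the forward division rescales the activations, and the backward division is just the derivative of that same rescaling.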


def backward_propagation_with_dropout(X, Y, cache, keep_prob):
    m = X.shape[1]
    (Z1, D1, A1, W1, b1, Z2, D2, A2, W2, b2, Z3, A3, W3, b3) = cache
    dZ3 = A3 - Y
    dW3 = 1./m * np.dot(dZ3, A2.T)
    db3 = 1./m * np.sum(dZ3, axis=1, keepdims = True)
    dA2 = np.dot(W3.T, dZ3)
    dA2 = dA2 * D2
    dA2 = dA2 / keep_prob
    dZ2 = np.multiply(dA2, np.int64(A2 > 0))
    dW2 = 1./m * np.dot(dZ2, A1.T)
    db2 = 1./m * np.sum(dZ2, axis=1, keepdims = True)
    dA1 = np.dot(W2.T, dZ2)
    dA1 = dA1 * D1
    dA1 = dA1 / keep_prob
    dZ1 = np.multiply(dA1, np.int64(A1 > 0))
    dW1 = 1./m * np.dot(dZ1, X.T)
    db1 = 1./m * np.sum(dZ1, axis=1, keepdims = True)
    gradients = {"dZ3": dZ3, "dW3": dW3, "db3": db3,
                 "dA2": dA2, "dZ2": dZ2, "dW2": dW2, "db2": db2,
                 "dA1": dA1, "dZ1": dZ1, "dW1": dW1, "db1": db1}
    return gradients

parameters = model(train_X, train_Y, keep_prob = 0.86, learning_rate = 0.3)

print ("On the train set:")

predictions_train = predict(train_X, train_Y, parameters)

print ("On the test set:")

predictions_test = predict(test_X, test_Y, parameters)

plt.title("Model with dropout")

axes = plt.gca()

axes.set_xlim([-0.75,0.40])

axes.set_ylim([-0.75,0.65])

plot_decision_boundary(lambda x: predict_dec(parameters, x.T), train_X, train_Y)

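Use dropout only during training; do not use it at test time.

Regularization hurts performance on the training set, because it limits the network's ability to overfit the training data. In the end, though, it can give better test accuracy.

Comparing the three models

Closing thoughts

I did not do the third assignment (gradient checking). I am running out of time before the next group meeting, so I need to keep moving.

Whenever I run into matplotlib code I cannot follow it. I am not sure whether I should learn it properly; studying a single library on its own feels unnecessary, so I am torn.

I want to finish this material quickly and move on to PyTorch. Implementing every model detail by hand is a lot of work, so it will soon be back to calling libraries and tuning hyperparameters.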