Table of Contents

- 0. Preliminary notes

- 1. Installing Java 11

- 2. Cluster deployment

- 2.1 Installing ZooKeeper

- 2.2 Installing Kafka

- 2.3 Wrapping the startup scripts

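0. Preliminary notes

Since we only have a single Linux machine, this is a simple cluster simulated on one host: by editing the configuration files, the 3 Kafka brokers are started on 3 different ports (9091, 9092, 9093).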

1. Installing Java 11

- Switch to your working directory and run:

yum install java-11-openjdk -y

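- Add the environment variables (for example, by appending the two lines below to /etc/profile, which is sourced in the next step);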

export JAVA_HOME=/usr/lib/jvm/java-11-openjdk-11.0.23.0.9-3.tl3.x86_64

export PATH=$PATH:$JAVA_HOME/bin

- Make the environment variables take effect;

source /etc/profile

- Check that the installation succeeded;

java -version

openjdk version "11.0.23" 2024-04-16 LTS

OpenJDK Runtime Environment (Red_Hat-11.0.23.0.9-2) (build 11.0.23+9-LTS)

OpenJDK 64-Bit Server VM (Red_Hat-11.0.23.0.9-2) (build 11.0.23+9-LTS, mixed mode, sharing)
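The version check above can also be scripted. The following is a minimal sketch, assuming the version string has the form printed by `java -version` above; the helper name `java_major` is hypothetical and not part of any tool.

```shell
# java_major: print the major version from a Java version string.
# Handles the modern scheme ("11.0.23" -> 11) and the legacy one ("1.8.0_292" -> 8).
java_major() {
    v=$1
    case "$v" in
        1.*) v=${v#1.} ;;      # legacy scheme: drop the leading "1."
    esac
    printf '%s\n' "${v%%.*}"   # keep everything before the first dot
}

# Example: take the quoted version from the first line of `java -version`
# (which is printed to stderr) and compare it with 11, the version used here.
if command -v java >/dev/null 2>&1; then
    ver=$(java -version 2>&1 | sed -n '1s/.*"\(.*\)".*/\1/p')
    if [ "$(java_major "$ver")" -ge 11 ] 2>/dev/null; then
        echo "Java $ver is at least 11"
    else
        echo "Java $ver is older than 11 (or could not be parsed)"
    fi
fi
```

The function only parses a string, so it can be tried without Java installed, e.g. `java_major 11.0.23`.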

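2. Cluster deployment

- In your working directory, create a new directory named cluster and switch into it;

mkdir cluster

cd cluster

rz -E # upload the local installation package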

tar -zxf kafka_2.12-3.6.1.tgz

- Rename it to kafka;

mv kafka_2.12-3.6.1 kafka

2.1 Installing ZooKeeper

- Rename the folder to zookeeper;

Kafka ships with a bundled ZooKeeper, so the extracted package is used here as the ZooKeeper installation.

mv kafka/ zookeeper

- Edit the config/zookeeper.properties file;

cd zookeeper/

vim config/zookeeper.properties

- Change dataDir, the directory in which ZooKeeper stores its data.

# contributor license agreements. See the NOTICE file distributed with

# this work for additional information regarding copyright ownership.

# The ASF licenses this file to You under the Apache License, Version 2.0

# (the "License"); you may not use this file except in compliance with

# the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# the directory where the snapshot is stored.

dataDir=/root/cluster/zookeeper-data/zookeeper

# the port at which the clients will connect

clientPort=2181

# disable the per-ip limit on the number of connections since this is a non-production config

maxClientCnxns=0

# Disable the adminserver by default to avoid port conflicts.

# Set the port to something non-conflicting if choosing to enable this

admin.enableServer=false

# admin.serverPort=8080
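The dataDir change can also be made without opening an editor. Below is a minimal sketch, not the article's own procedure: `set_prop` is a hypothetical helper, it assumes the value contains neither `|` nor `&`, and the demonstration runs on a temporary stand-in file rather than on the real config.

```shell
# set_prop FILE KEY VALUE: replace the line "KEY=..." in FILE, or append it if absent.
set_prop() {
    file=$1 key=$2 value=$3
    if grep -q "^${key}=" "$file"; then
        # | is the sed delimiter because the value is a path containing slashes.
        sed "s|^${key}=.*|${key}=${value}|" "$file" > "$file.tmp" && mv "$file.tmp" "$file"
    else
        printf '%s=%s\n' "$key" "$value" >> "$file"
    fi
}

# Demonstration on a throwaway file holding two stand-in lines.
demo=$(mktemp)
printf 'dataDir=/tmp/zookeeper\nclientPort=2181\n' > "$demo"
set_prop "$demo" dataDir /root/cluster/zookeeper-data/zookeeper
cat "$demo"
```

Against the real file the call would presumably be `set_prop config/zookeeper.properties dataDir /root/cluster/zookeeper-data/zookeeper`, run from inside the zookeeper folder.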

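2.2 Installing Kafka

- Back in the cluster directory, extract the installation package again (the first copy is now named zookeeper) and rename it to broker-1;

cd ..

tar -zxf kafka_2.12-3.6.1.tgz

mv kafka_2.12-3.6.1/ broker-1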

ll

total 8

drwxr-xr-x 7 root root 4096 Nov 24 17:43 broker-1

drwxr-xr-x 7 root root 4096 Nov 24 17:43 zookeeper

- Edit the config/server.properties configuration file;

vim broker-1/config/server.properties

############################# Server Basics #############################

# The id of the broker. This must be set to a unique integer for each broker.

# Numeric identifier of this Kafka node; must be unique within the cluster

broker.id=1

#......

############################# Socket Server Settings #############################

# The address the socket server listens on. If not configured, the host name will be equal to the value of

# java.net.InetAddress.getCanonicalHostName(), with PLAINTEXT listener name, and port 9092.

# FORMAT:

# listeners = listener_name://host_name:port

# EXAMPLE:

# listeners = PLAINTEXT://your.host.name:9092

# Listener: 9091 is a local port; choose another one if it conflicts

listeners=PLAINTEXT://:9091

#.......

############################# Log Basics #############################

# A comma separated list of directories under which to store log files

# Data directory; it is created automatically if it does not exist

log.dirs=/root/cluster/broker-data/broker-1

# .......

############################# Zookeeper #############################

# Zookeeper connection string (see zookeeper docs for details).

# This is a comma separated host:port pairs, each corresponding to a zk

# server. e.g. "127.0.0.1:3000,127.0.0.1:3001,127.0.0.1:3002".

# You can also append an optional chroot string to the urls to specify the

# root directory for all kafka znodes.

# ZooKeeper connection address; 2181 is the default ZK port (a chroot such as /kafka could be appended as Kafka's root znode; it is not used here)

zookeeper.connect=localhost:2181

# Timeout in ms for connecting to zookeeper

zookeeper.connection.timeout.ms=18000

- Following the same steps, create broker-2 and broker-3 and modify their configuration files.

# broker-2

############################# Server Basics #############################

# The id of the broker. This must be set to a unique integer for each broker.

# Numeric identifier of this Kafka node; must be unique within the cluster

broker.id=2

#......

############################# Socket Server Settings #############################

# The address the socket server listens on. If not configured, the host name will be equal to the value of

# java.net.InetAddress.getCanonicalHostName(), with PLAINTEXT listener name, and port 9092.

# FORMAT:

# listeners = listener_name://host_name:port

# EXAMPLE:

# listeners = PLAINTEXT://your.host.name:9092

# Listener: 9092 is a local port; choose another one if it conflicts

listeners=PLAINTEXT://:9092

#.......

############################# Log Basics #############################

# A comma separated list of directories under which to store log files

# Data directory; it is created automatically if it does not exist

log.dirs=/root/cluster/broker-data/broker-2

# .......

############################# Zookeeper #############################

# Zookeeper connection string (see zookeeper docs for details).

# This is a comma separated host:port pairs, each corresponding to a zk

# server. e.g. "127.0.0.1:3000,127.0.0.1:3001,127.0.0.1:3002".

# You can also append an optional chroot string to the urls to specify the

# root directory for all kafka znodes.

# ZooKeeper connection address; 2181 is the default ZK port

zookeeper.connect=localhost:2181

# Timeout in ms for connecting to zookeeper

zookeeper.connection.timeout.ms=18000

# broker-3

############################# Server Basics #############################

# The id of the broker. This must be set to a unique integer for each broker.

# Numeric identifier of this Kafka node; must be unique within the cluster

broker.id=3

#......

############################# Socket Server Settings #############################

# The address the socket server listens on. If not configured, the host name will be equal to the value of

# java.net.InetAddress.getCanonicalHostName(), with PLAINTEXT listener name, and port 9092.

# FORMAT:

# listeners = listener_name://host_name:port

# EXAMPLE:

# listeners = PLAINTEXT://your.host.name:9092

# Listener: 9093 is a local port; choose another one if it conflicts

listeners=PLAINTEXT://:9093

#.......

############################# Log Basics #############################

# A comma separated list of directories under which to store log files

# Data directory; it is created automatically if it does not exist

log.dirs=/root/cluster/broker-data/broker-3

# .......

############################# Zookeeper #############################

# Zookeeper connection string (see zookeeper docs for details).

# This is a comma separated host:port pairs, each corresponding to a zk

# server. e.g. "127.0.0.1:3000,127.0.0.1:3001,127.0.0.1:3002".

# You can also append an optional chroot string to the urls to specify the

# root directory for all kafka znodes.

# ZooKeeper connection address; 2181 is the default ZK port

zookeeper.connect=localhost:2181

# Timeout in ms for connecting to zookeeper

zookeeper.connection.timeout.ms=18000
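The three broker configurations above differ only in broker.id, the listener port and log.dirs, so the files for broker-2 and broker-3 could be derived from broker-1's file instead of being edited by hand. The sketch below is one way to do that under the article's layout (port 9090+N, log directory broker-data/broker-N); `derive_broker_config` is a hypothetical helper, and the demonstration uses a temporary file that contains only the relevant lines.

```shell
# derive_broker_config SRC N: print SRC (broker-1's server.properties) rewritten for broker N.
# Assumes the article's layout: broker.id=N, port 9090+N, log dir .../broker-data/broker-N.
derive_broker_config() {
    src=$1 n=$2
    port=$((9090 + n))
    sed -e "s|^broker\.id=.*|broker.id=${n}|" \
        -e "s|^listeners=.*|listeners=PLAINTEXT://:${port}|" \
        -e "s|^log\.dirs=.*|log.dirs=/root/cluster/broker-data/broker-${n}|" \
        "$src"
}

# Demonstration on a temporary file holding only the lines of interest.
src=$(mktemp)
cat > "$src" <<'EOF'
broker.id=1
listeners=PLAINTEXT://:9091
log.dirs=/root/cluster/broker-data/broker-1
zookeeper.connect=localhost:2181
EOF
derive_broker_config "$src" 2
derive_broker_config "$src" 3
```

In the real layout one would presumably copy the folder first (`cp -r broker-1 broker-2`) and redirect the function's output to broker-2/config/server.properties, reading from broker-1's file so that source and destination differ.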

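2.3 Wrapping the startup scripts

ZooKeeper must be running before Kafka starts, and the Kafka cluster has several nodes to start, so the startup procedure is tedious. Here we wrap the startup commands in scripts.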

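- In the zookeeper folder, create the zk.sh script;

cd zookeeper

vim zk.sh

## zk.sh

# -daemon starts ZooKeeper in the background so that the calling script is not blocked

./bin/zookeeper-server-start.sh -daemon config/zookeeper.properties

chmod +x zk.sh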

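- In each of the broker-1, broker-2 and broker-3 folders, create a kfk.sh script;

cd ../broker-1

vim kfk.sh

## kfk.sh

# -daemon starts the broker in the background so that the calling script is not blocked

./bin/kafka-server-start.sh -daemon config/server.properties

chmod +x kfk.sh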

- In the cluster folder, create the cluster.sh script, which starts the Kafka cluster.

cd ..

vim cluster.sh

# cluster.sh

cd zookeeper

./zk.sh

cd ../broker-1

./kfk.sh

cd ../broker-2

./kfk.sh

cd ../broker-3

./kfk.sh

chmod +x cluster.sh
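The same start order (ZooKeeper first, then each broker) can be written as a loop. This is only a sketch: `start_cluster` and the `DRY_RUN` switch are hypothetical names, and the real run assumes that each zk.sh / kfk.sh returns promptly (for example because the server is started in the background). The demonstration runs in dry-run mode and only prints the commands.

```shell
# start_cluster BASE: start ZooKeeper first, then each broker, from directory BASE.
# With DRY_RUN=1 the commands are only printed instead of executed.
start_cluster() {
    base=$1
    for entry in zookeeper:zk.sh broker-1:kfk.sh broker-2:kfk.sh broker-3:kfk.sh; do
        dir=${entry%%:*}      # part before the colon: directory name
        script=${entry#*:}    # part after the colon: script to run inside it
        if [ "${DRY_RUN:-0}" = 1 ]; then
            echo "(cd $base/$dir && ./$script)"
        else
            # The subshell keeps the caller's working directory unchanged.
            (cd "$base/$dir" && "./$script") || return 1
        fi
    done
}

# Demonstration: print what would be run for the article's layout.
DRY_RUN=1
start_cluster /root/cluster
```

Running each step in a subshell avoids the chain of `cd ../broker-N` calls and stops at the first script that fails.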

- In the cluster folder, create the cluster-clear.sh script, which cleans up and resets the Kafka data.

vim cluster-clear.sh

# cluster-clear.sh

rm -rf zookeeper-data

rm -rf broker-data

chmod +x cluster-clear.sh

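- In the cluster directory, run ./cluster.sh to start the cluster.

# Start the cluster

./cluster.sh

# Check whether startup succeeded: if the data directories were created, the cluster has started

ll zookeeper-data/

total 4

drwxr-xr-x 3 root root 4096 May 27 10:20 zookeeper

ll broker-data/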

total 12

drwxr-xr-x 2 root root 4096 May 27 10:21 broker-1

drwxr-xr-x 2 root root 4096 May 27 10:21 broker-2

drwxr-xr-x 2 root root 4096 May 27 10:21 broker-3

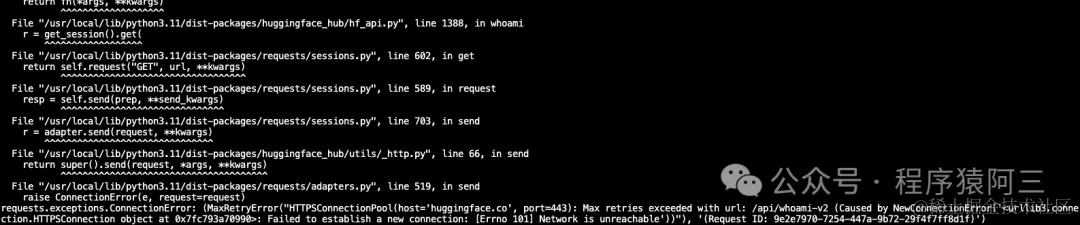
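The directory check above can be wrapped in a small function. This is a sketch with a hypothetical name, `cluster_data_ready`; like the manual check, it only tests that the data directories exist, which indicates that the processes started writing but does not prove that the brokers are healthy. The demonstration uses a scratch directory that mimics the layout.

```shell
# cluster_data_ready BASE: succeed only if every expected data directory exists under BASE.
cluster_data_ready() {
    base=$1
    for d in zookeeper-data/zookeeper broker-data/broker-1 broker-data/broker-2 broker-data/broker-3; do
        if [ ! -d "$base/$d" ]; then
            echo "missing: $base/$d"
            return 1
        fi
    done
    echo "all data directories present under $base"
}

# Demonstration against a scratch directory that mimics the layout after startup.
scratch=$(mktemp -d)
mkdir -p "$scratch/zookeeper-data/zookeeper" \
         "$scratch/broker-data/broker-1" "$scratch/broker-data/broker-2"
cluster_data_ready "$scratch" || true   # broker-3 is still missing at this point
mkdir -p "$scratch/broker-data/broker-3"
cluster_data_ready "$scratch"
```

For the real cluster the call would be `cluster_data_ready /root/cluster`.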

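- When we want to shut the cluster down, cleaning up the ZooKeeper and Kafka data files is not enough: the ZooKeeper process and the Kafka processes must also be ended with kill -9, which has to be done by hand, one process at a time.

ps axj | grep zookeeper

kill -9 <zookeeper PID>

ps axj | grep kafka

kill -9 <PIDs of the 3 kafka nodes>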
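Picking the PIDs out of the `ps axj` output by eye can be avoided with a small filter. The sketch below assumes that the PID is the second column of `ps axj` and that the processes can be recognised by their Java main class (`kafka.Kafka` for a broker, `QuorumPeerMain` for ZooKeeper); `pids_matching` is a hypothetical helper, and the sample lines and PIDs are made up for the demonstration.

```shell
# pids_matching PATTERN: read `ps axj` output on stdin and print the PID (2nd column)
# of every line that contains PATTERN, skipping any grep process.
pids_matching() {
    awk -v pat="$1" 'index($0, pat) && !index($0, "grep") { print $2 }'
}

# Demonstration on canned ps output (PPID PID ... COMMAND); the PIDs are invented.
sample='    1  4321  4321  4321 ?  -1 Sl  0  0:05 java ... org.apache.zookeeper.server.quorum.QuorumPeerMain config/zookeeper.properties
    1  4400  4400  4400 ?  -1 Sl  0  0:09 java ... kafka.Kafka config/server.properties
 2000  4500  4500  2000 pts/0 4500 S+ 0 0:00 grep --color=auto kafka'
printf '%s\n' "$sample" | pids_matching kafka.Kafka
```

On a live system the filter would presumably be used as `ps axj | pids_matching kafka.Kafka`, with the printed PIDs then passed to kill.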