Contents

I. Preparation

II. Container runtime

III. Installing kubelet, kubeadm, and kubectl

IV. Configuring the CNI

V. Installing nginx

I. Preparation

1. Update the yum packages and install tools such as vim and net-tools (run on every node)

yum update -y

yum install vim -y

yum install net-tools -y

2. Add every node to /etc/hosts so that the nodes can resolve and ping one another by hostname (run on every node)

vi /etc/hosts

Add the following entries, using your own nodes' IP addresses:

192.168.178.141 master

192.168.178.150 node1

192.168.178.151 node2

3. Install a time-synchronization service so that all nodes keep the same clock (run on every node)

yum install -y chrony

systemctl start chronyd

systemctl enable chronyd

4. Disable the firewall (run on every node). This is done here only for convenience; in production, open the required ports instead.

systemctl stop firewalld && systemctl disable firewalld

5. Disable swap (run on every node)

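# Turn swap off now, then comment out the swap entry in /etc/fstab so it stays off after a reboot

swapoff -a

sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab

6. Disable SELinux (run on every node)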

sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config && setenforce 0

7. Enable IPv4 forwarding and let iptables see bridged traffic

cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf

overlay

br_netfilter

EOF

sudo modprobe overlay

sudo modprobe br_netfilter

# Set the required sysctl parameters; they persist across reboots

cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.ipv4.ip_forward = 1

EOF

# Apply the sysctl parameters without rebooting

sudo sysctl --system

# Check that the modules are loaded

lsmod | grep br_netfilter

lsmod | grep overlay

# Check that the parameters are set (each should be 1)

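sysctl net.bridge.bridge-nf-call-iptables net.bridge.bridge-nf-call-ip6tables net.ipv4.ip_forward

8. Configure IPVS support (run on every node). This step only installs the tools and loads the kernel modules; kube-proxy keeps using iptables mode unless its mode is set to ipvs.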

# Install

yum install ipset ipvsadm -y

# Configure

cat <<EOF > /etc/sysconfig/modules/ipvs.modules

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

EOF
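The heredoc above loads nf_conntrack_ipv4, which exists on the 3.10 kernel shipped with CentOS 7. Starting with Linux 4.19 that module was merged into nf_conntrack, so the same modprobe line fails on newer kernels. A minimal sketch that tries the old name first and falls back to the new one; the function name load_conntrack is hypothetical, and the idea is to paste it into ipvs.modules and call it in place of the nf_conntrack_ipv4 line:

```shell
# Hypothetical helper: load whichever conntrack module this kernel provides.
# Assumption: kernels older than 4.19 ship nf_conntrack_ipv4, newer ones only nf_conntrack.
load_conntrack() {
    for mod in nf_conntrack_ipv4 nf_conntrack; do
        # Try each name quietly; stop at the first one modprobe accepts
        if modprobe -- "$mod" 2>/dev/null; then
            echo "$mod"
            return 0
        fi
    done
    # Neither module could be loaded
    return 1
}
```

Calling load_conntrack prints the name of the module it actually loaded, which is also the name to grep for in the lsmod check.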

# Make it executable

chmod +x /etc/sysconfig/modules/ipvs.modules

# Run it

sh +x /etc/sysconfig/modules/ipvs.modules

# Check

lsmod | grep -e ip_vs -e nf_conntrack_ipv4

9. Reboot the server so that the SELinux change takes effect

reboot

II. Container runtime

Kubernetes supports four container runtimes; this guide installs containerd (install it on every node).

# Add the Docker CE yum repository (the containerd.io package is published there)

curl -L -o /etc/yum.repos.d/docker-ce.repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Install containerd

yum install -y containerd.io

# Generate the default configuration file

containerd config default > /etc/containerd/config.toml

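# Pull the pause (sandbox) image from the Aliyun mirror; without this the default registry cannot be reached. If images still fail to download, check that the replacement took effect: cat /etc/containerd/config.toml |grep sandbox_image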

sed -i "s#registry.k8s.io/pause#registry.aliyuncs.com/google_containers/pause#g" /etc/containerd/config.toml

# Use the systemd cgroup driver

sed -i 's/SystemdCgroup = false/SystemdCgroup = true/g' /etc/containerd/config.toml

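# Use an Aliyun registry mirror for docker.io (3kcpregv.mirror.aliyuncs.com is the author's personal accelerator address; substitute your own)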

sed -i '/\[plugins\."io\.containerd\.grpc\.v1\.cri"\.registry\.mirrors\]/a\ [plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]\n endpoint = ["https://3kcpregv.mirror.aliyuncs.com" ,"https://registry-1.docker.io"]' /etc/containerd/config.toml

# Restart the service

systemctl daemon-reload

systemctl enable --now containerd

systemctl restart containerd
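Before moving on, it can be worth confirming that the sed edits actually changed config.toml: the sandbox_image default may differ between containerd releases, and the first sed only matches registry.k8s.io/pause. A minimal sketch, assuming the key layout produced by containerd config default (lines of the form sandbox_image = "..." and SystemdCgroup = true); check_containerd_config is a hypothetical helper name:

```shell
# Hypothetical helper: verify the SystemdCgroup and sandbox_image edits in a containerd config.
# Assumption: the file was generated by `containerd config default` and then edited by the seds above.
check_containerd_config() {
    cfg=${1:-/etc/containerd/config.toml}
    ok=0
    # The systemd cgroup driver must be enabled for the runc runtime
    if grep -Eq '^[[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*true' "$cfg"; then
        echo "SystemdCgroup: true"
    else
        echo "SystemdCgroup: NOT true" >&2
        ok=1
    fi
    # Extract the quoted value of sandbox_image (empty if the key is missing)
    sandbox=$(grep -E '^[[:space:]]*sandbox_image[[:space:]]*=' "$cfg" | sed -E 's/.*=[[:space:]]*"([^"]*)".*/\1/')
    case $sandbox in
        registry.aliyuncs.com/google_containers/pause*)
            echo "sandbox_image: $sandbox" ;;
        *)
            echo "sandbox_image not rewritten: ${sandbox:-<missing>}" >&2
            ok=1 ;;
    esac
    return $ok
}
```

Run with no argument, it checks /etc/containerd/config.toml and returns non-zero, printing the offending setting to stderr, if either edit is missing.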

III. Installing kubelet, kubeadm, and kubectl

1. Add the Kubernetes yum repository and install crictl

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

setenforce 0

# Install the crictl tool

yum install -y cri-tools

# Generate the configuration file

crictl config runtime-endpoint

# Edit the configuration file

cat << EOF | tee /etc/crictl.yaml

runtime-endpoint: "unix:///run/containerd/containerd.sock"

image-endpoint: "unix:///run/containerd/containerd.sock"

timeout: 10

debug: false

pull-image-on-create: false

disable-pull-on-run: false

EOF

# Check that it works; crictl commands are similar to docker's

crictl info

crictl images

2. Install kubelet, kubeadm, and kubectl

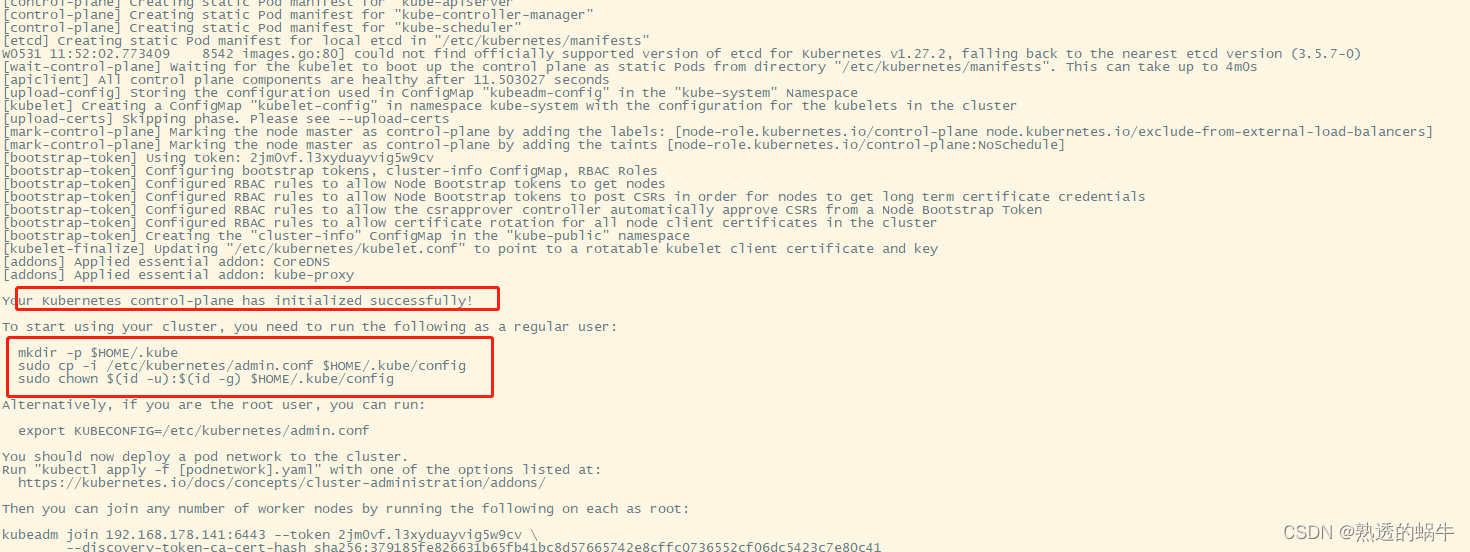

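yum install --setopt=obsoletes=0 kubelet-1.27.2-0 kubeadm-1.27.2-0 kubectl-1.27.2-0 -y

systemctl enable kubelet && systemctl start kubelet

Until kubeadm init runs, kubelet restarts every few seconds because it has no configuration yet; this is expected.

3. Initialize the cluster (on the master node only)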

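kubeadm init \
  --apiserver-advertise-address=192.168.178.141 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version v1.27.2 \
  --service-cidr=10.96.0.0/12 \
  --pod-network-cidr=10.244.0.0/16 \
  --ignore-preflight-errors=all

--ignore-preflight-errors=all turns every preflight error into a warning, so problems such as swap still being on will not stop the installation; drop it if you want kubeadm to catch them. If initialization fails, reset with: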

kubeadm reset

rm -fr ~/.kube/ /etc/kubernetes/* /var/lib/etcd/*
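The kubeadm init flags above can also be kept in a configuration file, which is easier to review and reuse than a long flag list. A minimal sketch, assuming the kubeadm.k8s.io/v1beta3 config API that kubeadm 1.27 reads; the function name write_kubeadm_config and the output file name are illustrative, and the values are copied from the command above:

```shell
# Hypothetical helper: write a kubeadm config equivalent to the kubeadm init flags above.
# Usage: write_kubeadm_config <output-file> <advertise-address>
# Assumption: kubeadm 1.27, which accepts the kubeadm.k8s.io/v1beta3 API.
write_kubeadm_config() {
    out=$1
    addr=$2
    cat > "$out" <<EOF
apiVersion: kubeadm.k8s.io/v1beta3
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: ${addr}
  bindPort: 6443
---
apiVersion: kubeadm.k8s.io/v1beta3
kind: ClusterConfiguration
kubernetesVersion: v1.27.2
imageRepository: registry.aliyuncs.com/google_containers
networking:
  serviceSubnet: 10.96.0.0/12
  podSubnet: 10.244.0.0/16
---
# kubeadm already defaults to systemd; stated explicitly to match SystemdCgroup = true in containerd
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
cgroupDriver: systemd
EOF
}
```

For example, write_kubeadm_config kubeadm-config.yaml 192.168.178.141 followed by kubeadm init --config kubeadm-config.yaml. kubeadm refuses to mix --config with most of the individual flags used above, so pick one style or the other.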

After kubeadm init succeeds, run the following on the master node so that kubectl can reach the cluster:

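mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

On each worker node, run the join command to add it to the cluster. The token and hash below come from the author's run; use the kubeadm join command printed at the end of your own kubeadm init output, or print a fresh one on the master with kubeadm token create --print-join-command (tokens expire after 24 hours by default).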

kubeadm join 192.168.178.141:6443 --token 2jm0vf.l3xyduayvig5w9cv \
  --discovery-token-ca-cert-hash sha256:379185fe826631b65fb41bc8d57665742e8cffc0736552cf06dc5423c7e80c41

IV. Configuring the CNI

1. Create kube-flannel.yml (on the master node)

cat > kube-flannel.yml << EOF

---
kind: Namespace
apiVersion: v1
metadata:
  name: kube-flannel
  labels:
    pod-security.kubernetes.io/enforce: privileged
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-flannel
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-flannel
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        #image: flannelcni/flannel-cni-plugin:v1.1.0 for ppc64le and mips64le (dockerhub limitations may apply)
        image: docker.io/rancher/mirrored-flannelcni-flannel-cni-plugin:v1.1.0
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        #image: flannelcni/flannel:v0.19.0 for ppc64le and mips64le (dockerhub limitations may apply)
        image: docker.io/rancher/mirrored-flannelcni-flannel:v0.19.0
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        #image: flannelcni/flannel:v0.19.0 for ppc64le and mips64le (dockerhub limitations may apply)
        image: docker.io/rancher/mirrored-flannelcni-flannel:v0.19.0
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: EVENT_QUEUE_DEPTH
          value: "5000"
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: xtables-lock
          mountPath: /run/xtables.lock
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      - name: xtables-lock
        hostPath:
          path: /run/xtables.lock
          type: FileOrCreate

EOF

2. Apply the manifest

kubectl apply -f kube-flannel.yml

3. Check the nodes (wait 1-2 minutes for them to become Ready)

kubectl get nodes

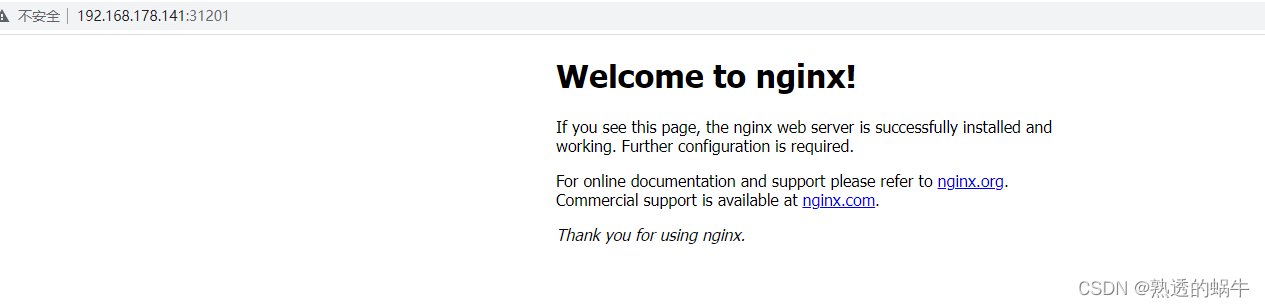
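If nodes stay NotReady, one thing worth checking is that the Network value in flannel's net-conf.json matches the --pod-network-cidr passed to kubeadm init; both are 10.244.0.0/16 in this guide. A small sketch that compares them, assuming the kube-flannel.yml file created above; flannel_network and check_pod_cidr are hypothetical helper names:

```shell
# Hypothetical helper: print the "Network" value from net-conf.json inside a flannel manifest.
flannel_network() {
    grep -o '"Network": *"[^"]*"' "${1:-kube-flannel.yml}" | head -n 1 | sed 's/.*"\([^"]*\)"$/\1/'
}

# Hypothetical helper: compare that value with the pod CIDR given to kubeadm init.
# Usage: check_pod_cidr <manifest> <pod-cidr>
check_pod_cidr() {
    manifest=$1
    cidr=$2
    net=$(flannel_network "$manifest")
    if [ "$net" = "$cidr" ]; then
        echo "OK: flannel Network matches $cidr"
    else
        echo "Mismatch: flannel Network is '${net}', pod CIDR is '${cidr}'" >&2
        return 1
    fi
}
```

For example, check_pod_cidr kube-flannel.yml 10.244.0.0/16. The subnets the cluster actually assigned to each node can be listed with kubectl get nodes -o jsonpath='{.items[*].spec.podCIDR}'.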

V. Installing nginx

1. On the master node, create an nginx Deployment

kubectl create deployment nginx --image=nginx

2. Expose the port through a NodePort Service

kubectl expose deployment nginx --port=80 --type=NodePort

3. Check the running Pod and Service

kubectl get pods,service

4. Access nginx

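Open the node IP plus the Service's NodePort in a browser, for example http://192.168.178.141:31201 (31201 is the NodePort assigned to the nginx Service in the author's run).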
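By default Kubernetes picks the NodePort from the 30000-32767 range, so your port will usually differ from 31201, and every node (not only the master) listens on it. The port appears in the PORT(S) column of kubectl get service as 80:31201/TCP. A small sketch that pulls the port out of that column and builds the URL; node_port_from_ports and nginx_url are hypothetical helper names:

```shell
# Hypothetical helper: extract the NodePort from a PORT(S) value such as "80:31201/TCP".
node_port_from_ports() {
    p=${1#*:}        # drop the service port: "31201/TCP"
    echo "${p%%/*}"  # drop the protocol:     "31201"
}

# Hypothetical helper: build the URL to open, http://<node-ip>:<node-port>/
nginx_url() {
    echo "http://$1:$(node_port_from_ports "$2")/"
}

# Example against a running cluster (PORT(S) is the 5th column of kubectl get service):
#   ports=$(kubectl get service nginx --no-headers | awk '{print $5}')
#   curl "$(nginx_url 192.168.178.141 "$ports")"
# kubectl can also print the port directly:
#   kubectl get service nginx -o jsonpath='{.spec.ports[0].nodePort}'
```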

Reference: https://blog.csdn.net/qq_36765991/article/details/128702891