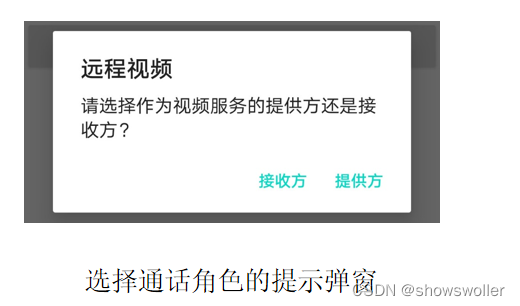


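I. Integrating the WebRTC open-source library

Integrating the WebRTC open-source library takes the following steps:

(1) Add the WebRTC dependency to the build.gradle of the App module;

(2) Have the app request the audio recording and camera permissions, as well as the internet permission;

(3) Configure the STUN/TURN server information in code and register it as the ICE servers from which ICE candidates are gathered;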
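Step (3) can be sketched in plain Java. This is only an illustration under assumptions: the article does not show what `ChatConst.getIceServerList()` contains, so `IceServerEntry`, `IceServerDefaults`, the TURN host `turn.example.com` and its credentials are all hypothetical placeholders. In the real app each entry would be turned into a `PeerConnection.IceServer`, for example with `PeerConnection.IceServer.builder(url).setUsername(...).setPassword(...).createIceServer()`.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical plain-Java description of one ICE server (not part of the original project). */
final class IceServerEntry {
    final String url;
    final String username;   // empty for STUN
    final String credential; // empty for STUN

    IceServerEntry(String url, String username, String credential) {
        this.url = url;
        this.username = username;
        this.credential = credential;
    }

    /** TURN servers relay media, so they are the entries that normally need credentials. */
    boolean isTurn() {
        return url.startsWith("turn:") || url.startsWith("turns:");
    }

    boolean isStun() {
        return url.startsWith("stun:") || url.startsWith("stuns:");
    }

    /** A STUN entry only needs a URL; a TURN entry also needs a username and a credential. */
    boolean isUsable() {
        boolean hasCredentials = !username.isEmpty() && !credential.isEmpty();
        return isStun() || (isTurn() && hasCredentials);
    }
}

final class IceServerDefaults {
    private IceServerDefaults() {}

    static List<IceServerEntry> list() {
        List<IceServerEntry> servers = new ArrayList<>();
        // Public STUN server operated by Google.
        servers.add(new IceServerEntry("stun:stun.l.google.com:19302", "", ""));
        // Placeholder TURN server: host, user name and password are assumptions.
        servers.add(new IceServerEntry("turn:turn.example.com:3478", "demo-user", "demo-pass"));
        return servers;
    }
}
```

Checking `isUsable()` before building the real list is a cheap way to catch a TURN entry that was configured without credentials, which would otherwise only show up as a call that never connects across restrictive networks.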

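What the Peer object implements

Every device that joins a WebRTC session has its own Peer object, and all operations on the peer-to-peer connection go through it. The Peer object mainly does the following:

(1) It initializes the peer-to-peer connection from the connection factory, the media stream and the ICE servers.

(2) It implements the PeerConnection.Observer interface, mainly overriding onIceCandidate and onAddStream. The former is called back when a new ICE candidate has been gathered, the latter when a media stream is added.

(3) It implements the SdpObserver interface, mainly overriding onCreateSuccess, which is called back once the session description (SDP) has been created. At that point the Peer must set the local session description on the connection and also send this description of its media capabilities to the signalling server.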
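The activities later in this article read two kinds of signalling messages: `SdpInfo` with the keys `type` and `description`, and `IceInfo` with the keys `id`, `label` and `candidate`. The sketch below builds matching payloads in plain Java. It is an assumption that the Peer class sends exactly these shapes (its source is not shown here), and `SignalPayloads` is a hypothetical helper. On Android one would normally use `org.json.JSONObject` instead of the hand-written serializer.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical helper (not from the original project) that builds signalling payloads. */
final class SignalPayloads {
    private SignalPayloads() {}

    /** Payload for the "SdpInfo" event; type is "offer" or "answer". */
    static String sdpInfo(String type, String description) {
        Map<String, Object> fields = new LinkedHashMap<>();
        fields.put("type", type);
        fields.put("description", description); // the raw SDP text
        return toJson(fields);
    }

    /** Payload for the "IceInfo" event; the keys map onto the IceCandidate constructor. */
    static String iceInfo(String sdpMid, int sdpMLineIndex, String candidate) {
        Map<String, Object> fields = new LinkedHashMap<>();
        fields.put("id", sdpMid);
        fields.put("label", sdpMLineIndex);
        fields.put("candidate", candidate);
        return toJson(fields);
    }

    /** Serializes a flat map of strings and numbers into a JSON object. */
    static String toJson(Map<String, Object> fields) {
        StringBuilder sb = new StringBuilder("{");
        for (Map.Entry<String, Object> e : fields.entrySet()) {
            if (sb.length() > 1) sb.append(',');
            sb.append('"').append(escape(e.getKey())).append("\":");
            Object v = e.getValue();
            if (v instanceof Number) sb.append(v);
            else sb.append('"').append(escape(String.valueOf(v))).append('"');
        }
        return sb.append('}').toString();
    }

    /** Escapes quotes, backslashes and control characters (SDP text contains CR LF). */
    static String escape(String s) {
        StringBuilder sb = new StringBuilder();
        for (char c : s.toCharArray()) {
            switch (c) {
                case '"': sb.append("\\\""); break;
                case '\\': sb.append("\\\\"); break;
                case '\n': sb.append("\\n"); break;
                case '\r': sb.append("\\r"); break;
                case '\t': sb.append("\\t"); break;
                default:
                    if (c < 0x20) sb.append(String.format("\\u%04x", (int) c));
                    else sb.append(c);
            }
        }
        return sb.toString();
    }
}
```

The escaping step matters because an SDP body is multi-line text; sending it unescaped would produce invalid JSON on the receiving side.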

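II. Implementing the WebRTC offerer

Initializing the offerer's audio/video media stream involves three tasks:

(1) Create and initialize the video capturer so that the camera delivers live video frames;

(2) Create the audio/video media stream and add the audio track and then the video track to it;

(3) Set our own rendering layer on the video track, that is, attach the SurfaceViewRenderer control;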
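Task (1) starts with choosing a camera. The selection order used by the activity (front camera first, otherwise any other camera) can be sketched in plain Java. `CameraPicker` and its parameters are hypothetical stand-ins for WebRTC's `CameraEnumerator` (`getDeviceNames()` and `isFrontFacing(name)`), and the sketch ignores the case where creating the capturer itself fails.

```java
import java.util.List;
import java.util.Optional;
import java.util.function.Predicate;

/** Hypothetical helper that mirrors the front-then-back camera selection order. */
final class CameraPicker {
    private CameraPicker() {}

    /** Returns the first front-facing device, else the first other device, else empty. */
    static Optional<String> pick(List<String> deviceNames, Predicate<String> isFrontFacing) {
        // First pass: prefer a front-facing camera.
        for (String name : deviceNames) {
            if (isFrontFacing.test(name)) {
                return Optional.of(name);
            }
        }
        // Second pass: fall back to a camera that is not front-facing.
        for (String name : deviceNames) {
            if (!isFrontFacing.test(name)) {
                return Optional.of(name);
            }
        }
        return Optional.empty(); // the device has no camera at all
    }
}
```

Returning an `Optional` makes the no-camera case explicit. The activity code returns null in that situation, so a caller that goes straight on to use the capturer would need a null check.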

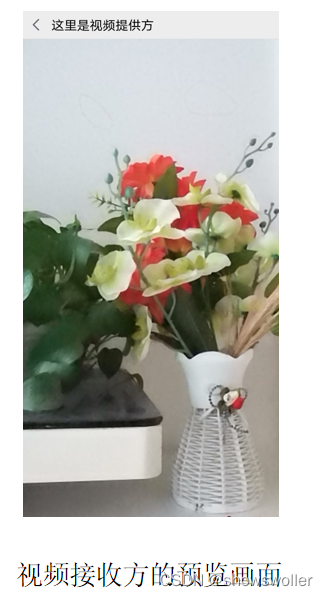

The offerer's screen looks as follows.

The code is as follows:

    package com.example.live;

    import androidx.appcompat.app.AppCompatActivity;

    import android.os.Bundle;
    import android.util.Log;
    import android.widget.TextView;

    import com.example.live.bean.ContactInfo;
    import com.example.live.constant.ChatConst;
    import com.example.live.util.SocketUtil;
    import com.example.live.webrtc.Peer;
    import com.example.live.webrtc.ProxyVideoSink;

    import org.json.JSONException;
    import org.json.JSONObject;
    import org.webrtc.AudioSource;
    import org.webrtc.AudioTrack;
    import org.webrtc.Camera1Enumerator;
    import org.webrtc.Camera2Enumerator;
    import org.webrtc.CameraEnumerator;
    import org.webrtc.DefaultVideoDecoderFactory;
    import org.webrtc.DefaultVideoEncoderFactory;
    import org.webrtc.EglBase;
    import org.webrtc.IceCandidate;
    import org.webrtc.MediaConstraints;
    import org.webrtc.MediaStream;
    import org.webrtc.PeerConnection;
    import org.webrtc.PeerConnectionFactory;
    import org.webrtc.RendererCommon;
    import org.webrtc.SessionDescription;
    import org.webrtc.SurfaceTextureHelper;
    import org.webrtc.SurfaceViewRenderer;
    import org.webrtc.VideoCapturer;
    import org.webrtc.VideoDecoderFactory;
    import org.webrtc.VideoEncoderFactory;
    import org.webrtc.VideoSource;
    import org.webrtc.VideoTrack;
    import org.webrtc.audio.AudioDeviceModule;
    import org.webrtc.audio.JavaAudioDeviceModule;

    import java.util.List;

    import io.socket.client.Socket;

    public class VideoOfferActivity extends AppCompatActivity {
        private final static String TAG = "VideoOfferActivity";
        private Socket mSocket; // Socket used for signalling
        private SurfaceViewRenderer svr_local; // Local surface view renderer (our side)
        private PeerConnectionFactory mConnFactory; // Peer connection factory
        private EglBase mEglBase; // Interface between OpenGL ES and the native device
        private MediaStream mMediaStream; // Media stream
        private VideoCapturer mVideoCapturer; // Video capturer
        private MediaConstraints mOfferConstraints; // Media constraints for the offer
        private MediaConstraints mAudioConstraints; // Media constraints for audio
        private List<PeerConnection.IceServer> mIceServers = ChatConst.getIceServerList(); // ICE server list
        private Peer mPeer; // Peer object
        // (from, to) names: "提供方" is the offerer, "接收方" the recipient; the recipient uses the reversed pair
        private ContactInfo mContact = new ContactInfo("提供方", "接收方");

        @Override
        protected void onCreate(Bundle savedInstanceState) {
            super.onCreate(savedInstanceState);
            setContentView(R.layout.activity_video_offer);
            initRender(); // Initialize the rendering layer
            initStream(); // Initialize the audio/video media stream
            initSocket(); // Initialize the signalling socket
            initView(); // Initialize the views
        }

        // Initialize the views
        private void initView() {
            TextView tv_title = findViewById(R.id.tv_title);
            tv_title.setText("This is the video offerer");
            findViewById(R.id.iv_back).setOnClickListener(v -> dialOff()); // Hang up
        }

        // Hang up the call
        private void dialOff() {
            mSocket.off("other_hang_up"); // Stop listening for the other side's hang-up
            SocketUtil.emit(mSocket, "self_hang_up", mContact); // Send the hang-up message
            finish(); // Close this page
        }

        @Override
        public void onBackPressed() {
            super.onBackPressed();
            dialOff(); // Hang up
        }

        // Initialize the rendering layer
        private void initRender() {
            svr_local = findViewById(R.id.svr_local);
            mEglBase = EglBase.create(); // Create the EglBase instance
            // Initialize our own rendering layer
            svr_local.init(mEglBase.getEglBaseContext(), null);
            svr_local.setMirror(true); // Whether to mirror the picture
            svr_local.setZOrderMediaOverlay(true); // Whether to place the view on top
            // Scaling type: SCALE_ASPECT_FIT shows the whole frame (SCALE_ASPECT_FILL would fill the view)
            svr_local.setScalingType(RendererCommon.ScalingType.SCALE_ASPECT_FIT);
            svr_local.setEnableHardwareScaler(false); // Whether to enable hardware scaling
        }

        // Initialize the audio/video media stream
        private void initStream() {
            Log.d(TAG, "initStream");
            // Initialize the peer connection factory
            PeerConnectionFactory.initialize(PeerConnectionFactory.InitializationOptions
                    .builder(getApplicationContext()).createInitializationOptions());
            // Create the video encoder and decoder factories
            VideoEncoderFactory encoderFactory;
            VideoDecoderFactory decoderFactory;
            encoderFactory = new DefaultVideoEncoderFactory(mEglBase.getEglBaseContext(), true, true);
            decoderFactory = new DefaultVideoDecoderFactory(mEglBase.getEglBaseContext());
            AudioDeviceModule audioModule = JavaAudioDeviceModule.builder(this).createAudioDeviceModule();
            // Create the peer connection factory
            PeerConnectionFactory.Options options = new PeerConnectionFactory.Options();
            mConnFactory = PeerConnectionFactory.builder()
                    .setOptions(options)
                    .setAudioDeviceModule(audioModule)
                    .setVideoEncoderFactory(encoderFactory)
                    .setVideoDecoderFactory(decoderFactory)
                    .createPeerConnectionFactory();
            initConstraints(); // Initialize the constraints for the video call
            // Create the audio/video media stream
            mMediaStream = mConnFactory.createLocalMediaStream("local_stream");
            // Create and add the audio track
            AudioSource audioSource = mConnFactory.createAudioSource(mAudioConstraints);
            AudioTrack audioTrack = mConnFactory.createAudioTrack("audio_track", audioSource);
            mMediaStream.addTrack(audioTrack);
            // Create and initialize the video capturer
            mVideoCapturer = createVideoCapture();
            VideoSource videoSource = mConnFactory.createVideoSource(mVideoCapturer.isScreencast());
            SurfaceTextureHelper surfaceHelper = SurfaceTextureHelper.create(
                    "CaptureThread", mEglBase.getEglBaseContext());
            mVideoCapturer.initialize(surfaceHelper, this, videoSource.getCapturerObserver());
            // Set the video quality. The three arguments are width, height and frames per second (fps)
            mVideoCapturer.startCapture(720, 1080, 15);
            // Create and add the video track
            VideoTrack videoTrack = mConnFactory.createVideoTrack("video_track", videoSource);
            mMediaStream.addTrack(videoTrack);
            ProxyVideoSink localSink = new ProxyVideoSink();
            localSink.setTarget(svr_local); // Use our own rendering layer for the video track
            mMediaStream.videoTracks.get(0).addSink(localSink);
        }

        // Initialize the signalling socket
        private void initSocket() {
            mSocket = MainApplication.getInstance().getSocket();
            mSocket.connect(); // Open the socket connection
            // Wait for ICE candidates, which open up the media transport path
            mSocket.on("IceInfo", args -> {
                Log.d(TAG, "IceInfo");
                try {
                    JSONObject json = (JSONObject) args[0];
                    IceCandidate candidate = new IceCandidate(json.getString("id"),
                            json.getInt("label"), json.getString("candidate"));
                    mPeer.getConnection().addIceCandidate(candidate); // Add the ICE candidate
                } catch (JSONException e) {
                    e.printStackTrace();
                }
            });
            // Wait for the other side's session description so that the link can be established
            mSocket.on("SdpInfo", args -> {
                Log.d(TAG, "SdpInfo");
                try {
                    JSONObject json = (JSONObject) args[0];
                    SessionDescription sd = new SessionDescription(
                            SessionDescription.Type.fromCanonicalForm(json.getString("type")),
                            json.getString("description"));
                    mPeer.getConnection().setRemoteDescription(mPeer, sd); // Set the remote session description
                } catch (JSONException e) {
                    e.printStackTrace();
                }
            });
            mSocket.on("other_hang_up", (args) -> dialOff()); // Wait for the other side to hang up
            Log.d(TAG, "self_dial_in");
            SocketUtil.emit(mSocket, "self_dial_in", mContact); // We have started the video call
            // Wait for the other side to accept the video call
            mSocket.on("other_dial_in", (args) -> {
                String other_name = (String) args[0];
                Log.d(TAG, mContact.from+" to "+mContact.to+", other_name="+other_name);
                // The fourth argument decides how the remote video is shown once the call is accepted
                mPeer = new Peer(mSocket, mContact.from, mContact.to,
                        (userId, remoteStream) -> Log.d(TAG, "new Peer"));
                mPeer.init(mConnFactory, mMediaStream, mIceServers); // Initialize the peer connection
                mPeer.getConnection().createOffer(mPeer, mOfferConstraints); // Create the offer
            });
        }

        // Initialize the constraints for the video call
        private void initConstraints() {
            // Media constraints for the offerer
            mOfferConstraints = new MediaConstraints();
            // Whether to receive an audio stream
            mOfferConstraints.mandatory.add(new MediaConstraints.KeyValuePair("OfferToReceiveAudio", "true"));
            // Whether to receive a video stream
            mOfferConstraints.mandatory.add(new MediaConstraints.KeyValuePair("OfferToReceiveVideo", "true"));
            // Media constraints for the audio source
            mAudioConstraints = new MediaConstraints();
            // Whether to cancel echo
            mAudioConstraints.mandatory.add(new MediaConstraints.KeyValuePair("googEchoCancellation", "true"));
            // Whether to apply automatic gain control
            mAudioConstraints.mandatory.add(new MediaConstraints.KeyValuePair("googAutoGainControl", "true"));
            // Whether to apply the high-pass filter (it removes low-frequency rumble)
            mAudioConstraints.mandatory.add(new MediaConstraints.KeyValuePair("googHighpassFilter", "true"));
            // Whether to suppress noise
            mAudioConstraints.mandatory.add(new MediaConstraints.KeyValuePair("googNoiseSuppression", "true"));
        }

        // Create the video capturer for the given camera enumerator
        private VideoCapturer createCameraCapture(CameraEnumerator enumerator) {
            final String[] deviceNames = enumerator.getDeviceNames();
            // Try the front camera first
            for (String deviceName : deviceNames) {
                if (enumerator.isFrontFacing(deviceName)) {
                    VideoCapturer videoCapturer = enumerator.createCapturer(deviceName, null);
                    if (videoCapturer != null) {
                        return videoCapturer;
                    }
                }
            }
            // No front camera available, so look for a back camera
            for (String deviceName : deviceNames) {
                if (!enumerator.isFrontFacing(deviceName)) {
                    VideoCapturer videoCapturer = enumerator.createCapturer(deviceName, null);
                    if (videoCapturer != null) {
                        return videoCapturer;
                    }
                }
            }
            return null; // No usable camera was found
        }

        // Create the video capturer
        private VideoCapturer createVideoCapture() {
            VideoCapturer videoCapturer;
            if (Camera2Enumerator.isSupported(this)) { // Prefer the Camera2 API
                videoCapturer = createCameraCapture(new Camera2Enumerator(this));
            } else { // Fall back to the legacy camera API if Camera2 is not supported
                videoCapturer = createCameraCapture(new Camera1Enumerator(true));
            }
            return videoCapturer;
        }

        @Override
        protected void onDestroy() {
            super.onDestroy();
            mSocket.off("other_dial_in"); // Stop listening for the other side joining
            mSocket.off("other_hang_up"); // Stop listening for the other side's hang-up
            mSocket.off("IceInfo"); // Stop listening for ICE candidates
            mSocket.off("SdpInfo"); // Stop listening for session descriptions
            svr_local.release(); // Release the local renderer (our side)
            try { // Stop capturing video, that is, close the camera
                mVideoCapturer.stopCapture();
            } catch (Exception e) {
                e.printStackTrace();
            }
            if (mSocket.connected()) { // Still connected to the socket server
                mSocket.disconnect(); // Close the socket connection
            }
        }
    }

III. Implementing the WebRTC recipient

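Apart from sending the accept-call message to the signalling server, the recipient's logic differs from the offerer's in two places:

(1) After receiving the other side's session description, the recipient must call createAnswer to create an answer; only then can the offerer start sending audio and video data;

(2) When the Peer object is created, its fourth argument must take the remote media stream and attach that stream's video track to the rendering layer for the other side;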
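The ordering in point (1) follows from the offer/answer signalling states of a peer connection. The class below is a deliberately simplified plain-Java model of those states, not the real `PeerConnection`: `SignalingModel` and its method names are hypothetical, and provisional answers, rollback and the closed state are left out.

```java
/** Simplified, hypothetical model of the offer/answer signalling states. */
final class SignalingModel {
    enum State { STABLE, HAVE_LOCAL_OFFER, HAVE_REMOTE_OFFER }

    private State state = State.STABLE;

    State state() {
        return state;
    }

    /** Models setLocalDescription; type is "offer" or "answer". */
    void setLocal(String type) {
        // A local answer is only valid after a remote offer has been applied.
        state = next(type, State.HAVE_LOCAL_OFFER, State.HAVE_REMOTE_OFFER, "setLocal");
    }

    /** Models setRemoteDescription; type is "offer" or "answer". */
    void setRemote(String type) {
        // A remote answer is only valid after a local offer has been applied.
        state = next(type, State.HAVE_REMOTE_OFFER, State.HAVE_LOCAL_OFFER, "setRemote");
    }

    private State next(String type, State afterOffer, State answerNeeds, String op) {
        if ("offer".equals(type) && state == State.STABLE) {
            return afterOffer;
        }
        if ("answer".equals(type) && state == answerNeeds) {
            return State.STABLE; // negotiation round finished
        }
        throw new IllegalStateException(op + "(" + type + ") is not allowed in state " + state);
    }
}
```

In this model the recipient goes `setRemote("offer")` then `setLocal("answer")`, while the offerer goes `setLocal("offer")` then `setRemote("answer")`. Both end in `STABLE`, and trying to apply an answer before any offer throws.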

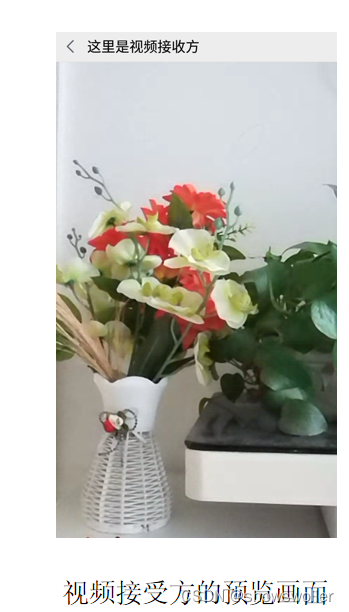

After the call has been accepted, the result looks as follows.

The code is as follows:

    package com.example.live;

    import androidx.appcompat.app.AppCompatActivity;

    import android.os.Bundle;
    import android.util.Log;
    import android.widget.TextView;

    import com.example.live.bean.ContactInfo;
    import com.example.live.constant.ChatConst;
    import com.example.live.util.SocketUtil;
    import com.example.live.webrtc.Peer;
    import com.example.live.webrtc.ProxyVideoSink;

    import org.json.JSONException;
    import org.json.JSONObject;
    import org.webrtc.DefaultVideoDecoderFactory;
    import org.webrtc.DefaultVideoEncoderFactory;
    import org.webrtc.EglBase;
    import org.webrtc.IceCandidate;
    import org.webrtc.MediaConstraints;
    import org.webrtc.MediaStream;
    import org.webrtc.PeerConnection;
    import org.webrtc.PeerConnectionFactory;
    import org.webrtc.RendererCommon;
    import org.webrtc.SessionDescription;
    import org.webrtc.SurfaceViewRenderer;
    import org.webrtc.VideoDecoderFactory;
    import org.webrtc.VideoEncoderFactory;
    import org.webrtc.VideoTrack;
    import org.webrtc.audio.AudioDeviceModule;
    import org.webrtc.audio.JavaAudioDeviceModule;

    import java.util.List;

    import io.socket.client.Socket;

    public class VideoRecipientActivity extends AppCompatActivity {
        private final static String TAG = "VideoRecipientActivity";
        private Socket mSocket; // Socket used for signalling
        private SurfaceViewRenderer svr_remote; // Remote surface view renderer (the other side)
        private PeerConnectionFactory mConnFactory; // Peer connection factory
        private EglBase mEglBase; // Interface between OpenGL ES and the native device
        private MediaStream mMediaStream; // Media stream
        private List<PeerConnection.IceServer> mIceServers = ChatConst.getIceServerList(); // ICE server list
        private Peer mPeer; // Peer object
        // (from, to) names: "接收方" is the recipient, "提供方" the offerer; the offerer uses the reversed pair
        private ContactInfo mContact = new ContactInfo("接收方", "提供方");

        @Override
        protected void onCreate(Bundle savedInstanceState) {
            super.onCreate(savedInstanceState);
            setContentView(R.layout.activity_video_recipient);
            initRender(); // Initialize the rendering layer
            initStream(); // Initialize the audio/video media stream
            initSocket(); // Initialize the signalling socket
            initView(); // Initialize the views
        }

        // Initialize the views
        private void initView() {
            TextView tv_title = findViewById(R.id.tv_title);
            tv_title.setText("This is the video recipient");
            findViewById(R.id.iv_back).setOnClickListener(v -> dialOff()); // Hang up
        }

        // Hang up the call
        private void dialOff() {
            mSocket.off("other_hang_up"); // Stop listening for the other side's hang-up
            SocketUtil.emit(mSocket, "self_hang_up", mContact); // Send the hang-up message
            finish(); // Close this page
        }

        @Override
        public void onBackPressed() {
            super.onBackPressed();
            dialOff(); // Hang up
        }

        // Initialize the rendering layer
        private void initRender() {
            svr_remote = findViewById(R.id.svr_remote);
            mEglBase = EglBase.create(); // Create the EglBase instance
            // Initialize the rendering layer for the other side
            svr_remote.init(mEglBase.getEglBaseContext(), null);
            svr_remote.setMirror(false); // Whether to mirror the picture
            svr_remote.setZOrderMediaOverlay(false); // Whether to place the view on top
            // Scaling type: SCALE_ASPECT_FILL fills the whole view
            svr_remote.setScalingType(RendererCommon.ScalingType.SCALE_ASPECT_FILL);
            svr_remote.setEnableHardwareScaler(false); // Whether to enable hardware scaling
        }

        // Initialize the audio/video media stream
        private void initStream() {
            Log.d(TAG, "initStream");
            // Initialize the peer connection factory
            PeerConnectionFactory.initialize(PeerConnectionFactory.InitializationOptions
                    .builder(getApplicationContext()).createInitializationOptions());
            // Create the video encoder and decoder factories
            VideoEncoderFactory encoderFactory;
            VideoDecoderFactory decoderFactory;
            encoderFactory = new DefaultVideoEncoderFactory(mEglBase.getEglBaseContext(), true, true);
            decoderFactory = new DefaultVideoDecoderFactory(mEglBase.getEglBaseContext());
            AudioDeviceModule audioModule = JavaAudioDeviceModule.builder(this).createAudioDeviceModule();
            // Create the peer connection factory
            PeerConnectionFactory.Options options = new PeerConnectionFactory.Options();
            mConnFactory = PeerConnectionFactory.builder()
                    .setOptions(options)
                    .setAudioDeviceModule(audioModule)
                    .setVideoEncoderFactory(encoderFactory)
                    .setVideoDecoderFactory(decoderFactory)
                    .createPeerConnectionFactory();
            // Create the audio/video media stream
            mMediaStream = mConnFactory.createLocalMediaStream("local_stream");
        }

        // Initialize the signalling socket
        private void initSocket() {
            mSocket = MainApplication.getInstance().getSocket();
            mSocket.connect(); // Open the socket connection
            // Wait for ICE candidates, which open up the media transport path
            mSocket.on("IceInfo", args -> {
                Log.d(TAG, "IceInfo");
                try {
                    JSONObject json = (JSONObject) args[0];
                    IceCandidate candidate = new IceCandidate(json.getString("id"),
                            json.getInt("label"), json.getString("candidate"));
                    mPeer.getConnection().addIceCandidate(candidate); // Add the ICE candidate
                } catch (JSONException e) {
                    e.printStackTrace();
                }
            });
            // Wait for the other side's session description so that the link can be established
            mSocket.on("SdpInfo", args -> {
                Log.d(TAG, "SdpInfo");
                try {
                    JSONObject json = (JSONObject) args[0];
                    SessionDescription sd = new SessionDescription(
                            SessionDescription.Type.fromCanonicalForm(json.getString("type")),
                            json.getString("description"));
                    mPeer.getConnection().setRemoteDescription(mPeer, sd); // Set the remote session description
                    // The recipient has to create the answer
                    mPeer.getConnection().createAnswer(mPeer, new MediaConstraints());
                } catch (JSONException e) {
                    e.printStackTrace();
                }
            });
            // The fourth argument decides how the remote video is shown once the call is accepted
            mPeer = new Peer(mSocket, mContact.from, mContact.to, (userId, remoteStream) -> {
                String desc = String.format("from=%s, to=%s", mContact.from, mContact.to);
                Log.d(TAG, "addRemoteStream "+desc);
                ProxyVideoSink remoteSink = new ProxyVideoSink();
                remoteSink.setTarget(svr_remote); // Use the remote rendering layer for the video track
                VideoTrack videoTrack = remoteStream.videoTracks.get(0);
                videoTrack.addSink(remoteSink);
            });
            mPeer.init(mConnFactory, mMediaStream, mIceServers); // Initialize the peer connection
            mSocket.on("other_hang_up", (args) -> dialOff()); // Wait for the other side to hang up
            Log.d(TAG, "self_dial_in");
            SocketUtil.emit(mSocket, "self_dial_in", mContact); // We have accepted the video call
        }

        @Override
        protected void onDestroy() {
            super.onDestroy();
            mSocket.off("other_dial_in"); // Stop listening for the other side joining
            mSocket.off("other_hang_up"); // Stop listening for the other side's hang-up
            mSocket.off("IceInfo"); // Stop listening for ICE candidates
            mSocket.off("SdpInfo"); // Stop listening for session descriptions
            svr_remote.release(); // Release the remote renderer (the other side)
            if (mSocket.connected()) { // Still connected to the socket server
                mSocket.disconnect(); // Close the socket connection
            }
        }
    }
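Both activities pass every incoming `IceInfo` message straight to `mPeer.getConnection().addIceCandidate(...)`. On the offerer side `mPeer` is only created once `other_dial_in` arrives, so a candidate that shows up earlier would hit a null `mPeer`. One common way around this is to buffer items until the connection is ready. The class below is a minimal plain-Java sketch of that idea; `PendingQueue` is a hypothetical helper, not part of the original project, and it is synchronized on the assumption that socket callbacks may run on a different thread than the code that creates the Peer.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;

/** Hypothetical helper: holds items until a consumer is attached, then forwards them in order. */
final class PendingQueue<T> {
    private final List<T> pending = new ArrayList<>();
    private Consumer<T> sink; // stays null until the connection is ready

    /** Delivers the item immediately if a sink is attached, otherwise keeps it for later. */
    synchronized void offer(T item) {
        if (sink != null) {
            sink.accept(item);
        } else {
            pending.add(item);
        }
    }

    /** Called once the connection can accept items; flushes the backlog in arrival order. */
    synchronized void attach(Consumer<T> readySink) {
        sink = readySink;
        for (T item : pending) {
            sink.accept(item);
        }
        pending.clear();
    }
}
```

In the activities this could look like calling `queue.offer(candidate)` inside the `IceInfo` handler and `queue.attach(c -> mPeer.getConnection().addIceCandidate(c))` once the Peer has been initialized and the remote description has been set.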
