一、数据集准备

数据集下载地址:https://github.com/YimianDai/sirst

1. 需要将数据集转换为YOLO所需要的txt格式

参考链接:https://github.com/pprp/voc2007_for_yolo_torch

1.1 检测图片及其xml文件

import os, shutildef checkPngXml(dir1, dir2, dir3, is_move=True):"""dir1 是图片所在文件夹dir2 是标注文件所在文件夹dir3 是创建的,如果图片没有对应的xml文件,那就将图片放入dir3is_move 是确认是否进行移动,否则只进行打印"""if not os.path.exists(dir3):os.mkdir(dir3)cnt = 0for file in os.listdir(dir1):f_name,f_ext = file.split(".")if not os.path.exists(os.path.join(dir2, f_name+".xml")):print(f_name)if is_move:cnt += 1shutil.move(os.path.join(dir1,file), os.path.join(dir3, file))if cnt > 0:print("有%d个文件不符合要求,已打印。"%(cnt))else:print("所有图片和对应的xml文件都是一一对应的。")if __name__ == "__main__":dir1 = r"dataset/images/images" # 修改为自己的图片路径dir2 = r"dataset/masks/masks" # 修改为自己的图片路径dir3 = r"dataset/Allempty" # 修改为自己的图片路径checkPngXml(dir1, dir2, dir3, False)1.2 划分训练集

import os

import random

import os, fnmatchtrainval_percent = 0.8

train_percent = 0.8xmlfilepath = r"dataset/masks/masks"

txtsavepath = r"dataset"

total_xml = fnmatch.filter(os.listdir(xmlfilepath), '*.xml')

print(total_xml)num=len(total_xml)

list=range(num)

tv=int(num*trainval_percent)

tr=int(tv*train_percent)

trainval= random.sample(list,tv)

train=random.sample(trainval,tr)ftrainval = open('dataset/trainval.txt', 'w')

ftest = open('dataset/test.txt', 'w')

ftrain = open('dataset/train.txt', 'w')

fval = open('dataset/val.txt', 'w')for i in list:name=total_xml[i][:-4]+'\n'if i in trainval:ftrainval.write(name)if i in train:ftrain.write(name)else:fval.write(name)else:ftest.write(name)ftrainval.close()

ftrain.close()

fval.close()

ftest.close()1.3 转为txt标签

# -*- coding: utf-8 -*-

"""

需要修改的地方:

1. sets中替换为自己的数据集

2. classes中替换为自己的类别

3. 将本文件放到VOC2007目录下

4. 直接开始运行

"""import xml.etree.ElementTree as ET

import pickle

import os

from os import listdir, getcwd

from os.path import join

sets=[('2007', 'train'), ('2007', 'val'), ('2007', 'test')] #替换为自己的数据集

classes = ["Target"] #修改为自己的类别def convert(size, box):dw = 1./(size[0])dh = 1./(size[1])x = (box[0] + box[1])/2.0y = (box[2] + box[3])/2.0w = box[1] - box[0]h = box[3] - box[2]x = x*dww = w*dwy = y*dhh = h*dhreturn (x,y,w,h)def convert_annotation(year, image_id):in_file = open('dataset/masks/masks/%s.xml'%(image_id)) #将数据集放于当前目录下out_file = open('dataset/labels/%s.txt'%(image_id), 'w')tree=ET.parse(in_file)root = tree.getroot()size = root.find('size')w = int(size.find('width').text)h = int(size.find('height').text)print(w,h)for obj in root.iter('object'):difficult = obj.find('difficult').textcls = obj.find('name').textif cls not in classes or int(difficult)==1:continuecls_id = classes.index(cls)print(cls_id)xmlbox = obj.find('bndbox')b = (float(xmlbox.find('xmin').text), float(xmlbox.find('xmax').text), float(xmlbox.find('ymin').text), float(xmlbox.find('ymax').text))bb = convert((w,h), b)print(bb)out_file.write(str(cls_id) + " " + " ".join([str(a) for a in bb]) + '\n')

wd = getcwd()

for year, image_set in sets:if not os.path.exists('dataset/labels/'):os.makedirs('dataset/labels/')image_ids = open('dataset/%s.txt'%(image_set)).read().strip().split()list_file = open('%s_%s.txt'%(year, image_set), 'w')for image_id in image_ids:list_file.write('dataset/images/images/%s.png\n'%(image_id))convert_annotation(year, image_id)list_file.close()

# os.system("cat 2007_train.txt 2007_val.txt > train.txt") #修改为自己的数据集用作训练1.4 构造数据集

import os, shutil"""

需要满足以下条件:

1. 在JPEGImages中准备好图片

2. 在labels中准备好labels

3. 创建好如下所示的文件目录:- images- train2014- val2014- labels(由于voc格式中有labels文件夹,所以重命名为label)- train2014- val2014

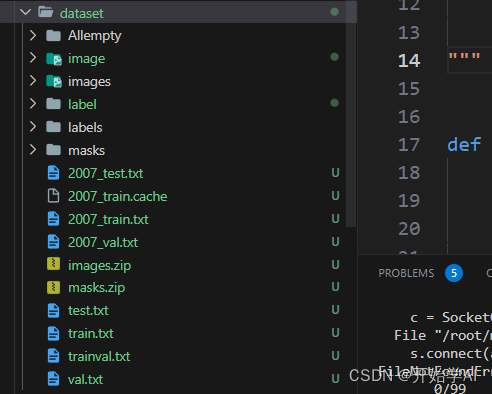

"""def make_for_torch_yolov3(dir_image,dir_label,dir1_train,dir1_val,dir2_train,dir2_val,main_trainval,main_test):if not os.path.exists(dir1_train):os.mkdir(dir1_train)if not os.path.exists(dir1_val):os.mkdir(dir1_val)if not os.path.exists(dir2_train):os.mkdir(dir2_train)if not os.path.exists(dir2_val):os.mkdir(dir2_val)with open(main_trainval, "r") as f1:for line in f1:print(line[:-1])# print(os.path.join(dir_image, line[:-1]+".png"), os.path.join(dir1_train, line[:-1]+".png"))shutil.copy(os.path.join(dir_image, line[:-1]+".png"),os.path.join(dir1_train, line[:-1]+".png"))shutil.copy(os.path.join(dir_label, line[:-1]+".txt"),os.path.join(dir2_train, line[:-1]+".txt"))with open(main_test, "r") as f2:for line in f2:print(line[:-1])shutil.copy(os.path.join(dir_image, line[:-1]+".png"),os.path.join(dir1_val, line[:-1]+".png"))shutil.copy(os.path.join(dir_label, line[:-1]+".txt"),os.path.join(dir2_val, line[:-1]+".txt"))if __name__ == "__main__":'''https://github.com/ultralytics/yolov3这个pytorch版本的数据集组织- images- train2014 # dir1_train- val2014 # dir1_val- labels- train2014 # dir2_train- val2014 # dir2_valtrainval.txt, test.txt 是由create_main.py构建的'''dir_image = r"dataset/images/images"dir_label = r"dataset/labels"dir1_train = r"dataset/image/train2014"dir1_val = r"dataset/image/val2014"dir2_train = r"dataset/label/train2014"dir2_val = r"dataset/label/val2014"main_trainval = r"dataset/trainval.txt"main_test = r"dataset/test.txt"make_for_torch_yolov3(dir_image,dir_label,dir1_train,dir1_val,dir2_train,dir2_val,main_trainval,main_test)最终数据集格式如下:

2. 构造训练所需要的数据集

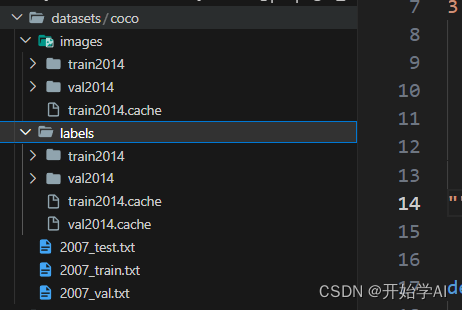

根据以上数据集 需要单独构建一个datasets文件夹,存放标签和图像,具体格式如下:

可以参考该链接:https://github.com/ultralytics/yolov5/issues/7389

3. 构建数据配置文档,需要注意 YOLOv5目录需要和datasets目录同级。

命名为hongwai.yaml

# YOLOv3 🚀 by Ultralytics, AGPL-3.0 license

# COCO 2017 dataset http://cocodataset.org by Microsoft

# Example usage: python train.py --data coco.yaml

# parent

# ├── yolov5

# └── datasets

# └── coco ← downloads here (20.1 GB)# Train/val/test sets as 1) dir: path/to/imgs, 2) file: path/to/imgs.txt, or 3) list: [path/to/imgs1, path/to/imgs2, ..]

path: ../datasets/coco # dataset root dir

train: images/train2014 # train images (relative to 'path') 118287 images

val: images/val2014 # val images (relative to 'path') 5000 images

test: images/val2014 # 20288 of 40670 images, submit to https://competitions.codalab.org/competitions/20794# Classes

nc: 1

names:0: Target二、矩池云配置环境

1. 租用环境

2、 配置环境,缺啥配啥,耐心解决问题

参考命令:

pip install -r requirements.txt也许训练过程中还会报错找不到module,根据module名字,使用pip安装即可

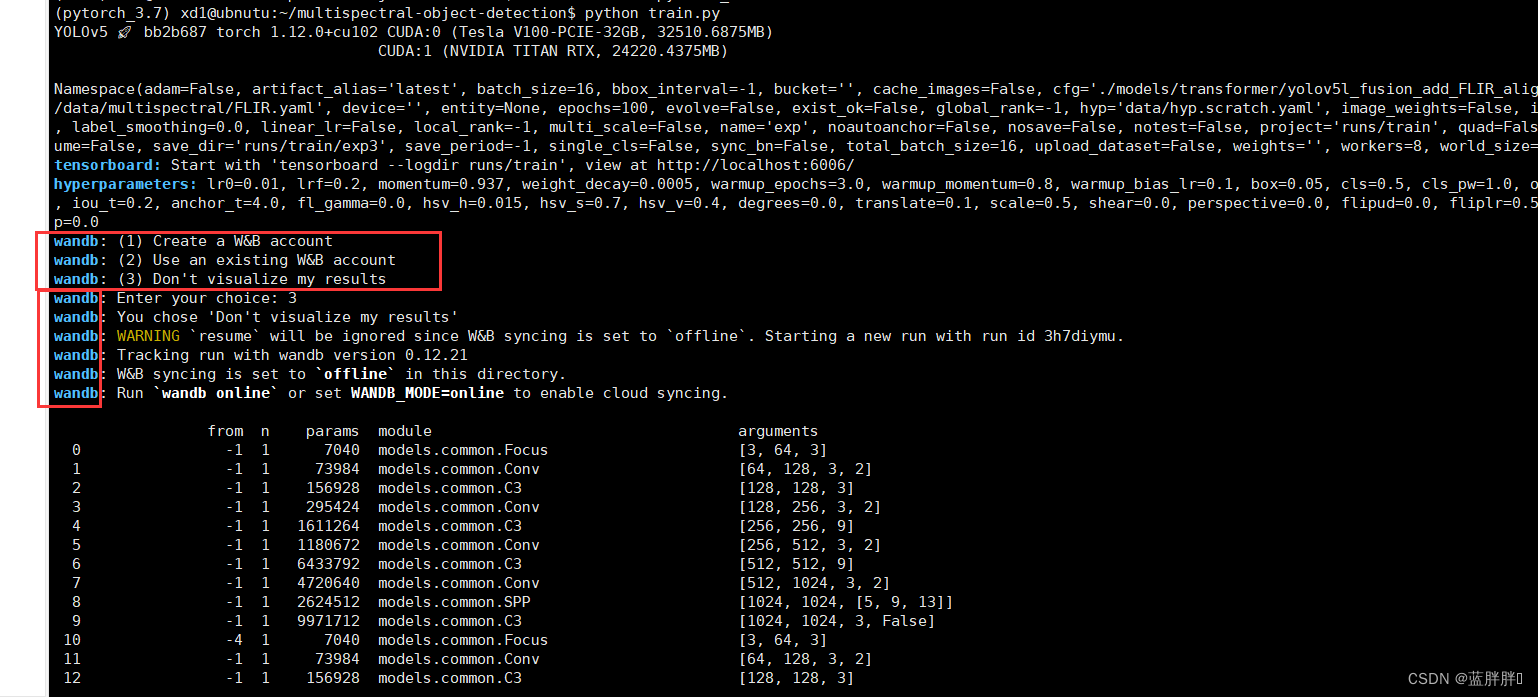

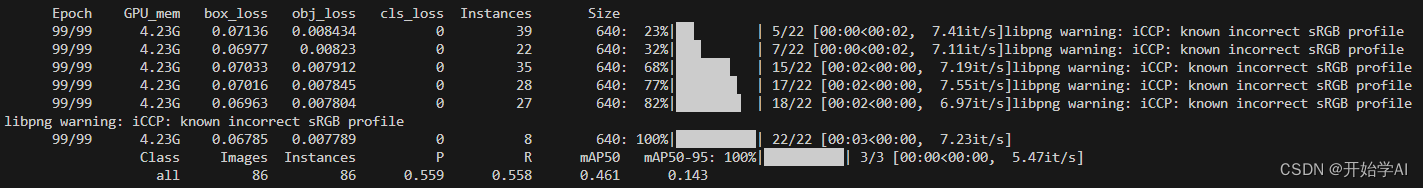

三、训练

YOLOv5训练命令:

python train.py --data data/hongwai.yaml --weights '' --cfg yolov5s.yaml --img 640 --device 0YOLOv3训练命令:

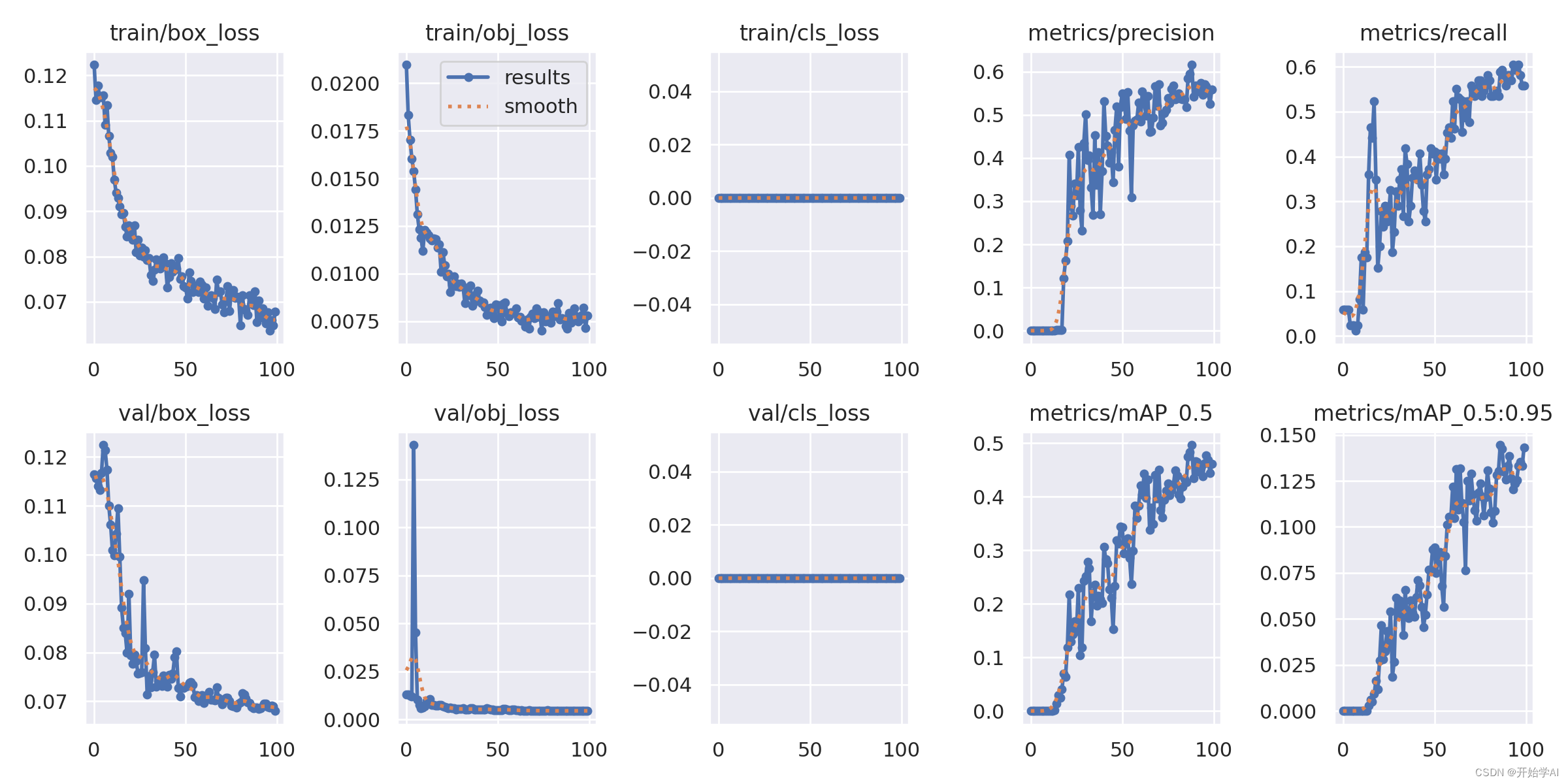

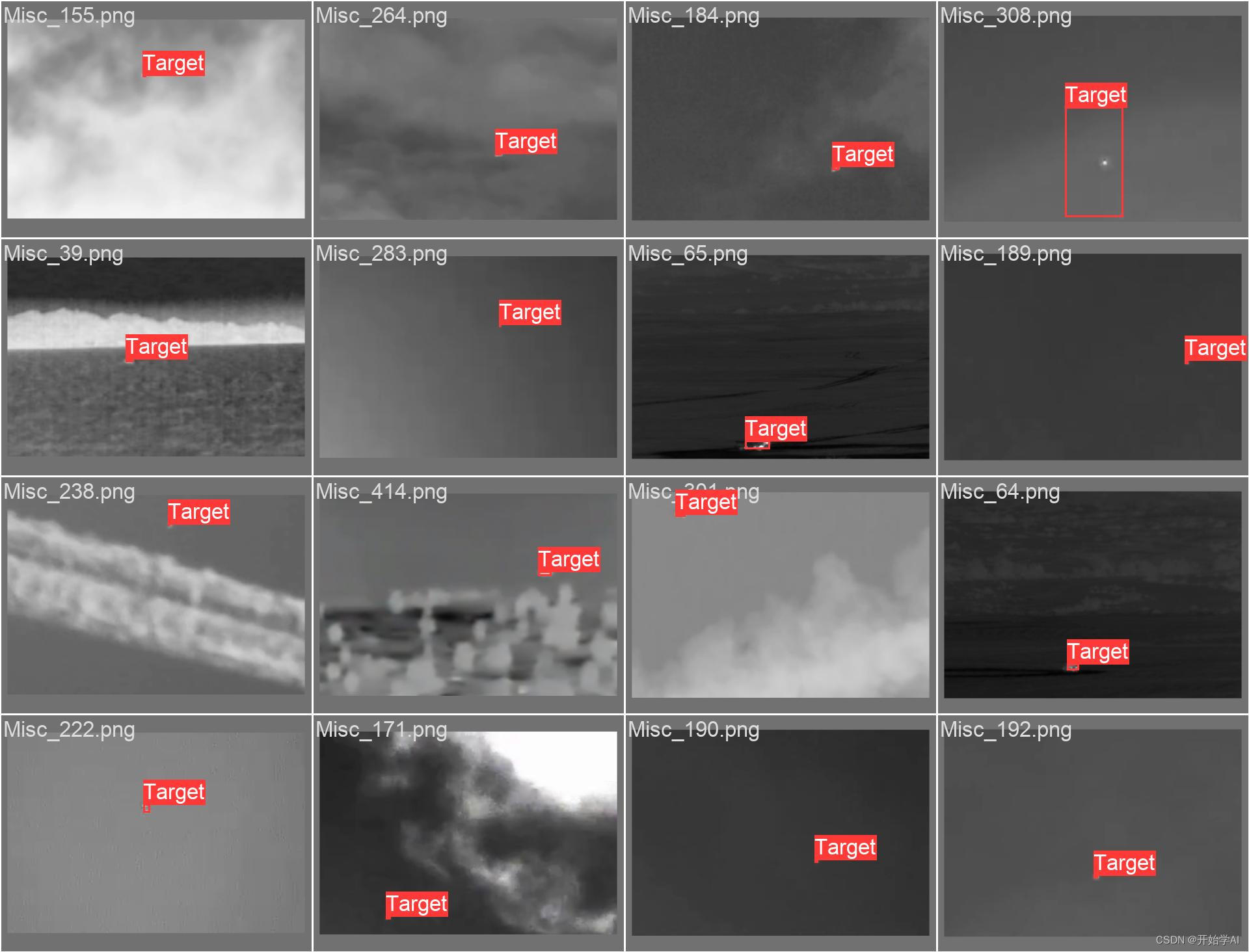

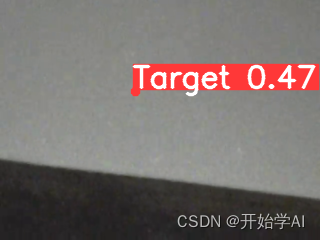

python train.py --data data/hongwai.yaml --weights '' --cfg yolov3.yaml --img 640 --device 0训练结果部分展示:

四、文件夹检测

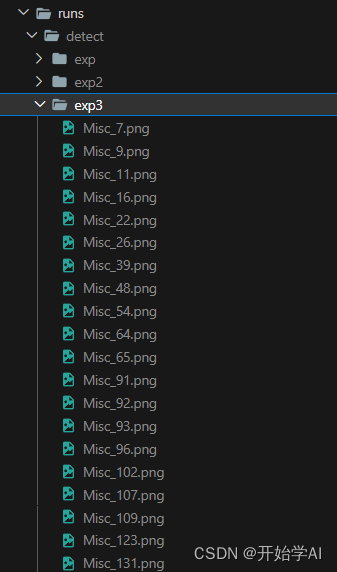

执行命令:

python detect.py --weights runs/train/exp10/weights/best.pt --source dataset/image/val2014结果保存位置:

【创造不易,需要指导做该项目的可以联系】