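I. Review of the Previous Section

In the previous section, we reviewed the common file system and disk I/O performance metrics, went through the core I/O observation tools, and summarized an approach for quickly analyzing I/O performance problems.

There are many I/O performance metrics and several tools for analyzing them, but once you understand what each metric means, you will find that they are all related to one another.

Follow those relationships and you will find that the common bottleneck-analysis approaches are not hard to master. Once you have located the I/O bottleneck, the next step is optimization: completing I/O operations as quickly as possible or, from another angle, reducing or even avoiding disk I/O altogether. Today I will talk about the approaches to optimizing I/O performance and what to watch out for.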

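II. I/O Benchmarking

By habit, before optimizing anything I first ask myself what the goal of the I/O optimization is. In other words, what values should the I/O metrics we observe (IOPS, throughput, latency, and so on) reach to be considered acceptable?

In fact, everyone is likely to have a different answer, because application scenarios, file systems, and physical disks all vary from one setup to another.

To evaluate the effect of an optimization objectively, we should first benchmark the disk and the file system to find the peak I/O performance of each.

fio (Flexible I/O Tester) is the most commonly used benchmarking tool for file system and disk I/O. It offers a large number of customizable options and can test the I/O performance of a raw disk or a file system in many scenarios, including different block sizes, different I/O engines, and with or without caching. Installing fio is simple; you can run the following command: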

    # Ubuntu
    apt-get install -y fio

    root@luoahong:~# apt-get --fix-broken install
    E: dpkg was interrupted, you must manually run 'dpkg --configure -a' to correct the problem.
    root@luoahong:~# dpkg --purge fio
    dpkg: warning: ignoring request to remove fio which isn't installed
    root@luoahong:~# apt-get install -y fio
    Reading package lists... Done
    Building dependency tree
    ......
    Setting up fio (3.1-1) ...
    Processing triggers for libc-bin (2.27-3ubuntu1) ...
    W: APT had planned for dpkg to do more than it reported back (45 vs 49).
       Affected packages: man-db:amd64

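1. Benchmarking with fio

Once it is installed, you can run man fio to look up its usage.

fio has a great many options, so I will introduce the most commonly used ones through a few typical test scenarios: random read, random write, sequential read, and sequential write. You can run the commands below to test them. Be aware that the write tests here target a raw device (-filename=/dev/sda); writing to a raw disk overwrites whatever is on it, including its file system, so use a spare disk or a test file and back up your data first.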

Random read

    [root@luoahong ~]# fio -name=randread -direct=1 -iodepth=64 -rw=randread -ioengine=libaio -bs=4k -size=1G -numjobs=1 -runtime=1000 -group_reporting -filename=/dev/sda
    randread: (g=0): rw=randread, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=64
    fio-3.1
    Starting 1 process
    Jobs: 1 (f=1): [r(1)][100.0%][r=5088KiB/s,w=0KiB/s][r=1272,w=0 IOPS][eta 00m:00s]
    randread: (groupid=0, jobs=1): err= 0: pid=10300: Wed Sep 4 10:38:44 2019
      read: IOPS=267, BW=1071KiB/s (1096kB/s)(1024MiB/979315msec)
        slat (nsec): min=1031, max=203759k, avg=50478.54, stdev=1172919.69
        clat (usec): min=269, max=3218.8k, avg=239027.70, stdev=215031.80
         lat (usec): min=485, max=3218.8k, avg=239079.19, stdev=215027.75
        clat percentiles (msec):
         | 1.00th=[ 20], 5.00th=[ 43], 10.00th=[ 61], 20.00th=[ 93],
         | 30.00th=[ 123], 40.00th=[ 153], 50.00th=[ 184], 60.00th=[ 222],
         | 70.00th=[ 271], 80.00th=[ 347], 90.00th=[ 477], 95.00th=[ 600],
         | 99.00th=[ 978], 99.50th=[ 1418], 99.90th=[ 2198], 99.95th=[ 2366],
         | 99.99th=[ 2567]
       bw ( KiB/s): min= 280, max= 4311, per=99.75%, avg=1067.32, stdev=326.74, samples=1958
       iops : min= 70, max= 1077, avg=266.61, stdev=81.72, samples=1958
      lat (usec) : 500=0.01%, 1000=0.01%
      lat (msec) : 2=0.01%, 4=0.03%, 10=0.17%, 20=0.83%, 50=6.00%
      lat (msec) : 100=15.48%, 250=43.58%, 500=25.27%, 750=6.38%, 1000=1.31%
      lat (msec) : 2000=0.71%, >=2000=0.24%
      cpu : usr=0.38%, sys=2.65%, ctx=240149, majf=0, minf=72
      IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.1%, 32=0.1%, >=64=100.0%
         submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%
         complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.1%, >=64=0.0%
         issued rwt: total=262144,0,0, short=0,0,0, dropped=0,0,0
         latency : target=0, window=0, percentile=100.00%, depth=64

    Run status group 0 (all jobs):
      READ: bw=1071KiB/s (1096kB/s), 1071KiB/s-1071KiB/s (1096kB/s-1096kB/s), io=1024MiB (1074MB), run=979315-979315msec

    Disk stats (read/write):
      sda: ios=259355/10, merge=2705/0, ticks=62083410/1783, in_queue=61955109, util=31.08%

Random write

    [root@luoahong ~]# fio -name=randwrite -direct=1 -iodepth=64 -rw=randwrite -ioengine=libaio -bs=4k -size=1G -numjobs=1 -runtime=1000 -group_reporting -filename=/dev/sda
    randwrite: (g=0): rw=randwrite, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=64
    fio-3.1
    Starting 1 process
    Jobs: 1 (f=1): [w(1)][100.0%][r=0KiB/s,w=1297KiB/s][r=0,w=324 IOPS][eta 00m:00s]
    randwrite: (groupid=0, jobs=1): err= 0: pid=10321: Wed Sep 4 10:57:01 2019
      write: IOPS=229, BW=919KiB/s (941kB/s)(898MiB/1000356msec)
        slat (nsec): min=1569, max=151439k, avg=86390.77, stdev=641299.23
        clat (usec): min=139, max=1949.7k, avg=278515.95, stdev=184461.72
         lat (usec): min=1589, max=1949.7k, avg=278603.35, stdev=184431.97
        clat percentiles (msec):
         | 1.00th=[ 6], 5.00th=[ 16], 10.00th=[ 115], 20.00th=[ 161],
         | 30.00th=[ 192], 40.00th=[ 222], 50.00th=[ 249], 60.00th=[ 279],
         | 70.00th=[ 313], 80.00th=[ 355], 90.00th=[ 443], 95.00th=[ 693],
         | 99.00th=[ 978], 99.50th=[ 1053], 99.90th=[ 1267], 99.95th=[ 1385],
         | 99.99th=[ 1603]
       bw ( KiB/s): min= 7, max= 2272, per=100.00%, avg=943.44, stdev=339.01, samples=1945
       iops : min= 1, max= 568, avg=235.59, stdev=84.83, samples=1945
      lat (usec) : 250=0.01%, 1000=0.01%
      lat (msec) : 2=0.01%, 4=0.24%, 10=2.62%, 20=3.00%, 50=1.09%
      lat (msec) : 100=1.28%, 250=42.03%, 500=42.14%, 750=3.40%, 1000=3.38%
      lat (msec) : 2000=0.82%
      cpu : usr=0.15%, sys=2.95%, ctx=132790, majf=0, minf=10
      IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.1%, 32=0.1%, >=64=100.0%
         submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%
         complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.1%, >=64=0.0%
         issued rwt: total=0,229790,0, short=0,0,0, dropped=0,0,0
         latency : target=0, window=0, percentile=100.00%, depth=64

    Run status group 0 (all jobs):
      WRITE: bw=919KiB/s (941kB/s), 919KiB/s-919KiB/s (941kB/s-941kB/s), io=898MiB (941MB), run=1000356-1000356msec

    Disk stats (read/write):
      sda: ios=40/229428, merge=0/336, ticks=64/63397222, in_queue=63282052, util=19.13%

Sequential read

    root@luoahong:~# fio -name=read -direct=1 -iodepth=64 -rw=read -ioengine=libaio -bs=4k -size=1G -numjobs=1 -runtime=1000 -group_reporting -filename=/dev/sda
    read: (g=0): rw=read, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=64
    fio-3.1
    Starting 1 process
    Jobs: 1 (f=1): [R(1)][100.0%][r=8513KiB/s,w=0KiB/s][r=2128,w=0 IOPS][eta 00m:00s]
    read: (groupid=0, jobs=1): err= 0: pid=18581: Wed Sep 4 11:52:49 2019
      read: IOPS=2327, BW=9308KiB/s (9531kB/s)(1024MiB/112653msec)
        slat (nsec): min=936, max=139761k, avg=414996.94, stdev=453643.64
        clat (usec): min=379, max=192111, avg=27075.92, stdev=5001.29
         lat (usec): min=806, max=192113, avg=27492.07, stdev=5029.24
        clat percentiles (msec):
         | 1.00th=[ 14], 5.00th=[ 24], 10.00th=[ 25], 20.00th=[ 26],
         | 30.00th=[ 27], 40.00th=[ 27], 50.00th=[ 28], 60.00th=[ 28],
         | 70.00th=[ 28], 80.00th=[ 29], 90.00th=[ 30], 95.00th=[ 31],
         | 99.00th=[ 36], 99.50th=[ 41], 99.90th=[ 91], 99.95th=[ 117],
         | 99.99th=[ 182]
       bw ( KiB/s): min= 6568, max=10376, per=99.81%, avg=9290.73, stdev=550.37, samples=225
       iops : min= 1642, max= 2594, avg=2322.52, stdev=137.64, samples=225
      lat (usec) : 500=0.01%, 1000=0.01%
      lat (msec) : 2=0.01%, 4=0.01%, 10=0.46%, 20=2.02%, 50=97.24%
      lat (msec) : 100=0.18%, 250=0.07%
      cpu : usr=0.17%, sys=95.02%, ctx=44147, majf=0, minf=75
      IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.1%, 32=0.1%, >=64=100.0%
         submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%
         complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.1%, >=64=0.0%
         issued rwt: total=262144,0,0, short=0,0,0, dropped=0,0,0
         latency : target=0, window=0, percentile=100.00%, depth=64

    Run status group 0 (all jobs):
      READ: bw=9308KiB/s (9531kB/s), 9308KiB/s-9308KiB/s (9531kB/s-9531kB/s), io=1024MiB (1074MB), run=112653-112653msec

    Disk stats (read/write):
      sda: ios=256744/7, merge=5167/7, ticks=245516/60, in_queue=245476, util=99.13%

Sequential write

    root@luoahong:~# fio -name=write -direct=1 -iodepth=64 -rw=write -ioengine=libaio -bs=4k -size=1G -numjobs=1 -runtime=1000 -group_reporting -filename=/dev/sda
    write: (g=0): rw=write, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=64
    fio-3.1
    Starting 1 process
    Jobs: 1 (f=1): [W(1)][100.0%][r=0KiB/s,w=9228KiB/s][r=0,w=2307 IOPS][eta 00m:00s]
    write: (groupid=0, jobs=1): err= 0: pid=18719: Wed Sep 4 11:56:27 2019
      write: IOPS=2123, BW=8492KiB/s (8696kB/s)(1024MiB/123478msec)
        slat (nsec): min=1087, max=170478k, avg=441588.53, stdev=1042972.32
        clat (usec): min=394, max=370796, avg=29695.38, stdev=13215.53
         lat (usec): min=1161, max=371439, avg=30138.96, stdev=13315.57
        clat percentiles (msec):
         | 1.00th=[ 11], 5.00th=[ 21], 10.00th=[ 25], 20.00th=[ 27],
         | 30.00th=[ 27], 40.00th=[ 28], 50.00th=[ 28], 60.00th=[ 29],
         | 70.00th=[ 30], 80.00th=[ 32], 90.00th=[ 35], 95.00th=[ 41],
         | 99.00th=[ 69], 99.50th=[ 107], 99.90th=[ 213], 99.95th=[ 292],
         | 99.99th=[ 326]
       bw ( KiB/s): min= 2431, max=11952, per=99.70%, avg=8466.10, stdev=1311.50, samples=246
       iops : min= 607, max= 2988, avg=2116.33, stdev=327.89, samples=246
      lat (usec) : 500=0.01%
      lat (msec) : 2=0.01%, 4=0.06%, 10=0.90%, 20=3.49%, 50=93.49%
      lat (msec) : 100=1.51%, 250=0.46%, 500=0.07%
      cpu : usr=0.17%, sys=92.34%, ctx=42648, majf=0, minf=12
      IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.1%, 32=0.1%, >=64=100.0%
         submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%
         complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.1%, >=64=0.0%
         issued rwt: total=0,262144,0, short=0,0,0, dropped=0,0,0
         latency : target=0, window=0, percentile=100.00%, depth=64

    Run status group 0 (all jobs):
      WRITE: bw=8492KiB/s (8696kB/s), 8492KiB/s-8492KiB/s (8696kB/s-8696kB/s), io=1024MiB (1074MB), run=123478-123478msec

    Disk stats (read/write):
      sda: ios=62/251952, merge=0/10003, ticks=76/452756, in_queue=452736, util=98.83%

2. Among these options, a few deserve special attention

direct

iodepth

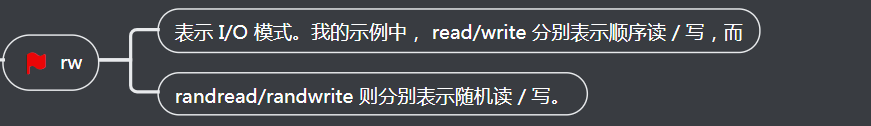

rw

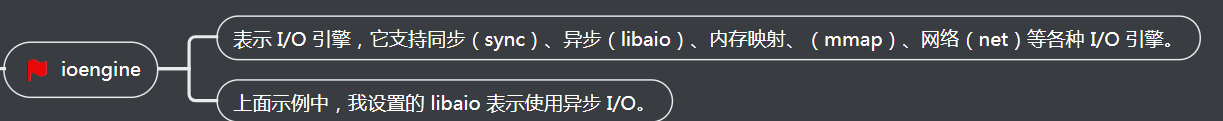

ioengine

bs

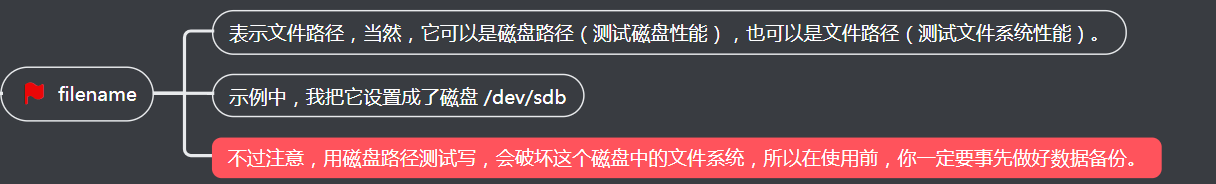

filename
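To show how the options listed above fit together, here is a minimal sketch that assembles one of the benchmark commands and annotates each option with its meaning as I understand it from the fio manual. The function name `fio_cmd` and the scratch file path `/tmp/fio.test` are assumptions made for this example, not part of fio or of the original tests. The function only prints the command, so nothing is written until you run the output yourself.

```shell
#!/bin/sh
# Print (not run) a fio command for one access pattern.
# fio_cmd and TARGET are illustrative names, not part of fio.
# TARGET defaults to an assumed scratch file, because write tests
# against a raw disk path overwrite the data on that disk.
TARGET="${TARGET:-/tmp/fio.test}"

fio_cmd() {
    rw="$1"    # read | write | randread | randwrite
    # -direct=1         bypass the system page cache
    # -iodepth=64       maximum number of in-flight requests (async engines)
    # -rw=...           access pattern: sequential or random, read or write
    # -ioengine=libaio  Linux native asynchronous I/O
    # -bs=4k            size of each I/O request
    # -filename=...     file or device under test
    echo "fio -name=$rw -direct=1 -iodepth=64 -rw=$rw -ioengine=libaio" \
         "-bs=4k -size=1G -numjobs=1 -runtime=1000 -group_reporting -filename=$TARGET"
}

fio_cmd randread
```

Pointing `TARGET` at a file inside a mounted file system exercises the file system as well as the disk, whereas a device path such as /dev/sdb tests the raw disk only.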

3. Below is a sample report from a sequential read test I ran with fio.

    read: (g=0): rw=read, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=64
    fio-3.1
    Starting 1 process
    Jobs: 1 (f=1): [R(1)][100.0%][r=16.7MiB/s,w=0KiB/s][r=4280,w=0 IOPS][eta 00m:00s]
    read: (groupid=0, jobs=1): err= 0: pid=17966: Sun Dec 30 08:31:48 2018
      read: IOPS=4257, BW=16.6MiB/s (17.4MB/s)(1024MiB/61568msec)
        slat (usec): min=2, max=2566, avg= 4.29, stdev=21.76
        clat (usec): min=228, max=407360, avg=15024.30, stdev=20524.39
         lat (usec): min=243, max=407363, avg=15029.12, stdev=20524.26
        clat percentiles (usec):
         | 1.00th=[ 498], 5.00th=[ 1020], 10.00th=[ 1319], 20.00th=[ 1713],
         | 30.00th=[ 1991], 40.00th=[ 2212], 50.00th=[ 2540], 60.00th=[ 2933],
         | 70.00th=[ 5407], 80.00th=[ 44303], 90.00th=[ 45351], 95.00th=[ 45876],
         | 99.00th=[ 46924], 99.50th=[ 46924], 99.90th=[ 48497], 99.95th=[ 49021],
         | 99.99th=[404751]
       bw ( KiB/s): min= 8208, max=18832, per=99.85%, avg=17005.35, stdev=998.94, samples=123
       iops : min= 2052, max= 4708, avg=4251.30, stdev=249.74, samples=123
      lat (usec) : 250=0.01%, 500=1.03%, 750=1.69%, 1000=2.07%
      lat (msec) : 2=25.64%, 4=37.58%, 10=2.08%, 20=0.02%, 50=29.86%
      lat (msec) : 100=0.01%, 500=0.02%
      cpu : usr=1.02%, sys=2.97%, ctx=33312, majf=0, minf=75
      IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.1%, 32=0.1%, >=64=100.0%
         submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%
         complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.1%, >=64=0.0%
         issued rwt: total=262144,0,0, short=0,0,0, dropped=0,0,0
         latency : target=0, window=0, percentile=100.00%, depth=64

    Run status group 0 (all jobs):
      READ: bw=16.6MiB/s (17.4MB/s), 16.6MiB/s-16.6MiB/s (17.4MB/s-17.4MB/s), io=1024MiB (1074MB), run=61568-61568msec

    Disk stats (read/write):
      sdb: ios=261897/0, merge=0/0, ticks=3912108/0, in_queue=3474336, util=90.09%

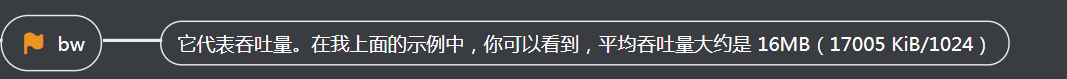

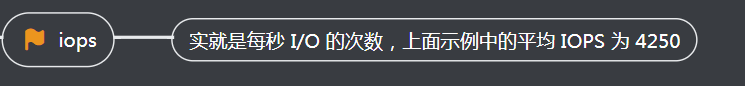

In this report, the lines that deserve most of our attention are slat, clat, and lat, along with bw and iops.

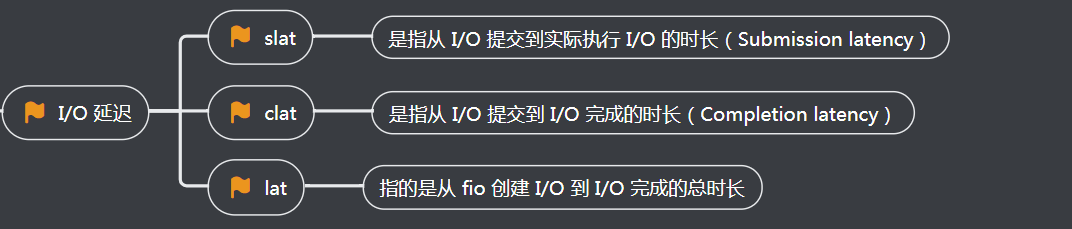

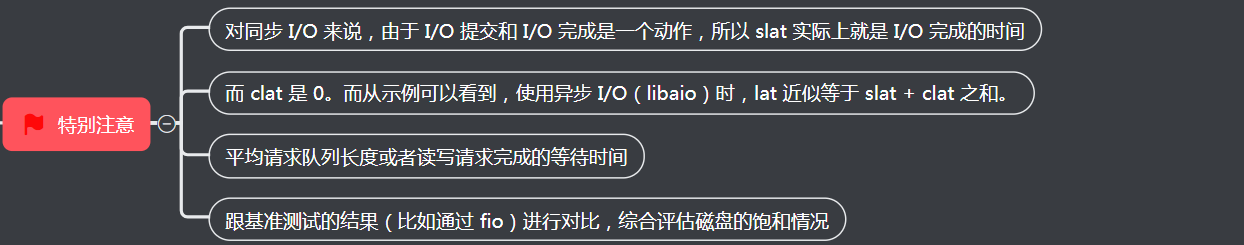

Let's start with the first three. slat, clat, and lat all refer to I/O latency. They differ as follows:

Note the following here:

bw

iops
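A quick arithmetic check can make these five lines easier to read. The sketch below relies on two relationships that hold in general rather than on anything specific to this test: bandwidth is roughly IOPS multiplied by the block size, and, as the fio documentation describes the fields, slat is submission latency, clat is completion latency, and lat is the total, so for asynchronous I/O the average lat is approximately slat plus clat. The helper names `bw_mib` and `lat_sum` are made up for this example; the input numbers come from the sequential read sample report above.

```shell
#!/bin/sh
# bw_mib IOPS BLOCK_BYTES -> bandwidth in MiB/s (IOPS x block size)
bw_mib() {
    awk -v iops="$1" -v bs="$2" 'BEGIN { printf "%.1f\n", iops * bs / 1048576 }'
}

# lat_sum SLAT CLAT -> approximate total latency, in the unit of the inputs
lat_sum() {
    awk -v s="$1" -v c="$2" 'BEGIN { printf "%.2f\n", s + c }'
}

# Inputs taken from the sample report (IOPS=4257, bs=4096B, averages in usec)
bw_mib 4257 4096        # compare with the reported BW=16.6MiB/s
lat_sum 4.29 15024.30   # compare with the reported lat avg=15029.12
```

The computed sum lands close to the reported lat average but not exactly on it, which is expected because each average is measured separately.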

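4. How can we accurately simulate an application's I/O pattern?

In practice, an application usually reads and writes concurrently, and the size of each I/O is not necessarily the same. So the scenarios above cannot accurately simulate an application's I/O pattern. How can we do that?

Fortunately, fio supports I/O replay. Combining blktrace, mentioned earlier, with fio lets you benchmark the application's actual I/O pattern. First use blktrace to record the I/O accesses on the disk device, then use fio to replay the blktrace record.

For example, you can run the following commands: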

    # Trace disk I/O with blktrace; be sure to specify the disk the application is using
    $ blktrace /dev/sdb

    # List the results recorded by blktrace
    $ ls
    sdb.blktrace.0 sdb.blktrace.1

    # Convert the results into a binary file
    $ blkparse sdb -d sdb.bin

    # Replay the log with fio
    $ fio --name=replay --filename=/dev/sdb --direct=1 --read_iolog=sdb.bin

The commands from my own run are shown below. Note that the file passed to --read_iolog must match the name given to blkparse -d.

    root@luoahong:/opt# blktrace /dev/sda
    ^C=== sda ===
      CPU 0: 58750 events, 2754 KiB data
      CPU 1: 30543 events, 1432 KiB data
      Total: 89293 events (dropped 0), 4186 KiB data
    root@luoahong:/opt# ls
    sda.blktrace.0 sda.blktrace.1
    root@luoahong:/opt# blkparse sda -d sdb.bin
    root@luoahong:/opt# ls
    sda.blktrace.0 sda.blktrace.1 sdb.bin
    root@luoahong:/opt# fio --name=replay --filename=/dev/sda --direct=1 --read_iolog=sda.bin
    ......
    8,0 0 0 760.074846448 0 m N cfq schedule dispatch
    8,0 0 17700 760.075924831 442 A FWFS 80191616 + 8 <- (8,2) 80187520
    8,0 0 17701 760.075997189 442 Q WS 80191616 + 8 [jbd2/sda2-8]
    8,0 0 17702 760.076071782 442 G WS 80191616 + 8 [jbd2/sda2-8]
    8,0 0 17703 760.076139949 442 I WS 80191616 + 8 [jbd2/sda2-8]
    8,0 0 0 760.076208396 0 m N cfq442SN insert_request
    8,0 0 0 760.076741162 0 m N cfq442SN Not idling. st->count:1
    8,0 0 0 760.076807933 0 m N cfq442SN dispatch_insert
    8,0 0 0 760.076875821 0 m N cfq442SN dispatched a request
    8,0 0 0 760.076943150 0 m N cfq442SN activate rq, drv=1
    8,0 0 17704 760.077009082 442 D WS 80191616 + 8 [jbd2/sda2-8]
    8,0 0 17705 760.077959513 442 C WS 80191616 + 8 [0]
    8,0 0 0 760.078169323 0 m N cfq442SN complete rqnoidle 1
    8,0 0 0 760.078567431 0 m N cfq442SN Not idling. st->count:1
    8,0 0 0 760.078638671 0 m N cfq schedule dispatch

    CPU0 (sda):
      Reads Queued: 49, 348KiB           Writes Queued: 1630, 1862MiB
      Read Dispatches: 48, 344KiB        Write Dispatches: 2265, 1889MiB
      Reads Requeued: 0                  Writes Requeued: 0
      Reads Completed: 49, 348KiB        Writes Completed: 2245, 1863MiB
      Read Merges: 0, 0KiB               Write Merges: 280, 5688KiB
      Read depth: 15                     Write depth: 33
      PC Reads Queued: 0, 0KiB           PC Writes Queued: 0, 0KiB
      PC Read Disp.: 0, 0KiB             PC Write Disp.: 0, 0KiB
      PC Reads Req.: 0                   PC Writes Req.: 0
      PC Reads Compl.: 1                 PC Writes Compl.: 0
      IO unplugs: 1972                   Timer unplugs: 15
    CPU1 (sda):
      Reads Queued: 3, 12KiB             Writes Queued: 1757, 1871MiB
      Read Dispatches: 4, 16KiB          Write Dispatches: 2096, 1844MiB
      Reads Requeued: 0                  Writes Requeued: 0
      Reads Completed: 3, 12KiB          Writes Completed: 2116, 1870MiB
      Read Merges: 0, 0KiB               Write Merges: 535, 7248KiB
      Read depth: 15                     Write depth: 33
      PC Reads Queued: 0, 0KiB           PC Writes Queued: 0, 0KiB
      PC Read Disp.: 1, 0KiB             PC Write Disp.: 0, 0KiB
      PC Reads Req.: 0                   PC Writes Req.: 0
      PC Reads Compl.: 0                 PC Writes Compl.: 0
      IO unplugs: 1907                   Timer unplugs: 13

    Total (sda):
      Reads Queued: 52, 360KiB           Writes Queued: 3387, 3734MiB
      Read Dispatches: 52, 360KiB        Write Dispatches: 4361, 3734MiB
      Reads Requeued: 0                  Writes Requeued: 0
      Reads Completed: 52, 360KiB        Writes Completed: 4361, 3734MiB
      Read Merges: 0, 0KiB               Write Merges: 815, 12936KiB
      PC Reads Queued: 0, 0KiB           PC Writes Queued: 0, 0KiB
      PC Read Disp.: 1, 0KiB             PC Write Disp.: 0, 0KiB
      PC Reads Req.: 0                   PC Writes Req.: 0
      PC Reads Compl.: 1                 PC Writes Compl.: 0
      IO unplugs: 3879                   Timer unplugs: 28

    Throughput (R/W): 0KiB/s / 4913KiB/s
    Events (sda): 68763 entries
    Skips: 0 forward (0 - 0.0%)

III. I/O Performance Optimization

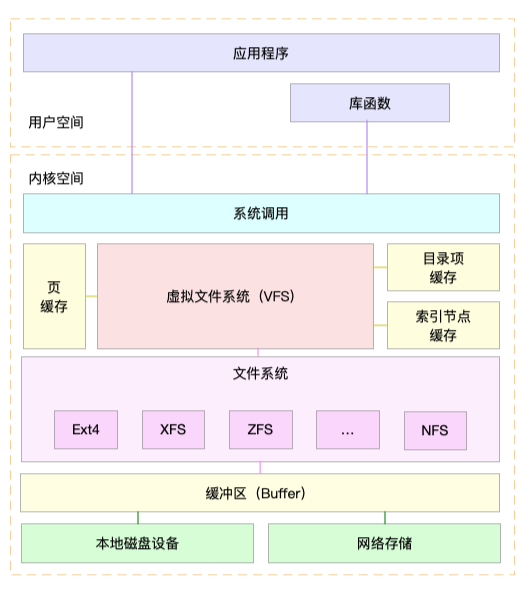

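Once you have the I/O benchmark report, applying the analysis routine we summarized in the previous section to locate and fix the I/O bottleneck follows naturally. Of course, optimizing I/O performance also relies on the Linux I/O stack diagram as a guide, so it is worth reviewing the I/O stack diagram shown here once more.

Next, I will walk you through the basic ideas of I/O performance optimization from the perspectives of the application, the file system, and the disk.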

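IV. Application Optimization

First, let's look at the ways to optimize I/O from the application's perspective.

The application sits at the top of the I/O stack. It can adjust its I/O pattern through system calls (sequential or random, synchronous or asynchronous, for example), and it is also the ultimate source of the I/O data. In my view, there are several ways to optimize an application's I/O performance.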

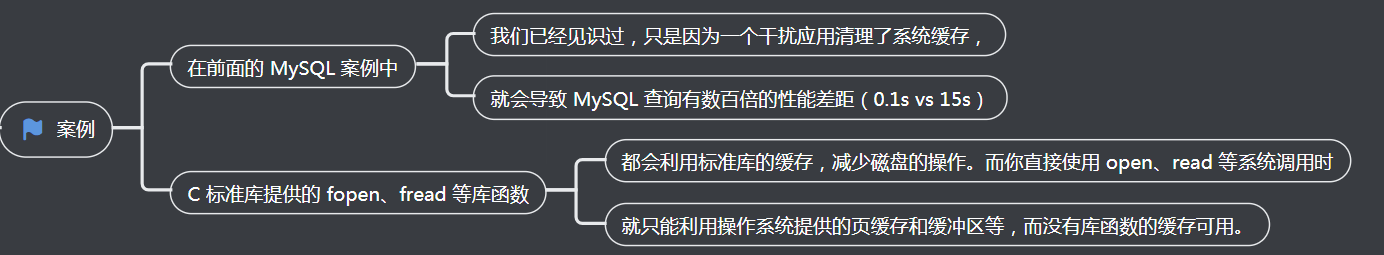

First:

Second:

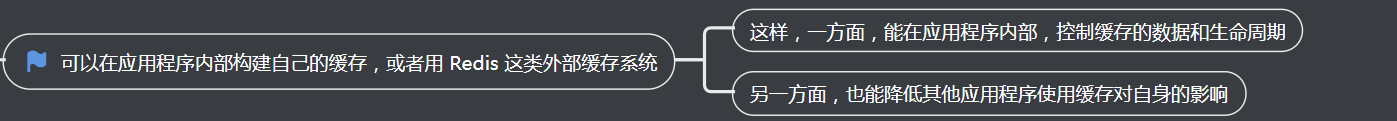

Third:

You can build a cache inside the application itself, or use an external cache system such as Redis

Case study

Fourth:

Fifth:

Sixth:

Seventh:

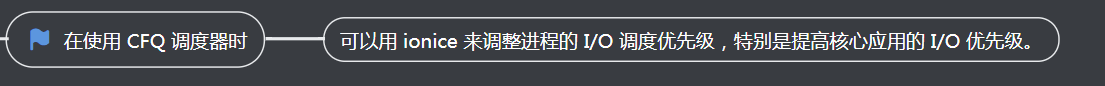

When using the CFQ scheduler

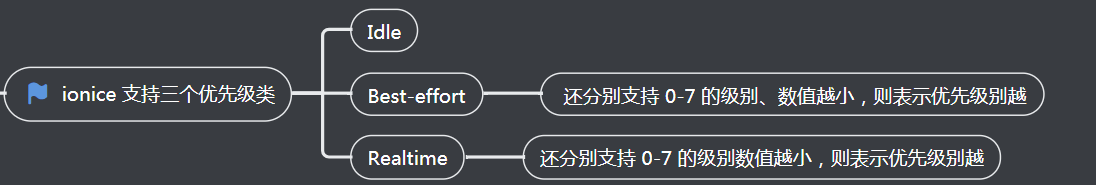

ionice supports three priority classes
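As a small illustration, the sketch below maps the three class names documented in the ionice(1) manual page (realtime, best-effort, idle) to the numeric values accepted by `ionice -c`, and prints the command that would move a process into that class. The helper names `ionice_class_id` and `ionice_cmd` and the PID 1234 are placeholders invented for this example.

```shell
#!/bin/sh
# ionice_class_id NAME -> numeric class accepted by `ionice -c`
ionice_class_id() {
    case "$1" in
        realtime)    echo 1 ;;
        best-effort) echo 2 ;;
        idle)        echo 3 ;;
        *)           echo "unknown class: $1" >&2; return 1 ;;
    esac
}

# ionice_cmd CLASS PID -> print (not run) the matching ionice command
ionice_cmd() {
    class_id=$(ionice_class_id "$1") || return 1
    echo "ionice -c $class_id -p $2"
}

# 1234 is a placeholder PID
ionice_cmd idle 1234
```

Printing the command instead of executing it keeps the sketch harmless; changing the class of a real process, especially to realtime, generally needs root privileges.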

V. File System Optimization

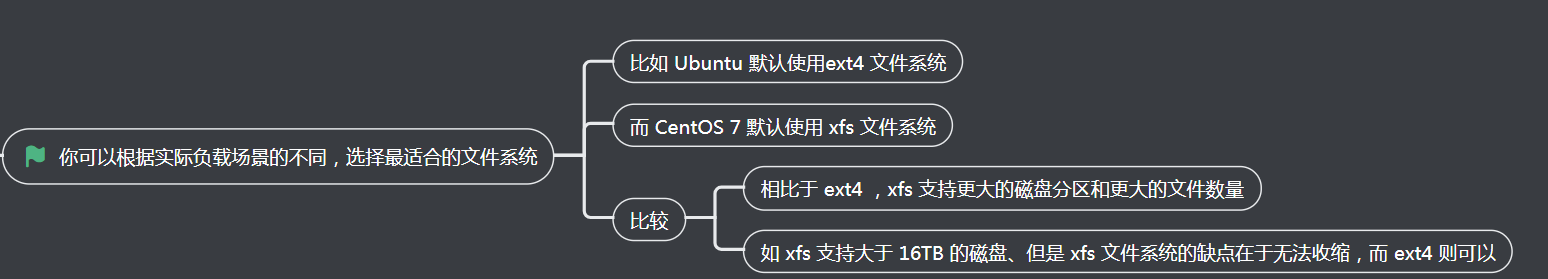

1. Choose the file system best suited to your actual workload

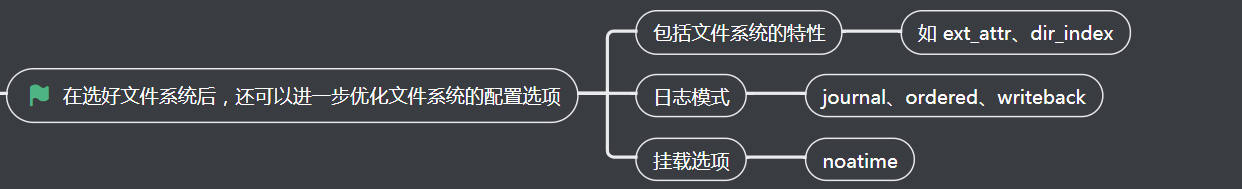

2. After choosing a file system, you can further tune its configuration options

3. You can tune the file system cache

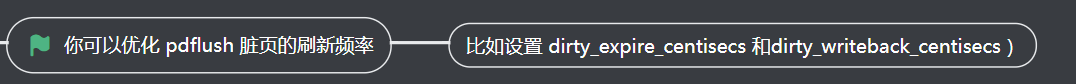

1. You can tune how often pdflush flushes dirty pages

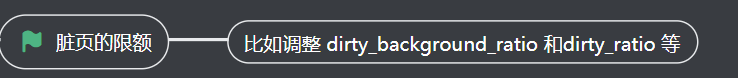

2. The dirty page limits

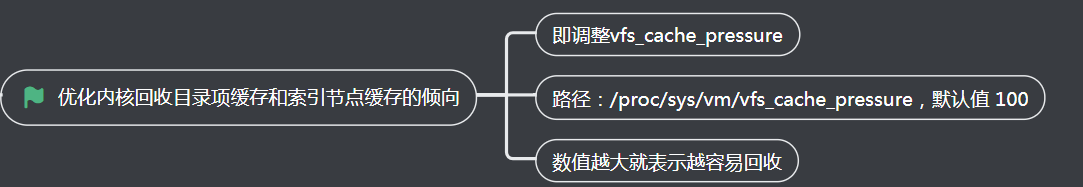

3. Tune the kernel's tendency to reclaim the dentry cache and the inode cache

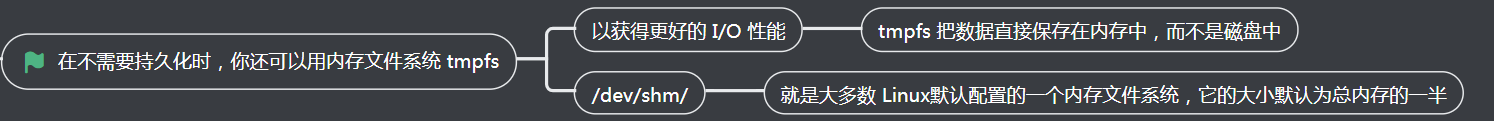
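Before changing any of the cache settings discussed in the three points above, it helps to look at the current values. The sketch below reads a handful of entries under /proc/sys/vm. Which kernel parameters the points refer to is my assumption: dirty_writeback_centisecs and dirty_expire_centisecs for the flush frequency, dirty_background_ratio and dirty_ratio for the dirty page limits, and vfs_cache_pressure for the reclaim tendency. The helper name `show_knob` is also made up for this example, and the script only reads values.

```shell
#!/bin/sh
# show_knob DIR NAME -> "NAME = value", or "NAME = (unavailable)" if unreadable
show_knob() {
    if [ -r "$1/$2" ]; then
        echo "$2 = $(cat "$1/$2")"
    else
        echo "$2 = (unavailable)"
    fi
}

# Assumed set of tunables; the list is an illustration, not exhaustive
for knob in dirty_writeback_centisecs dirty_expire_centisecs \
            dirty_background_ratio dirty_ratio vfs_cache_pressure; do
    show_knob /proc/sys/vm "$knob"
done
```

Recording these values first gives you a baseline to return to if a change makes things worse.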

4. When persistence is not required, you can also use the in-memory file system tmpfs

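VI. Disk Optimization

Persistent data ultimately has to land on a physical disk, and the disk is also the lowest layer of the whole I/O stack. From the disk's perspective there are, naturally, many effective optimization methods as well.

1. The simplest and most effective optimization is to switch to a better-performing disk

2. We can use RAID

3. Choose the I/O scheduling algorithm best suited to the characteristics of the disk and the application's I/O pattern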
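To see which scheduler a disk is using before choosing a different one, you can read its sysfs entry, where the kernel marks the active scheduler with square brackets. The sketch below is a minimal, read-only example; the helper name `active_scheduler` and the default device name sda are assumptions for illustration.

```shell
#!/bin/sh
# active_scheduler: read a sysfs scheduler line on stdin and print the
# bracketed (currently active) entry, e.g. "noop deadline [cfq]" -> "cfq"
active_scheduler() {
    sed -n 's/.*\[\([^]]*\)\].*/\1/p'
}

DEV="${DEV:-sda}"   # assumed device name; override with DEV=sdb etc.
f="/sys/block/$DEV/queue/scheduler"
if [ -r "$f" ]; then
    echo "$DEV: $(active_scheduler < "$f")"
fi

# Switching needs root and takes effect immediately, for example:
#   echo deadline > /sys/block/sda/queue/scheduler
```

The set of available schedulers depends on the kernel version, so check the contents of the file before writing a name to it.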

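4. We can isolate application data at the disk level

5. In scenarios dominated by sequential reads, we can increase the disk's readahead size

Two ways to adjust the readahead size of /dev/sdb

Adjust the kernel option

Use the blockdev tool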
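The two methods use different units, which is easy to trip over: the kernel option /sys/block/sdb/queue/read_ahead_kb takes a value in KB, while `blockdev --setra` takes a count of 512-byte sectors, so the blockdev number is always twice the KB value. The sketch below prints the equivalent command for each method without running it. The helper name `kb_to_sectors` and the 4096 KB example size are assumptions chosen only for illustration.

```shell
#!/bin/sh
# kb_to_sectors KB -> number of 512-byte sectors (the unit blockdev --setra expects)
kb_to_sectors() {
    echo $(( $1 * 1024 / 512 ))
}

DEV=sdb    # device named in the text
KB=4096    # example readahead size, picked only for illustration

# Method 1: kernel option, value in KB
echo "echo $KB > /sys/block/$DEV/queue/read_ahead_kb"
# Method 2: blockdev, value in 512-byte sectors
echo "blockdev --setra $(kb_to_sectors "$KB") /dev/$DEV"
```

Both commands require root when actually run, and `blockdev --getra /dev/sdb` reports the current value in the same sector unit.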

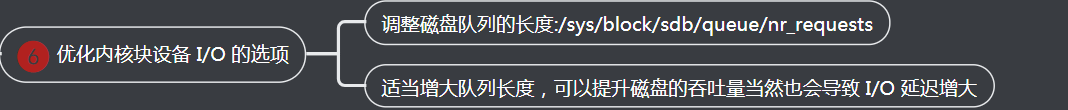

6. Tune the kernel's block device I/O options

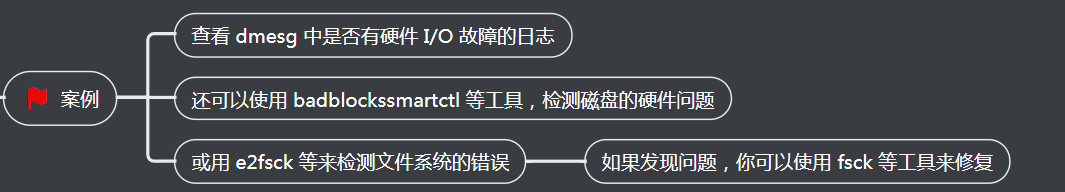

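7. Hardware errors in the disk itself

Case study

VII. Summary

Today we went through the common approaches and methods for optimizing file system and disk I/O performance. When you find an I/O performance problem, do not rush into optimizing. First identify the most important problem, the one whose fix will improve performance the most, and then consider concrete optimizations at each layer of the I/O stack.

Remember that disk and file system I/O is usually the slowest part of the whole system. So when optimizing I/O, besides optimizing the I/O execution path itself, you can also use faster memory, network, and CPU to reduce the number of I/O calls.

For example, you can make full use of the Buffer and Cache provided by the system, an application-level internal cache, or an external cache system such as Redis.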