1. First, create a model.py file that defines the neural network. The code is as follows:

    import torch
    import torch.nn as nn


    class My_VGG16(nn.Module):
        def __init__(self, num_classes=5, init_weight=True):
            super(My_VGG16, self).__init__()
            # Feature extraction layers: five conv blocks, each closed by 2x2 max pooling.
            # Every convolution is followed by ReLU, as in the standard VGG16.
            self.features = nn.Sequential(
                nn.Conv2d(in_channels=3, out_channels=64, kernel_size=3, stride=1, padding=1),
                nn.ReLU(inplace=True),
                nn.Conv2d(in_channels=64, out_channels=64, kernel_size=3, stride=1, padding=1),
                nn.ReLU(inplace=True),
                nn.MaxPool2d(kernel_size=2, stride=2),

                nn.Conv2d(in_channels=64, out_channels=128, kernel_size=3, stride=1, padding=1),
                nn.ReLU(inplace=True),
                nn.Conv2d(in_channels=128, out_channels=128, kernel_size=3, stride=1, padding=1),
                nn.ReLU(inplace=True),
                nn.MaxPool2d(kernel_size=2, stride=2),

                nn.Conv2d(in_channels=128, out_channels=256, kernel_size=3, stride=1, padding=1),
                nn.ReLU(inplace=True),
                nn.Conv2d(in_channels=256, out_channels=256, kernel_size=3, stride=1, padding=1),
                nn.ReLU(inplace=True),
                nn.Conv2d(in_channels=256, out_channels=256, kernel_size=3, stride=1, padding=1),
                nn.ReLU(inplace=True),
                nn.MaxPool2d(kernel_size=2, stride=2),

                nn.Conv2d(in_channels=256, out_channels=512, kernel_size=3, stride=1, padding=1),
                nn.ReLU(inplace=True),
                nn.Conv2d(in_channels=512, out_channels=512, kernel_size=3, stride=1, padding=1),
                nn.ReLU(inplace=True),
                nn.Conv2d(in_channels=512, out_channels=512, kernel_size=3, stride=1, padding=1),
                nn.ReLU(inplace=True),
                nn.MaxPool2d(kernel_size=2, stride=2),

                nn.Conv2d(in_channels=512, out_channels=512, kernel_size=3, stride=1, padding=1),
                nn.ReLU(inplace=True),
                nn.Conv2d(in_channels=512, out_channels=512, kernel_size=3, stride=1, padding=1),
                nn.ReLU(inplace=True),
                nn.Conv2d(in_channels=512, out_channels=512, kernel_size=3, stride=1, padding=1),
                nn.ReLU(inplace=True),
                nn.MaxPool2d(kernel_size=2, stride=2),
            )
            # Classification layers
            self.classifier = nn.Sequential(
                nn.Linear(in_features=7 * 7 * 512, out_features=4096),
                nn.ReLU(),
                nn.Dropout(0.5),
                nn.Linear(in_features=4096, out_features=4096),
                nn.ReLU(),
                nn.Dropout(0.5),
                nn.Linear(in_features=4096, out_features=num_classes),
            )
            # Parameter initialization
            if init_weight:
                for m in self.modules():  # visit every layer of the model
                    if isinstance(m, nn.Conv2d):
                        # Convolution layers: Kaiming initialization
                        nn.init.kaiming_normal_(m.weight, mode="fan_out", nonlinearity="relu")
                        # If a bias exists, set it to 0
                        if m.bias is not None:
                            nn.init.constant_(m.bias, 0)
                    elif isinstance(m, nn.Linear):
                        # Linear layers: normal initialization, bias set to 0
                        nn.init.normal_(m.weight, 0, 0.01)
                        nn.init.constant_(m.bias, 0)

        def forward(self, x):
            x = self.features(x)
            x = torch.flatten(x, 1)  # keep the batch dimension, flatten the rest
            result = self.classifier(x)
            return result
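The first fully connected layer takes `7*7*512` input features. The sketch below traces where that number comes from, assuming a 224x224 input image. The helper names `conv_out`, `pool_out`, and `vgg16_feature_size` are illustrative and are not part of model.py.

```python
def conv_out(size, kernel_size=3, stride=1, padding=1):
    """Spatial size after a convolution (standard output-size formula)."""
    return (size + 2 * padding - kernel_size) // stride + 1


def pool_out(size, kernel_size=2, stride=2):
    """Spatial size after max pooling without padding."""
    return (size - kernel_size) // stride + 1


def vgg16_feature_size(input_size=224):
    """Trace the spatial size through the five conv blocks of the network."""
    convs_per_block = [2, 2, 3, 3, 3]  # number of 3x3 convolutions in each block
    size = input_size
    for n_convs in convs_per_block:
        for _ in range(n_convs):
            size = conv_out(size)  # 3x3, stride 1, padding 1 keeps the size
        size = pool_out(size)      # 2x2, stride 2 halves the size
    return size


final_size = vgg16_feature_size(224)
flattened = final_size * final_size * 512  # 512 channels after the last block
print(final_size, flattened)  # prints: 7 25088
```

With a different input size the flattened length changes, so `in_features` of the first `nn.Linear` would have to change with it.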

2. Download the dataset

DATA_URL = 'http://download.tensorflow.org/example_images/flower_photos.tgz'
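One possible way to fetch and unpack the archive from Python is sketched below, using only the standard library. The function name `download_and_extract` and the default target directory `flower_data` are assumptions made for illustration; downloading in a browser and extracting by hand works just as well.

```python
import os
import tarfile
import urllib.request


def download_and_extract(url, dest_dir="flower_data"):
    """Download a .tgz archive into dest_dir and unpack it there."""
    os.makedirs(dest_dir, exist_ok=True)
    archive_path = os.path.join(dest_dir, os.path.basename(url))
    # Only download when the archive is not already on disk.
    if not os.path.exists(archive_path):
        urllib.request.urlretrieve(url, archive_path)
    # Unpack the gzip-compressed tar archive next to it.
    with tarfile.open(archive_path, "r:gz") as tar:
        tar.extractall(dest_dir)
    return archive_path
```

Calling `download_and_extract(DATA_URL)` should leave the images under `flower_data/flower_photos`, one sub-folder per flower class.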

3. After the download finishes, write a spile_data.py file that splits the dataset into a training set and a validation set

    # spile_data.py
    import os
    import random
    from shutil import copy


    def mkfile(file):
        if not os.path.exists(file):
            os.makedirs(file)


    file = 'flower_data/flower_photos'
    # Every entry except .txt files is treated as a class folder
    flower_class = [cla for cla in os.listdir(file) if ".txt" not in cla]

    mkfile('flower_data/train')
    for cla in flower_class:
        mkfile('flower_data/train/' + cla)

    mkfile('flower_data/val')
    for cla in flower_class:
        mkfile('flower_data/val/' + cla)

    split_rate = 0.1  # fraction of each class copied to the validation set
    for cla in flower_class:
        cla_path = file + '/' + cla + '/'
        images = os.listdir(cla_path)
        num = len(images)
        eval_index = random.sample(images, k=int(num * split_rate))
        for index, image in enumerate(images):
            if image in eval_index:
                image_path = cla_path + image
                new_path = 'flower_data/val/' + cla
                copy(image_path, new_path)
            else:
                image_path = cla_path + image
                new_path = 'flower_data/train/' + cla
                copy(image_path, new_path)
            print("\r[{}] processing [{}/{}]".format(cla, index + 1, num), end="")  # processing bar
        print()

    print("processing done!")

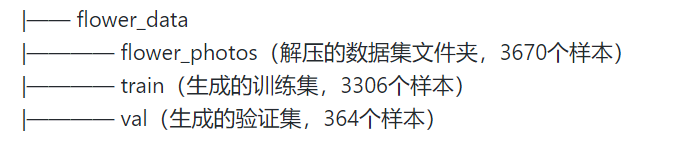

之后应该是这样:
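The per-class split can be tried in isolation on a plain list of file names. This is a minimal sketch of the same `random.sample` logic; the function `split_names`, the `seed` parameter, and the made-up file names are illustrative additions, not part of spile_data.py.

```python
import random


def split_names(names, split_rate=0.1, seed=None):
    """Return (train, val); val holds int(len(names) * split_rate) names."""
    rng = random.Random(seed)  # a fixed seed makes the split reproducible
    val = set(rng.sample(names, k=int(len(names) * split_rate)))
    train = [n for n in names if n not in val]
    return train, sorted(val)


# 25 hypothetical file names standing in for one class folder
names = ["img_{:03d}.jpg".format(i) for i in range(25)]
train, val = split_names(names, split_rate=0.1, seed=0)
print(len(train), len(val))  # prints: 23 2
```

Because `int()` truncates, a class with fewer than 10 images would get no validation images at `split_rate = 0.1`.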

4. Next, write a train.py file to train the model

    import json
    import os

    import torch
    import torch.nn as nn
    import torch.optim as optim
    from torchvision import transforms, datasets

    from model import My_VGG16

    device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

    data_transform = {
        "train": transforms.Compose([
            transforms.RandomResizedCrop(224),
            transforms.RandomHorizontalFlip(),
            transforms.ToTensor(),
            transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])]),
        "val": transforms.Compose([
            transforms.Resize(256),
            transforms.CenterCrop(224),
            transforms.ToTensor(),
            transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])])}

    data_root = os.getcwd()  # get data root path
    image_path = data_root + "/flower_data/"  # flower data set path

    train_dataset = datasets.ImageFolder(root=image_path + "train",
                                         transform=data_transform["train"])
    train_num = len(train_dataset)

    # {'daisy':0, 'dandelion':1, 'roses':2, 'sunflower':3, 'tulips':4}
    flower_list = train_dataset.class_to_idx
    cla_dict = dict((val, key) for key, val in flower_list.items())

    # write dict into json file
    json_str = json.dumps(cla_dict, indent=4)
    with open('class_indices.json', 'w') as json_file:
        json_file.write(json_str)

    batch_size = 16
    train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=batch_size,
                                               shuffle=True, num_workers=0)

    validate_dataset = datasets.ImageFolder(root=image_path + "val",
                                            transform=data_transform["val"])
    val_num = len(validate_dataset)
    validate_loader = torch.utils.data.DataLoader(validate_dataset, batch_size=batch_size,
                                                  shuffle=False, num_workers=0)

    net = My_VGG16(num_classes=5)

    # load pretrain weights
    model_weight_path = "./vgg16.pth"
    pre_weights = torch.load(model_weight_path, map_location=device)
    # Assumes vgg16.pth stores a state_dict. Only tensors whose name and shape match
    # this model are copied; other layers (e.g. the final classifier) keep their initialization.
    net_weights = net.state_dict()
    pre_weights = {k: v for k, v in pre_weights.items()
                   if k in net_weights and v.shape == net_weights[k].shape}
    net.load_state_dict(pre_weights, strict=False)
    net.to(device)

    loss_function = nn.CrossEntropyLoss()
    optimizer = optim.Adam(net.parameters(), lr=0.0001)

    best_acc = 0.0
    save_path = './vgg16_train.pth'
    for epoch in range(5):
        # train
        net.train()
        running_loss = 0.0
        for step, data in enumerate(train_loader, start=0):
            images, labels = data
            optimizer.zero_grad()
            logits = net(images.to(device))
            # print("===>", logits.shape, labels.shape)  # debug: check tensor shapes
            loss = loss_function(logits, labels.to(device))
            loss.backward()
            optimizer.step()

            # accumulate the loss for the epoch average
            running_loss += loss.item()
            # print train process
            rate = (step + 1) / len(train_loader)
            a = "*" * int(rate * 50)
            b = "." * int((1 - rate) * 50)
            print("\rtrain loss: {:^3.0f}%[{}->{}]{:.4f}".format(int(rate * 100), a, b, loss.item()), end="")
        print()

        # validate
        net.eval()
        acc = 0.0  # number of correct predictions in this epoch
        with torch.no_grad():
            for val_data in validate_loader:
                val_images, val_labels = val_data
                outputs = net(val_images.to(device))
                predict_y = torch.max(outputs, dim=1)[1]  # index of the largest logit
                acc += (predict_y == val_labels.to(device)).sum().item()
        val_accurate = acc / val_num
        # keep the weights with the best validation accuracy
        if val_accurate > best_acc:
            best_acc = val_accurate
            torch.save(net.state_dict(), save_path)
        # average the loss over the number of batches, not the last step index
        print('[epoch %d] train_loss: %.3f test_accuracy: %.3f' %
              (epoch + 1, running_loss / len(train_loader), val_accurate))

    print('Finished Training')
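train.py writes the index-to-name mapping to class_indices.json so that a predicted index can be turned back into a flower name later. One detail worth knowing is that JSON object keys are always strings, so the integer keys come back as `"0"`, `"1"`, and so on. The sketch below shows the round trip in memory; the mapping mirrors the comment in train.py, and `predicted_index` is a made-up value standing in for the argmax of the network output.

```python
import json

# Mapping in the form ImageFolder exposes as class_to_idx (values taken from
# the comment in train.py; the real names depend on the folder names)
class_to_idx = {"daisy": 0, "dandelion": 1, "roses": 2, "sunflower": 3, "tulips": 4}

# Invert it, as train.py does, and serialize to JSON
idx_to_class = {idx: name for name, idx in class_to_idx.items()}
json_str = json.dumps(idx_to_class, indent=4)  # int keys become strings here

# Reading it back: keys are now "0".."4", not 0..4
loaded = json.loads(json_str)

predicted_index = 2  # hypothetical prediction
print(loaded[str(predicted_index)])  # prints: roses
```

Looking up `loaded[2]` would raise a `KeyError`, which is why the index is converted with `str()` first.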