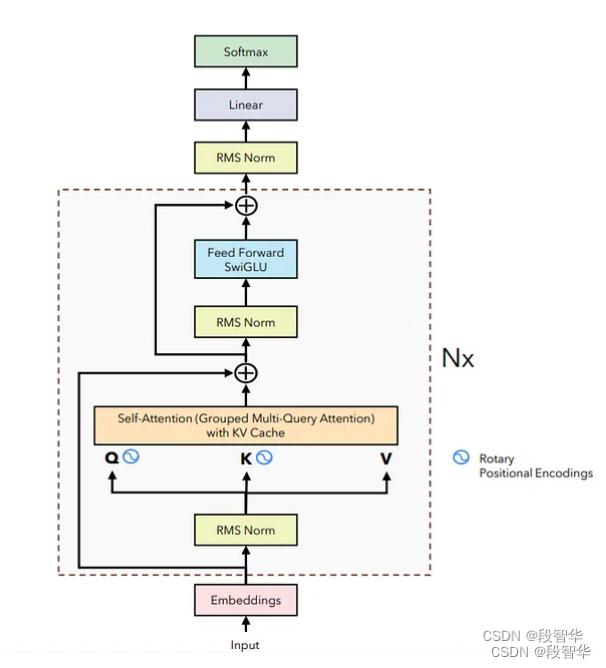

Exploring and Building the LLaMA 3 Architecture: A Deep Dive into Components, Coding, and Inference Techniques (Part 8): Encoder Block

Transformer Block

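Since we only care about model inference here, we will look only at the Transformer block. The class is named `EncoderBlock` in the code, although LLaMA is a decoder-only model. Each block applies RMSNorm before self-attention and again before the feed-forward network, and wraps both sublayers in a residual connection.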

```python
import torch
import torch.nn as nn

# ModelArgs, SelfAttention, FeedForward and RMSNorm are defined in the
# earlier parts of this series.


class EncoderBlock(nn.Module):
    def __init__(self, args: ModelArgs):
        super().__init__()
        self.n_heads = args.n_heads
        self.dim = args.dim
        self.head_dim = args.dim // args.n_heads

        self.attention = SelfAttention(args)
        self.feed_forward = FeedForward(args)

        # Normalization BEFORE the self-attention
        self.attention_norm = RMSNorm(args.dim, eps=args.norm_eps)
        # Normalization BEFORE the feed-forward
        self.ffn_norm = RMSNorm(args.dim, eps=args.norm_eps)

    def forward(self, x: torch.Tensor, start_pos: int, freqs_complex: torch.Tensor):
        # (B, seq_len, dim) + (B, seq_len, dim) -> (B, seq_len, dim)
        # Call the submodules directly rather than via .forward() so that
        # any registered hooks still run.
        h = x + self.attention(self.attention_norm(x), start_pos, freqs_complex)
        out = h + self.feed_forward(self.ffn_norm(h))
        return out
```
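To see the pre-norm residual wiring in isolation, here is a minimal sketch that runs on its own. It is not the block from this series: `PreNormBlock` is a hypothetical stand-in in which plain `nn.Linear` layers replace `SelfAttention` and `FeedForward`, and the `RMSNorm` here is a compact version written just for this example. The RoPE frequencies and `start_pos` are left out because the toy sublayers do not need them.

```python
import torch
import torch.nn as nn


class RMSNorm(nn.Module):
    """Root-mean-square normalization with a learnable per-feature scale."""

    def __init__(self, dim: int, eps: float = 1e-5):
        super().__init__()
        self.eps = eps
        self.weight = nn.Parameter(torch.ones(dim))

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # Scale by the inverse RMS computed over the last (feature) dimension.
        rms_inv = torch.rsqrt(x.pow(2).mean(dim=-1, keepdim=True) + self.eps)
        return self.weight * (x * rms_inv)


class PreNormBlock(nn.Module):
    """Hypothetical stand-in for EncoderBlock: same wiring, toy sublayers."""

    def __init__(self, dim: int, eps: float = 1e-5):
        super().__init__()
        # Placeholders only (assumption): the real block uses
        # SelfAttention and FeedForward here.
        self.attention = nn.Linear(dim, dim, bias=False)
        self.feed_forward = nn.Linear(dim, dim, bias=False)
        self.attention_norm = RMSNorm(dim, eps)
        self.ffn_norm = RMSNorm(dim, eps)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # Normalize, apply the sublayer, then add the untouched input back.
        h = x + self.attention(self.attention_norm(x))
        return h + self.feed_forward(self.ffn_norm(h))


torch.manual_seed(0)
block = PreNormBlock(dim=16)
x = torch.randn(2, 5, 16)  # (B, seq_len, dim)
out_shape = tuple(block(x).shape)

# Zero both sublayers: only the residual path is left, so the block
# should pass its input through unchanged.
with torch.no_grad():
    block.attention.weight.zero_()
    block.feed_forward.weight.zero_()
identity_ok = torch.equal(block(x), x)
```

The shape going in matches the shape coming out, which is what lets these blocks be stacked one after another. The zeroed-sublayer check shows why the residual connection matters: the input always has a direct path to the output, whatever the sublayers compute.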

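Blog Series

Exploring and Building the LLaMA 3 Architecture: A Deep Dive into Components, Coding, and Inference Techniques (Part 1): Llama 3 Model Architecture

https://duanzhihua.blog.csdn.net/article/details/138208650

Exploring and Building the LLaMA 3 Architecture: A Deep Dive into Components, Coding, and Inference Techniques (Part 2): RoPE Positional Encoding

https://duanzhihua.blog.csdn.net/article/details/138212328

Exploring and Building the LLaMA 3 Architecture: A Deep Dive into Components, Coding, and Inference Techniques (Part 3): KV Cache

https://duanzhihua.blog.csdn.net/article/details/138213306

Exploring and Building the LLaMA 3 Architecture: A Deep Dive into Components, Coding, and Inference Techniques (Part 4): Grouped Multi-Query Attention

https://duanzhihua.blog.csdn.net/article/details/138216050

Exploring and Building the LLaMA 3 Architecture: A Deep Dive into Components, Coding, and Inference Techniques (Part 5): RMS (Root Mean Square) Normalization

https://duanzhihua.blog.csdn.net/article/details/138216630

Exploring and Building the LLaMA 3 Architecture: A Deep Dive into Components, Coding, and Inference Techniques (Part 6): SwiGLU Activation Function

https://duanzhihua.blog.csdn.net/article/details/138217261

Exploring and Building the LLaMA 3 Architecture: A Deep Dive into Components, Coding, and Inference Techniques (Part 7): Feed-Forward Network

https://duanzhihua.blog.csdn.net/article/details/138218095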