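This post is adapted from the Zhihu article "捋一捋pytorch官方FasterRCNN代码" (zhihu.com). I have added more detailed commentary on the code to help my own understanding.

For the code walkthrough I also referred to the CSDN blog post "Faster-RCNN全面解读(手把手带你分析代码实现)---前向传播部分".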

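1. Code structure

GeneralizedRCNN is the base class for object detection in torchvision. It inherits from torch.nn.Module, and both FasterRCNN and MaskRCNN inherit from GeneralizedRCNN.

2. GeneralizedRCNN

GeneralizedRCNN inherits from the base class nn.Module. Let's first look at the code of GeneralizedRCNN: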

```python
from collections import OrderedDict
from typing import List, Tuple

import torch
from torch import nn


class GeneralizedRCNN(nn.Module):
    def __init__(self, backbone, rpn, roi_heads, transform):
        super(GeneralizedRCNN, self).__init__()
        self.transform = transform
        self.backbone = backbone
        self.rpn = rpn
        self.roi_heads = roi_heads

    # images: the input batch of images, already scaled to [0, 1] by dividing by 255
    # targets: the ground-truth boxes for each image in the batch
    #          (must not be None when self.training is True)
    def forward(self, images, targets=None):
        # Start from an empty list and annotate its type with torch.jit.annotate
        # so that it can be compiled: a List whose elements are (int, int) tuples.
        original_image_sizes = torch.jit.annotate(List[Tuple[int, int]], [])
        # Record the size of every image in the input list.
        for img in images:
            val = img.shape[-2:]  # last two dimensions: height and width
            assert len(val) == 2  # check that exactly two values were taken
            original_image_sizes.append((val[0], val[1]))

        images, targets = self.transform(images, targets)
        features = self.backbone(images.tensors)  # typically VGG, ResNet, MobileNet, ...
        if isinstance(features, torch.Tensor):
            features = OrderedDict([('0', features)])
        proposals, proposal_losses = self.rpn(images, features, targets)
        detections, detector_losses = self.roi_heads(features, proposals, images.image_sizes, targets)
        detections = self.transform.postprocess(detections, images.image_sizes, original_image_sizes)

        losses = {}
        losses.update(detector_losses)
        losses.update(proposal_losses)
        return (losses, detections)
```

The GeneralizedRCNN class has four important interfaces:

- transform

- backbone

- rpn

- roi_heads

2.1 transform

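The code related to transform:

```python
# GeneralizedRCNN.forward(...)
for img in images:
    val = img.shape[-2:]
    assert len(val) == 2
    original_image_sizes.append((val[0], val[1]))

images, targets = self.transform(images, targets)
```

transform mainly does two things:

1. Normalizes the input

2. Rescales the image to the configured size range (controlled by min_size and max_size, see section 3.1)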
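The rescaling step can be illustrated with a small sketch. It assumes the usual rule that the shorter side is scaled to `min_size` unless that would push the longer side past `max_size`; the function name `resize_scale` is made up for this example and is not a torchvision API.

```python
def resize_scale(height, width, min_size=800, max_size=1333):
    """Return the scale factor applied to an image of the given size.

    Assumption: the short side is scaled to min_size, but the factor is
    capped so that the long side never exceeds max_size.
    """
    short_side = float(min(height, width))
    long_side = float(max(height, width))
    return min(min_size / short_side, max_size / long_side)


scale_a = resize_scale(480, 640)    # limited by min_size: 800 / 480
scale_b = resize_scale(500, 2000)   # limited by max_size: 1333 / 2000
print(scale_a, scale_b)
```

With the default values, an ordinary 480x640 image is enlarged, while a very elongated 500x2000 image is shrunk so that its long side fits.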

2.2 backbone+rpn+roi_heads

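Only after the images have been rescaled does the actual network pipeline begin. It has four main steps:

- Feed the transformed images into the backbone module to extract feature maps

- Pass them through the rpn module to generate proposals and proposal_losses

- Enter the roi_heads module (i.e. roi_pooling + classification)

- Go through the postprocess module (which applies NMS and uses original_image_sizes to map the boxes back onto the original image)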
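The last step, mapping a box from the resized image back to the original image, comes down to a per-axis ratio. The sketch below is only an illustration of that idea; `rescale_box` is a hypothetical helper, and sizes are assumed to be `(height, width)` pairs.

```python
def rescale_box(box, resized_size, original_size):
    """Map box = (x1, y1, x2, y2) from the resized image to the original image.

    Both sizes are (height, width). Hypothetical helper for illustration only.
    """
    ratio_h = original_size[0] / resized_size[0]
    ratio_w = original_size[1] / resized_size[1]
    x1, y1, x2, y2 = box
    return (x1 * ratio_w, y1 * ratio_h, x2 * ratio_w, y2 * ratio_h)


restored = rescale_box((100.0, 200.0, 400.0, 600.0),
                       resized_size=(800, 1000),
                       original_size=(400, 500))
print(restored)
```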

3. FasterRCNN

FasterRCNN inherits from the base class GeneralizedRCNN. Its code is:

```python
class FasterRCNN(GeneralizedRCNN):
    def __init__(self, backbone, num_classes=None,
                 # transform parameters
                 min_size=800, max_size=1333,
                 image_mean=None, image_std=None,
                 # RPN parameters
                 rpn_anchor_generator=None, rpn_head=None,
                 rpn_pre_nms_top_n_train=2000, rpn_pre_nms_top_n_test=1000,
                 rpn_post_nms_top_n_train=2000, rpn_post_nms_top_n_test=1000,
                 rpn_nms_thresh=0.7,
                 rpn_fg_iou_thresh=0.7, rpn_bg_iou_thresh=0.3,
                 rpn_batch_size_per_image=256, rpn_positive_fraction=0.5,
                 # Box parameters
                 box_roi_pool=None, box_head=None, box_predictor=None,
                 box_score_thresh=0.05, box_nms_thresh=0.5, box_detections_per_img=100,
                 box_fg_iou_thresh=0.5, box_bg_iou_thresh=0.5,
                 box_batch_size_per_image=512, box_positive_fraction=0.25,
                 bbox_reg_weights=None):
        out_channels = backbone.out_channels

        if rpn_anchor_generator is None:
            anchor_sizes = ((32,), (64,), (128,), (256,), (512,))
            aspect_ratios = ((0.5, 1.0, 2.0),) * len(anchor_sizes)
            rpn_anchor_generator = AnchorGenerator(anchor_sizes, aspect_ratios)
        if rpn_head is None:
            rpn_head = RPNHead(out_channels, rpn_anchor_generator.num_anchors_per_location()[0])

        rpn_pre_nms_top_n = dict(training=rpn_pre_nms_top_n_train, testing=rpn_pre_nms_top_n_test)
        rpn_post_nms_top_n = dict(training=rpn_post_nms_top_n_train, testing=rpn_post_nms_top_n_test)

        rpn = RegionProposalNetwork(
            rpn_anchor_generator, rpn_head,
            rpn_fg_iou_thresh, rpn_bg_iou_thresh,
            rpn_batch_size_per_image, rpn_positive_fraction,
            rpn_pre_nms_top_n, rpn_post_nms_top_n, rpn_nms_thresh)

        if box_roi_pool is None:
            box_roi_pool = MultiScaleRoIAlign(
                featmap_names=['0', '1', '2', '3'],
                output_size=7,
                sampling_ratio=2)

        if box_head is None:
            resolution = box_roi_pool.output_size[0]
            representation_size = 1024
            box_head = TwoMLPHead(
                out_channels * resolution ** 2,
                representation_size)

        if box_predictor is None:
            representation_size = 1024
            box_predictor = FastRCNNPredictor(
                representation_size,
                num_classes)

        roi_heads = RoIHeads(
            # Box
            box_roi_pool, box_head, box_predictor,
            box_fg_iou_thresh, box_bg_iou_thresh,
            box_batch_size_per_image, box_positive_fraction,
            bbox_reg_weights,
            box_score_thresh, box_nms_thresh, box_detections_per_img)

        if image_mean is None:
            image_mean = [0.485, 0.456, 0.406]
        if image_std is None:
            image_std = [0.229, 0.224, 0.225]
        transform = GeneralizedRCNNTransform(min_size, max_size, image_mean, image_std)

        super(FasterRCNN, self).__init__(backbone, rpn, roi_heads, transform)
```

FasterRCNN fills in the transform, backbone, rpn and roi_heads interfaces of GeneralizedRCNN:

```python
# FasterRCNN.__init__(...)
super(FasterRCNN, self).__init__(backbone, rpn, roi_heads, transform)
```

3.1 Transform interface

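The transform interface is implemented by GeneralizedRCNNTransform. The variable names make its contents clear:

- Resizing parameters: min_size + max_size

- Normalization parameters: image_mean + image_std (the input in [0, 1] has image_mean subtracted and is then divided by image_std)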
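As a small illustration of the normalization described above, the sketch below applies `(value - mean) / std` to a single RGB pixel in plain Python. The helper `normalize_pixel` is hypothetical; the real transform works on whole tensors.

```python
IMAGE_MEAN = [0.485, 0.456, 0.406]
IMAGE_STD = [0.229, 0.224, 0.225]


def normalize_pixel(rgb):
    """Normalize one RGB pixel whose channels are already in [0, 1]."""
    return [(v - m) / s for v, m, s in zip(rgb, IMAGE_MEAN, IMAGE_STD)]


mean_pixel = normalize_pixel([0.485, 0.456, 0.406])   # a pixel equal to the mean
white_pixel = normalize_pixel([1.0, 1.0, 1.0])
print(mean_pixel, white_pixel)
```

A pixel equal to the mean maps to zero in every channel, and a white pixel maps to a positive value of roughly 2.2 to 2.6 depending on the channel.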

```python
# FasterRCNN.__init__(...)
if image_mean is None:
    image_mean = [0.485, 0.456, 0.406]
if image_std is None:
    image_std = [0.229, 0.224, 0.225]
transform = GeneralizedRCNNTransform(min_size, max_size, image_mean, image_std)
```

3.2 Backbone interface

A ResNet50 + FPN structure is used:

```python
def fasterrcnn_resnet50_fpn(pretrained=False, progress=True,
                            num_classes=91, pretrained_backbone=True, **kwargs):
    if pretrained:
        # no need to download the backbone if pretrained is set
        pretrained_backbone = False
    backbone = resnet_fpn_backbone('resnet50', pretrained_backbone)
    model = FasterRCNN(backbone, num_classes, **kwargs)
    if pretrained:
        state_dict = load_state_dict_from_url(model_urls['fasterrcnn_resnet50_fpn_coco'],
                                              progress=progress)
        model.load_state_dict(state_dict)
    return model
```

ResNet: Deep Residual Learning for Image Recognition

FPN: Feature Pyramid Networks for Object Detection

3.3 RPN interface

First comes rpn_anchor_generator:

```python
# FasterRCNN.__init__(...)
if rpn_anchor_generator is None:
    anchor_sizes = ((32,), (64,), (128,), (256,), (512,))
    aspect_ratios = ((0.5, 1.0, 2.0),) * len(anchor_sizes)
    rpn_anchor_generator = AnchorGenerator(anchor_sizes, aspect_ratios)
```

A plain FasterRCNN only needs to feed a single feature_map into the rpn network to generate proposals. With FPN added, however, several feature_maps have to be fed into the rpn network one by one.

Next, let's look at how AnchorGenerator is implemented:

```python
class AnchorGenerator(nn.Module):
    # ......

    def generate_anchors(self, scales, aspect_ratios, dtype=torch.float32, device="cpu"):
        # type: (List[int], List[float], int, Device)  # noqa: F821
        scales = torch.as_tensor(scales, dtype=dtype, device=device)  # list of scales -> tensor
        aspect_ratios = torch.as_tensor(aspect_ratios, dtype=dtype, device=device)
        h_ratios = torch.sqrt(aspect_ratios)
        w_ratios = 1 / h_ratios

        # w_ratios[:, None] is a column vector (n x 1), scales[None, :] is a row
        # vector (1 x m); .view(-1) flattens the product, giving every anchor width.
        ws = (w_ratios[:, None] * scales[None, :]).view(-1)
        hs = (h_ratios[:, None] * scales[None, :]).view(-1)

        # Build the base anchors: (-ws, -hs) is the top-left corner and (ws, hs)
        # the bottom-right corner; dividing by 2 centers each anchor on (0, 0).
        base_anchors = torch.stack([-ws, -hs, ws, hs], dim=1) / 2
        return base_anchors.round()  # round the coordinates to whole numbers

    # Generate the base anchors used at every grid cell.
    def set_cell_anchors(self, dtype, device):
        # type: (int, Device) -> None  # noqa: F821
        # ......

        # zip(self.sizes, self.aspect_ratios) pairs the entries position by position;
        # generate_anchors is called for each pair and the results are stored in a list.
        cell_anchors = [
            self.generate_anchors(
                sizes,
                aspect_ratios,
                dtype,
                device
            )
            for sizes, aspect_ratios in zip(self.sizes, self.aspect_ratios)
        ]
        self.cell_anchors = cell_anchors
```

With the defaults given at the start of the RPN interface there are 5 anchor sizes, one per feature-map level, and 3 aspect_ratios. Each level therefore has 3 base anchors per location, which gives 15 base_anchors in total across the 5 levels:

[ -23., -11., 23., 11.] # w = h = 32, ratio = 2

[ -16., -16., 16., 16.] # w = h = 32, ratio = 1

[ -11., -23., 11., 23.] # w = h = 32, ratio = 0.5

[ -45., -23., 45., 23.] # w = h = 64, ratio = 2

[ -32., -32., 32., 32.] # w = h = 64, ratio = 1

[ -23., -45., 23., 45.] # w = h = 64, ratio = 0.5

[ -91., -45., 91., 45.] # w = h = 128, ratio = 2

[ -64., -64., 64., 64.] # w = h = 128, ratio = 1

[ -45., -91., 45., 91.] # w = h = 128, ratio = 0.5

[-181., -91., 181., 91.] # w = h = 256, ratio = 2

[-128., -128., 128., 128.] # w = h = 256, ratio = 1

[ -91., -181., 91., 181.] # w = h = 256, ratio = 0.5

[-362., -181., 362., 181.] # w = h = 512, ratio = 2

[-256., -256., 256., 256.] # w = h = 512, ratio = 1

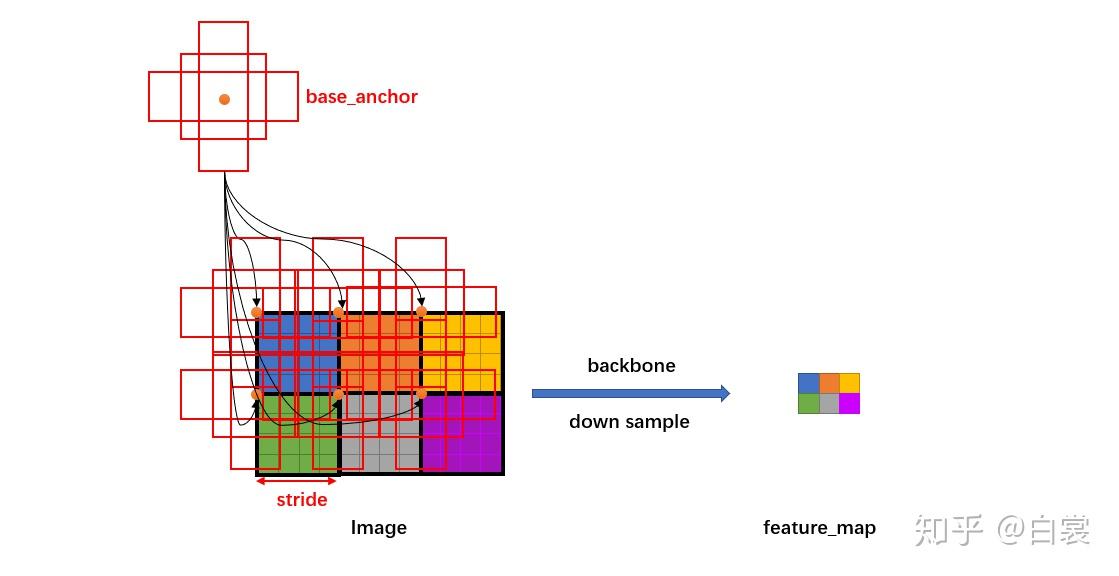

[-181., -362., 181., 362.] # w = h = 512, ratio = 0.5注意 base_anchors 的中心都是 (0,0) 点,如下图所示:
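The numbers in the list above can be reproduced without PyTorch. The following is a minimal plain-Python sketch of the same arithmetic as `generate_anchors`; the function name `generate_base_anchors` is chosen for this example, and it uses Python's `round` in place of `Tensor.round`.

```python
import math


def generate_base_anchors(scales, aspect_ratios):
    """Zero-centered anchors as [x1, y1, x2, y2], ordered ratio-major like the tensor code."""
    anchors = []
    for ratio in aspect_ratios:          # ratio is height / width
        h_ratio = math.sqrt(ratio)
        w_ratio = 1.0 / h_ratio
        for scale in scales:             # scale is sqrt(width * height)
            w = w_ratio * scale
            h = h_ratio * scale
            anchors.append([round(-w / 2), round(-h / 2), round(w / 2), round(h / 2)])
    return anchors


base_anchors_32 = generate_base_anchors((32,), (0.5, 1.0, 2.0))
base_anchors_512 = generate_base_anchors((512,), (0.5, 1.0, 2.0))
print(base_anchors_32)
print(base_anchors_512)
```

For scale 32 and ratio 0.5, the width is 32 * sqrt(2) ≈ 45.25 and the height is 32 / sqrt(2) ≈ 22.63, which rounds to the first row of the list.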

接着来看 AnchorGenerator.grid_anchors 函数:

python"># AnchorGenerator

# 用于生成在给定特征图上的所有锚点

# grid_sizes是包含每个特征图网格尺寸的列表。每个元素是一个长度为2的列表,表示特征图的高度和宽度。

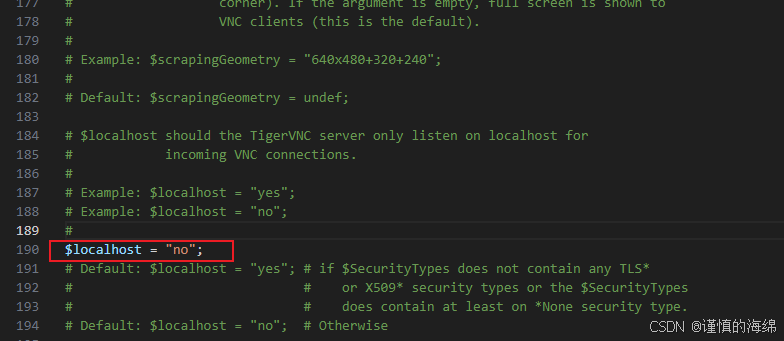

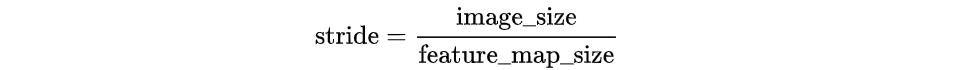

# strides是包含每个特征图对应的步幅(stride)的列表,步幅决定了每个网格单元的实际尺寸。def grid_anchors(self, grid_sizes, strides):# type: (List[List[int]], List[List[Tensor]])anchors = [] # 存储所有锚框的列表cell_anchors = self.cell_anchors # 预先计算好的基础锚框assert cell_anchors is not None # 判断cell_anchors是否为空,为空抛出错误# zip(grid_sizes, strides, cell_anchors)会并行地遍历每个特征图的尺寸(size)、步幅(stride)和基础锚框(base_anchors)。# base_anchors 是预先生成的锚框,它们会根据每个特征图的网格尺寸和步幅进行偏移。for size, stride, base_anchors in zip(grid_sizes, strides, cell_anchors):grid_height, grid_width = sizestride_height, stride_width = stridedevice = base_anchors.device# For output anchor, compute [x_center, y_center, x_center, y_center]# 计算每个网格单元的锚框中心位置shifts_x = torch.arange(0, grid_width, dtype=torch.float32, device=device) * stride_width # 水平方向上的步幅偏移shifts_y = torch.arange(0, grid_height, dtype=torch.float32, device=device) * stride_heightshift_y, shift_x = torch.meshgrid(shifts_y, shifts_x) # 生成网格的x和y坐标shift_x = shift_x.reshape(-1) shift_y = shift_y.reshape(-1)shifts = torch.stack((shift_x, shift_y, shift_x, shift_y), dim=1) # 展平为一维张量,然后得到每个网格单元的左上角和右下角坐标# For every (base anchor, output anchor) pair,# offset each zero-centered base anchor by the center of the output anchor.# 将每个网格单元的偏移量shifts加到基础锚框base_anchors生成最终的锚框。anchors.append((shifts.view(-1, 1, 4) + base_anchors.view(1, -1, 4)).reshape(-1, 4)) # shifts.view(-1, 1, 4) 和 base_anchors.view(1, -1, 4) 是为了保证广播机制能够正确应用,使得每个基础锚框都与每个网格单元的偏移量相加return anchors# 模型的前向传播函数,在目标检测中,通常用来生成各个特征图上的锚框,并返回这些锚框。def forward(self, image_list, feature_maps):# type: (ImageList, List[Tensor])grid_sizes = list([feature_map.shape[-2:] for feature_map in feature_maps]) # 每个特征图的高度和宽度image_size = image_list.tensors.shape[-2:] # 输入图像的尺寸dtype, device = feature_maps[0].dtype, feature_maps[0].device# strides 是一个列表,其中每个元素是一个包含特征图步幅的列表。# 步幅是通过将原始图像尺寸除以特征图的尺寸来计算的。strides = [[torch.tensor(image_size[0] / g[0], dtype=torch.int64, device=device),torch.tensor(image_size[1] / g[1], dtype=torch.int64, device=device)] for g in grid_sizes] 
self.set_cell_anchors(dtype, device) # 调用 set_cell_anchors 方法,生成锚框的基本尺寸(通过 generate_anchors 生成)。确保锚框的尺寸和设备类型与特征图兼容。anchors_over_all_feature_maps = self.cached_grid_anchors(grid_sizes, strides)......在之前提到,由于有 FPN 网络,所以输入 rpn 的是多个特征。为了方便介绍,以下都是以某一个特征进行描述,其他特征类似。

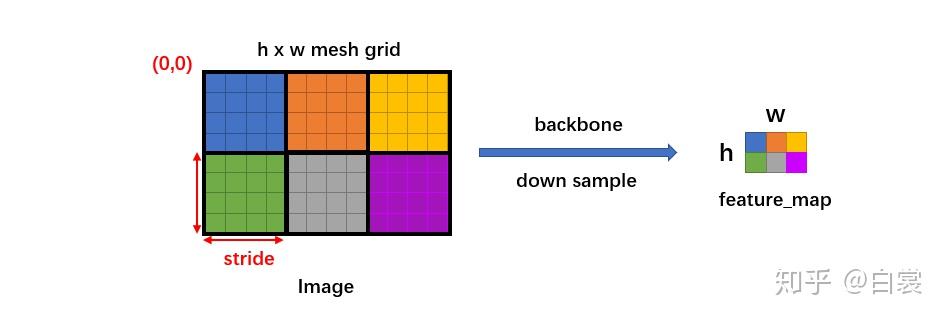

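Suppose the feature map has size ℎ×𝑤. First the downsampling factor of this feature map relative to the input image, stride, is computed (per dimension, as in AnchorGenerator.forward):

$$\text{stride} = \frac{\text{image\_size}}{\text{feature\_map\_size}}$$

Then a grid of ℎ×𝑤 points is generated, with a spacing of stride between neighbouring points, as in the figure below:

```python
# AnchorGenerator.grid_anchors(...)
shifts_x = torch.arange(0, grid_width, dtype=torch.float32, device=device) * stride_width
shifts_y = torch.arange(0, grid_height, dtype=torch.float32, device=device) * stride_height
shift_y, shift_x = torch.meshgrid(shifts_y, shifts_x)
```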

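Then the center of the base_anchors is moved from (0,0) to each grid point, so that every grid point carries one full set of base_anchors. The current feature_map now has a large number of anchors.

One point deserves emphasis: stride is the downsampling factor of the feature_map, i.e. the distance in input pixels between neighbouring feature_map points, so the network cannot predict boxes on a grid denser than the feature_map itself. That is why anchors are placed only at the grid points (conversely, an anchor placed between two grid points would correspond to half a point of the feature_map, which makes no sense).

```python
# AnchorGenerator.grid_anchors(...)
anchors.append((shifts.view(-1, 1, 4) + base_anchors.view(1, -1, 4)).reshape(-1, 4))
```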
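To make the broadcasting in that line concrete, here is a plain-Python sketch with explicit loops. It assumes one stride for both axes and a tiny 2x3 grid; `grid_anchors` here is a stand-alone function written for this example, not the method above.

```python
def grid_anchors(grid_height, grid_width, stride, base_anchors):
    """Place every base anchor at every grid point.

    Ordering matches the tensor code: rows (y) outermost, then columns (x),
    then the base anchors innermost.
    """
    anchors = []
    for y in range(grid_height):
        for x in range(grid_width):
            shift_x, shift_y = x * stride, y * stride
            for x1, y1, x2, y2 in base_anchors:
                anchors.append((x1 + shift_x, y1 + shift_y, x2 + shift_x, y2 + shift_y))
    return anchors


base = [(-23, -11, 23, 11), (-16, -16, 16, 16), (-11, -23, 11, 23)]
all_anchors = grid_anchors(grid_height=2, grid_width=3, stride=16, base_anchors=base)
print(len(all_anchors))
print(all_anchors[0], all_anchors[3], all_anchors[9])
```

A 2x3 grid with 3 base anchors yields 18 anchors; index 3 is the first base anchor moved one cell to the right, and index 9 is the first base anchor moved one cell down.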

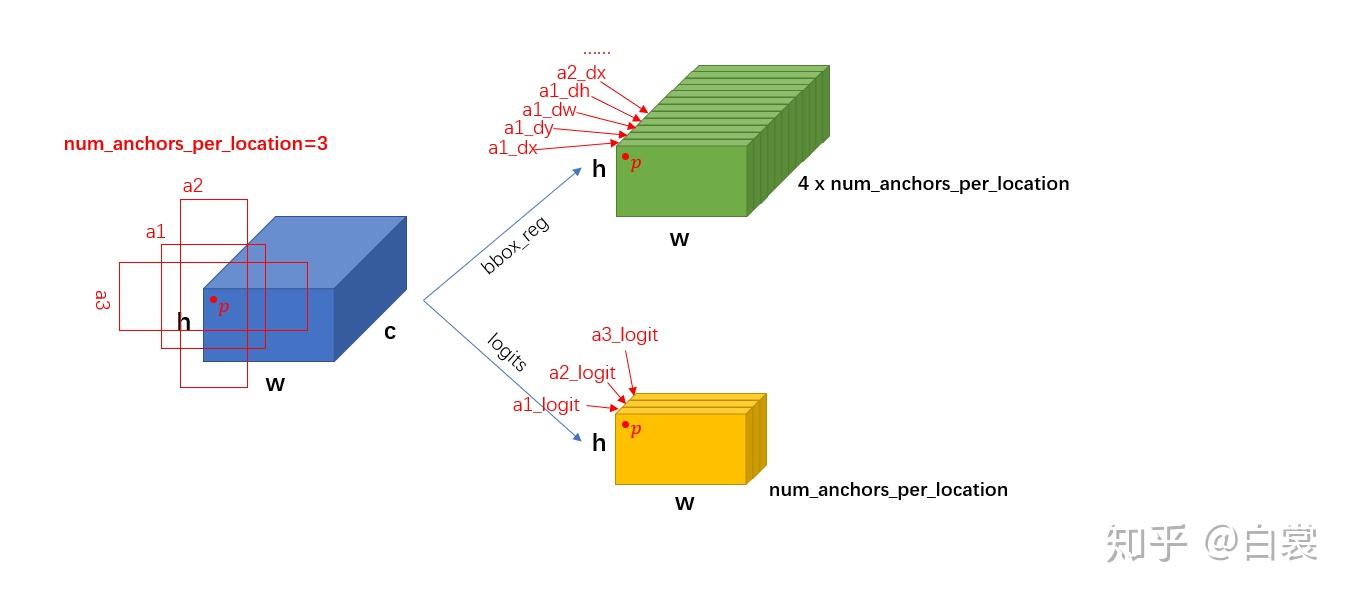

Once the anchors are placed, the network has to be adapted so that its output can tell whether each anchor contains an object, and also provides the 4 values (𝑑𝑥,𝑑𝑦,𝑑𝑤,𝑑ℎ) needed for bounding box regression.

```python
class RPNHead(nn.Module):
    def __init__(self, in_channels, num_anchors):
        super(RPNHead, self).__init__()
        # 3x3 convolution
        self.conv = nn.Conv2d(in_channels, in_channels, kernel_size=3, stride=1, padding=1)
        # 1x1 convolution whose output cls_logits says whether each anchor contains an object
        self.cls_logits = nn.Conv2d(in_channels, num_anchors, kernel_size=1, stride=1)
        # 1x1 convolution giving the 4 box regression values of every anchor at each point
        self.bbox_pred = nn.Conv2d(in_channels, num_anchors * 4, kernel_size=1, stride=1)

    # x: a list of feature maps, usually from different layers (or scales).
    # Each feature is a tensor of shape [batch_size, in_channels, height, width].
    def forward(self, x):
        logits = []
        bbox_reg = []
        for feature in x:
            t = F.relu(self.conv(feature))  # convolution followed by the ReLU non-linearity
            logits.append(self.cls_logits(t))  # objectness: foreground (object) or background per anchor
            bbox_reg.append(self.bbox_pred(t))  # box regression: coordinate offsets per anchor
        return logits, bbox_reg
```

```python
# RPNHead.__init__(...)
self.cls_logits = nn.Conv2d(in_channels, num_anchors, kernel_size=1, stride=1)
self.bbox_pred = nn.Conv2d(in_channels, num_anchors * 4, kernel_size=1, stride=1)
```

The process above covers only a single feature_map. For the several feature_maps of different sizes produced by the FPN network, each one goes through the same steps: compute the stride and the grid, then place the anchors. When this is done we have a dense set of anchors of all kinds.
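To get a feeling for how dense this set is, the following back-of-the-envelope sketch counts the anchors for one image. The strides `(4, 8, 16, 32, 64)`, the 800x800 input and the ceiling rule for the grid size are assumptions made for this illustration; they are not taken from the code above.

```python
import math


def count_anchors(image_height, image_width, strides, anchors_per_location):
    """Total number of anchors over all levels (grid size assumed to be ceil(size / stride))."""
    total = 0
    for stride in strides:
        grid_h = math.ceil(image_height / stride)
        grid_w = math.ceil(image_width / stride)
        total += grid_h * grid_w * anchors_per_location
    return total


total_anchors = count_anchors(800, 800, strides=(4, 8, 16, 32, 64), anchors_per_location=3)
print(total_anchors)
```

Under these assumptions the grids are 200, 100, 50, 25 and 13 points on a side, so a single image already has well over one hundred thousand anchors.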

Next comes the RegionProposalNetwork class:

```python
# FasterRCNN.__init__(...)
rpn_pre_nms_top_n = dict(training=rpn_pre_nms_top_n_train, testing=rpn_pre_nms_top_n_test)
rpn_post_nms_top_n = dict(training=rpn_post_nms_top_n_train, testing=rpn_post_nms_top_n_test)

# rpn_anchor_generator generates the anchors
# rpn_head turns each feature_map into cls_logits + bbox_pred
rpn = RegionProposalNetwork(
    rpn_anchor_generator, rpn_head,
    rpn_fg_iou_thresh, rpn_bg_iou_thresh,
    rpn_batch_size_per_image, rpn_positive_fraction,
    rpn_pre_nms_top_n, rpn_post_nms_top_n, rpn_nms_thresh)
```

The role of the RegionProposalNetwork class is:

- test stage: find the anchors that contain an object, apply box regression to generate proposals, then run NMS

- train stage: in addition to the above, compute the rpn loss
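The "box regression" step turns an anchor plus the predicted (dx, dy, dw, dh) into a proposal. Below is a minimal sketch of the standard Faster R-CNN decoding for one box. It assumes unit regression weights and leaves out the clamping of large dw/dh values; `decode_box` is a name made up for this example.

```python
import math


def decode_box(anchor, deltas):
    """Apply (dx, dy, dw, dh) to an anchor given as (x1, y1, x2, y2)."""
    x1, y1, x2, y2 = anchor
    dx, dy, dw, dh = deltas
    width, height = x2 - x1, y2 - y1
    ctr_x, ctr_y = x1 + 0.5 * width, y1 + 0.5 * height

    # dx, dy move the center in units of the anchor size;
    # dw, dh rescale the width and height exponentially.
    pred_ctr_x = dx * width + ctr_x
    pred_ctr_y = dy * height + ctr_y
    pred_w = math.exp(dw) * width
    pred_h = math.exp(dh) * height

    return (pred_ctr_x - 0.5 * pred_w, pred_ctr_y - 0.5 * pred_h,
            pred_ctr_x + 0.5 * pred_w, pred_ctr_y + 0.5 * pred_h)


unchanged = decode_box((0.0, 0.0, 10.0, 20.0), (0.0, 0.0, 0.0, 0.0))
shifted = decode_box((0.0, 0.0, 10.0, 20.0), (0.5, 0.0, 0.0, 0.0))
print(unchanged, shifted)
```

All-zero deltas leave the anchor unchanged, and dx = 0.5 moves it to the right by half its width.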

```python
class RegionProposalNetwork(torch.nn.Module):
    # ......

    def forward(self, images, features, targets=None):
        features = list(features.values())
        # Find the anchors that contain objects and regress them into proposals.
        objectness, pred_bbox_deltas = self.head(features)
        anchors = self.anchor_generator(images, features)

        # anchors holds one tensor per image, so this is the number of images.
        num_images = len(anchors)
        # Shape of the objectness map of the first image at each feature level:
        # (anchors per location, height, width).
        num_anchors_per_level_shape_tensors = [o[0].shape for o in objectness]
        # Total number of anchors on each feature level.
        num_anchors_per_level = [s[0] * s[1] * s[2] for s in num_anchors_per_level_shape_tensors]
        # Concatenate the predictions of all levels.
        objectness, pred_bbox_deltas = \
            concat_box_prediction_layers(objectness, pred_bbox_deltas)

        # apply pred_bbox_deltas to anchors to obtain the decoded proposals
        # note that we detach the deltas because Faster R-CNN do not backprop through
        # the proposals
        # (detach() means no gradient flows back through this decoding step)
        proposals = self.box_coder.decode(pred_bbox_deltas.detach(), anchors)
        # Reshape so that each image has its own set of proposals,
        # each with 4 coordinates (x1, y1, x2, y2).
        proposals = proposals.view(num_images, -1, 4)
        # Sort by objectness score in descending order and run NMS to get the proposal boxes.
        boxes, scores = self.filter_proposals(proposals, objectness, images.image_sizes, num_anchors_per_level)

        losses = {}
        # In training mode, compute the losses of cls_logits and bbox_pred.
        if self.training:
            assert targets is not None
            # Match anchors with the ground truth to get labels and matched gt boxes.
            labels, matched_gt_boxes = self.assign_targets_to_anchors(anchors, targets)
            # Encode the matched gt boxes into regression targets.
            regression_targets = self.box_coder.encode(matched_gt_boxes, anchors)
            # Compute the loss values.
            loss_objectness, loss_rpn_box_reg = self.compute_loss(
                objectness, pred_bbox_deltas, labels, regression_targets)
            losses = {
                "loss_objectness": loss_objectness,
                "loss_rpn_box_reg": loss_rpn_box_reg,
            }
        return boxes, losses
```

3.4 ROI_Heads interface

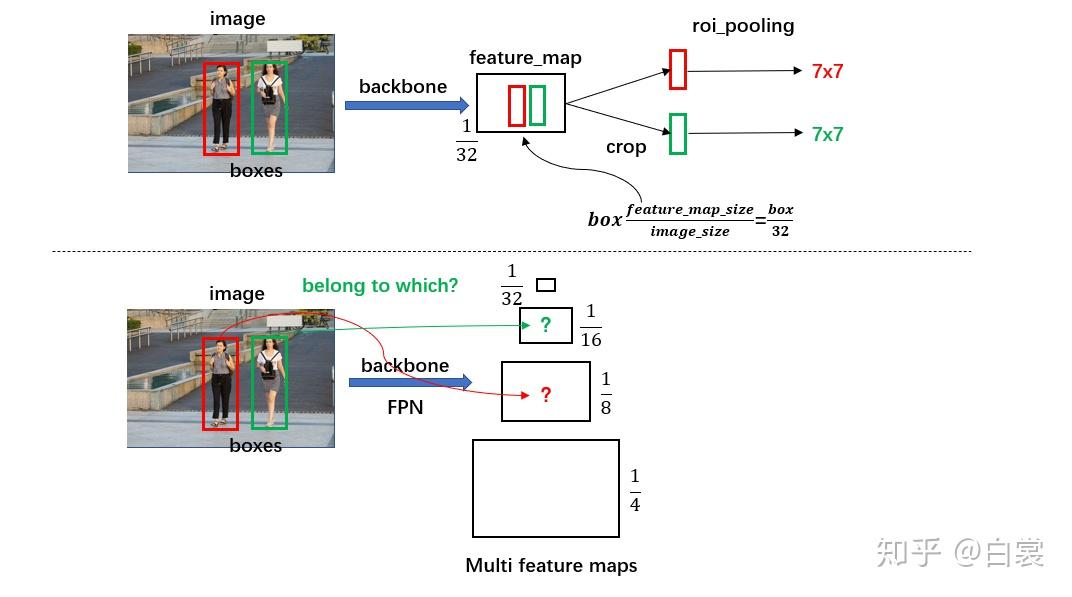

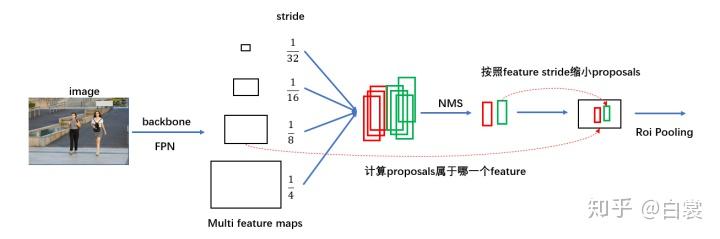

After RegionProposalNetwork the boxes have been generated. The next step is to extract the features inside those boxes with roi_pooling:

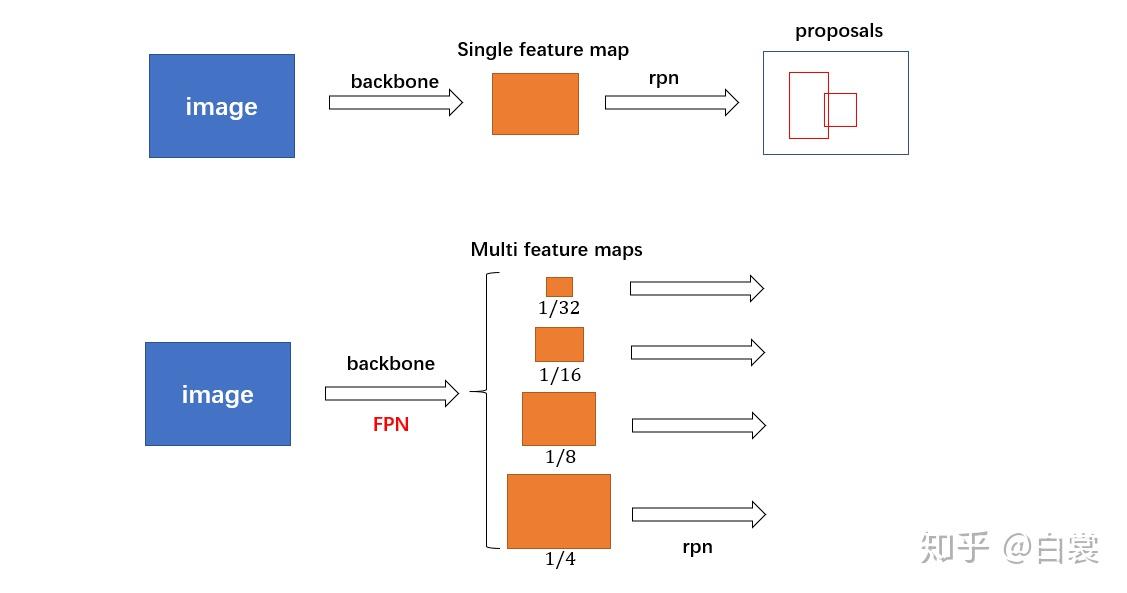

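```python
roi_heads = RoIHeads(
    # Box
    box_roi_pool, box_head, box_predictor,
    box_fg_iou_thresh, box_bg_iou_thresh,
    box_batch_size_per_image, box_positive_fraction,
    bbox_reg_weights,
    box_score_thresh, box_nms_thresh, box_detections_per_img)
```

One question here is how to decide which feature_map a box belongs to:

- The original FasterRCNN extracts the features of a box only from the last feature_map of the backbone;

- With FPN added, the backbone outputs several feature maps. Because the box regression in the RPN has changed the size of the boxes relative to their anchors, the feature map that each box corresponds to must be recomputed.

As shown in the figures below: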

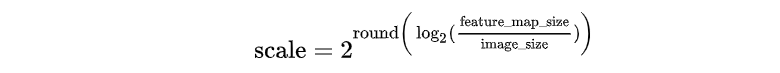

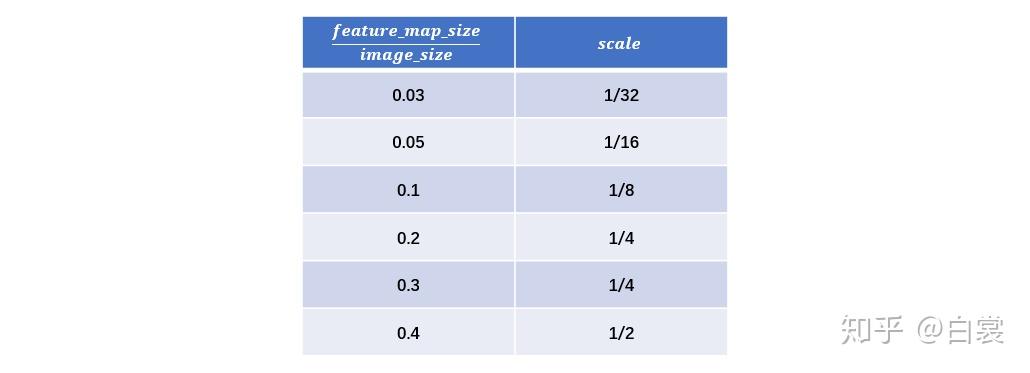

```python
class MultiScaleRoIAlign(nn.Module):
    # ......

    # Infer the scale factor of a feature map from the ratio between the
    # feature map size and original_size.
    def infer_scale(self, feature, original_size):
        # type: (Tensor, List[int])
        # assumption: the scale is of the form 2 ** (-k), with k integer
        size = feature.shape[-2:]
        possible_scales = torch.jit.annotate(List[float], [])
        # zip(size, original_size) pairs the feature map size with the image size
        for s1, s2 in zip(size, original_size):
            approx_scale = float(s1) / float(s2)
            scale = 2 ** float(torch.tensor(approx_scale).log2().round())
            possible_scales.append(scale)
        # Both dimensions must give the same scale factor, since height and
        # width are expected to be downsampled by the same amount.
        assert possible_scales[0] == possible_scales[1]
        return possible_scales[0]

    # Compute the scale factor of every feature map and, from those, the range
    # of feature levels. Input images of different sizes are handled by taking
    # the largest height and width.
    def setup_scales(self, features, image_shapes):
        # type: (List[Tensor], List[Tuple[int, int]])
        assert len(image_shapes) != 0
        max_x = 0
        max_y = 0
        for shape in image_shapes:
            max_x = max(shape[0], max_x)
            max_y = max(shape[1], max_y)
        original_input_shape = (max_x, max_y)

        # infer_scale derives each feature map's scale factor from the ratio
        # between the feature map size and the input image size.
        scales = [self.infer_scale(feat, original_input_shape) for feat in features]
        # get the levels in the feature map by leveraging the fact that the network always
        # downsamples by a factor of 2 at each level.
        # The smallest and largest levels come from scales[0] and scales[-1].
        lvl_min = -torch.log2(torch.tensor(scales[0], dtype=torch.float32)).item()
        lvl_max = -torch.log2(torch.tensor(scales[-1], dtype=torch.float32)).item()
        self.scales = scales
        # initLevelMapper builds the mapper that assigns boxes to feature levels.
        self.map_levels = initLevelMapper(int(lvl_min), int(lvl_max))
```

First the scale of every feature_map relative to the network input image is computed. The infer_scale function uses the following approximation, where $s_{\text{feature}}$ and $s_{\text{image}}$ are the sizes of the feature_map and the image along one dimension:

$$\text{scale} = 2^{\operatorname{round}\left(\log_2\left(s_{\text{feature}} / s_{\text{image}}\right)\right)}$$

This formula is a simple mapping that snaps the size ratio between a feature_map and the image to the nearest scale of the form $2^{-k}$ with integer $k$.

For FasterRCNN with FPN, for example, the actual values are [1/4, 1/8, 1/16, 1/32].
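The rounding can be checked with a few numbers. This is a plain-Python sketch of the same formula using `math.log2`; the sizes (an 800-pixel image side and feature sides of 200, 100, 50, 25 and 13) are assumed values for illustration.

```python
import math


def infer_scale(feature_size, image_size):
    """Snap feature_size / image_size to the nearest power of two."""
    approx_scale = feature_size / image_size
    return 2.0 ** round(math.log2(approx_scale))


scales = [infer_scale(s, 800) for s in (200, 100, 50, 25)]
levels = [-math.log2(s) for s in scales]

# 13 / 800 is not an exact power of two, but it still snaps to 1/64.
odd_scale = infer_scale(13, 800)
print(scales, levels, odd_scale)
```

The first and last levels obtained this way are 2 and 5, which is where the lvl_min and lvl_max values used by the level mapper come from.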

之后设置 lvl_min=2 和 lvl_max=5:

python"># MultiScaleRoIAlign.setup_scales(...)

# get the levels in the feature map by leveraging the fact that the network always

# downsamples by a factor of 2 at each level.

lvl_min = -torch.log2(torch.tensor(scales[0], dtype=torch.float32)).item()

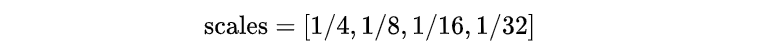

lvl_max = -torch.log2(torch.tensor(scales[-1], dtype=torch.float32)).item()接着使用 FPN 原文中的公式计算 box 所在 anchor(其中 𝑘0=4 , 𝑤ℎ 为 box 面积):

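```python
# Maps each RoI to a feature level. With multi-scale feature maps, every
# proposal is assigned a level according to its size (i.e. its area).
class LevelMapper(object):
    def __init__(self, k_min, k_max, canonical_scale=224, canonical_level=4, eps=1e-6):
        self.k_min = k_min  # lvl_min = 2
        self.k_max = k_max  # lvl_max = 5
        self.s0 = canonical_scale  # 224, the reference scale
        # 4, the level that corresponds to the reference scale s0:
        # a box of area 224*224 is assigned to level 4
        self.lvl0 = canonical_level
        self.eps = eps  # small constant that guards against floating-point error before the floor

    def __call__(self, boxlists):
        s = torch.sqrt(torch.cat([box_area(boxlist) for boxlist in boxlists]))

        # Eqn.(1) in FPN paper
        # torch.log2(s / self.s0): log of the ratio between the box scale and the reference scale (224)
        # self.lvl0 + ...: add the reference level (lvl0, 4 by default) to get the target level
        # torch.floor(...): round down so that the level is an integer
        target_lvls = torch.floor(self.lvl0 + torch.log2(s / self.s0) + torch.tensor(self.eps, dtype=s.dtype))
        target_lvls = torch.clamp(target_lvls, min=self.k_min, max=self.k_max)
        return (target_lvls.to(torch.int64) - self.k_min).to(torch.int64)
```

Here torch.clamp(input, min, max) → Tensor truncates the values so that they cannot go out of range:

$$y_i = \min(\max(x_i, \text{min}), \text{max})$$

As we can see, the LevelMapper class assigns boxes of different sizes to a particular feature_map, as in the figure below. After that, roi_pooling is carried out following the flow in Figure 11.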
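A few concrete boxes make the mapping easier to follow. The sketch below repeats the `LevelMapper` arithmetic for a single box in plain Python; `map_level` is a stand-alone function written for this example, with the same default values as the class above.

```python
import math


def map_level(box, k_min=2, k_max=5, canonical_scale=224, canonical_level=4, eps=1e-6):
    """Return the index (0-based, relative to k_min) of the feature map for one box."""
    x1, y1, x2, y2 = box
    s = math.sqrt((x2 - x1) * (y2 - y1))                     # sqrt of the box area
    target = math.floor(canonical_level + math.log2(s / canonical_scale) + eps)
    target = min(max(target, k_min), k_max)                  # same effect as torch.clamp
    return target - k_min


boxes = [(0, 0, 32, 32), (0, 0, 112, 112), (0, 0, 224, 224), (0, 0, 1000, 1000)]
indices = [map_level(b) for b in boxes]
print(indices)
```

The 32x32 box would land on level 1 and the 1000x1000 box on level 6, so both are clamped into the range 2 to 5. Small boxes therefore use the highest-resolution map and large boxes the coarsest one.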

在确定 proposal box 所属 FPN 中哪个 feature_map 之后,接着来看 MultiScaleRoIAlign 如何进行 roi_pooling 操作:

python">class MultiScaleRoIAlign(nn.Module):......def forward(self, x, boxes, image_shapes):# type: (Dict[str, Tensor], List[Tensor], List[Tuple[int, int]]) -> Tensor# 过滤特征图x_filtered = []for k, v in x.items(): # 遍历 x 字典中的所有键值对'''self.featmap_names 是一个包含所需特征图名称的列表,只有这些特征图才会被筛选出来并加入 x_filtered 列表中。这样可以确保只使用特定的特征图进行 RoI Align'''if k in self.featmap_names:x_filtered.append(v)num_levels = len(x_filtered)rois = self.convert_to_roi_format(boxes) # 转换格式if self.scales is None:self.setup_scales(x_filtered, image_shapes)scales = self.scalesassert scales is not None# 没有 FPN 时,只有1/32的最后一个feature_map进行roi_poolingif num_levels == 1:return roi_align(x_filtered[0], rois,output_size=self.output_size,spatial_scale=scales[0],sampling_ratio=self.sampling_ratio)# 有 FPN 时,有4个feature_map进行roi_pooling# 首先按照mapper = self.map_levels assert mapper is not Nonelevels = mapper(boxes) # 表示box所属哪个feature map''' 初始化张量结果 '''num_rois = len(rois)num_channels = x_filtered[0].shape[1]dtype, device = x_filtered[0].dtype, x_filtered[0].device# result是一个零初始化的张量,存储每个ROI在各个特征图层上的提取结果result = torch.zeros((num_rois, num_channels,) + self.output_size,dtype=dtype,device=device,)''' 在多个层次上进行roi align '''tracing_results = []for level, (per_level_feature, scale) in enumerate(zip(x_filtered, scales)):# 在所属feature map中进行roi_poolingidx_in_level = torch.nonzero(levels == level).squeeze(1) rois_per_level = rois[idx_in_level]# 从每个特征图层提取感兴趣区域,并将结果填充到result张量中。若是跟踪模式,则保存每个层的结果result_idx_in_level = roi_align(per_level_feature, rois_per_level,output_size=self.output_size,spatial_scale=scale, sampling_ratio=self.sampling_ratio)if torchvision._is_tracing():tracing_results.append(result_idx_in_level.to(dtype))else:result[idx_in_level] = result_idx_in_level# 合并多个层次的结果(ONNX跟踪模式下)if torchvision._is_tracing():result = _onnx_merge_levels(levels, tracing_results)return result在 MultiScaleRoIAlign.forward(...) 函数可以看到:

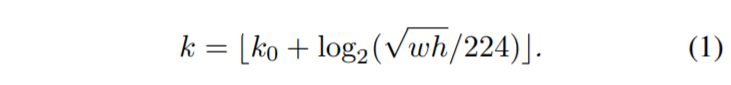

- 没有 FPN 时,只有1/32的最后一个 feature_map 进行 roi_pooling

python"> if num_levels == 1:return roi_align(x_filtered[0], rois,output_size=self.output_size,spatial_scale=scales[0],sampling_ratio=self.sampling_ratio)- 有 FPN 时,有4个 [1/4,1/8,1/16,1/32] 的 feature maps 参加计算。首先计算每个每个 box 所属哪个 feature map ,再在所属 feature map 进行 roi_pooling

python"> # 首先计算每个每个 box 所属哪个 feature maplevels = mapper(boxes) ......# 再在所属 feature map 进行 roi_pooling# 即 idx_in_level = torch.nonzero(levels == level).squeeze(1)for level, (per_level_feature, scale) in enumerate(zip(x_filtered, scales)):idx_in_level = torch.nonzero(levels == level).squeeze(1)rois_per_level = rois[idx_in_level]result_idx_in_level = roi_align(per_level_feature, rois_per_level,output_size=self.output_size,spatial_scale=scale, sampling_ratio=self.sampling_ratio)之后就获得了所谓的 7x7 特征(在 FasterRCNN.__init__(...) 中设置了 output_size=7)。需要说明,原始 FasterRCNN 应该是使用 roi_pooling,但是这里使用 roi_align 代替以提升检测器性能。

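The arguments passed to torchvision.ops.roi_align are:

- per_level_feature is one of the feature_maps output by FPN

- rois_per_level holds all proposal boxes assigned to that feature_map (from the level computed earlier)

- output_size=7 means the output is 7x7

- spatial_scale is the scale of the feature_map relative to the input image (e.g. 1/4, 1/8, ...)

- sampling_ratio is the sampling rate of roi_align; interested readers can consult the MaskRCNN paper

The next step turns these features into the final class information for each box (e.g. person, cat, dog, car) and a further round of box regression.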

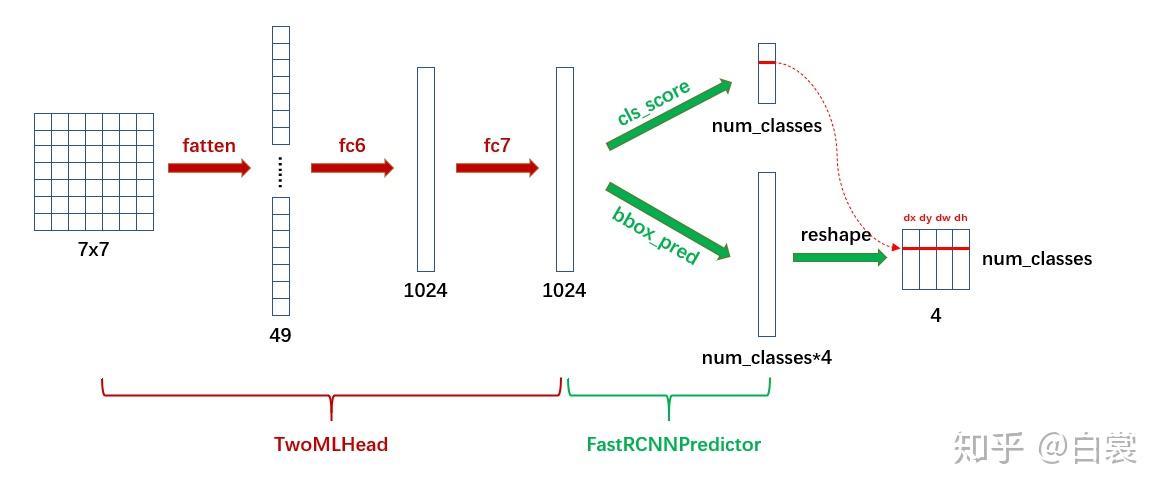

```python
class TwoMLPHead(nn.Module):
    def __init__(self, in_channels, representation_size):
        super(TwoMLPHead, self).__init__()
        # First fully connected layer: in_channels -> representation_size
        self.fc6 = nn.Linear(in_channels, representation_size)
        # Second fully connected layer: representation_size -> representation_size
        self.fc7 = nn.Linear(representation_size, representation_size)

    def forward(self, x):
        x = x.flatten(start_dim=1)  # flatten everything except the batch dimension
        x = F.relu(self.fc6(x))  # activation
        x = F.relu(self.fc7(x))
        return x


class FastRCNNPredictor(nn.Module):
    def __init__(self, in_channels, num_classes):
        super(FastRCNNPredictor, self).__init__()
        self.cls_score = nn.Linear(in_channels, num_classes)
        self.bbox_pred = nn.Linear(in_channels, num_classes * 4)

    def forward(self, x):
        if x.dim() == 4:
            assert list(x.shape[2:]) == [1, 1]
        x = x.flatten(start_dim=1)
        scores = self.cls_score(x)
        bbox_deltas = self.bbox_pred(x)
        return scores, bbox_deltas
```

First TwoMLPHead passes the 7x7 features through two fully connected layers to get a 1024-dimensional vector. Then FastRCNNPredictor turns the 1024-dimensional feature of each box into cls_score (num_classes values) and bbox_pred (num_classes × 4 values).

Clearly, applying softmax to cls_score gives the class probabilities, which determine the class of the box. Once the class is known, the 4 values (𝑑𝑥,𝑑𝑦,𝑑𝑤,𝑑ℎ) for that class in bbox_pred are the offsets needed for the second bounding box regression.
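The selection described in the paragraph above can be sketched for a single box in plain Python. This simplifies the choice to one argmax over the softmax output; the helper names and the toy numbers (3 classes) are made up for illustration and do not reproduce the library's post-processing.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def select_class_deltas(cls_score, bbox_pred):
    """Pick the most likely class and the 4 regression values stored for it."""
    probs = softmax(cls_score)
    class_id = max(range(len(probs)), key=lambda i: probs[i])
    deltas = bbox_pred[4 * class_id: 4 * class_id + 4]
    return class_id, probs[class_id], deltas


# Toy example: 3 classes, so bbox_pred has 3 * 4 values.
cls_score = [0.1, 2.0, 0.3]
bbox_pred = [0.0] * 4 + [0.1, 0.2, 0.3, 0.4] + [0.5] * 4
class_id, prob, deltas = select_class_deltas(cls_score, bbox_pred)
print(class_id, prob, deltas)
```

Because bbox_pred stores 4 values per class, the deltas of class `c` sit at positions `4*c` to `4*c + 3`.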

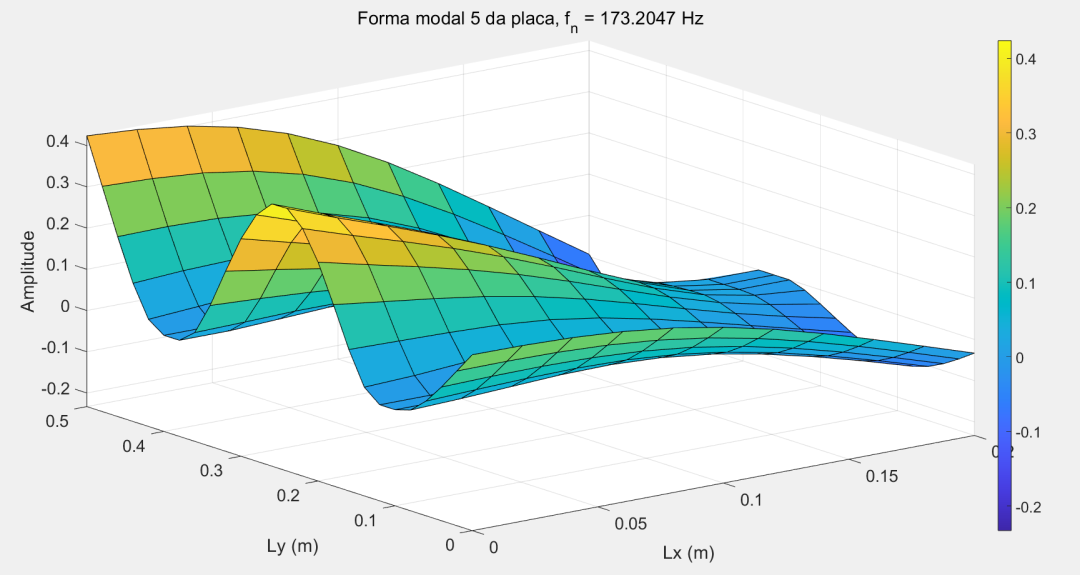

简单的说,带有FPN的FasterRCNN网络结构可以用下图表示:

3 关于训练

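The FasterRCNN model has loss functions in two places:

- In the RegionProposalNetwork class, which must decide whether an anchor contains an object in order to generate proposals; a loss is computed here

- In the RoIHeads class, where the cls_score and bbox_pred produced by the fully connected layers after roi_pooling are trained; a loss is computed here as well

4.1 Loss function in the RPN

First, the assign_targets_to_anchors function of the RegionProposalNetwork class.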

```python
def assign_targets_to_anchors(self, anchors, targets):
    # type: (List[Tensor], List[Dict[str, Tensor]])
    labels = []
    matched_gt_boxes = []
    for anchors_per_image, targets_per_image in zip(anchors, targets):
        gt_boxes = targets_per_image["boxes"]

        if gt_boxes.numel() == 0:
            # Background image (negative example)
            device = anchors_per_image.device
            matched_gt_boxes_per_image = torch.zeros(anchors_per_image.shape, dtype=torch.float32, device=device)  # matched gt boxes
            labels_per_image = torch.zeros((anchors_per_image.shape[0],), dtype=torch.float32, device=device)  # labels
        else:
            match_quality_matrix = box_ops.box_iou(gt_boxes, anchors_per_image)  # IoU matrix
            # proposal_matcher returns, for every anchor, the index of its best gt box
            matched_idxs = self.proposal_matcher(match_quality_matrix)
            # get the targets corresponding GT for each proposal
            # NB: need to clamp the indices because we can have a single
            # GT in the image, and matched_idxs can be -2, which goes
            # out of bounds (min=0 keeps negative values from indexing out of range)
            matched_gt_boxes_per_image = gt_boxes[matched_idxs.clamp(min=0)]

            # Assign the labels: 1 (positive) for a valid match, otherwise 0 (negative)
            labels_per_image = matched_idxs >= 0
            labels_per_image = labels_per_image.to(dtype=torch.float32)

            # Background (negative examples)
            bg_indices = matched_idxs == self.proposal_matcher.BELOW_LOW_THRESHOLD
            labels_per_image[bg_indices] = torch.tensor(0.0)

            # discard indices that are between thresholds (in-between samples)
            inds_to_discard = matched_idxs == self.proposal_matcher.BETWEEN_THRESHOLDS
            labels_per_image[inds_to_discard] = torch.tensor(-1.0)

        # Collect the labels and the matched gt boxes.
        labels.append(labels_per_image)
        matched_gt_boxes.append(matched_gt_boxes_per_image)
    return labels, matched_gt_boxes
```

When the image has no gt_boxes, all anchors are set to background (i.e. label 0):

```python
if gt_boxes.numel() == 0:
    # Background image (negative example)
    device = anchors_per_image.device
    matched_gt_boxes_per_image = torch.zeros(anchors_per_image.shape, dtype=torch.float32, device=device)
    labels_per_image = torch.zeros((anchors_per_image.shape[0],), dtype=torch.float32, device=device)
```

When the image has gt_boxes, the IOU between each anchor and the gt_boxes is computed:

- Anchors with IOU < 0.3 are background, with label 0

```python
labels_per_image[bg_indices] = torch.tensor(0.0)
```

- Anchors with IOU > 0.7 are foreground, with label 1

```python
labels_per_image = matched_idxs >= 0
```

- Anchors with 0.3 < IOU < 0.7 are ignored (label -1) and take no part in training
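The thresholding rule in the list above can be tried out on a few boxes. This plain-Python sketch labels one anchor from its best IoU with the gt boxes; it covers only the two thresholds and leaves out any other matching rules the real matcher may apply. `box_iou` and `rpn_label` are written for this example.

```python
def box_iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    return inter / (area_a + area_b - inter)


def rpn_label(anchor, gt_boxes, fg_thresh=0.7, bg_thresh=0.3):
    """1.0 = foreground, 0.0 = background, -1.0 = ignored during training."""
    best_iou = max(box_iou(anchor, gt) for gt in gt_boxes)
    if best_iou >= fg_thresh:
        return 1.0
    if best_iou < bg_thresh:
        return 0.0
    return -1.0


gt = [(0.0, 0.0, 10.0, 10.0)]
anchors = [(0.0, 0.0, 10.0, 10.0),    # IoU 1.0
           (0.0, 0.0, 10.0, 5.0),     # IoU 0.5
           (20.0, 20.0, 30.0, 30.0)]  # IoU 0.0
labels = [rpn_label(a, gt) for a in anchors]
print(labels)
```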

The default parameters of the FasterRCNN class's __init__ function show this clearly:

```python
rpn_fg_iou_thresh=0.7, rpn_bg_iou_thresh=0.3,
```

4.2 Loss in ROI Pooling

Next, the assign_targets_to_proposals function of the RoIHeads class.

```python
def assign_targets_to_proposals(self, proposals, gt_boxes, gt_labels):
    # type: (List[Tensor], List[Tensor], List[Tensor])
    matched_idxs = []
    labels = []
    for proposals_in_image, gt_boxes_in_image, gt_labels_in_image in zip(proposals, gt_boxes, gt_labels):

        if gt_boxes_in_image.numel() == 0:
            # Background image
            device = proposals_in_image.device
            clamped_matched_idxs_in_image = torch.zeros(
                (proposals_in_image.shape[0],), dtype=torch.int64, device=device
            )
            labels_in_image = torch.zeros(
                (proposals_in_image.shape[0],), dtype=torch.int64, device=device
            )
        else:
            # set to self.box_similarity when https://github.com/pytorch/pytorch/issues/27495 lands
            match_quality_matrix = box_ops.box_iou(gt_boxes_in_image, proposals_in_image)
            matched_idxs_in_image = self.proposal_matcher(match_quality_matrix)

            clamped_matched_idxs_in_image = matched_idxs_in_image.clamp(min=0)

            labels_in_image = gt_labels_in_image[clamped_matched_idxs_in_image]
            labels_in_image = labels_in_image.to(dtype=torch.int64)

            # Label background (below the low threshold)
            bg_inds = matched_idxs_in_image == self.proposal_matcher.BELOW_LOW_THRESHOLD
            labels_in_image[bg_inds] = torch.tensor(0)

            # Label ignore proposals (between low and high thresholds)
            ignore_inds = matched_idxs_in_image == self.proposal_matcher.BETWEEN_THRESHOLDS
            labels_in_image[ignore_inds] = torch.tensor(-1)  # -1 is ignored by sampler

        matched_idxs.append(clamped_matched_idxs_in_image)
        labels.append(labels_in_image)
    return matched_idxs, labels
```

Unlike assign_targets_to_anchors, this function uses the settings:

```python
box_fg_iou_thresh=0.5, box_bg_iou_thresh=0.5,
```

- Proposals with IOU > 0.5 are foreground, labelled with the corresponding class_id

```python
labels_in_image = gt_labels_in_image[clamped_matched_idxs_in_image]
```

This differs from the RPN case: RegionProposalNetwork only needs to decide whether an anchor contains an object, so the positive label is 1; RoIHeads must determine the specific class of a proposal, so the positive label is the actual class_id.

- Proposals with IOU < 0.5 are background, with label 0

```python
labels_in_image[bg_inds] = torch.tensor(0)
```
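For comparison with the RPN case, here is a plain-Python sketch of the RoIHeads labelling for one proposal. Because both thresholds are 0.5, there is no ignored band: a proposal takes either the class_id of its best-matching gt box or 0. The helper names and the toy boxes are made up for illustration.

```python
def box_iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    return inter / (area_a + area_b - inter)


def roi_label(proposal, gt_boxes, gt_labels, iou_thresh=0.5):
    """Return the class_id of the best-matching gt box, or 0 for background."""
    ious = [box_iou(proposal, gt) for gt in gt_boxes]
    best = max(range(len(ious)), key=lambda i: ious[i])
    return gt_labels[best] if ious[best] >= iou_thresh else 0


gt_boxes = [(0.0, 0.0, 10.0, 10.0), (20.0, 20.0, 40.0, 40.0)]
gt_labels = [3, 7]
proposals = [(0.0, 0.0, 10.0, 8.0),          # overlaps the first gt box
             (22.0, 22.0, 40.0, 40.0),       # overlaps the second gt box
             (100.0, 100.0, 110.0, 110.0)]   # overlaps nothing
roi_labels = [roi_label(p, gt_boxes, gt_labels) for p in proposals]
print(roi_labels)
```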