Introduction to Whereabouts

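Whereabouts is a cluster-wide IPAM (IP Address Management) CNI plugin that is well suited to NetworkAttachmentDefinition scenarios. As covered earlier, Kubernetes assigns Pod addresses through an IPAM plugin; common IPAM types are host-local and calico-ipam. Whereabouts is designed as a replacement for host-local. host-local keeps its allocation state on each node's local disk, so the same range used on several nodes can hand out duplicate addresses. Whereabouts instead records the pools and IP addresses already allocated in a cluster-wide datastore (in this article, Kubernetes custom resources). Address ranges are normally defined per NAD (NetworkAttachmentDefinition): one NAD describes the IP range, gateway and related settings of a subnet. Whereabouts is usually combined with CNI plugins such as bridge, ipvlan and macvlan, where it is responsible for allocating and managing the IP addresses.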
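To make the host-local vs. cluster-wide distinction concrete, here is a toy Python model. It is not whereabouts code, and `ToyAllocator` is a hypothetical name. Each allocator hands out the first free address of a CIDR. When every node keeps private state, two nodes pick the same address. When they share one record, they do not. The model ignores concurrency, which a real cluster-wide allocator has to handle.

```python
import ipaddress


class ToyAllocator:
    """First-free allocator over one CIDR (illustration only, not whereabouts code)."""

    def __init__(self, cidr, used=None):
        self.net = ipaddress.ip_network(cidr)
        # Passing the same `used` set to several allocators models a shared datastore.
        self.used = used if used is not None else set()

    def allocate(self):
        # hosts() skips the network and broadcast addresses of an IPv4 network.
        for ip in self.net.hosts():
            if ip not in self.used:
                self.used.add(ip)
                return ip
        raise RuntimeError("range exhausted")


CIDR = "10.11.88.96/28"

# host-local style: every node keeps its own, private allocation state.
local_ips = [ToyAllocator(CIDR).allocate(), ToyAllocator(CIDR).allocate()]

# whereabouts style: both nodes consult the same cluster-wide record.
shared = set()
shared_ips = [ToyAllocator(CIDR, shared).allocate(), ToyAllocator(CIDR, shared).allocate()]

print("per-node state:", [str(ip) for ip in local_ips])
print("shared state:  ", [str(ip) for ip in shared_ips])
```

With per-node state, both allocators return 10.11.88.97. With the shared record, they return 10.11.88.97 and 10.11.88.98.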

I. Deploying whereabouts

- Download the installation files

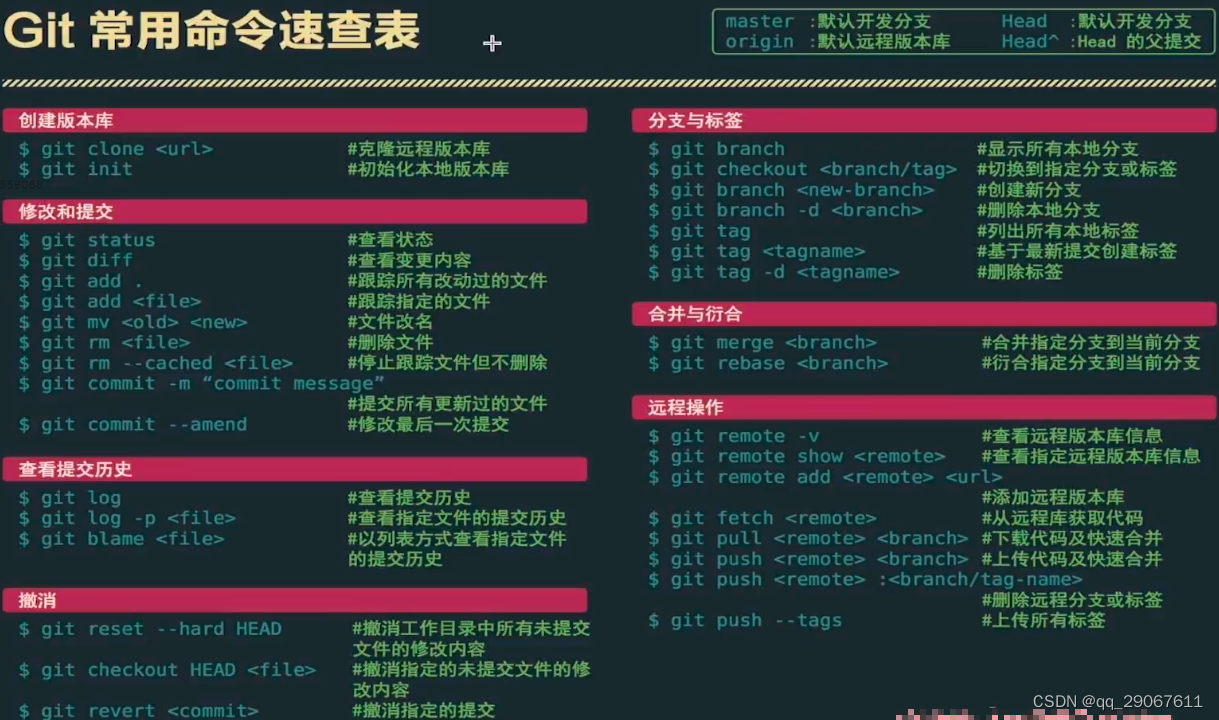

1: Clone the repository

[root@node1 ~]# git clone https://github.com/k8snetworkplumbingwg/whereabouts

Cloning into 'whereabouts'...

remote: Enumerating objects: 23528, done.

remote: Counting objects: 100% (3556/3556), done.

remote: Compressing objects: 100% (1875/1875), done.

remote: Total 23528 (delta 1811), reused 2957 (delta 1580), pack-reused 19972

Receiving objects: 100% (23528/23528), 36.48 MiB | 9.56 MiB/s, done.

Resolving deltas: 100% (11207/11207), done.

2: List the files

[root@node1 ~]# cd whereabouts/doc/crds/

[root@node1 crds]# ll

total 12

-rw-r--r-- 1 root root 2662 Nov 29 14:27 daemonset-install.yaml

-rw-r--r-- 1 root root 2566 Nov 29 14:27 whereabouts.cni.cncf.io_ippools.yaml

-rw-r--r-- 1 root root 2039 Nov 29 14:27 whereabouts.cni.cncf.io_overlappingrangeipreservations.yaml

3: Run the following commands to deploy whereabouts

[root@node1 crds]# kubectl apply -f daemonset-install.yaml

serviceaccount/whereabouts created

clusterrolebinding.rbac.authorization.k8s.io/whereabouts created

clusterrole.rbac.authorization.k8s.io/whereabouts-cni created

daemonset.apps/whereabouts created

[root@node1 crds]# kubectl apply -f whereabouts.cni.cncf.io_ippools.yaml

customresourcedefinition.apiextensions.k8s.io/ippools.whereabouts.cni.cncf.io created

[root@node1 crds]# kubectl apply -f whereabouts.cni.cncf.io_overlappingrangeipreservations.yaml

customresourcedefinition.apiextensions.k8s.io/overlappingrangeipreservations.whereabouts.cni.cncf.io created

- Check the generated CRDs

1: List the generated CRDs

[root@node1 crds]# kubectl get crd | grep whereabouts

ippools.whereabouts.cni.cncf.io 2023-11-29T06:50:47Z

overlappingrangeipreservations.whereabouts.cni.cncf.io 2023-11-29T06:50:52Z

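[root@node1 crds]#

Explanation:

overlappingrangeipreservations: every allocated IP, one object per address (used to stop the same IP from being handed out twice when ranges overlap)

ippools: the address pools, one object per range, recording which IPs in that range are already allocated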

- Check the service

1: Check that whereabouts is running. It runs as a DaemonSet, with one Pod on every node

[root@node1 ~]# kubectl get po -A -o wide | grep whereabouts

kube-system whereabouts-4sjpf 1/1 Running 0 19m 192.168.5.27 node3 <none> <none>

kube-system whereabouts-rw5d5 1/1 Running 0 19m 192.168.5.126 node2 <none> <none>

kube-system whereabouts-v8nxd 1/1 Running 0 19m 192.168.5.79 node1 <none> <none>

2: A whereabouts binary is also installed in /opt/cni/bin on every node

[root@node1 ~]# cd /opt/cni/bin/

[root@node1 bin]# ll | grep where

-rwxr-xr-x 1 root root 45792495 Nov 29 15:03 whereabouts

[root@node1 bin]#

Explanation:

The DaemonSet installs the plugin on every node and maintains the address pools.

The whereabouts plugin binary allocates the addresses.

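II. Experiments

All of the tests below were configured in a production environment.

The environment uses kube-ovn + Multus + ipvlan to give each container two network interfaces. The ipvlan interface gets its IP address from whereabouts.

- Network planning

In my environment, ipvlan runs on a bond of two physical NICs (bond_ipvl), and each sub-interface carries a different service. This test uses the 1720 sub-interface, bond_ipvl.1720.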

[root@node1 ~]# ip a | grep bond_ipvl

2: enp35s0f0: <BROADCAST,MULTICAST,SLAVE,UP,LOWER_UP> mtu 1500 qdisc mq master bond_ipvl state UP group default qlen 1000

9: enp97s0f1: <BROADCAST,MULTICAST,SLAVE,UP,LOWER_UP> mtu 1500 qdisc mq master bond_ipvl state UP group default qlen 1000

77: bond_ipvl: <BROADCAST,MULTICAST,MASTER,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

78: bond_ipvl.1520@bond_ipvl: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

79: bond_ipvl.1511@bond_ipvl: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

80: bond_ipvl.1510@bond_ipvl: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

81: bond_ipvl.1521@bond_ipvl: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

82: bond_ipvl.1522@bond_ipvl: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

207: bond_ipvl.1720@bond_ipvl: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

- Create the NAD

[root@node1 ~]# cat ipvlan1720.yaml

apiVersion: k8s.cni.cncf.io/v1
kind: NetworkAttachmentDefinition
metadata:
  name: ipvlan1720
  namespace: devops
spec:
  config: '{
      "cniVersion": "0.3.1",
      "LogFile": "/var/log/multus.log",
      "LogLevel": "debug",
      "name": "ipvlan1720",
      "type": "ipvlan",
      "master": "bond_ipvl.1720",
      "mtu": 1500,
      "ipam": {
        "type": "whereabouts",
        "datastore": "kubernetes",
        "range": "10.11.88.96/28",
        "range_start": "10.11.88.98",
        "range_end": "10.11.88.98",
        "gateway": "10.11.88.97",
        "log_file": "/tmp/whereabouts.log",
        "log_level": "debug"
      }
    }'

Key fields: master is the parent interface that ipvlan uses. ipam.type selects whereabouts as the IPAM plugin. datastore: kubernetes stores allocations as Kubernetes custom resources. range is the CIDR. range_start and range_end are the first and last addresses whereabouts may hand out. gateway is the subnet's gateway.

[root@node1 ~]# kubectl apply -f ipvlan1720.yaml

networkattachmentdefinition.k8s.cni.cncf.io/ipvlan1720 created

[root@node1 ~]#

[root@node1 ~]#

[root@node1 ~]# kubectl get network-attachment-definition -n devops

NAME AGE

ipvlan1720 78s
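The `config` field is JSON embedded in YAML, so a mistyped address is easy to miss. For instance, a gateway written as 10.11.8.97 lies outside 10.11.88.96/28. The Python sketch below checks that the addresses in the `ipam` block fall inside `range` before the NAD is applied. It uses only the standard library. `check_ipam` and `nad_config` are hypothetical helpers, not part of whereabouts or Multus, and they understand only the plain-CIDR `range` form used in this article.

```python
import ipaddress
import json


def check_ipam(config_json):
    """Return a list of problems in the whereabouts "ipam" block of a NAD config."""
    ipam = json.loads(config_json)["ipam"]
    # strict=False accepts a CIDR written with host bits set (e.g. 10.11.88.98/28).
    net = ipaddress.ip_network(ipam["range"], strict=False)
    problems = []
    for key in ("range_start", "range_end", "gateway"):
        if key in ipam and ipaddress.ip_address(ipam[key]) not in net:
            problems.append(f"{key} {ipam[key]} is outside {net}")
    if "range_start" in ipam and "range_end" in ipam:
        if ipaddress.ip_address(ipam["range_start"]) > ipaddress.ip_address(ipam["range_end"]):
            problems.append("range_start is after range_end")
    return problems


def nad_config(gateway):
    """Build a config shaped like the ipvlan1720 NAD, varying only the gateway."""
    return json.dumps({
        "cniVersion": "0.3.1",
        "name": "ipvlan1720",
        "type": "ipvlan",
        "master": "bond_ipvl.1720",
        "ipam": {
            "type": "whereabouts",
            "datastore": "kubernetes",
            "range": "10.11.88.96/28",
            "range_start": "10.11.88.98",
            "range_end": "10.11.88.98",
            "gateway": gateway,
        },
    })


print(check_ipam(nad_config("10.11.8.97")))   # gateway outside the /28
print(check_ipam(nad_config("10.11.88.97")))  # passes
```

The first call reports `gateway 10.11.8.97 is outside 10.11.88.96/28`. The second returns an empty list.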

- Start the Pod

1: The Pod YAML is as follows

apiVersion: v1
kind: Pod
metadata:
  name: ipvl
  namespace: devops
  labels:
    app: nginx
  annotations:
    k8s.v1.cni.cncf.io/networks: ipvlan1720   # the NAD to attach
spec:
  containers:
  - name: nginx
    image: registry-1.ict-mec.net:18443/nginx:latest
    imagePullPolicy: IfNotPresent
    ports:
    - name: nginx-port
      containerPort: 80
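The annotation names the NAD without a namespace, so Multus looks it up in the Pod's own namespace (devops). That is why the NAD was created there. With no `@interface` suffix, Multus names the extra interface net1, as the later output shows.

The Python sketch below illustrates how the comma-separated short form `[namespace/]name[@interface]` breaks down. `parse_networks` is a hypothetical helper, not Multus code. It also ignores the JSON-list form of the annotation that Multus accepts.

```python
def parse_networks(value, pod_namespace):
    """Split a short-form k8s.v1.cni.cncf.io/networks value into
    (namespace, name, interface) tuples; interface is None if not given."""
    attachments = []
    for item in value.split(","):
        item = item.strip()
        if not item:
            continue
        ref, _, iface = item.partition("@")
        namespace, _, name = ref.rpartition("/")
        # A bare name resolves in the Pod's own namespace.
        attachments.append((namespace or pod_namespace, name, iface or None))
    return attachments


print(parse_networks("ipvlan1720", "devops"))
print(parse_networks("ipvlan1720@net1, kube-system/other-net", "devops"))
```

The first call yields `[('devops', 'ipvlan1720', None)]`. In the second, `kube-system/other-net` is a made-up example showing an explicit namespace.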

2: Check the Pod. 172.10.4.148 is its address on the kube-ovn network

[root@node1 ~]# kubectl get po -n devops

NAME READY STATUS RESTARTS AGE

ipvl 1/1 Running 0 3s

[root@node1 ~]# kubectl get po -n devops -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

ipvl 1/1 Running 0 6s 172.10.4.148 node2 <none> <none>

[root@node1 ~]#

- Check the address assigned to the Pod

1: Run describe to view the Pod's details

[root@node1 ~]# kubectl describe po ipvl -n devops

Name: ipvl

Namespace: devops

Priority: 0

Node: cmu52/23.1.1.36

Start Time: Thu, 30 Nov 2023 15:45:33 +0800

Labels: app=nginx

Annotations:  io.kubernetes.pod.sandbox.uid: aa3576c85e9b699a8bbe5360edd5d84cccffdfc2e1c017b6b6e52c77c0308dcd
              k8s.v1.cni.cncf.io/network-status:
                [{
                    "name": "kube-ovn",
                    "ips": ["172.10.4.148", "fd00:10:16::494"],
                    "default": true,
                    "dns": {}
                },{
                    "name": "devops/ipvlan1720",
                    "interface": "net1",
                    "ips": ["10.11.88.98"],
                    "mac": "40:a6:b7:37:29:3c",
                    "dns": {}
                }]
              k8s.v1.cni.cncf.io/networks: ipvlan1720        # the NAD in use
              k8s.v1.cni.cncf.io/networks-status:
                [{
                    "name": "kube-ovn",
                    "ips": ["172.10.4.148", "fd00:10:16::494"],
                    "default": true,
                    "dns": {}
                },{
                    "name": "devops/ipvlan1720",
                    "interface": "net1",                     # the interface inside the Pod
                    "ips": ["10.11.88.98"],                  # the address the Pod obtained
                    "mac": "40:a6:b7:37:29:3c",
                    "dns": {}
                }]
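Because network-status is plain JSON, a script can read the secondary address directly rather than scanning describe output. Below is a minimal Python sketch using only the standard library. `attachment` is a hypothetical helper, and the annotation value is copied from the output above. Fetching the value from the cluster is left out.

```python
import json

# Value of the k8s.v1.cni.cncf.io/network-status annotation shown above.
NETWORK_STATUS = """[
  {"name": "kube-ovn", "ips": ["172.10.4.148", "fd00:10:16::494"], "default": true, "dns": {}},
  {"name": "devops/ipvlan1720", "interface": "net1", "ips": ["10.11.88.98"],
   "mac": "40:a6:b7:37:29:3c", "dns": {}}
]"""


def attachment(status_json, network):
    """Return (interface, ips) for the entry whose "name" equals `network`.

    The interface is None when the entry has no "interface" key, as with the
    default kube-ovn network above; (None, []) means the network was not found.
    """
    for entry in json.loads(status_json):
        if entry["name"] == network:
            return entry.get("interface"), entry.get("ips", [])
    return None, []


print(attachment(NETWORK_STATUS, "devops/ipvlan1720"))  # ('net1', ['10.11.88.98'])
```

Note that the secondary network is listed under its `namespace/name`, not under the bare name used in the Pod annotation.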

2: On the Pod's host, enter the Pod's network namespace and inspect it

[root@node2 ~]# crictl ps| grep nginx

38a9298403eda 12766a6745eea About a minute ago Running nginx 0 aa3576c85e9b6 ipvl

[root@node2 ~]# crictl inspect 38a9298403eda | grep -i pid
    "pid": 505586,
        "pid": 1
        "type": "pid"

[root@node2 ~]# nsenter -t 505586 -n bash

[root@node2 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
    link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
    inet 127.0.0.1/8 scope host lo
       valid_lft forever preferred_lft forever
    inet6 ::1/128 scope host
       valid_lft forever preferred_lft forever

2: ip_vti0@NONE: <NOARP> mtu 1480 qdisc noop state DOWN group default qlen 1000
    link/ipip 0.0.0.0 brd 0.0.0.0

3: net1@if248: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UNKNOWN group default
    link/ether 40:a6:b7:37:29:3c brd ff:ff:ff:ff:ff:ff
    inet 10.11.88.98/28 brd 10.11.88.111 scope global net1
       valid_lft forever preferred_lft forever
    inet6 fe80::40a6:b700:137:293c/64 scope link
       valid_lft forever preferred_lft forever

259: eth0@if260: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 9000 qdisc noqueue state UP group default
    link/ether 00:00:00:20:4f:93 brd ff:ff:ff:ff:ff:ff link-netnsid 0
    inet 172.10.4.148/16 brd 172.10.255.255 scope global eth0
       valid_lft forever preferred_lft forever
    inet6 fd00:10:16::494/64 scope global
       valid_lft forever preferred_lft forever
    inet6 fe80::200:ff:fe20:4f93/64 scope link
       valid_lft forever preferred_lft forever

[root@node2 ~]# ip r

default via 172.10.0.1 dev eth0

10.11.88.96/28 dev net1 proto kernel scope link src 10.11.88.98

172.10.0.0/16 dev eth0 proto kernel scope link src 172.10.4.148

- Check the allocated IP addresses

1: View the addresses that have been allocated

[root@node1 ~]# kubectl get overlappingrangeipreservations.whereabouts.cni.cncf.io -n kube-system

NAME AGE

10.11.88.98 79m

2: View the address pools. The NAD above defines the network 10.11.88.96/28, so the pool is named after the network address and prefix length joined by a hyphen (10.11.88.96-28)

[root@node1 ~]# kubectl get ippools.whereabouts.cni.cncf.io -n kube-system

NAME AGE

10.11.88.96-28 80m
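The naming rule and the size of the /28 can be checked with Python's standard `ipaddress` module. `pool_name` is a hypothetical helper that reproduces only the IPv4 naming observed above. IPv6 ranges may be named differently, since colons are not allowed in Kubernetes object names, and so may other whereabouts options. The broadcast address printed below matches the `brd 10.11.88.111` shown inside the Pod.

```python
import ipaddress


def pool_name(ip_range):
    """IPPool name as observed in this article: the range with "/" replaced by "-"."""
    return ip_range.replace("/", "-")


net = ipaddress.ip_network("10.11.88.96/28")
hosts = list(net.hosts())  # excludes the network and broadcast addresses

print(pool_name("10.11.88.96/28"))      # 10.11.88.96-28
print(net.num_addresses, len(hosts))    # 16 14
print(hosts[0], hosts[-1], net.broadcast_address)  # 10.11.88.97 10.11.88.110 10.11.88.111
```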

3: View the details of the allocated IP address

[root@node1 ~]# kubectl describe overlappingrangeipreservations.whereabouts.cni.cncf.io 10.11.88.98 -n kube-system

Name: 10.11.88.98

Namespace: kube-system

Labels: <none>

Annotations: <none>

API Version: whereabouts.cni.cncf.io/v1alpha1

Kind: OverlappingRangeIPReservation

Metadata:
  Creation Timestamp:  2023-11-30T07:45:34Z
  Generation:          1
  Managed Fields:
    API Version:  whereabouts.cni.cncf.io/v1alpha1
    Fields Type:  FieldsV1
    fieldsV1:
      f:spec:
        .:
        f:containerid:
        f:podref:
    Manager:         whereabouts
    Operation:       Update
    Time:            2023-11-30T07:45:34Z
  Resource Version:  25214006
  UID:               d1565403-02e1-45ff-b09f-a26710d7bb7d

Spec:
  Containerid:  aa3576c85e9b699a8bbe5360edd5d84cccffdfc2e1c017b6b6e52c77c0308dcd   # ID of the container (Pod sandbox) this IP is bound to
  Podref:       devops/ipvl

Events: <none>

[root@node1 ~]#

4: View the details of the address pool

[root@node1 ~]# kubectl describe ippools.whereabouts.cni.cncf.io 10.11.88.96-28 -n kube-system

Name: 10.11.88.96-28

Namespace: kube-system

Labels: <none>

Annotations: <none>

API Version: whereabouts.cni.cncf.io/v1alpha1

Kind: IPPool

Metadata:
  Creation Timestamp:  2023-11-30T07:44:44Z
  Generation:          4
  Managed Fields:
    API Version:  whereabouts.cni.cncf.io/v1alpha1
    Fields Type:  FieldsV1
    fieldsV1:
      f:spec:
        .:
        f:allocations:
          .:
          f:2:
            .:
            f:id:
            f:podref:
        f:range:
    Manager:         whereabouts
    Operation:       Update
    Time:            2023-11-30T07:45:34Z
  Resource Version:  25214005
  UID:               10544b9b-9ad3-43a9-b747-d46293a54ebb

Spec:
  Allocations:
    2:
      Id:      aa3576c85e9b699a8bbe5360edd5d84cccffdfc2e1c017b6b6e52c77c0308dcd
      Podref:  devops/ipvl   # the Pod, as namespace/name
  Range:       10.11.88.96/28

Events: <none>

[root@node1 ~]#

- Deleting Pods

1: As a test, start one more Pod. The newly allocated IP is added to the ippool automatically

[root@node1 ~]# kubectl get ippool 10.11.88.96-28 -o yaml -n kube-system

apiVersion: whereabouts.cni.cncf.io/v1alpha1

kind: IPPool

metadata:
  creationTimestamp: "2023-11-30T09:38:48Z"
  generation: 3
  name: 10.11.88.96-28
  namespace: kube-system
  resourceVersion: "25262851"
  uid: d00b0b1c-f848-461b-9e98-e4512307a485

spec:
  allocations:
    "2":
      id: cc4d323c718f08effc22ff05f4a7727a9b648e5bd4849ab0143c5439337209d1
      podref: devops/ipvl
    "3":
      id: 7a8bbfed7f98586779ac089358102078bca0f1946fe131c6abd8bd66e685688a
      podref: devops/ipvl2
  range: 10.11.88.96/28
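The allocation keys "2" and "3" look like offsets from the range's network address: 10.11.88.96 + 2 is 10.11.88.98, the address the ipvl Pod received earlier. This reading is an inference from the output above. Under it, key "3" would correspond to 10.11.88.99 for ipvl2. A small Python sketch of the decoding follows, where `decode_allocations` is a hypothetical helper.

```python
import ipaddress

# spec.allocations and spec.range from the IPPool above (ids omitted).
ALLOCATIONS = {"2": {"podref": "devops/ipvl"}, "3": {"podref": "devops/ipvl2"}}
RANGE = "10.11.88.96/28"


def decode_allocations(ip_range, allocations):
    """Map each allocation key, read as an offset from the network address, to an IP."""
    base = ipaddress.ip_network(ip_range, strict=False).network_address
    return {str(base + int(key)): entry["podref"] for key, entry in allocations.items()}


print(decode_allocations(RANGE, ALLOCATIONS))
# {'10.11.88.98': 'devops/ipvl', '10.11.88.99': 'devops/ipvl2'}
```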

2: Delete both Pods and check how the ippool changes

[root@node1 ~]# kubectl delete po ipvl -n devops

pod "ipvl" deleted

[root@cmu51 ~]# kubectl delete po ipvl2 -n devops

pod "ipvl2" deleted

As shown below, the allocated IP addresses have been removed from the ippool.

[root@node1 ~]# kubectl get ippool 10.11.88.96-28 -o yaml -n kube-system

apiVersion: whereabouts.cni.cncf.io/v1alpha1

kind: IPPool

metadata:
  creationTimestamp: "2023-11-30T09:38:48Z"
  generation: 5
  name: 10.11.88.96-28
  namespace: kube-system
  resourceVersion: "25263222"
  uid: d00b0b1c-f848-461b-9e98-e4512307a485

spec:
  allocations: {}
  range: 10.11.88.96/28

III. How it works

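1: Create a NAD that defines an IP range.

2: When a Pod is created and scheduled onto a host, the CNI plugin (ipvlan here) invokes the local whereabouts binary, which reads the IP range from the NAD.

3: Whereabouts looks up the IPPool and creates a new one if it does not exist. For example, if the NAD defines the range 10.11.88.96/28, the IPPool is named 10.11.88.96-28.

4: Once it has the IPPool, whereabouts picks a free IP from the range in the NAD's ipam section and records that IP in the IPPool.

5: It creates an OverlappingRangeIPReservation named after the IP address.

6: The CNI plugin returns the result to the container runtime (CRI).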
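The six steps above, plus the cleanup seen in the deletion test, can be condensed into an in-memory Python sketch. It is not whereabouts's implementation: `FakeStore`, `allocate`, `release` and the container IDs are hypothetical. Dicts replace the Kubernetes API, and there is no locking, exclude list or IPv6 handling. Only the pool name and the offset-keyed allocations are modelled on the kubectl output above.

```python
import ipaddress


class FakeStore:
    """Hypothetical in-memory stand-in for the Kubernetes API objects."""

    def __init__(self):
        self.ippools = {}       # IPPool name -> {"range": str, "allocations": {offset: entry}}
        self.reservations = {}  # IP string -> entry (one OverlappingRangeIPReservation per IP)


def pool_name(ip_range):
    # Naming observed in this article for an IPv4 range: "/" -> "-".
    return ip_range.replace("/", "-")


def allocate(store, ipam, container_id, podref):
    net = ipaddress.ip_network(ipam["range"], strict=False)
    # Step 3: fetch the IPPool, creating it on first use.
    pool = store.ippools.setdefault(pool_name(ipam["range"]),
                                    {"range": ipam["range"], "allocations": {}})
    first = ipaddress.ip_address(ipam["range_start"]) if "range_start" in ipam else net.network_address + 1
    last = ipaddress.ip_address(ipam["range_end"]) if "range_end" in ipam else net.broadcast_address - 1
    # Step 4: pick the first address in [first, last] that is free in both records.
    for ip in net.hosts():
        if not first <= ip <= last:
            continue
        offset = str(int(ip) - int(net.network_address))
        if offset in pool["allocations"] or str(ip) in store.reservations:
            continue
        entry = {"id": container_id, "podref": podref}
        pool["allocations"][offset] = entry
        # Step 5: one reservation object per IP, named after the address.
        store.reservations[str(ip)] = entry
        # Step 6: the address (with prefix) goes back up the CNI chain.
        return f"{ip}/{net.prefixlen}"
    raise RuntimeError(f"no free address in {ipam['range']}")


def release(store, ipam, container_id):
    """Drop every allocation held by container_id, as seen after the Pods were deleted."""
    net = ipaddress.ip_network(ipam["range"], strict=False)
    pool = store.ippools[pool_name(ipam["range"])]
    for offset, entry in list(pool["allocations"].items()):
        if entry["id"] == container_id:
            del pool["allocations"][offset]
            store.reservations.pop(str(net.network_address + int(offset)), None)


# Hypothetical IPAM settings: range_end is widened to .99 so that two Pods fit.
ipam = {"range": "10.11.88.96/28", "range_start": "10.11.88.98", "range_end": "10.11.88.99"}
store = FakeStore()
first_ip = allocate(store, ipam, "ctr-a", "devops/ipvl")
second_ip = allocate(store, ipam, "ctr-b", "devops/ipvl2")
print(first_ip, second_ip, sorted(store.ippools["10.11.88.96-28"]["allocations"]))
release(store, ipam, "ctr-a")
release(store, ipam, "ctr-b")
print(store.ippools["10.11.88.96-28"]["allocations"], store.reservations)
```

The sketch checks two records before choosing an address. The pool entry tracks its own range, while the per-IP reservation catches the same address being claimed through a different, overlapping range. This is the role the two CRDs play in the deployment above.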