1. Download darknet_ros

```bash
mkdir -p yolo_ws/src
cd yolo_ws/src
git clone --recursive https://github.com/leggedrobotics/darknet_ros.git

# The darknet folder cloned this way is empty, so replace darknet_ros's darknet with the following fork
cd darknet_ros
git clone https://github.com/arnoldfychen/darknet.git

# Enter the darknet directory and run make
cd darknet
make
```

Edit the Makefile in the darknet folder:

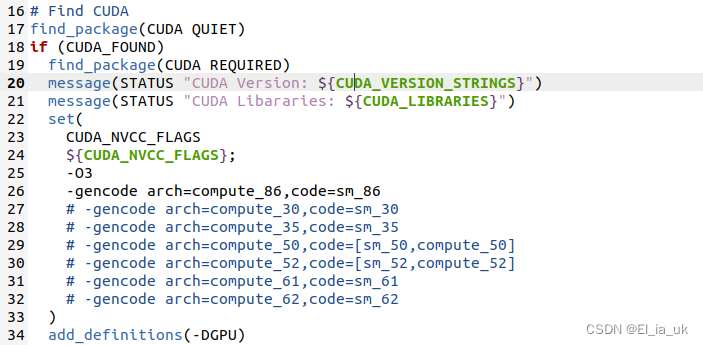
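The exact Makefile change appears only in the screenshot. For a GPU build of darknet, the switches at the top of the Makefile are usually `GPU`, `CUDNN` and `OPENCV`. Assuming those are the flags set to 1 here, the edit can be scripted with the small helper below. The function name `set_makefile_flags` is hypothetical and not part of darknet.

```python
import re


def set_makefile_flags(makefile_text, flags):
    """Return makefile_text with each `NAME=value` line set to the requested
    value, e.g. {"GPU": 1} turns "GPU=0" into "GPU=1"."""
    for name, value in flags.items():
        # Match a whole line "NAME=..." (spaces around '=' allowed); the '='
        # right after the name keeps CUDNN from matching CUDNN_HALF, etc.
        pattern = re.compile(r"^%s\s*=.*$" % re.escape(name), re.MULTILINE)
        makefile_text, count = pattern.subn("%s=%s" % (name, value), makefile_text)
        if count == 0:
            # Fail loudly on a misspelled flag instead of silently doing nothing
            raise KeyError("flag %s not found in Makefile" % name)
    return makefile_text
```

To use it, run the helper from the darknet directory. Read `Makefile`, pass the text through `set_makefile_flags(text, {"GPU": 1, "CUDNN": 1, "OPENCV": 1})`, and write the result back.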

Edit darknet_ros/darknet_ros/CMakeLists.txt.

Change the compute capability to match your own GPU:

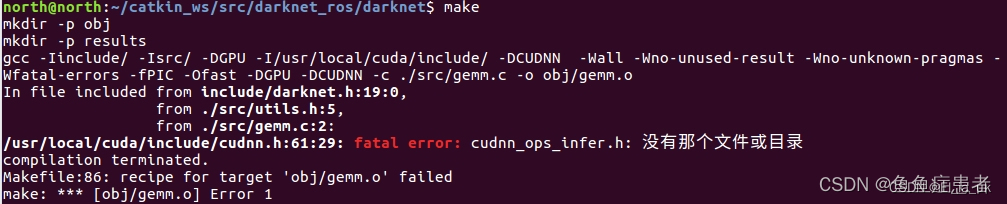
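The screenshot is not reproduced in the text. darknet_ros's CMakeLists.txt typically passes CUDA architectures to nvcc as `-gencode arch=compute_XX,code=sm_XX` entries, where `XX` is the compute capability with the dot removed (8.6 becomes 86). The sketch below only builds that string, and the helper name is hypothetical. On recent NVIDIA drivers, `nvidia-smi --query-gpu=compute_cap --format=csv,noheader` prints the capability. On older drivers, look the GPU up in NVIDIA's CUDA GPUs list.

```python
def gencode_flag(compute_capability):
    """Turn a compute capability string such as "8.6" into the nvcc flag
    "-gencode arch=compute_86,code=sm_86"."""
    major, minor = compute_capability.strip().split(".")
    sm = "%d%d" % (int(major), int(minor))
    return "-gencode arch=compute_%s,code=sm_%s" % (sm, sm)
```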

`make` may then fail with one of the following errors:

Error 1:

fatal error: cudnn_ops_infer.h: No such file or directory

Solution:

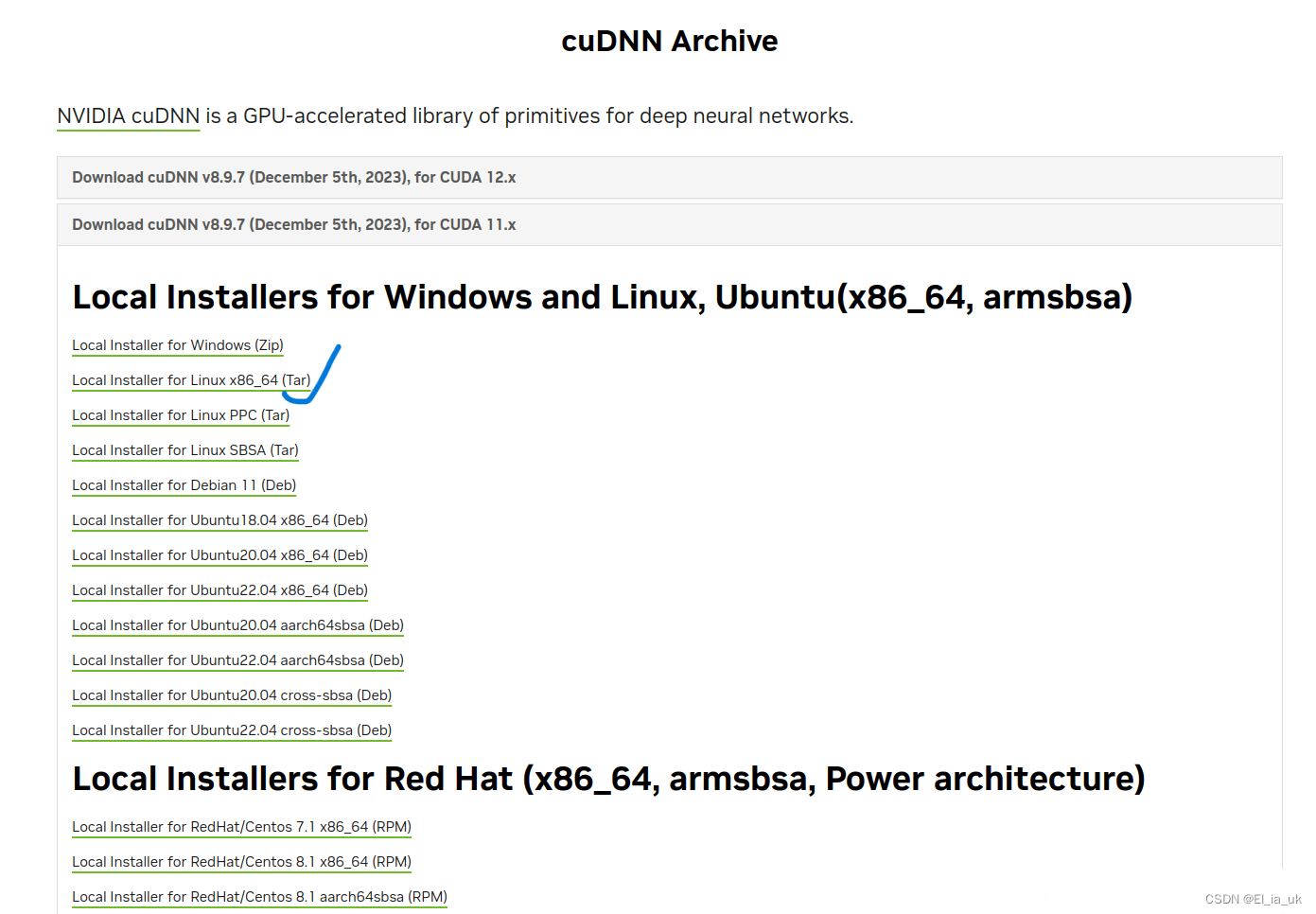

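From NVIDIA's official cuDNN Archive page (cuDNN Archive | NVIDIA Developer), download the tar archive of the cuDNN release that matches your CUDA version. I used v8.9.6, which also supports CUDA 12.x.

Extract the archive and enter the extracted directory. Then copy everything in `lib` into the `lib64/` directory of your CUDA installation, and everything in `include` into its `include/` directory. The archive name ends in `_cuda11` or `_cuda12`, so pick the one that matches your CUDA major version. Replace the CUDA path below (e.g. `/usr/local/cuda-11.6` or `/usr/local/cuda-12.2`) with your own:

```bash
# Example archive and CUDA path from this setup; with CUDA 12.x use the _cuda12 archive
tar -xvf cudnn-linux-x86_64-8.9.6.50_cuda11-archive.tar.xz
cd ./cudnn-linux-x86_64-8.9.6.50_cuda11-archive
# -P keeps the libcudnn*.so symlinks as symlinks instead of copying each target
sudo cp -P ./lib/* /usr/local/cuda-12.2/lib64/
sudo cp ./include/* /usr/local/cuda-12.2/include/
```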
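Before re-running `make`, you can confirm the copy worked. The following sketch lists which cuDNN headers are missing from the CUDA include directory and reads the version macros from `cudnn_version.h`, which is where cuDNN 8 defines them. The helper names and the exact list of headers checked are assumptions for illustration.

```python
import os
import re

# Headers whose absence produces "fatal error: ... No such file or directory"
# while building darknet (assumed list for cuDNN 8.x).
CUDNN8_HEADERS = ("cudnn.h", "cudnn_version.h", "cudnn_ops_infer.h")


def missing_cudnn_headers(include_dir, headers=CUDNN8_HEADERS):
    """Return the entries of `headers` that are not files in include_dir."""
    return [h for h in headers if not os.path.isfile(os.path.join(include_dir, h))]


def cudnn_version(header_text):
    """Parse (major, minor, patch) from the contents of cudnn_version.h."""
    version = []
    for macro in ("CUDNN_MAJOR", "CUDNN_MINOR", "CUDNN_PATCHLEVEL"):
        match = re.search(r"#define\s+%s\s+(\d+)" % macro, header_text)
        if match is None:
            raise ValueError("%s not found in header" % macro)
        version.append(int(match.group(1)))
    return tuple(version)
```

For example, `missing_cudnn_headers("/usr/local/cuda-12.2/include")` should return an empty list after a successful copy.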

Error 2:

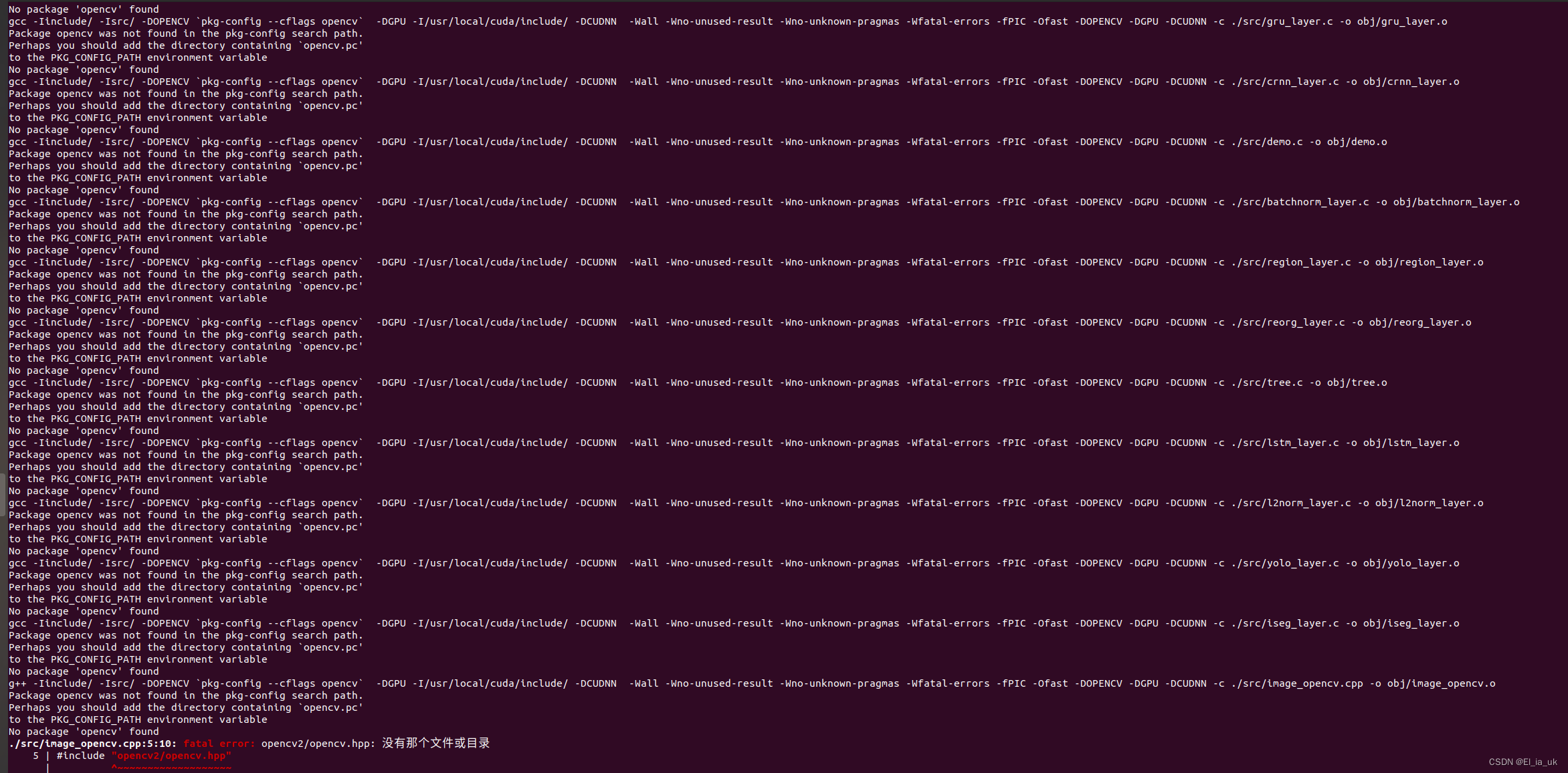

fatal error: opencv2/opencv.hpp: No such file or directory


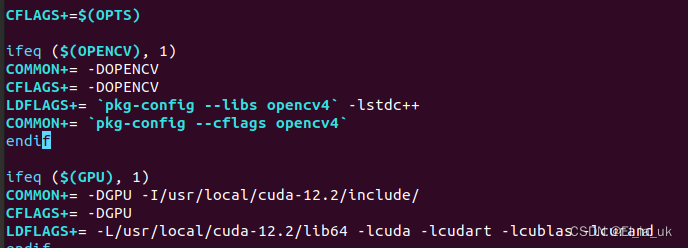

Solution: edit the Makefile in the darknet folder.
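The Makefile change itself appears only in the screenshot. A common cause of this error is that OpenCV 4 installs its headers under `/usr/include/opencv4` and registers its pkg-config module as `opencv4`, while the darknet Makefile asks for `pkg-config ... opencv`. Assuming that is the change made here, the sketch below rewrites those calls. The function name is hypothetical.

```python
import re


def use_opencv4_pkgconfig(makefile_text):
    """Rewrite `pkg-config --cflags/--libs opencv` to use the opencv4 module."""
    # `opencv\b` only matches "opencv" followed by a non-word character such as
    # a backtick or space, so text that already says "opencv4" is left alone.
    return re.sub(r"(pkg-config\s+--(?:cflags|libs)\s+)opencv\b",
                  r"\1opencv4", makefile_text)
```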

Error 3:

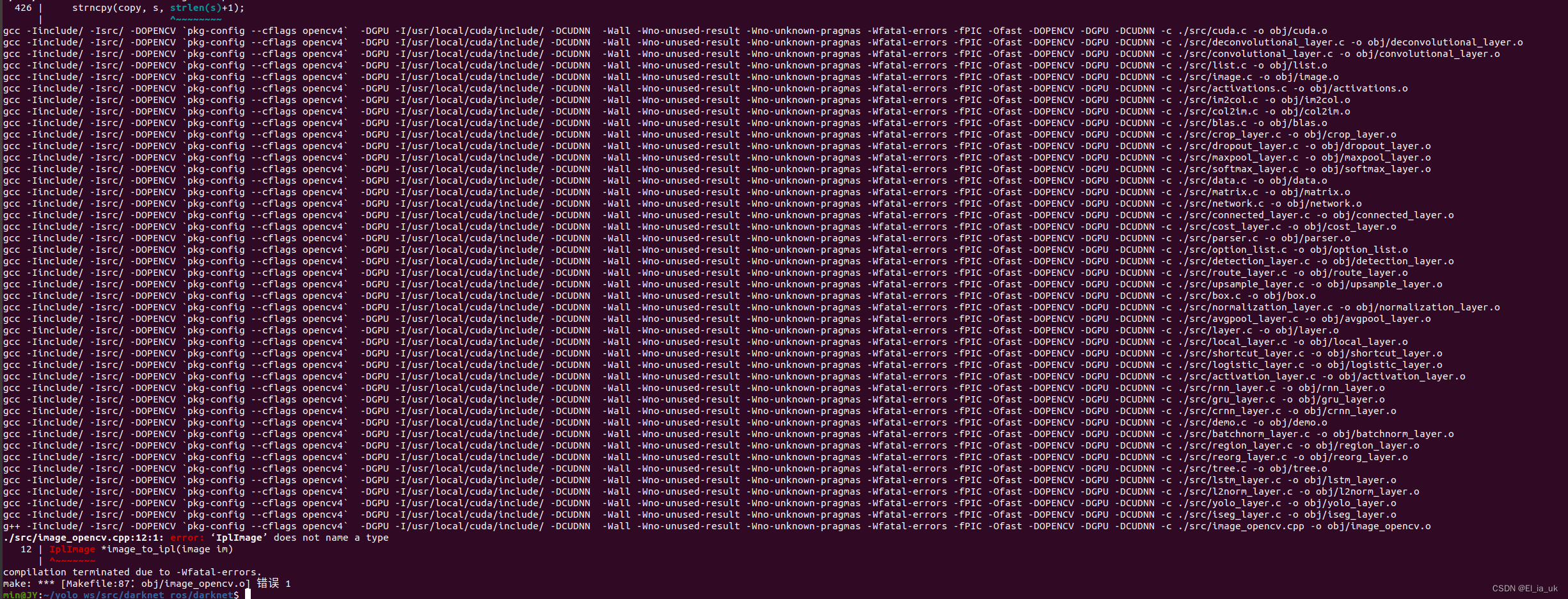

`error: ‘IplImage’ does not name a type`
`   12 | IplImage *image_to_ipl(image im)`

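OpenCV 4's C++ headers no longer pull in the legacy C API (IplImage, cvCreateImage, ...). To fix this, rewrite `src/image_opencv.cpp` in the darknet folder (in my case home/darknet/src) so that it converts directly between darknet images and cv::Mat: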

```cpp
#ifdef OPENCV
#include "stdio.h"
#include "stdlib.h"
#include "string.h"
#include "opencv2/opencv.hpp"
#include "image.h"

using namespace cv;

extern "C" {

// The IplImage-based image_to_ipl()/ipl_to_image() helpers are dropped;
// darknet images are converted to and from cv::Mat directly.
Mat image_to_mat(image im)
{
    image copy = copy_image(im);
    constrain_image(copy);
    if (im.c == 3) rgbgr_image(copy);   // darknet stores RGB, OpenCV expects BGR
    Mat m(cv::Size(im.w, im.h), CV_8UC(im.c));
    int x, y, c;
    int step = m.step;
    for (y = 0; y < im.h; ++y) {
        for (x = 0; x < im.w; ++x) {
            for (c = 0; c < im.c; ++c) {
                // Read from the constrained, channel-swapped copy (not im)
                float val = copy.data[c*im.h*im.w + y*im.w + x];
                m.data[y*step + x*im.c + c] = (unsigned char)(val*255);
            }
        }
    }
    free_image(copy);
    return m;
}

image mat_to_image(Mat m)
{
    int h = m.rows;
    int w = m.cols;
    int c = m.channels();
    image im = make_image(w, h, c);
    unsigned char *data = (unsigned char *)m.data;
    int step = m.step;
    int i, j, k;
    for (i = 0; i < h; ++i) {
        for (k = 0; k < c; ++k) {
            for (j = 0; j < w; ++j) {
                im.data[k*w*h + i*w + j] = data[i*step + j*c + k]/255.;
            }
        }
    }
    rgbgr_image(im);
    return im;
}

void *open_video_stream(const char *f, int c, int w, int h, int fps)
{
    VideoCapture *cap;
    if (f) cap = new VideoCapture(f);
    else cap = new VideoCapture(c);
    if (!cap->isOpened()) return 0;
    // OpenCV 4 names are CAP_PROP_* (the old CV_CAP_PROP_* macros are gone)
    if (w) cap->set(CAP_PROP_FRAME_WIDTH, w);
    if (h) cap->set(CAP_PROP_FRAME_HEIGHT, h);
    if (fps) cap->set(CAP_PROP_FPS, fps);
    return (void *) cap;
}

image get_image_from_stream(void *p)
{
    VideoCapture *cap = (VideoCapture *)p;
    Mat m;
    *cap >> m;
    if (m.empty()) return make_empty_image(0, 0, 0);
    return mat_to_image(m);
}

image load_image_cv(char *filename, int channels)
{
    int flag = -1;
    if (channels == 0) flag = -1;
    else if (channels == 1) flag = 0;
    else if (channels == 3) flag = 1;
    else fprintf(stderr, "OpenCV can't force load with %d channels\n", channels);
    Mat m = imread(filename, flag);
    if (!m.data) {
        fprintf(stderr, "Cannot load image \"%s\"\n", filename);
        char buff[256];
        snprintf(buff, sizeof(buff), "echo %s >> bad.list", filename);
        system(buff);
        return make_image(10, 10, 3);
    }
    return mat_to_image(m);
}

int show_image_cv(image im, const char* name, int ms)
{
    Mat m = image_to_mat(im);
    imshow(name, m);
    int c = waitKey(ms);
    if (c != -1) c = c%256;
    return c;
}

void make_window(char *name, int w, int h, int fullscreen)
{
    namedWindow(name, WINDOW_NORMAL);
    if (fullscreen) {
        setWindowProperty(name, WND_PROP_FULLSCREEN, WINDOW_FULLSCREEN);
    } else {
        resizeWindow(name, w, h);
        if (strcmp(name, "Demo") == 0) moveWindow(name, 0, 0);
    }
}

}  // extern "C"

#endif
```
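The loops above index the two buffers differently because the layouts differ. darknet stores an image planar (all of channel 0, then channel 1, ...), at index `c*h*w + y*w + x`. A cv::Mat stores the channels interleaved per pixel, at index `y*step + x*c + c`. The Python sketch below mirrors the two conversions on plain lists. It assumes a continuous Mat with no row padding, so `step == w*c`. It also leaves out the 0..1 to 0..255 scaling and the RGB/BGR swap that the C++ performs.

```python
def planar_to_interleaved(data, w, h, c):
    """darknet layout (channel planes) -> OpenCV layout (pixels with
    interleaved channels); mirrors the loops in image_to_mat()."""
    out = [0] * (w * h * c)
    for y in range(h):
        for x in range(w):
            for ch in range(c):
                out[y * w * c + x * c + ch] = data[ch * h * w + y * w + x]
    return out


def interleaved_to_planar(data, w, h, c):
    """OpenCV layout -> darknet layout; mirrors the loops in mat_to_image()."""
    out = [0] * (w * h * c)
    for y in range(h):
        for ch in range(c):
            for x in range(w):
                out[ch * w * h + y * w + x] = data[y * w * c + x * c + ch]
    return out
```

For a 2x1 RGB image stored planar as `[R0, R1, G0, G1, B0, B1]`, `planar_to_interleaved` returns `[R0, G0, B0, R1, G1, B1]`.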

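Remove the build artifacts left in the darknet folder by the failed `make`, then rebuild:

```bash
sudo make clean
make
```

2. Install realsense-ros and the robot-arm packages in the workspace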

```bash
cd yolo_ws
cd src
# Install realsense-ros (the ROS 1 driver lives on the ros1-legacy branch)
git clone -b ros1-legacy https://github.com/IntelRealSense/realsense-ros.git
git clone https://github.com/UniversalRobots/Universal_Robots_ROS_Driver.git Universal_Robots_ROS_Driver
git clone -b calibration_devel https://github.com/fmauch/universal_robot.git fmauch_universal_robot
cd ..
```

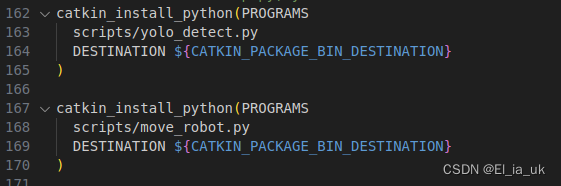

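```bash
catkin_make -DCMAKE_BUILD_TYPE=Release
```

In VS Code, create a new package named std_msg in the workspace's src directory, with the dependencies rospy, roscpp and std_msgs (equivalent to running `catkin_create_pkg std_msg rospy roscpp std_msgs` in src).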

Inside std_msg, create a scripts folder containing two Python files. One runs the YOLO-based object detection, and the other drives the robot arm.

The detection node:

```python
#!/usr/bin/env python3
# -*- coding: utf-8 -*-
import rospy
from darknet_ros_msgs.msg import BoundingBoxes
from sensor_msgs.msg import Image, CameraInfo
from geometry_msgs.msg import Point
from cv_bridge import CvBridge


class Readyolo:
    def __init__(self):
        rospy.init_node('grasping_node', anonymous=True)
        self.bridge = CvBridge()
        self.depth_intrinsics = None
        self.rgb_image = None
        self.depth_image = None
        self.bounding_boxes = []  # filled by the darknet_ros callback
        # NOTE: YOLO boxes are in color-image pixels. For the depth lookup to hit the
        # same pixel, depth should be aligned to color (e.g. rs_camera.launch
        # align_depth:=true, /camera/aligned_depth_to_color/image_raw + /camera/color/camera_info).
        self.rgb_image_sub = rospy.Subscriber('/camera/color/image_raw', Image, self.rgb_image_callback)
        self.depth_image_sub = rospy.Subscriber('/camera/depth/image_rect_raw', Image, self.depth_image_callback)
        self.camera_info_sub = rospy.Subscriber('/camera/depth/camera_info', CameraInfo, self.camera_info_callback)
        self.object_detection = rospy.Subscriber('/darknet_ros/bounding_boxes', BoundingBoxes, self.yolo)
        self.pub = rospy.Publisher('/object_camera_coordinates', Point, queue_size=10)

    def camera_info_callback(self, msg):
        # K = [fx 0 cx; 0 fy cy; 0 0 1], stored row-major
        self.depth_intrinsics = msg

    def rgb_image_callback(self, msg):
        self.rgb_image = self.bridge.imgmsg_to_cv2(msg, desired_encoding="passthrough")

    def depth_image_callback(self, msg):
        # RealSense depth images are 16-bit, in millimetres
        self.depth_image = self.bridge.imgmsg_to_cv2(msg, desired_encoding="passthrough")
        self.process_yolo_results()

    def yolo(self, msg):
        self.bounding_boxes = msg.bounding_boxes
        self.process_yolo_results()

    def process_yolo_results(self):
        if self.depth_intrinsics is None or self.rgb_image is None or self.depth_image is None:
            return
        for box in self.bounding_boxes:
            if box.Class != "bottle":
                continue
            u = (box.xmin + box.xmax) / 2.0
            v = (box.ymin + box.ymax) / 2.0
            depth = float(self.depth_image[int(v)][int(u)])
            if depth == 0:  # 0 means no valid depth at this pixel
                continue
            x, y, z = self.convert_pixel_to_camera_coordinates(u, v, depth)
            rospy.loginfo("bottle pixel (%.1f, %.1f) -> camera (%.3f, %.3f, %.3f) m", u, v, x, y, z)
            self.pub.publish(Point(x=x, y=y, z=z))

    def convert_pixel_to_camera_coordinates(self, u, v, depth):
        # Pinhole back-projection: depth in mm, result in metres (optical frame)
        K = self.depth_intrinsics.K
        fx, fy, cx, cy = K[0], K[4], K[2], K[5]
        camera_z = depth / 1000.0
        camera_x = (u - cx) * camera_z / fx
        camera_y = (v - cy) * camera_z / fy
        return [camera_x, camera_y, camera_z]


def main():
    Readyolo()
    rospy.spin()


if __name__ == '__main__':
    main()
```
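`convert_pixel_to_camera_coordinates` applies the pinhole back-projection X = (u - cx)·Z/fx, Y = (v - cy)·Z/fy, where Z is the depth in metres. RealSense depth is reported in millimetres, hence the /1000. The standalone sketch below reproduces that step. It also adds a hypothetical `robust_depth` helper that takes the median of the non-zero depths around the box centre. That helper is not part of the original script. It is one way to still get a usable depth when the centre pixel reads 0.

```python
import statistics


def box_center(xmin, ymin, xmax, ymax):
    """Centre pixel (u, v) of a darknet_ros bounding box."""
    return (xmin + xmax) / 2.0, (ymin + ymax) / 2.0


def robust_depth(depth, u, v, radius=2):
    """Median of the non-zero readings in a (2*radius+1)^2 window around the
    integer pixel (u, v); depth is indexed depth[row][col], i.e. depth[v][u].
    Returns 0 when the whole window has no valid depth."""
    rows = range(max(0, v - radius), min(len(depth), v + radius + 1))
    cols = range(max(0, u - radius), min(len(depth[0]), u + radius + 1))
    values = [depth[r][c] for r in rows for c in cols if depth[r][c] > 0]
    return statistics.median(values) if values else 0


def pixel_to_camera(u, v, depth_mm, fx, fy, cx, cy):
    """Back-project pixel (u, v) with depth in millimetres to a point in metres
    in the camera's optical frame (x right, y down, z forward)."""
    z = depth_mm / 1000.0
    return (u - cx) * z / fx, (v - cy) * z / fy, z
```

A pixel at the principal point (cx, cy) with a reading of 1000 mm maps to (0, 0, 1), one metre straight ahead of the camera.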

The robot-arm motion node:

```python
#!/usr/bin/env python3
# -*- coding: utf-8 -*-
import sys
import rospy
import moveit_commander
import tf2_ros
import tf2_geometry_msgs  # provides do_transform_pose for PoseStamped
from geometry_msgs.msg import PoseStamped, Point


class MoveItIkDemo:
    def __init__(self):
        # Initialise the move_group API and the ROS node
        moveit_commander.roscpp_initialize(sys.argv)
        rospy.init_node('moveit_it_demo')
        # Arm group controlled through move_group
        self.arm = moveit_commander.MoveGroupCommander('manipulator')
        # End-effector link name, as configured in the Setup Assistant
        self.end_effector_link = self.arm.get_end_effector_link()
        # Reference frame used for target poses
        self.reference_frame = "base"
        self.arm.set_pose_reference_frame(self.reference_frame)
        # Allow replanning when motion planning fails
        self.arm.allow_replanning(True)
        # Goal tolerances: position in metres, orientation in radians
        self.arm.set_goal_position_tolerance(0.001)
        self.arm.set_goal_orientation_tolerance(0.01)
        # Maximum velocity and acceleration scaling
        self.arm.set_max_acceleration_scaling_factor(0.5)
        self.arm.set_max_velocity_scaling_factor(0.5)
        # Create the TF buffer/listener once; a listener created inside the
        # callback starts with an empty buffer and lookups tend to fail
        self.tf_buffer = tf2_ros.Buffer()
        self.tf_listener = tf2_ros.TransformListener(self.tf_buffer)
        # Subscribe to the object position published by the detection node
        self.pose_sub = rospy.Subscriber("/object_camera_coordinates", Point, self.callback)

    def transform_pose(self, input_pose, from_frame, to_frame):
        try:
            transform = self.tf_buffer.lookup_transform(to_frame, from_frame, rospy.Time(0), rospy.Duration(1.0))
            return tf2_geometry_msgs.do_transform_pose(input_pose, transform)
        except (tf2_ros.LookupException, tf2_ros.ConnectivityException, tf2_ros.ExtrapolationException) as ex:
            rospy.logerr("TF2 error: %s", str(ex))
            return None

    def callback(self, p):
        temp_pose = PoseStamped()
        # Pinhole coordinates (x right, y down, z forward) are in the optical frame,
        # not camera_link; use camera_color_optical_frame if depth is aligned to color.
        # A TF chain from this frame to "base" (e.g. from hand-eye calibration) is required.
        temp_pose.header.frame_id = "camera_depth_optical_frame"
        temp_pose.pose.position = p
        temp_pose.pose.orientation.w = 1.0
        grasp_pose = self.transform_pose(temp_pose, temp_pose.header.frame_id, self.reference_frame)
        if grasp_pose is None:
            return
        self.arm.set_start_state_to_current_state()
        self.arm.set_pose_target(grasp_pose)
        plan_success, traj, planning_time, error_code = self.arm.plan()
        if plan_success:
            self.arm.execute(traj, wait=True)
        rospy.sleep(1)


def main():
    MoveItIkDemo()
    rospy.spin()


if __name__ == '__main__':
    main()
```

Make the .py files executable (`chmod +x scripts/*.py`) and update the package's CMakeLists.txt accordingly (for example, list the scripts in `catkin_install_python`).
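The camera-to-base step in the arm node passes the looked-up transform to `tf2_geometry_msgs.do_transform_pose`. For the position, this amounts to rotating the point by the transform's quaternion and then adding the translation. The sketch below reproduces that in plain Python; the function names are hypothetical. The last test case uses the quaternion (-0.5, 0.5, -0.5, 0.5), the standard ROS rotation between a `camera_link`-style frame and its optical frame, which the RealSense driver is assumed to publish. It shows why a point computed in optical coordinates must not be labelled `camera_link`.

```python
def quat_rotate(q, p):
    """Rotate point p = (x, y, z) by unit quaternion q = (x, y, z, w)."""
    qx, qy, qz, qw = q
    px, py, pz = p
    # t = 2 * (q_vec x p)
    tx = 2.0 * (qy * pz - qz * py)
    ty = 2.0 * (qz * px - qx * pz)
    tz = 2.0 * (qx * py - qy * px)
    # p' = p + w * t + q_vec x t
    return (px + qw * tx + (qy * tz - qz * ty),
            py + qw * ty + (qz * tx - qx * tz),
            pz + qw * tz + (qx * ty - qy * tx))


def transform_point(translation, rotation, p):
    """Apply a TF-style transform (translation, rotation quaternion) to p,
    i.e. map a point from the source frame into the target frame."""
    rx, ry, rz = quat_rotate(rotation, p)
    tx, ty, tz = translation
    return (rx + tx, ry + ty, rz + tz)
```

For example, `transform_point((0, 0, 0), (-0.5, 0.5, -0.5, 0.5), (0.0, 0.0, 1.0))` maps a point 1 m straight ahead in the optical frame (z forward) to about (1, 0, 0) in camera_link (x forward).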