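I. Common structs:

1. The AVFormatContext struct:

AVFormatContext is a data structure used throughout the whole pipeline, and many functions take it as a parameter. The comments in the FFmpeg source describe it as the "format I/O context"; it holds the format-level information of a media file. It refers to an AVInputFormat (or an AVOutputFormat; only one of the two is set in a given AVFormatContext at any time), to AVStream and AVPacket data, and to other related information such as title, author and copyright. It also carries information that may be needed during encoding, such as duration, file_size and bit_rate.

Because AVFormatContext holds so much information, it is initialized in several steps, and fields for which no value is available may be left uninitialized. It is normally declared as a pointer, however, so the memory allocation step cannot be skipped.

    AVFormatContext *mAvFormatContext = nullptr;
    mAvFormatContext = avformat_alloc_context();

2. The AVInputFormat struct: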

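AVInputFormat is FFmpeg's demuxer object. It is a data structure similar to a COM interface: it represents an input container format and consists mainly of function pointers. Each container format corresponds to one AVInputFormat structure, so many instances exist at run time. The next field links all supported input container formats into a linked list so that they can be traversed and searched (newer FFmpeg releases no longer expose next and enumerate the demuxers with av_demuxer_iterate instead); priv_data_size gives the size of the private context of the specific container format.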
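To make the linked-list idea concrete, here is a minimal sketch in plain C. It is only a model: DemoInputFormat, demo_register and demo_find are hypothetical names invented for this illustration, not FFmpeg types or functions.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical stand-in for a demuxer descriptor; not FFmpeg's AVInputFormat. */
typedef struct DemoInputFormat {
    const char *name;               /* short format name, e.g. "mp4" */
    int priv_data_size;             /* size of the format's private context */
    struct DemoInputFormat *next;   /* next registered format, or NULL */
} DemoInputFormat;

/* Push a format onto the front of the registry list. */
void demo_register(DemoInputFormat **head, DemoInputFormat *fmt)
{
    assert(head && fmt);
    fmt->next = *head;
    *head = fmt;
}

/* Walk the list and return the format with the given name, or NULL. */
DemoInputFormat *demo_find(DemoInputFormat *head, const char *name)
{
    for (DemoInputFormat *f = head; f; f = f->next)
        if (strcmp(f->name, name) == 0)
            return f;
    return NULL;
}
```

A caller would register each format once at start-up and then look one up by name when a file is opened.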

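3. The AVStream struct:

AVStream is the struct that stores the information of each video or audio stream. The job of the demuxer is to separate (parse out) the individual streams from the container. In FFmpeg a stream is represented by an AVStream; it is created by the demuxer's read_header function and recorded in AVFormatContext's nb_streams (the number of streams in the container) and streams array.

    AVStream *avStream = avformat_new_stream(mAvFormatContext, nullptr);

4. The AVCodecContext struct:

This struct describes the codec context and holds the many parameters a codec needs. When libavcodec is used on its own, the caller has to initialize this information. When the complete FFmpeg library is used, the information is derived from the file header and from the headers inside the media streams during the calls to avformat_open_input and avformat_find_stream_info (named av_open_input_file and av_find_stream_info in old releases).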

    AVFormatContext *mAvFormatContext = nullptr;
    AVCodecContext *mAvCodecContext = nullptr;
    int mVideoIndex = -1;

    int FFmpegVideoPlay::initFFmpegCodec() {
        // 3. Find the index of the video stream
        for (unsigned int i = 0; i < mAvFormatContext->nb_streams; ++i) {
            AVMediaType mediaType = mAvFormatContext->streams[i]->codecpar->codec_type;
            LOGD("stream %u has media type %d", i, mediaType);
            if (mediaType == AVMEDIA_TYPE_VIDEO) {
                mVideoIndex = (int) i;
                break;
            }
        }
        if (mVideoIndex < 0) {
            LOGD("Couldn't find a video stream.\n");
            return mVideoIndex;
        }
        // 4. Find the decoder and set up the codec context
        AVCodecParameters *pParameters = mAvFormatContext->streams[mVideoIndex]->codecpar;
        AVCodecID avCodecId = pParameters->codec_id;
        const AVCodec *findDecoder = avcodec_find_decoder(avCodecId);
        if (findDecoder == nullptr) {
            LOGD("Couldn't find a decoder.\n");
            return -1;
        }
        mAvCodecContext = avcodec_alloc_context3(findDecoder);
        int ret = avcodec_parameters_to_context(mAvCodecContext, pParameters);
        if (ret < 0) {
            LOGD("Couldn't copy codec parameters.\n");
            return ret;
        }
        // 5. Open the decoder
        ret = avcodec_open2(mAvCodecContext, findDecoder, nullptr);
        if (ret < 0) {
            LOGD("Couldn't open codec.\n");
            return ret;
        }
        LOGD("Decoder opened successfully\n");
        return ret;
    }

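5. The AVPacket struct:

FFmpeg uses AVPacket to hold encoded data. An AVPacket stores the data after demuxing and before decoding (so the data is still compressed), together with additional information about that data, such as the presentation timestamp (PTS), the decoding timestamp (DTS), the duration and the index of the media stream it belongs to.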
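PTS and DTS are not stored in seconds but in ticks of the stream's time base, a rational number. The sketch below shows the conversion under that assumption; DemoRational and demo_ts_to_seconds are hypothetical names used only for this illustration (in FFmpeg itself, av_q2d on the stream's time_base plays this role, as the ffplay.c code later in this article shows).

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical rational type mirroring the idea of a stream time base. */
typedef struct DemoRational {
    int num;
    int den;
} DemoRational;

/* Convert a timestamp expressed in time-base ticks to seconds. */
double demo_ts_to_seconds(int64_t ts, DemoRational tb)
{
    assert(tb.den != 0);
    return (double)ts * tb.num / tb.den;
}
```

With a time base of 1/90000, which is common for MPEG transport streams, a PTS of 180000 corresponds to 2 seconds.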

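For video, an AVPacket usually contains one compressed frame, whereas for audio it may contain several compressed frames. A packet may also be empty: it then carries no compressed data, only side data (additional information about the packet provided by the container, for example to update some stream parameters at the end of encoding).

AVPacket is part of the public ABI, which is true of very few structs in FFmpeg and shows how important AVPacket is. It can be allocated on the stack (the statement AVPacket packet; defines a packet on the stack), and new fields are not added to AVPacket unless libavcodec and libavformat change substantially. Recent FFmpeg releases deprecate relying on sizeof(AVPacket) and recommend allocating packets with av_packet_alloc.

AVPacket is really a container: it does not hold the compressed media data itself but refers to the data buffer through its data pointer. So when a packet is passed as an argument, the buffer that data refers to has to be handled according to the situation. When one packet is created from another, there are two cases:

- the data pointers of the two packets refer to the same buffer, in which case care is needed when the buffer is released;

- the data pointers of the two packets refer to different buffers, so each packet has its own copy of the data.

In the second case the buffers are simple to manage, but the data exists in several copies, which wastes memory. Whether two packets should share one buffer or each own an independent buffer therefore depends on the concrete need.

When several packets share one buffer, FFmpeg uses a reference-counting mechanism. When a new packet refers to the shared buffer, the reference count is incremented by 1; when a packet that refers to the buffer is released, the count is decremented by 1; when the count reaches 0, the buffer itself is freed.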
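The counting rule just described can be modelled in a few lines of plain C. This is a simplified, single-threaded sketch, not FFmpeg's implementation: DemoBuffer, DemoPacket and the demo_packet_* functions are hypothetical names, and a real implementation would update the count atomically.

```c
#include <assert.h>
#include <stdlib.h>
#include <string.h>

/* Hypothetical shared buffer with a reference count (not AVBufferRef). */
typedef struct DemoBuffer {
    unsigned char *data;
    size_t size;
    int refcount;
} DemoBuffer;

/* Hypothetical packet that only points at a shared buffer. */
typedef struct DemoPacket {
    DemoBuffer *buf;
} DemoPacket;

/* Create a packet owning a fresh copy of src; returns 0 or -1. */
int demo_packet_create(DemoPacket *pkt, const void *src, size_t size)
{
    DemoBuffer *b = malloc(sizeof(*b));
    if (!b)
        return -1;
    b->data = malloc(size ? size : 1);
    if (!b->data) {
        free(b);
        return -1;
    }
    if (size)
        memcpy(b->data, src, size);
    b->size = size;
    b->refcount = 1;
    pkt->buf = b;
    return 0;
}

/* Make dst share src's buffer: the count goes up by one. */
void demo_packet_ref(DemoPacket *dst, const DemoPacket *src)
{
    assert(src->buf);
    src->buf->refcount++;
    dst->buf = src->buf;
}

/* Drop one reference; returns 1 if this call freed the buffer. */
int demo_packet_unref(DemoPacket *pkt)
{
    int freed = 0;
    if (pkt->buf && --pkt->buf->refcount == 0) {
        free(pkt->buf->data);
        free(pkt->buf);
        freed = 1;
    }
    pkt->buf = NULL;
    return freed;
}
```

The data is copied once in demo_packet_create; every later demo_packet_ref is cheap because only the counter changes.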

AVPacket中的AVBufferRef *buf 就是用来管理这个引用计数的:

在AVPacket中使用AVBufferRef,可以通过两个函数av_packet_ref和av_packet_unref。

/*** Setup a new reference to the data described by a given packet** If src is reference-counted, setup dst as a new reference to the* buffer in src. Otherwise allocate a new buffer in dst and copy the* data from src into it.** All the other fields are copied from src.** @see av_packet_unref** @param dst Destination packet. Will be completely overwritten.* @param src Source packet** @return 0 on success, a negative AVERROR on error. On error, dst* will be blank (as if returned by av_packet_alloc()).*/

int av_packet_ref(AVPacket *dst, const AVPacket *src);/*** Wipe the packet.** Unreference the buffer referenced by the packet and reset the* remaining packet fields to their default values.** @param pkt The packet to be unreferenced.*/

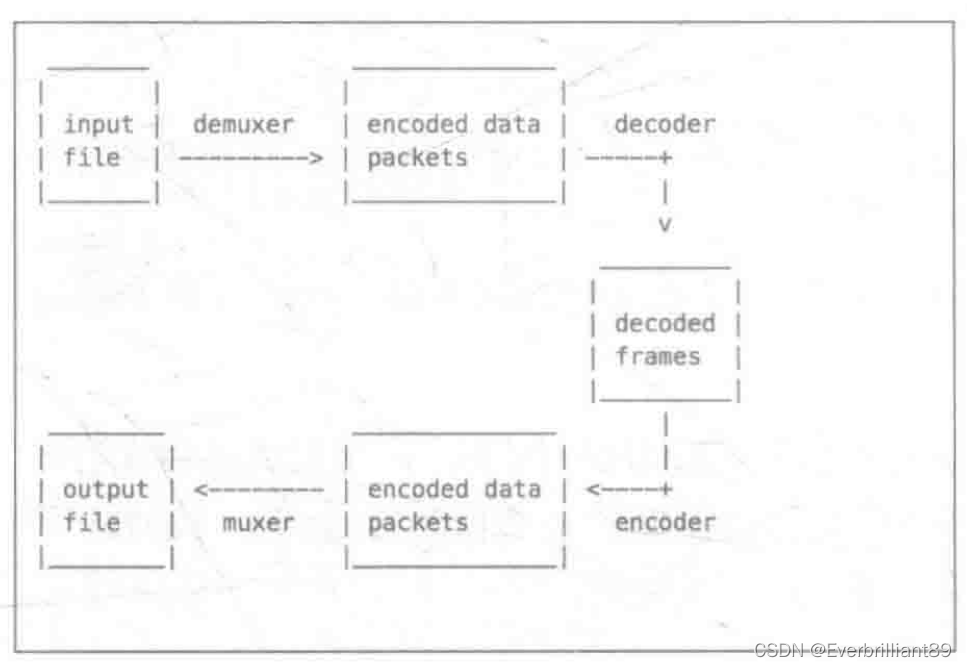

void av_packet_unref(AVPacket *pkt);AVPacket通常将Demuxer导出的数据包作为解码器的输入数据,或是收到来自解码器的数据包,通过Muxer进入输出数据:

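6. The AVCodec struct:

AVCodec is the struct that stores codec information. The matching AVCodec is looked up with avcodec_find_decoder(codec_id).

    const AVCodec *findDecoder = avcodec_find_decoder(avCodecId);

7. The AVFrame struct:

AVFrame generally stores raw (uncompressed) data, such as YUV or RGB for video and PCM for audio, together with some related information. During decoding, for example, it stores the macroblock types, the QP table, the motion vector table and similar data; related data is stored during encoding as well.

AVFrame holds the raw data decoded from an AVPacket. It must be created with av_frame_alloc and released with av_frame_free. Like AVPacket, AVFrame has a data buffer; av_frame_alloc does not allocate that buffer, which has to be obtained in another way. Decoding uses two AVFrames whose buffers are allocated differently:

- one AVFrame holds the raw data decoded from the AVPacket; its data buffer is allocated and filled by the call to avcodec_receive_frame;

- the other AVFrame holds the decoded raw data after conversion to the required format (for example RGB or RGBA); its data buffer has to be allocated manually.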

The code below allocates both frames, sets up the converter and runs the decoding loop:

    mAvFrame = av_frame_alloc();
    mAvPacket = av_packet_alloc();
    mRgbFrame = av_frame_alloc();
    mWidth = mAvCodecContext->width;
    mHeight = mAvCodecContext->height;
    LOGD("width: %d, height: %d", mWidth, mHeight);
    int bufferSize = av_image_get_buffer_size(AV_PIX_FMT_RGBA, mWidth, mHeight, 1);
    LOGD("size of the decoded RGBA buffer: %d\n", bufferSize);
    mOutbuffer = (uint8_t *) av_malloc(bufferSize * sizeof(uint8_t));
    // Converter from the decoder's pixel format to RGBA
    mSwsContext = sws_getContext(mWidth, mHeight, mAvCodecContext->pix_fmt,
                                 mWidth, mHeight, AV_PIX_FMT_RGBA,
                                 SWS_BICUBIC, nullptr, nullptr, nullptr);
    // Point mRgbFrame's data/linesize at the manually allocated buffer
    av_image_fill_arrays(mRgbFrame->data, mRgbFrame->linesize,
                         mOutbuffer, AV_PIX_FMT_RGBA,
                         mWidth, mHeight, 1);
    while (av_read_frame(mAvFormatContext, mAvPacket) >= 0) {
        int ret = 0;
        if (mAvPacket->stream_index == mVideoIndex) {
            ret = codecAvFrame();
            if (ret >= 0) {
                sendFrameDataToANativeWindow();
            }
        }
        // Release the packet's buffer before reading the next packet
        av_packet_unref(mAvPacket);
        if (ret < 0 && ret != AVERROR(EAGAIN)) {
            break;
        }
    }

    int codecAvFrame() {
        int ret = avcodec_send_packet(mAvCodecContext, mAvPacket);
        if (ret < 0 && ret != AVERROR(EAGAIN) && ret != AVERROR_EOF) {
            LOGD("decoding error");
            return -1;
        }
        // AVERROR(EAGAIN) here means the decoder needs more input first
        ret = avcodec_receive_frame(mAvCodecContext, mAvFrame);
        return ret;
    }

    int sendFrameDataToANativeWindow() {
        // Convert the uncompressed frame to RGBA
        sws_scale(mSwsContext, mAvFrame->data, mAvFrame->linesize, 0,
                  mAvCodecContext->height, mRgbFrame->data, mRgbFrame->linesize);
        auto lock = ANativeWindow_lock(mNativeWindow, &windowBuffer, nullptr);
        if (lock < 0) {
            LOGD("cannot lock window");
            return lock;
        }
        // Draw the image. One row of the RGBA frame and one row of windowBuffer
        // may have different lengths, so copy row by row; otherwise the picture
        // may be corrupted.
        auto *dst = (uint8_t *) windowBuffer.bits;
        for (int h = 0; h < mHeight; h++) {
            memcpy(dst + h * windowBuffer.stride * 4,
                   mOutbuffer + h * mRgbFrame->linesize[0],
                   mRgbFrame->linesize[0]);
        }
        ANativeWindow_unlockAndPost(mNativeWindow);
        // Crude pacing: roughly 30 frames per second
        av_usleep(1000 * 33);
        return lock;
    }

Call av_frame_free to release an AVFrame. The function frees not only the AVFrame itself but also the dynamically allocated objects it contains.
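The row-by-row memcpy in sendFrameDataToANativeWindow is worth isolating, because a mismatch between source and destination row lengths is a common cause of corrupted pictures. The following plain C sketch shows the same copy without any FFmpeg or Android types; demo_copy_rows and its parameters are hypothetical names chosen for this illustration.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/*
 * Copy `height` rows of `row_bytes` visible bytes each between two buffers
 * whose rows start `src_stride` and `dst_stride` bytes apart.
 */
void demo_copy_rows(uint8_t *dst, size_t dst_stride,
                    const uint8_t *src, size_t src_stride,
                    size_t row_bytes, size_t height)
{
    /* A row must fit inside both strides, or rows would overlap. */
    assert(row_bytes <= dst_stride && row_bytes <= src_stride);
    for (size_t y = 0; y < height; y++)
        memcpy(dst + y * dst_stride, src + y * src_stride, row_bytes);
}
```

Copying only the visible bytes of each row, rather than a whole stride, keeps the copy inside the destination even when the source rows carry more padding than the destination rows.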

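av_malloc and av_free: FFmpeg provides no garbage collection, so all memory management is done by hand. av_malloc takes memory alignment into account when it allocates (the exact alignment depends on the platform and build), and memory obtained from it must be released with av_free.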
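As an illustration of what "aligned allocation" means, the sketch below builds an aligned allocator on top of malloc. It is not how av_malloc is implemented; demo_aligned_malloc and demo_aligned_free are hypothetical names, and the technique shown (over-allocating and remembering the raw pointer) is just one portable way to obtain an aligned block.

```c
#include <assert.h>
#include <stdint.h>
#include <stdlib.h>

/*
 * Allocate `size` bytes at an address that is a multiple of `align`.
 * `align` must be a power of two and at least sizeof(void *).
 */
void *demo_aligned_malloc(size_t size, size_t align)
{
    assert(align >= sizeof(void *) && (align & (align - 1)) == 0);
    /* Room for the payload, the alignment slack and one saved pointer. */
    void *raw = malloc(size + align - 1 + sizeof(void *));
    if (!raw)
        return NULL;
    uintptr_t start = (uintptr_t)raw + sizeof(void *);
    uintptr_t aligned = (start + align - 1) & ~(uintptr_t)(align - 1);
    ((void **)aligned)[-1] = raw;   /* remember what to pass to free() */
    return (void *)aligned;
}

/* Release a block obtained from demo_aligned_malloc. */
void demo_aligned_free(void *ptr)
{
    if (ptr)
        free(((void **)ptr)[-1]);
}
```

As with av_malloc and av_free, the two functions must be used as a pair: passing the aligned pointer directly to free would be an error.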

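- This struct (AVFrame) describes decoded audio or video data;

- an AVFrame must be allocated with av_frame_alloc;

- an AVFrame must be released with av_frame_free;

- an AVFrame is typically allocated once and then reused many times to hold different data (for example, a single AVFrame that holds the frames received from a decoder); before it is reused, av_frame_unref releases any references held by the frame and resets it to its initial state;

- the data described by an AVFrame is usually reference counted through the AVBuffer API. The underlying buffer references are stored in AVFrame.buf / AVFrame.extended_buf;

- sizeof(AVFrame) is not part of the public ABI, so new fields may be added at the end. Likewise, fields marked as accessible only through av_opt_ptr may be reordered;

- uint8_t *data[AV_NUM_DATA_POINTERS]: an array of pointers to the planes, i.e. where the YUV data is stored;

- for packed formats (for example RGB24), all data is stored in data[0];

- for planar formats (for example YUV420P), the data is split across data[0], data[1], data[2] and so on;

- int linesize[AV_NUM_DATA_POINTERS]: the size in bytes of one row of each plane of the picture in this struct.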
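To see how data[] and linesize[] relate for a planar format, the sketch below computes the row size and plane size of a YUV420P picture. It is a simplified model in plain C, assuming 8-bit samples and chroma planes subsampled by two in both directions; demo_yuv420p_layout and demo_align_up are hypothetical helpers, and the alignment parameter stands for the row padding that makes linesize larger than the visible width.

```c
#include <assert.h>
#include <stddef.h>

#define DEMO_NUM_PLANES 3   /* Y, U and V */

/* Round v up to a multiple of align (align must be a power of two). */
size_t demo_align_up(size_t v, size_t align)
{
    assert(align && (align & (align - 1)) == 0);
    return (v + align - 1) & ~(align - 1);
}

/*
 * Fill linesize[] (bytes per row) and plane_size[] (bytes per plane)
 * for a YUV420P picture and return the total number of bytes.
 */
size_t demo_yuv420p_layout(size_t width, size_t height, size_t align,
                           size_t linesize[DEMO_NUM_PLANES],
                           size_t plane_size[DEMO_NUM_PLANES])
{
    size_t chroma_w = (width + 1) / 2;    /* U and V are half width ...  */
    size_t chroma_h = (height + 1) / 2;   /* ... and half height         */

    linesize[0] = demo_align_up(width, align);
    linesize[1] = linesize[2] = demo_align_up(chroma_w, align);

    plane_size[0] = linesize[0] * height;
    plane_size[1] = plane_size[2] = linesize[1] * chroma_h;

    return plane_size[0] + plane_size[1] + plane_size[2];
}
```

With an alignment of 1 the rows are exactly as wide as the picture; with a larger alignment each row carries padding, which is why code that walks a plane must step by linesize rather than by width.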

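8. The AVIOContext struct:

The top-level structure for protocol (file) operations is AVIOContext, which implements buffered read and write operations. The pb field of AVFormatContext, FFmpeg's input object, points to an AVIOContext.

    mAvFormatContext = avformat_alloc_context();
    // For input, streams are normally created by the demuxer itself
    AVStream *avStream = avformat_new_stream(mAvFormatContext, nullptr);
    unsigned char *iobuffer = (unsigned char *) av_malloc(32768);
    // The opaque pointer and the read/write/seek callbacks are left null here;
    // real code has to supply at least a read callback for input
    AVIOContext *avio = avio_alloc_context(iobuffer, 32768, 0,
                                           nullptr, nullptr, nullptr, nullptr);
    mAvFormatContext->pb = avio;
    // avformat_open_input takes the address of the context pointer
    avformat_open_input(&mAvFormatContext, url, nullptr, nullptr);

9. The URLProtocol struct:

URLProtocol is FFmpeg's structure for operating on files (plain files, network data streams and so on); it provides operations such as open, close, read, write and seek. URLProtocol is the protocol operation object: there is one such object per protocol, and each protocol operation object is associated with a protocol object.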
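The "one table of operations per protocol" design can be sketched with a struct of function pointers. The example below is a plain C model with a single in-memory protocol; DemoProtocol, DemoMemFile and the demo_mem_* functions are hypothetical names and do not reproduce the fields of FFmpeg's URLProtocol.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical in-memory "file" used as the protocol's private state. */
typedef struct DemoMemFile {
    const unsigned char *data;
    size_t size;
    size_t pos;
} DemoMemFile;

/* Hypothetical protocol descriptor: a name plus a table of operations. */
typedef struct DemoProtocol {
    const char *name;
    int  (*read)(void *priv, unsigned char *buf, int size);
    long (*seek)(void *priv, long pos);
} DemoProtocol;

/* Read up to `size` bytes; returns the byte count, 0 at end of data. */
int demo_mem_read(void *priv, unsigned char *buf, int size)
{
    DemoMemFile *f = priv;
    assert(size >= 0);
    size_t left = f->size - f->pos;
    size_t n = (size_t)size < left ? (size_t)size : left;
    memcpy(buf, f->data + f->pos, n);
    f->pos += n;
    return (int)n;
}

/* Move the read position; returns the new position or -1 if out of range. */
long demo_mem_seek(void *priv, long pos)
{
    DemoMemFile *f = priv;
    if (pos < 0 || (size_t)pos > f->size)
        return -1;
    f->pos = (size_t)pos;
    return pos;
}

/* One descriptor object per protocol, shared by all open instances. */
const DemoProtocol demo_mem_protocol = { "mem", demo_mem_read, demo_mem_seek };
```

Code that reads data only ever calls through the table, so adding a new protocol means adding a new descriptor rather than changing the callers.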

10. The AVIODirContext (URLContext) struct:

The AVIODirContext (URLContext) object wraps the protocol object and the protocol operation object. It is initialized in avio.c by url_alloc_for_protocol, which also allocates its memory with av_malloc(sizeof(URLContext) + strlen(filename) + 1).
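The allocation size sizeof(URLContext) + strlen(filename) + 1 indicates that the struct and its file name live in one memory block. The sketch below shows that layout in plain C; DemoURLContext and demo_url_alloc are hypothetical names, and the struct has only the two fields needed for the illustration.

```c
#include <assert.h>
#include <stdlib.h>
#include <string.h>

/* Hypothetical context; the file name is stored right after the struct. */
typedef struct DemoURLContext {
    char *filename;   /* points into the same allocation */
    int flags;
} DemoURLContext;

/* Allocate the struct and a copy of `filename` in a single block. */
DemoURLContext *demo_url_alloc(const char *filename, int flags)
{
    assert(filename);
    size_t len = strlen(filename);
    DemoURLContext *uc = malloc(sizeof(*uc) + len + 1);
    if (!uc)
        return NULL;
    uc->filename = (char *)(uc + 1);          /* first byte after the struct */
    memcpy(uc->filename, filename, len + 1);  /* includes the terminating 0 */
    uc->flags = flags;
    return uc;
}
```

Because there is only one allocation, a single free releases both the struct and the string, and the two can never be freed out of order.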

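II. Key FFmpeg functions:

1. The av_register_all function (deprecated):

libavformat handles many media formats and has two main roles: demuxing audio and video, and the reverse, muxing them into a specific media format. It also includes an I/O module that can obtain data over a range of protocols (file, TCP, HTTP and so on). Before libavformat could be used, av_register_all had to be called to register all compiled-in muxers, demuxers and protocols; the function was deprecated in FFmpeg 4.0 and the call is no longer needed. To use libavformat's network features, call avformat_network_init.

2. The avformat_alloc_context function:

Before an AVFormatContext can be used, memory has to be allocated for it; that is what avformat_alloc_context does.

3. The avio_open function:

This function opens an FFmpeg input or output file.

4. The avformat_open_input function:

avformat_open_input opens a file and reads its header; it does not open any decoder. Its counterpart for closing the file is avformat_close_input.

5. The avformat_find_stream_info function:

This function reads packets of the media file to obtain stream information.

6. The av_read_frame function:

Returns the next frame of a stream. The function returns what is stored in the file and does not check whether it contains valid frames for the decoder. It splits what is stored in the file into frames and returns one per call.

7. The av_write_frame function:

FFmpeg calls avformat_write_header to write the header, av_write_frame to write the frame data (packets) and av_write_trailer to write the trailer.

8. The avcodec_decode_video2 function:

avcodec_decode_video2 decodes the data of an AVPacket and fills the result into an AVFrame, which then holds the decoded raw data. The function is deprecated; current code uses avcodec_send_packet and avcodec_receive_frame instead.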

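III. How FFplay works:

FFplay is the executable in FFmpeg that provides playback. Its main source file is ffplay.c, located at ffmpeg-6.1.1\fftools\ffplay.c.

1. Steps ffplay.c follows to play a video:

- Register all container formats and codecs: av_register_all (no longer required since FFmpeg 4.0);

- open the file: avformat_open_input (formerly av_open_input_file);

- extract stream information from the file: avformat_find_stream_info (formerly av_find_stream_info);

- iterate over all streams and determine the type of each;

- find the matching decoder: avcodec_find_decoder;

- open the codec: avcodec_open2 (formerly avcodec_open);

- allocate memory for the decoded frame: av_frame_alloc (formerly avcodec_alloc_frame);

- keep reading frames from the stream: av_read_frame;

- check the type of each packet and decode video frames: avcodec_send_packet and avcodec_receive_frame (formerly avcodec_decode_video);

- when decoding is finished, release the decoder: avcodec_free_context (formerly avcodec_close);

- close the input file: avformat_close_input (formerly av_close_input_file).

2. Analysis of the main function in ffplay.c:

The key function is stream_open():

A. It takes input_filename and initializes the VideoState data;

B. it initializes the FrameQueue and PacketQueue queues, which receive the AVFrames and AVPackets produced while the file is processed;

C. it starts the read_thread thread, which carries out steps 1 to 11 above: opening the file, extracting stream information, determining stream types, finding and opening the decoders, allocating memory for decoded frames and reading frames.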

    /* Called from the main */
    int main(int argc, char **argv)
    {
        int flags, ret;
        VideoState *is;

        init_dynload();

        av_log_set_flags(AV_LOG_SKIP_REPEATED);
        parse_loglevel(argc, argv, options);

        /* register all codecs, demux and protocols */
    #if CONFIG_AVDEVICE
        // 1. Register all container formats and codecs
        avdevice_register_all();
    #endif
        avformat_network_init();

        signal(SIGINT , sigterm_handler); /* Interrupt (ANSI). */
        signal(SIGTERM, sigterm_handler); /* Termination (ANSI). */

        show_banner(argc, argv, options);

        ret = parse_options(NULL, argc, argv, options, opt_input_file);
        if (ret < 0)
            exit(ret == AVERROR_EXIT ? 0 : 1);

        if (!input_filename) {
            show_usage();
            av_log(NULL, AV_LOG_FATAL, "An input file must be specified\n");
            av_log(NULL, AV_LOG_FATAL,
                   "Use -h to get full help or, even better, run 'man %s'\n", program_name);
            exit(1);
        }

        if (display_disable) {
            video_disable = 1;
        }
        flags = SDL_INIT_VIDEO | SDL_INIT_AUDIO | SDL_INIT_TIMER;
        if (audio_disable)
            flags &= ~SDL_INIT_AUDIO;
        else {
            /* Try to work around an occasional ALSA buffer underflow issue when the
             * period size is NPOT due to ALSA resampling by forcing the buffer size. */
            if (!SDL_getenv("SDL_AUDIO_ALSA_SET_BUFFER_SIZE"))
                SDL_setenv("SDL_AUDIO_ALSA_SET_BUFFER_SIZE","1", 1);
        }
        if (display_disable)
            flags &= ~SDL_INIT_VIDEO;
        if (SDL_Init (flags)) {
            av_log(NULL, AV_LOG_FATAL, "Could not initialize SDL - %s\n", SDL_GetError());
            av_log(NULL, AV_LOG_FATAL, "(Did you set the DISPLAY variable?)\n");
            exit(1);
        }

        SDL_EventState(SDL_SYSWMEVENT, SDL_IGNORE);
        SDL_EventState(SDL_USEREVENT, SDL_IGNORE);

        if (!display_disable) {
            int flags = SDL_WINDOW_HIDDEN;
            if (alwaysontop)
    #if SDL_VERSION_ATLEAST(2,0,5)
                flags |= SDL_WINDOW_ALWAYS_ON_TOP;
    #else
                av_log(NULL, AV_LOG_WARNING, "Your SDL version doesn't support SDL_WINDOW_ALWAYS_ON_TOP. Feature will be inactive.\n");
    #endif
            if (borderless)
                flags |= SDL_WINDOW_BORDERLESS;
            else
                flags |= SDL_WINDOW_RESIZABLE;

    #ifdef SDL_HINT_VIDEO_X11_NET_WM_BYPASS_COMPOSITOR
            SDL_SetHint(SDL_HINT_VIDEO_X11_NET_WM_BYPASS_COMPOSITOR, "0");
    #endif
            window = SDL_CreateWindow(program_name, SDL_WINDOWPOS_UNDEFINED, SDL_WINDOWPOS_UNDEFINED, default_width, default_height, flags);
            SDL_SetHint(SDL_HINT_RENDER_SCALE_QUALITY, "linear");
            if (window) {
                renderer = SDL_CreateRenderer(window, -1, SDL_RENDERER_ACCELERATED | SDL_RENDERER_PRESENTVSYNC);
                if (!renderer) {
                    av_log(NULL, AV_LOG_WARNING, "Failed to initialize a hardware accelerated renderer: %s\n", SDL_GetError());
                    renderer = SDL_CreateRenderer(window, -1, 0);
                }
                if (renderer) {
                    if (!SDL_GetRendererInfo(renderer, &renderer_info))
                        av_log(NULL, AV_LOG_VERBOSE, "Initialized %s renderer.\n", renderer_info.name);
                }
            }
            if (!window || !renderer || !renderer_info.num_texture_formats) {
                av_log(NULL, AV_LOG_FATAL, "Failed to create window or renderer: %s", SDL_GetError());
                do_exit(NULL);
            }
        }

        // A. Take input_filename and initialize the VideoState data;
        // B. initialize the FrameQueue and PacketQueue queues that receive
        //    the AVFrames and AVPackets;
        // C. start read_thread, which carries out steps 1 to 11: opening the file,
        //    extracting stream information, determining stream types, finding and
        //    opening the decoders, allocating memory for decoded frames and
        //    reading frames.
        is = stream_open(input_filename, file_iformat);
        if (!is) {
            av_log(NULL, AV_LOG_FATAL, "Failed to initialize VideoState!\n");
            do_exit(NULL);
        }

        // Keep sending the VideoState data to the GUI for display
        event_loop(is);

        /* never returns */

        return 0;
    }

3. Analysis of the read_thread thread in ffplay.c:

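read_thread opens the file, extracts stream information, determines the stream types, finds and opens the decoders, allocates memory for decoded frames and reads the packets. The static function stream_component_open does most of the per-stream work and implements steps 6 and 7. It also starts the three child threads audio_thread, video_thread and subtitle_thread (the subtitle thread).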

    /* this thread gets the stream from the disk or the network */
    static int read_thread(void *arg)
    {
        VideoState *is = arg;
        AVFormatContext *ic = NULL;
        int err, i, ret;
        int st_index[AVMEDIA_TYPE_NB];
        AVPacket *pkt = NULL;
        int64_t stream_start_time;
        int pkt_in_play_range = 0;
        const AVDictionaryEntry *t;
        SDL_mutex *wait_mutex = SDL_CreateMutex();
        int scan_all_pmts_set = 0;
        int64_t pkt_ts;

        if (!wait_mutex) {
            av_log(NULL, AV_LOG_FATAL, "SDL_CreateMutex(): %s\n", SDL_GetError());
            ret = AVERROR(ENOMEM);
            goto fail;
        }

        memset(st_index, -1, sizeof(st_index));
        is->eof = 0;

        pkt = av_packet_alloc();
        if (!pkt) {
            av_log(NULL, AV_LOG_FATAL, "Could not allocate packet.\n");
            ret = AVERROR(ENOMEM);
            goto fail;
        }
        // 1. Register all container formats and codecs; obtain the AVFormatContext
        ic = avformat_alloc_context();
        if (!ic) {
            av_log(NULL, AV_LOG_FATAL, "Could not allocate context.\n");
            ret = AVERROR(ENOMEM);
            goto fail;
        }
        ic->interrupt_callback.callback = decode_interrupt_cb;
        ic->interrupt_callback.opaque = is;
        if (!av_dict_get(format_opts, "scan_all_pmts", NULL, AV_DICT_MATCH_CASE)) {
            av_dict_set(&format_opts, "scan_all_pmts", "1", AV_DICT_DONT_OVERWRITE);
            scan_all_pmts_set = 1;
        }
        // 2. Open the file
        err = avformat_open_input(&ic, is->filename, is->iformat, &format_opts);
        if (err < 0) {
            print_error(is->filename, err);
            ret = -1;
            goto fail;
        }
        if (scan_all_pmts_set)
            av_dict_set(&format_opts, "scan_all_pmts", NULL, AV_DICT_MATCH_CASE);

        if ((t = av_dict_get(format_opts, "", NULL, AV_DICT_IGNORE_SUFFIX))) {
            av_log(NULL, AV_LOG_ERROR, "Option %s not found.\n", t->key);
            ret = AVERROR_OPTION_NOT_FOUND;
            goto fail;
        }
        is->ic = ic;

        if (genpts)
            ic->flags |= AVFMT_FLAG_GENPTS;

        if (find_stream_info) {
            AVDictionary **opts;
            int orig_nb_streams = ic->nb_streams;

            err = setup_find_stream_info_opts(ic, codec_opts, &opts);
            if (err < 0) {
                av_log(NULL, AV_LOG_ERROR,
                       "Error setting up avformat_find_stream_info() options\n");
                ret = err;
                goto fail;
            }
            // 3. Extract stream information from the file
            err = avformat_find_stream_info(ic, opts);

            for (i = 0; i < orig_nb_streams; i++)
                av_dict_free(&opts[i]);
            av_freep(&opts);

            if (err < 0) {
                av_log(NULL, AV_LOG_WARNING,
                       "%s: could not find codec parameters\n", is->filename);
                ret = -1;
                goto fail;
            }
        }

        if (ic->pb)
            ic->pb->eof_reached = 0; // FIXME hack, ffplay maybe should not use avio_feof() to test for the end

        if (seek_by_bytes < 0)
            seek_by_bytes = !(ic->iformat->flags & AVFMT_NO_BYTE_SEEK) &&
                            !!(ic->iformat->flags & AVFMT_TS_DISCONT) &&
                            strcmp("ogg", ic->iformat->name);

        is->max_frame_duration = (ic->iformat->flags & AVFMT_TS_DISCONT) ? 10.0 : 3600.0;

        if (!window_title && (t = av_dict_get(ic->metadata, "title", NULL, 0)))
            window_title = av_asprintf("%s - %s", t->value, input_filename);

        /* if seeking requested, we execute it */
        if (start_time != AV_NOPTS_VALUE) {
            int64_t timestamp;

            timestamp = start_time;
            /* add the stream start time */
            if (ic->start_time != AV_NOPTS_VALUE)
                timestamp += ic->start_time;
            ret = avformat_seek_file(ic, -1, INT64_MIN, timestamp, INT64_MAX, 0);
            if (ret < 0) {
                av_log(NULL, AV_LOG_WARNING, "%s: could not seek to position %0.3f\n",
                        is->filename, (double)timestamp / AV_TIME_BASE);
            }
        }

        is->realtime = is_realtime(ic);

        if (show_status)
            av_dump_format(ic, 0, is->filename, 0);
        // 4. Iterate over all streams and determine the type of each
        for (i = 0; i < ic->nb_streams; i++) {
            AVStream *st = ic->streams[i];
            enum AVMediaType type = st->codecpar->codec_type;
            st->discard = AVDISCARD_ALL;
            if (type >= 0 && wanted_stream_spec[type] && st_index[type] == -1)
                if (avformat_match_stream_specifier(ic, st, wanted_stream_spec[type]) > 0)
                    st_index[type] = i;
        }
        for (i = 0; i < AVMEDIA_TYPE_NB; i++) {
            if (wanted_stream_spec[i] && st_index[i] == -1) {
                av_log(NULL, AV_LOG_ERROR, "Stream specifier %s does not match any %s stream\n", wanted_stream_spec[i], av_get_media_type_string(i));
                st_index[i] = INT_MAX;
            }
        }
        // 5. Select the best stream of each type: av_find_best_stream
        if (!video_disable)
            st_index[AVMEDIA_TYPE_VIDEO] =
                av_find_best_stream(ic, AVMEDIA_TYPE_VIDEO,
                                    st_index[AVMEDIA_TYPE_VIDEO], -1, NULL, 0);
        if (!audio_disable)
            st_index[AVMEDIA_TYPE_AUDIO] =
                av_find_best_stream(ic, AVMEDIA_TYPE_AUDIO,
                                    st_index[AVMEDIA_TYPE_AUDIO],
                                    st_index[AVMEDIA_TYPE_VIDEO],
                                    NULL, 0);
        if (!video_disable && !subtitle_disable)
            st_index[AVMEDIA_TYPE_SUBTITLE] =
                av_find_best_stream(ic, AVMEDIA_TYPE_SUBTITLE,
                                    st_index[AVMEDIA_TYPE_SUBTITLE],
                                    (st_index[AVMEDIA_TYPE_AUDIO] >= 0 ?
                                     st_index[AVMEDIA_TYPE_AUDIO] :
                                     st_index[AVMEDIA_TYPE_VIDEO]),
                                    NULL, 0);

        is->show_mode = show_mode;
        if (st_index[AVMEDIA_TYPE_VIDEO] >= 0) {
            AVStream *st = ic->streams[st_index[AVMEDIA_TYPE_VIDEO]];
            AVCodecParameters *codecpar = st->codecpar;
            AVRational sar = av_guess_sample_aspect_ratio(ic, st, NULL);
            if (codecpar->width)
                set_default_window_size(codecpar->width, codecpar->height, sar);
        }

        /* open the streams */
        // Open a decoder for each selected stream and allocate memory for decoded frames
        if (st_index[AVMEDIA_TYPE_AUDIO] >= 0) {
            stream_component_open(is, st_index[AVMEDIA_TYPE_AUDIO]);
        }

        ret = -1;
        if (st_index[AVMEDIA_TYPE_VIDEO] >= 0) {
            ret = stream_component_open(is, st_index[AVMEDIA_TYPE_VIDEO]);
        }
        if (is->show_mode == SHOW_MODE_NONE)
            is->show_mode = ret >= 0 ? SHOW_MODE_VIDEO : SHOW_MODE_RDFT;

        if (st_index[AVMEDIA_TYPE_SUBTITLE] >= 0) {
            stream_component_open(is, st_index[AVMEDIA_TYPE_SUBTITLE]);
        }

        if (is->video_stream < 0 && is->audio_stream < 0) {
            av_log(NULL, AV_LOG_FATAL, "Failed to open file '%s' or configure filtergraph\n",
                   is->filename);
            ret = -1;
            goto fail;
        }

        if (infinite_buffer < 0 && is->realtime)
            infinite_buffer = 1;

        for (;;) {
            if (is->abort_request)
                break;
            if (is->paused != is->last_paused) {
                is->last_paused = is->paused;
                if (is->paused)
                    is->read_pause_return = av_read_pause(ic);
                else
                    av_read_play(ic);
            }
    #if CONFIG_RTSP_DEMUXER || CONFIG_MMSH_PROTOCOL
            if (is->paused &&
                    (!strcmp(ic->iformat->name, "rtsp") ||
                     (ic->pb && !strncmp(input_filename, "mmsh:", 5)))) {
                /* wait 10 ms to avoid trying to get another packet */
                /* XXX: horrible */
                SDL_Delay(10);
                continue;
            }
    #endif
            if (is->seek_req) {
                int64_t seek_target = is->seek_pos;
                int64_t seek_min    = is->seek_rel > 0 ? seek_target - is->seek_rel + 2: INT64_MIN;
                int64_t seek_max    = is->seek_rel < 0 ? seek_target - is->seek_rel - 2: INT64_MAX;
    // FIXME the +-2 is due to rounding being not done in the correct direction in generation
    //      of the seek_pos/seek_rel variables

                ret = avformat_seek_file(is->ic, -1, seek_min, seek_target, seek_max, is->seek_flags);
                if (ret < 0) {
                    av_log(NULL, AV_LOG_ERROR,
                           "%s: error while seeking\n", is->ic->url);
                } else {
                    if (is->audio_stream >= 0)
                        packet_queue_flush(&is->audioq);
                    if (is->subtitle_stream >= 0)
                        packet_queue_flush(&is->subtitleq);
                    if (is->video_stream >= 0)
                        packet_queue_flush(&is->videoq);
                    if (is->seek_flags & AVSEEK_FLAG_BYTE) {
                       set_clock(&is->extclk, NAN, 0);
                    } else {
                       set_clock(&is->extclk, seek_target / (double)AV_TIME_BASE, 0);
                    }
                }
                is->seek_req = 0;
                is->queue_attachments_req = 1;
                is->eof = 0;
                if (is->paused)
                    step_to_next_frame(is);
            }
            if (is->queue_attachments_req) {
                if (is->video_st && is->video_st->disposition & AV_DISPOSITION_ATTACHED_PIC) {
                    if ((ret = av_packet_ref(pkt, &is->video_st->attached_pic)) < 0)
                        goto fail;
                    packet_queue_put(&is->videoq, pkt);
                    packet_queue_put_nullpacket(&is->videoq, pkt, is->video_stream);
                }
                is->queue_attachments_req = 0;
            }

            /* if the queue are full, no need to read more */
            if (infinite_buffer<1 &&
                  (is->audioq.size + is->videoq.size + is->subtitleq.size > MAX_QUEUE_SIZE
                || (stream_has_enough_packets(is->audio_st, is->audio_stream, &is->audioq) &&
                    stream_has_enough_packets(is->video_st, is->video_stream, &is->videoq) &&
                    stream_has_enough_packets(is->subtitle_st, is->subtitle_stream, &is->subtitleq)))) {
                /* wait 10 ms */
                SDL_LockMutex(wait_mutex);
                SDL_CondWaitTimeout(is->continue_read_thread, wait_mutex, 10);
                SDL_UnlockMutex(wait_mutex);
                continue;
            }
            if (!is->paused &&
                (!is->audio_st || (is->auddec.finished == is->audioq.serial && frame_queue_nb_remaining(&is->sampq) == 0)) &&
                (!is->video_st || (is->viddec.finished == is->videoq.serial && frame_queue_nb_remaining(&is->pictq) == 0))) {
                if (loop != 1 && (!loop || --loop)) {
                    stream_seek(is, start_time != AV_NOPTS_VALUE ? start_time : 0, 0, 0);
                } else if (autoexit) {
                    ret = AVERROR_EOF;
                    goto fail;
                }
            }
            // 8. Keep reading frames from the stream: av_read_frame
            ret = av_read_frame(ic, pkt);
            if (ret < 0) {
                if ((ret == AVERROR_EOF || avio_feof(ic->pb)) && !is->eof) {
                    if (is->video_stream >= 0)
                        packet_queue_put_nullpacket(&is->videoq, pkt, is->video_stream);
                    if (is->audio_stream >= 0)
                        packet_queue_put_nullpacket(&is->audioq, pkt, is->audio_stream);
                    if (is->subtitle_stream >= 0)
                        packet_queue_put_nullpacket(&is->subtitleq, pkt, is->subtitle_stream);
                    is->eof = 1;
                }
                if (ic->pb && ic->pb->error) {
                    if (autoexit)
                        goto fail;
                    else
                        break;
                }
                SDL_LockMutex(wait_mutex);
                SDL_CondWaitTimeout(is->continue_read_thread, wait_mutex, 10);
                SDL_UnlockMutex(wait_mutex);
                continue;
            } else {
                is->eof = 0;
            }
            /* check if packet is in play range specified by user, then queue, otherwise discard */
            stream_start_time = ic->streams[pkt->stream_index]->start_time;
            pkt_ts = pkt->pts == AV_NOPTS_VALUE ? pkt->dts : pkt->pts;
            pkt_in_play_range = duration == AV_NOPTS_VALUE ||
                    (pkt_ts - (stream_start_time != AV_NOPTS_VALUE ? stream_start_time : 0)) *
                    av_q2d(ic->streams[pkt->stream_index]->time_base) -
                    (double)(start_time != AV_NOPTS_VALUE ? start_time : 0) / 1000000
                    <= ((double)duration / 1000000);
            if (pkt->stream_index == is->audio_stream && pkt_in_play_range) {
                packet_queue_put(&is->audioq, pkt);
            } else if (pkt->stream_index == is->video_stream && pkt_in_play_range
                       && !(is->video_st->disposition & AV_DISPOSITION_ATTACHED_PIC)) {
                packet_queue_put(&is->videoq, pkt);
            } else if (pkt->stream_index == is->subtitle_stream && pkt_in_play_range) {
                packet_queue_put(&is->subtitleq, pkt);
            } else {
                av_packet_unref(pkt);
            }
        }

        ret = 0;
     fail:
        if (ic && !is->ic)
            avformat_close_input(&ic);

        av_packet_free(&pkt);
        if (ret != 0) {
            SDL_Event event;

            event.type = FF_QUIT_EVENT;
            event.user.data1 = is;
            SDL_PushEvent(&event);
        }
        SDL_DestroyMutex(wait_mutex);
        return 0;
    }

4. stream_component_open in ffplay.c:

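stream_component_open is called from read_thread to open the decoder of a given stream and to set up what decoding needs. Depending on which of the three codec_type values the stream has, it creates the audio_thread, video_thread or subtitle_thread child thread, which keeps producing AVFrames. decoder_init initializes the Decoder and associates it with its PacketQueue; decoder_start creates the child thread, which takes the frames of its own codec_type and pushes them into a queue.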

    /* open a given stream. Return 0 if OK */
    static int stream_component_open(VideoState *is, int stream_index)
    {
        AVFormatContext *ic = is->ic;
        AVCodecContext *avctx;
        const AVCodec *codec;
        const char *forced_codec_name = NULL;
        AVDictionary *opts = NULL;
        const AVDictionaryEntry *t = NULL;
        int sample_rate;
        AVChannelLayout ch_layout = { 0 };
        int ret = 0;
        int stream_lowres = lowres;

        if (stream_index < 0 || stream_index >= ic->nb_streams)
            return -1;
        // Allocate and initialize the codec context (AVCodecContext)
        avctx = avcodec_alloc_context3(NULL);
        if (!avctx)
            return AVERROR(ENOMEM);

        ret = avcodec_parameters_to_context(avctx, ic->streams[stream_index]->codecpar);
        if (ret < 0)
            goto fail;
        avctx->pkt_timebase = ic->streams[stream_index]->time_base;

        codec = avcodec_find_decoder(avctx->codec_id);

        switch(avctx->codec_type){
            case AVMEDIA_TYPE_AUDIO   : is->last_audio_stream    = stream_index; forced_codec_name =    audio_codec_name; break;
            case AVMEDIA_TYPE_SUBTITLE: is->last_subtitle_stream = stream_index; forced_codec_name = subtitle_codec_name; break;
            case AVMEDIA_TYPE_VIDEO   : is->last_video_stream    = stream_index; forced_codec_name =    video_codec_name; break;
        }
        if (forced_codec_name)
            codec = avcodec_find_decoder_by_name(forced_codec_name);
        if (!codec) {
            if (forced_codec_name) av_log(NULL, AV_LOG_WARNING,
                                          "No codec could be found with name '%s'\n", forced_codec_name);
            else                   av_log(NULL, AV_LOG_WARNING,
                                          "No decoder could be found for codec %s\n", avcodec_get_name(avctx->codec_id));
            ret = AVERROR(EINVAL);
            goto fail;
        }

        avctx->codec_id = codec->id;
        if (stream_lowres > codec->max_lowres) {
            av_log(avctx, AV_LOG_WARNING, "The maximum value for lowres supported by the decoder is %d\n",
                    codec->max_lowres);
            stream_lowres = codec->max_lowres;
        }
        avctx->lowres = stream_lowres;

        if (fast)
            avctx->flags2 |= AV_CODEC_FLAG2_FAST;

        ret = filter_codec_opts(codec_opts, avctx->codec_id, ic,
                                ic->streams[stream_index], codec, &opts);
        if (ret < 0)
            goto fail;

        if (!av_dict_get(opts, "threads", NULL, 0))
            av_dict_set(&opts, "threads", "auto", 0);
        if (stream_lowres)
            av_dict_set_int(&opts, "lowres", stream_lowres, 0);

        av_dict_set(&opts, "flags", "+copy_opaque", AV_DICT_MULTIKEY);
        // 6. Open the codec: avcodec_open2
        if ((ret = avcodec_open2(avctx, codec, &opts)) < 0) {
            goto fail;
        }
        if ((t = av_dict_get(opts, "", NULL, AV_DICT_IGNORE_SUFFIX))) {
            av_log(NULL, AV_LOG_ERROR, "Option %s not found.\n", t->key);
            ret =  AVERROR_OPTION_NOT_FOUND;
            goto fail;
        }

        is->eof = 0;
        ic->streams[stream_index]->discard = AVDISCARD_DEFAULT;
        switch (avctx->codec_type) {
        case AVMEDIA_TYPE_AUDIO:
            {
                AVFilterContext *sink;

                is->audio_filter_src.freq           = avctx->sample_rate;
                ret = av_channel_layout_copy(&is->audio_filter_src.ch_layout, &avctx->ch_layout);
                if (ret < 0)
                    goto fail;
                is->audio_filter_src.fmt            = avctx->sample_fmt;
                if ((ret = configure_audio_filters(is, afilters, 0)) < 0)
                    goto fail;
                sink = is->out_audio_filter;
                sample_rate    = av_buffersink_get_sample_rate(sink);
                ret = av_buffersink_get_ch_layout(sink, &ch_layout);
                if (ret < 0)
                    goto fail;
            }

            /* prepare audio output */
            if ((ret = audio_open(is, &ch_layout, sample_rate, &is->audio_tgt)) < 0)
                goto fail;
            is->audio_hw_buf_size = ret;
            is->audio_src = is->audio_tgt;
            is->audio_buf_size  = 0;
            is->audio_buf_index = 0;

            /* init averaging filter */
            is->audio_diff_avg_coef  = exp(log(0.01) / AUDIO_DIFF_AVG_NB);
            is->audio_diff_avg_count = 0;
            /* since we do not have a precise anough audio FIFO fullness,
               we correct audio sync only if larger than this threshold */
            is->audio_diff_threshold = (double)(is->audio_hw_buf_size) / is->audio_tgt.bytes_per_sec;

            is->audio_stream = stream_index;
            is->audio_st = ic->streams[stream_index];

            if ((ret = decoder_init(&is->auddec, avctx, &is->audioq, is->continue_read_thread)) < 0)
                goto fail;
            if (is->ic->iformat->flags & AVFMT_NOTIMESTAMPS) {
                is->auddec.start_pts = is->audio_st->start_time;
                is->auddec.start_pts_tb = is->audio_st->time_base;
            }
            // Start the thread that extracts the audio frames
            if ((ret = decoder_start(&is->auddec, audio_thread, "audio_decoder", is)) < 0)
                goto out;
            SDL_PauseAudioDevice(audio_dev, 0);
            break;
        case AVMEDIA_TYPE_VIDEO:
            is->video_stream = stream_index;
            is->video_st = ic->streams[stream_index];

            if ((ret = decoder_init(&is->viddec, avctx, &is->videoq, is->continue_read_thread)) < 0)
                goto fail;
            // Start the thread that extracts the video frames
            if ((ret = decoder_start(&is->viddec, video_thread, "video_decoder", is)) < 0)
                goto out;
            is->queue_attachments_req = 1;
            break;
        case AVMEDIA_TYPE_SUBTITLE:
            is->subtitle_stream = stream_index;
            is->subtitle_st = ic->streams[stream_index];

            if ((ret = decoder_init(&is->subdec, avctx, &is->subtitleq, is->continue_read_thread)) < 0)
                goto fail;
            // Start the thread that extracts the subtitle frames
            if ((ret = decoder_start(&is->subdec, subtitle_thread, "subtitle_decoder", is)) < 0)
                goto out;
            break;
        default:
            break;
        }
        goto out;

    fail:
        avcodec_free_context(&avctx);
    out:
        av_channel_layout_uninit(&ch_layout);
        av_dict_free(&opts);

        return ret;
    }

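5. Analysis of the video_thread thread in ffplay.c:

video_thread obtains each AVFrame through get_video_frame, which calls decoder_decode_frame to take packets from the PacketQueue and decode them. The frame is then passed through the filter graph and put into the frame queue by queue_picture.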

    static int video_thread(void *arg)
    {
        VideoState *is = arg;
        AVFrame *frame = av_frame_alloc();
        double pts;
        double duration;
        int ret;
        AVRational tb = is->video_st->time_base;
        AVRational frame_rate = av_guess_frame_rate(is->ic, is->video_st, NULL);

        AVFilterGraph *graph = NULL;
        AVFilterContext *filt_out = NULL, *filt_in = NULL;
        int last_w = 0;
        int last_h = 0;
        enum AVPixelFormat last_format = -2;
        int last_serial = -1;
        int last_vfilter_idx = 0;

        if (!frame)
            return AVERROR(ENOMEM);

        for (;;) {
            // Get the next decoded video frame (its packets were read by
            // av_read_frame in step 8)
            ret = get_video_frame(is, frame);
            if (ret < 0)
                goto the_end;
            if (!ret)
                continue;

            if (   last_w != frame->width
                || last_h != frame->height
                || last_format != frame->format
                || last_serial != is->viddec.pkt_serial
                || last_vfilter_idx != is->vfilter_idx) {
                av_log(NULL, AV_LOG_DEBUG,
                       "Video frame changed from size:%dx%d format:%s serial:%d to size:%dx%d format:%s serial:%d\n",
                       last_w, last_h,
                       (const char *)av_x_if_null(av_get_pix_fmt_name(last_format), "none"), last_serial,
                       frame->width, frame->height,
                       (const char *)av_x_if_null(av_get_pix_fmt_name(frame->format), "none"), is->viddec.pkt_serial);
                avfilter_graph_free(&graph);
                graph = avfilter_graph_alloc();
                if (!graph) {
                    ret = AVERROR(ENOMEM);
                    goto the_end;
                }
                graph->nb_threads = filter_nbthreads;
                if ((ret = configure_video_filters(graph, is, vfilters_list ? vfilters_list[is->vfilter_idx] : NULL, frame)) < 0) {
                    SDL_Event event;
                    event.type = FF_QUIT_EVENT;
                    event.user.data1 = is;
                    SDL_PushEvent(&event);
                    goto the_end;
                }
                filt_in  = is->in_video_filter;
                filt_out = is->out_video_filter;
                last_w = frame->width;
                last_h = frame->height;
                last_format = frame->format;
                last_serial = is->viddec.pkt_serial;
                last_vfilter_idx = is->vfilter_idx;
                frame_rate = av_buffersink_get_frame_rate(filt_out);
            }

            ret = av_buffersrc_add_frame(filt_in, frame);
            if (ret < 0)
                goto the_end;

            while (ret >= 0) {
                FrameData *fd;

                is->frame_last_returned_time = av_gettime_relative() / 1000000.0;

                ret = av_buffersink_get_frame_flags(filt_out, frame, 0);
                if (ret < 0) {
                    if (ret == AVERROR_EOF)
                        is->viddec.finished = is->viddec.pkt_serial;
                    ret = 0;
                    break;
                }

                fd = frame->opaque_ref ? (FrameData*)frame->opaque_ref->data : NULL;

                is->frame_last_filter_delay = av_gettime_relative() / 1000000.0 - is->frame_last_returned_time;
                if (fabs(is->frame_last_filter_delay) > AV_NOSYNC_THRESHOLD / 10.0)
                    is->frame_last_filter_delay = 0;
                tb = av_buffersink_get_time_base(filt_out);
                duration = (frame_rate.num && frame_rate.den ? av_q2d((AVRational){frame_rate.den, frame_rate.num}) : 0);
                pts = (frame->pts == AV_NOPTS_VALUE) ? NAN : frame->pts * av_q2d(tb);
                ret = queue_picture(is, frame, pts, duration, fd ? fd->pkt_pos : -1, is->viddec.pkt_serial);
                av_frame_unref(frame);
                if (is->videoq.serial != is->viddec.pkt_serial)
                    break;
            }

            if (ret < 0)
                goto the_end;
        }
     the_end:
        avfilter_graph_free(&graph);
        av_frame_free(&frame);
        return 0;
    }

That concludes the walkthrough of ffplay.c: it uses multiple threads to demux and decode the streams, stores the decoded data in queues, and then reads from those queues in a loop to display the result in the GUI.
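The queue that sits between the reading side and the decoding side can be pictured as a bounded FIFO that also tracks how many bytes it holds, which is what lets the reader pause when enough data is buffered. The sketch below is a single-threaded model in plain C; DemoQueue, DemoItem and the demo_queue_* functions are hypothetical names, and the mutex and condition variable that a queue shared between threads would need are deliberately left out.

```c
#include <assert.h>
#include <stddef.h>

#define DEMO_QUEUE_CAP 4   /* maximum number of queued items in this model */

/* Hypothetical queue entry: which stream it belongs to and its payload size. */
typedef struct DemoItem {
    int stream_index;
    size_t size;
} DemoItem;

/* Hypothetical bounded FIFO implemented as a ring buffer. */
typedef struct DemoQueue {
    DemoItem items[DEMO_QUEUE_CAP];
    size_t head;    /* index of the oldest item */
    size_t count;   /* number of items stored */
    size_t bytes;   /* total payload size, usable as a "queue is full" signal */
} DemoQueue;

void demo_queue_init(DemoQueue *q)
{
    q->head = q->count = q->bytes = 0;
}

/* Append an item; returns 0 on success or -1 if the queue is full. */
int demo_queue_put(DemoQueue *q, DemoItem item)
{
    assert(q);
    if (q->count == DEMO_QUEUE_CAP)
        return -1;
    q->items[(q->head + q->count) % DEMO_QUEUE_CAP] = item;
    q->count++;
    q->bytes += item.size;
    return 0;
}

/* Remove the oldest item into *out; returns 0 or -1 if the queue is empty. */
int demo_queue_get(DemoQueue *q, DemoItem *out)
{
    if (q->count == 0)
        return -1;
    *out = q->items[q->head];
    q->head = (q->head + 1) % DEMO_QUEUE_CAP;
    q->count--;
    q->bytes -= out->size;
    return 0;
}
```

In a producer and consumer arrangement, the producer would wait when demo_queue_put reports a full queue, and the consumer would wait when demo_queue_get reports an empty one.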